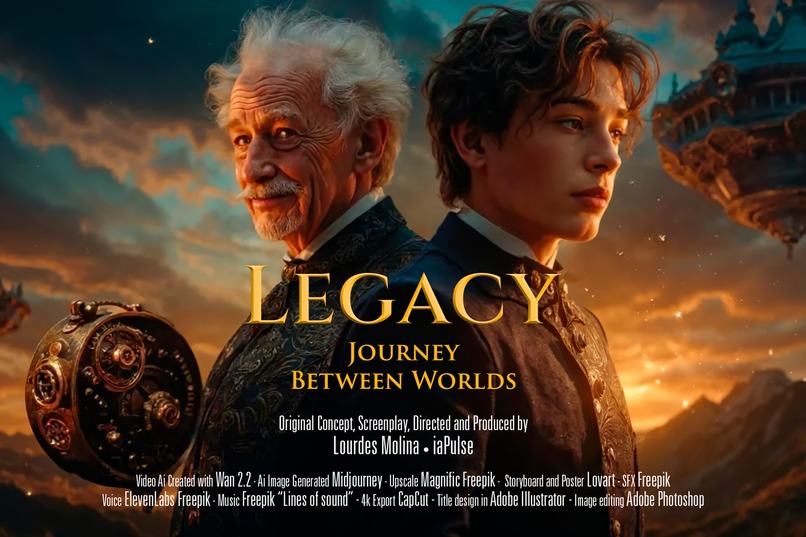

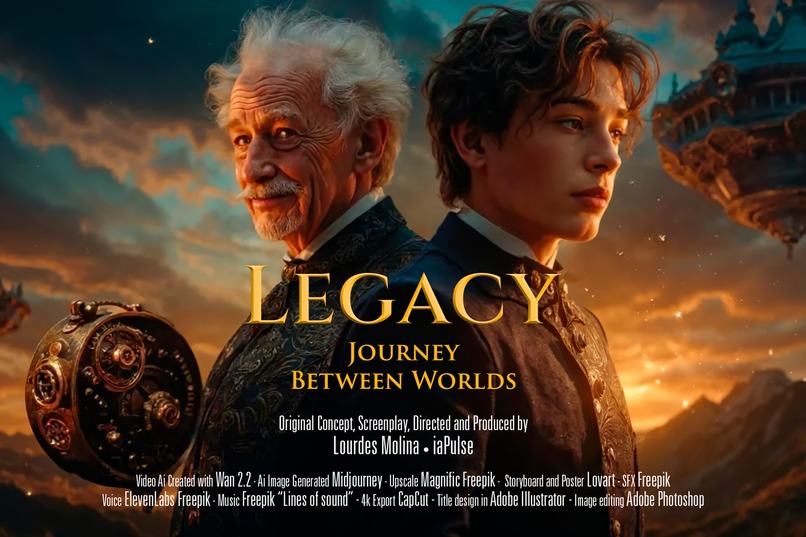

Legacy: Journey Between Worlds – The Making of an AI-Generated Short Film

Inspiration

My inspiration for this project came from a fascination with interdimensional travel stories and the relationship between generations. I wanted to explore how an object can become a bridge between people, times, and worlds. The steampunk aesthetic, with its intricate mechanisms and its fusion of the old and the futuristic, felt like the perfect vehicle to tell this story.

The films of Hayao Miyazaki, especially Howl’s Moving Castle, along with the work of Jules Verne and the visual style of films like Martin Scorsese’s Hugo, were important references. I wanted to capture that sense of wonder and discovery, but through the new possibilities offered by artificial intelligence.

Learning

This project has been an in-depth lesson in what AI can currently do for audiovisual creation:

I discovered how AI can maintain visual coherence across multiple images to create a fluid narrative.

I learned to write effective prompts that communicate not only visual elements but also emotions and atmospheres.

I understood the importance of rhythm in visual storytelling, even when working with static images.

I developed techniques to combine music, sound effects, and narration to bring generated images to life.

Perhaps the most valuable insight was realizing that AI does not replace human creativity, but rather amplifies it and offers new forms of expression that were previously inaccessible to individual creators.

Production Process

The creation of Legacy followed these steps:

Conceptualization: I developed the central idea of a grandfather who gives a magical device to his grandson before passing away, allowing him to travel between worlds.

Script and Storyboard: I wrote a simple script divided into three acts (Legacy, Discovery, Journey) and planned the key scenes I needed to visualize.

Image Generation: I worked with AI tools to create all the images for the short film, constantly refining prompts to maintain consistency in characters, environments, and visual style.

Narrative Organization: I organized the images into logical sequences, assigning specific timing to each one to create a cinematic rhythm.

Sound Effects (SFX): I generated all the sound effects from scratch with AI.

Visual Effects and Editing: I applied Ken Burns effects (subtle zooms and pans) to static images to create a sense of movement and added coherent transitions between scenes.

Promotional Materials: I created posters, social media backgrounds, and promotional descriptions to share the project.

Voices: I used ElevenLabs via Freepik to find the voice that best matched the character.
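The Ken Burns step above can be illustrated with a small sketch. The actual editing was done in an editor rather than in code, so this is only an assumed model of what such an effect computes: a crop rectangle that is linearly interpolated from a start framing to an end framing over the duration of a shot. The names `Rect` and `ken_burns_frames`, the 1920x1080 still, and the 24 fps frame rate are hypothetical choices for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Crop rectangle in pixel coordinates of the source still."""
    x: float
    y: float
    w: float
    h: float


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t


def ken_burns_frames(start: Rect, end: Rect, duration_s: float, fps: int = 24) -> list[Rect]:
    """Return one crop rectangle per output frame, moving from start to end."""
    n = max(2, round(duration_s * fps))  # at least a first and a last frame
    frames = []
    for i in range(n):
        t = i / (n - 1)  # 0.0 on the first frame, 1.0 on the last
        frames.append(Rect(
            lerp(start.x, end.x, t),
            lerp(start.y, end.y, t),
            lerp(start.w, end.w, t),
            lerp(start.h, end.h, t),
        ))
    return frames


# Hypothetical shot: a slow 4-second push-in toward the center of a 1920x1080 image.
full = Rect(0, 0, 1920, 1080)
close = Rect(192, 108, 1536, 864)  # same 16:9 aspect ratio, 80% of the frame
frames = ken_burns_frames(full, close, duration_s=4.0)
```

Keeping the start and end rectangles at the same aspect ratio avoids stretching the image. A real editor would typically also apply easing so the movement starts and stops gently, which this sketch leaves out.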

Challenges Faced

The journey was not without obstacles:

Visual Coherence: Maintaining consistency in character features and key elements like the magical device across different generated images was a constant challenge.

Technical Limitations: Working with static images to create a sense of motion and dynamism required creative editing and effects techniques.

Clear Narrative: Telling an emotional, understandable story without traditional dialogue meant relying mainly on images and voice-over narration.

Creative Balance: I had to balance guiding the AI with specific prompts against leaving room for unexpected creative elements that enriched the story.

Audiovisual Integration: Synchronizing music, sound effects, and narration with the images closely enough to create a cohesive, immersive experience took careful work.
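One way to reason about the synchronization problem is to derive a start time for every image from its assigned duration, then check which image is on screen at the moment a narration or sound cue begins. The sketch below is a hypothetical illustration of that bookkeeping, not the workflow actually used in the editor; the shot names and durations are invented for the example.

```python
from bisect import bisect_right
from itertools import accumulate


def shot_start_times(durations_s: list[float]) -> list[float]:
    """Start time of each shot, given per-shot durations in seconds."""
    if not durations_s:
        return []
    # Each shot starts where the running total of the previous shots ends.
    return [0.0] + list(accumulate(durations_s))[:-1]


def shot_at(starts: list[float], cue_time_s: float) -> int:
    """Index of the shot that is on screen at cue_time_s."""
    return max(0, bisect_right(starts, cue_time_s) - 1)


# Hypothetical three-shot sequence (names and timings are placeholders).
shots = [("grandfather_workshop", 4.0), ("device_closeup", 3.0), ("portal_opens", 5.0)]
starts = shot_start_times([duration for _, duration in shots])

# A narration line that begins 4.5 seconds in lands on the second shot.
cue_shot = shots[shot_at(starts, 4.5)][0]
```

A table like this makes it easier to see when a narration line spills over into the next image, so either the image duration or the line can be adjusted.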

Conclusion

Legacy: Journey Between Worlds represents not only a story about intergenerational connections and discovery but also an experiment in how AI can democratize creation.

What's next for LEGACY: A Journey Between Worlds | AI-Generated Short Film

I would love for a producer to pick up the idea and develop it into a feature film or a series, for example on Netflix.

In terms of outreach, I would like to offer the project as a case study for educational institutions interested in the intersection between technology and storytelling.

Built With

- adobe

- adobe-illustrator

- capcut

- elevenlabs

- freepik

- lovart

- magnific

- midjourney

- photoshop

- wan
