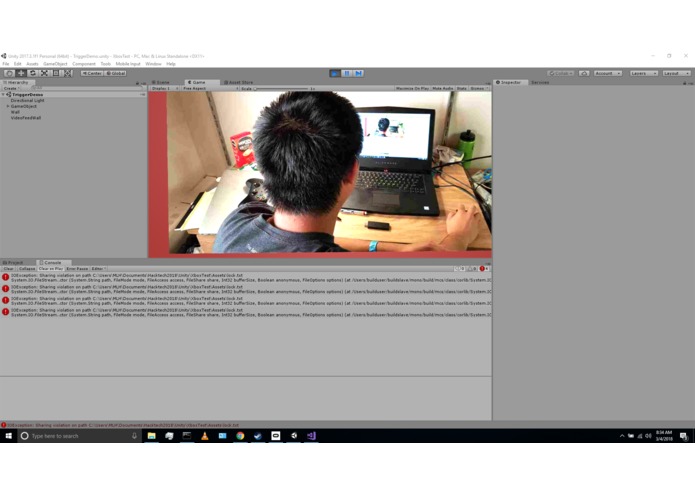

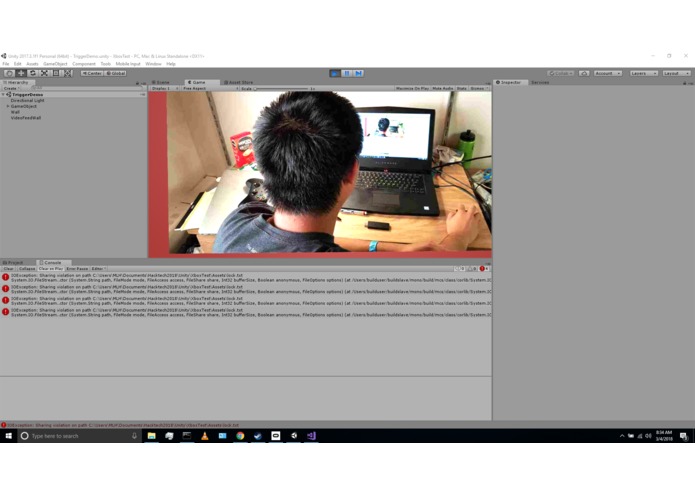

Gunshield detection of person - trigger locked

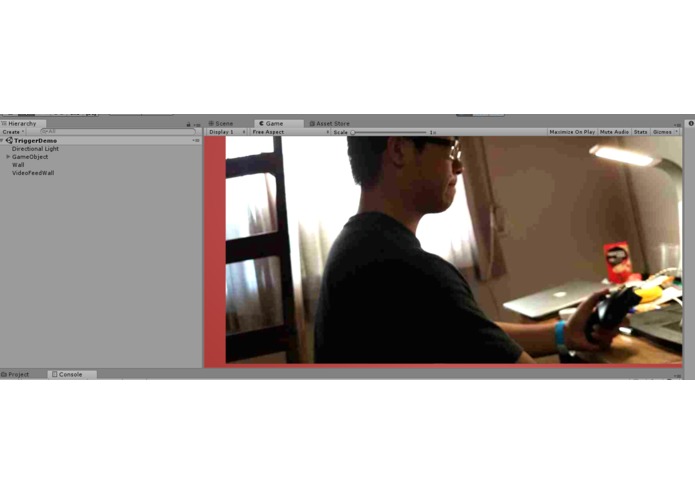

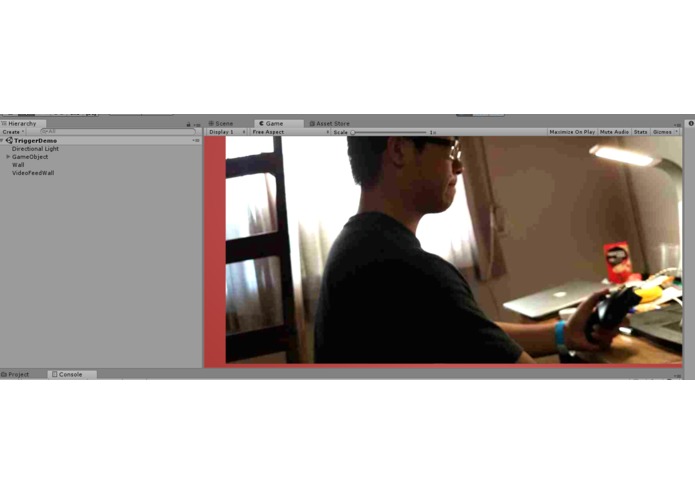

Gunshield - no detection of person

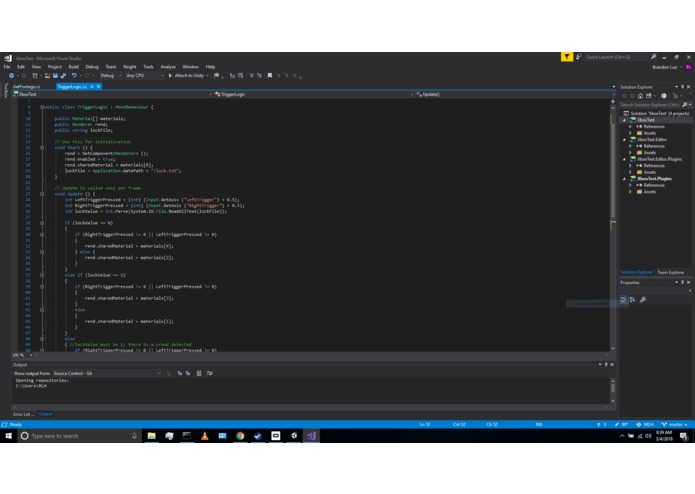

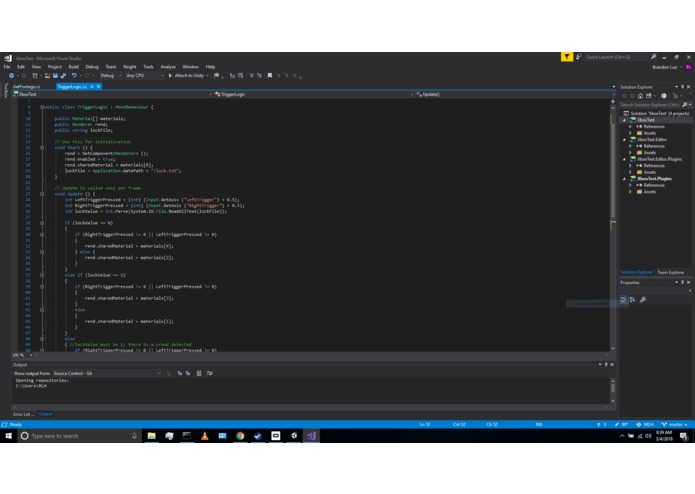

C# code for Unity VR project

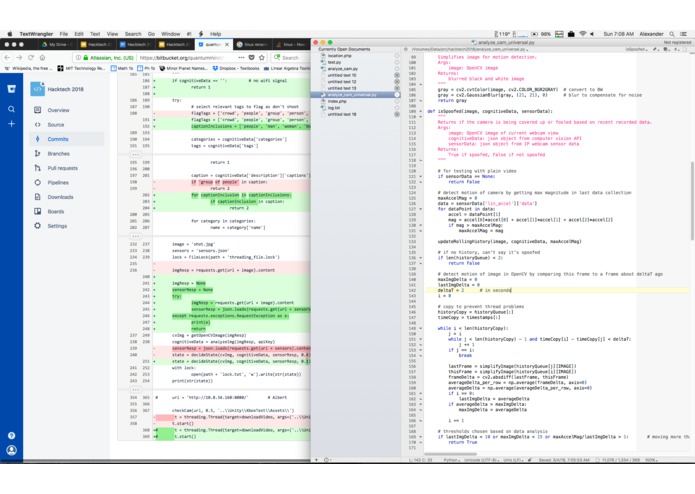

Microsoft cognitive services API implementation

SMS messages sent from Twilio API

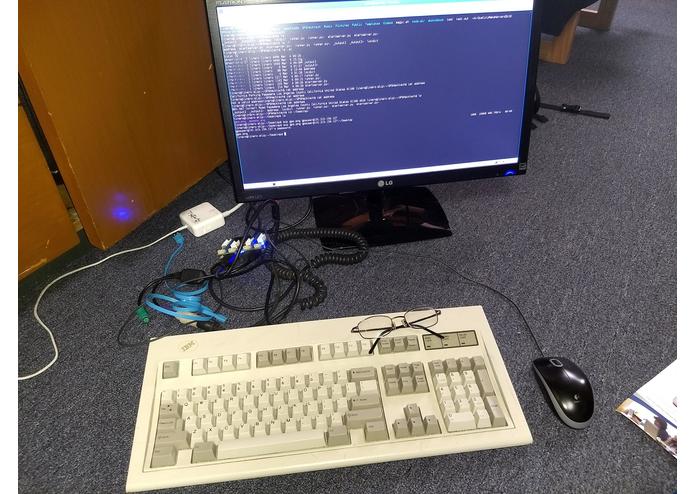

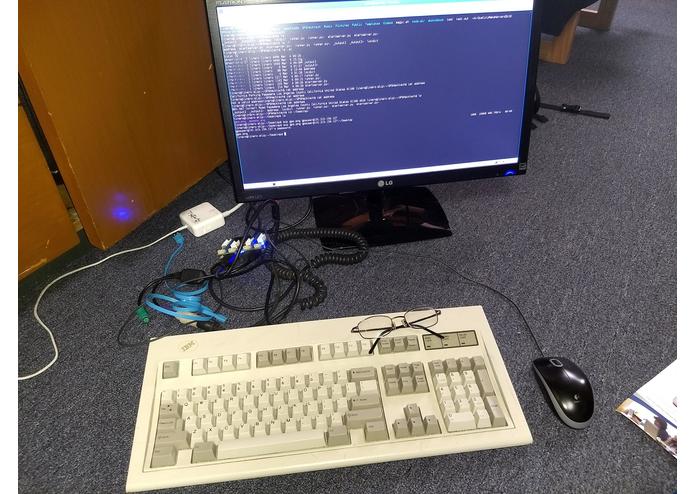

Qualcomm Dragonboard

GPS location detection

Twilio Studio

Inspiration

The United States suffers from an epidemic of shootings. The number of fatalities from mass shootings has increased drastically in recent years, a pervasive problem that demands an immediate and effective solution. In light of recent events, in particular the school shooting in Florida, we set out to build something that could help prevent mass murders. We created Gunshield to detect attempted mass shootings and alert the police as well as people in the same geographical area.

What it does

Gunshield uses real-time crowd recognition to determine whether there is a potential active shooter. A camera attached to the gun sends a live video feed through an AI system that detects whether people are in the camera's view. If people are detected in the video stream, the trigger is locked. If the trigger is pulled while people are in view, law enforcement is notified of the shooter's location and alerts are sent to citizens and passersby in the surrounding area.
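The behavior described above can be summarized as a small decision function. This is a minimal sketch rather than the project's actual code: the `Action` enum and the `decide` function are hypothetical names introduced here for illustration, and the inputs are assumed to be two booleans produced elsewhere (the person detector and the trigger sensor).

```python
from enum import Enum


class Action(Enum):
    """Hypothetical outcomes for one video frame (names are illustrative)."""
    UNLOCKED = "unlocked"
    LOCKED = "locked"
    LOCKED_AND_ALERT = "locked_and_alert"


def decide(people_detected: bool, trigger_pulled: bool) -> Action:
    """Map one frame's observations to an action.

    people_detected: assumed output of the person detector for this frame.
    trigger_pulled:  assumed output of the trigger sensor.
    """
    if people_detected and trigger_pulled:
        # Someone is in view and a shot was attempted: lock and notify.
        return Action.LOCKED_AND_ALERT
    if people_detected:
        # Someone is in view: keep the trigger locked.
        return Action.LOCKED
    # Nobody in view: the trigger stays free.
    return Action.UNLOCKED
```

Keeping the decision in one pure function makes it easy to check every combination of inputs before wiring it to a camera or notification service.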

With Gunshield, we can preserve every citizen’s right to bear arms while, at the same time, preventing fatal and accidental shootings. We believe that Gunshield is a practical technological approach to reducing gun violence in the United States.

How we built it

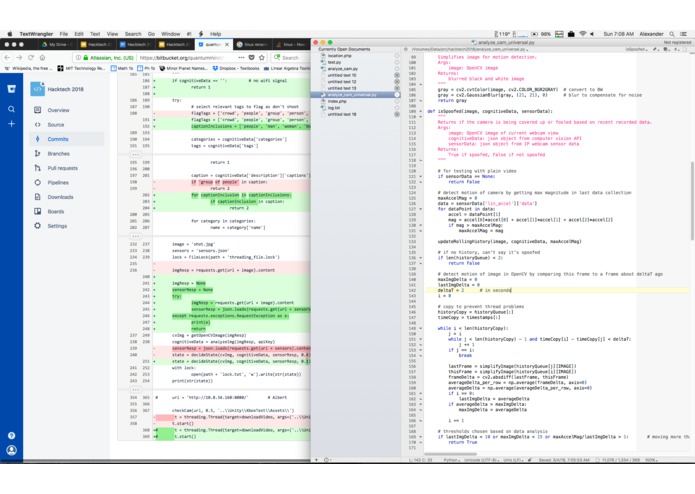

Detecting other people requires multiple components. First, data is collected via IP Webcam on an Android phone, which uploads live video and accelerometer data to a host server. Each image is then processed by the Microsoft Computer Vision API to detect whether people are present. We prevent spoofing by comparing the motion deduced from the accelerometer data with the motion deduced from the image sequence; a mismatch indicates that the camera was covered. If people are present or the camera is blocked, a signal is sent to lock the trigger and stop the gun from firing.

For our demo, we used Unity to develop a VR project that broadcasts the real-time live stream to the Oculus headset, with a wirelessly connected Xbox controller serving as our “gun.” Thus, the user could run through a simulation of Gunshield.
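The anti-spoofing idea (accelerometer motion versus image motion) can be sketched as follows. This is an illustration under stated assumptions, not the project's implementation: frames are assumed to be small grayscale grids given as lists of rows, accelerometer samples are assumed to be `(x, y, z)` tuples in m/s², and the function names and both thresholds are hypothetical values that would need tuning on real data.

```python
import math


def image_motion(prev_frame, curr_frame):
    """Mean absolute pixel difference between two equal-sized grayscale frames."""
    total = 0
    count = 0
    for row_prev, row_curr in zip(prev_frame, curr_frame):
        for a, b in zip(row_prev, row_curr):
            total += abs(a - b)
            count += 1
    return total / count if count else 0.0


def accel_motion(samples):
    """Range of acceleration magnitude over a window of (x, y, z) samples."""
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]
    return max(magnitudes) - min(magnitudes) if magnitudes else 0.0


def camera_covered(prev_frame, curr_frame, samples,
                   image_threshold=2.0, accel_threshold=0.5):
    """Flag a mismatch: the device is moving but the image is not changing.

    Both thresholds are assumed, illustrative values.
    """
    device_moving = accel_motion(samples) > accel_threshold
    image_moving = image_motion(prev_frame, curr_frame) > image_threshold
    return device_moving and not image_moving


def should_lock(people_detected, covered):
    """Lock the trigger if people are present or the camera appears blocked."""
    return people_detected or covered
```

In this sketch a stationary gun pointed at a static scene is not treated as spoofing, because the accelerometer and the image agree that nothing is moving.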

Emergency notification, which uses the Twilio API, has several parts: location detection, address identification, data distribution, and emergency calling. The Qualcomm DragonBoard 410C detects the GPS coordinates of the gun and broadcasts the location in real time. The DragonBoard 410C then calls the Google Maps API to obtain a human-readable address, allowing immediate response by law enforcement agencies. The address data is uploaded to a web server for quick and reliable access. If the trigger is pulled while people are in view of the gun, the Twilio API is called automatically to notify emergency services and surrounding bystanders.
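As an illustration of the location-detection step, here is a minimal sketch of turning a GPS sentence into decimal coordinates. It assumes the GPS module emits standard NMEA 0183 `GGA` sentences (the project lists NMEA among its tools, but the exact sentences used are not stated). The function names are hypothetical, and checksum validation is deliberately skipped to keep the example short.

```python
def nmea_to_decimal(value, hemisphere):
    """Convert NMEA ddmm.mmmm (or dddmm.mmmm) to signed decimal degrees."""
    dot = value.index(".")
    degrees = int(value[:dot - 2])     # everything before the two minute digits
    minutes = float(value[dot - 2:])   # mm.mmmm
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence):
    """Return (lat, lon) from a GGA sentence, or None if there is no fix.

    Assumption: the checksum after '*' is ignored in this sketch.
    """
    body = sentence.strip().split("*")[0]
    fields = body.split(",")
    if len(fields) < 7 or not fields[0].endswith("GGA"):
        raise ValueError("not a GGA sentence")
    if fields[6] in ("", "0"):
        # Fix quality 0 means the receiver has no satellite fix yet.
        return None
    lat = nmea_to_decimal(fields[2], fields[3])
    lon = nmea_to_decimal(fields[4], fields[5])
    return lat, lon
```

Returning `None` when there is no fix lets the caller hold back a notification until a real position is available, instead of reverse geocoding an empty coordinate.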

Challenges we ran into

Our biggest challenge was integrating our separate components: the Twilio API implementation, the Microsoft Cognitive Services API implementation, the Unity VR project for simulation, and location detection on the Qualcomm DragonBoard 410C. Because our code was written in different languages, including Python, C#, and PHP, it was difficult to combine everything into a seamless run-through of the project from start to finish in our Unity demo simulation. We first combined the components in pairs: the Twilio API code with the DragonBoard 410C location detection, and the Microsoft Cognitive Services API code with the Unity project, which was able to display the live stream. We also ran into hardware obstacles: obtaining the location of the DragonBoard 410C, since at first we were unable to connect it to satellites; setting up the Unity project on Mac vs. Windows systems; and connecting the Oculus headset, sensor, and remote to the Alienware laptop and then launching the headset for use with our Unity simulation. Once the Oculus headset was connected, we worked to disable headset tracking so that the live video stream would remain in the center of the field of view, which took time spent debugging and searching Unity forums.

Accomplishments that we're proud of

In making this project, we learned how to incorporate many features, both hardware and software, into a single project. Each of us worked on areas we were not particularly familiar with and initially struggled to implement, whether VR, API integration, or extracting information from hardware. In the end, we were able to create a project with all the components and functionality we initially planned, within the 36 hours we were allotted.

What we learned

Through our Hacktech experience, we’ve not only learned how to implement the hardware and software necessary to our project, but we’ve also learned how to work together under a time constraint to put together a functioning project. We’ve also learned from one another’s skills as we each came into the project with various backgrounds and experiences in computer science and hacking.

What's next for Gunshield

In the future, we plan to use public databases of phone numbers to target our emergency notifications (e.g., call the local police station nearest the location of the attempted shooter). We would also send text message alerts to people within a certain geographical area, a feature we were unable to implement to its fullest extent in the time given (in part because it requires collecting personal data).
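One way the area-based alerts could work is to filter contacts by great-circle distance from the incident. The sketch below is a hypothetical illustration, not something we built: the contact list format, the phone numbers, and the function names are all made up for the example, and it uses the standard haversine formula with a mean Earth radius of 6371 km.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def recipients_in_range(incident, contacts, radius_km):
    """Return phone numbers of contacts within radius_km of the incident.

    incident: (lat, lon) tuple.
    contacts: iterable of (phone, lat, lon) tuples (hypothetical format).
    """
    lat0, lon0 = incident
    return [phone for phone, lat, lon in contacts
            if haversine_km(lat0, lon0, lat, lon) <= radius_km]
```

The resulting list of numbers would then be handed to whatever messaging service sends the alerts.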

Thank you to the organizers and sponsors for a great time at Hacktech!

Built With

- alienware-laptop

- apache-server

- artificial-intelligence

- c#

- computer-vision

- google-maps

- gps

- machine-learning

- microsoft

- microsoft-cognitive-services

- nmea

- oculus

- phone-camera

- php

- postman

- python

- qualcomm-dragonboard-410c

- twilio

- unity

- xbox-controller
