Dreamscapes combines AR and VR, rewarding exploration of the first-floor paintings by bringing the immersive second-floor spaces to life.

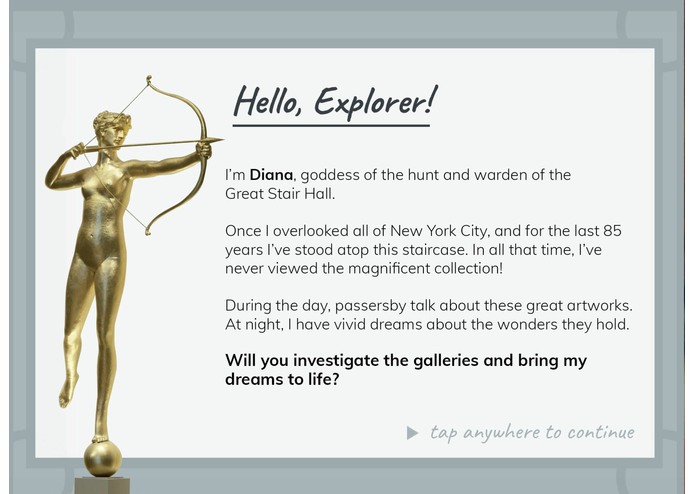

The player is guided by Diana, who provides a map and hints about where to go.

By scanning with the iPad camera, players can discover augmented 3D paintings scattered around the first floor.

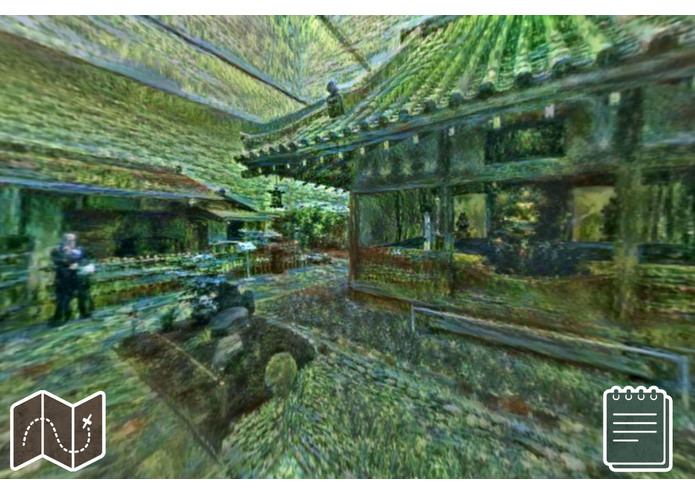

When the correct locations are found, the spaces transform into stylized VR panoramas that track the player's point of view in space.

Our goal is to encourage playful exploration of the galleries and conversations about the styles of different artworks and the connections between them.

We had a lot of fun capturing VR panoramas without a tripod!

Eight Dreamscapes were built into the storyline (a total of 16 stops). Can you guess the inspirations for all of them?

Inspiration

Who hasn’t imagined themselves stepping into the landscape of a Van Gogh or a Monet?

We’re team “day bakers,” from the design studio Night Kitchen Interactive (whatscookin.com). For over 20 years, Night Kitchen has created engaging digital experiences for museums and cultural institutions across the country.

We approached this hackathon with the goal of using AR and VR to cultivate visitors’ real-space engagement with the museum’s collection and environments. The app rewards visitors for engaging with their favorite artworks on the first floor and encourages them to venture deeper into the galleries on the second floor. We created a site-specific mixed-reality experience that entices players to explore the museum’s collection by transforming the spaces around them in playful and compelling ways.

What it does

Overview

In “Dreamscapes,” the player is invited to discover the dreams of Diana, warden of the Great Stair Hall. By searching the galleries for paintings that inspired Diana’s dreams, the player unlocks dreamscapes: Virtual Reality (VR) panoramic transformations of the museum’s immersive architectural spaces.

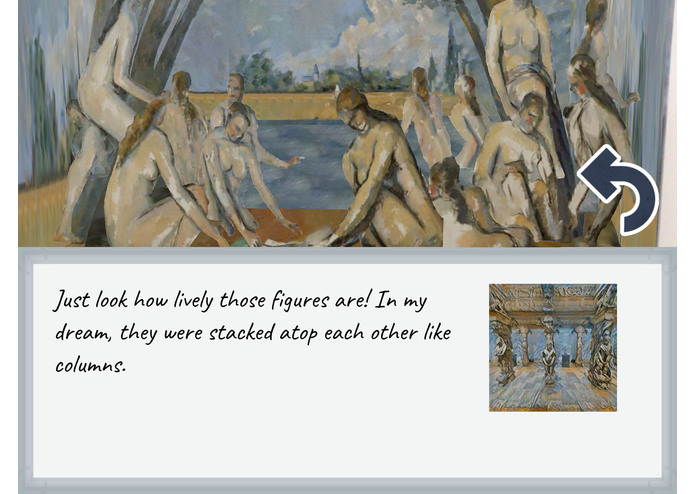

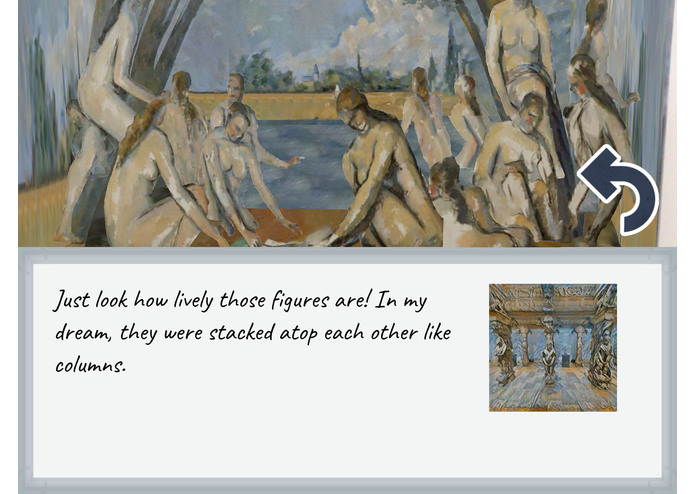

With eight Augmented Reality (AR) paintings on the first floor and eight VR locations on the second, our game is a nonlinear experience that can be played as a visitor moves through the museum. To experience an impressionist-inspired eastern dreamscape, a visitor must collect Monet’s “Japanese Footbridge” and walk to the Japanese Tea House. To find an immersive cascade of post-impressionist form, scan Cezanne’s “The Bathers” and visit the Pillared Temple Hall. By bringing the paintings and spaces to life, the app encourages the player to draw connections between objects in the collection, explore the galleries, and engage in conversations about artistic styles, content, and techniques.

Gameplay Walkthrough

On the first floor, or Level One, the player uses the iPad camera to scan paintings in the north wing. When the player finds a painting that inspired one of Diana’s dreams, a 3D projection of the painting appears, giving the player a visual treat. Moving the iPad around lets them compare details and perspective, encouraging exploratory close looking.

Diana comments on the painting and notes that the style is familiar, but tells the player that her dream took place elsewhere in the museum. A clue is added to the player’s log, and they gain access to a map of the second floor. The player may stay on the first floor to gather paintings and unlock dreamscapes, or move upstairs to experience their dreamscapes at any time.

On the second floor, or Level Two, the player searches the environment for architectural details that match the picture clues in their log. These are scattered around the map and are only unlocked if the player knows which gallery they are in. To get things started, scanning Rousseau’s “The Merry Jesters” directs the player to the nearest clue: a close-up photo of Diana in the Great Stair Hall. The player taps the Great Stair Hall on the map, revealing an image of Diana and a prompt with instructions to line up the clue with their camera view and tap.

The dreamscape is activated, and the iPad becomes a VR window into a stylized world. From the player’s point of view, the Great Stair Hall transforms with overgrown foliage inspired by Rousseau’s painting. The player can move the iPad in any direction, and the VR view updates accordingly using the device’s accelerometer. When finished, the player closes the VR view and may continue the quest, either searching for more paintings and clues on the first floor or continuing through the second floor to unlock more Dreamscapes.
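
To make the “VR window” idea concrete, here is a minimal Python sketch (not Google VR SDK code) of how a device orientation could be mapped to the spot in an equirectangular panorama at the center of the player’s view. The function name `view_center_pixel` and the angle convention (yaw measured from the panorama’s left edge, pitch of +90 degrees looking straight up) are our own assumptions for illustration.

```python
from __future__ import annotations


def view_center_pixel(yaw_deg: float, pitch_deg: float,
                      width: int, height: int) -> tuple[int, int]:
    """Return the (x, y) panorama pixel at the center of the player's view.

    Hypothetical convention: yaw is measured in degrees from the panorama's
    left edge, and pitch runs from -90 (straight down) to +90 (straight up).
    """
    yaw = yaw_deg % 360.0                      # wrap any heading into [0, 360)
    pitch = max(-90.0, min(90.0, pitch_deg))   # clamp tilt to the valid range
    # Equirectangular images map longitude and latitude linearly to x and y.
    x = int(yaw / 360.0 * width) % width
    y = min(height - 1, int((90.0 - pitch) / 180.0 * height))
    return x, y
```

For a 4096×2048 panorama, facing yaw 180° at eye level lands on the middle column: `view_center_pixel(180, 0, 4096, 2048)` gives `(2048, 1024)`.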

How we built it

This project was built in Unity and uses Vuforia (Augmented Reality), the Google VR SDK (Virtual Reality), and a custom narrative controller in C# that ties it all together. We’ve also designed a Location Manager that links experiences to the halls they appear in.
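
The nonlinear bookkeeping that the narrative controller and Location Manager handle can be sketched in a few lines. This is a hypothetical Python model, not our C# code: `NarrativeState`, its methods, and the `DREAMSCAPES` table are illustrative names, and the three painting-to-hall pairings are taken from the examples in the walkthrough above.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical table: first-floor AR painting -> second-floor hall of its dreamscape.
DREAMSCAPES = {
    "The Merry Jesters": "Great Stair Hall",
    "Japanese Footbridge": "Japanese Tea House",
    "The Bathers": "Pillared Temple Hall",
}


@dataclass
class NarrativeState:
    clues: set[str] = field(default_factory=set)     # paintings scanned on Level One
    unlocked: set[str] = field(default_factory=set)  # dreamscapes activated on Level Two

    def scan_painting(self, painting: str) -> str | None:
        """Record a scanned AR target and return the hall its clue points to."""
        hall = DREAMSCAPES.get(painting)
        if hall is not None:
            self.clues.add(painting)
        return hall

    def tap_hall(self, hall: str) -> list[str]:
        """Player taps a hall on the map: return the collected clues set there."""
        return sorted(p for p in self.clues if DREAMSCAPES[p] == hall)

    def activate(self, painting: str) -> bool:
        """Unlock a dreamscape only if its painting's clue was collected first."""
        if painting in self.clues:
            self.unlocked.add(painting)
            return True
        return False
```

Keeping the pairings in one table is what makes the structure nonlinear: paintings can be scanned in any order, and a hall only offers the clues the player has already collected.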

Art assets were created with scalability in mind, combining several generative image processing techniques. The 3D “diorama” augmentations on the first floor were created by projection mapping the Museum’s original database images onto simple geometry. This technique applies the paintings’ original texture to 3D models, adding true depth that complements the perspective of the artwork.
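
Projection mapping boils down to giving each vertex of the simple geometry a texture coordinate found by projecting it back through a virtual camera placed where the painting is “viewed from.” A minimal Python sketch, assuming a pinhole camera at the origin looking down the -z axis, with field-of-view angles chosen to frame the painting image exactly (the function name and parameters are hypothetical):

```python
from __future__ import annotations

import math


def projection_uv(vertex: tuple[float, float, float],
                  fov_x_deg: float, fov_y_deg: float) -> tuple[float, float]:
    """Texture coordinate for a vertex, projected through a hypothetical pinhole camera.

    Assumes the camera sits at the origin looking down -z, and that its
    horizontal/vertical field of view exactly frames the painting image.
    """
    x, y, z = vertex
    if z >= 0:
        raise ValueError("vertex must be in front of the camera (z < 0)")
    tan_x = math.tan(math.radians(fov_x_deg) / 2)
    tan_y = math.tan(math.radians(fov_y_deg) / 2)
    # Perspective divide gives normalized image coordinates in [-1, 1] ...
    ndc_x = (x / -z) / tan_x
    ndc_y = (y / -z) / tan_y
    # ... which are remapped to the [0, 1] UV range of the painting texture.
    return 0.5 + 0.5 * ndc_x, 0.5 + 0.5 * ndc_y
```

Because vertices along the same line of sight receive the same UV, the diorama looks exactly like the flat painting from the projection viewpoint and only reveals its depth as the iPad moves away from it.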

Our approach to generating VR Dreamscapes utilizes the neural-style algorithm, a machine learning method of transposing patterns, colors, and aesthetic qualities from one image to another. We captured VR photographs of the space with a Gear360 VR camera, split them into a cube map, applied style influences from the AR target paintings, re-stitched, and post-processed them.
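
As we understand it, the neural-style approach follows Gatys et al., who describe a painting’s style as the Gram matrices of a convolutional network’s feature maps: how strongly each pair of feature channels fires together, regardless of where in the image. The Python sketch below computes a Gram matrix and the single-layer style loss on toy, hand-written feature maps. In a real run the features come from a pretrained network (VGG in the original paper), which is omitted here, and the function names are ours.

```python
from __future__ import annotations


def gram_matrix(features: list[list[float]]) -> list[list[float]]:
    """Channel-by-channel inner products of one layer's feature maps.

    `features` holds C channels, each flattened to H*W activations.
    """
    return [[sum(a * b for a, b in zip(fi, fj)) for fj in features]
            for fi in features]


def style_loss(generated: list[list[float]], style: list[list[float]]) -> float:
    """Single-layer style loss from Gatys et al.: squared Gram-matrix difference,
    normalized by 4 * C^2 * (H*W)^2."""
    channels, positions = len(generated), len(generated[0])
    g, a = gram_matrix(generated), gram_matrix(style)
    diff = sum((g[i][j] - a[i][j]) ** 2
               for i in range(channels) for j in range(channels))
    return diff / (4 * channels ** 2 * positions ** 2)
```

Because the Gram matrix discards spatial position, minimizing this loss transfers a painting’s colors and textures, while a separate content loss (not shown) preserves the layout of the photographed hall.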

Challenges we ran into

As with any development cycle, there were challenges along the way. The two biggest difficulties involved integrating iBeacon technology and designing VR dreamscapes with the neural-style algorithm.

iBeacons

Despite having access to the Museum’s experimental iBeacon API, we had difficulty integrating beacons into our Unity builds. The API was written in the 2015 version of Swift for iOS, but our Unity project was based on C#. We were able to re-wrap the code in Objective-C, import it as a package, and deploy test builds, but realized early on that this technique compromised beacon accuracy and would take additional time to solve. To spend more time optimizing the VR and AR components, we decided to table the iBeacon integration and pivot to a map-based location approach (for now).

VR Dreamscapes

Due to the generative nature of neural styling, paintings in the Museum’s database influenced the VR panoramas in very different ways. Sometimes the results were unexpected: “Follette” by Henri de Toulouse-Lautrec, for example, superimposed dog faces over the walls and ceiling!

We iterated on our Dreamscape choices over several rounds before settling on 30 solid picks, eight of which we built out in our MVP narrative. Running a convolutional neural network takes a lot of time, especially on large, high-resolution images. The equirectangular projection of VR panoramas also distorted the Dreamscapes at the poles, so we had to find a workaround: we split the VR panoramas into undistorted cube maps, applied the neural-style filtering, and stitched them back into the VR format.
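
Why the poles suffer: every row of an equirectangular image has the same number of pixels, yet the row at latitude φ represents a circle whose circumference shrinks with cos φ, so near the poles a tiny patch of the real scene is smeared across the full image width. Brushstrokes painted uniformly across that flat image end up pinched into a point once the panorama is wrapped back onto the sphere; cube faces have no such singularity. The Python sketch below shows only the coordinate math of the split and stitch steps, and a real pipeline would also resample pixels (for example, bilinearly). The function names and the face-orientation convention (y up, faces labeled `+x` through `-z`) are assumptions, not the tools we actually used.

```python
from __future__ import annotations

import math


def _face_direction(face: str, a: float, b: float) -> tuple[float, float, float]:
    """Unnormalized view direction through point (a, b) in [-1, 1]^2 of a cube face."""
    # One common cube-map convention with y pointing up (an assumption).
    return {
        "+x": (1.0, -b, -a),
        "-x": (-1.0, -b, a),
        "+y": (a, 1.0, b),
        "-y": (a, -1.0, -b),
        "+z": (a, -b, 1.0),
        "-z": (-a, -b, -1.0),
    }[face]


def face_to_equirect(face: str, i: float, j: float, face_size: int,
                     pano_w: int, pano_h: int) -> tuple[float, float]:
    """Split step: panorama coordinates that cube-face pixel (i, j) samples from."""
    a = 2.0 * (i + 0.5) / face_size - 1.0   # pixel center -> [-1, 1]
    b = 2.0 * (j + 0.5) / face_size - 1.0
    dx, dy, dz = _face_direction(face, a, b)
    lon = math.atan2(dx, dz)                  # longitude in [-pi, pi]
    lat = math.atan2(dy, math.hypot(dx, dz))  # latitude in [-pi/2, pi/2]
    return (lon / (2 * math.pi) + 0.5) * pano_w, (0.5 - lat / math.pi) * pano_h


def equirect_to_face(x: float, y: float, face_size: int,
                     pano_w: int, pano_h: int) -> tuple[str, float, float]:
    """Stitch step: cube face and face pixel that panorama point (x, y) comes from."""
    lon = (x / pano_w - 0.5) * 2 * math.pi
    lat = (0.5 - y / pano_h) * math.pi
    dx = math.cos(lat) * math.sin(lon)
    dy = math.sin(lat)
    dz = math.cos(lat) * math.cos(lon)
    ax, ay, az = abs(dx), abs(dy), abs(dz)
    # The dominant axis picks the face; dividing by it projects onto that face.
    if ax >= ay and ax >= az:
        face, a, b = ("+x", -dz / ax, -dy / ax) if dx > 0 else ("-x", dz / ax, -dy / ax)
    elif ay >= az:
        face, a, b = ("+y", dx / ay, dz / ay) if dy > 0 else ("-y", dx / ay, -dz / ay)
    else:
        face, a, b = ("+z", dx / az, -dy / az) if dz > 0 else ("-z", -dx / az, -dy / az)
    return face, (a + 1.0) / 2.0 * face_size - 0.5, (b + 1.0) / 2.0 * face_size - 0.5
```

A quick sanity check: the center of the `+z` face, `face_to_equirect("+z", 3.5, 3.5, 8, 2048, 1024)`, lands at the panorama’s center, `(1024.0, 512.0)`.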

Accomplishments that we're proud of

We’re very proud of the reactions of our first unbiased playtesters! We were testing the AR targets on-site when a few curious Museum visitors wandered up and asked what we were doing. Upon seeing Cezanne’s “The Bathers” expanded into 3D, they first yelped with glee, then started commenting on the poses and texture of the background characters. When we showed off the app in the Japanese Tea House, our playtesters immediately picked up on the architectural similarities between the space and its inspiration, Monet’s “Japanese Footbridge.” We’re proud that we accomplished our goal of engaging visitors with the space itself, using the iPad as a supplement rather than a distraction.

We’re also proud of “Jacobvision,” our approach to taking eye-level VR panoramas in the galleries without a tripod. We duct-taped a Gear360 camera to our teammate Jacob’s hat and remotely took the photos while hiding behind the walls.

What we learned

This Hackathon taught us a lot, from best practices for designing for a real space to the nuances of preparing seamless VR content. We learned that the controlled lighting of Museum environments makes for very effective AR targets, and that visitors are naturally curious about exploring the 3D augmentations up close, so much so that we’ll need to add a distance cut-off to make sure they don’t get too close to the paintings! We also learned a lot about the constraints of neural-styled artwork: certain subjects (abstracted styles, landscapes) work better than others (representational work, portraiture).
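
The distance cut-off we have in mind could be as simple as hiding the augmentation when the camera gets too close to the tracked painting. A hypothetical Python sketch: the class name and thresholds are placeholders, and it assumes the AR tracker can report camera and painting positions in meters.

```python
import math


class DistanceCutoff:
    """Hide an AR augmentation when the camera gets too close to its painting.

    Thresholds are hypothetical placeholders, in meters.
    """

    def __init__(self, hide_below: float = 0.6, show_above: float = 0.8) -> None:
        if show_above < hide_below:
            raise ValueError("show_above must be >= hide_below")
        self.hide_below = hide_below
        self.show_above = show_above
        self.visible = True

    def update(self, camera_pos, target_pos) -> bool:
        """Call once per frame with 3D positions; returns whether to draw the diorama."""
        distance = math.dist(camera_pos, target_pos)
        if self.visible and distance < self.hide_below:
            self.visible = False   # visitor stepped too close: hide the diorama
        elif not self.visible and distance > self.show_above:
            self.visible = True    # far enough again: restore the augmentation
        return self.visible
```

Using two thresholds instead of one keeps the diorama from flickering on and off when a visitor hovers right at the limit.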

What's next for day bakers

This proof of concept includes eight full Dreamscape stories, but is ready to expand at any point! We captured over 40 VR photos of immersive locations on the second floor, and tested and identified an additional 30 viable AR targets in the first-floor collection. Once the pipeline was established, we were able to largely automate the neural-style process to produce additional Dreamscape combinations, currently unused in the game. The potential to expand fits within our nonlinear narrative structure, and given the opportunity, we’d love to continue creating content and refining the experience.

One awesome feature we discussed but did not have an opportunity to explore is the integration of hotspots and additional content within the AR and VR experiences. These hotspots could encourage more close looking and a better understanding of the artwork and architecture.

We plan to continue development on this codebase and explore other narrative applications of location-based VR and AR. One key next step is to finish wrangling the iBeacons and streamline the dreamscape activation process. When all's said and done, we’d like to engage in more thorough A/B playtesting to optimize our approach and evaluate the app’s effectiveness in meeting our interpretative goals.
