-

-

IOS App bowlingMate (private as of now - will be rebranding)

-

Startup

-

home/landing page with the default back camera view

-

Record clips with the camera (or pick a clip)

-

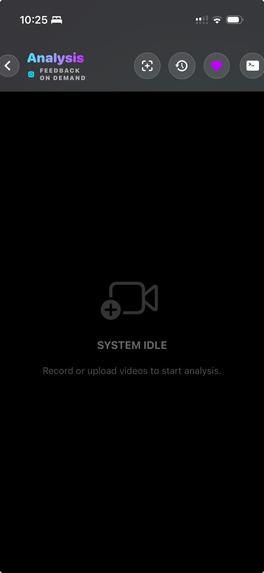

System idle if none to process

-

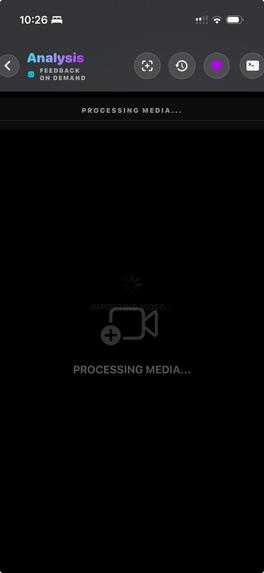

video clip picked -> processing media

-

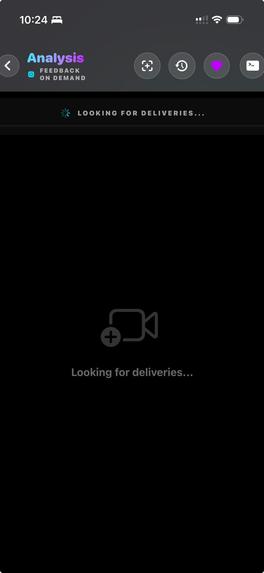

done recording -> looking for deliveries

-

found deliveries - sideways swipe to find each delivery. As of now configured to 5 seconds clips

-

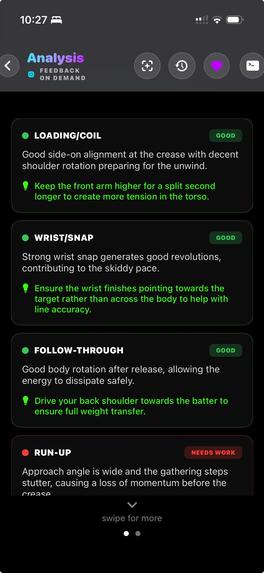

analysis summary and swipe down for more expert feedback

-

The Analyze button for deep expert analysis is clicked - spinning

-

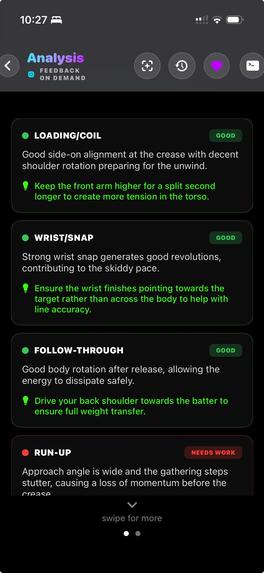

Expert breakdown - starting from the positives. Swipe downward for annotated analysis

-

Furthe expert breakdown - attention areas. Swipe down for annotated analysis

-

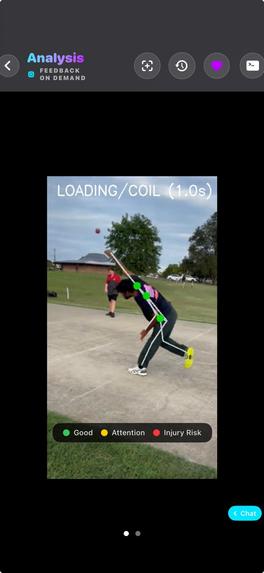

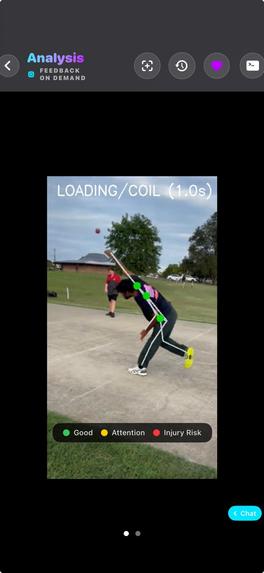

Annotated analysis. Interactive chat.

-

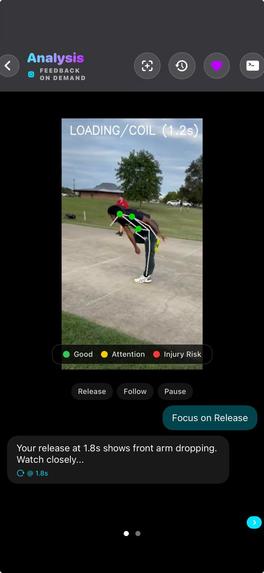

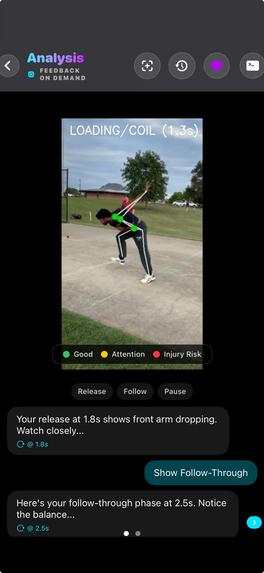

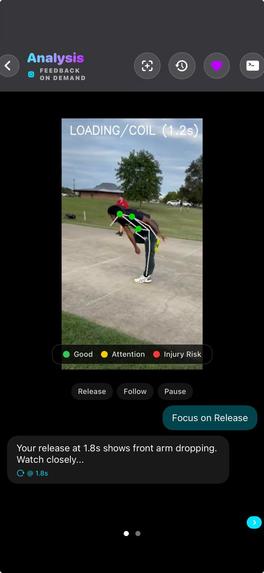

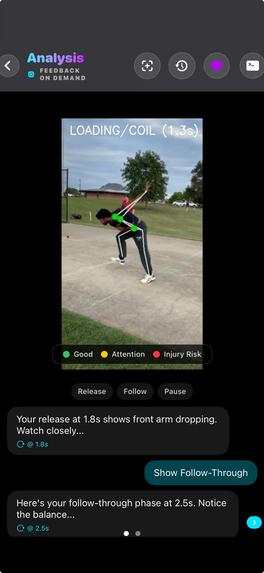

Interactive chat controlling the annotated clip to focus and highlight according to the discussion point/user chat

-

Interactive chat controlling the annotated clip to focus and highlight according to the discussion point/user chat

-

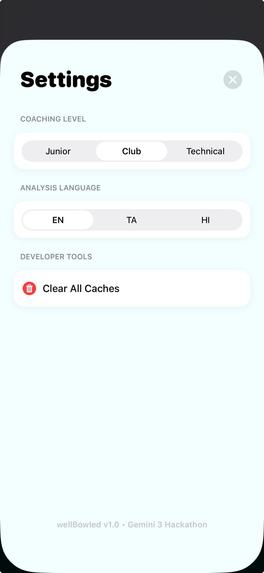

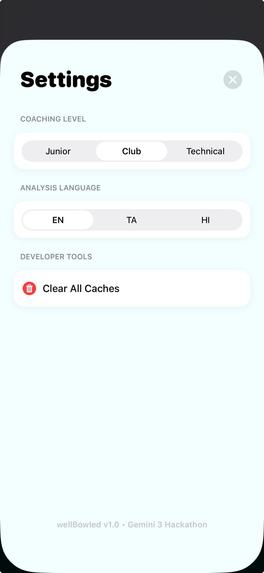

basic settings - immediate future plans

## Inspiration

Cricket is the second most popular sport on Earth, with 2.5 billion fans across 100+ countries. In cricket, bowling is the equivalent of pitching in baseball, but with a critical difference: the ball is delivered with a straight arm through an explosive, biomechanically coordinated full-body action, which makes technique crucial for speed, accuracy, and longevity (staying injury-free).

Yet most bowlers, from kids in backyards to club-level competitors, practice alone with zero feedback. Professional biomechanical analysis costs $100+ per session, requires travel to specialized facilities, and is booked weeks in advance. Coaches are overloaded: "too many players, not enough time for personal feedback."

I wanted to give every bowler (or at least me) what only professionals have: instant, objective, biomechanical feedback to complement their coach's guidance, and to democratize access to Cricket Intelligence, starting with bowling. I also wanted to see how far Gemini 3's native video understanding could go: could it replace an entire classical computer vision stack? I had tried several times in the past with traditional vision models, but never reached a good-enough solution for the effort I could put in.

## What it does

BowlingMate is an AI-powered cricket bowling analysis tool. Record yourself bowling anywhere (backyard, park, nets) and get professional technique feedback in seconds.

The pipeline has two AI stages:

The Scout (Gemini 3 Flash) scans your entire video and detects every bowling delivery by identifying the peak of the arm arc, returning a timestamp for each one. It works even with shadow bowling (no ball needed), because it reasons about the biomechanics of the human body, not ball trajectories.
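A minimal sketch of how a backend might turn the Scout's answer into a clean list of delivery timestamps. It assumes the Scout is prompted to return JSON shaped like {"deliveries": [{"release_time_s": 12.4}]}; that schema, the field name, and the merge window are hypothetical, not BowlingMate's actual format. Out-of-range values are dropped and near-duplicates merged, a cheap guard against a hallucinated or double-counted detection.

```python
import json


def parse_scout_response(raw: str, video_duration_s: float,
                         merge_window_s: float = 1.5) -> list[float]:
    """Return sorted, de-duplicated release timestamps in seconds.

    Hypothetical response shape: {"deliveries": [{"release_time_s": <float>}, ...]}
    """
    data = json.loads(raw)
    times = []
    for item in data.get("deliveries", []):
        t = item.get("release_time_s")
        # Keep only real numbers inside the video; anything else is treated
        # as malformed or hallucinated.
        if isinstance(t, (int, float)) and not isinstance(t, bool) \
                and 0.0 <= t <= video_duration_s:
            times.append(float(t))
    times.sort()

    merged: list[float] = []
    for t in times:
        # Detections closer together than merge_window_s count as one delivery.
        if merged and t - merged[-1] < merge_window_s:
            continue
        merged.append(t)
    return merged
```

For a 68-second video, a response listing 12.4, 12.9, 80.0 and 31.0 yields [12.4, 31.0]: 80.0 lies past the end of the video and 12.9 duplicates 12.4.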

The Expert (Gemini 3 Pro) takes a precisely cut 5-second clip around each delivery and performs deep analysis across 6 biomechanical areas:

- Run-up (rhythm, balance, momentum)

- Loading/Coil (hip-shoulder separation, torque generation)

- Release Action (arm path, release point consistency)

- Wrist/Snap (seam position, spin generation)

- Head/Eyes (stability at release, target focus)

- Follow-through (energy dissipation, balance, injury risk)

Each phase gets a status (GOOD / NEEDS WORK), a specific observation, and an actionable tip. The Expert also provides biomechanical insights: speed estimation, effort level, repeatability cues, and hints on areas such as elbow extension that may warrant closer review.
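The per-phase result described above maps naturally onto a small typed record. The sketch below assumes a hypothetical JSON payload of the form {"phases": [{"name", "status", "observation", "tip"}]}; the field names are illustrative, and only the two status values come from the write-up. Rejecting unknown statuses early keeps a malformed model answer from reaching the UI.

```python
import json
from dataclasses import dataclass

# The two statuses named in the write-up; anything else is rejected.
ALLOWED_STATUSES = {"GOOD", "NEEDS WORK"}


@dataclass(frozen=True)
class PhaseResult:
    name: str         # e.g. "Run-up", "Follow-through"
    status: str       # "GOOD" or "NEEDS WORK"
    observation: str  # what the model saw in this phase
    tip: str          # one actionable coaching tip


def parse_expert_phases(raw: str) -> list[PhaseResult]:
    """Parse a hypothetical {"phases": [...]} payload into PhaseResult records."""
    data = json.loads(raw)
    results = []
    for p in data["phases"]:
        # Normalise case so "good" and "GOOD" are treated the same.
        status = str(p["status"]).strip().upper()
        if status not in ALLOWED_STATUSES:
            raise ValueError(f"unexpected status {p['status']!r} for phase {p.get('name')!r}")
        results.append(PhaseResult(p["name"], status, p["observation"], p["tip"]))
    return results
```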

A MediaPipe stick-figure (joint skeleton) overlay is generated after analysis, color-coded by phase (green = good form, yellow = needs work, red = injury risk), with all of the coloring driven by the Gemini 3 response.
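One way to drive that color coding is sketched here, assuming each analysed phase is known as a frame range plus a status and a hypothetical injury_risk flag (the write-up's statuses are only GOOD / NEEDS WORK, so how "injury risk" is signalled is an assumption). The RGB values are placeholders.

```python
# Placeholder RGB colors for the skeleton overlay.
GREEN = (0, 200, 0)
YELLOW = (255, 200, 0)
RED = (220, 0, 0)
NEUTRAL = (128, 128, 128)  # frames outside any analysed phase


def phase_color(status: str, injury_risk: bool = False) -> tuple[int, int, int]:
    """Pick the skeleton color for one phase; injury risk overrides status."""
    if injury_risk:
        return RED
    if status.upper() == "GOOD":
        return GREEN
    return YELLOW  # "NEEDS WORK" or anything unexpected


def frame_colors(phase_spans, n_frames: int) -> list[tuple[int, int, int]]:
    """phase_spans: iterable of (start_frame, end_frame_exclusive, status, injury_risk)."""
    colors = [NEUTRAL] * n_frames
    for start, end, status, risk in phase_spans:
        # Clamp each span to the clip so bad frame indices cannot crash rendering.
        for i in range(max(0, start), min(end, n_frames)):
            colors[i] = phase_color(status, risk)
    return colors
```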

## How we built it

The key architectural decision: zero classical CV.

There is no YOLO, no OpenCV for detection, no frame extraction, no pose estimation in the detection pipeline. Raw MP4 video bytes go directly to Gemini 3. The model is the entire vision engine.

Backend: Python/FastAPI on Google Cloud Run. The Scout endpoint sends video bytes to gemini-3-flash-preview with a cricket-specific prompt. Videos >5MB use the Gemini File API with polling; smaller clips use inline bytes for speed. The Expert endpoint streams analysis via Server-Sent Events (SSE) using gemini-3-pro-preview, with structured JSON output (response_mime_type: "application/json") for reliable parsing.

Split-stack architecture — Flash for speed (scanning minutes of video cheaply), Pro for depth (deep reasoning on 5-second clips). This reduces API cost by ~80% compared to running Pro on everything.
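How large the saving is depends on how much of the video is "dead air" and on the Flash/Pro price ratio. A rough model, assuming cost scales linearly with seconds of video processed (a simplification, since Gemini bills per token) and using placeholder rates rather than real pricing:

```python
def split_stack_savings(video_s: float, n_deliveries: int, clip_s: float,
                        flash_rate: float, pro_rate: float) -> float:
    """Fractional saving of (Flash scan + Pro analysis) vs. Pro for both steps.

    flash_rate and pro_rate are placeholder costs per second of video,
    not real Gemini prices.
    """
    analysed_s = n_deliveries * clip_s  # seconds the Expert actually sees
    split = video_s * flash_rate + analysed_s * pro_rate
    pro_only = video_s * pro_rate + analysed_s * pro_rate
    return 1.0 - split / pro_only
```

With equal rates the split saves nothing; the saving grows with the price ratio and shrinks as more of the video consists of deliveries.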

iOS app — SwiftUI with AVFoundation. Videos are chunked and sent to the Scout in parallel. Detected deliveries are clipped using bit-stream pass-through (no re-encoding, near-instant). The Expert is triggered on-demand based on the detected delivery (the release point is set as the thumbnail/paused point in each clip for quick verification) to prevent credit waste.
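The app does its clipping on-device with AVFoundation. Since ffmpeg appears in the Built With list, here is the same idea sketched in Python with ffmpeg's stream copy (whether the backend cuts clips this way is an assumption). The 2.5-second lead before the release is a hypothetical default, and the returned offset is where a thumbnail/pause point would sit.

```python
def clip_window(release_s: float, video_duration_s: float,
                clip_s: float = 5.0, lead_s: float = 2.5) -> tuple[float, float, float]:
    """Return (start, end, release_offset) for a fixed-length clip.

    The window is shifted rather than shrunk near either end of the video;
    release_offset is the release point's position inside the clip.
    """
    start = min(release_s - lead_s, video_duration_s - clip_s)
    start = max(0.0, start)
    end = min(video_duration_s, start + clip_s)
    return start, end, release_s - start


def passthrough_cmd(src: str, dst: str, start_s: float, length_s: float) -> list[str]:
    """ffmpeg argv that copies streams without re-encoding (-c copy).

    With stream copy the cut snaps to a keyframe, so the clip may begin
    slightly before start_s.
    """
    return ["ffmpeg", "-y", "-ss", f"{start_s:.3f}", "-i", src,
            "-t", f"{length_s:.3f}", "-c", "copy", dst]
```

For a release at 30.0 s in a 68-second video, clip_window returns (27.5, 32.5, 2.5); the command list can be run with subprocess.run(cmd, check=True).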

Web demo — a basic Streamlit front-end calling the same Cloud Run back-end, so judges can try it without an iPhone and get a feel for it. https://bowlingmate-web-m4xzkste5q-uc.a.run.app/

Orchestration — LangGraph for agent workflow, Google Cloud Storage for clip persistence, SQLite for delivery history.
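A delivery-history table needs only a few columns. A minimal sketch using Python's built-in sqlite3 module; the table and column names are hypothetical, not BowlingMate's actual schema.

```python
import sqlite3

# Hypothetical schema for per-delivery history.
SCHEMA = """
CREATE TABLE IF NOT EXISTS deliveries (
    id             INTEGER PRIMARY KEY AUTOINCREMENT,
    session_id     TEXT NOT NULL,
    recorded_at    TEXT NOT NULL,  -- ISO-8601 timestamp
    release_time_s REAL NOT NULL,  -- release point within the source video
    clip_uri       TEXT,           -- e.g. a Cloud Storage object path
    analysis_json  TEXT            -- raw Expert output, if analysis was run
)
"""


def open_history(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the history database and ensure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def record_delivery(conn: sqlite3.Connection, session_id: str, recorded_at: str,
                    release_time_s: float, clip_uri=None, analysis_json=None) -> int:
    """Insert one delivery and return its row id."""
    cur = conn.execute(
        "INSERT INTO deliveries (session_id, recorded_at, release_time_s, clip_uri, analysis_json) "
        "VALUES (?, ?, ?, ?, ?)",
        (session_id, recorded_at, release_time_s, clip_uri, analysis_json),
    )
    conn.commit()
    return cur.lastrowid
```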

## Challenges we ran into

Gemini as the sole vision engine was a bet. Early on, detection accuracy was inconsistent: Gemini would sometimes hallucinate timestamps or miss deliveries against complex backgrounds and from unusual camera angles. I iterated heavily on the Scout prompt, focusing it on biomechanical motion (the straight-arm vertical rotation) rather than the ball. This was the breakthrough: bowling has a unique kinetic signature that Gemini can reliably detect.
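To illustrate what "focusing the prompt on motion, not the ball" can look like, here is a hypothetical Scout prompt template; it is not the actual BowlingMate prompt. It describes the straight-arm vertical rotation, states that shadow bowling counts, and pins down the JSON shape of the answer.

```python
# Illustrative prompt only; wording and JSON field names are hypothetical.
SCOUT_PROMPT_TEMPLATE = """\
You are analysing a {duration_s:.0f}-second video of cricket practice.
Find every bowling delivery. Identify a delivery by the bowler's body motion,
not by the ball: the bowling arm stays straight and rotates vertically over
the shoulder, peaking at release. Shadow bowling (no ball) still counts.
Ignore walking back, fielding, throwing, and warm-up arm swings.
Return JSON only: {{"deliveries": [{{"release_time_s": <seconds from start>}}]}}.
If there are no deliveries, return {{"deliveries": []}}.
"""


def build_scout_prompt(duration_s: float) -> str:
    """Fill in the duration; doubled braces keep the literal JSON example intact."""
    return SCOUT_PROMPT_TEMPLATE.format(duration_s=duration_s)
```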

Context window size vs accuracy. The iOS app initially sent 10-second video chunks (with overlaps) to the Scout — results were inconsistent. When I switched to sending a full 69-second video as a single request, detection accuracy jumped to 100% over 20+ consecutive attempts. Gemini 3 reasons better about temporal motion with more surrounding context. This was a key architectural discovery — more context = dramatically better accuracy.
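For reference, chunking with overlap works roughly like this: fixed windows overlap so a delivery near a boundary appears whole in at least one chunk, and each chunk's local timestamps are shifted back onto the global timeline before duplicates from the overlap are merged. The chunk length, overlap, and merge window below are hypothetical values.

```python
def chunk_windows(duration_s: float, chunk_s: float = 10.0,
                  overlap_s: float = 2.0) -> list[tuple[float, float]]:
    """Split [0, duration_s] into overlapping (start, end) windows."""
    if not 0 <= overlap_s < chunk_s:
        raise ValueError("overlap must be non-negative and shorter than a chunk")
    step = chunk_s - overlap_s
    windows = []
    start = 0.0
    while True:
        end = min(start + chunk_s, duration_s)
        windows.append((start, end))
        if end >= duration_s:
            break
        start += step
    return windows


def merge_chunk_detections(per_chunk: list[list[float]],
                           windows: list[tuple[float, float]],
                           merge_window_s: float = 1.0) -> list[float]:
    """Map chunk-local timestamps to global time and drop overlap duplicates."""
    global_times = sorted(start + t
                          for (start, _), ts in zip(windows, per_chunk)
                          for t in ts)
    merged: list[float] = []
    for t in global_times:
        if merged and t - merged[-1] < merge_window_s:
            continue  # same delivery seen by two overlapping chunks
        merged.append(t)
    return merged
```

Every boundary is a chance for one chunk to see only half of a run-up, which is consistent with the finding that a single full-length request behaved better.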

File size routing. Inline bytes work well for small clips but time out on longer videos. I built conditional routing: videos >5MB go through the File API with polling, and videos ≤5MB go inline. This cut Expert latency by ~5 seconds.
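The routing rule and the polling loop can be sketched without any SDK calls. Whether "5MB" means 5 × 2^20 or 5 × 10^6 bytes is an assumption here, and get_state is a hypothetical callable standing in for a File API status lookup (with the google-genai SDK that would typically be client.files.get(...) after client.files.upload(...)); the PROCESSING/ACTIVE/FAILED state names are assumed to mirror the File API's.

```python
import time
from typing import Callable

# ">5MB" from the write-up; the binary interpretation is an assumption.
INLINE_LIMIT_BYTES = 5 * 1024 * 1024


def choose_upload_route(size_bytes: int, limit: int = INLINE_LIMIT_BYTES) -> str:
    """Return "inline" for small clips and "file_api" for larger videos."""
    if size_bytes < 0:
        raise ValueError("size_bytes must be non-negative")
    return "file_api" if size_bytes > limit else "inline"


def wait_until_ready(get_state: Callable[[], str], timeout_s: float = 120.0,
                     interval_s: float = 2.0) -> None:
    """Poll get_state() until it reports "ACTIVE"; raise on failure or timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        state = get_state()
        if state == "ACTIVE":
            return
        if state == "FAILED":
            raise RuntimeError("file processing failed")
        if time.monotonic() >= deadline:
            raise TimeoutError("file was not ready in time")
        time.sleep(interval_s)
```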

Latency management. The Scout takes ~15s for a 60-second video; the Expert takes ~28s for a 5-second clip. These numbers varied considerably across test sessions. I had to design the UX around this latency: SSE streaming for Expert feedback, and user-triggered analysis to prevent wasted API calls.
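SSE streaming lets partial Expert output reach the app while the model is still generating. A minimal sketch of the wire format: each message is one or more "data:" lines (plus an optional "event:" line) ending with a blank line. The "chunk"/"done" event names and the {"text": ...} payload are hypothetical.

```python
import json
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Format one Server-Sent Events message; a blank line ends the message."""
    lines = []
    if event:
        lines.append(f"event: {event}")
    # A multi-line payload needs one "data:" field per line.
    lines.extend(f"data: {line}" for line in data.split("\n"))
    return "\n".join(lines) + "\n\n"


def stream_expert(chunks: Iterable[str]) -> Iterator[str]:
    """Wrap streamed model text chunks as SSE messages, then signal completion."""
    for chunk in chunks:
        yield sse_event(json.dumps({"text": chunk}), event="chunk")
    yield sse_event("{}", event="done")
```

In FastAPI, a generator like this is typically returned as StreamingResponse(stream_expert(...), media_type="text/event-stream").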

## Accomplishments that we're proud of

- 4/4 delivery detection on my test video (68 seconds, 4 deliveries) — timestamps accurate within ±1 second. No training data. No custom models. Just a prompt.

- 100% accuracy with full context. Sending the complete video (not chunks) to Gemini 3 Flash achieved perfect detection over 20+ consecutive runs — a key discovery about how context window size affects multimodal reasoning.

- Shadow bowling works. The Scout detects bowling actions without a ball present — something impossible with classical object detection approaches. This is only possible because Gemini understands human motion, not object trajectories.

- Zero classical CV architecture. We deleted the entire traditional pipeline (YOLO, OpenCV, frame extraction) and trusted Gemini 3 to be the sole vision engine. And it works in production.

- Split-stack cost efficiency. Flash scans 55 seconds of "dead air" cheaply, Pro only analyzes the 5 seconds that matter. ~80% cost reduction.
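Detection figures like "4/4 within ±1 second" presuppose hand-labelled release times and a matching rule. A minimal scoring sketch using greedy nearest-neighbour matching within a tolerance; this is one reasonable evaluation method, not necessarily the one used here.

```python
def match_detections(detected: list[float], truth: list[float],
                     tolerance_s: float = 1.0) -> tuple[int, int, int]:
    """Greedily match detections to ground truth within tolerance_s.

    Returns (true_positives, false_positives, missed).
    """
    unmatched = sorted(truth)
    tp = fp = 0
    for d in sorted(detected):
        # Closest ground-truth delivery that has not been matched yet.
        best = min(unmatched, key=lambda t: abs(t - d), default=None)
        if best is not None and abs(best - d) <= tolerance_s:
            unmatched.remove(best)
            tp += 1
        else:
            fp += 1
    return tp, fp, len(unmatched)
```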

## What we learned

- Prompt engineering > model engineering for sports. Cricket bowling has a unique biomechanical signature (straight-arm vertical rotation) that's rare outside the sport. Describing this motion precisely in the prompt was more effective than training a custom classifier.

- Context window size matters for video reasoning. 10-second chunks gave inconsistent results. The full 69-second video gave 100% accuracy. Gemini 3 reasons about temporal motion better with more surrounding context — a non-obvious finding that shaped the architecture.

- Structured JSON output is essential for production. Without response_mime_type: "application/json", every Gemini response needed regex parsing and retry logic. Structured output made the pipeline reliable.

- The Flash/Pro split is powerful. Using two models with different cost/latency profiles in one pipeline is an underused pattern. Flash for breadth, Pro for depth.

- Native video understanding changes what's possible. Sending raw video bytes (no frame extraction) means the model reasons about temporal motion — something that frame-by-frame approaches fundamentally cannot do.

## What's next for BowlingMate

BowlingMate is a proving ground for a larger vision: a universal AI analysis platform for any physical, repetitive activity. Cricket bowling was chosen because I personally have the domain expertise to validate the AI's output and the overall system design, but the underlying architecture (detect the activity → clip the moment → deep biomechanical analysis) generalizes to any discipline where technique matters.

Near-term (BowlingMate):

- The Researcher — RAG-powered personalization: "Your release resembles XXXX — here's how to optimize that action." FAISS vector store is scaffolded, knowledge base needs expanding.

- The Historian — Progress tracking across sessions: "Your release point is 20% more consistent than last week." SQLite persistence exists; trend visualization is next.

- On-device pre-filtering — Apple Vision Framework or MoveNet for instant (<1s) bowling detection on-device, with Gemini reserved for deep analysis only.

- Multi-language feedback — Tamil, Hindi, Urdu. The prompt already accepts a language parameter.
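The Historian's "20% more consistent" message needs a concrete consistency measure. One hypothetical choice: treat consistency as the spread (population standard deviation) of a per-delivery release measurement, and report the relative reduction in spread between two sessions. Which measurement to use (for example a normalised release height) is an assumption.

```python
from statistics import pstdev


def consistency_improvement(last_week: list[float], this_week: list[float]) -> float:
    """Relative reduction in spread; 0.2 means "20% more consistent".

    Inputs are one (hypothetical) release measurement per delivery.
    """
    if len(last_week) < 2 or len(this_week) < 2:
        raise ValueError("need at least two deliveries per session")
    before = pstdev(last_week)
    after = pstdev(this_week)
    if before == 0:
        raise ValueError("baseline session has no variation to improve on")
    return (before - after) / before
```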

Long-term (the platform): the same Scout → Expert pipeline can analyze any repetitive physical action where form matters: other sports (batting, tennis serve, golf swing), yoga poses, calisthenics, dancing, martial arts, weightlifting, and physiotherapy exercises. The findings from BowlingMate (context window sizing, prompt engineering for biomechanics, split-stack cost optimization) become the foundation for a universal movement analysis platform. At the same time, I know the caveats of a generalized solution, so I am giving equal or greater priority to a specialized, well-focused app with deep, thorough analysis of the cricket bowling action and of the delivery itself (ball tracking, etc.).

## Built With

- avfoundation

- docker

- fastapi

- ffmpeg

- github-actions

- google-cloud

- google-cloud-run

- google-gemini-3-flash

- google-gemini-3-pro

- langgraph

- mediapipe

- python

- server-sent-events

- sqlite

- swift

- swiftui
