-

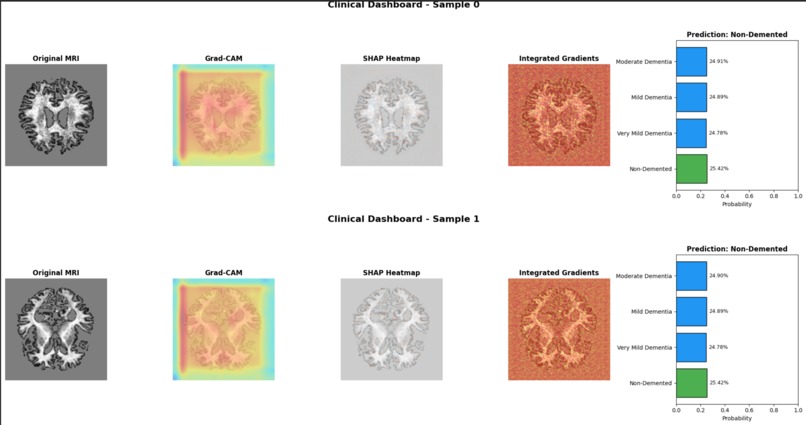

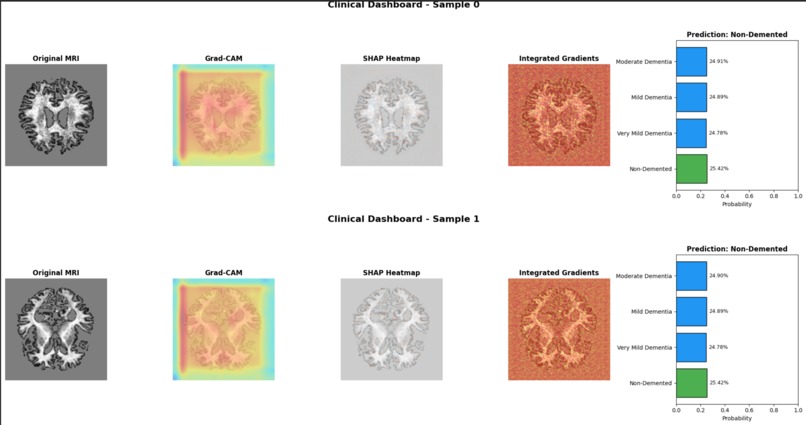

Clinical Dashboard – Side-by-Side Comparison

-

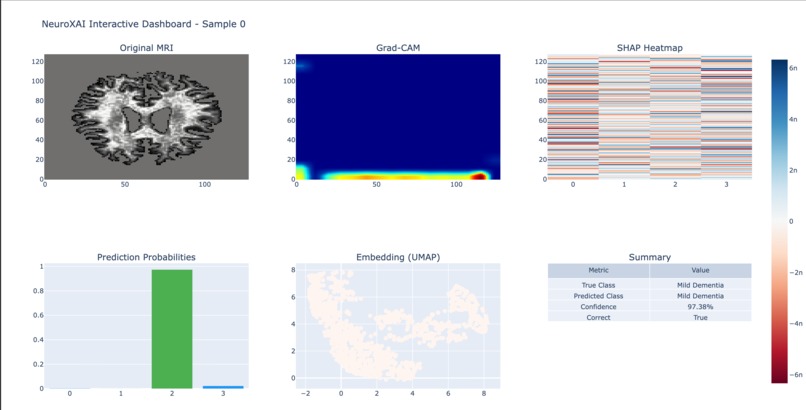

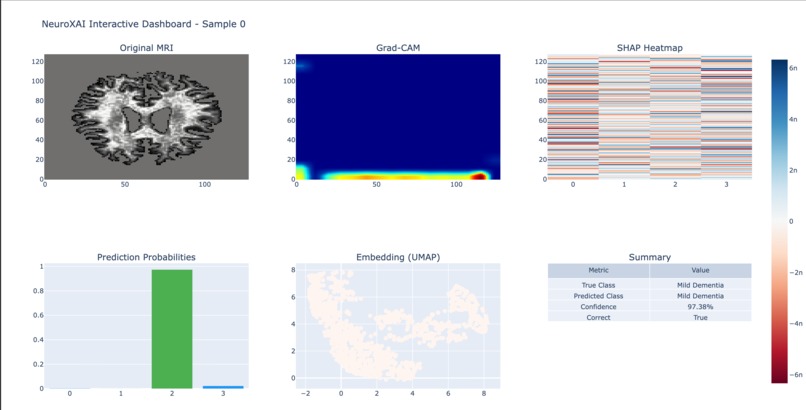

Plotly Interactive Clinical Dashboard

-

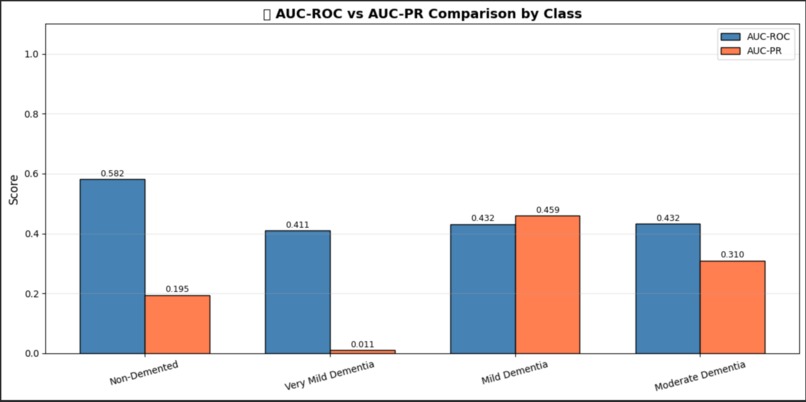

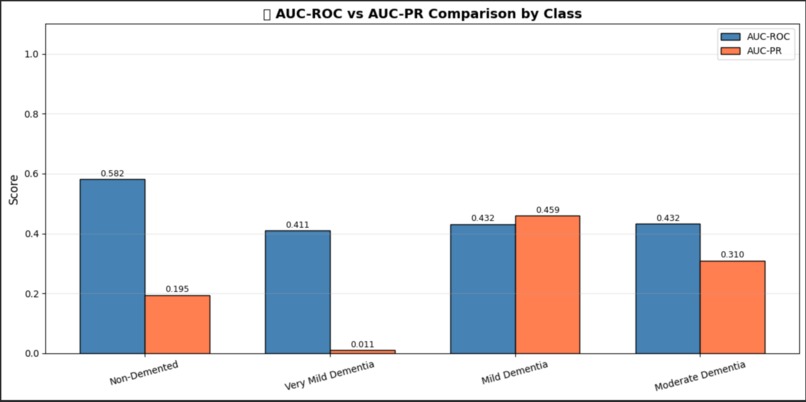

Precision-Recall Curves

-

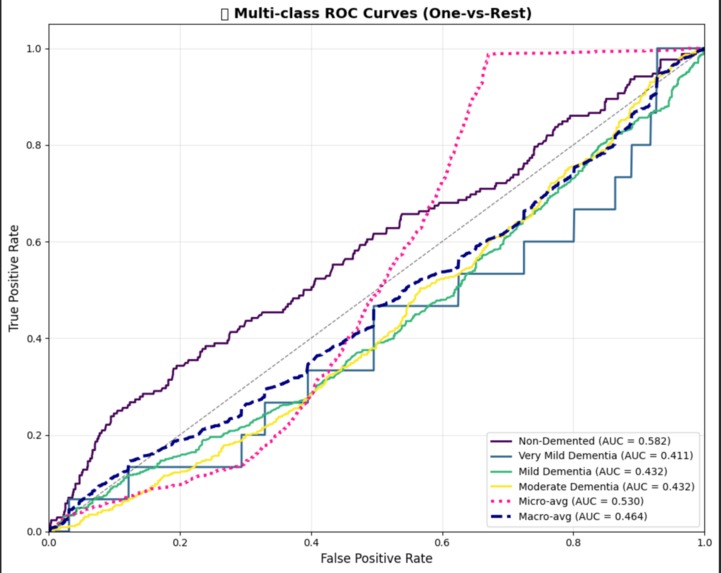

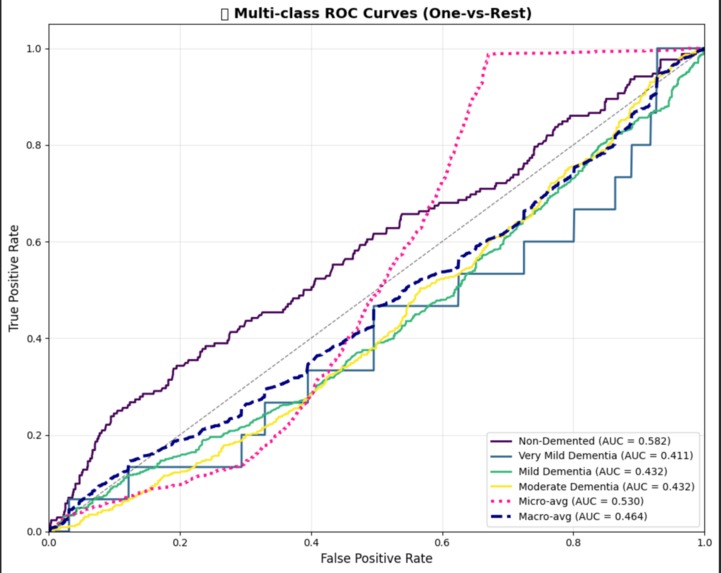

ROC Curves (Multi-class)

-

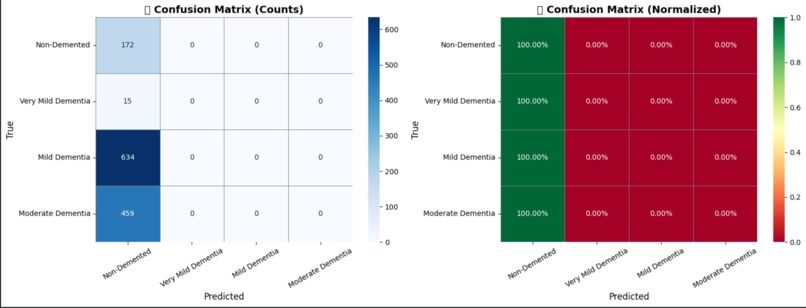

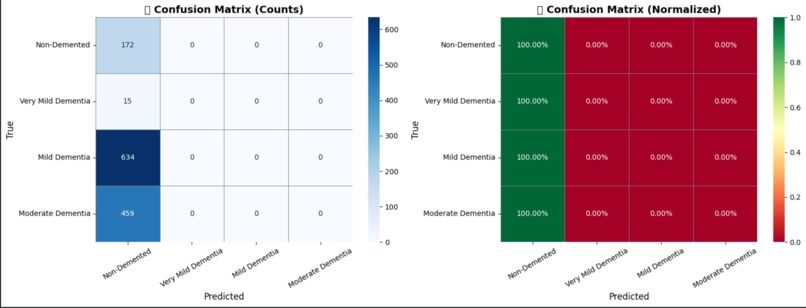

Model Evaluation – Confusion Matrix

-

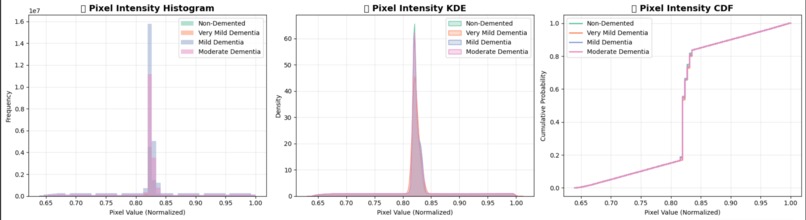

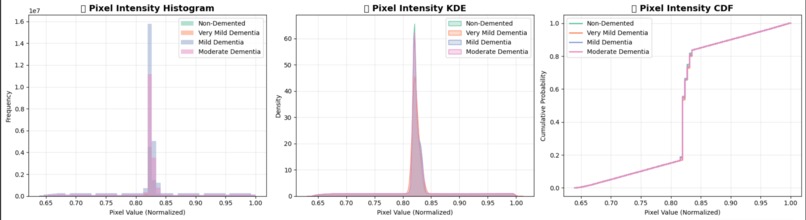

Pixel Intensity Distribution Analysis

-

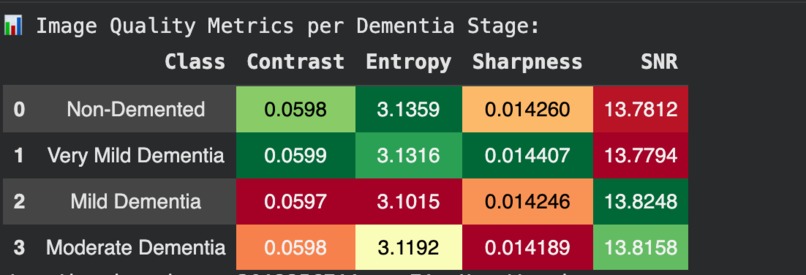

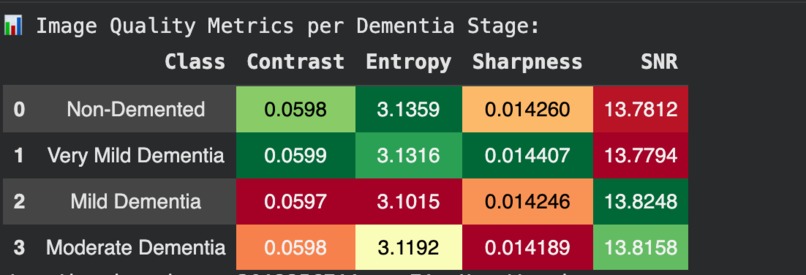

Image Quality Metrics

-

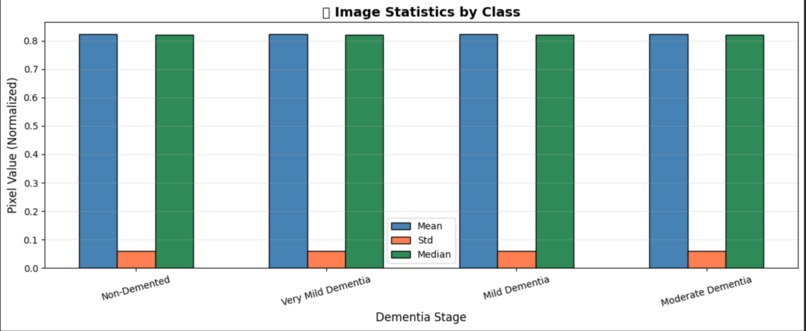

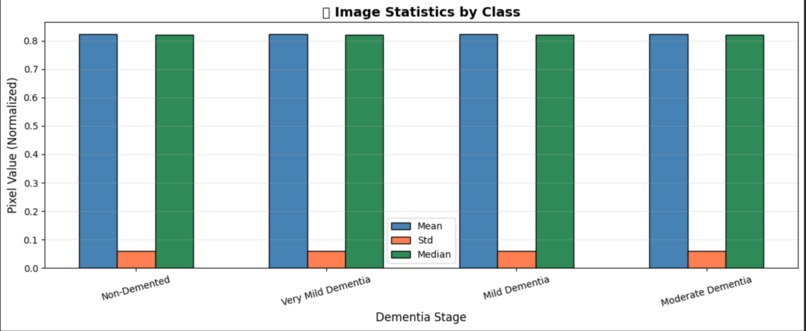

Exploratory Data Analysis – Image Statistics

-

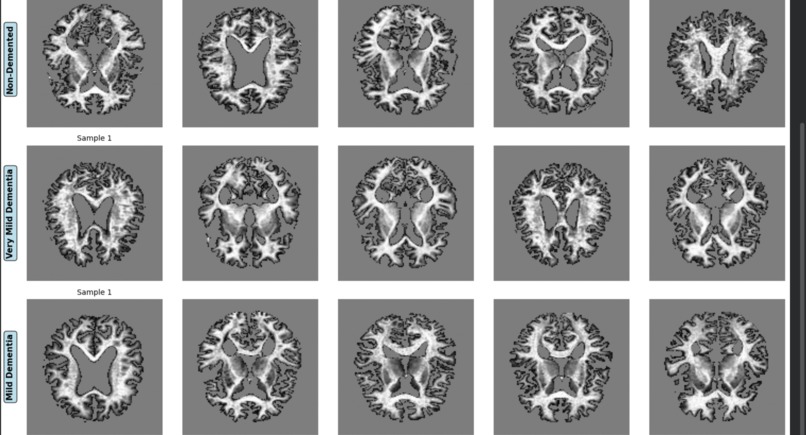

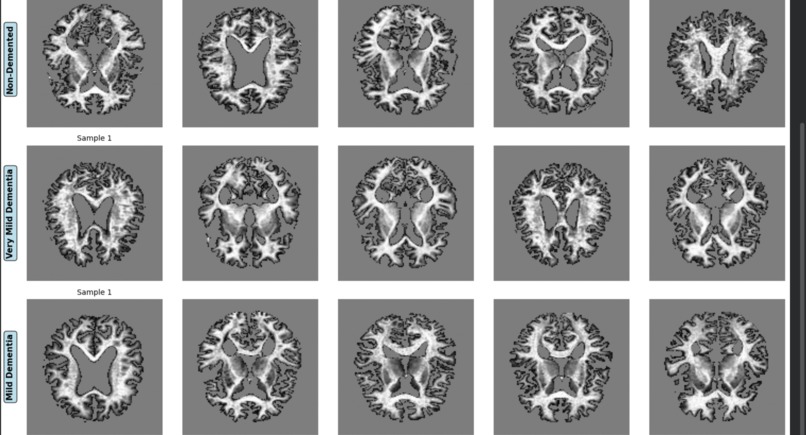

Sample MRI Images Grid by Class

-

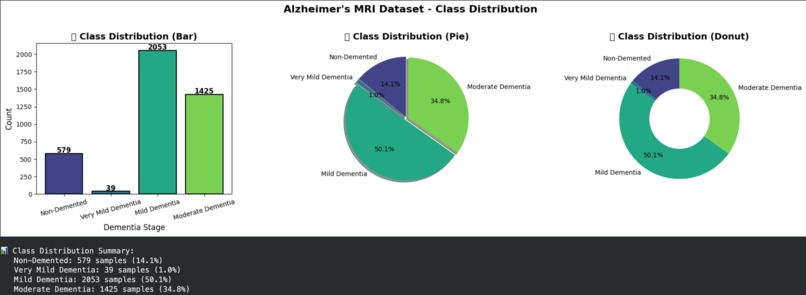

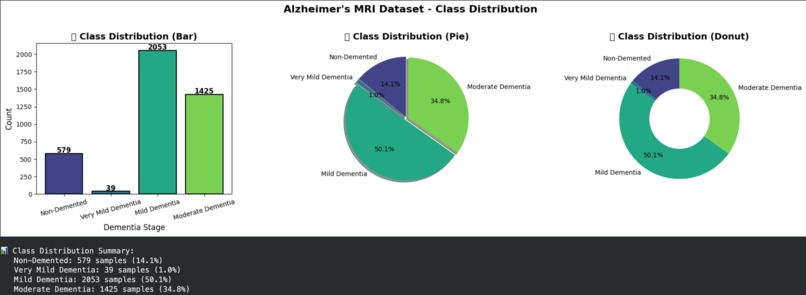

Exploratory Data Analysis – Class Distribution

-

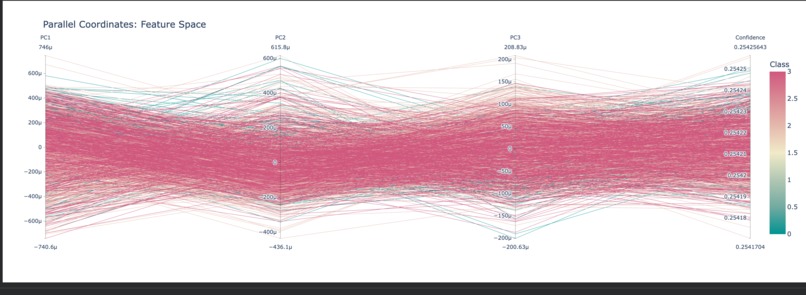

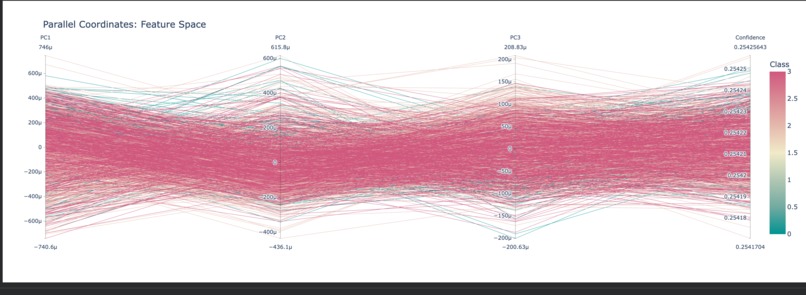

Parallel Coordinates Plot

-

Sunburst Chart – Hierarchical Prediction Analysis

-

Sankey Diagram – Prediction Flow

-

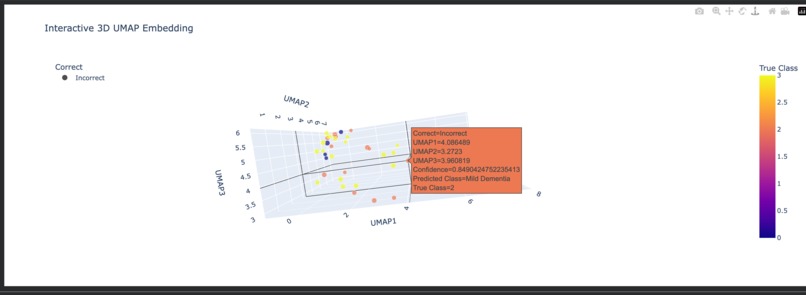

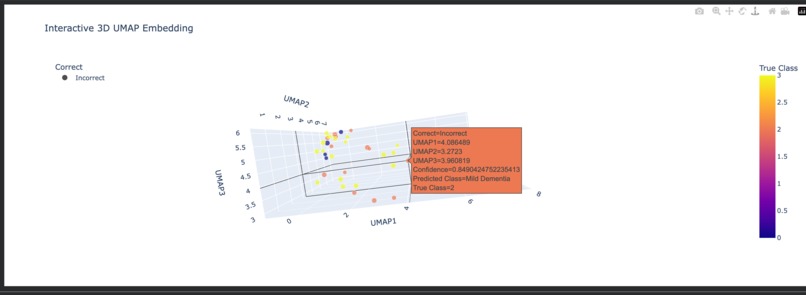

Interactive 3D Scatter Plot (Plotly)

NeuroXAI: An Explainable AI Framework for Alzheimer’s Disease Detection

Abstract

Alzheimer’s disease (AD) is a progressive neurodegenerative disorder with significant clinical, social, and economic impact. Although deep learning models have demonstrated expert-level performance in medical image classification, their adoption in clinical environments is limited by poor interpretability. NeuroXAI addresses this limitation by introducing an explainable artificial intelligence framework that combines high-accuracy MRI-based classification with rigorous, clinically meaningful visual explanations. The system is designed to function as a decision-support tool for neurologists, enabling transparent, verifiable, and biologically aligned predictions across multiple stages of Alzheimer’s disease.

Problem Statement

Current AI-based diagnostic systems for Alzheimer’s disease largely operate as black-box models, providing probabilistic predictions without insight into the underlying decision process. In high-stakes medical settings, such opacity undermines clinical trust and poses risks, including misdiagnosis, delayed intervention, and ethical concerns. There is a critical need for AI systems that not only achieve high diagnostic accuracy but also provide interpretable, evidence-driven reasoning aligned with established neurological biomarkers.

Proposed Solution

NeuroXAI is an interpretable deep learning framework that delivers stage-wise Alzheimer’s disease classification while simultaneously exposing the anatomical regions responsible for each prediction. The system is designed to act as a visual second opinion for clinicians, bridging the trust gap between automated inference and human medical judgment.

Core Capabilities

Stage-Level Classification: Classifies MRI scans into four clinically defined categories: Non-Demented, Very Mild Dementia, Mild Dementia, and Moderate Dementia.

Explainable Inference: Employs SHAP and Grad-CAM to generate pixel-level attribution maps overlaid on MRI scans, highlighting regions associated with known markers such as hippocampal atrophy and ventricular enlargement.

Clinical Validation Interface: Provides side-by-side visualization of raw scans, saliency maps, and prediction confidence scores to support rapid clinical verification.
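To make the Grad-CAM step concrete, here is a minimal NumPy sketch of how a heatmap is typically derived from one convolutional layer. It is an illustration under stated assumptions, not the project's actual code: the function names, the 16×16×8 layer shape, and the random inputs are all hypothetical, and in practice the activations and gradients would come from the trained model.

```python
import numpy as np

def grad_cam(feature_maps: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Return a Grad-CAM heatmap scaled to [0, 1], shape (H, W).

    feature_maps: activations of a chosen conv layer, shape (H, W, K).
    gradients:    d(class score) / d(feature_maps), same shape.
    """
    # Channel weights: average each channel's gradient over all positions.
    weights = gradients.mean(axis=(0, 1))  # shape (K,)
    # Weighted sum over channels; ReLU keeps only positive evidence.
    cam = np.maximum(np.tensordot(feature_maps, weights, axes=([2], [0])), 0.0)
    # Scale to [0, 1]; an all-zero map is returned unchanged.
    peak = cam.max()
    return cam / peak if peak > 0 else cam

def upsample_nearest(heatmap: np.ndarray, factor: int) -> np.ndarray:
    """Nearest-neighbour upsampling so the heatmap matches the scan size."""
    return np.kron(heatmap, np.ones((factor, factor)))

# Hypothetical layer output: 16x16 spatial grid with 8 channels.
rng = np.random.default_rng(0)
activations = rng.random((16, 16, 8))
grads = rng.standard_normal((16, 16, 8))
heatmap = upsample_nearest(grad_cam(activations, grads), 8)  # 128x128 overlay
```

The upsampled heatmap can then be alpha-blended over the grayscale scan for the side-by-side view; smoother interpolation than nearest-neighbour is a common choice.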

System Architecture

Data Engineering

Biomedical imaging data stored in Parquet format is decoded using custom byte-stream parsers. Binary data is reconstructed into 128×128 grayscale matrices with localized intensity normalization to preserve anatomical fidelity.
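The decoding step could look roughly like the sketch below. Several details are assumptions rather than facts from the project: that each Parquet row carries an encoded image (for example PNG or JPEG) as raw bytes, that Pillow can read it, and that "localized" normalization means per-image min-max scaling. The function name `decode_scan` is hypothetical.

```python
import io

import numpy as np
from PIL import Image

def decode_scan(image_bytes: bytes, size: int = 128) -> np.ndarray:
    """Decode encoded image bytes into a (size, size) float32 array in [0, 1]."""
    # Assumption: the bytes hold a standard encoded image that Pillow can open.
    img = Image.open(io.BytesIO(image_bytes)).convert("L")  # force grayscale
    if img.size != (size, size):
        img = img.resize((size, size), Image.BILINEAR)
    pixels = np.asarray(img, dtype=np.float32)
    # Per-image min-max normalization (one reading of "localized").
    lo, hi = pixels.min(), pixels.max()
    if hi == lo:
        # Constant image: nothing to scale, return zeros.
        return np.zeros_like(pixels)
    return (pixels - lo) / (hi - lo)
```

Rows would typically be loaded first with a Parquet reader such as `pandas.read_parquet`, with the byte column passed to `decode_scan` one scan at a time; the column name depends on the dataset.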

Deep Learning Model

A custom Convolutional Neural Network (CNN) architecture enhanced with attention mechanisms is used to prioritize gray matter density and structural degeneration. Class-weighted loss functions are applied to improve sensitivity to early-stage Alzheimer’s progression.
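As an illustration of class weighting, the sketch below computes inverse-frequency weights and applies them to a cross-entropy loss. The weighting formula (total samples divided by number of classes times class count) is a common "balanced" heuristic and is an assumption here; the project's actual weights and loss are not specified. Function names are hypothetical.

```python
import numpy as np

def balanced_class_weights(labels: np.ndarray, num_classes: int) -> np.ndarray:
    """Weight each class by N / (K * n_c); classes with no samples get 0."""
    counts = np.bincount(labels, minlength=num_classes).astype(np.float64)
    weights = np.zeros(num_classes)
    present = counts > 0
    weights[present] = counts.sum() / (num_classes * counts[present])
    return weights

def weighted_cross_entropy(probs: np.ndarray, labels: np.ndarray,
                           weights: np.ndarray, eps: float = 1e-7) -> float:
    """Cross-entropy where each sample is scaled by its class weight.

    probs:  predicted class probabilities, shape (N, K).
    labels: integer class ids, shape (N,).
    The result is normalized by the sum of sample weights (a design choice).
    """
    p_true = np.clip(probs[np.arange(len(labels)), labels], eps, 1.0)
    sample_w = weights[labels]
    return float(np.sum(-sample_w * np.log(p_true)) / np.sum(sample_w))

# Toy example: class 1 is rare, so its errors count three times as much.
toy_labels = np.array([0, 0, 0, 1])
toy_weights = balanced_class_weights(toy_labels, num_classes=2)
```

In Keras, a similar effect is usually obtained by passing a `{class_index: weight}` dictionary to the `class_weight` argument of `model.fit`.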

Explainability Module

SHAP GradientExplainer is integrated to compute feature-attribution values grounded in cooperative game theory. GradientExplainer approximates Shapley values using expected gradients, so the resulting importance scores rest on a principled, additive attribution scheme rather than on ad hoc heuristics.
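For readers unfamiliar with the Shapley value, the following toy sketch computes it exactly by enumerating every coalition. It only illustrates the definition: the three "players" and the value function are invented for the example, and exact enumeration grows exponentially with the number of features, which is why image models rely on approximations instead.

```python
from itertools import combinations
from math import factorial

def shapley_values(players, value):
    """Exact Shapley values for a cooperative game.

    players: list of hashable player ids.
    value:   function mapping a frozenset of players to a payoff.
    """
    n = len(players)
    phi = {}
    for p in players:
        others = [q for q in players if q != p]
        total = 0.0
        for k in range(n):
            # Probability weight of a coalition of size k that excludes p.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = frozenset(coalition)
                # Marginal contribution of p when joining coalition s.
                total += weight * (value(s | {p}) - value(s))
        phi[p] = total
    return phi

def toy_value(s):
    """Hypothetical payoff: a adds 10, b adds 4, a and b together add 6 more."""
    return 10 * ("a" in s) + 4 * ("b" in s) + 6 * ("a" in s and "b" in s)

phi = shapley_values(["a", "b", "c"], toy_value)
```

The interaction bonus is split evenly between `a` and `b`, the irrelevant player `c` receives zero, and the values sum to the payoff of the full coalition (the efficiency property).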

Implementation Challenges

Non-Standard Data Formats: Transitioning from conventional image formats to Parquet-based biomedical data required custom decoding and reconstruction pipelines.

Early-Stage Signal Detection: Subtle structural changes in very mild dementia necessitated architectural tuning and loss rebalancing.

Noise in Saliency Maps: Preprocessing enhancements, including skull-stripping logic, were implemented to ensure that explanations focus exclusively on relevant brain tissue.
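One simple way to keep saliency away from non-tissue pixels is to mask the attribution map with a foreground mask, as sketched below. This is a crude intensity threshold standing in for the skull-stripping logic, which the write-up does not detail: it removes dark background only and does not separate skull from brain. The function names and the 0.1 threshold are hypothetical.

```python
import numpy as np

def foreground_mask(scan: np.ndarray, threshold: float = 0.1) -> np.ndarray:
    """Boolean mask of pixels brighter than `threshold` (scan scaled to [0, 1])."""
    return scan > threshold

def mask_saliency(saliency: np.ndarray, scan: np.ndarray,
                  threshold: float = 0.1) -> np.ndarray:
    """Zero out attribution values that fall outside the foreground mask."""
    return np.where(foreground_mask(scan, threshold), saliency, 0.0)

def outside_fraction(saliency: np.ndarray, scan: np.ndarray,
                     threshold: float = 0.1) -> float:
    """Share of absolute attribution mass lying outside the mask (diagnostic)."""
    total = np.abs(saliency).sum()
    if total == 0:
        return 0.0
    inside = np.abs(mask_saliency(saliency, scan, threshold)).sum()
    return float(1.0 - inside / total)
```

A high `outside_fraction` before masking suggests the model is attending to background or border artifacts, which is worth investigating rather than only hiding.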

Outcomes and Insights

- Demonstrated that interpretability significantly enhances clinical trust without compromising predictive performance.

- Observed strong alignment between model-generated saliency maps and established neurological biomarkers.

- Achieved efficient inference through mixed-precision training, supporting deployment in resource-constrained clinical environments.

Future Scope

3D Volumetric Analysis: Extend the framework to full 3D MRI volumes for precise quantification of brain atrophy.

Multimodal Learning: Integrate neuroimaging data with genetic and clinical biomarkers for earlier risk prediction.

Longitudinal Modeling: Analyze temporal MRI sequences to forecast disease progression at the individual patient level.

Conclusion

NeuroXAI demonstrates that explainability is not a trade-off but a requirement for clinically viable AI systems. By unifying diagnostic accuracy with transparent reasoning, NeuroXAI represents a step toward trustworthy, deployable AI in neurological healthcare.

Built With

- keras

- pil

- python

- seaborn

- shap

- tensorflow
