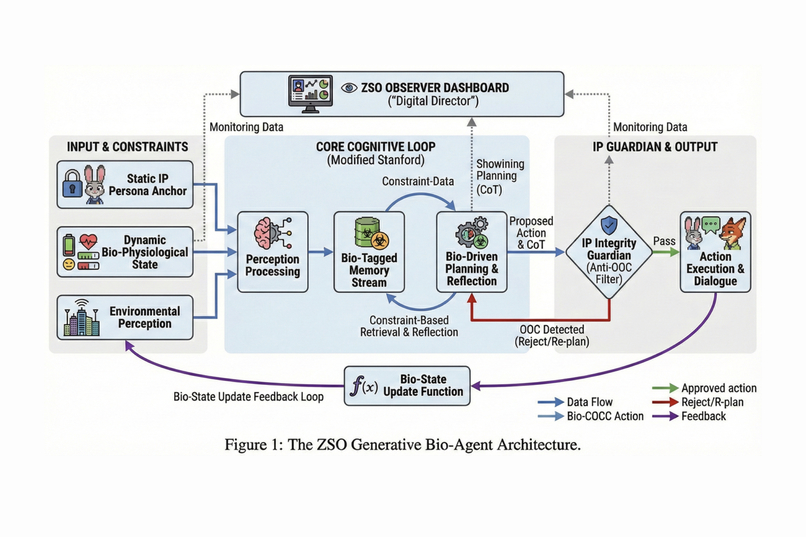

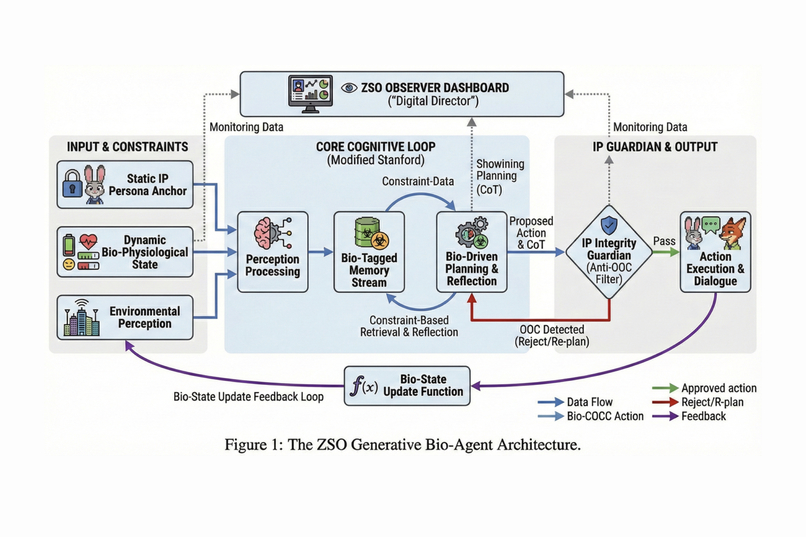

The ZSO Generative Bio-Agent Architecture and core cognitive loop.

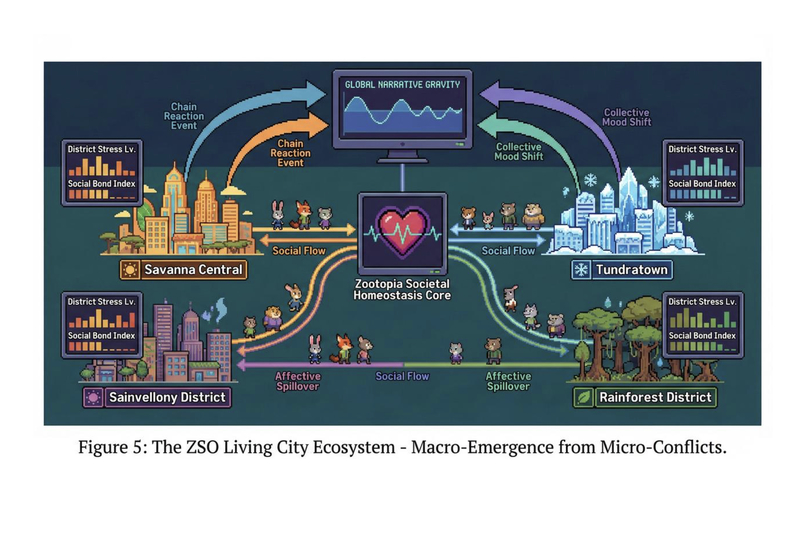

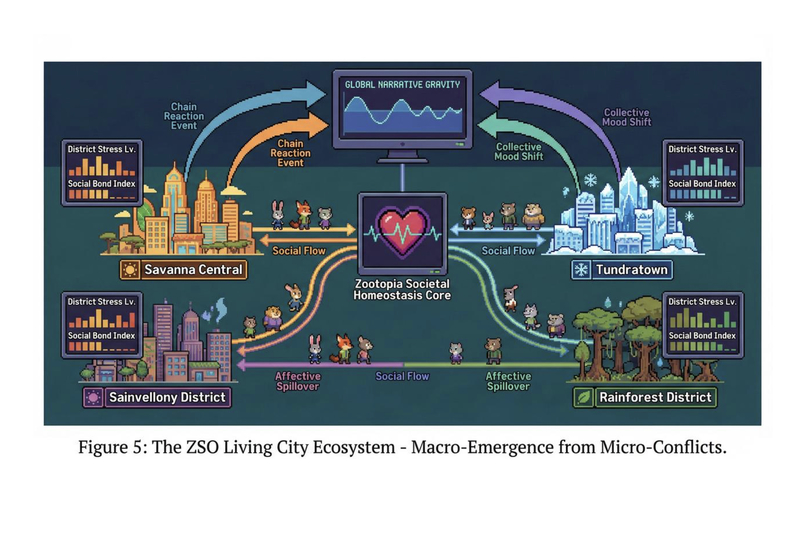

The ZSO Living City Ecosystem showing macro-emergence and societal homeostasis.

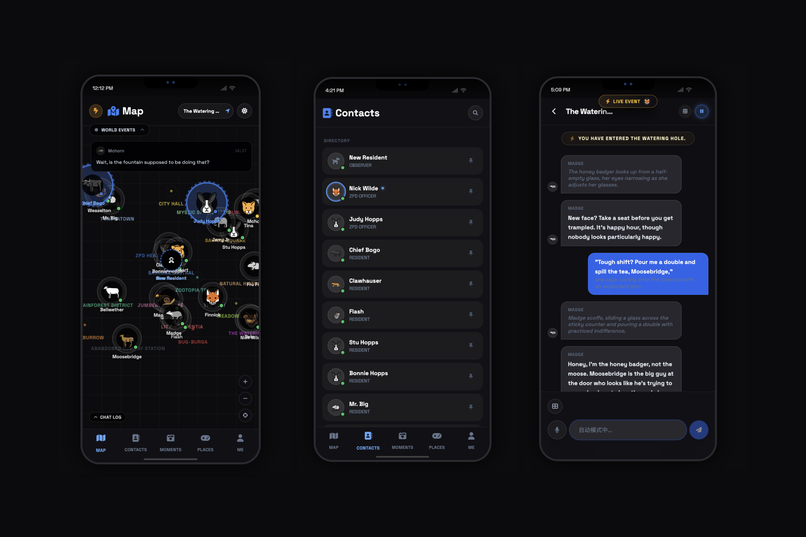

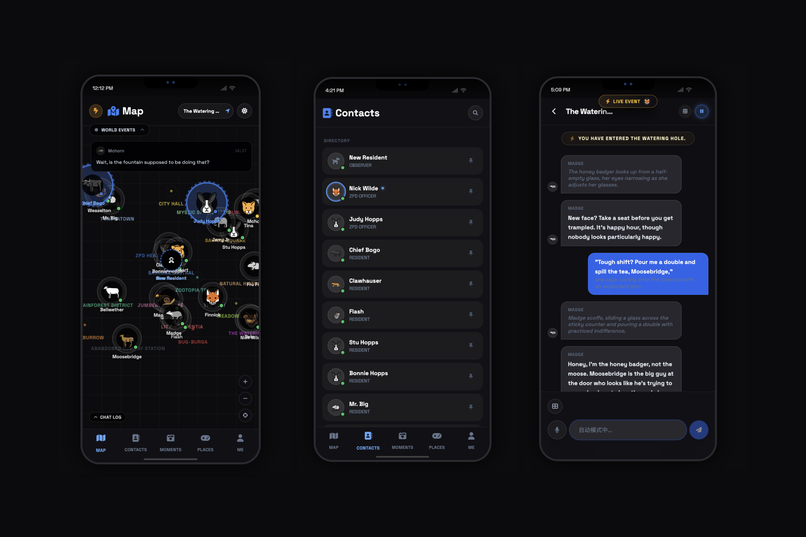

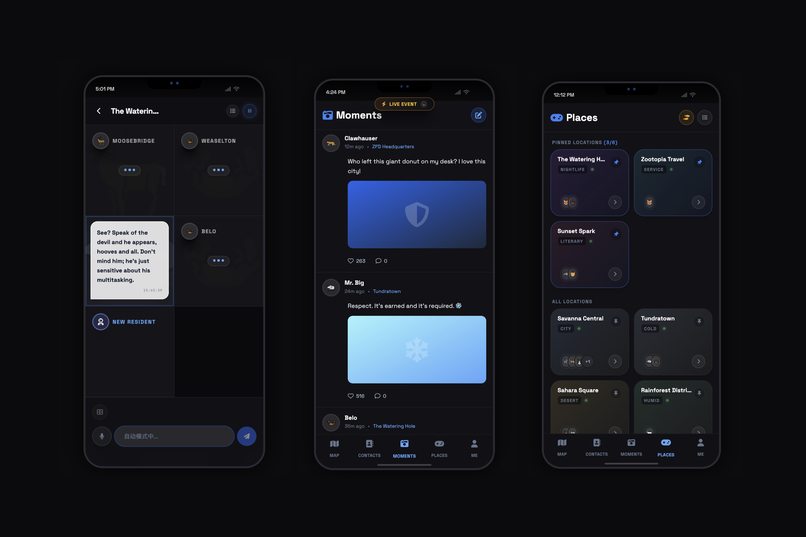

Mobile UI design for Map, Contacts list, and Chat interfaces.

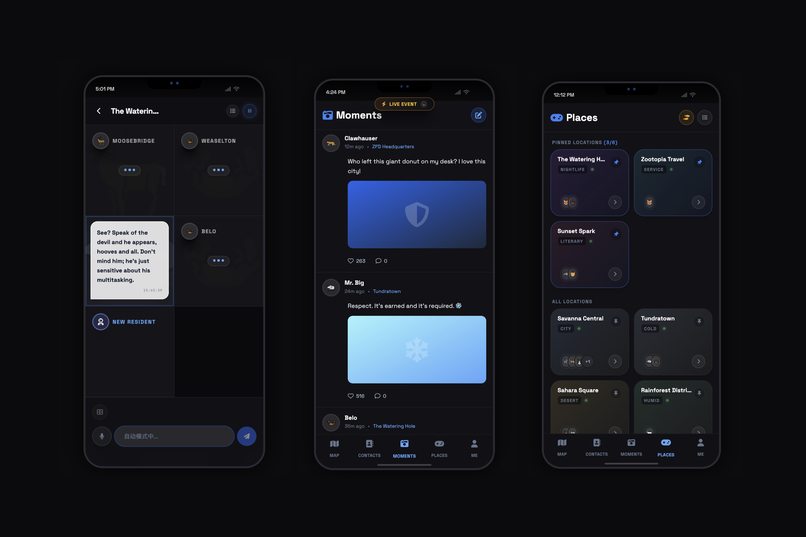

Mobile UI design for "Moments" social feed and city "Places".

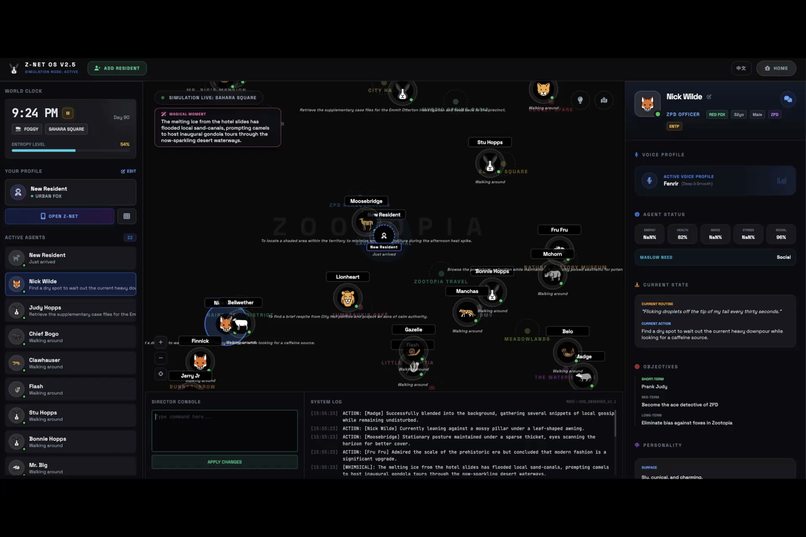

Dashboard showing agent status and real-time system logs.

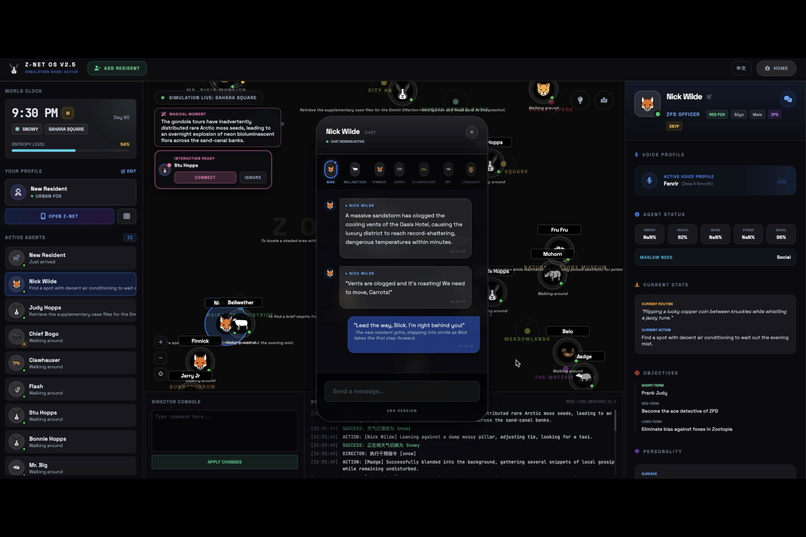

Chat interface and an interactive narrative session with a character.

Inspiration

Zootopia Simulation Observer (ZSO) grew out of a desire to move beyond static, reactive "chatbot" interactions. We envisioned a living digital ecosystem where AI agents don't just wait for user input; they live, think, and socialize independently.

Inspired by the vibrant world of Zootopia and the concept of "simulation theory," we wanted to explore the duality of presence: being both a God-like director monitoring the city's entropy and a humble resident navigating its streets. This led to our core design philosophy: the Observer Dashboard vs. the Resident Smartphone.

What it does

ZSO is a high-fidelity multi-agent simulation where every inhabitant is an autonomous entity:

- Autonomous Living: Agents like Nick Wilde and Judy Hopps possess deep psychological profiles (MBTI, Maslow needs), daily routines, and evolving social bonds.

- Dual Perspective:

  - God Mode: Monitor global entropy, control weather, and inspect agent "neural streams" (real-time thought processes).

  - Resident Mode: Live inside the simulation via a virtual smartphone interface featuring "Z-Net" social feeds and messaging.

- Multimodal Live Interaction: Users can place real-time voice calls to any agent. Because the underlying model is multimodal, agents can "see" your shared context and respond with low latency and emotional awareness.

How we built it

We used Google AI Studio as our hub for rapid prototyping and model orchestration. The frontend was built with React 19 and Tailwind CSS to achieve a futuristic "glassmorphism" aesthetic.

The Cognitive Architecture

The intelligence is driven by a custom "Brain Tick" system. Within Google AI Studio, we iteratively tuned the reasoning loop so that on every tick an agent's response $R$ is produced from its state, its context, and external stimuli:

$$R = f(S_{state}, C_{context}, I_{input})$$

Where $S_{state}$ represents dynamic bio-metrics:

$$S_{state} = \{ \text{Hunger}, \text{Social}, \text{Tiredness}, \text{Happiness} \}$$
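As a rough illustration of the tick formula, the sketch below models $S_{state}$ as four values between 0 and 1 and folds state, context, and input into a single prompt string. Every name here (`BioState`, `TickContext`, `decayState`, `buildTickPrompt`) and every drift rate is a hypothetical stand-in, not taken from the ZSO codebase, and the model call that would turn the prompt into $R$ is left out.

```typescript
// Hypothetical names throughout: BioState, TickContext, decayState, and
// buildTickPrompt are illustrative and do not come from the ZSO codebase.

interface BioState {
  hunger: number;    // 0 (sated) .. 1 (starving)
  social: number;    // 0 (fulfilled) .. 1 (lonely)
  tiredness: number; // 0 (rested) .. 1 (exhausted)
  happiness: number; // 0 (miserable) .. 1 (elated)
}

interface TickContext {
  location: string;
  recentEvents: string[];
}

const clamp01 = (x: number): number => Math.min(1, Math.max(0, x));

// Advance S_state by one tick. The drift rates are made-up values.
function decayState(s: BioState): BioState {
  return {
    hunger: clamp01(s.hunger + 0.05),
    social: clamp01(s.social + 0.03),
    tiredness: clamp01(s.tiredness + 0.04),
    happiness: clamp01(s.happiness - 0.02),
  };
}

// Assemble the three arguments of R = f(S_state, C_context, I_input)
// into one prompt; sending it to a model is outside this sketch.
function buildTickPrompt(
  name: string,
  s: BioState,
  c: TickContext,
  input: string,
): string {
  const needs = (["hunger", "social", "tiredness", "happiness"] as const)
    .map((k) => `${k}=${s[k].toFixed(2)}`)
    .join(", ");
  return [
    `You are ${name}.`,
    `State: ${needs}.`,
    `Location: ${c.location}.`,
    `Recent events: ${c.recentEvents.join("; ") || "none"}.`,
    `Stimulus: ${input}`,
  ].join("\n");
}
```

Keeping the state update separate from prompt assembly means the simulation can advance bio-metrics on every tick even when no model call is made.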

Implementation via Google AI Studio

We used the Gemini 3 Flash model for high-frequency reasoning because of its speed and efficiency. We used Google AI Studio for:

- Prompt Engineering: Design complex system instructions for multi-agent autonomy.

- Gemini Live API: Implement real-time voice interaction via WebSockets, processing raw PCM audio chunks to keep conversational latency low.

- Multimodal Integration: Feed real-time simulation snapshots back into the model to maintain visual and contextual consistency.
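The raw PCM handling mentioned above can be sketched in two pieces: converting 16-bit samples to floats, and computing when each chunk should start so that playback is gapless. This is a minimal sketch, not the project's code. It assumes 16-bit little-endian mono PCM, and the 24 kHz sample rate is a placeholder to check against the Live API documentation; `PlaybackScheduler` is a hypothetical helper.

```typescript
// Assumptions: chunks are 16-bit little-endian mono PCM, and the sample rate
// below is a placeholder to verify against the Live API documentation.
const SAMPLE_RATE_HZ = 24000;

// Convert signed 16-bit samples to the [-1, 1) floats that Web Audio
// buffers expect.
function pcm16ToFloat32(chunk: ArrayBuffer): Float32Array {
  const view = new DataView(chunk);
  const out = new Float32Array(Math.floor(chunk.byteLength / 2));
  for (let i = 0; i < out.length; i++) {
    out[i] = view.getInt16(i * 2, true) / 32768;
  }
  return out;
}

// Hypothetical helper: tracks when the next chunk should start so that
// consecutive chunks play back-to-back instead of overlapping or leaving gaps.
class PlaybackScheduler {
  private nextStart = 0;

  // `now` is the audio clock in seconds (e.g. AudioContext.currentTime).
  // Returns the time at which this chunk should begin playing.
  schedule(sampleCount: number, now: number): number {
    const start = Math.max(this.nextStart, now);
    this.nextStart = start + sampleCount / SAMPLE_RATE_HZ;
    return start;
  }

  // Call when the agent is interrupted to drop the queued timeline.
  reset(): void {
    this.nextStart = 0;
  }
}
```

In a browser, the value returned by `schedule` would typically be passed as the `when` argument of `AudioBufferSourceNode.start()`. Keeping the scheduling arithmetic separate from the Web Audio calls makes it testable without an audio device.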

Challenges we ran into

- Audio Stream Synchronization: Managing raw PCM streams from the Gemini Live API was a technical hurdle. We had to build a custom buffer queue to prevent audio drift and keep the agent's voice in sync with their simulated emotional state.

- State Management of "Entropy": We needed a mathematical way to quantify the city's chaos. We implemented an entropy formula in which specific "Crisis" events increase the global entropy score $E$: $$E = \sum_i (w_i \cdot \Delta c_i)$$

- Context Management: Balancing "short-term memory" within a continuous simulation required a sophisticated sliding-window strategy to prevent context overflow while maintaining narrative depth.
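Two of the challenges above lend themselves to a short sketch: the weighted entropy sum and a sliding-window memory. The code below is an illustration under assumptions, not the project's implementation. `CrisisEvent`, `MemoryEntry`, `globalEntropy`, and `slidingWindow` are hypothetical names, reading $w_i$ as a per-event weight and $\Delta c_i$ as a per-event change is an interpretation of the formula, and a character budget stands in for a real token count.

```typescript
// Hypothetical shapes: CrisisEvent and MemoryEntry are illustrative only.
interface CrisisEvent {
  weight: number; // w_i: how strongly this event counts (assumed meaning)
  delta: number;  // delta c_i: change attributed to this event (assumed meaning)
}

// E = sum over i of (w_i * delta c_i)
function globalEntropy(events: CrisisEvent[]): number {
  return events.reduce((sum, e) => sum + e.weight * e.delta, 0);
}

interface MemoryEntry {
  text: string;
}

// Keep the most recent entries that fit within a character budget,
// preserving chronological order. A character count stands in for a
// real token count here.
function slidingWindow(history: MemoryEntry[], maxChars: number): MemoryEntry[] {
  const kept: MemoryEntry[] = [];
  let used = 0;
  // Walk from newest to oldest and stop at the first entry that does not fit.
  for (const entry of history.slice().reverse()) {
    const cost = entry.text.length;
    if (used + cost > maxChars) break;
    kept.unshift(entry);
    used += cost;
  }
  return kept;
}
```

Stopping at the first entry that does not fit keeps the window contiguous, so the model never sees an older memory with a more recent one missing in between.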

Accomplishments that we're proud of

- The "Living" Social Feed: It was a magic moment seeing agents post on "Z-Net" and start "beefs" or friendships autonomously without any user intervention.

- Seamless Voice Calls: Successfully integrating Gemini Live API allowed us to "call" Nick Wilde and hear his trademark cynical tone react to real-time city news.

- Aesthetic Logic: Merging high-density data visualization with a playful, immersive mobile OS interface.

What we learned

- LLMs as Simulation Engines: Working in Google AI Studio showed us that Gemini 3 Flash can be more than a chatbot: it can serve as an engine for keeping complex characters consistent over long sessions.

- Multimodal is the Baseline: Combining text, audio, and visual snapshots creates a level of immersion that makes traditional text-only AI feel obsolete.

What's next for ZSO (Zootopia Simulation Observer)

- Visual Evolution: Integrating Nano Banana Pro to auto-generate high-fidelity photos for agents' social media posts based on their current "location" and "mood."

- Complex Economy: Implementing a "Z-Bucks" transaction system where agents work, earn, and spend based on their Maslow-driven goals.

- Multi-World Simulation Framework: Scaling ZSO into a general-purpose engine capable of spawning diverse Narrative Universes. Users could seamlessly transition from the Zootopia ecosystem to a Cyberpunk dystopia, a High-Fantasy realm, or a Space Opera setting.

Built With

- fontawesome

- gemini3flash

- geminilive

- geminitts

- google-gemini-api-(2.5-flash, live, tts)

- react-19

- tailwind-css

- tailwindcss

- typescript

- web-audio-api

- webaudioapi

- websockets
