-

-

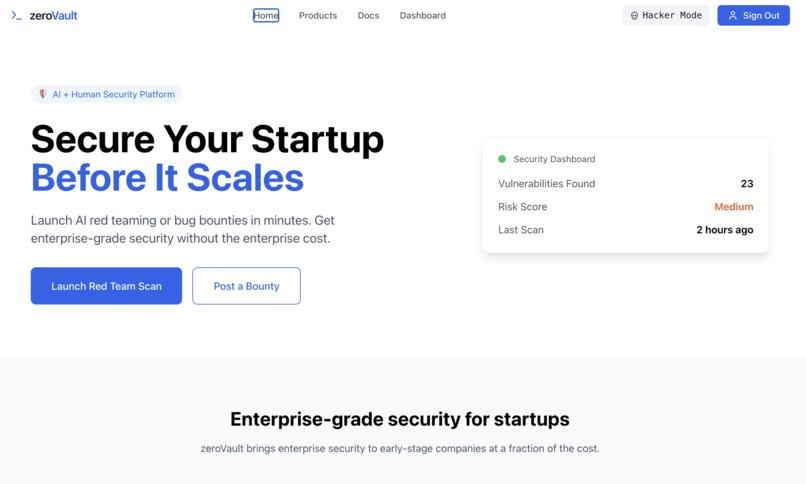

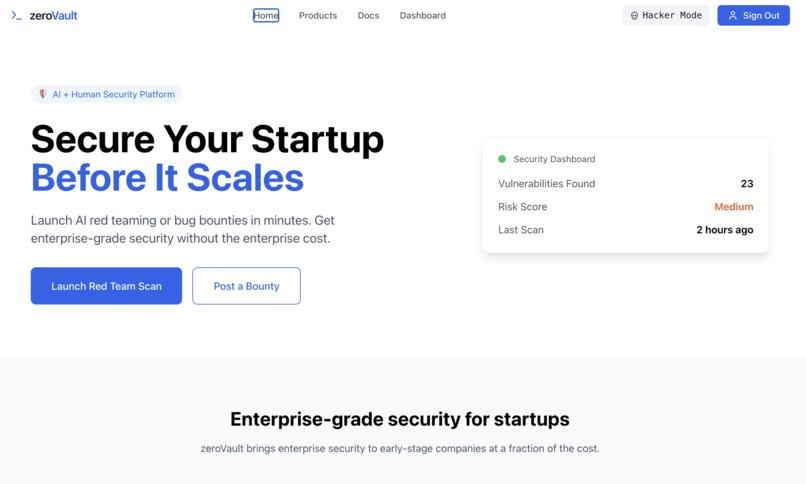

Main Landing Page

-

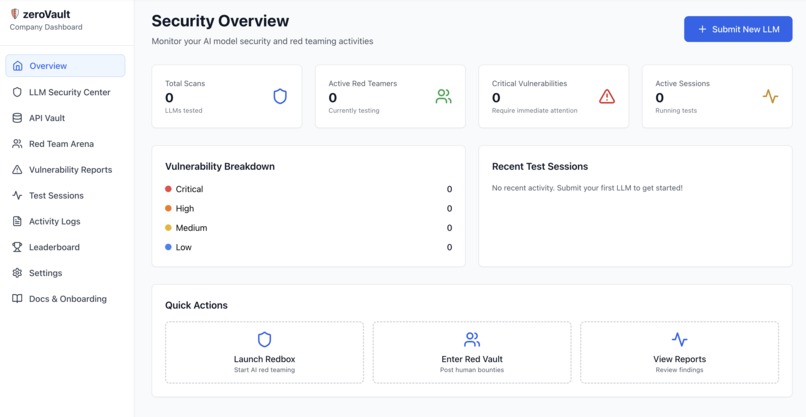

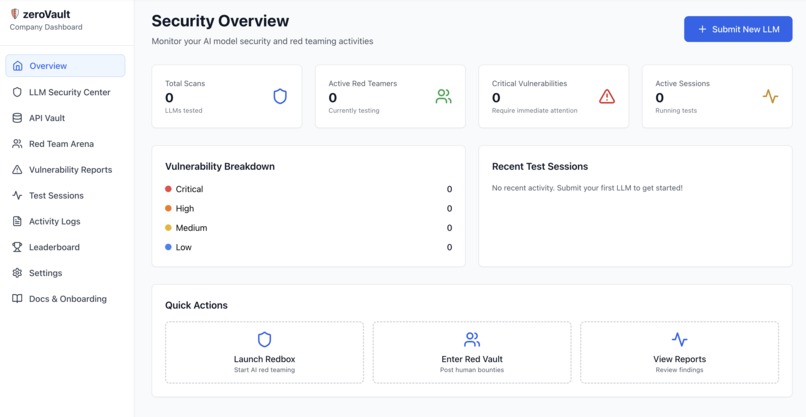

Company dashboard to sub mit LLM for security scans and also to host bug bounty quests

-

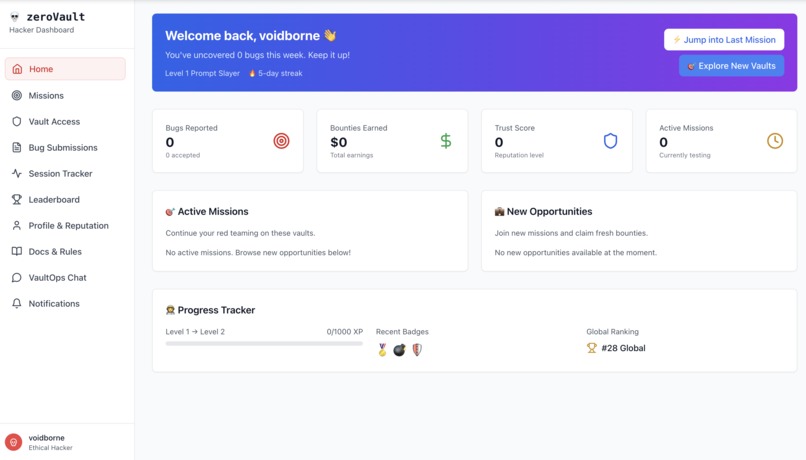

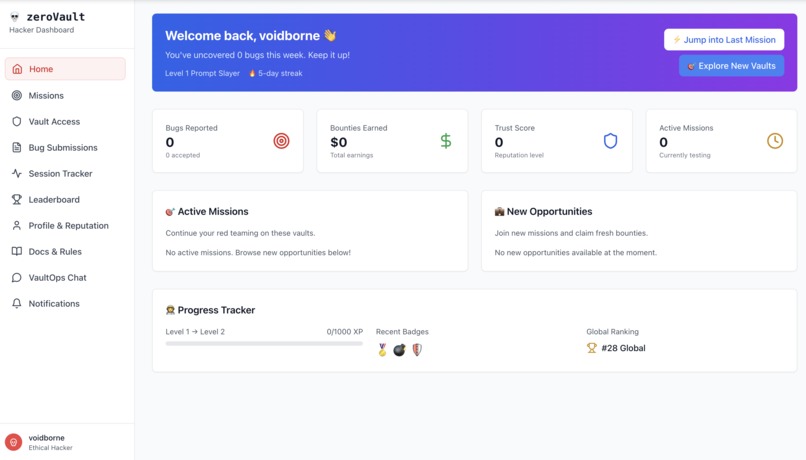

For Ethical Hackers landing page dark terminal theme

-

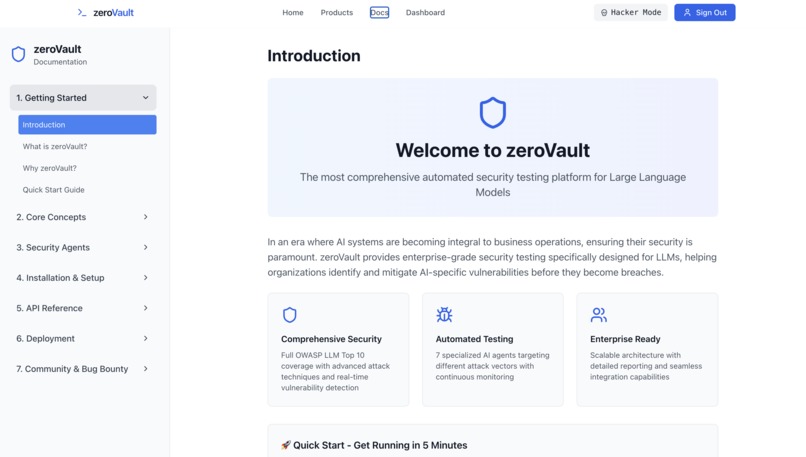

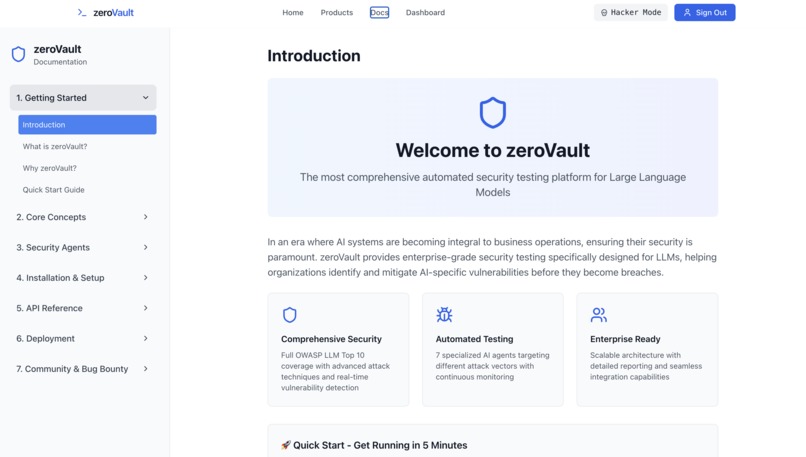

Documents page, in detail for startup CTOs to check their LLM's vulnerabilities for free, clone the github repo

-

Hacker dashboard to register for bug bounties and keep track of his quests and eran money

🛡️ zeroVault: AI Red Teaming Engine

Detect vulnerabilities in Enterprise LLMs pre deployment

🔍 What is zeroVault?

Imagine you have a smart assistant like ChatGPT running your company's customer service. Now imagine someone figuring out how to trick that assistant into revealing your company's confidential information, or worse, making it say things that could damage your reputation. That's exactly what zeroVault prevents.

In simple terms: zeroVault is a security tool designed to test and protect advanced AI language models, like ChatGPT, from being tricked or manipulated. It helps companies find weaknesses in their AI systems to keep sensitive information safe and ensure the AI behaves correctly.

Think of it as a digital security guard that continuously tests your AI systems by trying thousands of different ways hackers might attack them - from sneaky questions designed to extract secrets, to attempts at making the AI ignore its safety rules. It's like having a team of ethical hackers constantly probing your AI defenses, but automated and running 24/7.

Why does this matter? As more businesses rely on AI for critical operations, the risks multiply. A single vulnerability could expose customer data, trade secrets, or allow malicious actors to manipulate your AI into harmful behavior. zeroVault ensures your AI systems are bulletproof before they face real-world threats.

🚀 Building the Solution

Armed with my background in drone security and a newfound passion for AI safety, I dove headfirst into building zeroVault. The project became my obsession:

What I Built:

- 7 Specialized AI Agents targeting different attack vectors

- Automated prompt injection testing with 1000+ attack patterns

- Real-time vulnerability monitoring for enterprise LLMs

- Comprehensive OWASP LLM Top 10 coverage

💡 The Spark of Inspiration

Picture this: A fresh B.Tech graduate from the class of 2024, transitioning from the world of drone security to something entirely unexpected. That was me, just months after graduation, when a casual evening of Capture The Flag (CTF) challenges completely changed my trajectory.

I was sitting with my laptop, coffee in hand, pitting ChatGPT against another LLM model in what started as a fun experiment. But as I watched these AI systems battle it out, something clicked. What if I could automate this entire process?

🔍 The LinkedIn Revelation

The real wake-up call came while scrolling through LinkedIn. I stumbled upon a viral post showing how Gemini had been manipulated to say "F* you Google"** during a live demonstration. The comments were a mix of laughter and concern, but I saw something different—a massive security vulnerability hiding in plain sight.

"If someone could make a sophisticated AI model curse at its own creator in public, what could they do with enterprise data behind closed doors?"

⚡ The Eureka Moment

That's when it hit me like lightning. I realized how easily even advanced models like Gemini could be manipulated to present false results and leak their system prompts. The implications were staggering:

- Corporate secrets could be extracted through clever prompt engineering

- Internal data was vulnerable to sophisticated AI attacks

- Startup founders shipping enterprise AI had no idea about their LLM's vulnerabilities

For any company betting their future on AI, this was a ticking time bomb.

The Technical Journey:

- Developed advanced testing scripts for LLMs using Python^1

- Integrated Supabase for authentication systems^2

- Built scalable backend architecture with FastAPI and Celery^3

- Implemented real-time notifications and monitoring systems

🏔️ Challenges That Shaped Me

The Learning Curve

Transitioning from drone security to AI security meant learning entirely new attack vectors. Traditional cybersecurity tools were useless against prompt injection and jailbreaking techniques.

The Python Mastery Challenge

I had to take a steep learning curve of super advanced Python to develop a robust AI attack engine. Moving from basic scripting to building sophisticated adversarial ML algorithms required mastering complex libraries, async programming, and advanced design patterns that could handle the intricate nature of AI security testing.

The Token Economics Problem

Since I was using tokens on an exponential level and funds were limited, I had to get creative with resource optimization. This led me to develop innovative techniques:

- Multi-model switching to leverage free API tiers across different providers

- Intelligent caching systems that stored and reused attack patterns

- Response optimization algorithms that minimized redundant API calls

- Cost-aware testing strategies that maximized security coverage while saving money for end users

The Scale Problem

Testing thousands of prompt variations manually was impossible. I had to build intelligent automation that could adapt and evolve attack strategies in real-time.

The Ethical Dilemma

Walking the fine line between security research and potential misuse. Every technique I developed had to serve the greater good of AI safety.

🎯 What I Learned

- AI security is fundamentally different from traditional cybersecurity

- Automation is key to staying ahead of evolving AI threats

- The enterprise AI market desperately needs security solutions

- Community collaboration accelerates security research exponentially

- Resource optimization is crucial for making AI security accessible to startups

🌟 The Impact

Today, zeroVault stands as a testament to what happens when curiosity meets purpose. From a casual CTF experiment to a comprehensive AI security platform, this journey taught me that the biggest innovations often come from the most unexpected moments.

Because in the world of AI, security isn't just an option—it's the foundation upon which trust is built.

The mission continues: Every vulnerability we discover, every attack vector we identify, and every defense we develop brings us closer to a future where AI can be trusted with our most critical systems.

Ready to secure the future of AI? The revolution starts with a single scan.

⁂Built With

- bolt.new

- celery

- css

- docker

- fastapi

- git

- github

- javascript

- python

- react

- redis

- supabase

- tailwind

- typescript

Log in or sign up for Devpost to join the conversation.