WarRoom Agent Console for AI-driven incident coordination, enabling real-time triage, human approval, and secure action execution.


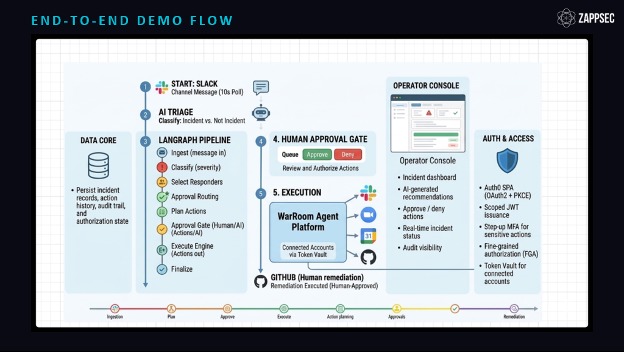

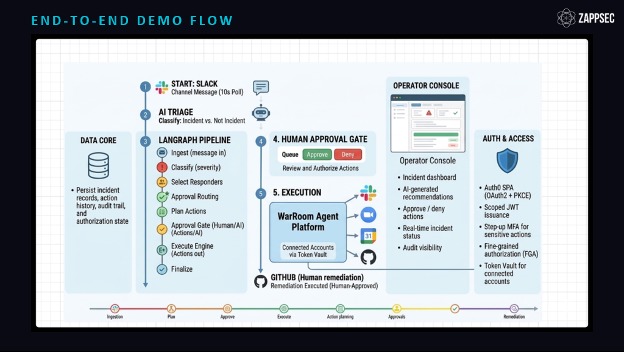

End-to-end incident flow from Slack ingestion to AI triage, human approval, and secure execution via connected systems.


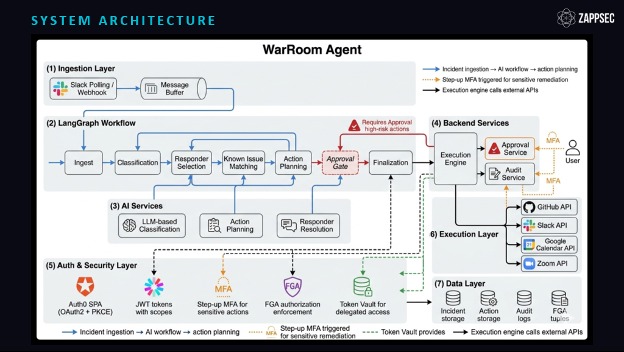

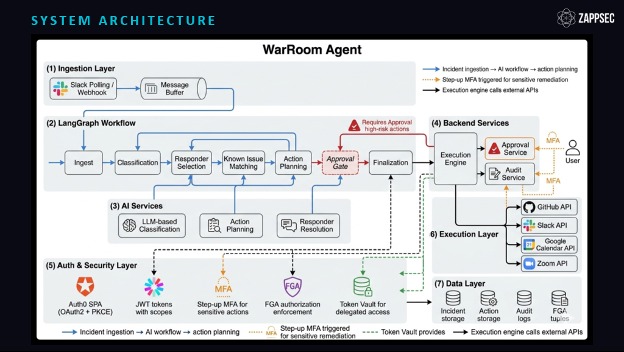

System architecture showing Auth0 integration with Token Vault, FGA, and step-up authentication for secure AI-driven operations.

Inspiration

Every engineer who has been on call knows the feeling. A P1 drops at 2am. Someone posts in Slack. Three side threads spin up. Nobody is sure who is on call. The Zoom link gets buried in a thread reply. Stakeholders find out an hour late. And somewhere in the scramble, someone shares an API key in a public channel because they need to "just fix it quickly."

We wanted to build the system we wished we had during those incidents. An AI agent that handles the coordination, the triage, the war room setup, and the responder notification, but never acts without explicit human approval, and never touches a raw credential. When we saw Auth0's Token Vault, it clicked. Token Vault gave us a way to let the agent interact with external systems on behalf of the operator's real identity, without storing or managing OAuth tokens ourselves. That was the missing piece.

What it does

WarRoom Agent monitors a Slack channel for production incidents. When an engineer reports an issue, the AI agent validates the message, classifies severity (P1/P2/P3), identifies the right responders based on domain expertise, matches the incident against a known-issue knowledge base, and generates a full response plan.

The plan includes coordination actions (Zoom war room, Google Calendar bridge, Slack notifications, stakeholder emails) and remediation actions (GitHub config changes). Every external action runs through Auth0 Token Vault. The agent exchanges the operator's Auth0 access token for a scoped provider token at execution time. No stored credentials. No shared service accounts.

High-risk remediation actions get three additional gates: human approval, Auth0 FGA to verify the operator has the specific executor privilege, and step-up MFA re-verification. The Token Vault exchange only happens after all three pass.

Everything is logged. Every classification, every approval, every FGA check, every Token Vault exchange, every execution result. Full audit trail from Slack message to completed remediation.

How It Works

Ingestion: A background poller monitors a Slack channel every 10 seconds using the conversations.history API. Messages are buffered and passed to an AI triage agent (Claude Haiku). The agent determines whether the message describes a real incident or normal conversation. Non-incidents are logged and skipped.
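
As a rough illustration of the polling step, the sketch below shows one way to keep only messages the poller has not seen yet. It assumes each message is a dict with Slack's string `ts` field, as returned by `conversations.history`. The function name `select_new_messages` and the cursor handling are hypothetical, not taken from the project's code. Timestamps are compared as `Decimal` values so the microsecond digits are not lost to float rounding.

```python
from decimal import Decimal


def select_new_messages(messages, last_ts):
    """Return (unseen messages oldest-first, updated cursor).

    `messages` is a list of dicts with a Slack-style string "ts" field.
    `last_ts` is the "ts" of the newest message already processed.
    """
    cursor = Decimal(last_ts)
    # Keep only messages strictly newer than the cursor.
    fresh = [m for m in messages if Decimal(m["ts"]) > cursor]
    # Slack returns newest-first; triage reads better in chronological order.
    fresh.sort(key=lambda m: Decimal(m["ts"]))
    new_cursor = fresh[-1]["ts"] if fresh else last_ts
    return fresh, new_cursor


batch = [
    {"ts": "1700000002.000100", "text": "checkout API returning 500s"},
    {"ts": "1700000001.000100", "text": "anyone seeing errors?"},
    {"ts": "1700000000.000100", "text": "already processed"},
]
fresh, cursor = select_new_messages(batch, "1700000000.000100")
```

In a real poller the cursor could also be passed as the `oldest` argument to `conversations.history`, so that Slack filters the messages server-side.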

AI Workflow: Qualified incidents enter a LangGraph pipeline with eight nodes. Ingest captures the raw message. Classify determines severity (P1/P2/P3) with confidence scoring and reasoning. Select Responders matches on-call engineers by domain expertise, scored and ranked. Match Known Issues searches a knowledge base by keyword and domain overlap. Plan Actions generates coordination and remediation actions, each tagged with a risk level. The Approval Gate pauses the workflow for any high or critical-risk action. Execute routes approved actions through integration adapters. Finalize marks the workflow complete.
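
The approval gate's routing rule can be sketched in plain Python, without LangGraph. This is a minimal illustration, not the project's node implementation: the `PlannedAction` type, the `"low"` risk label, and the action names are assumptions made for the example.

```python
from dataclasses import dataclass

# Risk levels that pause for human approval, per the description above.
GATED_RISK_LEVELS = {"high", "critical"}


@dataclass
class PlannedAction:
    name: str
    risk: str            # e.g. "low", "high", "critical" (labels are illustrative)
    approved: bool = False


def approval_gate(actions):
    """Split planned actions into (ready to execute, awaiting approval)."""
    ready, pending = [], []
    for action in actions:
        if action.risk in GATED_RISK_LEVELS and not action.approved:
            pending.append(action)
        else:
            ready.append(action)
    return ready, pending


plan = [
    PlannedAction("create_zoom_war_room", "low"),
    PlannedAction("update_app_config", "high"),
]
ready, pending = approval_gate(plan)
```

Here the low-risk coordination action proceeds, while the high-risk remediation waits until an operator sets `approved`.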

Token Vault Integration: The frontend is a React SPA using Auth0 OAuth2 + PKCE. After sign-in, the SPA requests an access token scoped to the WarRoom backend API. The backend validates the JWT (RS256, JWKS-cached), enforces audience and scope claims, and preserves the raw access token for downstream use.
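
A minimal sketch of the audience and scope enforcement follows. It assumes the JWT signature has already been verified against the cached JWKS and the claims decoded into a dict. The function name, audience URL, and scope names are hypothetical. It relies on two standard conventions: `aud` may be a string or a list, and `scope` is a space-delimited string.

```python
def enforce_claims(claims, expected_audience, required_scopes):
    """Raise PermissionError unless the token targets this API with the needed scopes."""
    aud = claims.get("aud", [])
    audiences = [aud] if isinstance(aud, str) else list(aud)
    if expected_audience not in audiences:
        raise PermissionError("audience mismatch")

    granted = set(claims.get("scope", "").split())
    missing = set(required_scopes) - granted
    if missing:
        raise PermissionError(f"missing scopes: {sorted(missing)}")


# Hypothetical decoded claims for an operator's access token.
claims = {
    "aud": ["https://warroom.example/api"],
    "scope": "read:incidents execute:actions",
}
enforce_claims(claims, "https://warroom.example/api", ["execute:actions"])
```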

When an approved action targets a vault-backed provider (Google, Slack, or GitHub), the backend performs a federated token exchange. It sends Auth0 Custom API Client credentials, the operator's access token as the subject token, the target provider's connection name, and the requested scopes to Auth0's token endpoint. Auth0 returns a short-lived provider access token. The integration adapter uses that token, then discards it. No caching. No storage.
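
The shape of the exchange request can be sketched as below. The grant type and token type identifiers reflect our reading of Auth0's Token Vault documentation and should be checked against the current docs. The `scope` parameter name, the helper name `build_exchange_request`, and the example values are assumptions. The sketch only builds the request and does not send it.

```python
GRANT_TYPE = (
    "urn:auth0:params:oauth:grant-type:token-exchange:"
    "federated-connection-access-token"
)
SUBJECT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"
REQUESTED_TOKEN_TYPE = (
    "http://auth0.com/oauth/token-type/federated-connection-access-token"
)


def build_exchange_request(domain, client_id, client_secret,
                           subject_token, connection, scopes):
    """Return (url, form payload) for a federated token exchange."""
    url = f"https://{domain}/oauth/token"
    payload = {
        "grant_type": GRANT_TYPE,
        "client_id": client_id,              # Custom API Client credentials
        "client_secret": client_secret,
        "subject_token": subject_token,      # the operator's Auth0 access token
        "subject_token_type": SUBJECT_TOKEN_TYPE,
        "requested_token_type": REQUESTED_TOKEN_TYPE,
        "connection": connection,            # e.g. the GitHub connection name
        "scope": " ".join(scopes),           # assumed parameter name
    }
    return url, payload


url, payload = build_exchange_request(
    "example.auth0.com", "client-id", "client-secret",
    "operator-access-token", "github", ["repo"],
)
```

An adapter would POST this payload, read `access_token` from the response, make its provider call, and let the token go out of scope rather than persisting it.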

FGA Enforcement: When an incident is created, the backend writes FGA tuples mapping specific operators to specific remediation objects. Before any remediation action fires, the backend calls FGA to check whether the signed-in operator holds the correct relation. If FGA denies, the Token Vault exchange never happens.
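
To make the write-then-check pattern concrete, here is an in-memory stand-in for the tuple store. It is not the Auth0 FGA SDK. A real FGA check evaluates an authorization model that may include indirect relations, whereas this sketch only matches direct tuples. The user and object identifiers are hypothetical.

```python
class TupleStore:
    """Toy relationship store: (user, relation, object) triples."""

    def __init__(self):
        self._tuples = set()

    def write(self, user, relation, obj):
        self._tuples.add((user, relation, obj))

    def check(self, user, relation, obj):
        # Direct-match only; real FGA resolves relations via its model.
        return (user, relation, obj) in self._tuples


store = TupleStore()
# Written when the incident is created.
store.write("user:app_engineer", "executor", "remediation:app-config")
store.write("user:network_engineer", "executor", "remediation:network-policy")

# Checked before any remediation action fires.
app_ok = store.check("user:app_engineer", "executor", "remediation:app-config")
net_ok = store.check("user:app_engineer", "executor", "remediation:network-policy")
```

With these tuples, the app engineer is allowed on the app-config remediation and denied on the network-policy one, so no token exchange would be attempted for the latter.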

CIBA Step-up Authentication: Sensitive GitHub remediation actions trigger a step-up challenge: a push notification sent to the Auth0 Guardian app through Auth0 CIBA (Client-Initiated Backchannel Authentication). The operator must approve the push before the backend proceeds.

Audit Trail: Every state transition, Token Vault exchange, CIBA approval decision, FGA check result, and execution outcome is logged to an append-only audit table. Each entry carries actor identity, actor type (AI agent, human, or system), timestamp, target system, and result. Nothing is silent.
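
The shape of an audit entry might look like the following sketch. It uses an in-memory list in place of the database table. The `event` field, the class names, and the example values are assumptions, while the remaining fields mirror the ones listed above. Entries are frozen dataclasses, and the log exposes only `append` and a read-only view.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

ACTOR_TYPES = {"ai_agent", "human", "system"}


@dataclass(frozen=True)
class AuditEntry:
    actor: str
    actor_type: str
    event: str            # assumed field: what happened (e.g. "fga_check")
    target_system: str
    result: str
    timestamp: datetime


class AuditLog:
    """Append-only log: entries can be added and read, never edited."""

    def __init__(self):
        self._entries = []

    def append(self, actor, actor_type, event, target_system, result):
        if actor_type not in ACTOR_TYPES:
            raise ValueError(f"unknown actor type: {actor_type}")
        entry = AuditEntry(actor, actor_type, event, target_system, result,
                           datetime.now(timezone.utc))
        self._entries.append(entry)
        return entry

    def entries(self):
        # Return an immutable snapshot so callers cannot mutate the log.
        return tuple(self._entries)


log = AuditLog()
log.append("agent:triage", "ai_agent", "classify", "warroom", "P1")
log.append("user:app_engineer", "human", "fga_check", "auth0_fga", "allowed")
```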

How we built it

Frontend: React + TypeScript + Vite with the Auth0 SPA SDK (OAuth2 + PKCE). The Auth0 access token is injected into every API call through a custom AuthTokenBridge hook.

Backend: Python FastAPI with LangGraph for the AI workflow. Eight nodes in a stateful pipeline: ingest, classify, select responders, match known issues, plan actions, approval gate, execute, finalize. Anthropic Claude handles classification and action planning. SQLAlchemy ORM on SQLite for the MVP.

Token Vault: The backend uses Auth0's federated token exchange grant. When an action targets Google, Slack, or GitHub, it sends the operator's access token as the subject token, the Custom API Client credentials, the connection name, and the requested scopes to Auth0's token endpoint. Auth0 returns a short-lived provider token. The adapter uses it and discards it.

FGA: We write relationship tuples when incidents are created. The application engineer gets executor on app-config remediation, and the network engineer gets executor on network-policy remediation. The backend checks FGA before every sensitive execution. If the FGA check fails, the Token Vault exchange never fires.

CIBA: Auth0 CIBA triggers an MFA approval request for high-risk remediation through the Auth0 Guardian App.

Deployment: GitHub Actions CI/CD deploys the application to AWS EC2.

Challenges we ran into

Debugging Token Vault's federated token exchange took more iterations than expected. A duplicate GitHub connection had been added to the App Operator's user profile, so the linked-accounts check returned an extra entry (four linked accounts instead of three). That stopped the app-config GitHub execution action from going through. We spent a substantial amount of time debugging exchange failures, but the fix turned out to be simple: delete the user and reconnect their provider accounts.

In parallel, testing CIBA introduced another challenge. Our initial approach attempted to enforce MFA through a Post-Login Action, but this conflicted with the CIBA flow. Because CIBA fully owns the authentication transaction, invoking MFA inside the Post-Login trigger caused the flow to fail. The resolution was to let Auth0 control MFA enforcement entirely at the platform level, and remove MFA logic from Actions. Once we did this, the CIBA approval flow worked cleanly, allowing step-up authentication to happen at the exact moment of sensitive action remediation without breaking the backchannel flow.

Accomplishments that we're proud of

The FGA denial moment is the one we keep coming back to. The App Operator gets blocked by FGA when attempting the network-config GitHub remediation because they lack the executor relation for that specific remediation. Even though they are a collaborator on the network repo, the Token Vault exchange never happens. That is not a mock. That is a real authorization check preventing a real credential exchange. Seeing that work end-to-end was the moment we knew the architecture was sound. For the app-config remediation, the App Operator passes step-up MFA and the GitHub remediation executes successfully.

We would also like to highlight the layered security model. Five layers working in sequence: app API scopes, FGA resource-level authorization, CIBA for step-up identity assurance, Token Vault delegated exchange, and the external provider's own enforcement. Each layer catches something the others miss. We did not design this from a whiteboard. We discovered it by stress-testing our own system and finding the gaps.

The full audit trail is the third thing. Every action the agent takes is traceable to a specific human identity, a specific approval decision, a specific FGA check result, and a specific Token Vault exchange. Nothing is silent. If something goes wrong in production, you can reconstruct exactly what happened and why.

What we learned

The biggest insight: Token Vault and FGA solve different problems, and you need both.

Token Vault solves credential management. It eliminates raw OAuth tokens from your application. But it delegates the access the user has, not necessarily the access the agent should have. If upstream provider permissions are broader than intended, the delegated token inherits that broader access.

FGA solves authorization policy. It lets you define, at the application level, which operator can execute which specific action on which specific resource. Even if the operator's linked GitHub identity has broad access, FGA can restrict what the agent is allowed to do with it.

Step-up authentication through CIBA solves identity assurance. It confirms that the person requesting execution is actually present, not a stale session or a compromised token.

These three are complementary layers, not alternatives. And the order matters: verify identity, then check authorization, require step-up identity assurance for sensitive actions, then exchange tokens. That sequence is the most important thing we built.
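
The ordering can be expressed as a short-circuiting sequence, sketched below under the assumption that each gate is a zero-argument callable supplied by the caller. The function name and status strings are illustrative. The property that matters is that the exchange callable is never invoked unless every earlier gate has passed.

```python
def run_gated_exchange(approved, fga_allows, step_up_ok, exchange):
    """Run the gates in order; call `exchange` only if all of them pass.

    approved   -- bool, the human approval decision
    fga_allows -- callable returning True if FGA permits the action
    step_up_ok -- callable returning True if the step-up challenge was approved
    exchange   -- callable performing the Token Vault exchange
    """
    if not approved:
        return "denied:approval", None
    if not fga_allows():
        return "denied:fga", None
    if not step_up_ok():
        return "denied:step_up", None
    return "executed", exchange()


exchange_calls = []


def fake_exchange():
    exchange_calls.append("called")
    return "short-lived-provider-token"


# FGA denies: the exchange must not run.
denied = run_gated_exchange(True, lambda: False, lambda: True, fake_exchange)
calls_after_denial = len(exchange_calls)

# All gates pass: the exchange runs exactly once.
allowed = run_gated_exchange(True, lambda: True, lambda: True, fake_exchange)
```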

What's next for ZAPP WarRoom Agent

Immediate: PostgreSQL migration, Redis task queue for async execution, WebSocket notifications for real-time dashboard updates, and vector embeddings for semantic known-issue matching. We also plan to support additional lifecycle events, such as invalidating an incident, marking an incident as fully resolved, and adding final RCA comments to resolved incidents.

Longer term: automatic Token Vault token revocation on incident resolution, Rich Authorization Requests (RAR) for per-action consent prompts, real-time FGA tuple updates as team rosters change, and a consent dashboard where operators can see and manage exactly what the agent can do on their behalf.

The goal is a platform where AI agents operate within the same identity and authorization boundaries as the humans they assist. Not as shadow actors with broad API keys, but as accountable participants in an auditable chain of trust.

Bonus Blog Post

Token Vault Alone Is Not Enough: Building Layered Authorization for AI Agents

We built WarRoom Agent for the Auth0 "Authorized to Act" hackathon. We went in thinking Token Vault would handle the hard part of agent authorization. For 80% of the problem, it does.

Token Vault eliminated our biggest pain point overnight: managing raw OAuth credentials for Google, Slack, and GitHub inside our application. The agent never touches a refresh token. It exchanges the operator's Auth0 access token for a scoped provider token at execution time, uses it, and discards it. That pattern alone represents a major step forward from how most AI agent systems handle third-party access today.

The Test That Broke Our Assumptions

We set up two operators. Operator 1 had GitHub collaborator access to an app-config repository. Operator 2 had access to a network-policy repository. We expected Token Vault delegation to enforce this boundary naturally.

It did, mostly. If an operator's linked GitHub account lacked repo access, the delegated API call failed at GitHub's end.

The problem was the inverse case. What happens when upstream provider permissions are broader than your application intends? If Operator 1 gets accidentally granted access to both repositories at the GitHub level (which happens constantly in real organizations), Token Vault faithfully delegates that broader access. It has to. Token Vault is not an authorization policy engine. It is a credential mediation layer. It gives the agent the access the user has, not necessarily the access the agent should have.

How We Closed the Gap

That finding reshaped our entire security model.

We added Auth0 Fine-Grained Authorization (FGA) as an internal gate before any Token Vault exchange for sensitive actions. FGA checks whether the specific operator holds the executor relation on the specific remediation object. Even if their linked GitHub identity has broad access, the application blocks execution unless FGA explicitly permits it. The Token Vault exchange never starts for unauthorized actions.

Then we added Auth0 CIBA human-in-the-loop approval on top. Before a high-risk remediation fires, the operator approves the action through the Auth0 Guardian App.

The Five-Layer Model

The result is five layers working together:

| # | Layer | What It Catches |
|---|---|---|
| 1 | App API Scopes | Prevents unauthorized API access at the application boundary. |
| 2 | FGA Resource Authorization | Blocks execution when the operator lacks a specific relation on the target resource. |
| 3 | Step-up Identity Assurance | Confirms the operator's identity through human-in-the-loop approval. |
| 4 | Token Vault Exchange | Provides delegated, short-lived credentials scoped to the operator's linked external account. |
| 5 | Provider Enforcement | The external provider's own access controls as the final gate. |

Each layer catches something the others miss. Remove any single layer and a specific attack vector opens up.

The Insight for the Auth0 Community

Token Vault solves credential management. FGA solves authorization policy. Step-up authentication solves identity assurance. These are not competing tools. They are complementary layers, and the order you apply them matters.

Check authorization before you exchange credentials. Verify identity before sensitive remediation actions.

We did not plan this architecture from the start. We discovered it by breaking our own system. That is exactly the kind of layered model that agent authorization needs to evolve toward. AI agents should operate within the same identity and authorization boundaries as the humans they assist. Not as shadow actors with broad API keys, but as accountable participants in an auditable chain of trust.

Token Vault, FGA, and CIBA are the building blocks. WarRoom Agent is our proof that they work together.

Built With

- anthropic

- auth0

- awsec2

- ciba

- dockercompose

- fastapi

- fga

- githubactions

- githubapi

- googlecalendarapi

- langgraph

- python

- react

- slackapi

- smtp

- sqlalchemy

- sqlite(mvp)

- tailwindcss

- tokenvault

- typescript

- vite

- zoomapi
