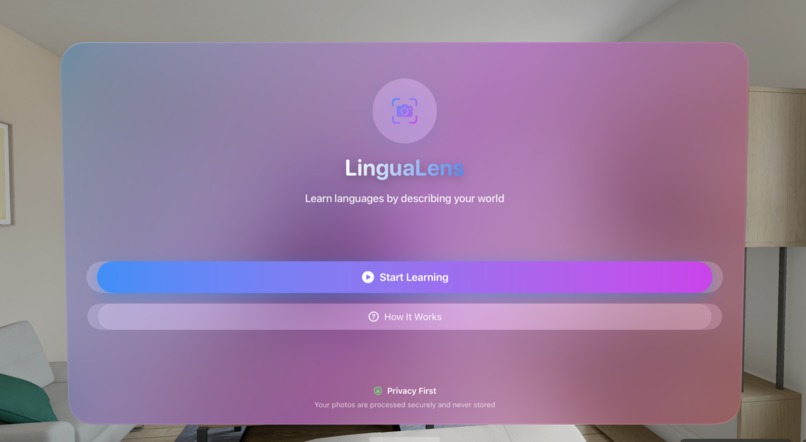

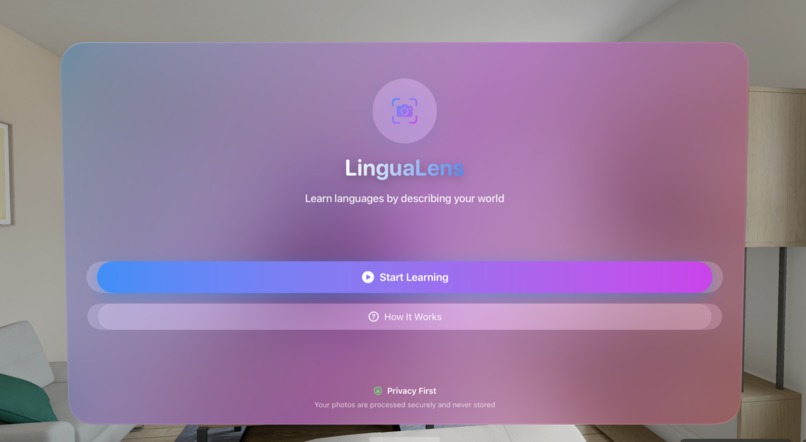

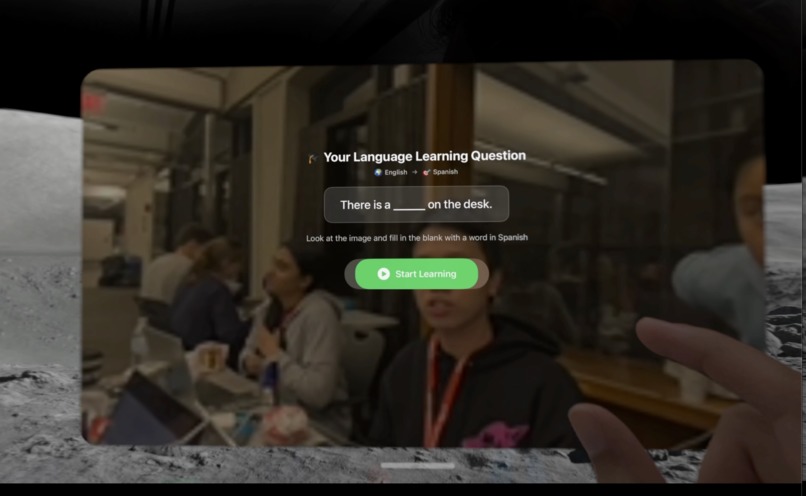

LinguaLens landing page as seen on Vision Pro

-

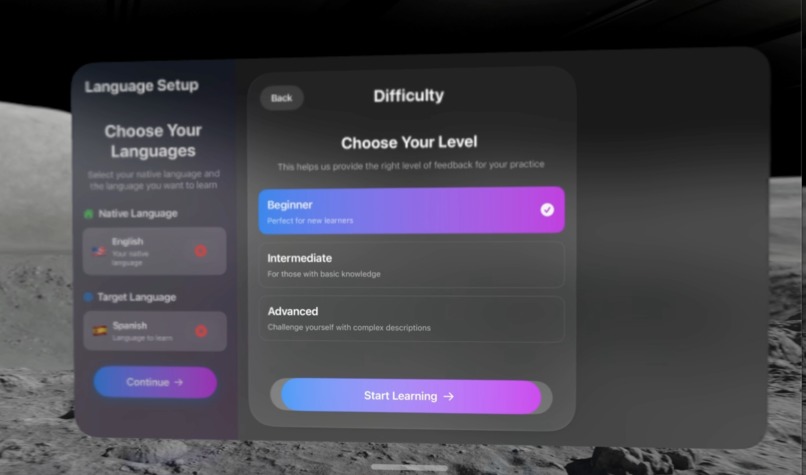

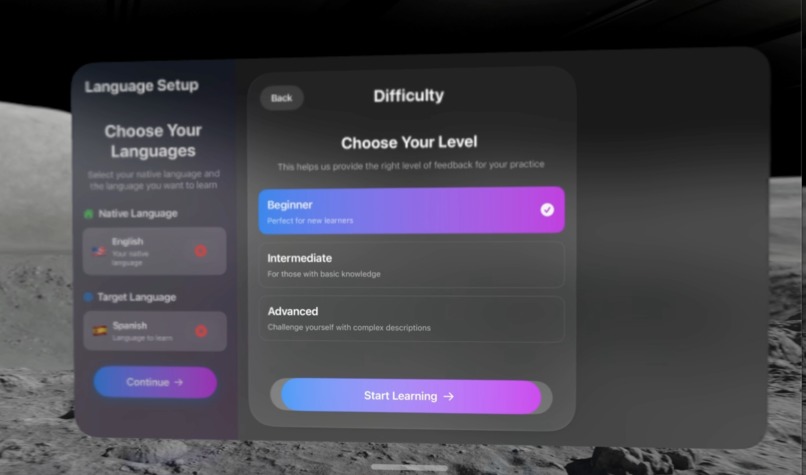

The user can choose a difficulty level, their native language, and the language they want to learn.

-

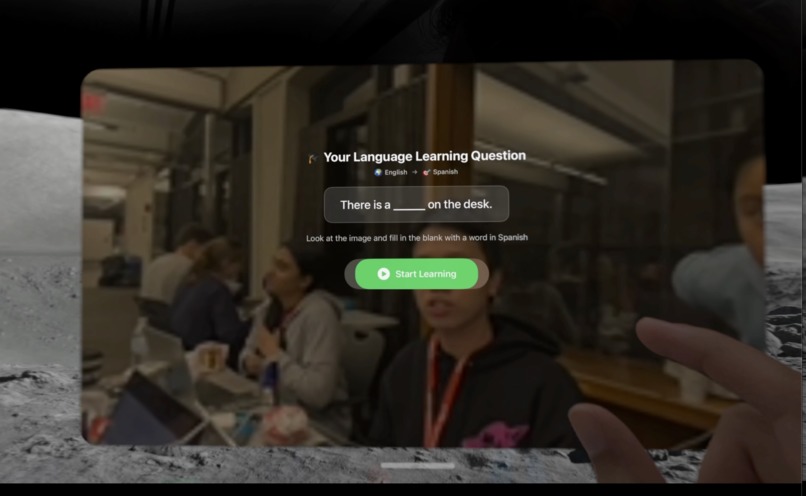

This is the photo selection page. Select the Analysis button to send the image to the backend.

-

The Gemini API is prompted to detect an object in the image and create a fill-in-the-blank question for the user.

-

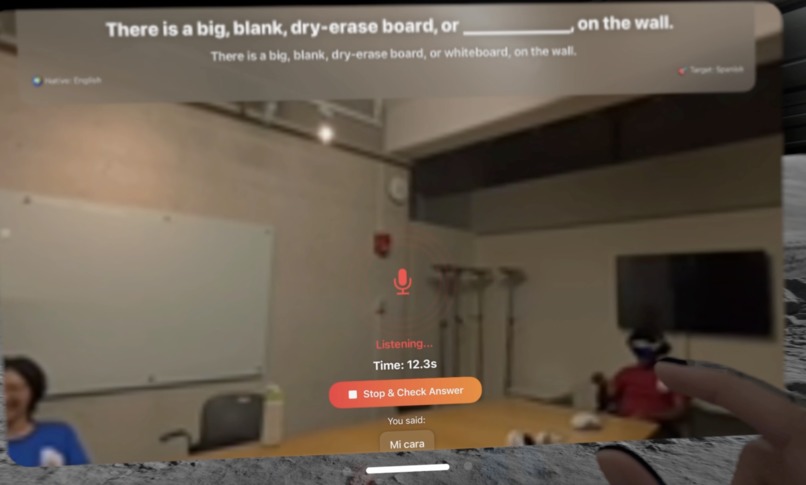

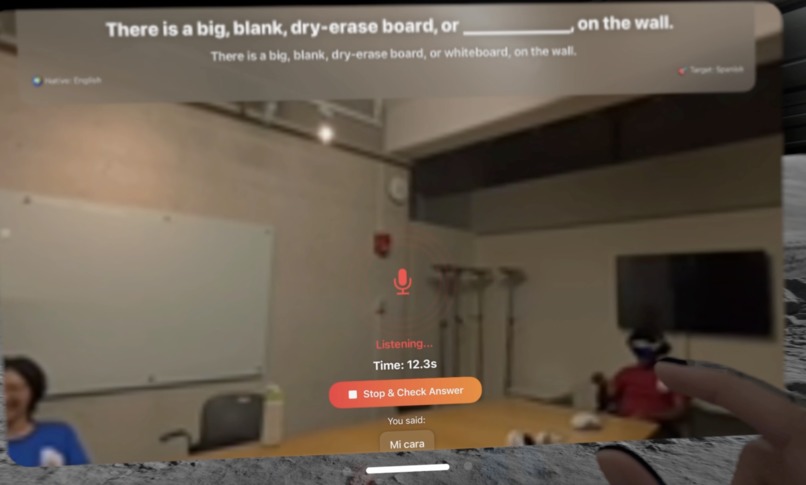

The user needs to enable their microphone and try to say the missing word in the language they are learning.

-

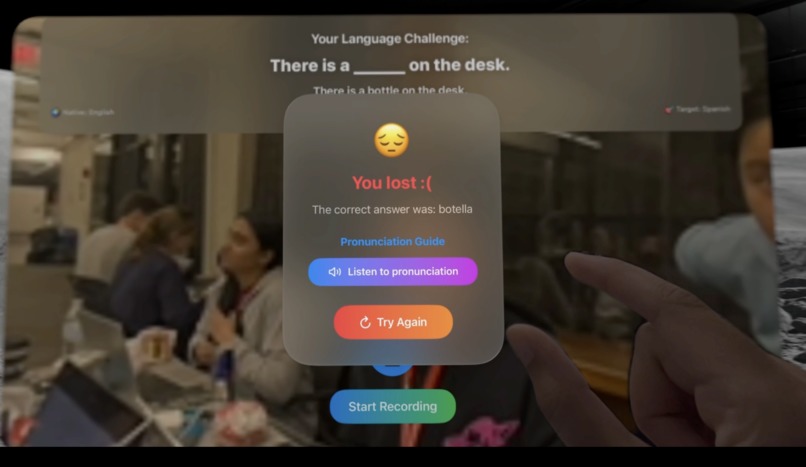

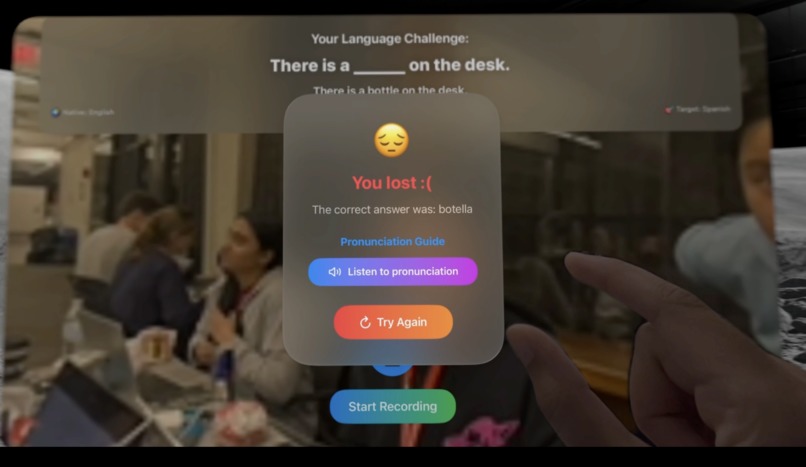

If the user does not answer correctly, the app prompts them to either listen to the pronunciation or try again.

-

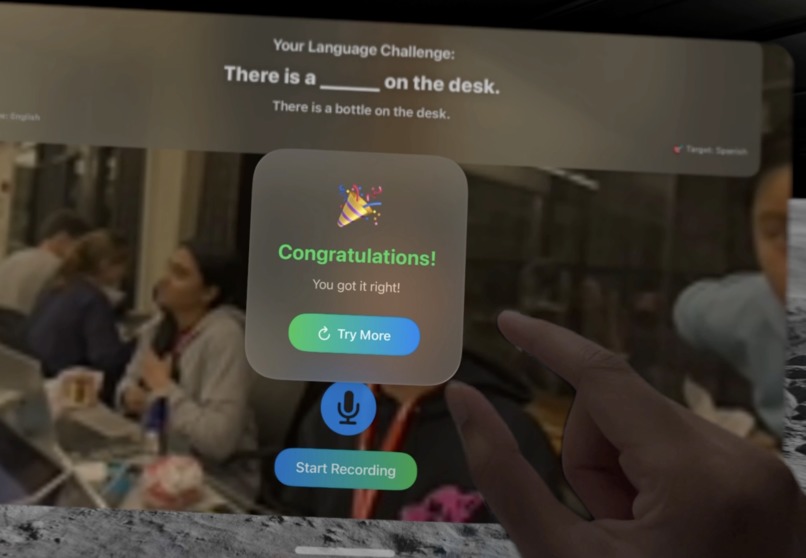

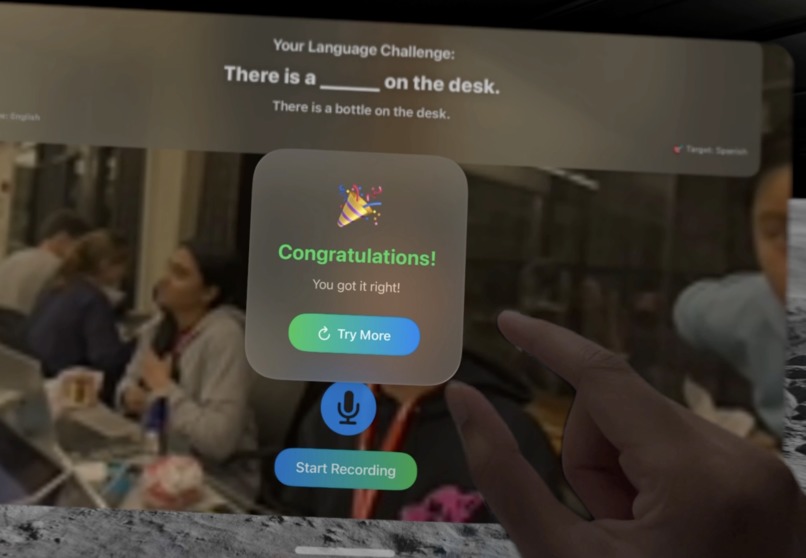

If the user answers correctly, they are directed back to the main page to upload a new photo.

Inspiration

In the age of globalization, more people are interested in learning foreign languages, both to better appreciate other cultures and to strengthen their cognitive skills. While learning a new language sounds exciting, the tools available often feel outdated. Books, quizzes, and even typical language apps are often boring and one-sided: you never truly get to interact with them or practice verbal skills. We wanted to change that with our application.

What it does

LinguaLens transforms everyday objects into opportunities to practice any new language. You start with an image, like a kitchen or a park. The system generates a sentence with a blank: “The ___ is on the table.” You respond out loud in your target language, e.g. “la manzana.” The app listens, checks your pronunciation, and builds on the conversation. It’s like having a tutor that adapts to your environment, making learning feel like a quest instead of homework.
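As a rough illustration of the answer-checking step, here is a minimal sketch that assumes the speech recognizer has already produced a text transcript. It only compares words, so it is not real pronunciation scoring, and the function names `normalize` and `is_correct` are hypothetical rather than taken from the LinguaLens code.

```python
import string
import unicodedata


def normalize(text: str) -> list[str]:
    """Case-fold, apply Unicode NFC, turn punctuation into spaces, split into words."""
    text = unicodedata.normalize("NFC", text).casefold()
    # Treat ASCII punctuation and the inverted Spanish marks as word separators,
    # so that "l'orange" becomes the two tokens "l" and "orange".
    separators = string.punctuation + "¿¡"
    text = text.translate(str.maketrans({ch: " " for ch in separators}))
    return text.split()


def is_correct(transcript: str, expected: str) -> bool:
    """Return True if the expected word(s) appear, in order, in the transcript."""
    said = normalize(transcript)
    target = normalize(expected)
    if not target:
        return False
    n = len(target)
    # Slide a window over the spoken words looking for the target phrase.
    return any(said[i:i + n] == target for i in range(len(said) - n + 1))
```

With this sketch, a spoken "la manzana" is accepted whether the expected answer is stored as "manzana" or "la manzana", while "la naranja" is rejected and would lead to the "listen or try again" prompt.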

How we built it

- To use Vision Pro for immersive interaction, we built the app interface for visionOS in Swift/SwiftUI.

- Google Gemini powers the image-to-prompt generation, creating dynamic fill-in-the-blank sentences.

- FastAPI serves as the backend to connect the Vision Pro client with Gemini and handle data flow.

- Speech recognition evaluates pronunciation and captures answers.

- Real-time feedback turns practice into a conversational game.
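The backend's question-generation step could look roughly like the sketch below. It is written under assumptions: the prompt wording, the JSON keys `sentence` and `answer`, and the names `build_prompt`, `parse_question`, and `Question` are all hypothetical. It makes no call to Gemini and shows only how a prompt might be assembled and how a reply might be validated before it is returned to the Vision Pro client.

```python
import json
from dataclasses import dataclass

BLANK = "___"


@dataclass
class Question:
    sentence: str  # sentence with BLANK in place of the missing word
    answer: str    # the word the learner is expected to say


def build_prompt(native: str, target: str, difficulty: str) -> str:
    """Assemble the text instruction sent alongside the image (assumed wording)."""
    return (
        "Identify one clearly visible object in the image. "
        f"Write one {difficulty}-level sentence in {native} about it, "
        f"replacing the object's name with {BLANK}. "
        'Reply with JSON only, using the keys "sentence" and "answer", '
        f"where \"answer\" is the object's name in {target}."
    )


def parse_question(raw: str) -> Question:
    """Validate the model's JSON reply and convert it to a Question."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    sentence = str(data.get("sentence", "")).strip()
    answer = str(data.get("answer", "")).strip()
    if sentence.count(BLANK) != 1:
        raise ValueError("sentence must contain exactly one blank")
    if not answer:
        raise ValueError("answer is missing")
    return Question(sentence, answer)
```

Validating the reply on the server keeps malformed model output from reaching the headset, and a failed parse can trigger a retry instead.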

Challenges we ran into

- Learning to develop for Vision Pro in Swift, a platform we found more restrictive and harder to work with than other AR/VR headsets.

- Balancing performance with limited tokens for LLM calls.

- Handling privacy concerns around image processing on Vision Pro.

- Integrating Gemini with FastAPI and speech recognition smoothly in real time.

- Managing scope — we had to focus on the most impactful core features.

Accomplishments that we're proud of

- We built an interactive prototype that actually lets users learn words by talking to their surroundings.

- We created a smooth end-to-end experience: from image recognition, to sentence generation, to spoken feedback.

- We pushed the boundaries of what Vision Pro can do for education.

What we learned

- The importance of designing for natural learning, not just memorization.

- How to integrate multiple technologies — Vision Pro, VisionOS, Gemini, FastAPI, and speech recognition — into one cohesive experience.

- How small, well-designed interactions (like fill-in-the-blank prompts) can make AI-powered learning feel fun and approachable.

What's next for LinguaLens

- Expanding to more languages and difficulty levels.

- Technology and time permitting, incorporating live view and 3D eye-tracking for object selection.

- Incorporating a reward or daily task system to offer users more incentives to build learning habits.

- Adding multiplayer or cooperative modes where learners practice together.

- Building a mobile version so learning isn’t limited to Vision Pro.

- Enhancing personalization — adapting prompts to each learner’s level and progress.

- Adding features that let people test their accents or that support speech therapy.
