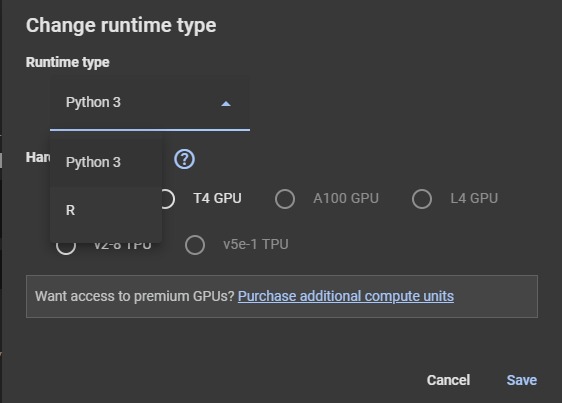

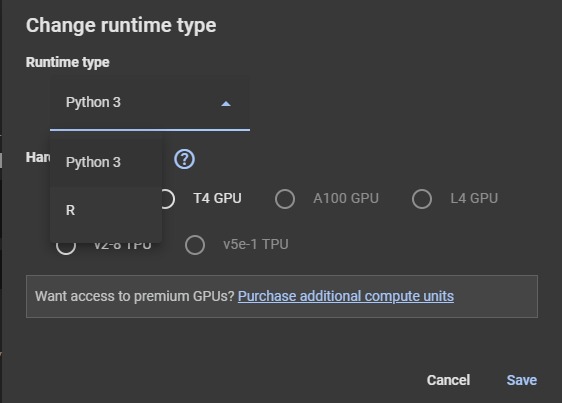

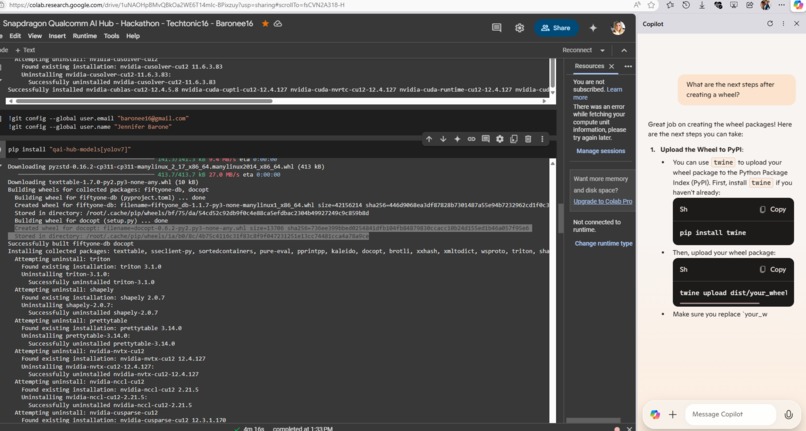

These are the alternative runtime options available when executing a script (I am running this from a Google Colab notebook).

-

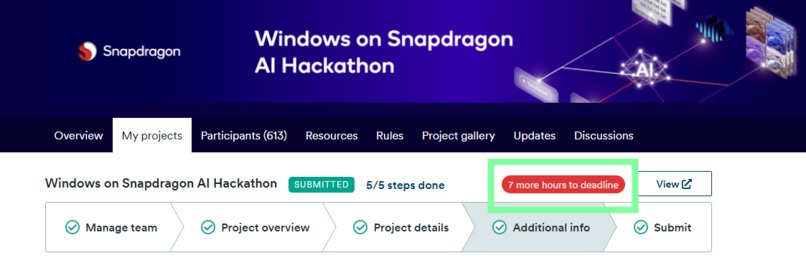

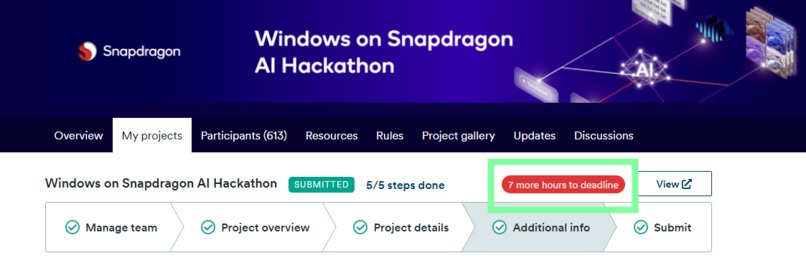

Deadline approaching. Good luck, everyone!

-

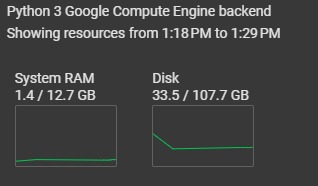

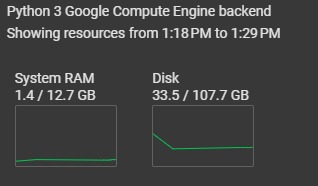

Resources in Google Colab while installing YOLOv7 on a Windows 11 machine

-

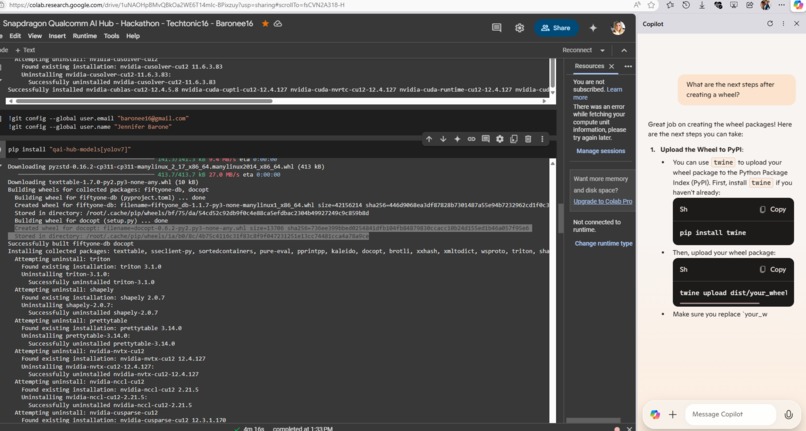

Analyzing my pip install with Copilot. Twine what? Haha

-

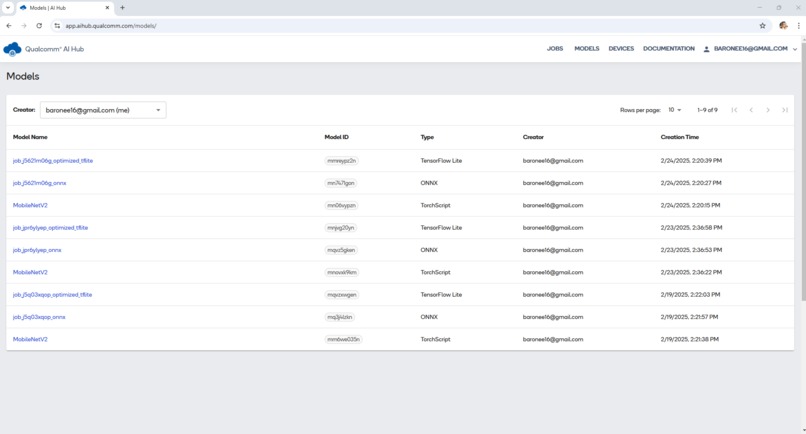

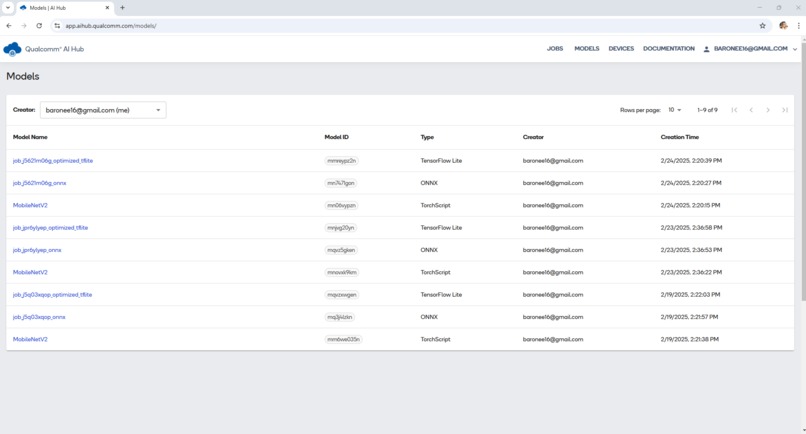

https://app.aihub.qualcomm.com/models/

Inspiration

Thank you to all the coordinators and support staff—this was an incredible experience. I recently began studying Large Language Models (LLMs), and this hackathon became the perfect opportunity to explore them further. I’m grateful to have discovered this challenge through continued research.

What It Does

Generative AI Assistants: Using LLMs, I created intelligent assistants and personalized AI-driven experiences. The customizable model can be easily adapted and integrated into various business environments.

Qualcomm AI Hub Jobs: Using Qualcomm AI Hub, I created three detailed jobs that generate layer-by-layer execution statistics, down to the millisecond, to help baseline model performance for on-device deployment. These jobs include:

They support tasks such as image classification, object detection, semantic segmentation, pose estimation, and more. The optimized model and functional Colab notebook are included in this submission for download and testing.
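To illustrate how layer-by-layer statistics can help baseline a model, here is a minimal sketch. It does not call Qualcomm AI Hub at all: the `LayerTiming` record, the `summarize_profile` helper, and the sample numbers are hypothetical stand-ins for whatever a real profile report exposes, so treat the field names as assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerTiming:
    """One row of a per-layer profile (hypothetical shape, not the AI Hub schema)."""
    name: str
    compute_unit: str  # e.g. "NPU", "GPU", "CPU"
    time_ms: float


def summarize_profile(layers, top_k=3):
    """Return total latency, time per compute unit, and the slowest layers."""
    total_ms = sum(layer.time_ms for layer in layers)
    per_unit = {}
    for layer in layers:
        per_unit[layer.compute_unit] = per_unit.get(layer.compute_unit, 0.0) + layer.time_ms
    slowest = sorted(layers, key=lambda layer: layer.time_ms, reverse=True)[:top_k]
    return {"total_ms": total_ms, "per_unit_ms": per_unit, "slowest": slowest}


# Made-up sample data for illustration only; these are not measured results.
SAMPLE_LAYERS = [
    LayerTiming("conv_stem", "NPU", 0.25),
    LayerTiming("bottleneck_1", "NPU", 0.5),
    LayerTiming("global_pool", "CPU", 0.125),
    LayerTiming("classifier", "NPU", 0.125),
]

if __name__ == "__main__":
    summary = summarize_profile(SAMPLE_LAYERS, top_k=2)
    print(f"total: {summary['total_ms']:.3f} ms")
    for unit, ms in summary["per_unit_ms"].items():
        print(f"{unit}: {ms:.3f} ms")
    for layer in summary["slowest"]:
        print(f"slow layer: {layer.name} ({layer.time_ms:.3f} ms)")
```

A summary like this makes it easier to see which layers dominate latency and whether any of them fall back to a slower compute unit, which is the kind of baseline the jobs above are meant to provide.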

How I Built It

When I saw “On-Device,” my first thought was the Raspberry Pi. I’ve always been fascinated by small form-factor devices and the challenge of optimizing them for seamless performance. A core part of my process was ensuring the device could properly recognize assets and operate efficiently under constraints.

I iteratively refined my AI chat application by feeding LLMs specific context, testing with technical questions, and improving responses based on interaction. Once optimized, the model can be downloaded, zipped, and shared for review. Qualcomm AI Hub and Azure AI Foundry enabled rapid experimentation and performance analysis.
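The refinement loop described above can be sketched roughly as follows. This is an illustration under assumptions, not the application's actual code: `build_messages`, `run_checks`, and the stub `fake_model` are hypothetical names, and a real setup would replace the stub with a call to whichever LLM endpoint is in use.

```python
def build_messages(context, question):
    """Pair fixed context with a user question in the common chat-message shape."""
    return [
        {"role": "system", "content": f"Answer using this context:\n{context}"},
        {"role": "user", "content": question},
    ]


def run_checks(model, context, checks):
    """Ask each test question and record whether the expected keyword appears."""
    results = {}
    for question, expected_keyword in checks:
        answer = model(build_messages(context, question))
        results[question] = expected_keyword.lower() in answer.lower()
    return results


def fake_model(messages):
    """Stub standing in for a real LLM call; it just echoes the supplied context."""
    return messages[0]["content"]


if __name__ == "__main__":
    context = "Profile jobs report per-layer execution time for a target device."
    checks = [
        ("What does a profile job report?", "per-layer"),
        ("Which cloud region is used?", "region"),
    ]
    for question, passed in run_checks(fake_model, context, checks).items():
        print(f"{'PASS' if passed else 'FAIL'}: {question}")
```

Questions that fail point to gaps in the supplied context, which can then be expanded before the next round of testing.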

Challenges I Ran Into

Choosing the best graphical interface was initially challenging. Restarting with a new approach and refocusing my efforts significantly improved my results.

Accomplishments I’m Proud Of

Successfully integrating LLMs, and completing much of that work on the final day, was a major milestone. I plan to continue refining the project beyond the submission deadline to enhance functionality and reliability.

What I Learned

Always review all resources on the Devpost page before beginning. My excitement caused me to dive in early, which taught me to pace myself with preparation.

Tech Hub Vision

I am developing a comprehensive technical, educational, and cybersecurity training hub for virtual collaboration. Through Qualcomm’s support, I successfully created jobs for models such as MobileNetV2 targeting the Samsung Galaxy S24. The final hours spent optimizing LLMs and packaging them for deployment were incredibly rewarding.

This is some of the most exciting technology I’ve encountered in my career, and I’m grateful to have experienced it through this Qualcomm challenge.

Additional Planned Features

Tech Support Channel: AI-powered support chatbot, automated ticketing, a knowledge base, and a “lessons learned” archive.

Customizable Training Environments: Pre-configured hubs for company-specific needs (e.g., Azure training for a 12-person team), deployable on-device or via cloud.

Next Steps

Continue developing and refining LLMs, improve model personalization, explore deeper integrations with Qualcomm’s AI tools, and build toward a virtual training center for remote education and collaboration.

Works Cited

Microsoft, “Accelerating AI reasoning for developers on Azure AI Foundry,” Azure Blog. Accessed February 24, 2025.

Built With

- baronee16

- builtwith

- builtwithqualcomm

- builtwithsnapdragon

- techtonic16
