-

-

Splash page

-

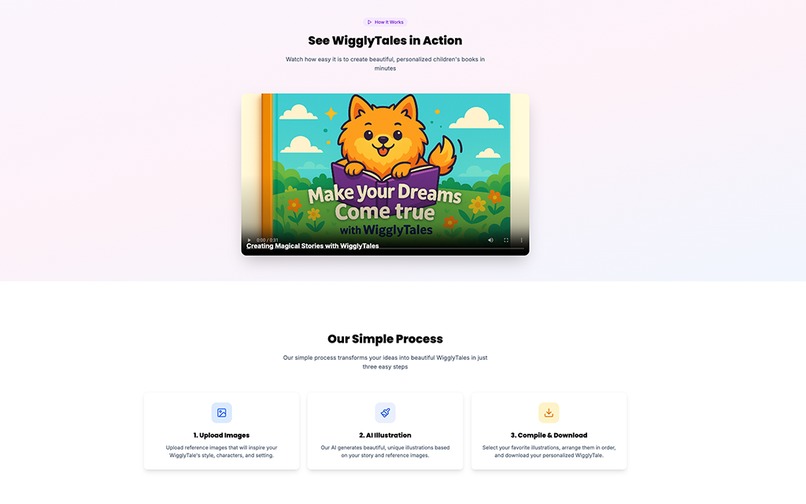

Splash 2

-

Splash 3

-

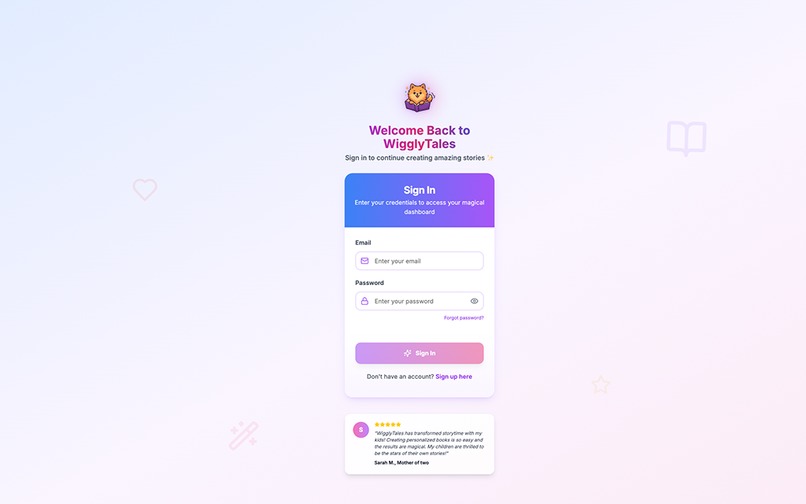

Sign in page with fully functionality of password reset

-

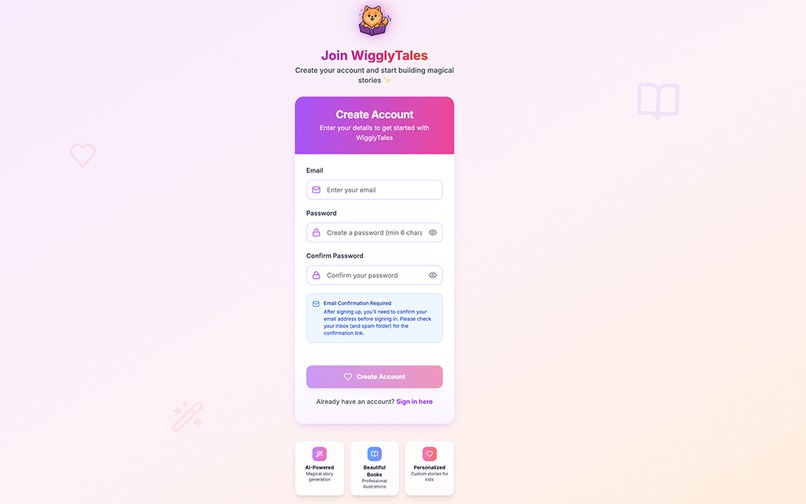

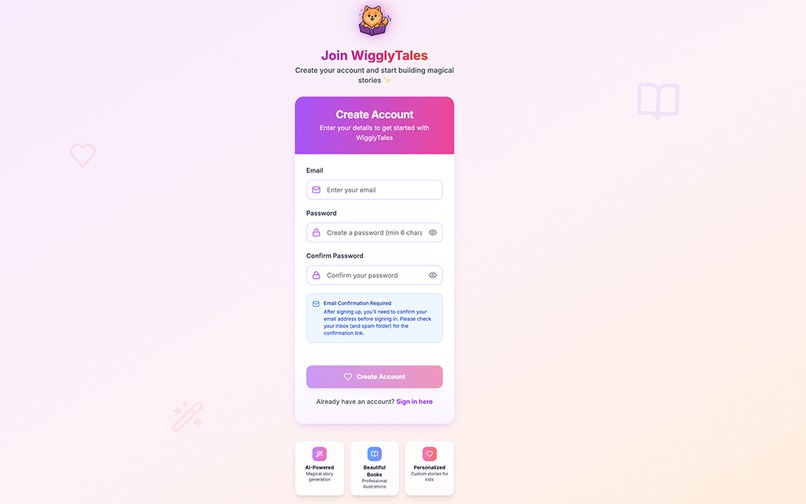

Sign up page

-

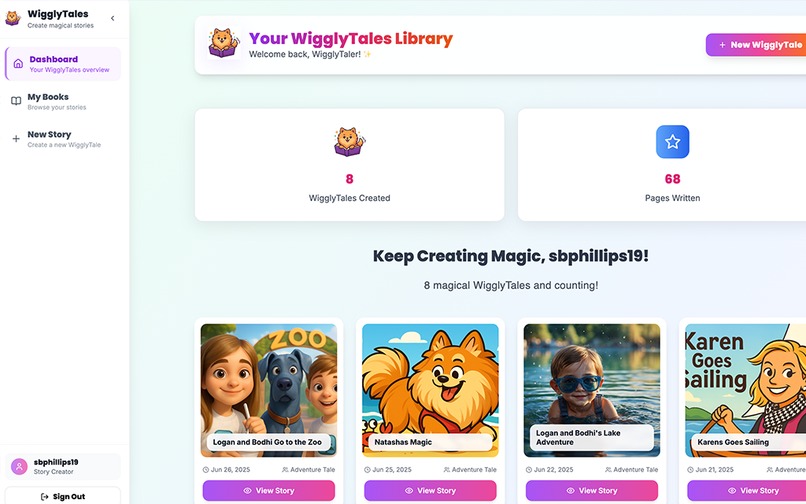

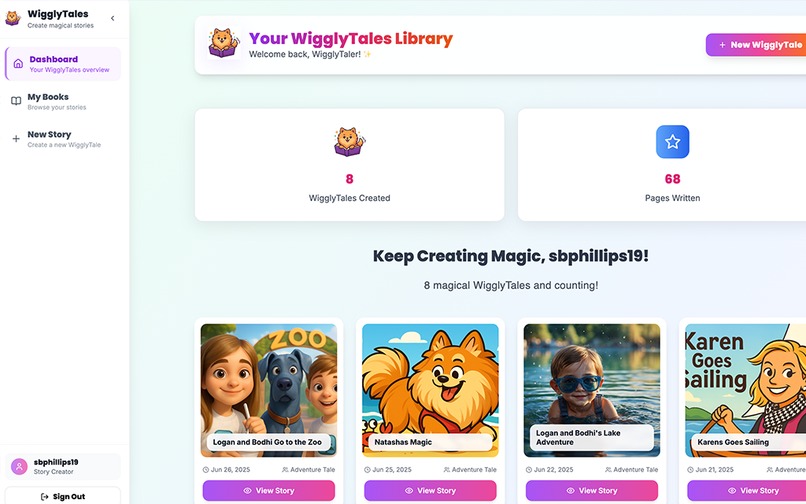

Dashboard page page with lots of books that I created for family

-

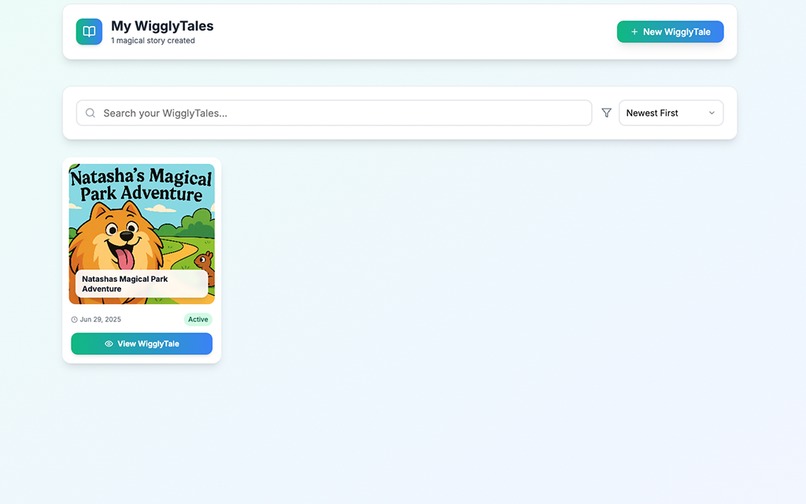

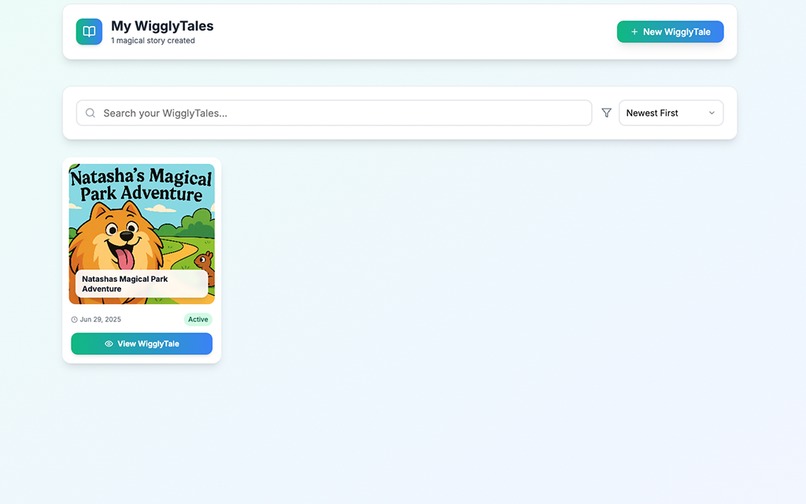

My Books Page With New account and 1 book created

-

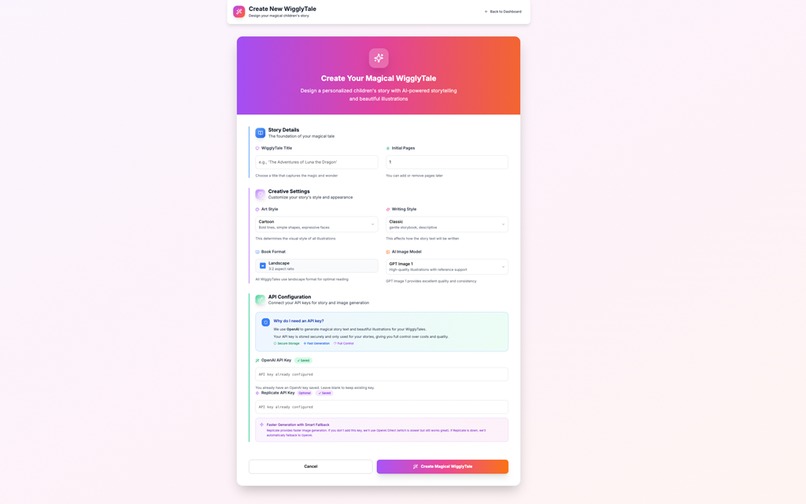

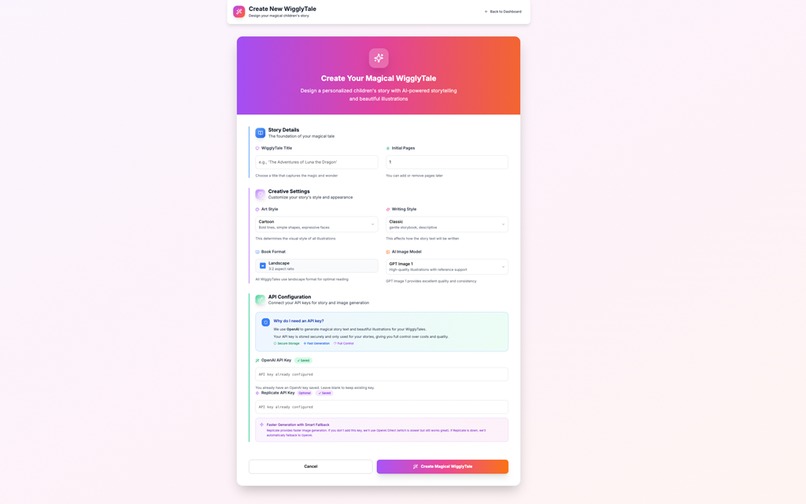

Create a new book

-

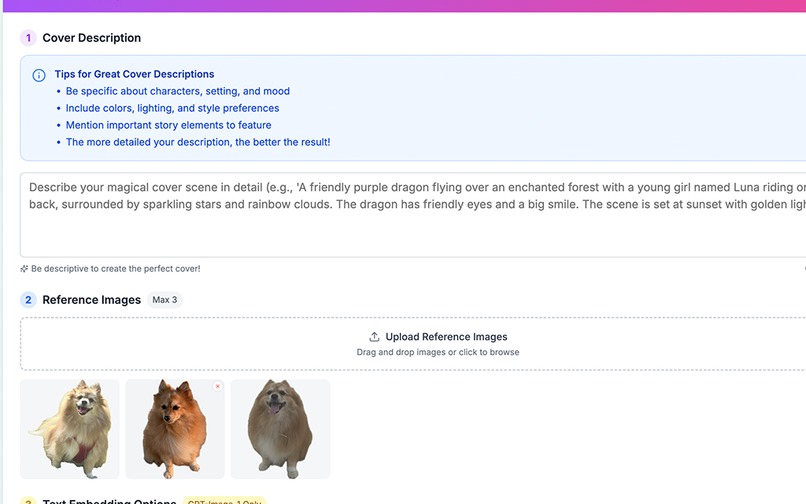

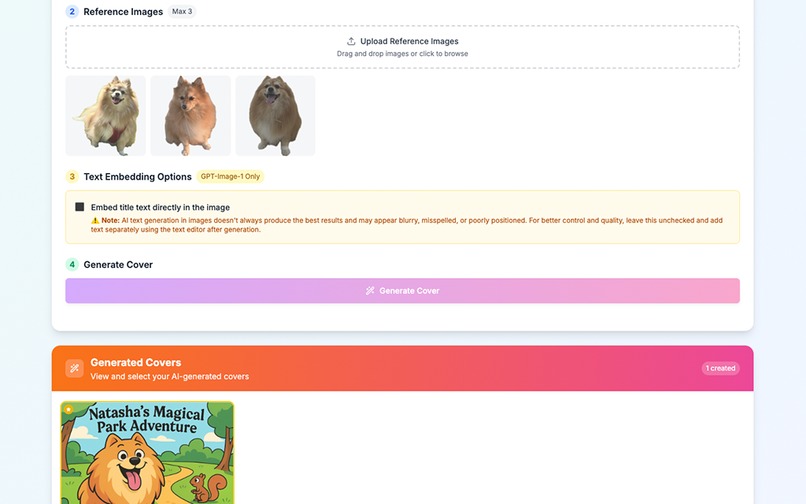

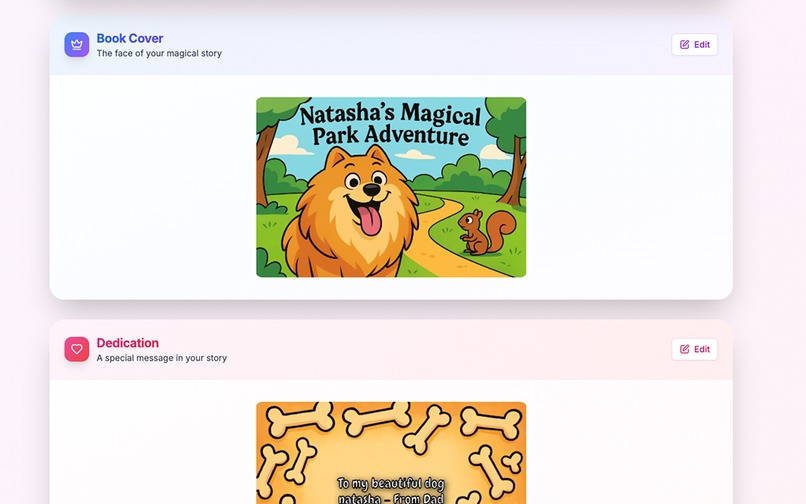

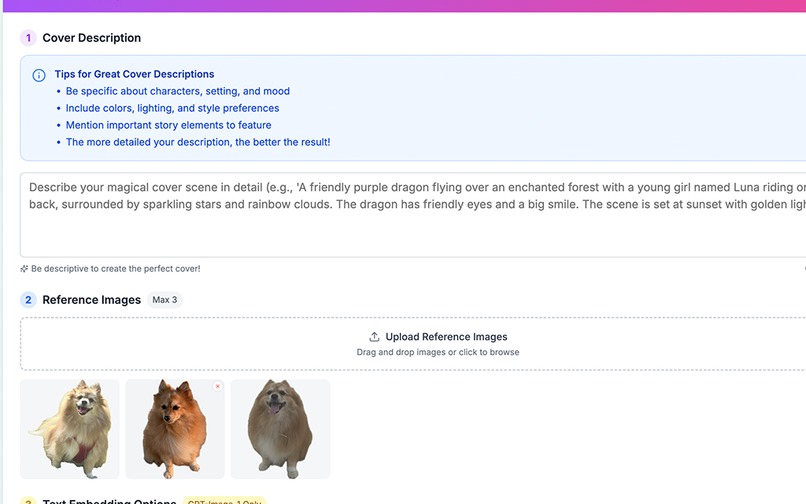

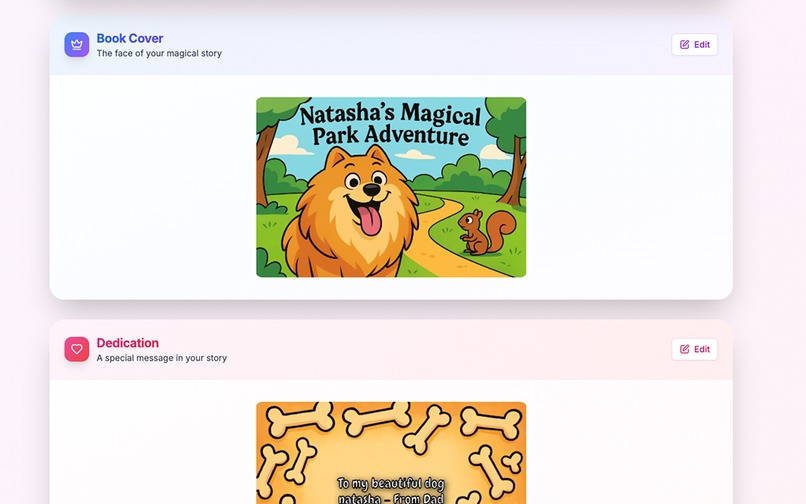

Create cover

-

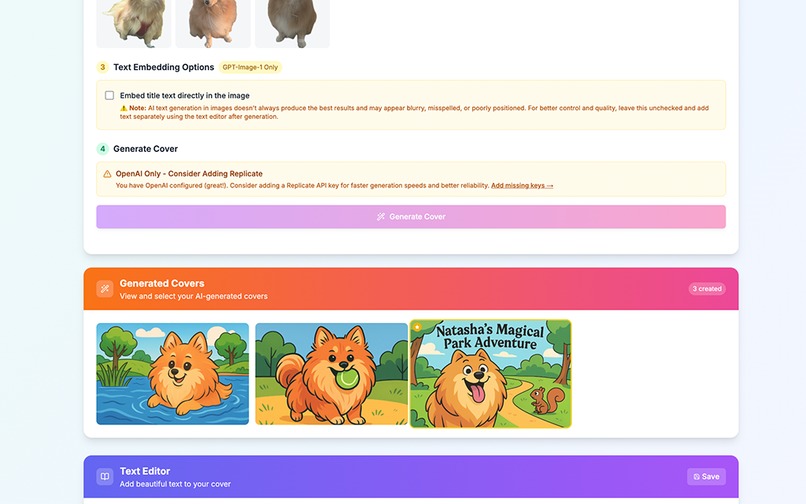

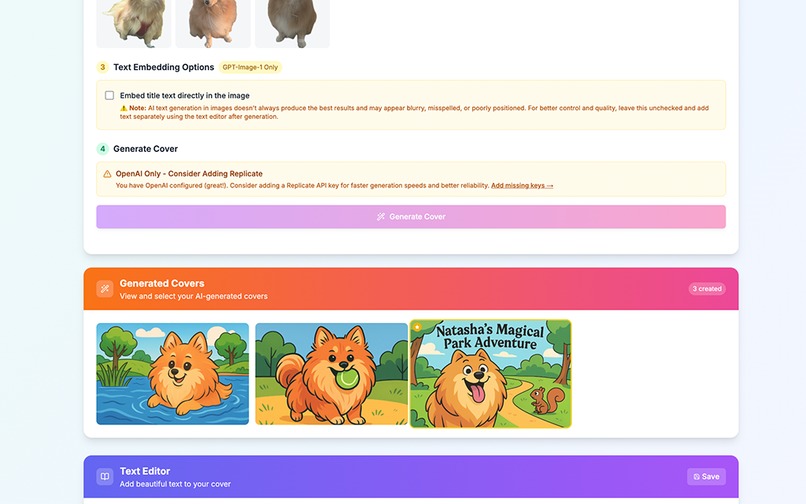

Create Cover and with Generated Text And multiple covers

-

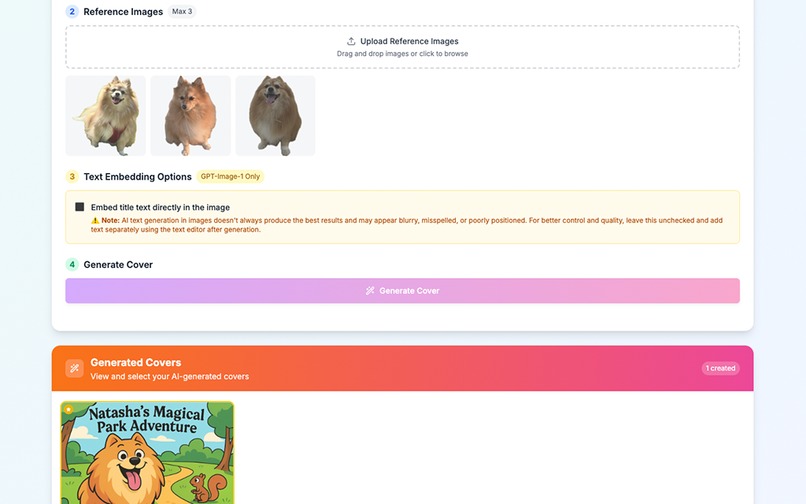

Cover page with generated text

-

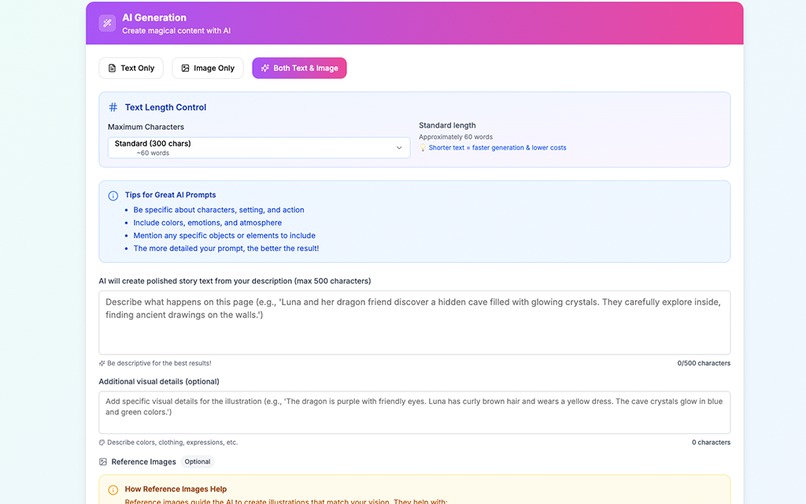

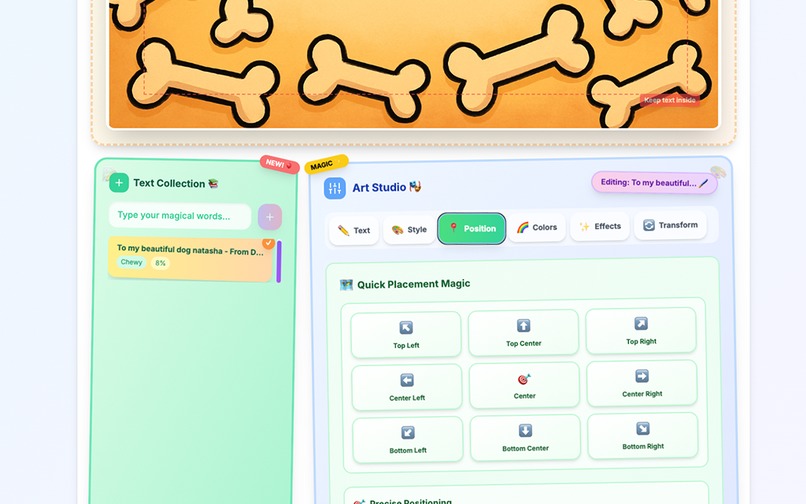

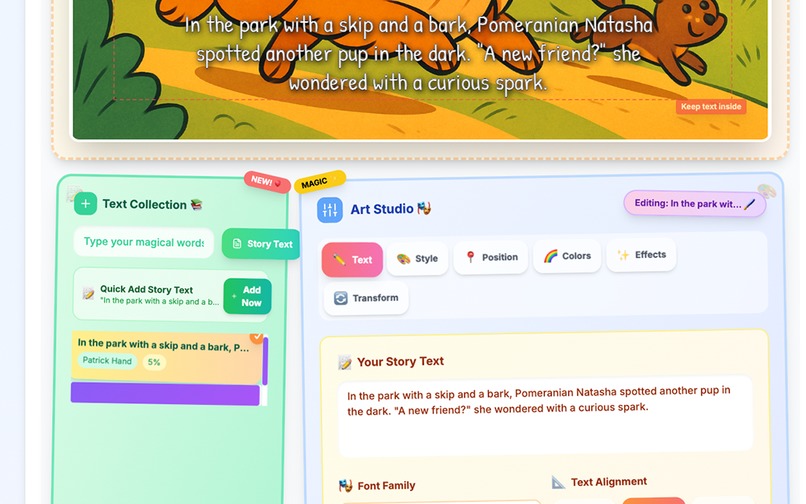

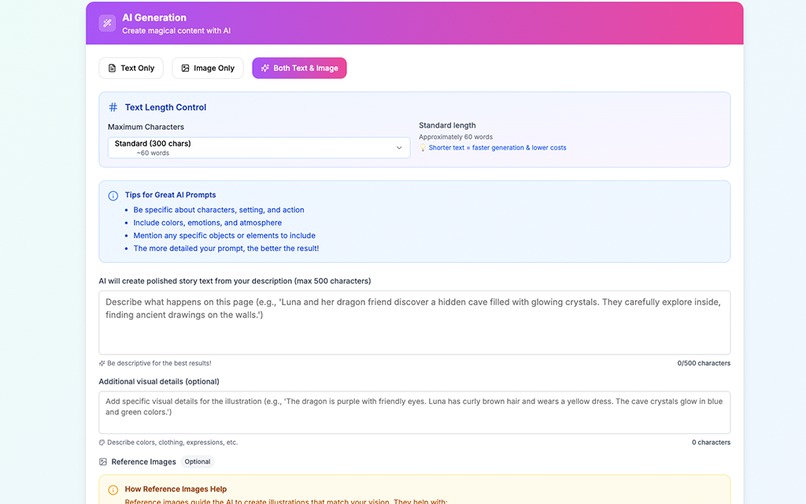

Creating content

-

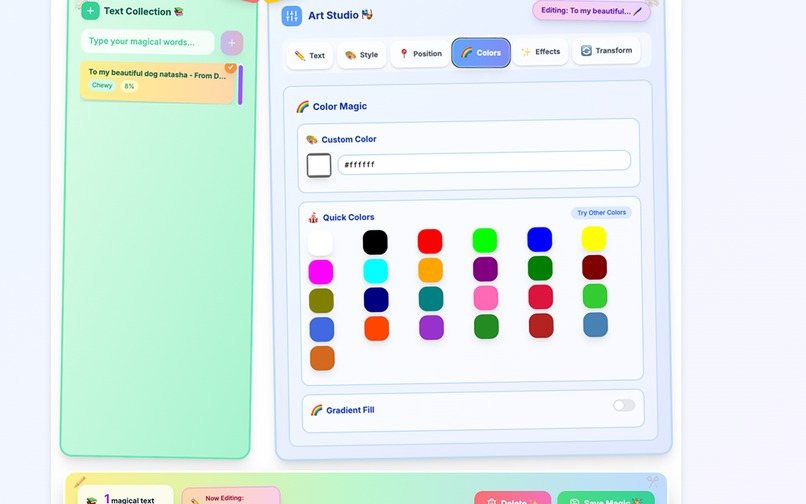

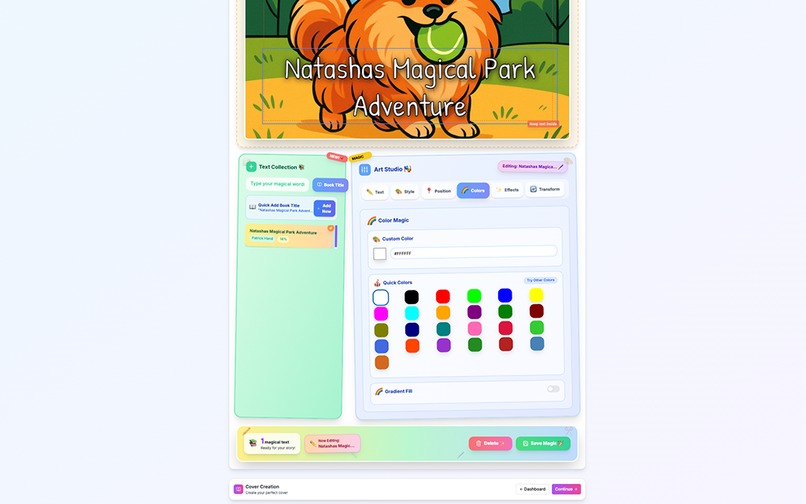

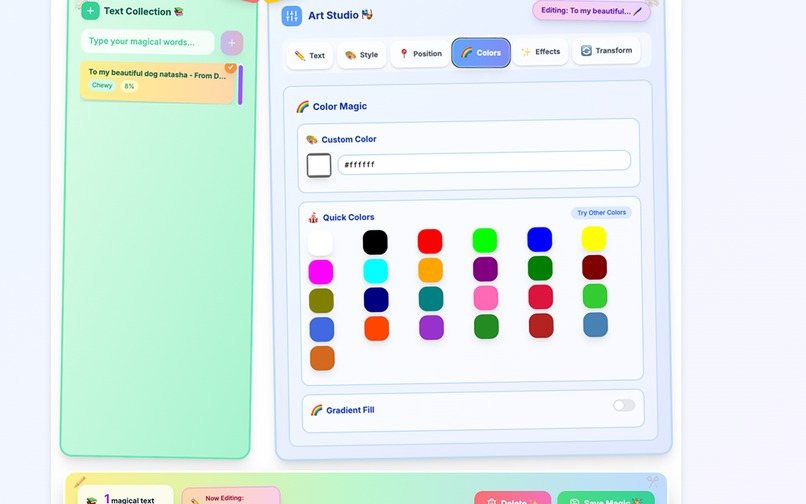

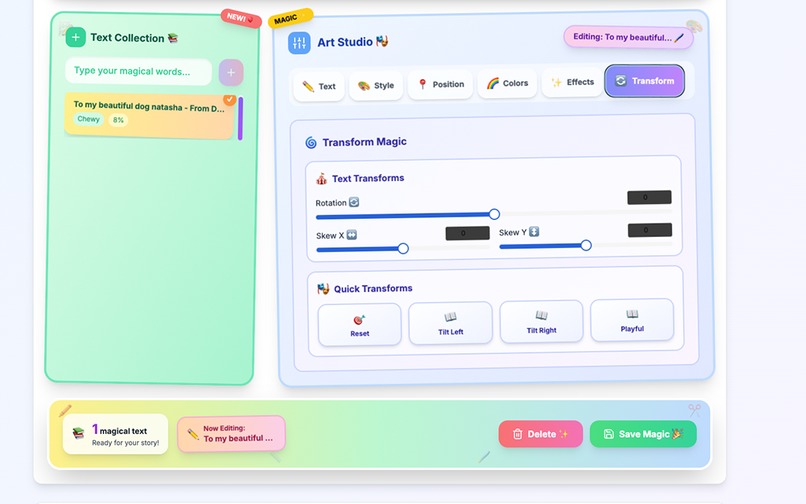

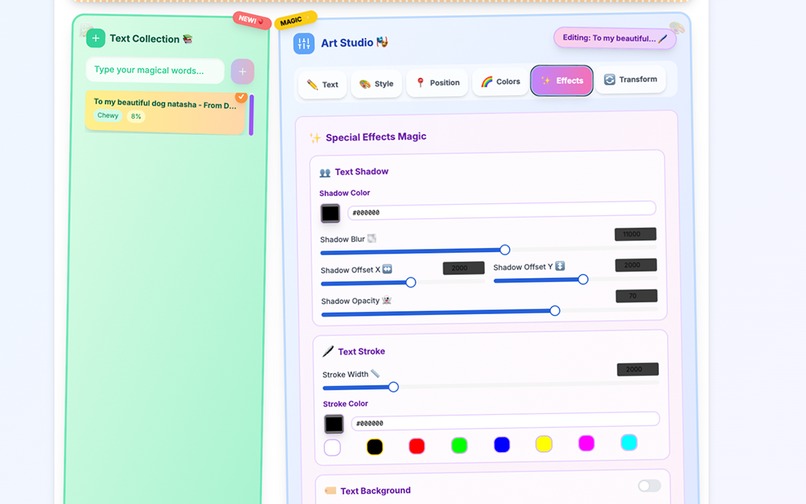

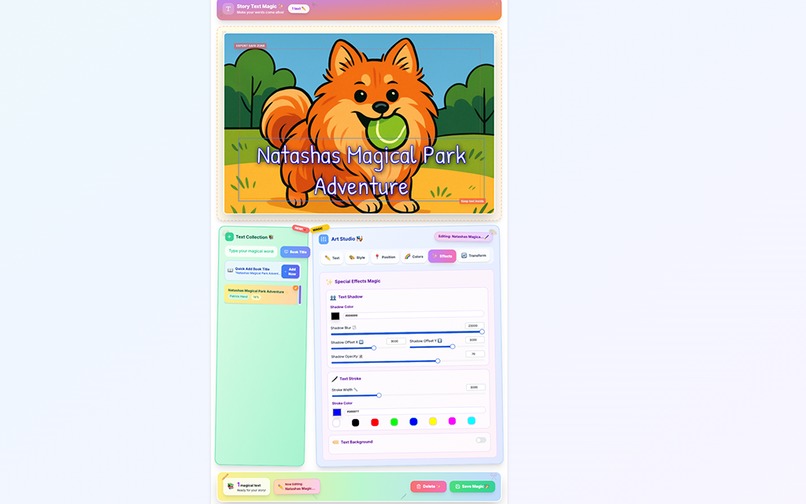

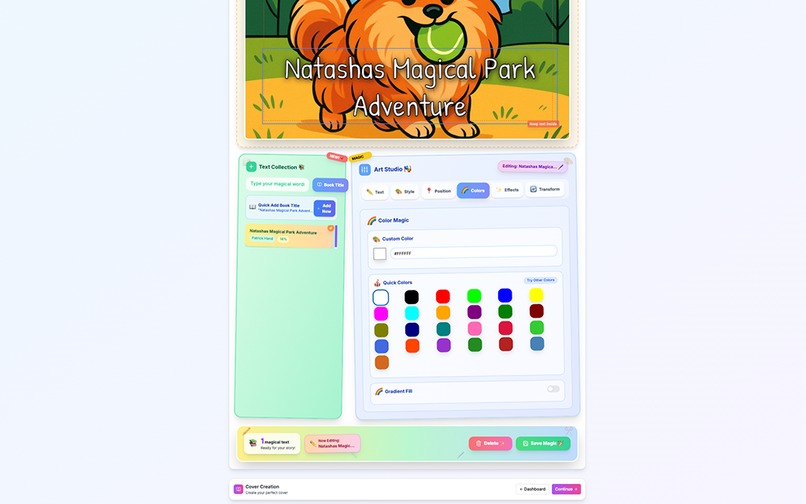

Editor colors

-

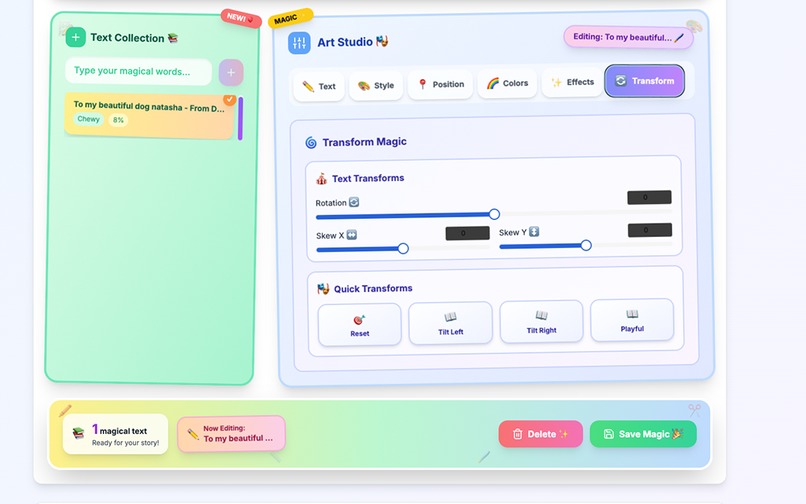

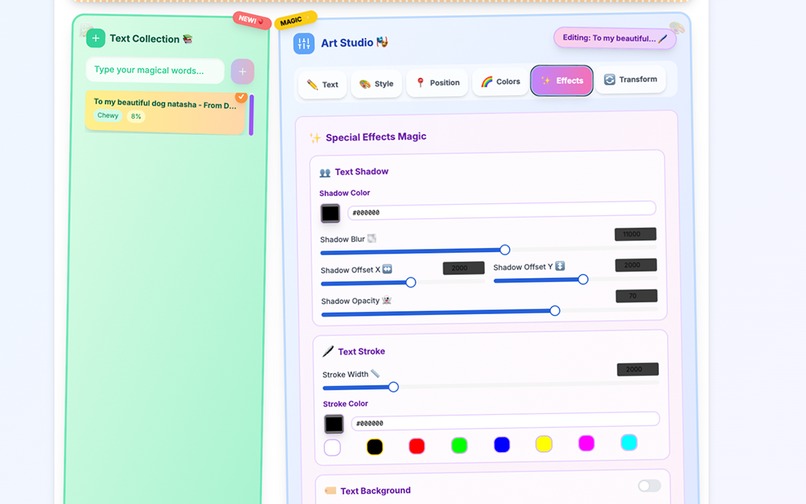

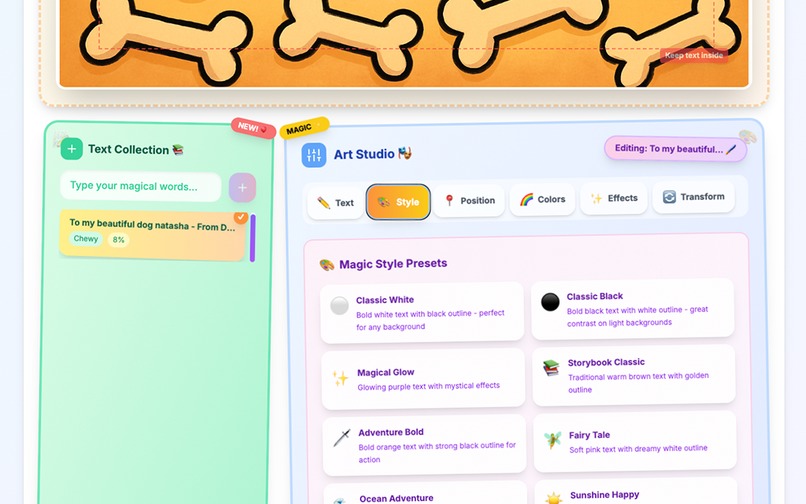

Editor Shadows

-

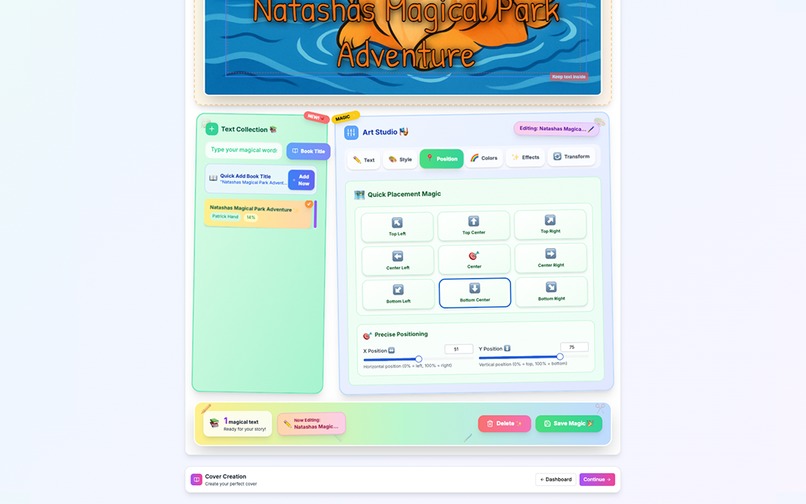

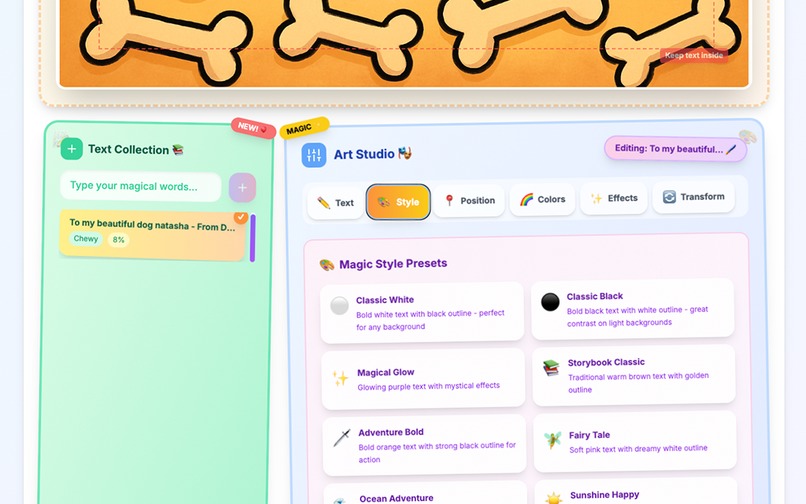

Editor text styles

-

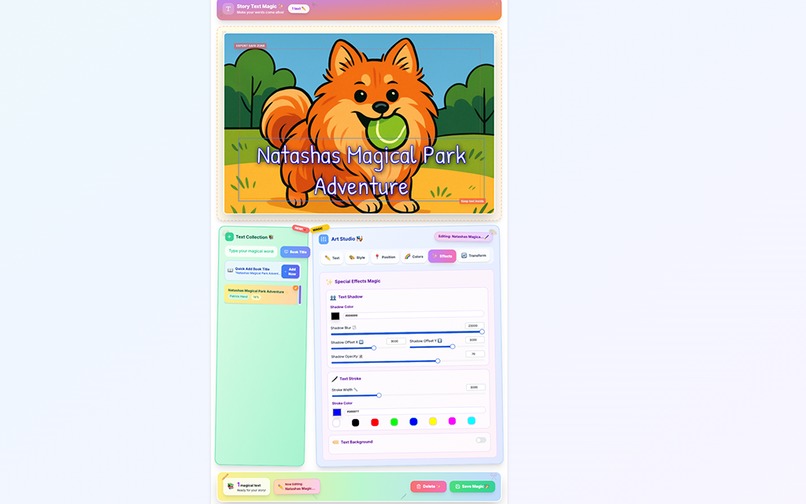

Studio Full Page

-

Studio Full Page

-

Studio Full Page

-

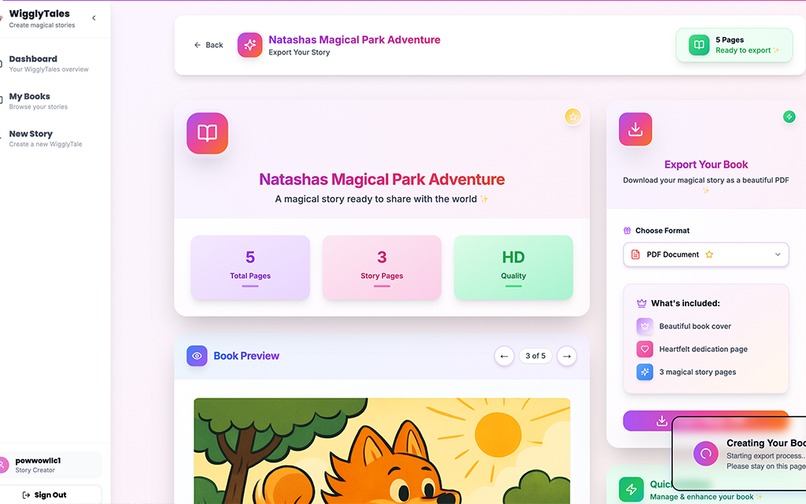

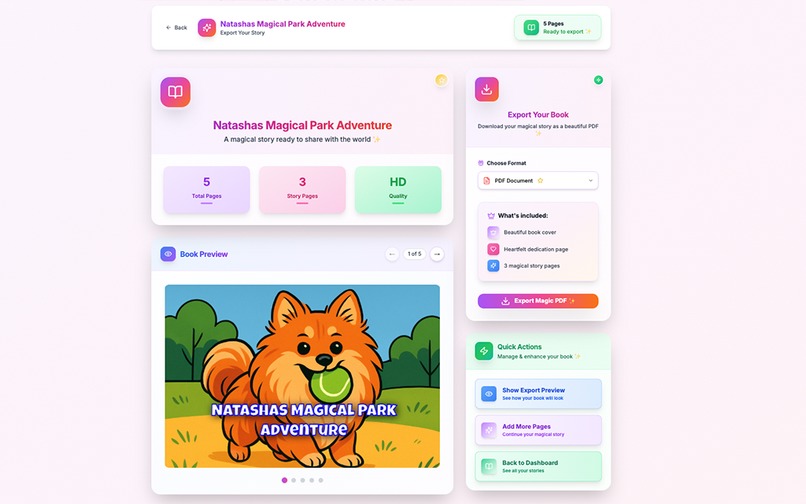

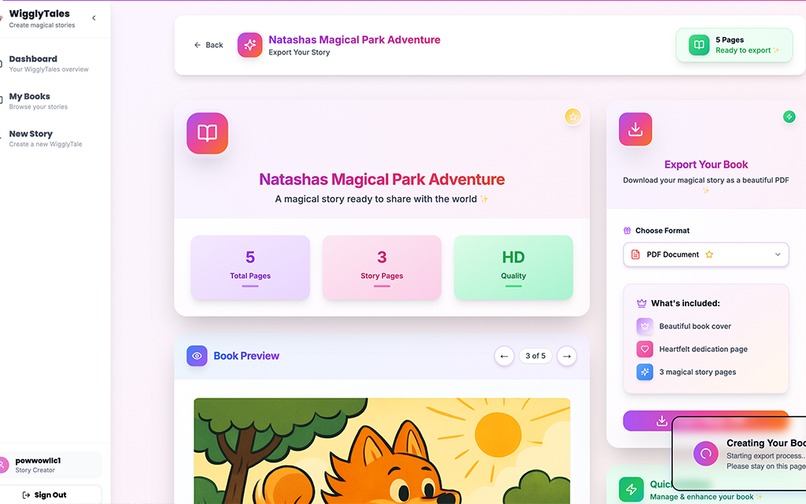

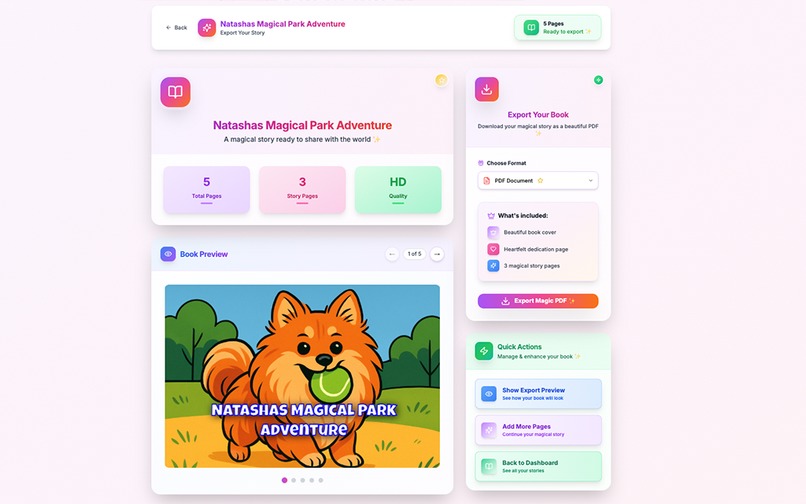

Export Page

-

Editor styles

-

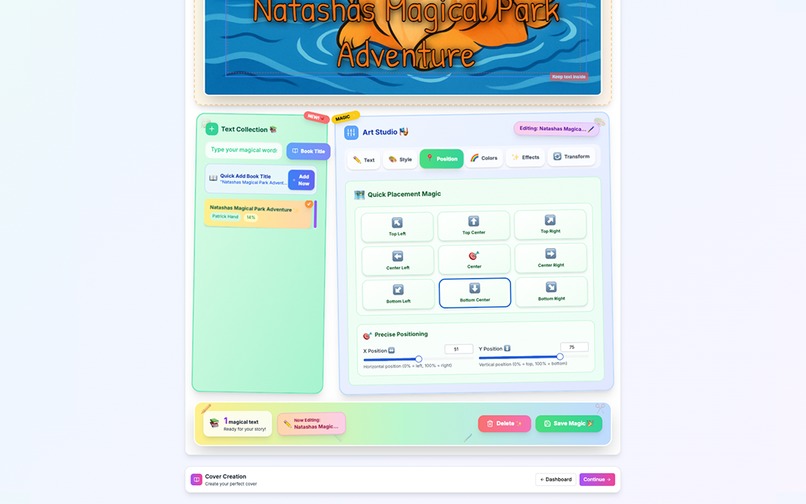

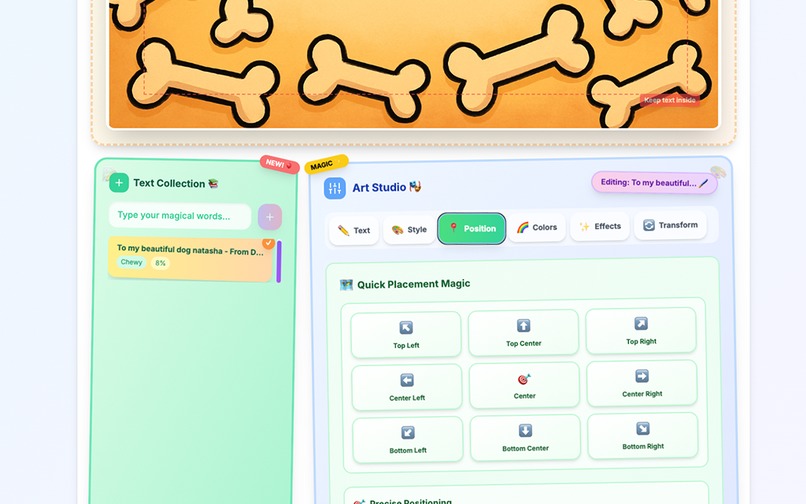

Editor positioning

-

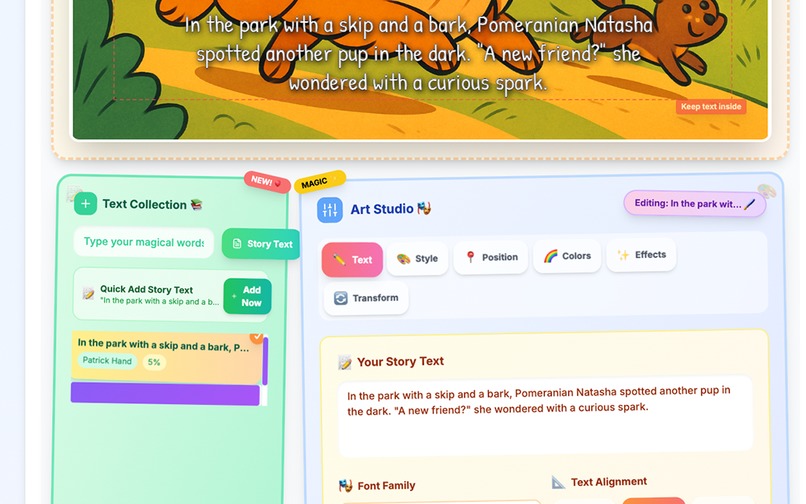

Editing content

-

Summary page

-

Export Page full

-

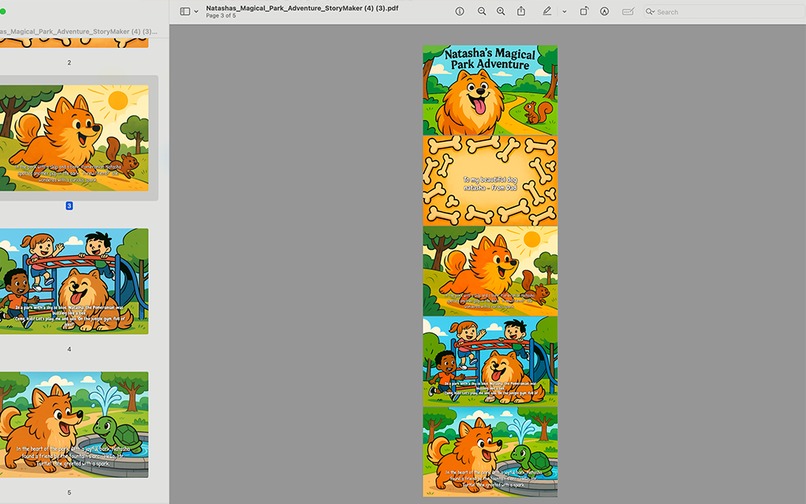

Exported PDF

Inspiration

Over a year ago, I created a book for my niece and nephew using Midjourney and had it printed. Reading it to them quickly became a favorite activity; they loved how personal it felt. But the process was time-consuming: between getting consistent characters and building layouts in Canva, it took me 3 days to get the finished product, even though I was already very experienced with these tools. I wanted to create a much better experience, one that lets anyone easily create beautiful, personalized books in minutes. I personally love thoughtful gifts, and a personalized book shows you put time and effort into thinking about your loved ones in a very special way. I've always wanted to build this out to make it easier for people who don't have the technical skills or time to create their own books.

What it does

WigglyTales lets anyone create fully customized children’s books using AI. Users can upload photos, generate consistent characters, pick from popular fonts and styles, and fine-tune their stories with an intuitive canvas editor. With one click, you can export your story as a printable PDF. Everything is built to be fast, magical, and easy to use — whether you're making a bedtime book, a gift, or an educational tool.

How we built it

I used Bolt to build the app with Next.js and Tailwind. OpenAI powers both the image and text generation: after testing over 70 APIs and models (from Replicate, Leonardo, Midjourney, etc.), GPT was the only one producing consistent results. As credits got low, I created a second Bolt app using React-Konva to build a flexible canvas for positioning text, changing fonts, adding backgrounds, and more. React-PDF didn’t render the styles and layout properly, so I dropped it for PDF export. I also used Cursor and Claude Code for some portions, but over 85% of the app was built directly with Bolt, using more than 40M credits in 3 weeks. I had a fully working application in Bolt before I uploaded it to GitHub and brought in these other tools.

Challenges we ran into

Switching from Fabric.js to React-Konva was tough, especially when it came to rendering and managing styled text accurately. I tested over 100 different PDF exports using React-PDF, but it consistently misaligned text or stripped key styles — bold, line height, spacing — no matter how much I tweaked the layout. With limited time and a need for something reliable, I ultimately pivoted to exporting the pages directly from DOM elements. It wasn’t the most elegant solution, but it worked consistently and allowed me to ship.
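As a rough illustration of the DOM-based export, here is a minimal sketch that builds an options object for html2pdf.js (listed under Built With) so the PDF page matches the on-screen page size. The helper name `buildExportOptions`, the 96 px-per-inch conversion, and the default scale and quality values are assumptions for illustration, not the app's actual code.

```typescript
// Hypothetical helper: names and defaults are illustrative, not WigglyTales code.
interface PageSizePx {
  width: number;
  height: number;
}

interface ExportOptions {
  margin: number;
  filename: string;
  image: { type: "jpeg" | "png"; quality: number };
  html2canvas: { scale: number };
  jsPDF: {
    unit: "in";
    format: [number, number];
    orientation: "portrait" | "landscape";
  };
}

// CSS defines 96 reference pixels per inch.
const CSS_PX_PER_INCH = 96;

function buildExportOptions(
  page: PageSizePx,
  filename: string,
  scale = 2, // render the canvas at 2x for sharper text (assumed default)
): ExportOptions {
  if (page.width <= 0 || page.height <= 0) {
    throw new Error("page size must be positive");
  }
  const widthIn = page.width / CSS_PX_PER_INCH;
  const heightIn = page.height / CSS_PX_PER_INCH;
  return {
    margin: 0,
    filename,
    image: { type: "jpeg", quality: 0.95 },
    html2canvas: { scale },
    jsPDF: {
      unit: "in",
      format: [widthIn, heightIn],
      // Keep orientation consistent with the page shape.
      orientation: widthIn > heightIn ? "landscape" : "portrait",
    },
  };
}

// Browser-only usage, assuming html2pdf.js is loaded and `pageEl` is the
// rendered page element:
//   html2pdf().set(buildExportOptions({ width: 768, height: 768 }, "book.pdf"))
//     .from(pageEl).save();
```

Keeping the size math in a pure function makes it easy to check without a browser; only the final `html2pdf()` call needs the DOM.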

A personal challenge: my uncle passed away unexpectedly this week. I hopped on a plane immediately and traveled internationally to be with family through the grieving process, and while I couldn’t dedicate as much time to the project, I stayed focused and pushed forward with what I had. In the final stretch, I used Claude, Cursor, and Bolt simultaneously, creating multiple branches and merging PRs left and right for small tweaks, but the vast majority of the project was built using Bolt.

Image generation in general was a challenge. I wanted to find the cheapest model that was also the most consistent with characters. I even worked on creating custom models, but while these work great for super lifelike images, I found them inconsistent when converting people or animals into different sketch styles. In terms of actual code, I originally started with Replicate for image generation, but it broke entirely for over a full day: all requests failed, making it unusable at a critical early stage. That led me to switch to using the gpt-image-1 model directly for its reliability, despite the slower generation times. As the deadline approached and gpt-image-1 became too slow, I reintroduced Replicate into the app. It’s generally faster because it uses optimized hosting, runs on dedicated GPUs, has less overhead, and avoids some of OpenAI’s latency tradeoffs for safety and consistency. I decided to give users the option to generate images using either engine, depending on whether they prefer speed or consistency.
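The two-engine setup could be wired up along these lines. This is a minimal sketch, not the app's code: the `Engine` labels, the `generateImage` function, the 60-second default, and especially the automatic fallback to the other engine on failure or timeout are assumptions added for illustration (the write-up only says the user picks an engine). The generator functions are injected so no real API is called.

```typescript
// Hypothetical sketch: engine labels, names, and the fallback are assumptions.
type Engine = "fast" | "consistent";

// A generator takes a prompt and resolves to an image URL.
type Generate = (prompt: string) => Promise<string>;

// Reject if `work` does not settle within `ms` milliseconds.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(
      () => reject(new Error(`timed out after ${ms} ms`)),
      ms,
    );
    work.then(
      (value) => {
        clearTimeout(timer);
        resolve(value);
      },
      (err) => {
        clearTimeout(timer);
        reject(err);
      },
    );
  });
}

async function generateImage(
  prompt: string,
  preferred: Engine,
  engines: Record<Engine, Generate>,
  timeoutMs = 60_000,
): Promise<{ url: string; engine: Engine }> {
  const fallback: Engine = preferred === "fast" ? "consistent" : "fast";
  try {
    const url = await withTimeout(engines[preferred](prompt), timeoutMs);
    return { url, engine: preferred };
  } catch {
    // Preferred engine failed or timed out: try the other engine once.
    const url = await withTimeout(engines[fallback](prompt), timeoutMs);
    return { url, engine: fallback };
  }
}
```

Returning the engine that actually produced the image lets the UI tell the user when a fallback happened.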

Deployment was another challenge. I spent nearly 8 hours trying to get things working on Netlify, staying up until 4am troubleshooting. I purchased Netlify Pro in an attempt to fix this, but image generation still kept timing out due to background function limits, and while I requested an increase from Netlify, it wouldn’t come in time for the hackathon deadline. Ultimately, I had to switch to Vercel at the last minute to make sure the demo worked in production before I headed off to bed, and now everything seems to work smoothly. I wish I could have gotten it to work on Netlify, but I'm running on fumes and want to release a working product today.

Accomplishments that we're proud of

- Building a fully functional, user-ready application in under 3 weeks.

- Learning new tools like Supabase for backend and storage integration.

- Using vibe coding to develop the entire product: I didn’t touch the database logic, and only lightly adjusted styles.

- Overcoming major blockers and not giving up, especially when the original idea (text inside the image) failed due to GPT limitations.

- Facing crunch time once again, with lots of issues popping up, and keeping my composure even into the very early morning to get a finished product out.

- Delivering a polished project I’m proud to share and excited to keep building on.

What we learned

One of the biggest things I learned was how much easier it is to move fast when you break big features into separate Bolt apps. Trying to do everything in one place quickly became messy and expensive. Once I started splitting things out, each part became cheaper to run, easier to test, and way more manageable overall. It also made it simpler to debug when something went wrong, because I could isolate the problem instead of digging through a huge chain of steps. AI does best when it has less context to handle, so breaking the work into individual chunks and then combining them works way better.

AI image generation was also a huge learning curve. I didn’t realize how much of a tradeoff it would be: the fast models gave quick results but were super hit-or-miss, while slower ones like gpt-image-1 took way more time but were way more consistent, especially when I wanted the same character to appear across multiple pages. I also spent a lot of time just getting better at prompting. Tiny tweaks made a big difference, and I learned how important it is to guide the model with clear instructions instead of just hoping it gets the vibe. The prompts for generating images with the text inside took a very long time to get working well. That feature is no longer fully used because AI-rendered text is still iffy, but the option remains available to users on the cover page.

Honestly, I didn’t expect how far I could get with just vibe coding. I built working apps without writing traditional logic, just through enough iteration and feedback. It felt more like shaping something collaboratively than writing code line-by-line, and it ended up being surprisingly powerful. I also touched lots of new technologies and packages I hadn't used before.

Lastly, I learned the hard way how important it is to deploy earlier. I hadn’t really hit deployment issues in the past, so I assumed it would be smooth. But when I finally deployed near the end, a bunch of unexpected problems came up: function limits, missing environment configs, things that just didn’t behave like they did locally. I had assumed that as long as the application ran locally and the build worked properly, it would run the same in production (other than obvious browser differences). If I’d deployed earlier, I could’ve caught those with way less stress. Now I know: just because it works in Bolt doesn’t mean it’ll work everywhere, and the earlier you find that out, the better. Deployment used to be something I never gave much thought to and left until the end. Not anymore.
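One cheap guard against the missing-environment-config class of problems is to check required variables at startup and fail loudly. The following is a minimal sketch; the variable names in `REQUIRED` are hypothetical placeholders, not the app's real configuration.

```typescript
// Hypothetical sketch: the variable names below are placeholders.
type Env = Record<string, string | undefined>;

// Return the names of required variables that are unset or blank.
function missingEnvVars(required: readonly string[], env: Env): string[] {
  return required.filter((name) => {
    const value = env[name];
    return value === undefined || value.trim() === "";
  });
}

const REQUIRED = [
  "OPENAI_API_KEY",
  "REPLICATE_API_TOKEN",
  "SUPABASE_URL",
] as const;

// Throw one error that lists every missing variable at once.
function assertEnv(env: Env): void {
  const missing = missingEnvVars(REQUIRED, env);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
}

// Usage on a Node server: call assertEnv(process.env) once at startup so a
// misconfigured deployment fails immediately instead of at the first request.
```

Listing every missing name in a single error avoids the fix-one-redeploy-find-the-next loop.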

What's next for WigglyTales

I want to integrate ElevenLabs so books can be read aloud with real-time word highlighting, turning the app into a literacy tool. I plan to support custom image generation models to lower costs and improve speed. More image model integrations (I removed two during this build for stability) will come back in. I'd love to add birthday card generation and build in an API connection for printing and shipping physical books and cards, because there’s nothing better than holding your favorite story in hardcover form. I would also like to go through the app more with friends and family and get feedback on how to make it even easier to use.
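The word-highlighting idea comes down to mapping the audio playback time to a word. Here is a minimal sketch that assumes per-word start and end times are available from whatever speech service is used; the `WordTiming` shape and the `activeWordIndex` function are hypothetical and do not reflect any specific API.

```typescript
// Hypothetical sketch: the timing data shape is an assumption.
interface WordTiming {
  word: string;
  startSec: number;
  endSec: number;
}

// Return the index of the word being spoken at `currentSec`, or -1 when the
// playback position falls in a pause or outside the narration.
// `timings` must be sorted by startSec.
function activeWordIndex(
  timings: readonly WordTiming[],
  currentSec: number,
): number {
  let lo = 0;
  let hi = timings.length - 1;
  let found = -1;
  // Binary search for the last word that starts at or before currentSec.
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (timings[mid].startSec <= currentSec) {
      found = mid;
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  if (found === -1) return -1;
  return currentSec < timings[found].endSec ? found : -1;
}
```

In a browser this could be called from an audio element's `timeupdate` handler with `audio.currentTime`, toggling a highlight class on the matching word.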

Built With

- bolt

- claude

- cursor

- gpt

- html2pdf

- ionos

- lottie

- netlify

- nextjs

- react-konva

- tailwind
