Inspiration

The idea behind this project is to help people find belongings that they left somewhere in the house but can't locate when they need them.

What it does

*We couldn't upload the videos to YouTube due to some technical issues, so please watch them here:* https://drive.google.com/open?id=1Q0Tu1h6lxvj1LuG1htZBOEJTYInE8-oc https://drive.google.com/open?id=126vgxcdv3GKA5tI0b6FrNBrwy44hsH78

To save you time searching for these items, we've developed a system where you simply ask Alexa where an object is and get its precise location, be it under a pile of clothes on your bed or inside a cupboard. Alexa comes to the rescue! This is especially useful when you're running late and can't find your mobile phone, wallet, or some other item you need right away. The Raspberry Pi Camera keeps track of these small objects (specified by the user) relative to bigger things in the room such as a table, chair, or bed. It can also help you make sure your possessions are where they should be!

How I built it

Setting up the system

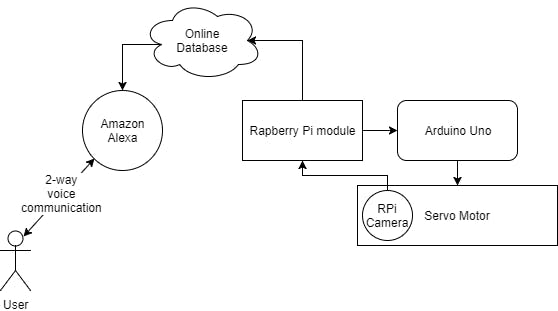

Flowchart depicting how the system works

The Raspberry Pi (RPi) module is connected to an RPi camera, an Arduino Uno, and, via the Internet, a server machine. The RPi continuously streams the video recorded by the RPi camera to the server, which tracks the positions of the smaller objects that have been marked as important and saves their last known locations in an online database. The Arduino Uno is connected to the RPi to control a servo motor that adjusts the orientation of the camera, so that the maximum area is covered and all objects are recorded under the best possible conditions. The camera is mounted on the motor.
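As a rough illustration of how a "last known location" could be derived on the server, here is a minimal sketch. It is not the project's actual code: the function names, the `ANCHOR_LABELS` set, the `(x1, y1, x2, y2)` box format, and the plain dictionary standing in for the online database are all assumptions made for the example. It describes each tracked item by the nearest large object in the same frame.

```python
import math

# Assumed set of "bigger things" used as reference points (COCO class names).
ANCHOR_LABELS = {"bed", "chair", "dining table"}


def center(box):
    """Return the centre (x, y) of a box given as (x1, y1, x2, y2)."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)


def nearest_anchor(item_box, anchors):
    """Return the label of the anchor whose centre is closest to the item, or None."""
    if not anchors:
        return None
    cx, cy = center(item_box)

    def distance(anchor):
        ax, ay = center(anchor[1])
        return math.hypot(ax - cx, ay - cy)

    return min(anchors, key=distance)[0]


def update_last_known(detections, tracked_items, store):
    """Update `store` (a dict standing in for the database) from one frame.

    detections: list of (label, box) pairs produced by the detector.
    tracked_items: labels the user has marked as important.
    """
    anchors = [(label, box) for label, box in detections if label in ANCHOR_LABELS]
    for label, box in detections:
        if label in tracked_items:
            place = nearest_anchor(box, anchors)
            if place is not None:
                store[label] = "near the " + place
    return store
```

For example, a frame containing a bed, a chair, and a cell phone lying on the bed would leave `store["cell phone"]` set to `"near the bed"`.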

YOLO, running on the Darknet framework and trained on the COCO dataset, is used for object recognition. It can be set up on any (x86/x64) machine by following the amazing tutorial by Joseph Redmon at https://pjreddie.com/darknet/yolo/.

If you need to train the model on your own objects, keep following the code; we will try to add a well-documented version of it very soon!

Launch the Where's my stuff skill on your Alexa device with the following command:

"Alexa, Open where's my stuff."

You can then simply ask about the whereabouts of your stuff. Alexa connects to our database and answers your question with the item's last known location.
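The lookup step can be sketched as follows. This is a hedged illustration, not the skill's real handler: `answer_where_is`, `build_alexa_response`, and the `last_known` dictionary (standing in for the online database) are hypothetical names. The returned dictionary follows the general shape of an Alexa custom skill JSON response with plain-text speech.

```python
def build_alexa_response(speech_text, end_session=True):
    """Wrap plain text in the JSON structure an Alexa custom skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": speech_text},
            "shouldEndSession": end_session,
        },
    }


def answer_where_is(item, last_known):
    """Build the spoken answer for an item from a dict of last known locations."""
    place = last_known.get(item.lower())
    if place is None:
        text = f"Sorry, I have not seen your {item} yet."
    else:
        text = f"Your {item} was last seen {place}."
    return build_alexa_response(text)
```

With `last_known = {"wallet": "near the bed"}`, asking about the wallet yields the speech text "Your wallet was last seen near the bed."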

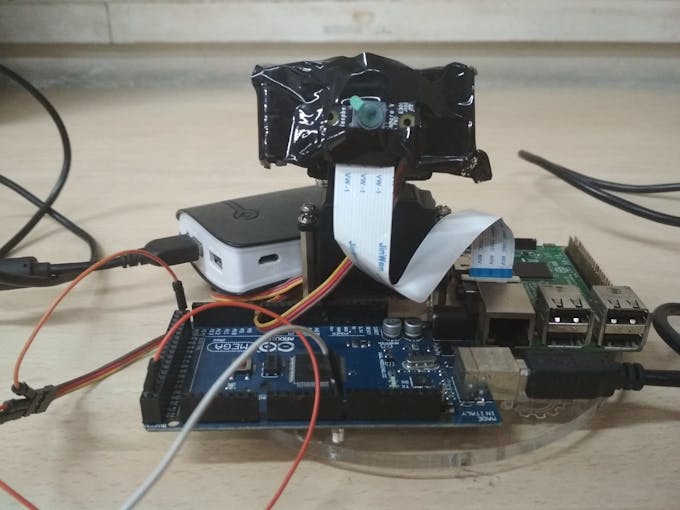

The final hardware module

Object Tracking

Once an object has been recognized in an image, our system saves its whereabouts in real time, and from then on it is only a matter of keeping track of its movements!

Simply put, locating an object in successive frames of a video is called tracking.
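One simple way to connect detections across successive frames is to pair each box in the previous frame with the box in the current frame that overlaps it most. The sketch below is only an illustration of that idea and is not taken from the project's code; `iou`, `match_boxes`, the `(x1, y1, x2, y2)` box format, and the 0.3 threshold are assumptions chosen for the example.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_boxes(prev_boxes, curr_boxes, threshold=0.3):
    """Greedily pair boxes across two frames, best overlap first.

    Returns a dict mapping an index in prev_boxes to an index in curr_boxes.
    Boxes whose best overlap is below `threshold` stay unmatched.
    """
    candidates = sorted(
        (
            (iou(p, c), i, j)
            for i, p in enumerate(prev_boxes)
            for j, c in enumerate(curr_boxes)
        ),
        reverse=True,
    )
    matches = {}
    used_curr = set()
    for score, i, j in candidates:
        if score < threshold:
            break  # remaining candidates overlap even less
        if i in matches or j in used_curr:
            continue  # each box takes part in at most one pair
        matches[i] = j
        used_curr.add(j)
    return matches
```

An unmatched box in the current frame can be treated as a newly appeared object, and an unmatched box from the previous frame as one that has left the view or is hidden.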

Many different approaches can be used for object tracking. These include:

- Dense optical flow: These algorithms help estimate the motion vector of every pixel in a video frame.
- Sparse optical flow: These algorithms, like the Kanade-Lucas-Tomasi (KLT) feature tracker, track the location of a few feature points in an image.
- Kalman filtering: A very popular signal processing algorithm used to predict the location of a moving object based on prior motion information. One of the early applications of this algorithm was missile guidance! It has also been noted that “the on-board computer that guided the descent of the Apollo 11 lunar module to the moon had a Kalman filter”.
- Meanshift and Camshift: These are algorithms for locating the maxima of a density function. They are also used for tracking.
- Single object trackers: In this class of trackers, the first frame is marked using a rectangle to indicate the location of the object we want to track. The object is then tracked in subsequent frames using the tracking algorithm. In most real-life applications, these trackers are used in conjunction with an object detector.
- Multiple object track finding algorithms: When we have a fast object detector, it makes sense to detect multiple objects in each frame and then run a track finding algorithm that identifies which rectangle in one frame corresponds to a rectangle in the next frame.

For this project, we stick to the KCF (Kernelized Correlation Filters) tracker, which is the entry at index 2 that the code selects. Other algorithms can easily be swapped in by changing these lines in the file real_time.py:

    tracker_types = ['BOOSTING', 'MIL', 'KCF', 'TLD', 'MEDIANFLOW', 'GOTURN']
    tracker_type = tracker_types[2]

We then use the RPi Camera's real-time feed along with YOLO's predicted bounding boxes to track the objects with ease! The rest of the well-commented code is included in the GitHub repository!
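Of the approaches listed above, Kalman filtering is compact enough to sketch in a few lines. The following is a minimal constant-velocity filter for a single coordinate, for example the x position of a bounding-box centre. It is an illustration only and is not taken from real_time.py; the class name, the noise parameters, and the initial covariance are assumptions picked for the example.

```python
class ConstantVelocityKalman1D:
    """Kalman filter for one coordinate with state [position, velocity]."""

    def __init__(self, position, process_var=1e-3, measurement_var=1.0):
        self.p = float(position)  # estimated position
        self.v = 0.0              # estimated velocity, unknown at the start
        # 2x2 covariance: fairly sure about position, very unsure about velocity
        self.P = [[1.0, 0.0], [0.0, 1000.0]]
        self.q = process_var      # assumed process noise added on each predict
        self.r = measurement_var  # assumed measurement noise of the detector

    def predict(self, dt=1.0):
        """Advance the state by dt frames and return the predicted position."""
        self.p += dt * self.v
        (a, b), (c, d) = self.P
        # P = F P F^T + Q with F = [[1, dt], [0, 1]] and Q = q * I
        self.P = [
            [a + dt * (b + c) + dt * dt * d + self.q, b + dt * d],
            [c + dt * d, d + self.q],
        ]
        return self.p

    def update(self, measured_position):
        """Correct the state with a measured position and return the new estimate."""
        (a, b), (c, d) = self.P
        residual = measured_position - self.p
        s = a + self.r            # innovation variance
        k0, k1 = a / s, c / s     # Kalman gain for position and velocity
        self.p += k0 * residual
        self.v += k1 * residual
        # P = (I - K H) P with H = [1, 0]
        self.P = [
            [(1 - k0) * a, (1 - k0) * b],
            [c - k1 * a, d - k1 * b],
        ]
        return self.p
```

A typical loop calls `predict()` once per frame and `update()` whenever the detector reports the object. If the detector misses a frame, the value returned by `predict()` gives a reasonable place to look next. Running one filter for x and another for y covers a box centre.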

Challenges I ran into

Working on hardware projects is always troublesome, so that was the most challenging part of this project.

Accomplishments that I'm proud of

Coming out of this hackathon with a super useful smart home product.

What I learned

Connecting Alexa to IoT based projects.

What's next for Where's my stuff??

Improvement in the ML model to recognize objects more efficiently.
