-

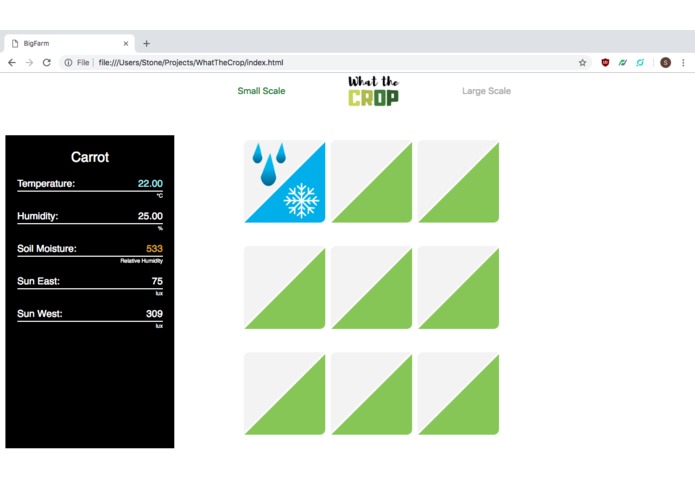

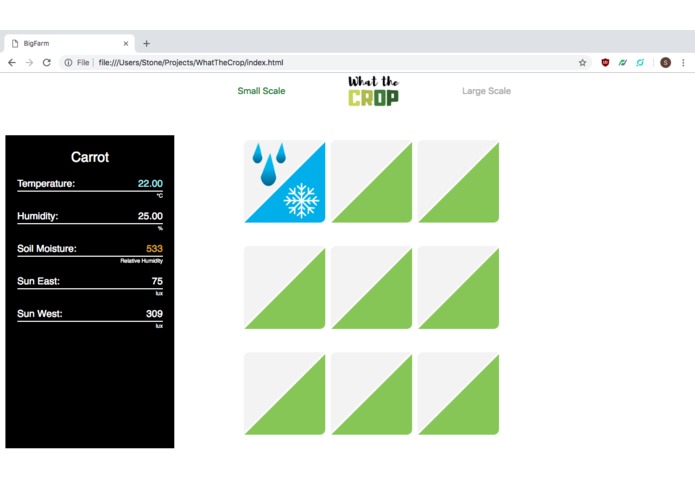

Grid layout with graphical notifications for temperature and moisture control

-

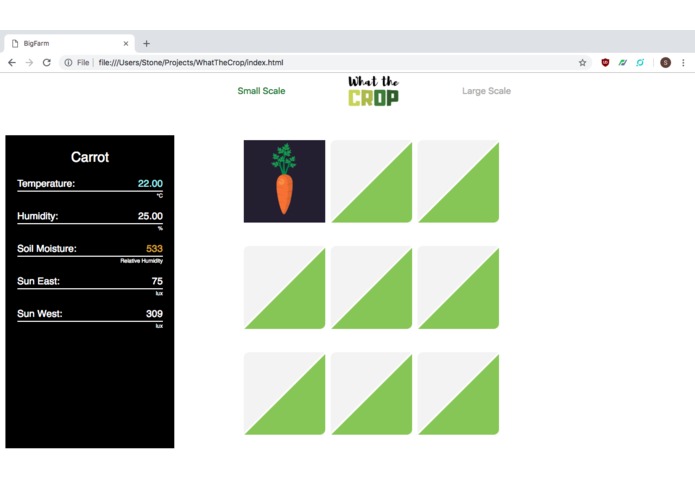

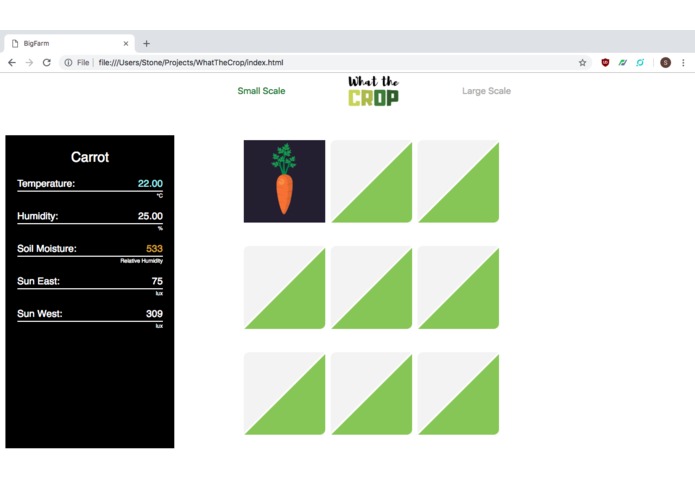

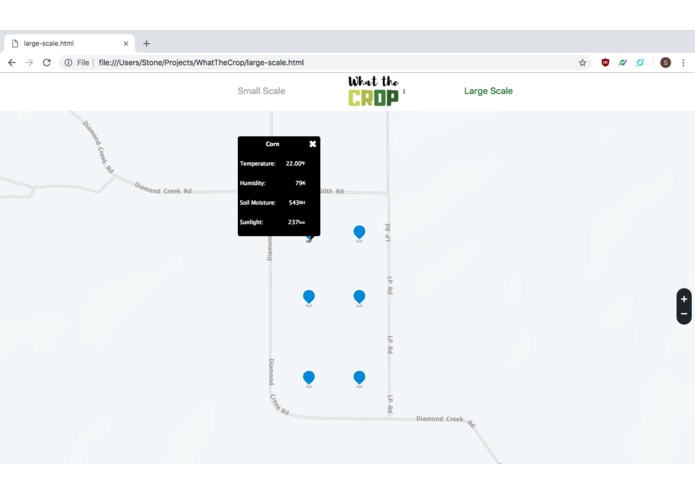

Shows the crop on hover, with a sensor information panel on the left

-

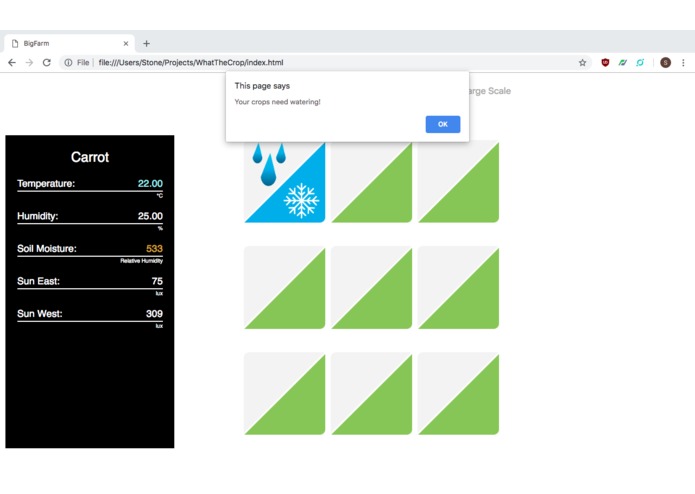

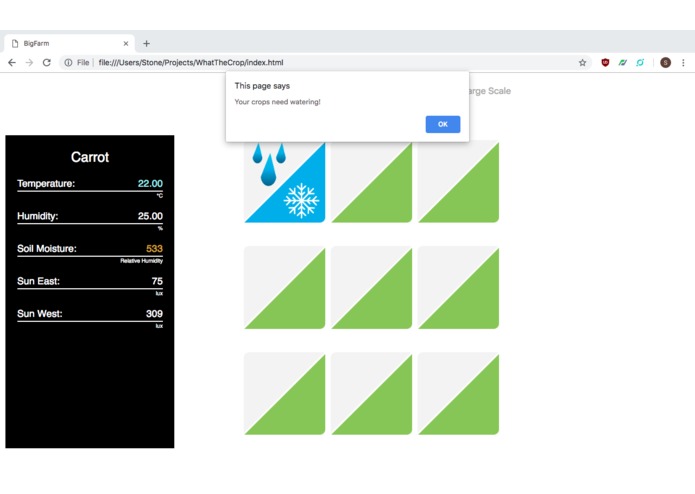

Notifications at a specified interval when action is required

-

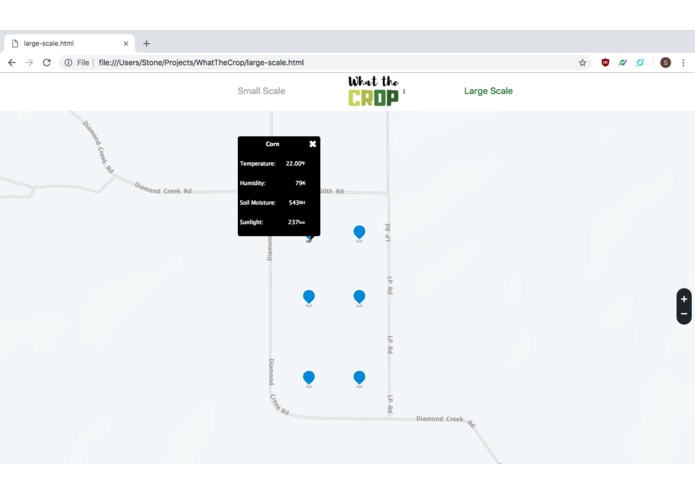

For larger farms, we used the HERE.com API to load a map with markers and then display sensor data at each node

-

Inspiration

We were excited to work together as a team from diverse backgrounds. The task ahead of us was a real-world problem, and we tackled it with a unique, easy-to-use solution.

What it does

WHAT THE CROP takes care of your garden, farm or plants for you autonomously and keeps everything at your fingertips.

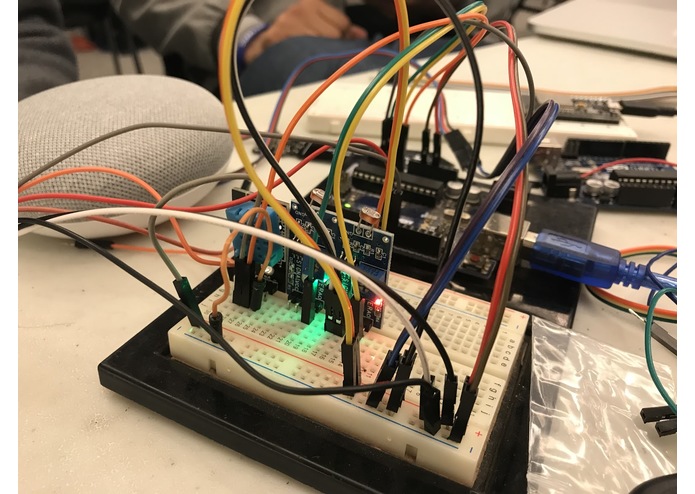

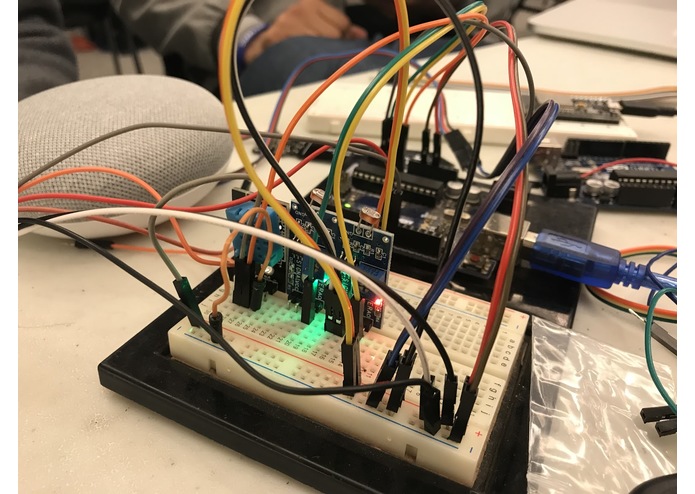

How we built it

We focused on giving the user an easy-to-use experience: a dynamic graphical user interface that continuously informs the user of updates to the various sensor parameters.
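As a rough illustration of how a reading can be turned into the kind of status an interface cell displays, here is a minimal Python sketch. It is not our actual front-end code: the parameter names, the threshold ranges and the `classify` / `cell_status` helpers are all hypothetical and chosen only for the example.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Range:
    """Inclusive range in which a reading needs no action."""
    low: float
    high: float


# Hypothetical ranges; real values would depend on the crop being grown.
THRESHOLDS: Dict[str, Range] = {
    "temperature_c": Range(15.0, 30.0),
    "soil_moisture_pct": Range(30.0, 70.0),
}


def classify(parameter: str, value: float) -> str:
    """Return 'low', 'ok' or 'high' for one reading of a known parameter."""
    limits = THRESHOLDS[parameter]
    if value < limits.low:
        return "low"
    if value > limits.high:
        return "high"
    return "ok"


def cell_status(readings: Dict[str, float]) -> Dict[str, str]:
    """Classify every reading that has a configured range; skip the rest."""
    return {
        name: classify(name, value)
        for name, value in readings.items()
        if name in THRESHOLDS
    }


if __name__ == "__main__":
    # Made-up readings for one cell of the grid.
    print(cell_status({"temperature_c": 20.0, "soil_moisture_pct": 10.0}))
```

A cell whose status is anything other than `ok` is the one that would raise a graphical notification.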

Challenges we ran into

The biggest roadblock was figuring out how to transfer sensor values from the hardware board to io.adafruit using protocols such as MQTT. On the software side, the biggest challenge was dynamically rendering the incoming data in the interface.
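To make the MQTT step more concrete, here is a minimal Python sketch of how sensor readings can be mapped to topic and payload pairs. It assumes Adafruit IO's `{username}/feeds/{feed_key}` topic layout with the value sent as plain text. The username, feed keys and readings are hypothetical, and the actual publish call through an MQTT client is left out, so this is not the firmware that ran on our board.

```python
from typing import Dict, List, Tuple


def feed_topic(username: str, feed_key: str) -> str:
    """Build the MQTT topic for one feed (assumed Adafruit IO layout)."""
    return f"{username}/feeds/{feed_key}"


def feed_payload(value: float) -> str:
    """Format a reading as plain text with one decimal place."""
    return f"{value:.1f}"


def build_messages(username: str, readings: Dict[str, float]) -> List[Tuple[str, str]]:
    """Turn a dict of feed_key -> reading into (topic, payload) pairs."""
    return [
        (feed_topic(username, key), feed_payload(value))
        for key, value in readings.items()
    ]


if __name__ == "__main__":
    # Hypothetical account name and readings.
    for topic, payload in build_messages(
        "example-user", {"temperature": 22.46, "soil-moisture": 41.0}
    ):
        # A real device would hand each pair to its MQTT client here.
        print(topic, payload)
```

Keeping message building separate from the network call makes it possible to check topics and payloads without any hardware attached.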

Accomplishments that we're proud of

We successfully integrated the HERE Maps and Google Assistant APIs to enhance the aesthetics of the overall application and keep the experience simple and neat.

What we learned

We were able to divide the tasks equally and work together to create this cohesive application.

What's next for What the Crop!

Our next aim is to integrate everything we have achieved into a small rover that moves autonomously through various environments using simultaneous localization and mapping (SLAM). On the software side, we want to add a database to store the data and build sensor history charts, so that decisions can be based on sensor parameters versus harvest yield. We also want to add more customization so users can choose crops, sensor parameter alerts, and farm or garden size. Finally, we want to make it easier for farmers to look after their farms and make decisions through the Google Home Mini.

Built With

- a-to-b-cable

- ajax

- api

- arduino

- breadboard

- buzzer

- css3

- dialogflow

- esp32

- esp8266

- google-cloud

- google-home-mini

- here-map

- html5

- http

- humidity-sensor

- javascript

- jquery

- json

- jumpercables

- leds

- mosquitto-mqtt

- mqtt

- soil-moisture-sensor

- sunlight-sensor

- temperature-sensor

- uno

- wifi-module
