Inspiration

In today's digital age, we spend countless hours online, often encountering content that can be harmful or toxic. Given the vast amount of information, it's challenging to filter out the bad content efficiently, especially for children and elderly people. Our goal is to provide a solution that helps users navigate the internet safely by detecting and managing harmful content.

What it does

Our solution is a browser extension that detects harmful content and either highlights or blocks it. It acts as a protective shield, letting users surf the web with full control over their exposure to the negative side of the internet.

How we built it

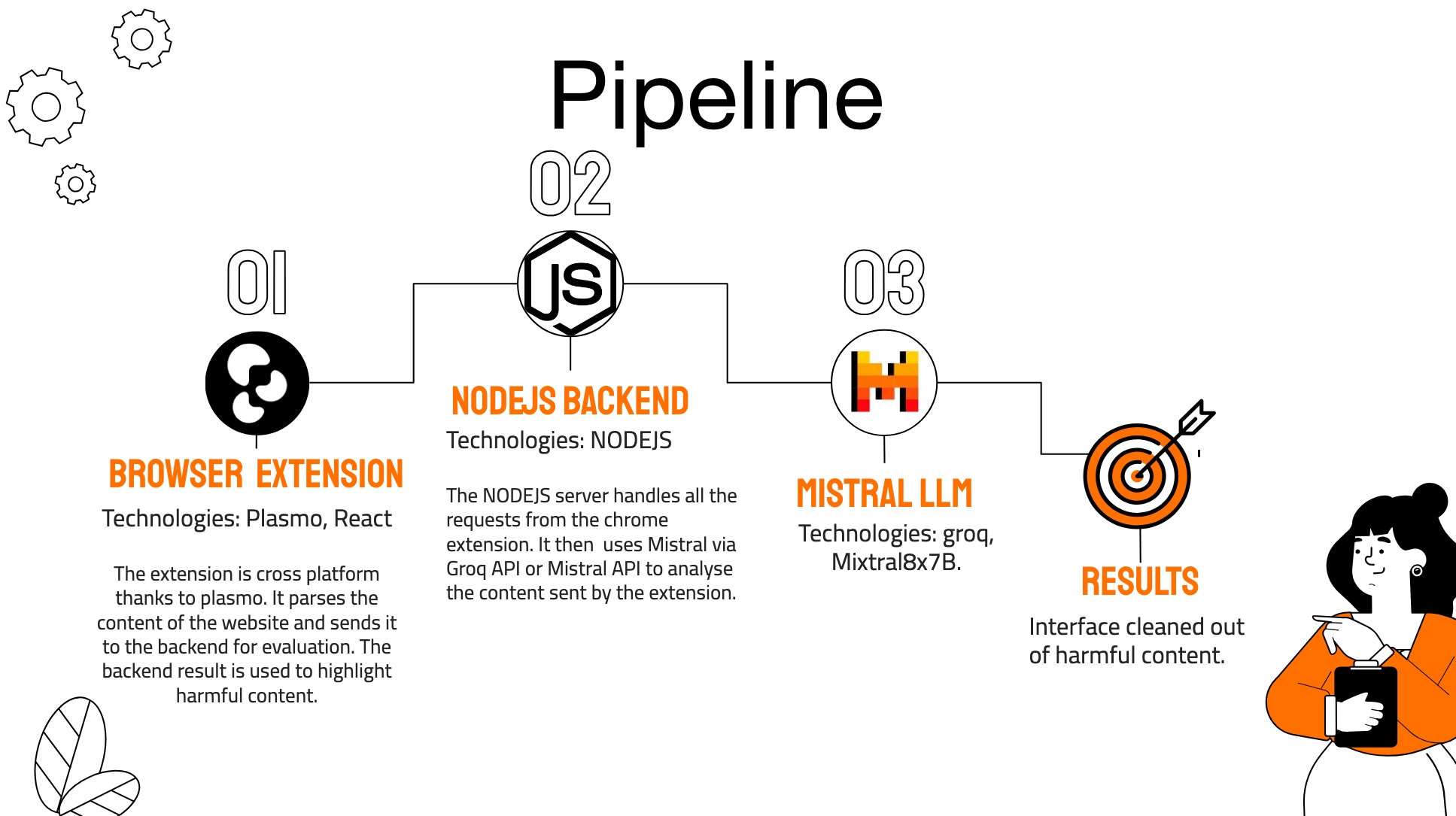

We built the extension on Mistral's open-source Mixtral 8x7B model, accessed through either the Groq API or the Mistral API. The model's natural language processing capabilities enable it to identify harmful content effectively. We integrated the model into the extension to create a seamless user experience.

To try out the project yourself, see the README in the project's repository: WebSanity GitHub Repository README.

The full pipeline is shown in the image at the top of this section.

Challenges we ran into

One significant challenge was the "needle in the haystack" problem. Harmful content can be hidden within large texts, making it difficult to detect. To address this, we had to split the text into smaller, more manageable parts, allowing the language model to classify the content more confidently. However, this approach increased the number of API calls, quickly hitting the rate limits. We had to find a balance between text size and the number of API calls to maintain efficiency.
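The splitting step can be illustrated with a minimal sketch. This is not the project's actual code: the function name `split_into_chunks`, the `max_chars` parameter, and the sentence-based packing strategy are all assumptions made for illustration. The idea is to pack whole sentences into chunks up to a size limit, so that each chunk is small enough to classify confidently while the number of chunks (and therefore API calls) stays as low as the limit allows.

```python
import re


def split_into_chunks(text: str, max_chars: int = 500) -> list[str]:
    """Greedily pack whole sentences into chunks of at most max_chars characters.

    Hypothetical helper for illustration; each returned chunk would be sent
    to the language model as one classification request.
    """
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}" if current else sentence
        if len(candidate) <= max_chars:
            current = candidate
            continue
        # The sentence does not fit: close the current chunk first.
        if current:
            chunks.append(current)
            current = ""
        # A single sentence longer than the limit is cut into fixed-size pieces.
        while len(sentence) > max_chars:
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        current = sentence
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    page_text = "First sentence. Second sentence! Third one? " * 20
    for limit in (100, 300, 900):
        # Larger chunks mean fewer API calls but a bigger haystack per call.
        print(limit, len(split_into_chunks(page_text, max_chars=limit)))
```

Raising `max_chars` lowers the number of requests, which helps with rate limits, but makes each chunk a larger haystack. Under these assumptions, the right value would have to be found by experiment.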

Accomplishments that we're proud of

We are proud to have developed a system that accurately detects and classifies harmful content. Our extension provides a reliable tool for users to protect themselves from the negativity found online.

What we learned

While developing the solution, we realised that there is actually much more harmful content than we initially expected. We also got to learn about the Mixtral 8x7B model and the Groq platform, which offers very fast inference.

What's next for the project

Next, we plan to launch the extension on the Chrome Web Store as a purely frontend solution. Users will need to input their API keys to use the service. Additionally, we will give users the opportunity to choose from a range of LLM models, each varying in speed, price, and intelligence. This approach will allow us to reach a broader audience and help more people navigate the internet safely.
