-

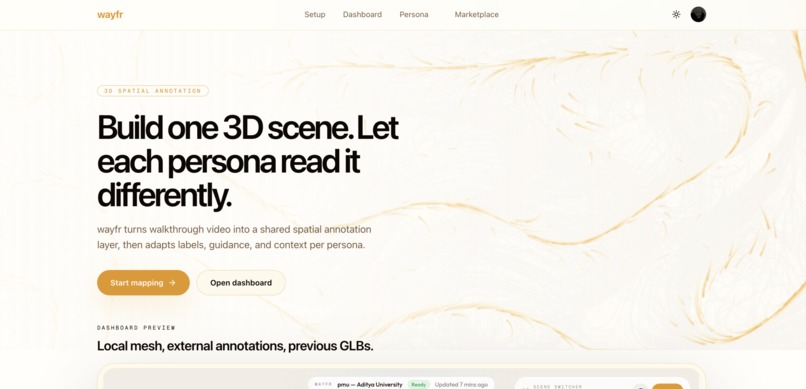

Landing Page

-

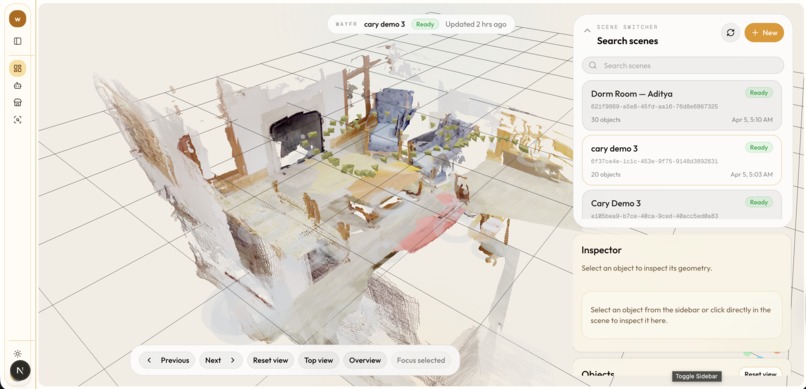

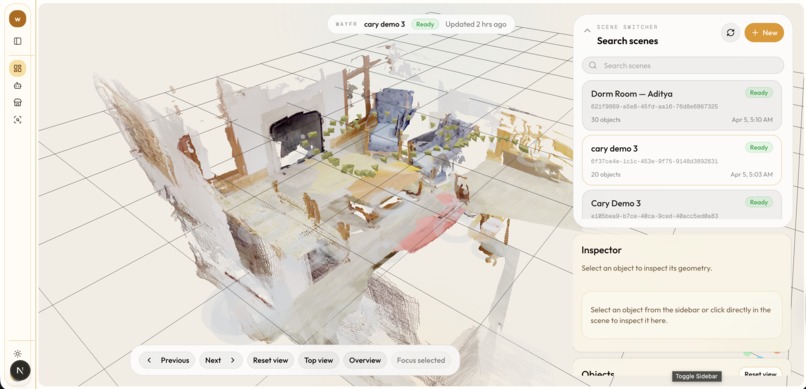

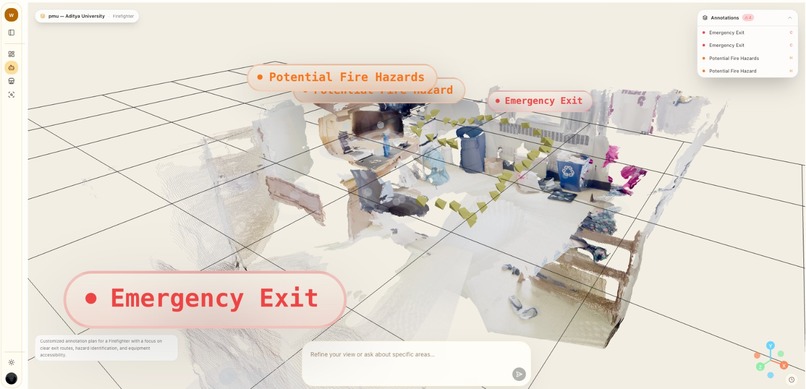

Dashboard - 3D Visualizer Page

-

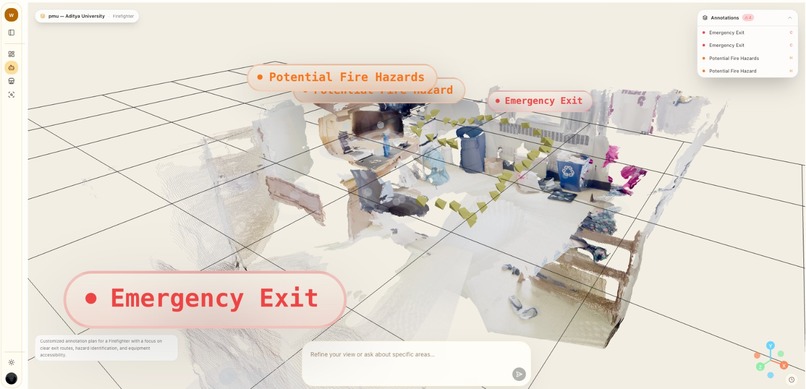

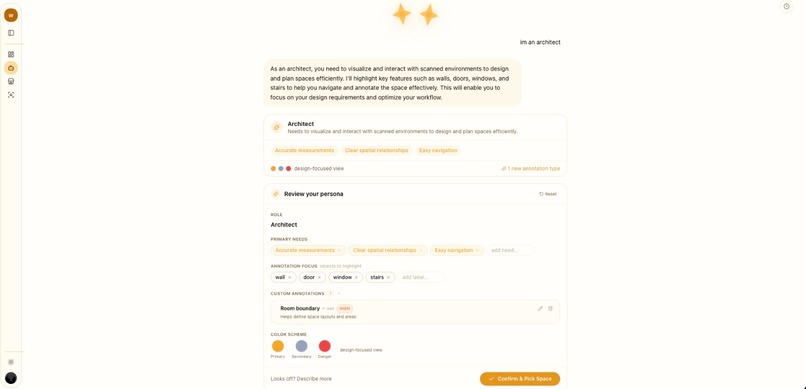

Persona - Custom Annotation View

-

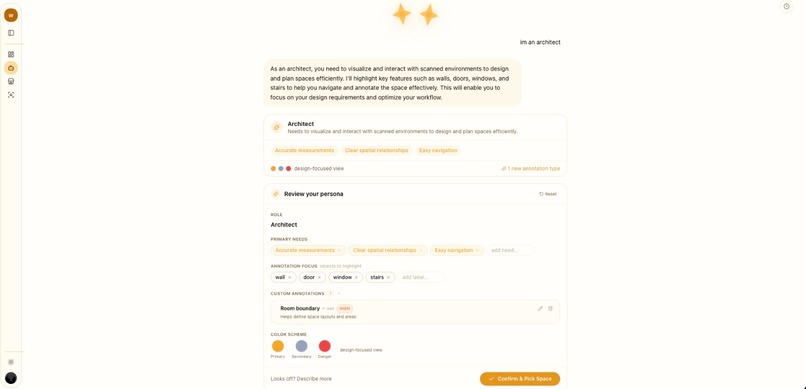

Persona - Agentic Chat Page

-

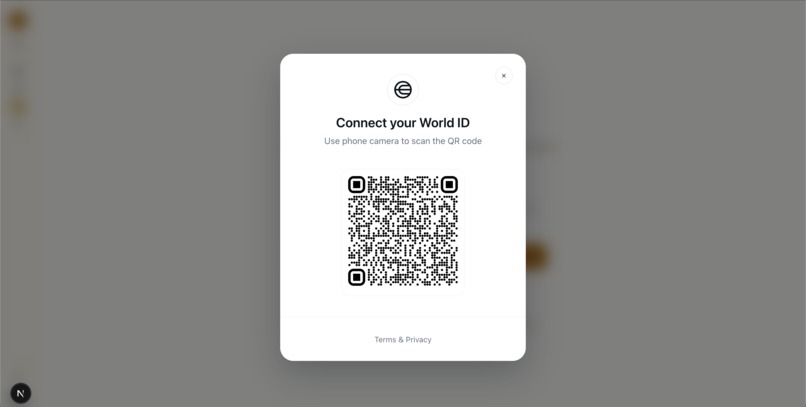

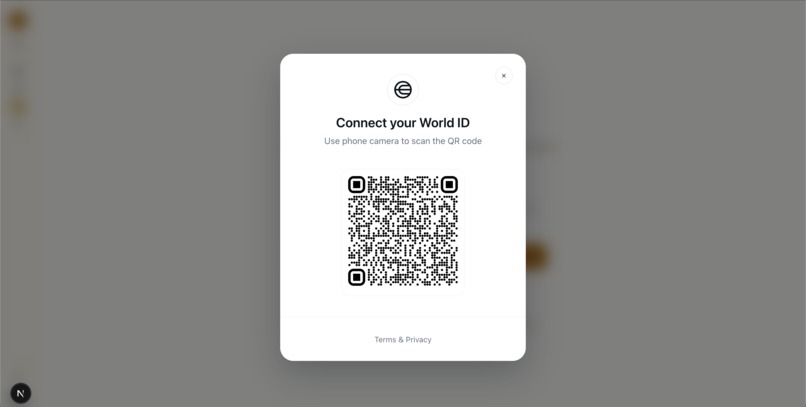

World ID - QR Code

-

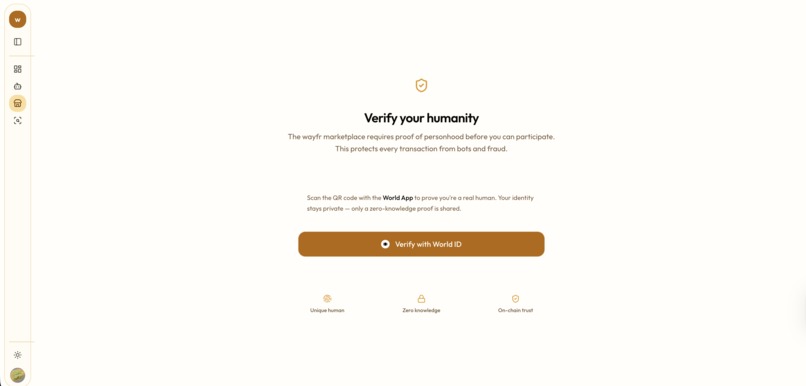

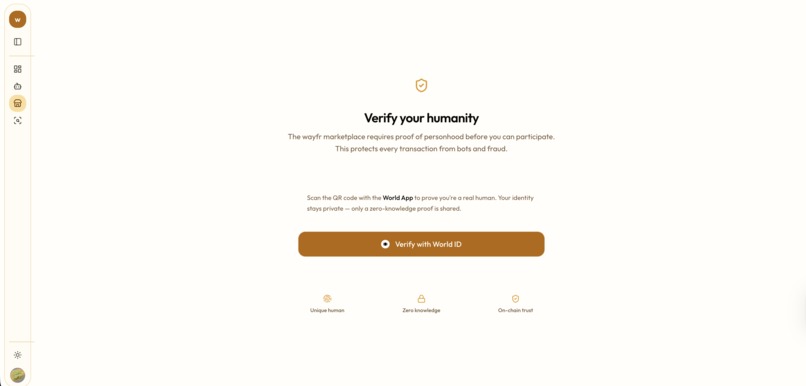

World ID - Human Verification

-

Phone Recording Redirect

-

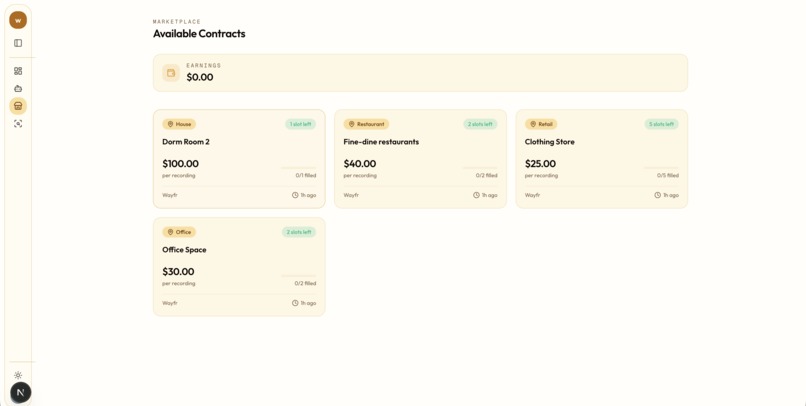

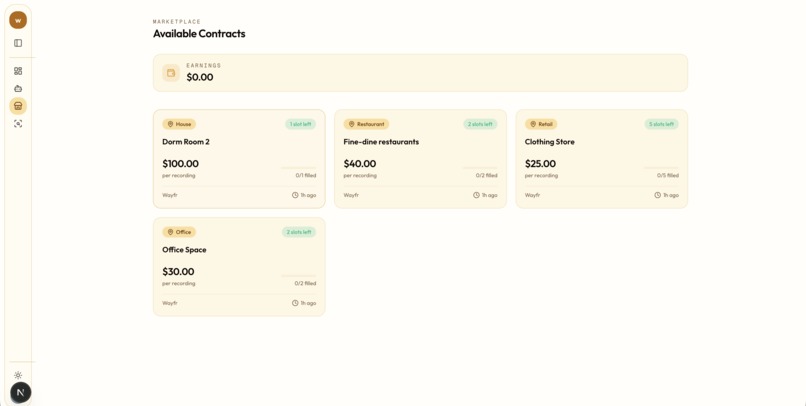

Marketplace - Customer Contracts

-

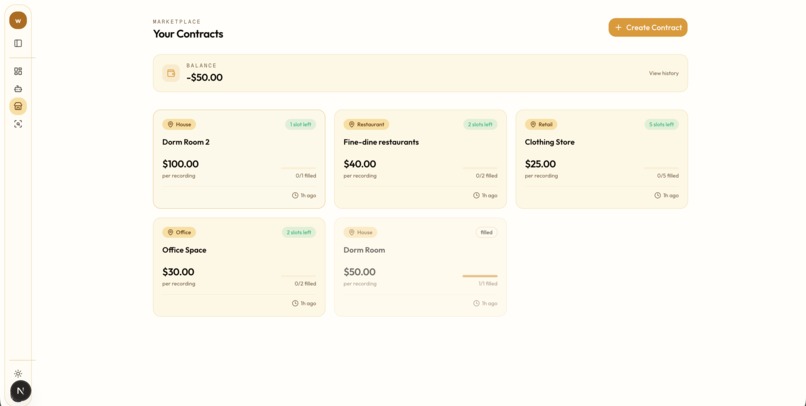

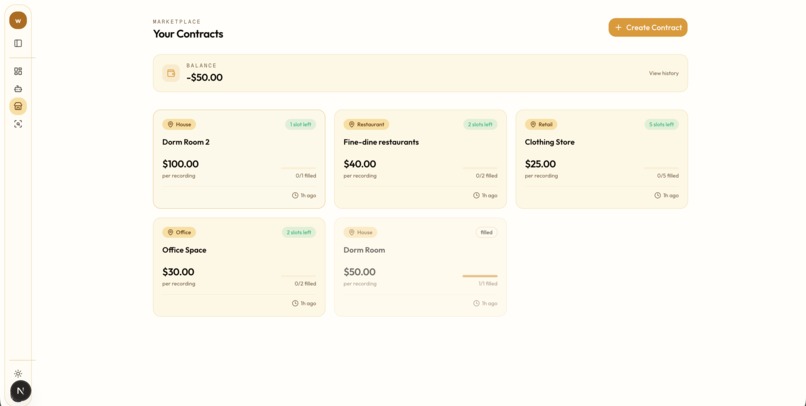

Marketplace - Business Contracts

-

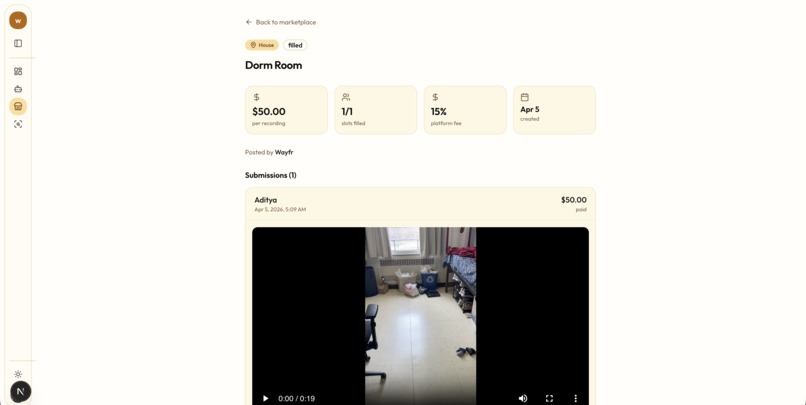

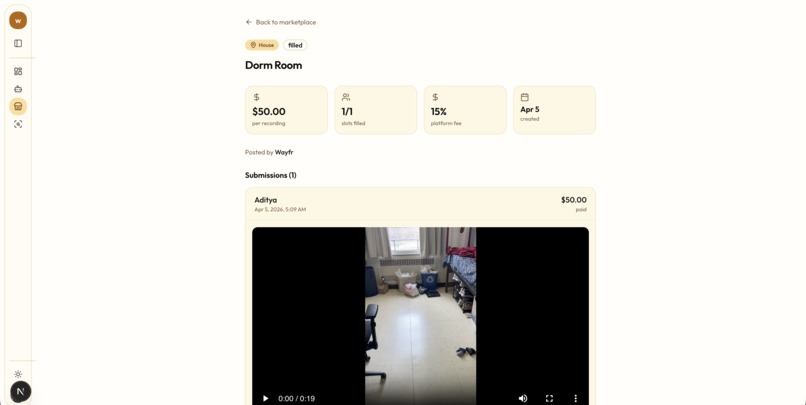

Marketplace - Fulfilled Contract

-

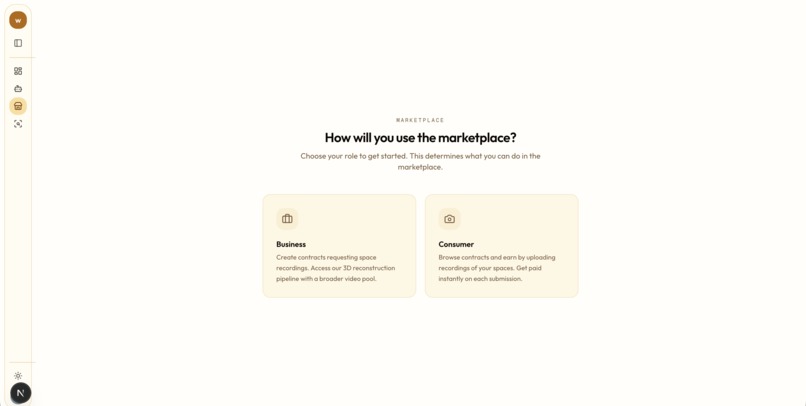

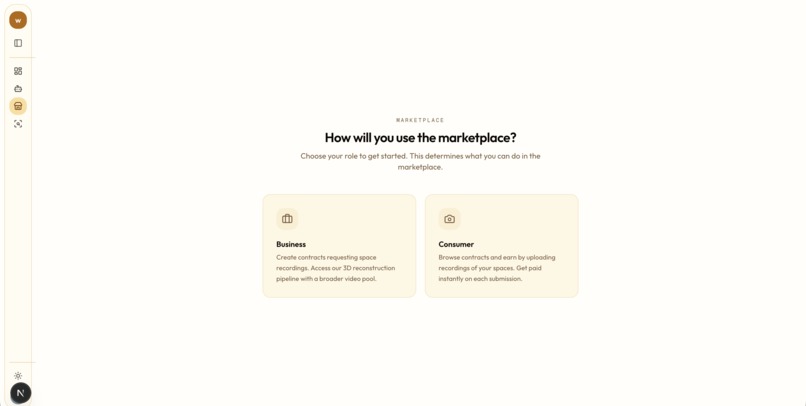

Marketplace - Landing Page

Inspiration

We were inspired by the massive data gap in the robotics and simulation industries. While high-end LiDAR setups exist, they are expensive and difficult to scale. We wanted to allow anyone with a smartphone to contribute to a global library of 3D spatial data.

What it does

Wayfr is a two-sided marketplace and machine learning pipeline for turning real spaces into structured 3D data. Contributors accept small recording contracts and capture walkthrough videos of their environments. Our backend then processes that footage into high-fidelity 3D reconstructions, automatically locating, labeling, and annotating important objects in the scene. On top of that, we built Personas, a layer that can reinterpret the same space for different users, changing which objects matter most and how they are described. For businesses like robotics labs and AI teams, that means they do not just get a raw 3D scan; they get an explorable, searchable, and customizable spatial dataset.

How we built it

Wayfr is built on a Next.js and FastAPI stack, with GPU-heavy processing running on Modal and storage handled through Supabase. Our core ML pipeline has three stages: MapAnything reconstructs a 3D scene from a walkthrough video, Grounding DINO and GSAM2 perform object detection and segmentation across the video, and a bridging step lifts those 2D detections into 3D world coordinates so objects are anchored inside the final scene.
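The bridging step can be illustrated with a standard pinhole back-projection. This is a minimal sketch, not our actual implementation: it assumes each frame comes with camera intrinsics, a depth value at the detection's center, and a camera-to-world pose. The names `Intrinsics`, `box_center`, and `lift_to_world` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole camera parameters in pixels (assumed to be known per frame)."""
    fx: float
    fy: float
    cx: float
    cy: float


def box_center(box):
    """Center pixel of a 2D detection box given as (x0, y0, x1, y1)."""
    x0, y0, x1, y1 = box
    return (x0 + x1) / 2.0, (y0 + y1) / 2.0


def lift_to_world(u, v, depth, intr, cam_to_world):
    """Back-project pixel (u, v) at the given depth into world coordinates.

    cam_to_world is a 4x4 camera-to-world matrix stored as nested lists.
    """
    # Pinhole back-projection into the camera frame.
    x = (u - intr.cx) / intr.fx * depth
    y = (v - intr.cy) / intr.fy * depth
    p_cam = (x, y, depth, 1.0)
    # Apply the rigid transform to move the point into the world frame.
    return tuple(
        sum(cam_to_world[r][c] * p_cam[c] for c in range(4)) for r in range(3)
    )


# Hypothetical frame: camera shifted 1 unit along world X, no rotation.
intr = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
pose = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
u, v = box_center((300, 220, 340, 260))
point = lift_to_world(u, v, 2.0, intr, pose)  # (1.0, 0.0, 2.0)
```

Using only the box center keeps the sketch short. A more robust anchor would likely aggregate depth over the segmentation mask and across multiple frames.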

Around that core pipeline, we built the full product workflow: a contributor capture flow, World ID verification to confirm users are real humans, a marketplace where businesses can post recording contracts and contributors can submit footage, and a Three.js powered 3D viewer for exploring completed scenes.

We also added Personas that let the same reconstructed environment be re-labeled and prioritized differently depending on the user or use case, making the output feel more like spatial intelligence than a static scan. We used llama4:latest hosted by Purdue GenAI Studio to power our Persona agent.
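To make the idea concrete, here is a rough sketch of how a Persona could relabel and reprioritize the same set of detected objects. It is a static, rule-based stand-in for illustration only: in Wayfr the Persona agent is LLM-driven, and the `SceneObject`, `Persona`, and `apply_persona` names and the weighting scheme are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    label: str          # label produced by the detection stage
    confidence: float   # detection confidence in [0, 1]


@dataclass
class Persona:
    name: str
    weights: dict = field(default_factory=dict)       # label -> priority weight
    descriptions: dict = field(default_factory=dict)  # label -> persona-specific text


def apply_persona(objects, persona, default_weight=0.1):
    """Return (description, score) pairs, highest persona priority first."""
    ranked = []
    for obj in objects:
        # Labels the persona does not mention fall back to a low default weight.
        weight = persona.weights.get(obj.label, default_weight)
        text = persona.descriptions.get(obj.label, obj.label)
        ranked.append((text, weight * obj.confidence))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Hypothetical scene and persona.
scene = [SceneObject("chair", 0.9), SceneObject("forklift", 0.8)]
safety = Persona(
    name="safety inspector",
    weights={"forklift": 1.0},
    descriptions={"forklift": "moving vehicle hazard"},
)
ranked = apply_persona(scene, safety)
```

With this persona, the forklift ranks above the chair despite its lower detection confidence, and it appears under the persona's own description.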

Challenges we ran into

A major challenge was designing how users interact with the data. We had to map messy, multi-stage ML outputs into a clean interface where users can filter for specific objects, like “industrial hazards” or “navigational obstacles.” Bridging raw metadata into something usable and intuitive took a lot of iteration. Scope was also a constant challenge. With a vision this broad, it was easy to get pulled into bigger ideas like Ray-Ban integrations early, but we had to stay focused on building a stable pipeline from video upload to annotated 3D mesh.
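As an illustration of the kind of filter the interface exposes, the sketch below maps a user-facing category to a set of detector labels and keeps only confident matches. The category names come from the examples above. The label sets, the record shape, and the `filter_objects` helper are hypothetical.

```python
# Hypothetical mapping from user-facing categories to detector labels.
CATEGORY_LABELS = {
    "industrial hazards": {"forklift", "exposed wiring"},
    "navigational obstacles": {"chair", "pallet", "forklift"},
}


def filter_objects(objects, category, min_confidence=0.5):
    """Keep objects whose label belongs to the category and is confident enough."""
    labels = CATEGORY_LABELS.get(category, set())
    return [
        obj
        for obj in objects
        if obj["label"] in labels and obj["confidence"] >= min_confidence
    ]


# Hypothetical flattened pipeline output: one record per anchored object.
objects = [
    {"label": "forklift", "confidence": 0.82},
    {"label": "chair", "confidence": 0.91},
    {"label": "pallet", "confidence": 0.35},
]
hazards = filter_objects(objects, "industrial hazards")
obstacles = filter_objects(objects, "navigational obstacles")
```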

Accomplishments that we're proud of

We’re proud that this became a full product, not just a demo. A user can start with a phone capture or desktop upload, and the system turns that into an explorable 3D scene with anchored objects and supporting evidence frames. On the frontend, users can search, filter, and inspect objects in the scene, and clearly see what the system recognized and where it exists in space. We also built personalization into the experience, so the same scene can be interpreted differently depending on the user’s needs. Beyond that, we expanded into a full platform with authentication, World ID verification, marketplace flows, and a pipeline loop that feeds submitted data back into the system.

What we learned

We learned that building a compelling spatial AI product is about more than just reconstruction. The real challenge is making the scene explainable, searchable, and useful, which is why features like object inspection and evidence frames became just as important as the ML pipeline. We also learned that reducing friction and building trust are what make advanced systems feel real, and cross-device phone capture and identity verification were key to both.

What's next for Wayfr

To make our data more accessible, we’re building a developer API so robotics labs and AI researchers can directly pull annotated 3D environments into their simulation engines. We’re also expanding beyond smartphones by integrating with the Meta Ray-Ban SDK for hands-free capture, where users can map environments just by walking through them. Finally, we’re moving toward SAM3D-based segmentation, where instead of a single static mesh, each object becomes its own GLB file with physics properties. This makes scenes fully interactive, allowing users to modify layouts or run simulations directly on top of the data.

Built With

- clerk

- css

- css3

- dino

- fastapi

- html

- javascript

- machine-learning

- modal

- nextjs

- postgresql

- python

- react

- sam2

- supabase

- typescript

- world-id
