-

Our first webcam picture taken purely with our code.

-

Our second webcam picture. This time, we were able to capture a clear image of a fruit snacks wrapper for our ML model.

-

Our workspace. Very chaotic!

-

Our webcam. This will be attached to the upper exterior of the box.

-

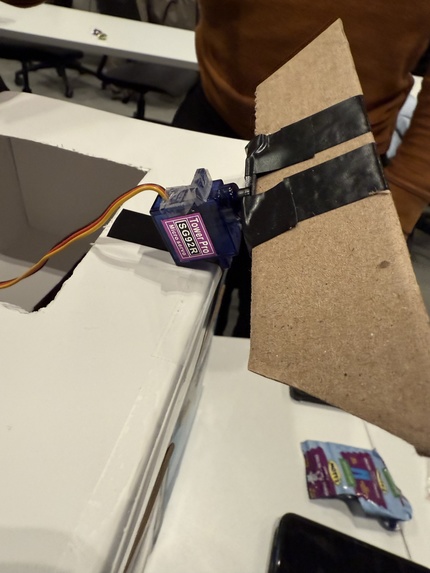

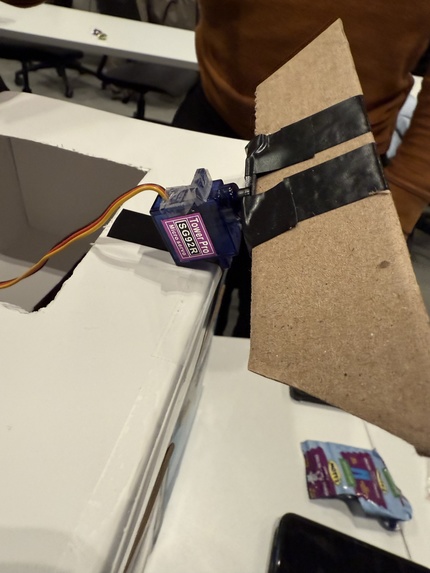

The moving platform used to sort the given piece of waste. It's attached to a motor that rotates depending on the result of the ML model.

-

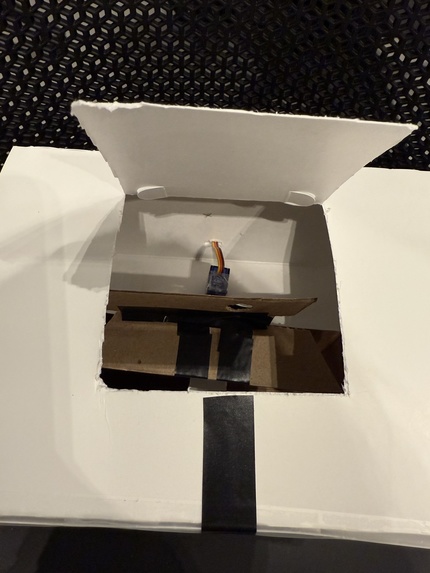

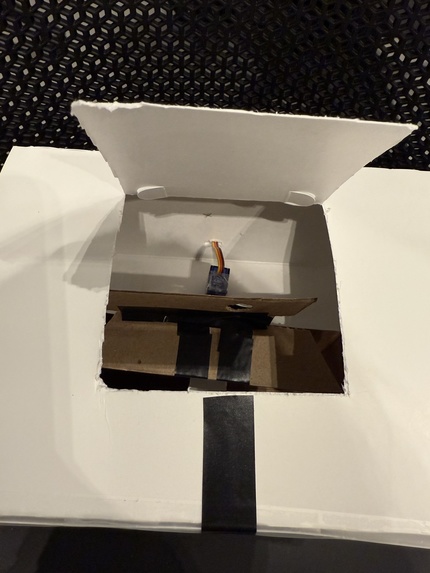

The moving platform inside of the box. Within the box there are two holes representing the trash and recycling bins.

-

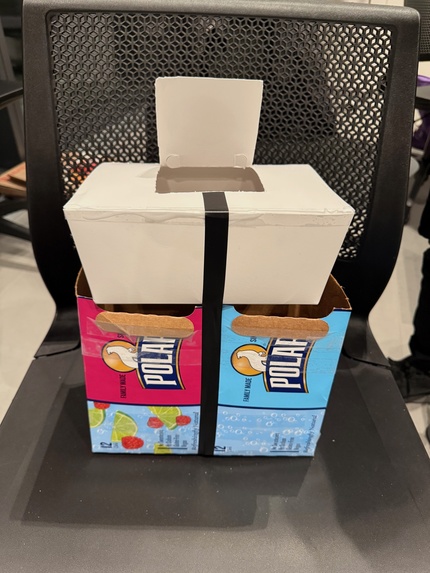

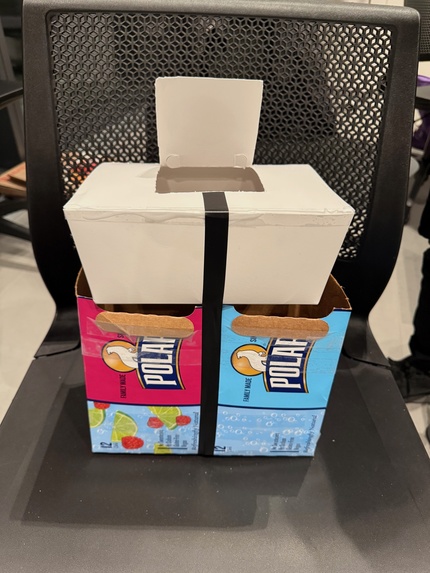

Here is the box! The flap on top acts as a trap door once a picture of the piece of waste has been captured by the webcam.

-

Our final implementation of our trash bin with the camera on top.

Inspiration

When throwing away our trash, we noticed that many recyclables were being thrown away instead of recycled. This, combined with our general concern for the environment, helped shape our idea.

What it does

This public trash can takes in one piece of trash at a time, scans it with a webcam, sends the image to a machine learning model, and sorts the item into either the recycling bin or the trash bin according to the result. The model recognizes six categories: organic, plastic, metal, paper, glass, and food waste. If the item is classified as any of these categories, it is sorted into the recycling bin. Otherwise, it is sorted into the trash bin.
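The sorting rule described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the function name `choose_bin` and the exact label strings are hypothetical, and the real model's output format may differ.

```python
# Categories named in the write-up; any other label falls through to trash.
# The exact label strings are an assumption made for this sketch.
RECYCLABLE_CATEGORIES = {"organic", "plastic", "metal", "paper", "glass", "food waste"}


def choose_bin(predicted_label: str) -> str:
    """Return "recycling" if the label is one of the six categories, else "trash"."""
    label = predicted_label.strip().lower()
    return "recycling" if label in RECYCLABLE_CATEGORIES else "trash"
```

For example, `choose_bin("Plastic")` gives `"recycling"`, while an unrecognized label such as `"styrofoam"` gives `"trash"`.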

How we built it

We used several hardware components to build the physical parts of our trash can, including Arduino servo motors, drivers, IR break beam sensors, a webcam, and cardboard. The cardboard forms the body of the trash can, and the hardware components are installed around and within it. For classification, we trained a custom machine learning model with ImageAI on datasets of trash images. Using MongoDB, we implemented the Arduino hardware.
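One way the pieces could fit together is sketched below: the break beam sensor detects an item, the webcam captures it, the model classifies it, and a command goes to the motor controller. This is a sketch under stated assumptions, not our actual code. The function `process_item`, the four callables it takes, and the one-letter commands `"R"` and `"T"` are all hypothetical names chosen for illustration, and the hardware is passed in as plain functions so the flow can be run without any devices attached.

```python
from typing import Callable, Optional

# Labels treated as recyclable, following the six categories in the write-up.
RECYCLABLE = frozenset({"organic", "plastic", "metal", "paper", "glass", "food waste"})


def process_item(
    beam_broken: Callable[[], bool],       # hypothetical: True when the IR beam is interrupted
    capture_image: Callable[[], bytes],    # hypothetical: grabs one webcam frame
    classify: Callable[[bytes], str],      # hypothetical: wraps the trained model
    send_command: Callable[[str], None],   # hypothetical: forwards a command to the Arduino
) -> Optional[str]:
    """Run one sorting cycle; return the chosen bin, or None if no item is present."""
    if not beam_broken():
        return None  # nothing was dropped in, so do nothing this cycle
    image = capture_image()
    label = classify(image).strip().lower()
    target = "recycling" if label in RECYCLABLE else "trash"
    # "R" / "T" are made-up command codes for this sketch.
    send_command("R" if target == "recycling" else "T")
    return target
```

In a test, each callable can be a stub, for example `process_item(lambda: True, lambda: b"frame", lambda img: "paper", print)`. On real hardware, `send_command` might wrap a serial write to the Arduino, but how the Python side talks to the board is an assumption here.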

Challenges we ran into

We ran into a few issues with the Arduino hardware: the stepper was not communicating properly with the Arduino board, so the motor that drives the sorting mechanism did not work. Our machine learning models also took some trial and error, and training them took so long that we couldn't test and fine-tune for accuracy until very late in the process.

Accomplishments that we're proud of

We are proud of building a physical prototype, successfully troubleshooting hardware for the camera and sensors, and integrating machine learning into our project.

What we learned

We learned a lot about machine learning and hardware implementation.

What's next for Wall-E Revived

If we were to explore Wall-E Revived further, we would likely keep refining our sorting mechanism. As of now, we only distinguish between trash and recyclables, with no regard for the type of recyclable. As a next step, we could try sorting the recyclables into specific categories. Further refinement could also include modifying our trash bin to account for how specific kinds of waste behave, such as the decomposition of compost and the degeneration of batteries.
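As a rough illustration of sorting into specific categories, the sketch below assigns each bin its own platform angle, spread evenly across the motor's sweep. Everything here is hypothetical: the function `bin_angles`, the 180 degree default sweep, and the idea that one rotating platform could reach every bin are assumptions for discussion, not part of the current build.

```python
from typing import Dict, Sequence


def bin_angles(categories: Sequence[str], sweep_degrees: float = 180.0) -> Dict[str, float]:
    """Assign one platform angle per category, evenly spaced across the sweep."""
    if not categories:
        raise ValueError("at least one category is required")
    if len(categories) == 1:
        # A single bin sits in the middle of the sweep.
        return {categories[0]: sweep_degrees / 2}
    step = sweep_degrees / (len(categories) - 1)
    return {name: round(i * step, 1) for i, name in enumerate(categories)}
```

With three bins, `bin_angles(["trash", "plastic", "metal"])` places them at 0, 90, and 180 degrees.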

Built With

- arduino

- clip

- gradio

- kaggle

- meshy

- mongodb

- openai

- python

- raspberry-pi
