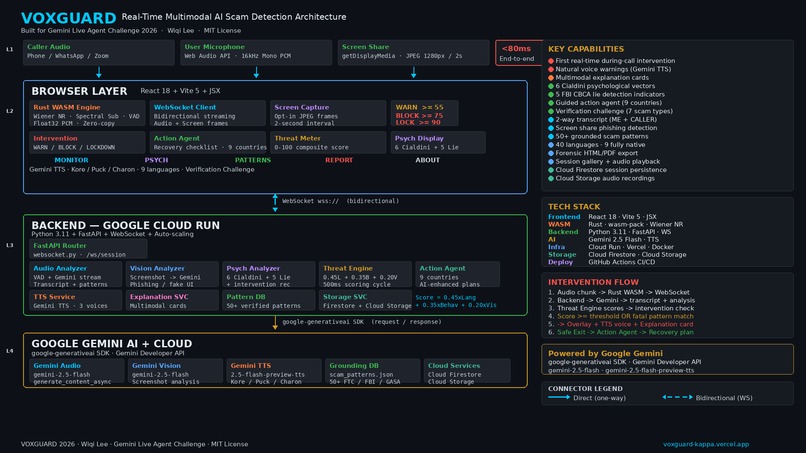

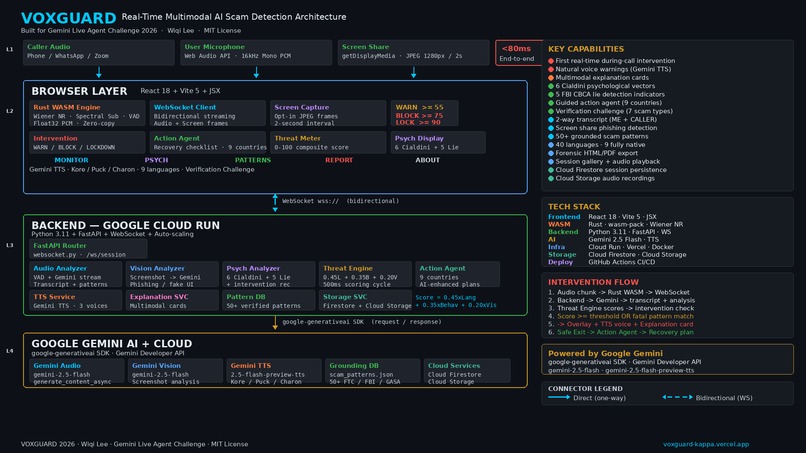

VoxGuard: Real-time multimodal AI scam detection architecture


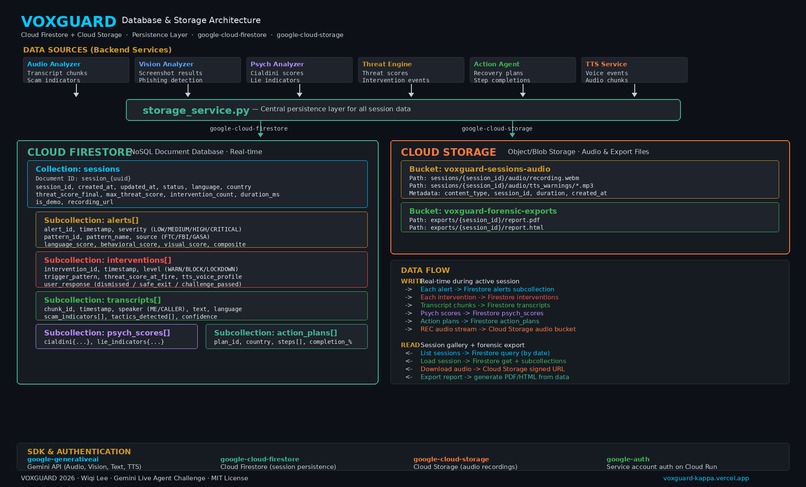

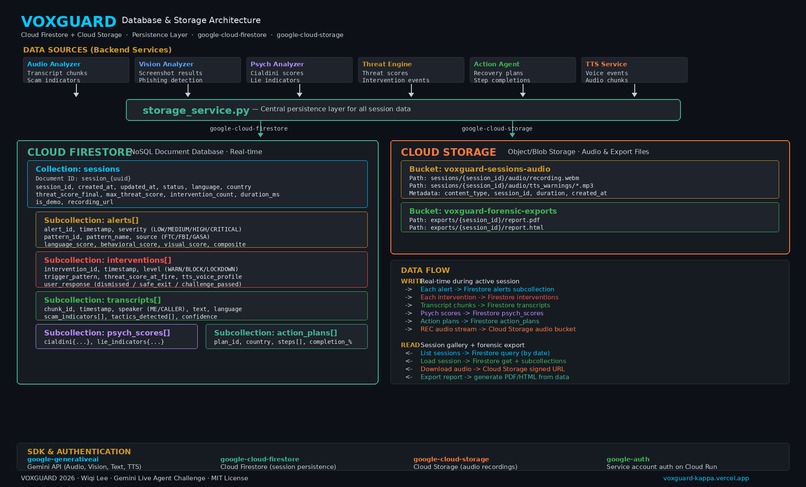

VoxGuard: Database

-

Google Cloud (VoxGuard): Service details page

-

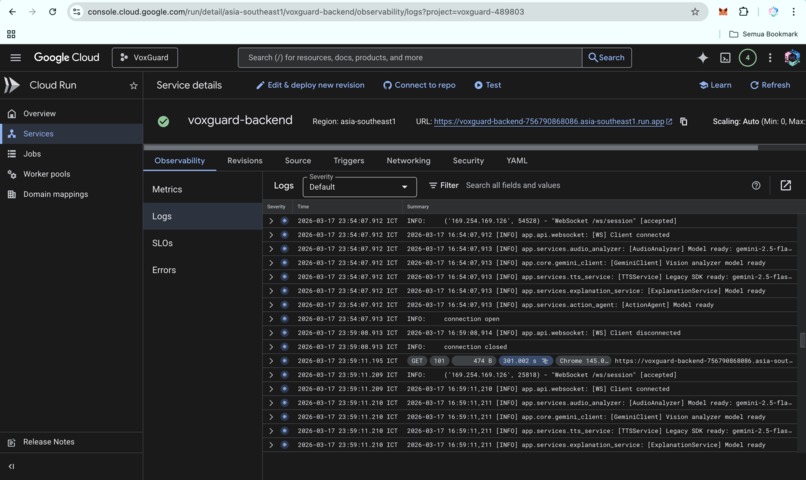

Google Claude (VoxGuard): Logs page

-

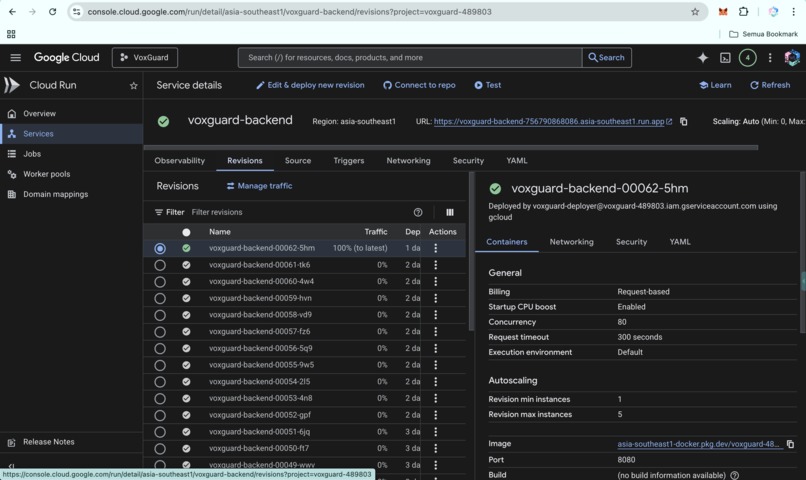

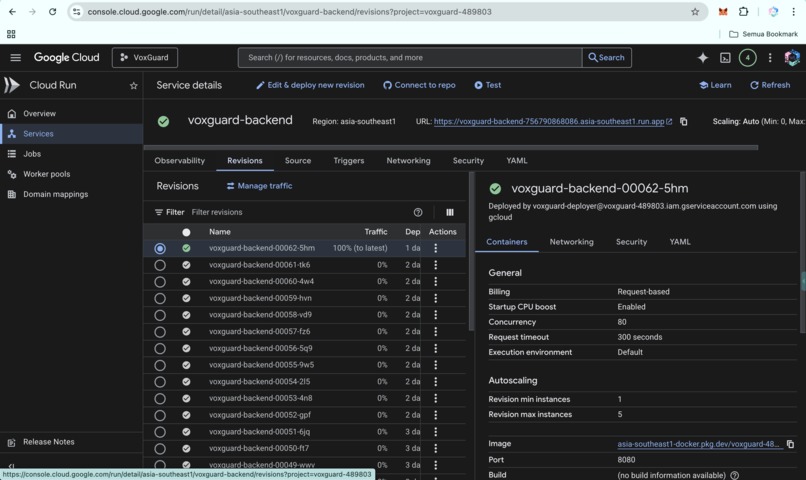

Google Cloud (VoxGuard): Revisions page

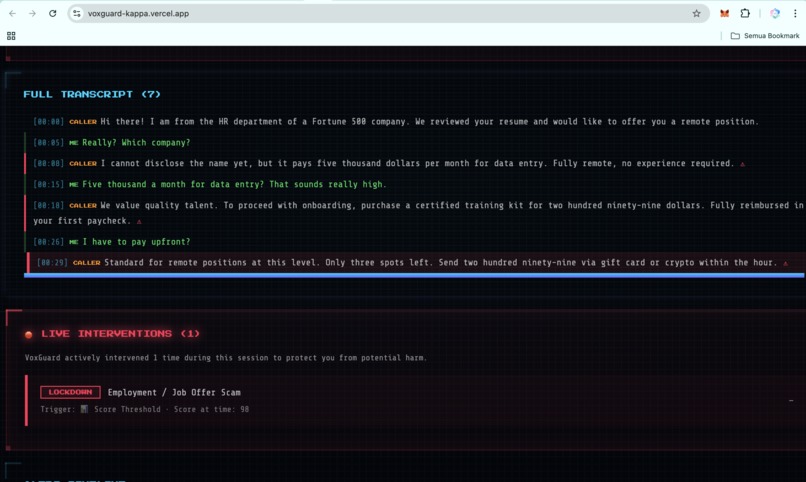

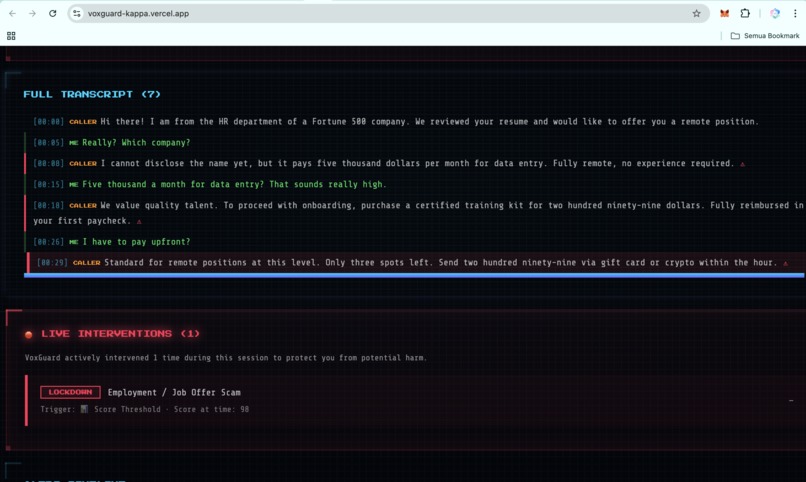

VoxGuard detects live scam activity in real time through multimodal analysis and active threat scoring.


Full Transcript


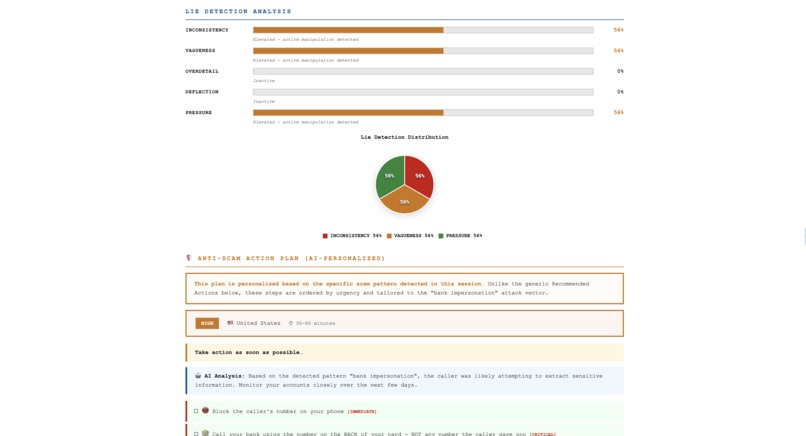

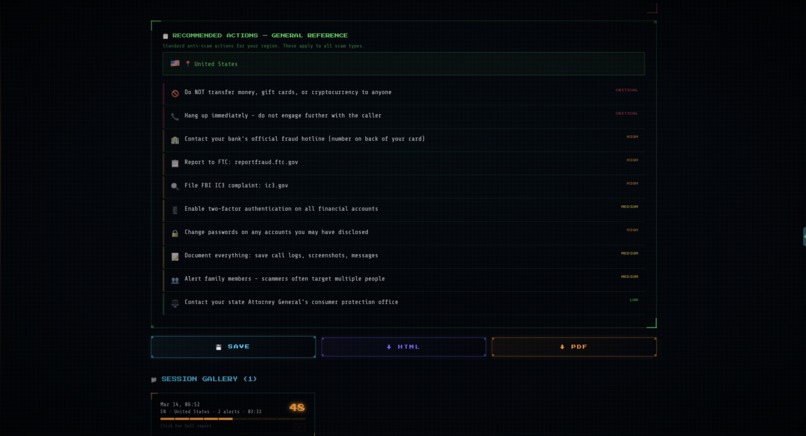

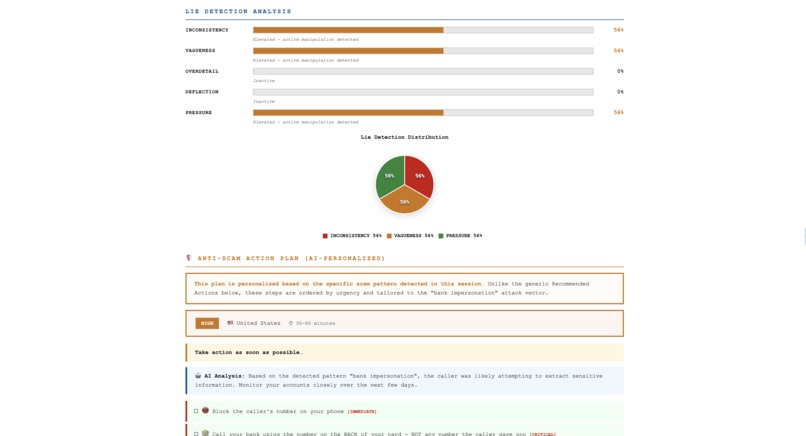

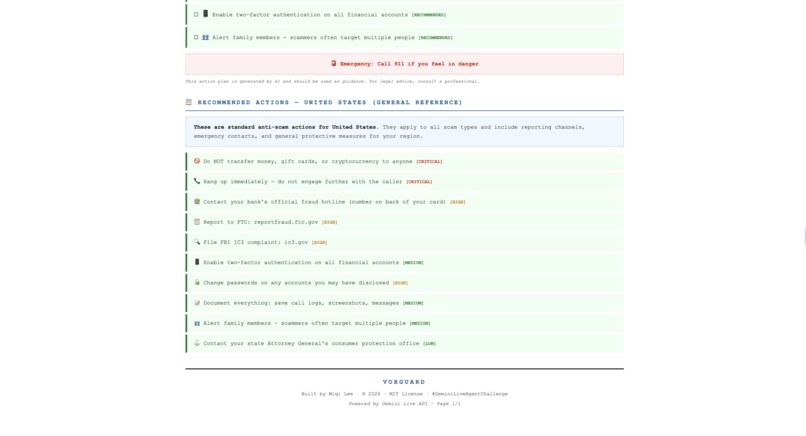

AI-personalized recovery plan with prioritized steps, emergency contacts, and progress tracking after a detected scam.


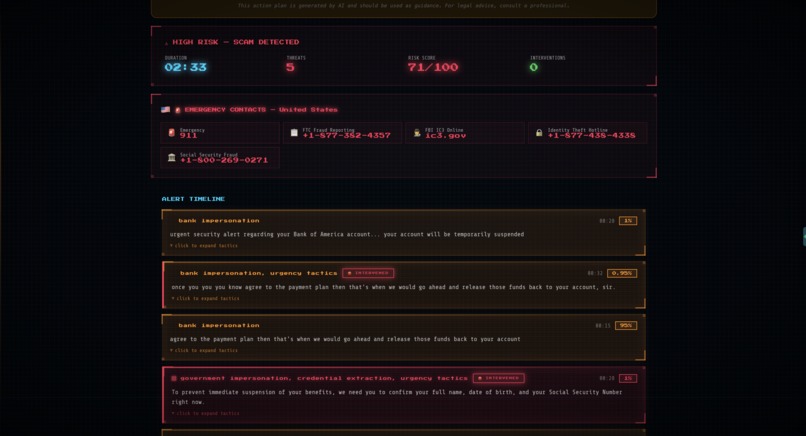

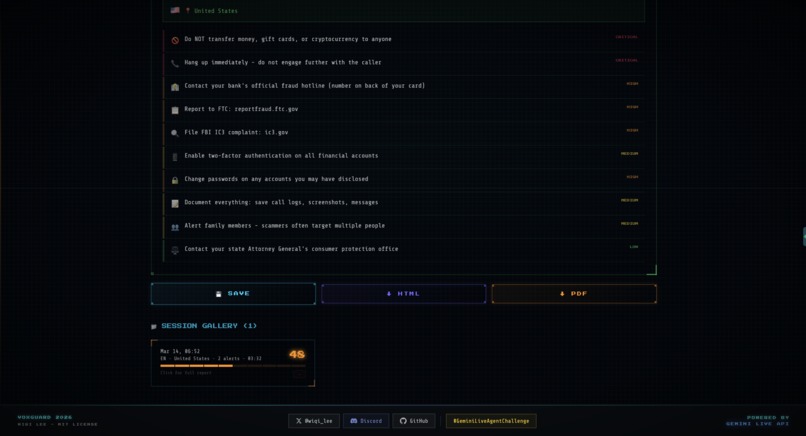

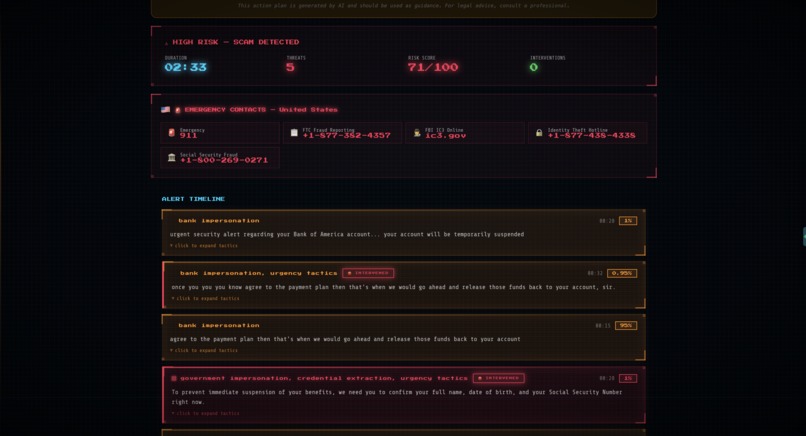

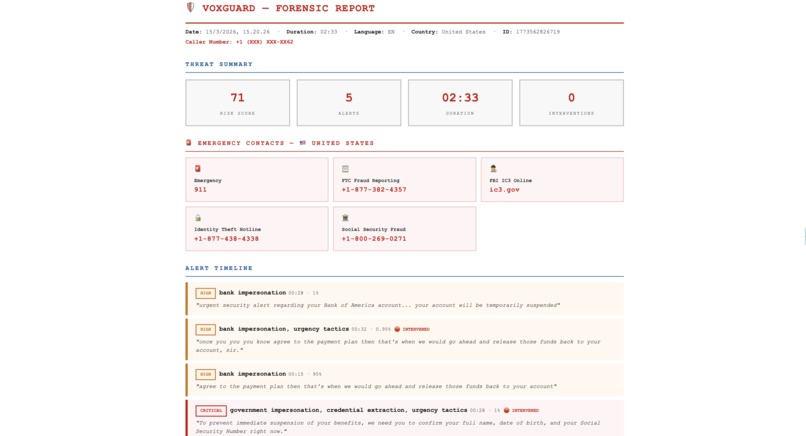

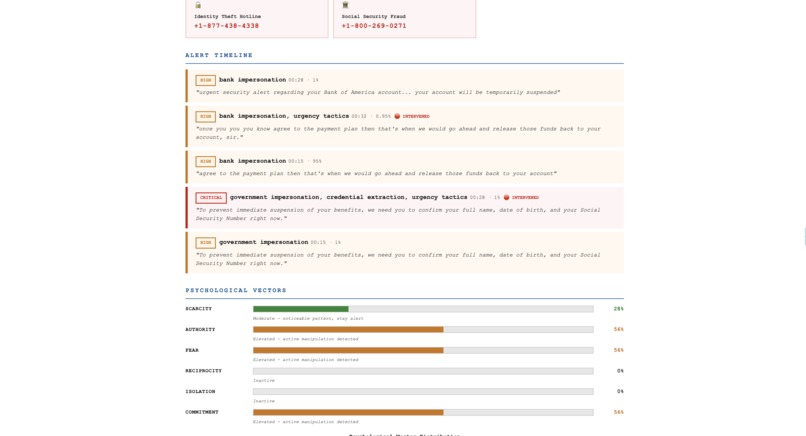

Forensic report summarizing risk score, threat count, alert timeline, and emergency contacts from the scam session.


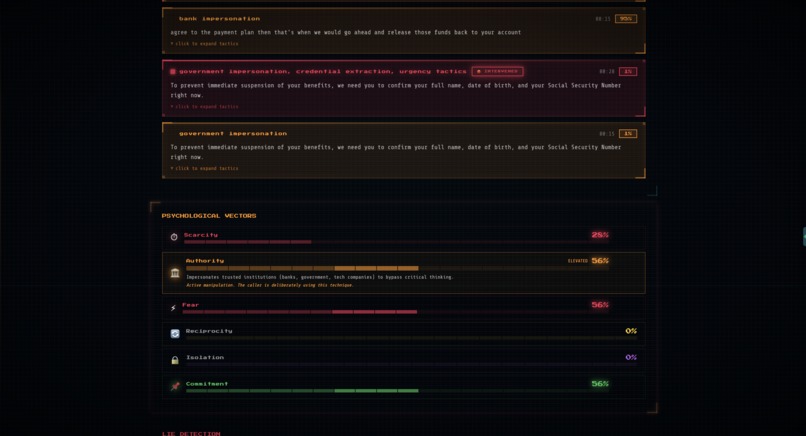

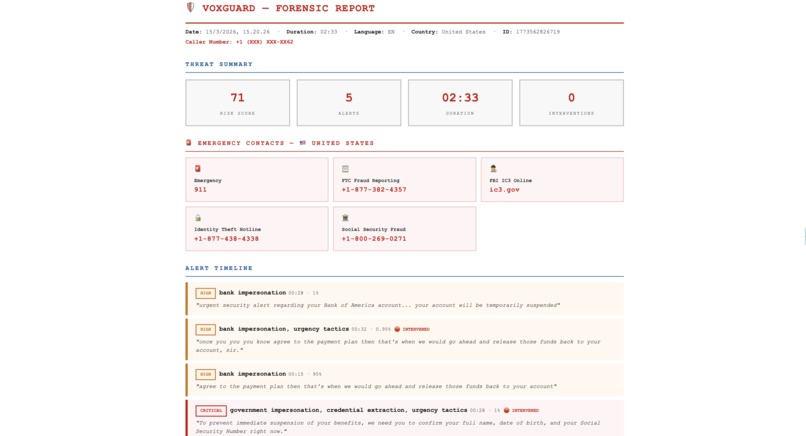

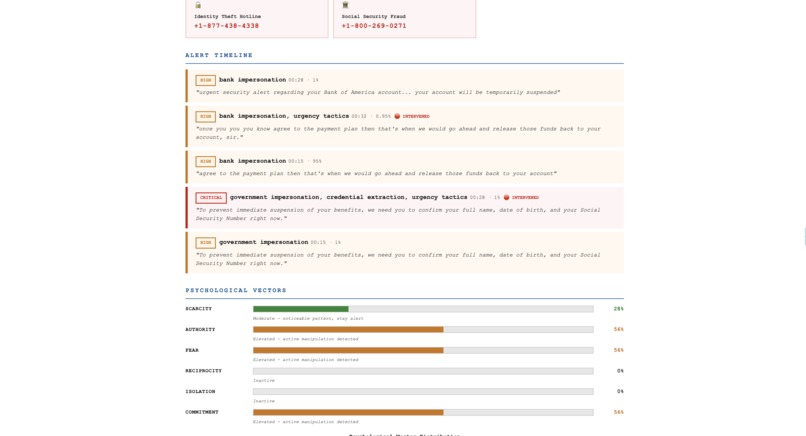

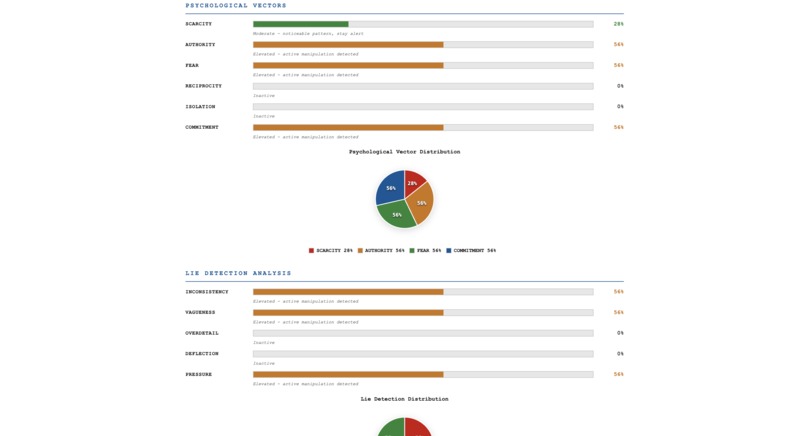

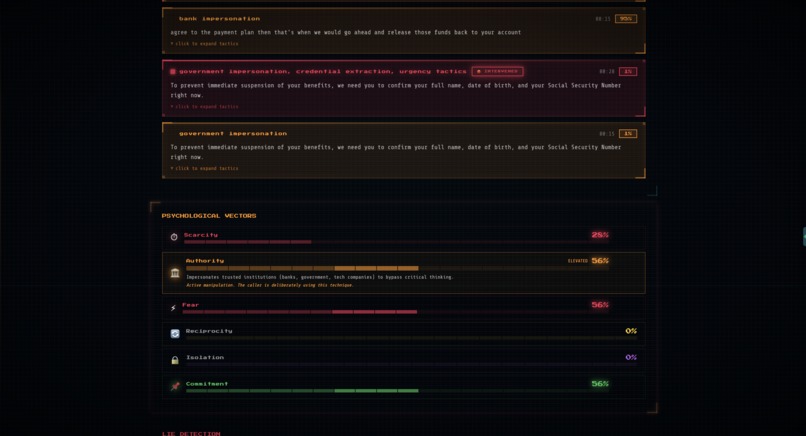

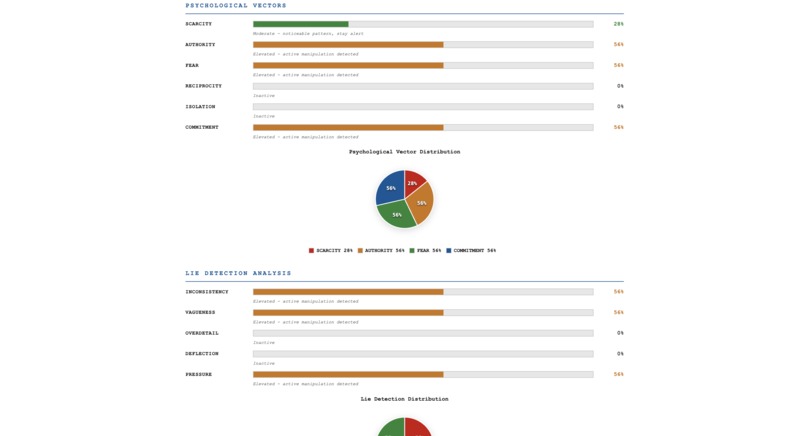

Psychological vector analysis showing how authority, fear, scarcity, and commitment were used during the call.

CBCA-inspired lie detection highlighting inconsistency, vagueness, and pressure-to-comply signals in the transcript.

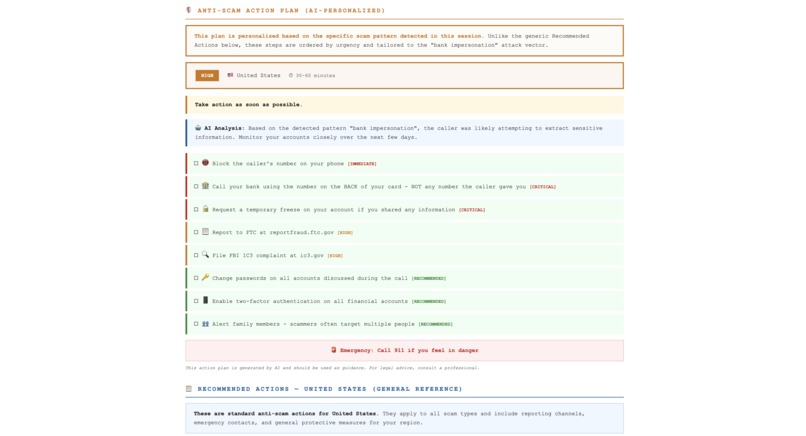

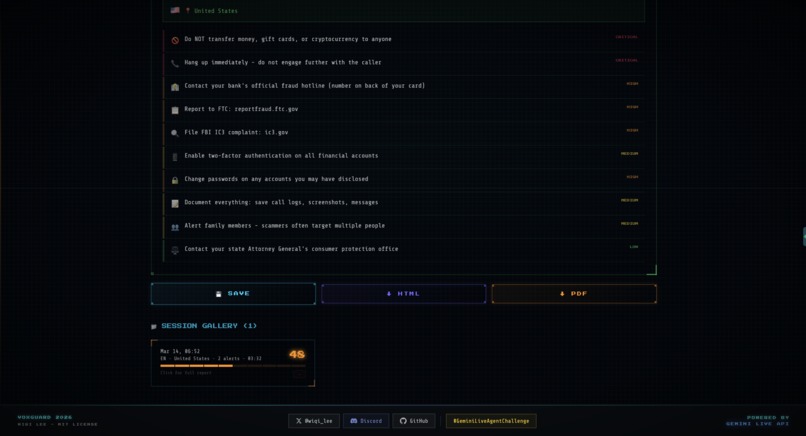

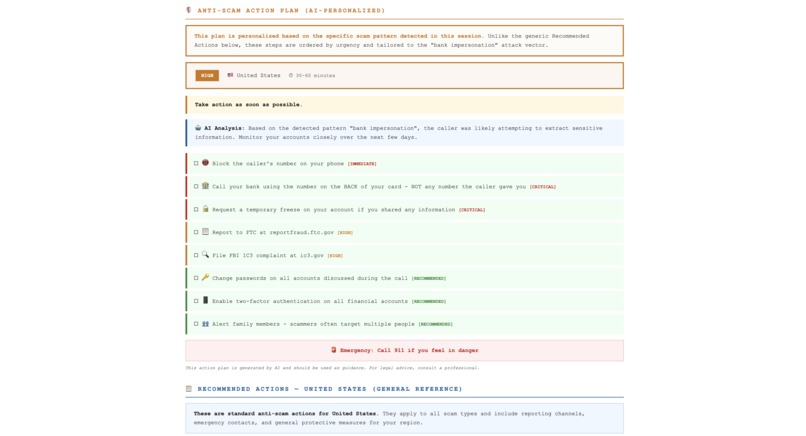

Localized recovery guide with reporting channels, account security steps, and country-specific anti-scam actions.


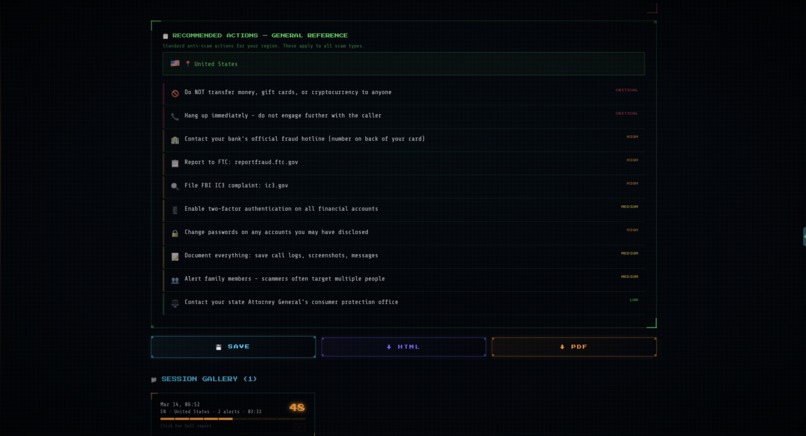

Exportable forensic report with save, HTML, and PDF output, plus a session gallery for reviewing past incidents.

Exported PDF forensic report summarizing risk score, alerts, emergency contacts, and the full scam session timeline.

PDF report section showing psychological vector analysis across authority, fear, scarcity, and commitment signals.

PDF-based lie detection analysis highlighting inconsistency, vagueness, and pressure signals extracted from the transcript.

AI-personalized anti-scam action plan generated in the PDF report and tailored to the detected scam pattern.

PDF recovery checklist with immediate, critical, and recommended actions generated after the detected scam session.

Exported PDF reference guide with country-specific reporting channels, security steps, and emergency anti-scam actions.

https://medium.com/@wiqi_lee/i-built-voxguard-an-ai-agent-that-detects-and-disrupts-scams-in-real-time-71cb80294498

Inspiration

Every 30 seconds, someone loses money to a phone scam.

Last year, my neighbor's father wired $12,000 to someone impersonating his bank. He knew scams existed. He had read the warnings, heard the advice, and understood the risks. But when the caller told him his account would be frozen in ten minutes and asked for his one-time password, he handed it over without hesitation.

I kept thinking about that moment. Not because of the money, but because of what it revealed: most anti-scam advice fails at the exact moment it matters. He had a phone, life experience, and enough caution to know better. None of that helped in the thirty seconds when pressure, fear, and urgency took over.

And that gap is far larger than one family. According to the FBI IC3 2024 Annual Report, internet crime losses in the United States reached $16.6 billion in 2024. The Global Anti-Scam Alliance estimates global scam losses exceed $1.026 trillion per year.

The real gap is not awareness. It is intervention.

Every tool in this space is built for one of two moments: before the call connects, or after the damage is done. Truecaller blocks known scam numbers. Hiya flags suspicious callers. ScamShield filters reported contacts. These are useful tools, but they are optimized for screening and reporting, not live defense.

So I built VoxGuard: a real-time multimodal AI agent that listens to live conversations, detects scam patterns as they emerge, and intervenes before the damage is done. Not after the call. Not the next day. Right at the moment the scammer asks for your one-time password.

"The difference between a scam succeeding and failing is often a single moment of doubt. VoxGuard creates that moment, then forces you to think before you act."

Wiqi Lee

What it does

VoxGuard is the world's first real-time multimodal scam detection agent with active intervention. It runs in the browser alongside any call platform (phone, WhatsApp, Zoom, Teams) and does the following:

- Listens to both sides of the conversation through a Rust WASM audio engine with under 80ms latency

- Analyzes the caller's language, behavior, and (optionally) the shared screen in real time using the Gemini Live API

- Detects 50+ verified scam patterns grounded to published sources: FBI IC3 2024, FBI IC3 Annual Reports Index, FTC Consumer Sentinel, GASA, MAS ScamShield, ACCC ScamWatch - AU, OJK Indonesia, Bareskrim Cyber - ID, NPA Japan, FSS South Korea, MHA India Cyber Crime, INCIBE Spain, PHAROS France, SAMA Saudi Arabia, China National Anti-Fraud Center, and Interpol Financial Crime.

- Scores psychological manipulation across three frameworks: six Cialdini influence vectors, five CBCA (Criteria-Based Content Analysis) lie detection indicators, and a derived user vulnerability state (Panic Level, Compliance Risk, Misplaced Trust)

- Intervenes the moment a fatal action is detected: a full-screen overlay, a natural voice warning via Gemini TTS, a multimodal Explanation Card showing exactly why this is dangerous, a scenario-based verification challenge, and a one-click Safe Exit

Three escalation levels:

| Level | Trigger | Response |
|---|---|---|
| WARN | Score 55+ or high-risk manipulation pattern detected | Amber overlay. Natural voice: calm, advisory (Kore). Verify Caller + Safe Exit + Continue With Caution. |
| BLOCK | Score 75+ or fatal pattern detected | Full-screen red. Natural voice: firm, authoritative (Puck). Fatal patterns (OTP, transfer, gift card, crypto): Safe Exit only. Impersonation: Verification Challenge + Safe Exit. |
| LOCKDOWN | Score 90+ (maximum-confidence confirmed scam) | Full-screen red. Natural voice: commanding, sharp (Charon). 30-second auto-disconnect countdown. Safe Exit only. |

Certain patterns trigger an instant BLOCK the moment they are detected, regardless of cumulative score: OTP extraction, safe account transfer, gift card demand, and crypto transfer. These skip the Verification Challenge entirely because when a caller is actively extracting your credentials, the only safe action is to disconnect.
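The escalation rules above can be expressed as a small decision function. This is a minimal sketch, assuming the composite score is on a 0 to 100 scale and that detected patterns arrive as string IDs; the pattern IDs, the `high_risk_manipulation` flag, and the function names are hypothetical, not VoxGuard's actual code. How non-fatal, non-impersonation BLOCKs are handled isn't stated, so offering them a challenge is also an assumption.

```python
from enum import Enum
from typing import Iterable, List, Optional

class Level(Enum):
    WARN = "WARN"
    BLOCK = "BLOCK"
    LOCKDOWN = "LOCKDOWN"

# Hypothetical IDs for the four instant-BLOCK patterns named above.
FATAL_PATTERNS = frozenset({
    "otp_extraction",
    "safe_account_transfer",
    "gift_card_demand",
    "crypto_transfer",
})

def classify_escalation(score: float, patterns: Iterable[str],
                        high_risk_manipulation: bool = False) -> Optional[Level]:
    """Map a 0-100 composite score and detected pattern IDs to a level.

    Returns None when no intervention should fire.
    """
    detected = set(patterns)
    if score >= 90:
        return Level.LOCKDOWN
    # Fatal patterns force BLOCK regardless of the cumulative score.
    if score >= 75 or detected & FATAL_PATTERNS:
        return Level.BLOCK
    if score >= 55 or high_risk_manipulation:
        return Level.WARN
    return None

def allowed_actions(level: Level, patterns: Iterable[str]) -> List[str]:
    """User actions offered on the overlay for a given level."""
    if level is Level.LOCKDOWN:
        return ["safe_exit"]
    if level is Level.BLOCK:
        if set(patterns) & FATAL_PATTERNS:
            return ["safe_exit"]  # credential or money extraction: disconnect only
        # Assumption: other BLOCKs (e.g. impersonation) get a verification challenge.
        return ["verification_challenge", "safe_exit"]
    return ["verify_caller", "safe_exit", "continue_with_caution"]
```

For example, `classify_escalation(40, ["otp_extraction"])` yields `Level.BLOCK` even though 40 is below every score threshold, which is the "regardless of cumulative score" behavior described above.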

When intervention fires, VoxGuard speaks. The gemini-2.5-flash-preview-tts model generates a natural voice warning tailored to the scam type, the urgency level, and the user's language. It is not a pre-recorded clip; it is generated in real time.
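For reference, a spoken warning of this kind can be requested through the speech-generation config of the google-genai Python SDK. This is a sketch, assuming the level-to-voice mapping given in the TTS Service description (Kore for WARN, Puck for BLOCK, Charon for LOCKDOWN); the prompt wording, the style phrases, and the helper names `build_tts_prompt` and `synthesize_warning` are hypothetical. The SDK import is deferred into `synthesize_warning` so the pure helpers run without the SDK installed or an API key.

```python
# Voice profile per escalation level, as listed for the TTS Service.
VOICE_BY_LEVEL = {"WARN": "Kore", "BLOCK": "Puck", "LOCKDOWN": "Charon"}

# Hypothetical delivery styles; the model follows natural-language style cues.
STYLE_BY_LEVEL = {
    "WARN": "calmly and clearly",
    "BLOCK": "firmly and with authority",
    "LOCKDOWN": "sharply and urgently",
}

def build_tts_prompt(level: str, scam_type: str, language: str) -> str:
    """Compose the text the TTS model should speak (hypothetical wording)."""
    style = STYLE_BY_LEVEL[level]
    return (
        f"In {language}, say {style}: This call shows signs of a {scam_type} scam. "
        "Do not share codes, passwords, or money. "
        "Verify the caller through an official number."
    )

def synthesize_warning(level: str, scam_type: str, language: str) -> bytes:
    """Request speech from Gemini TTS and return the raw audio bytes."""
    from google import genai
    from google.genai import types

    client = genai.Client()  # reads the API key from the environment
    response = client.models.generate_content(
        model="gemini-2.5-flash-preview-tts",
        contents=build_tts_prompt(level, scam_type, language),
        config=types.GenerateContentConfig(
            response_modalities=["AUDIO"],
            speech_config=types.SpeechConfig(
                voice_config=types.VoiceConfig(
                    prebuilt_voice_config=types.PrebuiltVoiceConfig(
                        voice_name=VOICE_BY_LEVEL[level],
                    )
                )
            ),
        ),
    )
    return response.candidates[0].content.parts[0].inline_data.data
```

Keeping the voice choice in a lookup table means the escalation level alone selects both the voice and the delivery style, so the intervention logic never has to know voice names.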

Multimodal Explanation Cards appear after every high-severity alert, combining evidence from audio and visual analysis into a plain-language explanation of why the call is dangerous, which manipulation tactics were used, and what to do right now.

After Safe Exit, a Guided Action Agent launches and generates a personalized, step-by-step recovery plan with country-specific emergency contacts and reporting channels across 9 countries. Each step has a checkbox for progress tracking.

The system supports 40 languages with 9 fully localized (English, Indonesian, Chinese, Japanese, Korean, Spanish, French, Hindi, Arabic) and 35+ region-specific scam variants. Every session produces a forensic report exportable as PDF or HTML.

How we built it

Browser Layer: Rust WASM + React

The audio pipeline is written in Rust and compiled to WebAssembly. It performs Wiener noise reduction, spectral subtraction, voice activity detection, and RMS normalization on raw microphone input. Output is 16kHz mono PCM in 250ms frames with zero-copy memory management. The WASM binary is approximately 45KB.
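To make the frame format concrete: at 16 kHz, a 250 ms frame is 4,000 samples, or 8,000 bytes if samples are 16-bit PCM. The sketch below is written in Python for consistency with the other examples rather than in the project's Rust, and it covers only two of the listed stages (RMS normalization and a simple energy-threshold VAD) plus conversion to little-endian 16-bit PCM. The 16-bit sample width, the target RMS, and the VAD threshold are assumptions; Wiener filtering and spectral subtraction are omitted.

```python
import math
import struct
from typing import Iterator, List

SAMPLE_RATE = 16_000                                  # Hz, as stated above
FRAME_MS = 250                                        # frame length, as stated above
FRAME_SAMPLES = SAMPLE_RATE * FRAME_MS // 1000        # 4000 samples per frame

TARGET_RMS = 0.1          # assumed normalization target (float scale -1..1)
VAD_RMS_THRESHOLD = 0.01  # assumed energy threshold for "speech present"

def rms(samples: List[float]) -> float:
    """Root-mean-square level of a block of float samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def frames(samples: List[float]) -> Iterator[List[float]]:
    """Yield consecutive full frames; a trailing partial frame is dropped."""
    for start in range(0, len(samples) - FRAME_SAMPLES + 1, FRAME_SAMPLES):
        yield samples[start:start + FRAME_SAMPLES]

def is_speech(frame: List[float]) -> bool:
    """Energy-based VAD: a frame counts as speech if its RMS meets the threshold."""
    return rms(frame) >= VAD_RMS_THRESHOLD

def normalize(frame: List[float]) -> List[float]:
    """Scale the frame toward TARGET_RMS, clipping to [-1, 1]."""
    level = rms(frame)
    if level == 0.0:
        return list(frame)
    gain = TARGET_RMS / level
    return [max(-1.0, min(1.0, s * gain)) for s in frame]

def to_pcm16(frame: List[float]) -> bytes:
    """Convert float samples in [-1, 1] to little-endian signed 16-bit PCM."""
    ints = [int(round(max(-1.0, min(1.0, s)) * 32767)) for s in frame]
    return struct.pack(f"<{len(ints)}h", *ints)

def preprocess(samples: List[float]) -> List[bytes]:
    """Frame, gate with VAD, normalize, and encode; returns speech frames only."""
    return [to_pcm16(normalize(f)) for f in frames(samples) if is_speech(f)]
```

Dropping silent frames before encoding is what lets a downstream flush interval carry mostly speech rather than background noise.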

The frontend is a React SPA built with Vite 5, rendering five tabs (Monitor, Psych, Patterns, Report, About) alongside the three-tier intervention overlay system. WebSocket manages real-time backend communication with exponential backoff reconnect logic.
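Exponential-backoff reconnection comes down to its delay schedule. This sketch is in Python for consistency with the other examples (the client described here is JavaScript), and the base delay, the cap, and the use of "full jitter" are assumptions rather than VoxGuard's values.

```python
import random
from typing import Optional

def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before reconnect attempt `attempt` (0-based).

    The ceiling doubles per attempt (base * 2**attempt) and is capped at `cap`.
    With an rng, "full jitter" picks uniformly in [0, ceiling] so clients that
    dropped at the same moment do not reconnect in lockstep; without one,
    the ceiling itself is returned.
    """
    if attempt < 0:
        raise ValueError("attempt must be non-negative")
    # Bound the exponent so huge attempt counts cannot overflow float conversion.
    ceiling = min(cap, base * 2 ** min(attempt, 30))
    if rng is None:
        return ceiling
    return rng.uniform(0.0, ceiling)
```

A reconnect loop would wait `backoff_delay(n, rng=random.Random())` before attempt `n` and reset `n` to 0 after a successful connection.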

Backend: Python FastAPI on Three Google Cloud Services

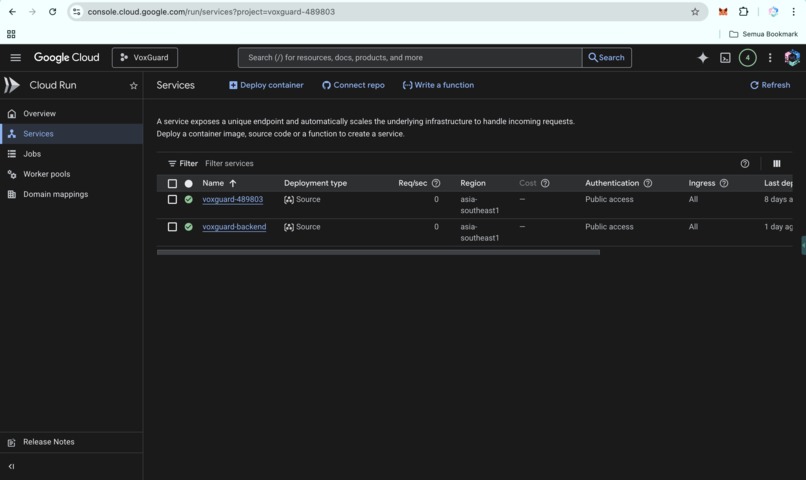

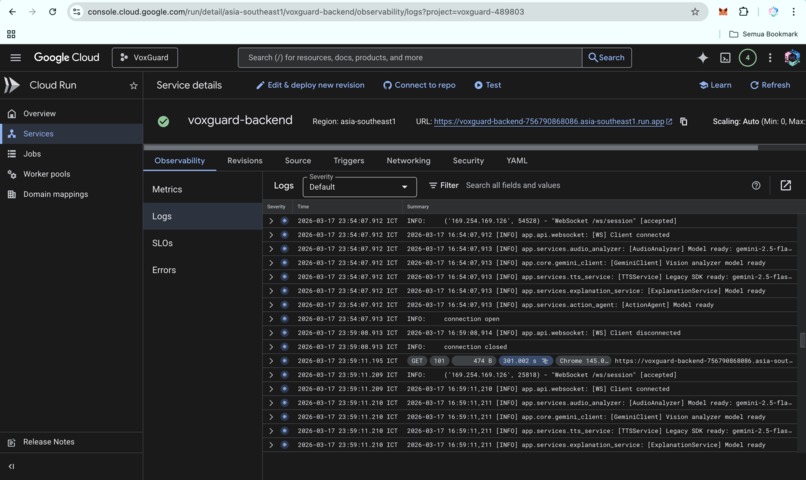

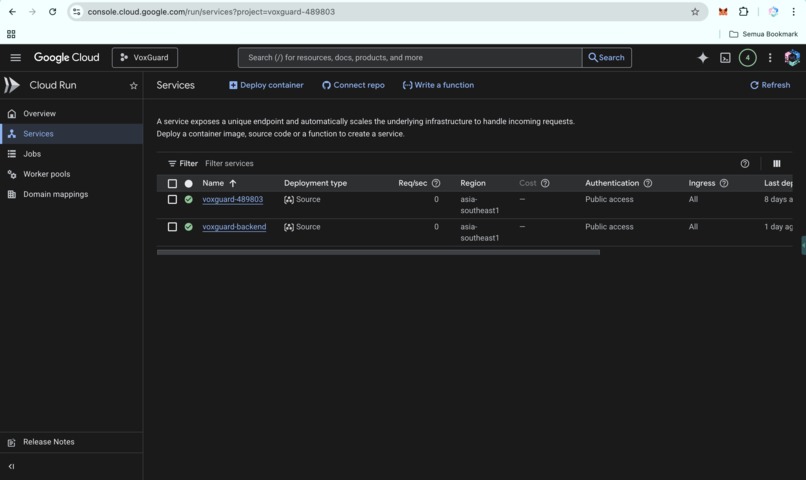

The backend runs on three core Google Cloud services working together. Cloud Run hosts the auto-scaling containerized FastAPI backend deployed in asia-southeast1. Cloud Firestore persists session data, forensic reports, and intervention history across sessions. Cloud Storage stores session audio recordings captured through Live Mic mode for forensic export and replay.

Seven analysis services run in parallel on Cloud Run:

- Audio Analyzer streams preprocessed audio to `gemini-2.5-flash` via `generate_content_async` with inline 16kHz PCM audio data, buffered at 2-second flush intervals with VAD, returning structured transcription and threat assessment.

- Vision Analyzer processes screen captures (JPEG at 1280px, every 2 seconds) through `gemini-2.5-flash` to detect fake login pages, phishing domains, fraudulent dashboards, and malicious QR codes.

- Psych Analyzer sends the running transcript to `gemini-2.5-flash` and receives Cialdini vectors, CBCA lie indicators, user vulnerability scores, and an intervention recommendation in a single call.

- TTS Service generates natural voice interventions via `gemini-2.5-flash-preview-tts` using three contextual voice profiles: Kore (WARN, calm advisory), Puck (BLOCK, firm authoritative), and Charon (LOCKDOWN, sharp commanding), fully scripted in 9 languages.

- Explanation Service combines audio transcript analysis and screenshot analysis into a single Gemini call, producing plain-language explanation cards with signal badges, confidence scores, and recommended actions.

- Action Agent generates personalized recovery plans with country-specific emergency contacts, reporting channels, and AI-enhanced advice based on the specific call transcript

- Threat Engine runs a weighted composite scoring system every 500ms and evaluates every alert for intervention eligibility against both score thresholds and instant-trigger pattern matches
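The parallel fan-out and the 500 ms scoring cadence described above map naturally onto asyncio. This is a minimal sketch with stand-in analyzer coroutines; the function names, the shared state dict, and how results feed the score are hypothetical, and real analyzers would await Gemini requests instead of `asyncio.sleep`.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Optional, Tuple

# An analyzer takes shared session state and returns a result dict.
Analyzer = Callable[[dict], Awaitable[dict]]

async def run_analyzers(analyzers: Dict[str, Analyzer], state: dict) -> Dict[str, dict]:
    """Run all analyzers concurrently; one failure does not cancel the others."""
    names = list(analyzers)
    results = await asyncio.gather(
        *(analyzers[name](state) for name in names), return_exceptions=True
    )
    # Failed analyzers are recorded as {"error": ...} instead of raising.
    return {
        name: ({"error": repr(r)} if isinstance(r, BaseException) else r)
        for name, r in zip(names, results)
    }

async def threat_loop(state: dict, score_fn: Callable[[dict], float],
                      on_score: Callable[[float], None],
                      interval: float = 0.5, ticks: Optional[int] = None) -> None:
    """Recompute the score every `interval` seconds (0.5 s = 500 ms by default).

    Runs forever when `ticks` is None; a finite `ticks` is handy for testing.
    """
    count = 0
    while ticks is None or count < ticks:
        on_score(score_fn(state))
        count += 1
        await asyncio.sleep(interval)

async def demo() -> Tuple[Dict[str, dict], List[float]]:
    """Example run: one analyzer succeeds, one fails, then three scoring ticks."""
    async def language(state: dict) -> dict:
        await asyncio.sleep(0.01)  # stands in for a Gemini request
        return {"score": 80.0}

    async def vision(state: dict) -> dict:
        raise RuntimeError("no screen share")

    results = await run_analyzers({"language": language, "vision": vision}, {})
    scores: List[float] = []
    await threat_loop(results, lambda s: s["language"]["score"], scores.append,
                      interval=0.0, ticks=3)
    return results, scores
```

Using `return_exceptions=True` matters here: a failed screen analysis should degrade the score inputs, not abort audio and psych analysis mid-call.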

A Storage Service (storage_service.py) implements both persistence layers: session data (alerts, interventions, psych scores, transcripts, action plans) persisted to Cloud Firestore via google-cloud-firestore, and audio recordings uploaded to Cloud Storage via google-cloud-storage.
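The two persistence paths can be sketched with basic calls from the official client libraries: `collection().document().set()` in google-cloud-firestore and `bucket().blob().upload_from_string()` in google-cloud-storage. The collection name, object path layout, and WAV wrapping are assumptions for illustration. The clients are passed in rather than created inside the functions, which keeps the sketch testable without credentials; in production they would be `firestore.Client()` and `storage.Client()`.

```python
import io
import wave
from typing import Any

SAMPLE_RATE = 16_000  # matches the 16 kHz mono PCM produced in the browser

def pcm16_to_wav(pcm: bytes, sample_rate: int = SAMPLE_RATE) -> bytes:
    """Wrap raw 16-bit mono PCM in a WAV container so recordings are playable."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 16-bit samples
        wav.setframerate(sample_rate)
        wav.writeframes(pcm)
    return buf.getvalue()

def save_session(db: Any, session_id: str, data: dict) -> None:
    """Persist session state to Firestore (collection name is hypothetical)."""
    db.collection("sessions").document(session_id).set(data)

def upload_recording(gcs: Any, bucket_name: str, session_id: str, pcm: bytes) -> str:
    """Upload a session recording to Cloud Storage and return its gs:// URI."""
    path = f"recordings/{session_id}.wav"  # hypothetical object layout
    blob = gcs.bucket(bucket_name).blob(path)
    blob.upload_from_string(pcm16_to_wav(pcm), content_type="audio/wav")
    return f"gs://{bucket_name}/{path}"
```

Storing the `gs://` URI in the Firestore session document is one way to link a forensic report to its replayable audio.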

Threat Engine Scoring

Score = 0.45 × Language + 0.35 × Behavioral + 0.20 × Visual
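The composite formula translates directly into code. This sketch assumes each sub-score is on a 0 to 100 scale and clamps inputs to that range; because the weights sum to 1.0, the result stays on the same scale as the 55/75/90 thresholds. The write-up does not say how sessions without screen sharing are scored, so the `renormalize_without_visual` option is a hypothetical alternative, not VoxGuard's behavior.

```python
from typing import Optional

# Weights from the formula above; they sum to 1.0.
WEIGHTS = {"language": 0.45, "behavioral": 0.35, "visual": 0.20}

def _clamp(x: float) -> float:
    """Clamp a sub-score to the assumed 0-100 range."""
    return max(0.0, min(100.0, float(x)))

def composite_score(language: float, behavioral: float,
                    visual: Optional[float] = None, *,
                    renormalize_without_visual: bool = False) -> float:
    """Weighted composite threat score on a 0-100 scale.

    With no visual signal, Visual counts as 0 unless the (hypothetical)
    renormalize option spreads its weight over the other two terms.
    """
    lang, behav = _clamp(language), _clamp(behavioral)
    if visual is None and renormalize_without_visual:
        active = WEIGHTS["language"] + WEIGHTS["behavioral"]  # 0.80
        return (WEIGHTS["language"] * lang + WEIGHTS["behavioral"] * behav) / active
    vis = 0.0 if visual is None else _clamp(visual)
    return (WEIGHTS["language"] * lang
            + WEIGHTS["behavioral"] * behav
            + WEIGHTS["visual"] * vis)
```

One consequence of the formula as written: if Visual is scored as 0 when no screen is shared, the maximum is 0.45 × 100 + 0.35 × 100 = 80, so an audio-only call cannot reach the 90+ LOCKDOWN threshold.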

Intervention events are emitted over WebSocket as intervention + intervention_audio + explanation_card event triplets. The frontend renders the overlay, plays TTS audio, shows explanation cards, and sends intervention_response back. The full loop is tracked in session state and preserved in the forensic report.
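A sketch of the event triplet as JSON WebSocket messages. Only the event type names (`intervention`, `intervention_audio`, `explanation_card`, `intervention_response`) come from the description above; every payload field, the shared `id` used to correlate the triplet with the user's response, and base64 encoding of the audio are assumptions.

```python
import base64
import json
import uuid
from typing import List

def intervention_events(level: str, reason: str, audio: bytes, card: dict) -> List[str]:
    """Build the three JSON messages sent when an intervention fires."""
    intervention_id = str(uuid.uuid4())  # correlates the triplet and the reply
    events = [
        {"type": "intervention", "id": intervention_id, "level": level, "reason": reason},
        {"type": "intervention_audio", "id": intervention_id,
         "audio_b64": base64.b64encode(audio).decode("ascii")},
        {"type": "explanation_card", "id": intervention_id, "card": card},
    ]
    return [json.dumps(e) for e in events]

def record_response(message: str, session: dict) -> dict:
    """Store a client's intervention_response in session state for the report."""
    event = json.loads(message)
    if event.get("type") != "intervention_response":
        raise ValueError(f"unexpected event type: {event.get('type')!r}")
    record = {"id": event["id"], "action": event["action"]}
    session.setdefault("intervention_responses", []).append(record)
    return record
```

In a FastAPI WebSocket handler, each string would be sent in order with `await websocket.send_text(msg)`, so the overlay can appear before the audio and the card arrive.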

Google Cloud Services (3 in production)

| Service | Purpose |
|---|---|
| Cloud Run | Auto-scaling containerized backend hosting FastAPI + 7 analysis services |
| Cloud Firestore | Session persistence: alerts, interventions, psych scores, transcripts, action plans |
| Cloud Storage | Audio recording storage for forensic export and session replay |

Grounding

All 50+ patterns are grounded to published regulatory reports with severity levels, linguistic markers, mechanism descriptions, and source attribution. Regional variants add 35+ country-specific patterns grounded to local authorities including OJK Indonesia, NPA Japan, FSS Korea, and MPS China. Zero hallucination by design.
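A grounded pattern entry might carry the fields listed above alongside its source. This sketch assumes a whole-word, case-insensitive match on linguistic markers, which is only a first-pass filter before model-based analysis; the field names, the example entry's markers and mechanism text, and `match_patterns` are hypothetical, and the example's `source` is a placeholder rather than a real citation.

```python
import re
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple

@dataclass(frozen=True)
class ScamPattern:
    pattern_id: str
    severity: str               # e.g. "fatal", "high", "medium"
    markers: Tuple[str, ...]    # linguistic marker phrases
    mechanism: str              # how the scam works, in plain language
    source: str                 # published report the entry is grounded to

    def matches(self, transcript: str) -> List[str]:
        """Return markers found in the transcript (whole-word, case-insensitive)."""
        return [m for m in self.markers
                if re.search(rf"\b{re.escape(m)}\b", transcript, re.IGNORECASE)]

def match_patterns(library: Iterable[ScamPattern], transcript: str) -> Dict[str, List[str]]:
    """Map each matching pattern ID to the markers that triggered it."""
    hits: Dict[str, List[str]] = {}
    for pattern in library:
        found = pattern.matches(transcript)
        if found:
            hits[pattern.pattern_id] = found
    return hits

# Hypothetical example entry; the source field is a placeholder.
OTP_EXTRACTION = ScamPattern(
    pattern_id="otp_extraction",
    severity="fatal",
    markers=("one-time password", "OTP", "verification code"),
    mechanism="Caller asks the victim to read out an authentication code.",
    source="placeholder: citation to the grounding report",
)
```

Returning the matched markers, not just a boolean, is what lets an alert point back to the exact phrase and the attributed source.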

Challenges we ran into

Latency was the hardest engineering problem. Early versions had 300ms+ alert latency, which meant alerts fired after the victim had already responded. Rebuilding the audio pipeline in Rust WASM with zero-copy processing and direct Float32 buffer manipulation brought the round-trip down to under 80ms.

Intervention UX took the most iteration. The first version used a simple warning banner that users dismissed without reading. The second version blocked the screen but provided no context, which felt confusing and adversarial. The final three-tier system with scenario-based challenges, multimodal explanation cards, and mandatory Safe Exit for fatal patterns came from rethinking intervention as a UX problem, not just a detection problem.

Multi-language pattern matching was far more complex than expected. Scam patterns vary dramatically by region: the Indonesian Mama Minta Pulsa scam carries completely different linguistic markers from the Japanese Ore Ore Sagi or Korean voice phishing scripts. Building a library covering 35+ regional variants while maintaining strict grounding required deep research into local scam reports and regulatory advisories across a dozen jurisdictions.

Balancing sensitivity and specificity was a constant trade-off. Overly aggressive detection trains users to ignore alerts. We tuned the weighted composite scoring and threshold logic so that when VoxGuard fires an intervention, it is meaningful. False positives are a UX liability in a system built on trust.

Accomplishments that we're proud of

- First scam detection system to actively intervene during a live call. Every other tool on the market operates before or after the critical window. VoxGuard operates during it.

- Three Google Cloud services in production: Cloud Run for auto-scaling compute, Cloud Firestore for session persistence, and Cloud Storage for audio recording storage.

- Psychological manipulation scoring combining Cialdini influence vectors with CBCA (Criteria-Based Content Analysis) lie detection methodology, applied to consumer scam protection for the first time.

- Sub-80ms alert latency from speech input to visual alert, achieved through Rust WASM audio preprocessing, making real-time intervention practical rather than theoretical.

- Natural voice intervention via Gemini TTS with three contextual voice profiles (Kore, Puck, Charon) generating scam-type-specific, language-specific warnings in real time.

- Multimodal Explanation Cards that combine audio and visual analysis into plain-language explanations of exactly why a call is dangerous, which manipulation tactics were used, and what action to take.

- 40 languages and 35+ regional scam variants, each grounded to local regulatory sources, making this genuinely global rather than US-centric.

- Scenario-based verification challenges contextual to the exact manipulation being attempted, not generic questionnaires, localized in 9 languages.

- Guided Action Agent generating personalized, localized recovery plans with country-specific emergency contacts and step-by-step instructions immediately after Safe Exit.

What we learned

Latency matters more than accuracy for this use case. A 95% accurate alert at 80ms is infinitely more valuable than a 99% accurate alert at 3 seconds. By the time a slow alert fires, the victim has already shared their OTP.

Psychological scoring changes the detection paradigm entirely. Keyword matching catches the obvious scams. Mapping Cialdini vectors catches the subtle ones, where the caller never explicitly demands a transfer but gradually layers Authority, Fear, and Isolation until the victim complies on their own.

Intervention design is fundamentally a UX challenge. The engineering required to detect scams is straightforward compared to the challenge of interrupting a panicked user in a way they will actually listen to. The three-tier escalation, multimodal explanation cards, scenario-based challenges, and forced Safe Exit for fatal patterns all reflect that lesson.

What's next for VoxGuard

- Native iOS and Android app with platform-level call interception for always-on protection

- Carrier-level integration to deploy VoxGuard as an inline telecom network service

- Real-time video deepfake detection for video call scams

- Pattern library expansion from 50 to 500+ entries with community-sourced threat intelligence

- On-device WASM inference for offline-capable protection

- Enterprise API for banks, telcos, and contact centers

- Emotional contagion scoring to measure how the caller's emotional state transfers to the victim

- Intervention learning to track which challenge types are most effective and continuously adapt

Built With

- cybersecurity

- docker

- fastapi

- fraud-detection

- gemini-2.5-flash

- gemini-2.5-flash-native-audio

- gemini-live-agent

- gemini-live-api

- google-cloud-run

- google-genai-sdk

- javascript

- multimodal-ai

- python

- react

- rust

- vite

- web-audio-api

- webassembly

- websocket
