Landing page - CAD in the AI Era

-

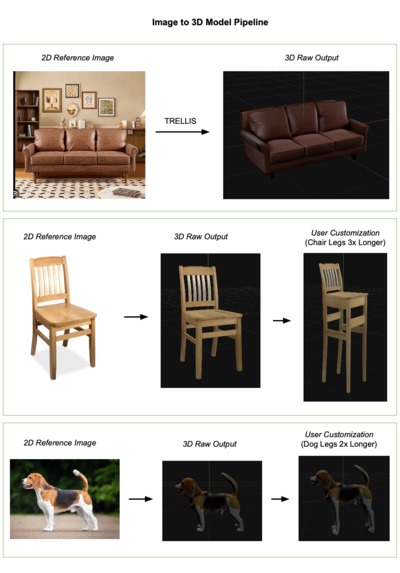

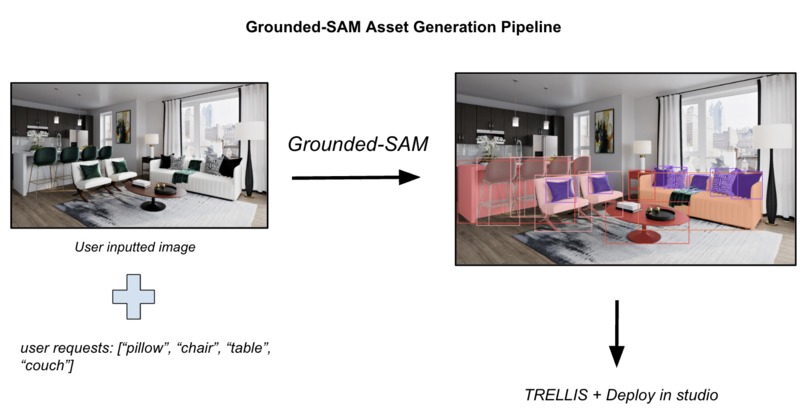

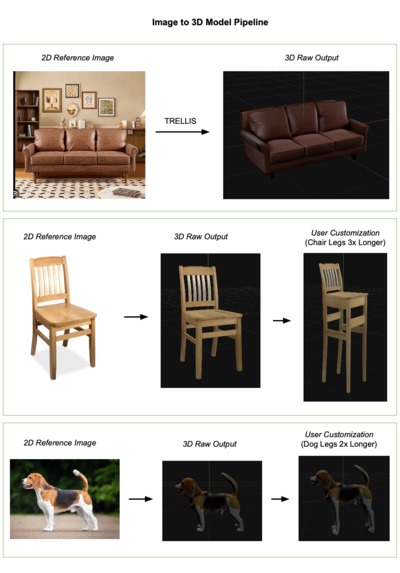

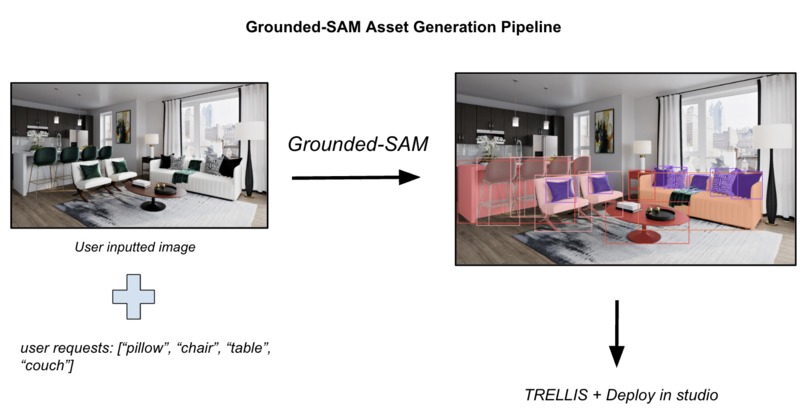

Overview of the pipeline used in Voxal to convert user-supplied image data into an editable 3D model.

-

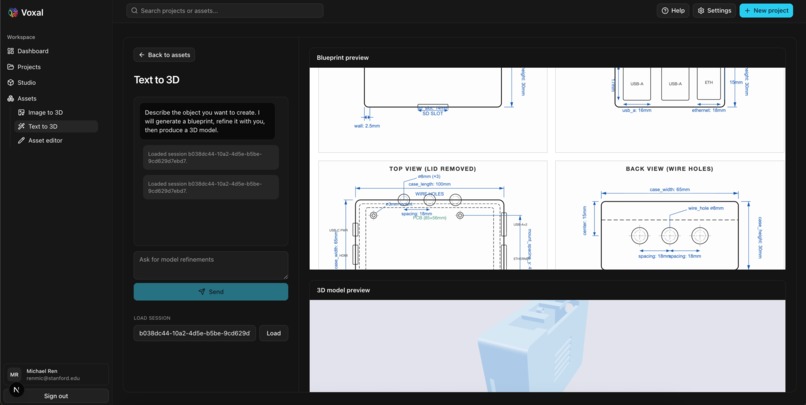

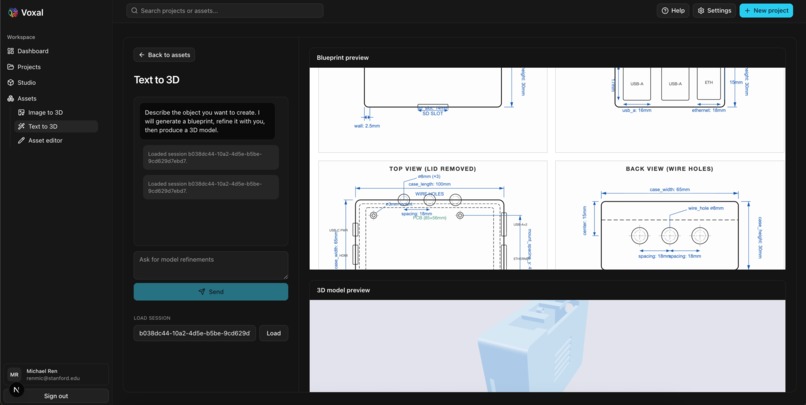

Prompt: "Design a raspberry pi case"

-

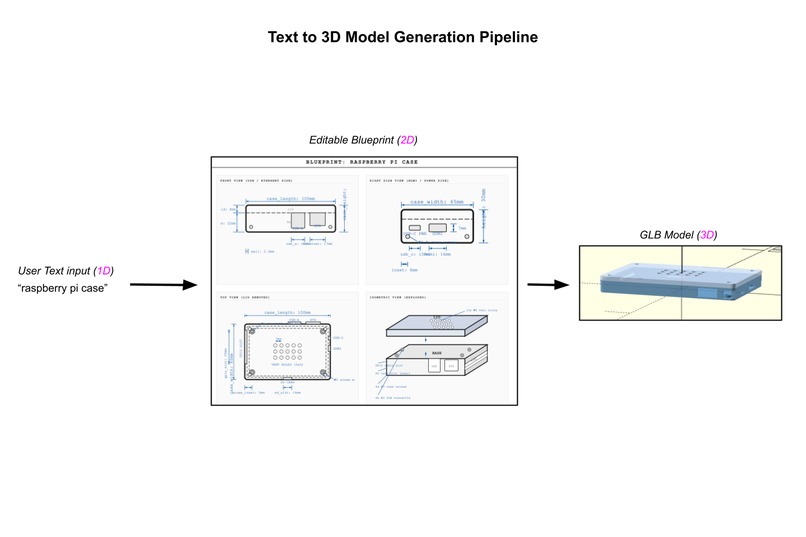

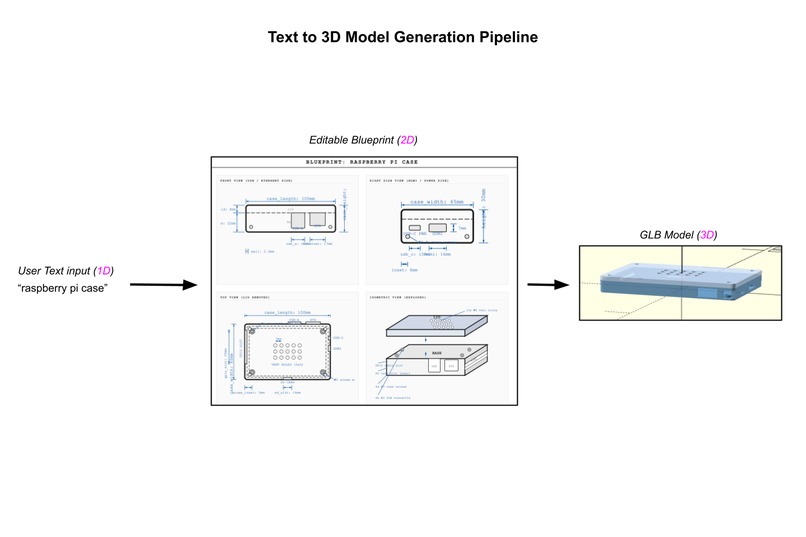

Overview of the pipeline used in Voxal to convert user text into a fully customizable, accurate, and precise 3D model.

-

Segmentation analysis of the Grounded SAM (Segment Anything Model) used for extracting

Inspiration

Engineers routinely spend hours in CAD software building similar components, or scavenge for suitable models online and then make countless adjustments. It's time for everyone, not only engineers, to be able to explore CAD modeling in an accessible manner. We've waited long enough: it's time to do what Cursor couldn't. It's time for engineers to explore their own version of "vibe-coding" through Voxal.

What It Does

Voxal accepts text descriptions or images and generates fully editable 3D models in .glb format. Users can then refine these models through natural language commands such as "make it taller," "add rounded edges," or "change the texture" and watch their modifications happen in real time. Voxal also isolates all dimensional parameters for components, so that users can manually adjust the models with high precision.

For greater accuracy, we provide a workflow that takes in multiple views of the same object. To make importing media easier, we also provide a workflow that segments objects from a larger image, so that users can select which ones to model in 3D.

How We Built It

Pipeline 1: Image to CAD + Natural Language Editing of CAD

Two-stage pipeline using spatial clustering and Claude Sonnet 4.5:

Stage 1 — GLB Analysis:

- Input: single- or multi-image input, converted to a .glb file via TRELLIS

- Processing: parse raw vertex positions from GLB binary, compute bounding box, slice mesh into 20 Y-axis layers, cluster vertices on XZ plane via greedy connected-components

- Output: structural column map (center, radius, height range, narrow/wide labels) + pre-computed vertex selection masks
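The slicing and clustering steps in Stage 1 can be sketched roughly as follows. This is a minimal illustration, not Voxal's actual code: it assumes the vertices have already been read out of the GLB as `(x, y, z)` tuples, and the function names and the `link_dist` linking threshold are hypothetical, since the write-up does not say how neighbouring vertices are joined.

```python
from collections import defaultdict
from math import hypot


def slice_layers(vertices, n_layers=20):
    """Assign each vertex index to one of n_layers equal-height Y slices."""
    ys = [v[1] for v in vertices]
    y_min, y_max = min(ys), max(ys)
    span = (y_max - y_min) or 1.0  # avoid division by zero for a flat mesh
    layers = defaultdict(list)
    for i, (_, y, _) in enumerate(vertices):
        k = min(int((y - y_min) / span * n_layers), n_layers - 1)
        layers[k].append(i)
    return layers


def cluster_xz(vertices, indices, link_dist):
    """Greedy connected components on the XZ plane.

    Two vertices join the same cluster when a chain of vertices, each within
    link_dist (an assumed threshold) of the next, connects them.
    This naive version is O(n^2) per layer.
    """
    unvisited = set(indices)
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        cluster, frontier = [seed], [seed]
        while frontier:
            a = frontier.pop()
            ax, _, az = vertices[a]
            near = [b for b in unvisited
                    if hypot(vertices[b][0] - ax, vertices[b][2] - az) <= link_dist]
            for b in near:
                unvisited.remove(b)
            cluster.extend(near)
            frontier.extend(near)
        clusters.append(sorted(cluster))
    return clusters


def summarize(vertices, cluster):
    """Center and radius of one cluster, as inputs to a column map entry."""
    xs = [vertices[i][0] for i in cluster]
    zs = [vertices[i][2] for i in cluster]
    cx, cz = sum(xs) / len(xs), sum(zs) / len(zs)
    radius = max(hypot(x - cx, z - cz) for x, z in zip(xs, zs))
    return {"center": (cx, cz), "radius": radius}
```

Clusters that line up across consecutive layers could then be merged into columns with a height range, and each column's vertex indices kept as its pre-computed selection mask.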

Stage 2 — LLM Edit:

- Input: spatial analysis + user's natural language edit instruction

- Processing: single Claude Sonnet 4.5 call maps instruction to structural columns, generates self-contained Python script using compound vertex masks (height range + XZ proximity)

- Output: modified .glb with only targeted vertices transformed
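To make the "compound vertex mask" idea concrete, here is a small sketch of the kind of script Stage 2 might generate. It is an assumption-laden illustration: `column_mask` and `stretch_y` are hypothetical names, and vertices are plain `(x, y, z)` tuples rather than a real GLB buffer.

```python
from math import hypot


def column_mask(vertices, y_range, center_xz, radius):
    """True for vertices inside the height range AND within radius of the column axis."""
    y_lo, y_hi = y_range
    cx, cz = center_xz
    return [
        y_lo <= y <= y_hi and hypot(x - cx, z - cz) <= radius
        for x, y, z in vertices
    ]


def stretch_y(vertices, mask, factor, pivot_y):
    """Scale the selected vertices along Y about pivot_y; leave all others untouched."""
    return [
        (x, pivot_y + (y - pivot_y) * factor, z) if selected else (x, y, z)
        for (x, y, z), selected in zip(vertices, mask)
    ]


# Hypothetical usage: stretch the part of a central column above y = 0.5.
vertices = [(0, 0, 0), (0, 1, 0), (3, 1, 0)]
mask = column_mask(vertices, y_range=(0.5, 2.0), center_xz=(0, 0), radius=1.0)
edited = stretch_y(vertices, mask, factor=2.0, pivot_y=0.0)
```

Combining the two conditions is what keeps an edit local: the vertex at `(3, 1, 0)` is at the right height but too far from the column axis, so it is left alone.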

The edited GLB is rendered via Three.js on our model viewer WebUI.

Pipeline 2: Blueprint-to-OpenSCAD Agent Pipeline

Two-layer agentic workflow using the Claude Agent SDK:

Layer 1 — Blueprint Agent:

- Input: text description

- Output: blueprint.html (inline-SVG orthographic views, CSS custom-property dimensions), blueprint_dimensions.json

- Refinement: resumes the same SDK session with user feedback

Layer 2 — Coding Agent:

- Input: confirmed blueprint files + openscad_docs.json

- Output: model.scad (parametric OpenSCAD), parameters.json

- Refinement: resumes the same SDK session
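As a rough picture of what "parametric OpenSCAD" plus a separate parameters file can look like, the sketch below renders a flat dictionary of dimensions into OpenSCAD variable assignments followed by a body that refers to them by name. The parameter names, values, and the `render_scad` helper are all hypothetical; the real schema of parameters.json is not described here.

```python
def render_scad(parameters, body):
    """Emit OpenSCAD source: one `name = value;` line per parameter, then the body.

    Assumes every value is a plain number, which prints the same in Python
    and OpenSCAD.
    """
    lines = [f"{name} = {value};" for name, value in parameters.items()]
    return "\n".join(lines + ["", body]) + "\n"


# Illustrative dimensions in millimetres (not taken from any real blueprint).
params = {"width": 90, "depth": 60, "height": 25, "wall": 2}

# An open-top box: an outer cube minus an inner cube offset by the wall thickness.
body = (
    "difference() {\n"
    "    cube([width, depth, height]);\n"
    "    translate([wall, wall, wall])\n"
    "        cube([width - 2 * wall, depth - 2 * wall, height]);\n"
    "}"
)

scad_source = render_scad(params, body)
```

Because the geometry only refers to named variables, changing a single dimension means rewriting one assignment line rather than regenerating the model.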

Finally, the OpenSCAD model is compiled and displayed via Three.js on our CAD studio WebUI.

Pipeline 0: Preprocessing — Image Segmentation (Modal)

Remote inference runs on Modal (H100 GPU):

- GroundingDINO (SwinT) — open-vocab object detection. Produces bounding boxes for each class prompt.

- SAM (ViT-H) — segment-anything. Takes each bbox as a prompt, outputs per-object masks.

- NMS deduplicates overlapping detections.

Input: an image with a background and many objects. Output: one entry per detected object, pairing its segment with its class label.
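For readers unfamiliar with the deduplication step, a minimal pure-Python sketch of greedy non-maximum suppression is shown below. It assumes boxes in `(x1, y1, x2, y2)` form, class-agnostic suppression, and an IoU threshold of 0.5; none of these details are confirmed by the pipeline description, and a production pipeline would more likely call a library routine.

```python
def iou(a, b):
    """Intersection-over-union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Return indices of kept boxes, highest score first.

    A box is dropped when it overlaps an already-kept, higher-scoring box
    by more than iou_threshold (an assumed value).
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical detections: the first two boxes cover nearly the same object.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = nms(boxes, scores)
```

The surviving boxes would then be the ones passed to SAM as prompts, so each object gets a single mask.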

Challenges We Ran Into

Building out the logic for using text to edit .glb models was the most challenging part. The initial idea was to turn .glb into a more "programmable CAD language" like OpenSCAD. However, this turned out to be much more difficult than expected. We tried pipelines that turn .glb into OpenSCAD before using natural language to prompt edits of OpenSCAD. This did not perform as well as expected.

The initial plan for the OpenSCAD coding agent involved using various CV algorithms to extract contours from a source image. We experimented with Canny edge detection, bilateral filtering, several blurring algorithms, perspective correction, and depth perception from multiple views of the same object. The time-consuming process of trial-and-error with algorithm parameters, unfortunately, did not help the coding agent get a much better understanding of the geometry of the requested objects.

There was some friction in learning how to use Modal for inference, but it was worth it given the $250 worth of credits and a significant reduction in inference time.

Our Python backends required different Python versions, which made infrastructure very difficult when hosting them side by side.

Accomplishments That We're Proud Of

Voxal is the first public software we know of that makes TRELLIS generation results editable, letting users keep editing 3D models after they have been generated. We enable end-to-end CAD generation and modelling for users with no experience in CAD or design.

Voxal converts text input into an HTML/JSON blueprint and then into a .glb 3D file, which suits parts that require extreme precision, such as 3D-printed parts. This lets users with little to no experience in CAD or design create high-precision pieces.

With our robust set of methods, Voxal can excel in various creative pipelines: interior designers can generate and iterate on furniture pieces instantly, e-commerce platforms can produce product variations on demand, engineers can design hardware components just by typing prompts on a phone, and game developers can rapidly prototype environmental objects. Voxal responds to conversational requests, making 3D modeling as intuitive as describing what you want to a colleague.

On the research side, we'd like to highlight Voxal's potential in generating domain-randomized training assets. Voxal's ability to mass-generate and control objects' dimensional parameters could bulk up the volume of reinforcement learning training data through micro-adjustments in virtually simulated environments. The boost in data could massively accelerate Physical AI research in high-precision domains including manufacturing, autonomous driving, agriculture, healthcare & surgery, and drone operations.

Additionally, our coding agent pipeline closely resembles that of Adam, a startup from the Winter 2025 YC batch. Their open-sourced AI-powered CAD tool also involves prompting coding agents to write OpenSCAD. Instead of generating code directly from text, we add an intermediate step where the agent designs a 2D blueprint in HTML. Having Claude think like a human engineer in this way led to promising improvements. With extra refinements, we believe that our alternative approach can outperform Adam in the future.

Beyond the 2D blueprint step for extra precision, users can also edit the final 3D model output, just as they can with a TRELLIS-generated model.

What We Learned

The biggest lesson learned was definitely coordinating polyglot systems. Managing different frameworks, languages, and runtime environments taught us invaluable lessons about API design, abstraction layers, and integration patterns.

Working with the Claude SDK showed us how quickly AI can help developers master new tools — Claude literally taught us how to use Claude effectively. This meta-learning experience highlighted AI as a development partner, not just a tool.

We deepened our understanding of:

- Agent SDK design and extensibility

- File I/O and agent skill composition

- Infrastructure orchestration across runtimes

- Real-time AI inference optimization

- The limits of computer vision for parametric reconstruction

What's Next for Voxal CAD

We believe that with more optimal visual aids, the OpenSCAD-based coding agent can produce more complex builds. We've not had enough time to explore various CV algorithms that extract critical features from images. Methods like Canny edge detection, bilateral filtering, perspective correction, spline smoothing, and symmetry detection can help a coding agent understand the exact curvatures and overall topology of a given object.

Built With

- better-auth

- claude

- claude-api

- claude-sdk

- fal

- github

- grounded-sam

- javascript

- modal

- next.js

- node.js

- openscad

- postgresql

- prisma

- python

- replicate

- three.js

- trellis
