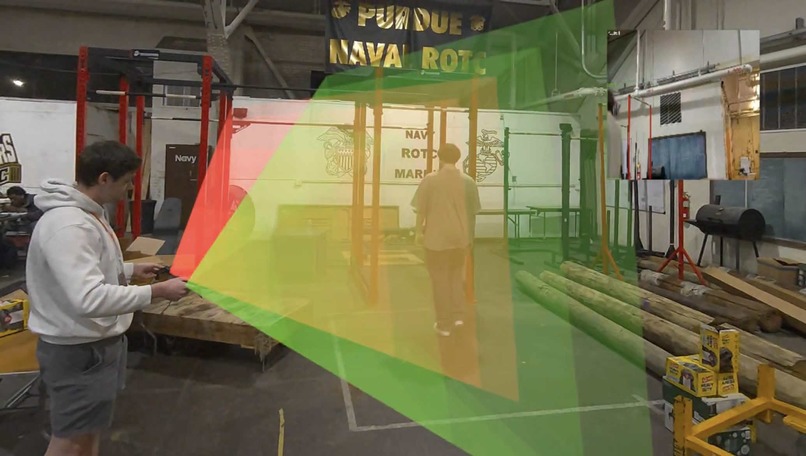

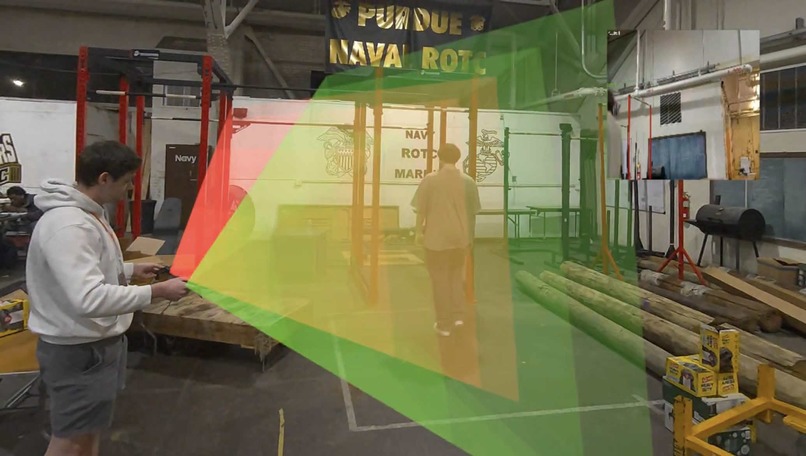

Scanning the AprilTag to swap camera views and see the camera's vision cone.

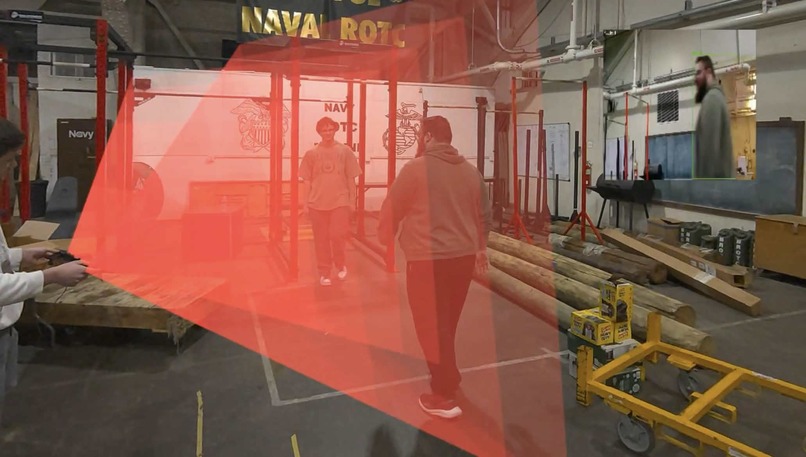

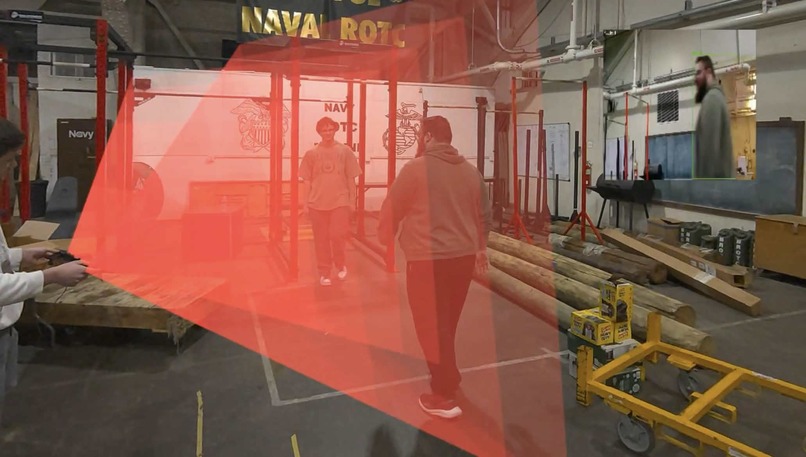

One camera detecting somebody and the other not.

Both cameras detecting people.

Bounding Box from Human Detection Model

See the feed of the camera whose AprilTag you scanned.

Meta Quest 3

AprilTag

Inspiration

We thought: what kind of mischief could you get up to with AI and augmented reality (AR) technology? For example, somebody could pull off a high-stakes heist by creating a 3D map of an area they were trying to sneak through, including the security system's blind spots. They could use AR to overlay that map on top of the location as they performed their misdeeds.

Enabling theft seemed like a bad premise for a hackathon project. Then we realized: any security-breaching technology can be applied defensively. Why not make a system like this for auditing purposes?

What it does

VoidView is an XR audit and remote-operation platform for finding and analyzing vulnerabilities in your security system. Using the Meta Quest 3 mixed reality headset, it visualizes camera vision cones and lets you remotely ‘jump’ into and take control of a camera, all within a user-friendly, wireless (standalone) app.

How we built it

Our stack spans a variety of technologies, matching our strange fusion of reality and the virtual world. Our original plan was to run our YOLO model on an NVIDIA Jetson Orin Nano, using CUDA for edge inference, but that didn’t pan out due to unforeseen issues with our JetPack install (NVIDIA’s Jetson software stack).

We used the Unity game engine to develop and deploy our XR application to the Meta Quest 3. We also run a server (which would have been on the Jetson in our ideal world) that is connected to the cameras and runs YOLO inference. Alongside the WebRTC channels that carry each camera's view, the server exposes one more channel that reports, whenever the state changes, whether a human is in view of each camera. The mixed reality application uses that signal to change the color of the camera’s vision cone in real time. Our server is written in Python, using Ultralytics’s YOLO, OpenCV, and aiortc/aiohttp.
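The "send only on changes" behavior of the detection channel can be sketched in a few lines of plain Python. This is a minimal illustration, not our actual server code: the `PresenceNotifier` class, the camera IDs, and the JSON message shape are hypothetical, and the detector output is assumed to have already been reduced to a list of class names per frame (stock COCO-trained YOLO models label people as `person`).

```python
import json
from typing import Dict, Iterable, Optional

PERSON_LABEL = "person"  # class name for people in COCO-trained models


class PresenceNotifier:
    """Tracks per-camera human presence and reports only state changes.

    Hypothetical helper: the message format is an assumption, not the
    format used by the real server.
    """

    def __init__(self) -> None:
        # Last reported state per camera; missing key means "never reported".
        self._last: Dict[str, bool] = {}

    def update(self, camera_id: str, labels: Iterable[str]) -> Optional[str]:
        """Return a JSON message if presence changed, otherwise None."""
        present = any(label == PERSON_LABEL for label in labels)
        if self._last.get(camera_id) == present:
            return None  # unchanged, so nothing is sent over the channel
        self._last[camera_id] = present
        return json.dumps({"camera": camera_id, "human": present})


if __name__ == "__main__":
    notifier = PresenceNotifier()
    frames = [["chair"], [], ["person", "chair"], ["person"], []]
    for labels in frames:
        message = notifier.update("cam0", labels)
        if message is not None:
            # In a real server this string would go out on the data channel.
            print(message)
```

The first frame for a camera always produces a message, so a newly connected headset learns the initial state; after that, traffic is proportional to how often people enter or leave the view rather than to the frame rate.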

AprilTags are used to select and identify IoT devices for analysis. Each tag also gives us the device's orientation (which sets the direction of its view cone) and serves as the trigger for ‘jumping’ into that camera’s view.
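The geometry behind turning a tag's orientation into a view cone can be sketched as follows. Our headset app is built in Unity, so this Python version only illustrates the math. It assumes the tag pose arrives as a unit quaternion `(x, y, z, w)`, that the camera looks along the tag's local +Z axis, and that the cone is described by a half-angle and a maximum range; all function names and parameter values here are hypothetical.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def rotate_by_quaternion(q: Sequence[float], v: Vec3) -> Vec3:
    """Rotate vector v by the unit quaternion q = (x, y, z, w)."""
    x, y, z, w = q
    vx, vy, vz = v
    # t = 2 * cross(q_xyz, v)
    tx = 2.0 * (y * vz - z * vy)
    ty = 2.0 * (z * vx - x * vz)
    tz = 2.0 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_xyz, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


def in_view_cone(apex: Vec3, forward: Vec3, half_angle_deg: float,
                 max_range: float, point: Vec3) -> bool:
    """Return True if point lies inside the cone starting at apex."""
    dx, dy, dz = (point[0] - apex[0], point[1] - apex[1], point[2] - apex[2])
    dist = math.sqrt(dx * dx + dy * dy + dz * dz)
    if dist == 0.0:
        return True  # the apex itself counts as inside
    if dist > max_range:
        return False
    f_len = math.sqrt(sum(c * c for c in forward))
    cos_angle = (dx * forward[0] + dy * forward[1] + dz * forward[2]) / (dist * f_len)
    return cos_angle >= math.cos(math.radians(half_angle_deg))


if __name__ == "__main__":
    # Tag rotated 90 degrees about the Y axis: local +Z now points along +X.
    s = math.sqrt(0.5)
    forward = rotate_by_quaternion((0.0, s, 0.0, s), (0.0, 0.0, 1.0))
    print(in_view_cone((0.0, 0.0, 0.0), forward, 30.0, 5.0, (2.0, 0.0, 0.0)))
```

Comparing cosines avoids calling `acos` per point, which matters little here but keeps the check cheap if it is evaluated for many points when mapping blind spots.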

Challenges we ran into

One of the first problems we ran into was USB bus bandwidth. Real security systems have hundreds if not thousands of cameras connected to one system. We wanted to mimic that as closely as we could, so we aimed for three cameras. However, that proved to be too many for any of our machines, so we cut it down to two cameras to demo the features.
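A rough back-of-the-envelope calculation shows how quickly a shared USB bus can fill up. The numbers below are assumptions for illustration only, not measurements from our setup: uncompressed 640x480 video at 30 fps with 2 bytes per pixel (YUY2), all cameras on a single USB 2.0 high-speed bus (480 Mbit/s nominal), and roughly 80% of that bus usable for streaming transfers.

```python
def stream_mbps(width: int, height: int, fps: int,
                bytes_per_pixel: float = 2.0) -> float:
    """Raw video bandwidth in megabits per second (no compression)."""
    return width * height * bytes_per_pixel * fps * 8 / 1e6


def cameras_that_fit(budget_mbps: float, per_camera_mbps: float) -> int:
    """How many identical streams fit inside the given bus budget."""
    return int(budget_mbps // per_camera_mbps)


if __name__ == "__main__":
    # Assumed figures, see the paragraph above.
    usb2_nominal_mbps = 480.0
    usable_fraction = 0.8
    per_camera = stream_mbps(640, 480, 30)
    budget = usb2_nominal_mbps * usable_fraction
    print(f"per camera: {per_camera:.1f} Mbit/s")
    print(f"budget:     {budget:.1f} Mbit/s")
    print(f"cameras:    {cameras_that_fit(budget, per_camera)}")
```

Under these assumptions each stream needs about 147 Mbit/s, so a third camera would not fit. Real webcams may use compressed formats such as MJPEG or reserve more bandwidth than they actually use, so the practical limit on a given machine can differ in either direction.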

Our next problem was dependency issues, mainly between CUDA and torch, when trying to run a YOLO model on our NVIDIA Jetson Orin Nano. The Jetson would have been nice to use as our server, but the fix appeared to require reflashing the entire OS on it, so we used one of our laptops as the server instead.

Our iteration time in Unity was a huge weak point. Mixed Reality is difficult to simulate, and compilation took a long time even on a beefy workhorse laptop.

Accomplishments that we're proud of

We implemented many of the features we initially set out to build, even after tackling all of the problems mentioned above. Scoping a project correctly for a hackathon is difficult, and we feel that as a group we chose a scope that was well within our grasp yet still taught us a lot.

What we learned

Most of us are purely software people, so a hardware event was new to all of us. Developing for the Meta Quest was a new experience, and so was doing something large and serious with an embedded system, even if at the end of the day we didn’t go with our embedded environment (the Jetson). We also had gaps in our knowledge of WebRTC, which we chose for networking, and that led to some confusion during implementation.

What's next for VoidView

We want VoidView to support more devices, including smart locks (access logging), motion detectors (movement heatmaps and trend analysis), and more. Beyond dedicated security devices, things like lights and smart thermostats could also be integrated to show their effects on a security system: lights could reveal how visible security devices are, or how darkness and glare affect cameras, and a smart thermostat's temperature changes could indicate that someone is in the room. Adding more motion features to create a smart security system could be an interesting path for VoidView.

We also want to improve our existing camera features, for example by making our object detection model customizable and by adding servos to rotate the cameras remotely.

Another idea for expansion is to support technologies beyond those used for viewing, like lights and cameras. For example, you could use the system to access speakers remotely and broadcast your voice from the microphone inside the headset.

Our easiest and ideal next change is to move the server to an edge device (any of the NVIDIA Jetsons) for its CUDA capabilities.
