VOID_TERMINAL: Gemini Edition

Random encounters. Make the right choice! Fight, flee or trade.

Throw a dance party to motivate your crew.

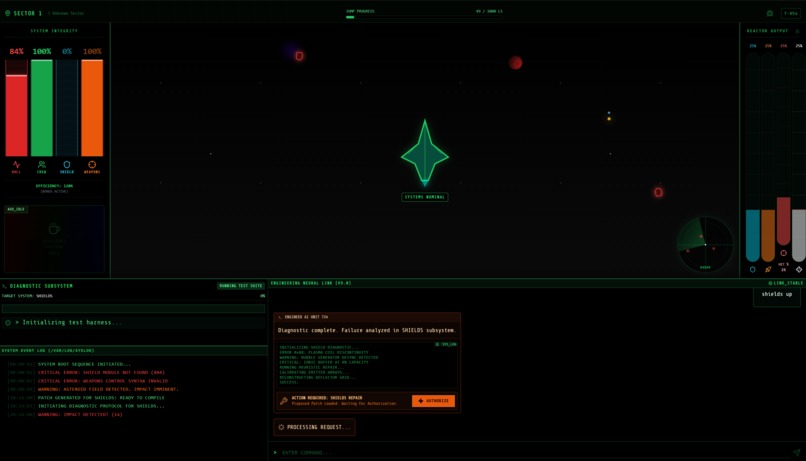

Repair shields, weapons and hull. Manage the energy from the reactor.

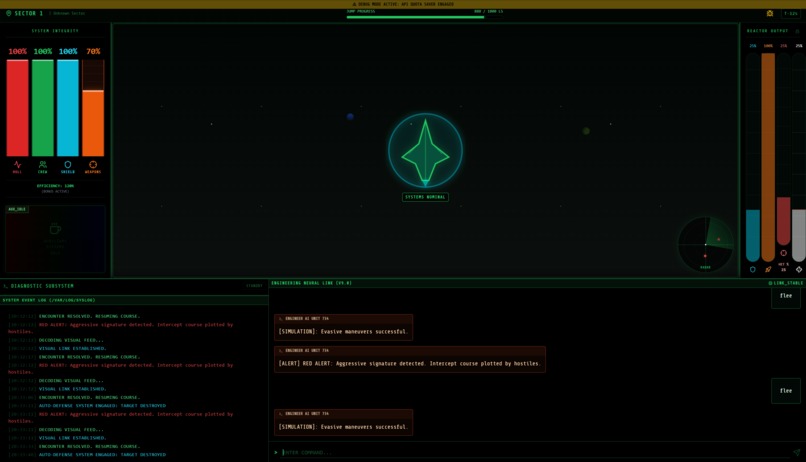

Use simulation mode to enjoy the game even without Gemini.

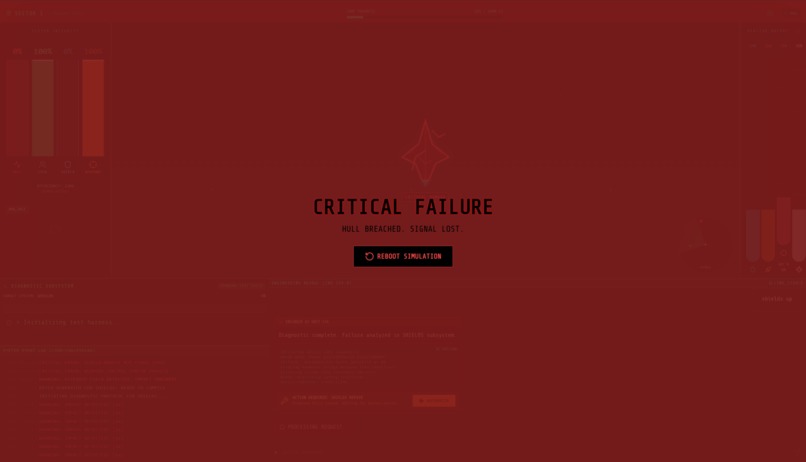

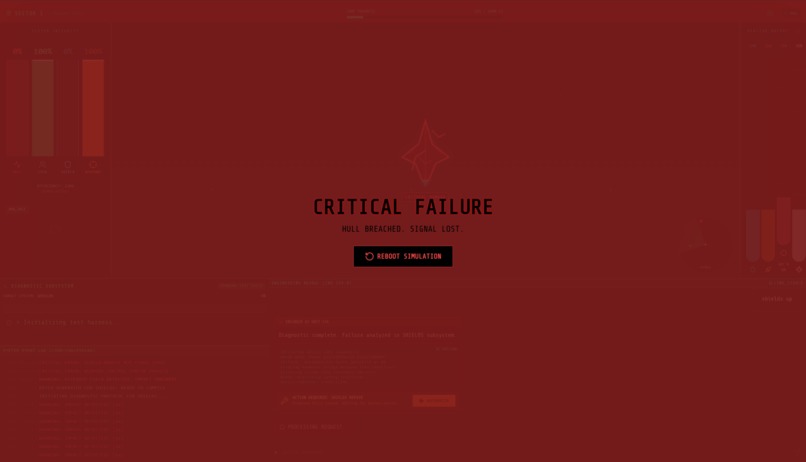

If your hull breaks, your journey is over. Be smart and use your AI.

Inspiration

I grew up on the tension of sci-fi worlds: the limitless imagination of text adventures like Zork, roguelike games such as FTL, and universes like Star Wars and Star Trek. But in traditional games, the "computer" is just a script. It has a limited set of responses and can't truly improvise.

With the release of Gemini 3 and its multimodal capabilities, I asked myself: What if the ship's computer was actually intelligent?

I wanted to recreate the feeling of being the captain of a starship, ordering "Tea, Earl Grey, Hot" from an AI that understands context. I didn't want just a chatbot, though. I wanted a Game Master that controls the physics of the world, generates the visuals of the aliens I encounter, and synthesizes the sounds of the ship, all in real time.

What it does

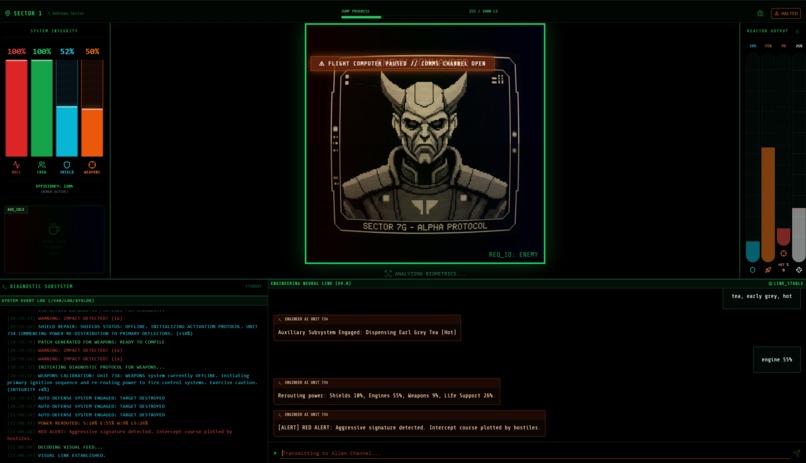

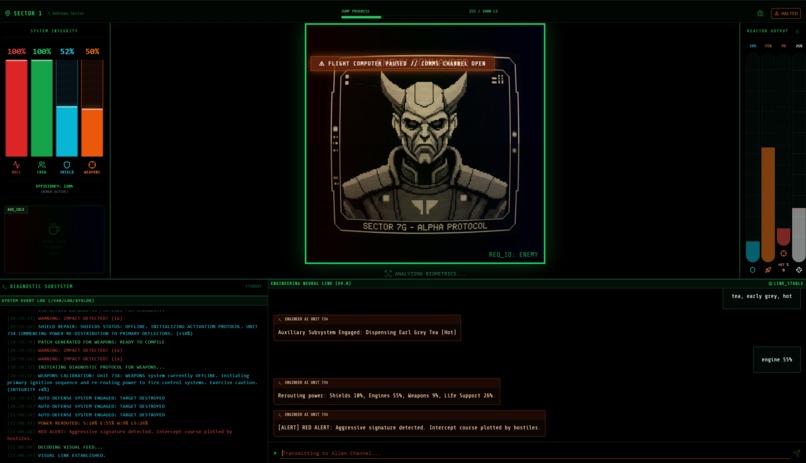

VOID_TERMINAL is a single-page sci-fi survival game disguised as a retro-futuristic CRT terminal. You are the Captain, in command of the USS Reaction, a ship falling apart in deep space. Gemini plays "Unit 734", the ship's computer. The AI isn't just a text generator; it is an agent. It monitors the ship's state (Hull, Shields, Crew, Power) and responds to your natural language commands.

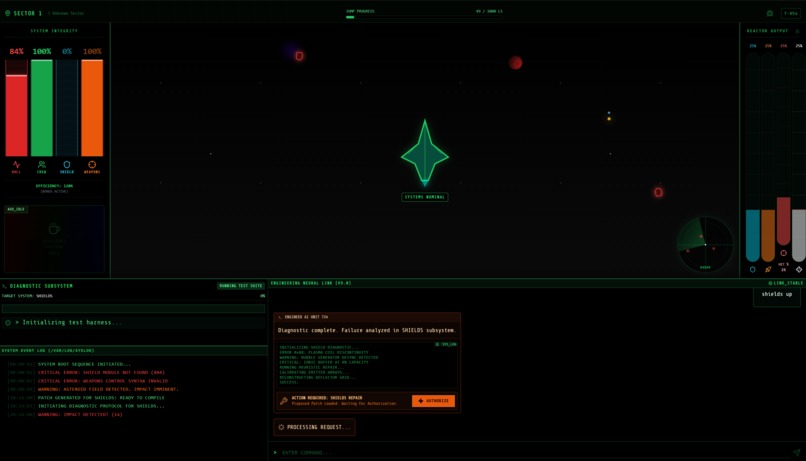

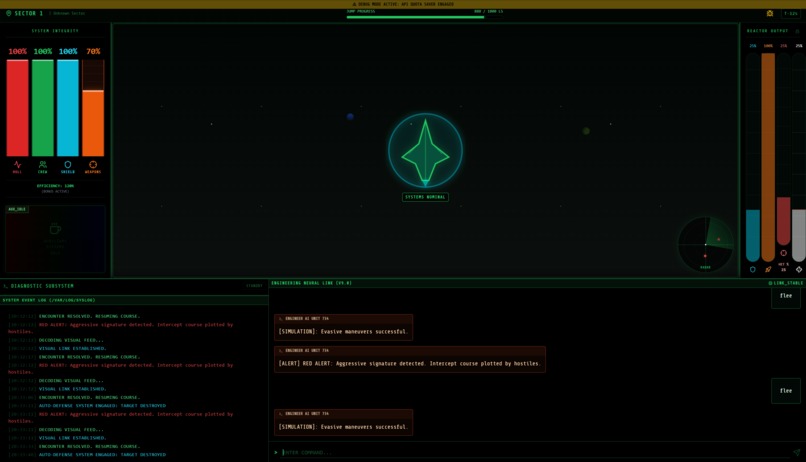

Survival Mechanics: You must reach the Jump Point (1000 Light Seconds away) while managing power distribution, repairing hull breaches via "software patches" and fending off enemies.

Multimodal Immersion:

- Vision: When radar blips appear, the AI generates pixel-art visualizations of the aliens using gemini-2.5-flash-image.

- Video: If you ask for a coffee break or a holodeck simulation, the app generates retro-style video loops using veo-3.1, or falls back to generating images with gemini-2.5-flash-image if the user can't use Veo.

- Audio: The AI announces actions using gemini-2.5-flash-preview-tts and plays procedural sound effects generated by the Web Audio API.

How we built it

The project is built with React 19 and Tailwind CSS, using Gemini 3 as the brain.

The Brain (Gemini 3 Flash): The entire game state (health percentages, sector info) is injected into the system prompt. Dedicated function tools (reroute_power, generate_patch, resolve_encounter) let the LLM trigger game code. When you say "Full power to shields!", Gemini doesn't just reply "Okay"; it calls the function that updates the shipState React state.
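To make the tool-calling idea concrete, here is a minimal sketch of how a function call returned by the model could be mapped onto a pure state update. Only the tool names reroute_power and generate_patch come from the project; the `ShipState` fields, the `ToolCall` shape, the argument names (`system`, `amount`, `repair`) and the helper `applyToolCall` are assumptions made for illustration, not the game's actual code.

```typescript
// Hypothetical ship state; the field names are assumptions for illustration.
interface ShipState {
  hull: number;    // integrity, 0-100
  shields: number; // power allocation, 0-100
  weapons: number; // power allocation, 0-100
  engines: number; // power allocation, 0-100
}

type PowerSystem = "shields" | "weapons" | "engines";

// Simplified shape of a function call returned by the model (assumed).
interface ToolCall {
  name: string;
  args: Record<string, unknown>;
}

type ToolHandler = (state: ShipState, args: Record<string, unknown>) => ShipState;

const clamp = (value: number): number => Math.min(100, Math.max(0, value));

const isPowerSystem = (value: unknown): value is PowerSystem =>
  value === "shields" || value === "weapons" || value === "engines";

// Sets one power allocation; malformed arguments leave the state untouched.
const reroutePower: ToolHandler = (state, args) => {
  const system = args["system"];
  const amount = Number(args["amount"]);
  if (!isPowerSystem(system) || !Number.isFinite(amount)) return state;
  const next: ShipState = { ...state };
  next[system] = clamp(amount);
  return next;
};

// Repairs the hull by the requested amount, capped at 100.
const generatePatch: ToolHandler = (state, args) => {
  const repair = Number(args["repair"]);
  if (!Number.isFinite(repair)) return state;
  return { ...state, hull: clamp(state.hull + repair) };
};

const toolHandlers = new Map<string, ToolHandler>([
  ["reroute_power", reroutePower],
  ["generate_patch", generatePatch],
]);

// Applies a model-issued tool call; unknown tools are ignored.
function applyToolCall(state: ShipState, call: ToolCall): ShipState {
  const handler = toolHandlers.get(call.name);
  return handler ? handler(state, call.args) : state;
}
```

Because `applyToolCall` is pure, it can be passed straight to a React state setter, for example `setShipState(prev => applyToolCall(prev, call))`, and unknown or malformed calls simply return the previous state.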

The Eyes (Gemini 2.5 Image & Veo): A dynamic visual pipeline is implemented. When an encounter triggers, the image model is prompted for "retro-futuristic pixel art on a green CRT." For miscellaneous actions, the video generation model is used to create atmospheric loops.

The Ears (Web Audio API & TTS): A custom AudioService uses oscillators and gain nodes to generate procedural space drones, alarms, and laser sounds without external MP3 assets. Gemini's TTS output provides voice feedback.

The Physics: A custom game loop runs at 50ms intervals to handle radar physics, asteroid collisions and resource decay, which the AI then reacts to.
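A fixed-interval loop like this is easiest to reason about when each tick is a pure function of the previous state. The sketch below shows one such step under stated assumptions: the `WorldState` fields, the units, and the energy decay rate are all invented for illustration; only the 50 ms interval and the kinds of effects (asteroid collisions, resource decay) come from the description above.

```typescript
const TICK_MS = 50; // tick interval named in the text

// Hypothetical asteroid: distance ahead of the ship and damage on impact.
interface Asteroid {
  distance: number; // light seconds
  damage: number;   // hull points
}

// Hypothetical world state; field names and units are assumptions.
interface WorldState {
  distanceToJump: number; // light seconds remaining
  speed: number;          // light seconds per second
  energy: number;         // reactor reserve, 0-100
  hull: number;           // integrity, 0-100
  asteroids: Asteroid[];
}

const ENERGY_DECAY_PER_SECOND = 1; // assumed decay rate

// Advances the world by one tick and returns a new state object.
function tick(world: WorldState, dtMs: number = TICK_MS): WorldState {
  const dt = dtMs / 1000;
  const travelled = world.speed * dt;

  let hull = world.hull;
  const asteroids: Asteroid[] = [];
  for (const rock of world.asteroids) {
    const distance = rock.distance - travelled;
    if (distance <= 0) {
      // The ship reached the asteroid this tick: apply damage and drop it.
      hull = Math.max(0, hull - rock.damage);
    } else {
      asteroids.push({ ...rock, distance });
    }
  }

  return {
    ...world,
    distanceToJump: Math.max(0, world.distanceToJump - travelled),
    energy: Math.max(0, world.energy - ENERGY_DECAY_PER_SECOND * dt),
    hull,
    asteroids,
  };
}
```

In a React component, a step like this could be driven by `setInterval(() => setWorld(tick), TICK_MS)` inside an effect, with the interval cleared on unmount.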

Challenges we ran into

Hallucination vs. Game State: Early on, the AI would claim it had fixed the ship, but the health bar wouldn't move. I solved this by strictly enforcing Function Calling. The AI is not allowed to say it fixed something unless it successfully calls the generate_patch tool, which triggers the actual logic.

API Quotas & Latency: Generating video and high-quality responses takes time and quota. A Fallback Simulation Mode was implemented to account for that. If the API throws a 429 (Quota Exceeded) error, the game automatically switches to a local heuristic "Debug" or "Simulation" Mode that mimics the AI's behavior, ensuring the player is never stuck staring at a loading screen.
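The fallback can be sketched as a thin wrapper around whatever function talks to the model. Everything named here is hypothetical: `askComputer`, `simulateReply`, the `ComputerReply` shape, and especially the assumption that a quota failure surfaces as an error object with `status === 429` (the real SDK's error shape may differ). The keyword heuristic is a stand-in for the game's local simulation logic.

```typescript
interface ComputerReply {
  text: string;
  simulated: boolean; // true when the local fallback produced the reply
}

// Any function that sends a command to the model and resolves with its text.
type AskModel = (command: string) => Promise<string>;

// Assumption: quota errors carry a numeric `status` of 429.
function isQuotaError(err: unknown): boolean {
  return (
    typeof err === "object" &&
    err !== null &&
    (err as { status?: unknown }).status === 429
  );
}

// Very small local heuristic that mimics the ship's computer offline.
function simulateReply(command: string): string {
  const text = command.toLowerCase();
  if (text.includes("shield")) return "[SIM] Rerouting power to shields.";
  if (text.includes("repair") || text.includes("patch")) {
    return "[SIM] Applying hull patch.";
  }
  return "[SIM] Command acknowledged.";
}

// Tries the model first; on a quota error, degrades to the local simulation.
async function askComputer(
  command: string,
  askModel: AskModel
): Promise<ComputerReply> {
  try {
    return { text: await askModel(command), simulated: false };
  } catch (err) {
    if (!isQuotaError(err)) throw err; // other failures are not masked here
    return { text: simulateReply(command), simulated: true };
  }
}
```

Returning a `simulated` flag lets the UI show that the game has switched modes instead of silently swapping the source of the replies.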

Audio Decoding: Gemini's audio models return raw PCM data. I had to write a custom buffer decoder to convert that raw data into an AudioBuffer playable by the browser's AudioContext.
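The core of such a decoder is converting integer samples into the floating-point range that Web Audio expects. The sketch below assumes the PCM is 16-bit, signed, little-endian and mono; the write-up only says "raw PCM", so the bit depth, byte order and channel count are assumptions, and `pcm16ToFloat32` is a hypothetical helper name.

```typescript
// Converts raw 16-bit signed little-endian PCM bytes (an assumed format)
// into Float32 samples in the range [-1, 1).
function pcm16ToFloat32(bytes: Uint8Array): Float32Array {
  const sampleCount = Math.floor(bytes.byteLength / 2);
  // Respect byteOffset so views into a larger buffer decode correctly.
  const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  const samples = new Float32Array(sampleCount);
  for (let i = 0; i < sampleCount; i++) {
    samples[i] = view.getInt16(i * 2, true) / 32768; // true = little-endian
  }
  return samples;
}

// Browser-side usage (not executed here; sampleRate must match what the
// API actually returns, which this sketch does not assume):
//   const buffer = audioContext.createBuffer(1, samples.length, sampleRate);
//   buffer.copyToChannel(samples, 0);
//   const source = audioContext.createBufferSource();
//   source.buffer = buffer;
//   source.connect(audioContext.destination);
//   source.start();
```

Dividing by 32768 maps the most negative sample to exactly -1 and keeps the largest positive sample just below 1, which avoids clipping at either end.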

Accomplishments that we're proud of

The "Simulation Mode" Fail-safe: If the AI goes offline (quota/network), the game seamlessly degrades into a playable "offline simulation" with mocked responses, preserving the user experience.

Atmosphere: The CSS CRT effects (scanlines, flicker, glow) combined with the procedural audio engine create a deeply immersive "lo-fi sci-fi" vibe that feels cohesive.

True Agentic Gameplay: Seeing the health bars move and the ship speed change based purely on natural language conversation ("Get us out of here, fast!") feels like magic.

What I learned

Context is King: Feeding the exact JSON state of the ship into the AI's system prompt was critical. The AI needs to know exactly how close to death you are to respond with the right level of panic.
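As a rough illustration of this point, the state can be serialized into the system prompt on every turn. The `ShipSnapshot` fields mirror the stats mentioned earlier (Hull, Shields, Crew, Power) plus a sector label; the exact field names, the `buildSystemPrompt` helper, and the prompt wording are assumptions, not the project's real prompt.

```typescript
// Hypothetical snapshot of the values the AI needs to see each turn.
interface ShipSnapshot {
  hull: number;
  shields: number;
  crew: number;
  power: number;
  sector: string;
}

// Builds a system prompt that embeds the current state as JSON.
function buildSystemPrompt(ship: ShipSnapshot): string {
  return [
    'You are "Unit 734", the computer of the USS Reaction.',
    "Current ship state (JSON, authoritative):",
    JSON.stringify(ship),
    "Change ship state only through the provided tools, and match your tone to how critical the state is.",
  ].join("\n");
}

// Example: a badly damaged ship produces a prompt the model can panic about.
const prompt = buildSystemPrompt({
  hull: 12,
  shields: 0,
  crew: 3,
  power: 40,
  sector: "Kepler Drift",
});
```

Rebuilding the prompt from the live state each turn, rather than relying on the conversation history, keeps the model's view of the ship from drifting away from what the UI shows.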

Latency Masking: In game design, waiting 2 seconds for an AI response feels like an eternity. I learned to use "decoding..." animations and procedural sound effects to fill the void while the LLM "thinks," making the delay feel like part of the ship's retro technology.

Multimodal orchestration: Coordinating text, audio and video generation requires careful state management to ensure they don't overlap or desync.

What's next for VOID_TERMINAL: Gemini Edition

Voice Command (Gemini Live): I want to integrate the Gemini Live API so players can shout commands into their microphone instead of typing, creating a true "Star Trek" bridge experience.

Persistent Universe: Using the AI to generate unique sectors, planet names, and lore that persist between runs, effectively creating an infinite, AI-generated universe.

Multi-Crew Mode: Allowing a second player to join as the "Tactical Officer," with the AI mediating the state between two different clients.

Built With

- gemini3

- react

- tailwind
