-

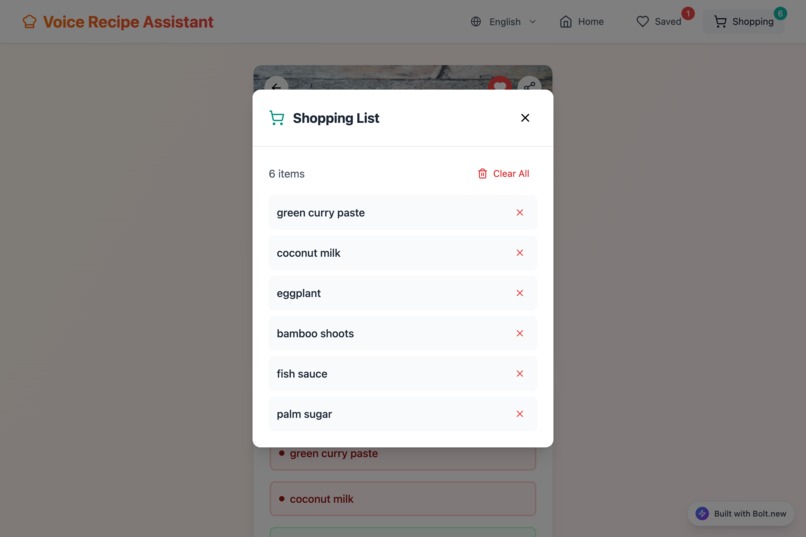

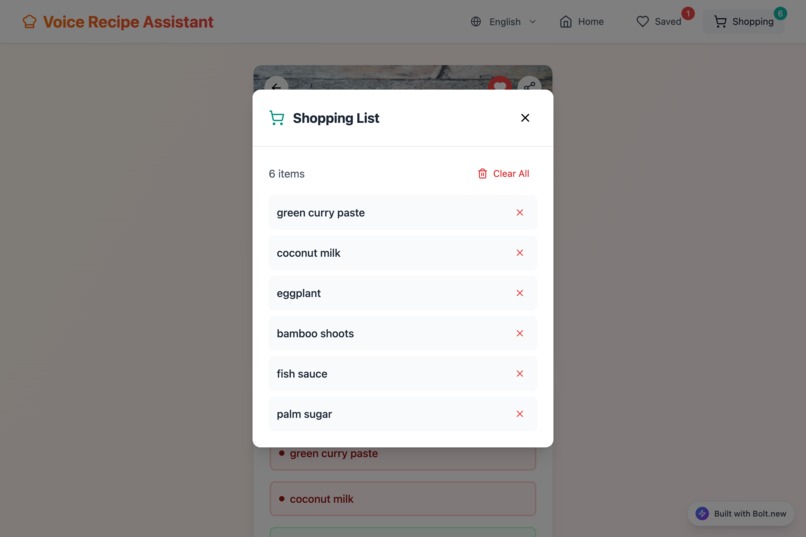

The auto-generated shopping list feature. It lists all needed ingredients for a recipe, simplifying meal prep.

-

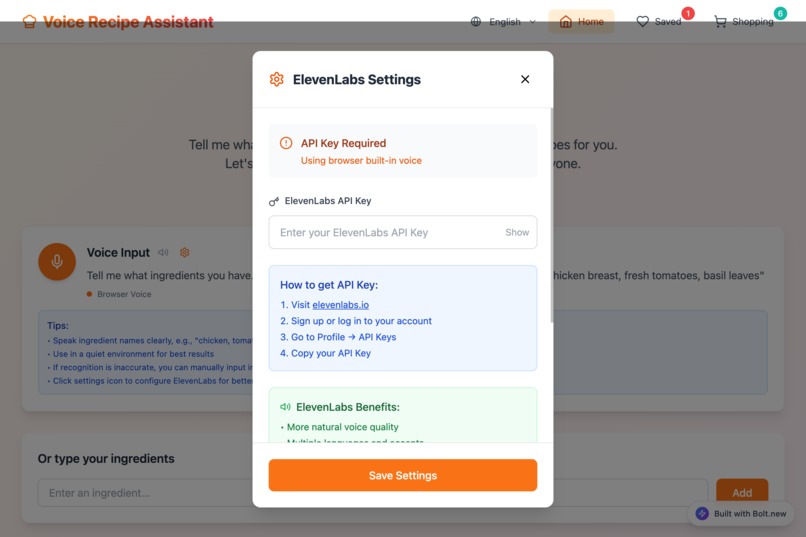

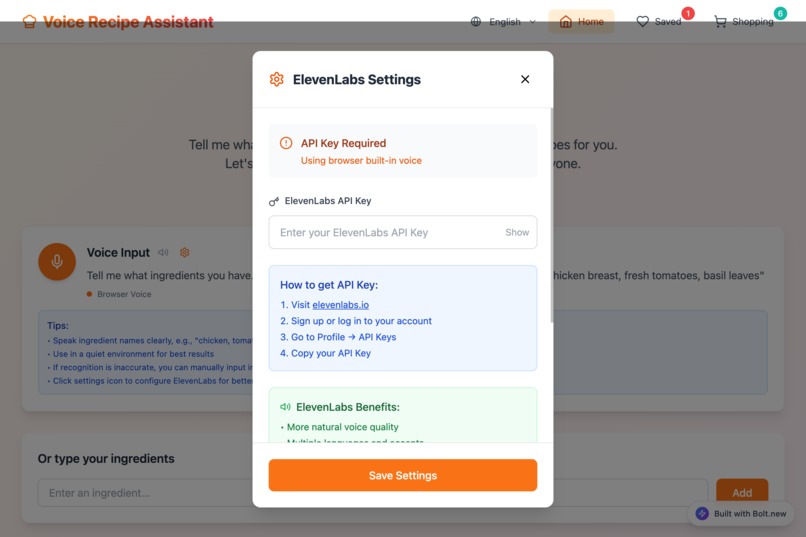

Advanced Voice AI settings. Users can enter an ElevenLabs API key to enhance voice quality.

-

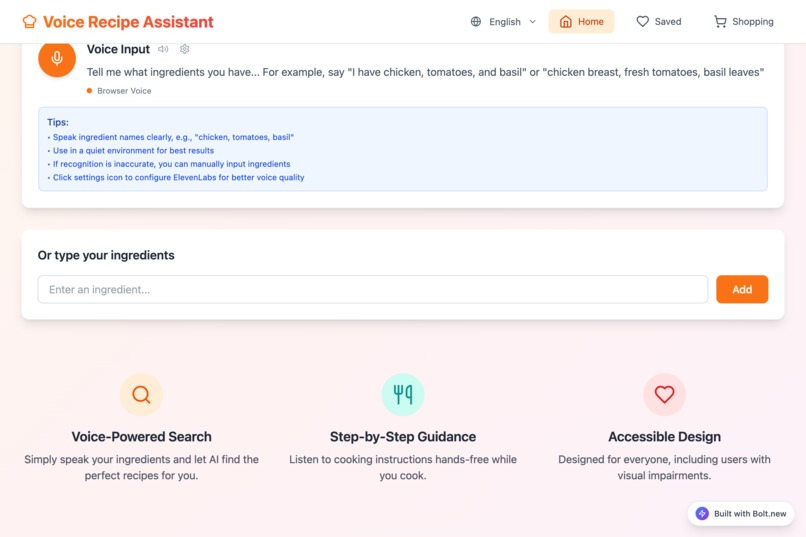

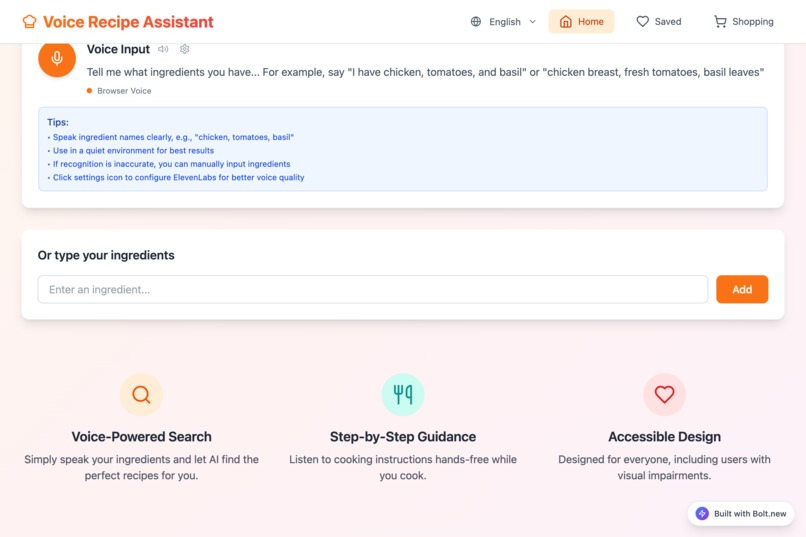

The main interface, showcasing core features like voice input, step-by-step guidance, and accessible design.

-

Showcasing multilingual support. This is the Traditional Chinese interface, reflecting the app's localization.

-

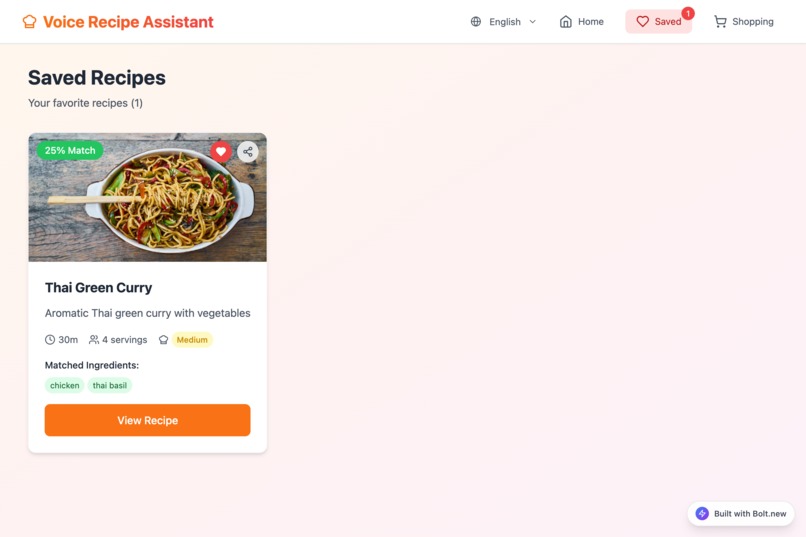

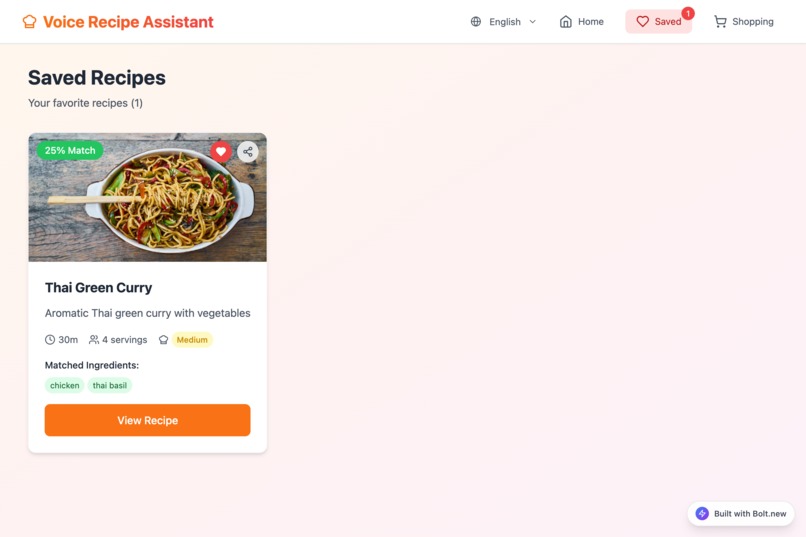

The "Saved Recipes" page, where users can store their favorite recipes for quick access later.

-

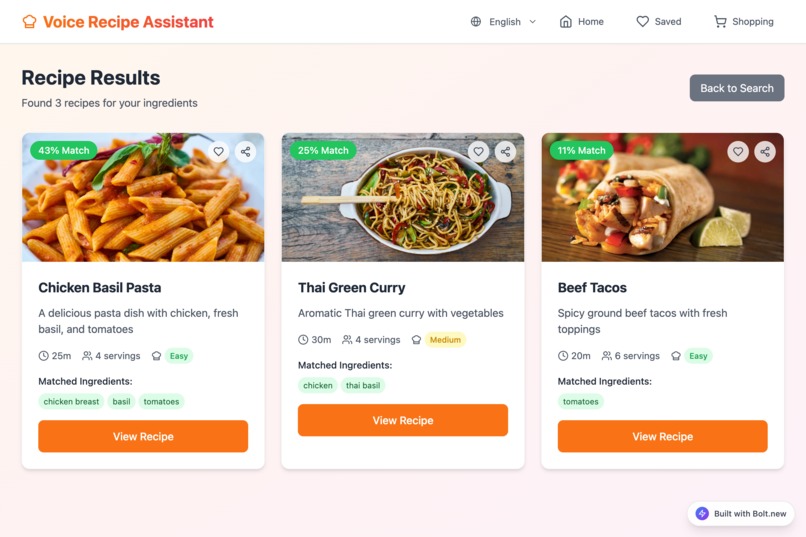

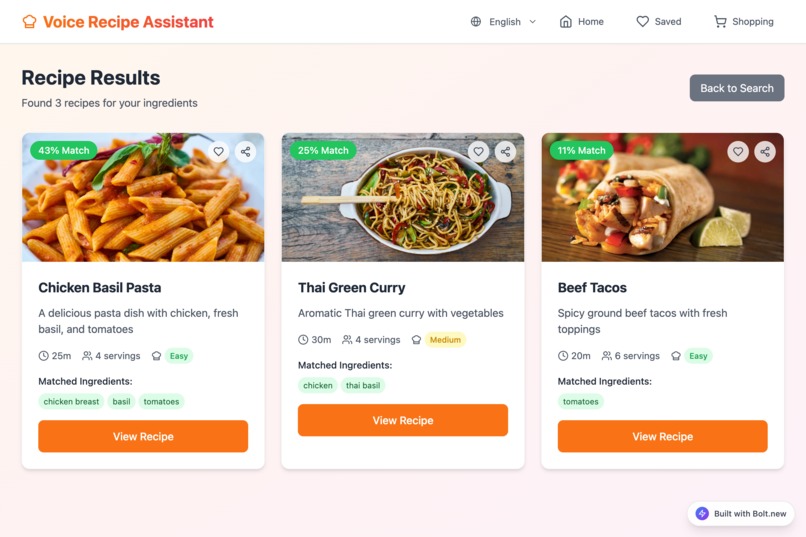

Smart search results page. The system recommends matching recipes as cards based on the user's ingredients.

VoiceChef

Inspiration

The inspiration for VoiceChef comes from a kitchen dilemma we've all faced: your hands are covered in flour or grease, and you need to scroll through a recipe on your phone. Traditional recipe apps are clumsy at exactly these moments.

We wanted to create a more fluid and natural cooking experience. What if you could just talk to your recipe? This idea became even more meaningful when we considered accessibility. For busy parents with their hands full, for culinary enthusiasts who want to immerse themselves in the flow of cooking, and especially for our friends with visual impairments, a voice-only interface is not just a convenience—it's a tool that empowers them with independence and joy. We aim to build a kitchen companion that listens and assists, making the joy of cooking accessible to everyone.

What it does

VoiceChef is a fully voice-driven AI recipe assistant that transforms your scattered ingredients into delicious meals. Here’s how it works:

- Voice-Input Ingredients: Simply say what you have in your fridge, like, "I have chicken, tomatoes, and onions."

- Smart Recipe Matching: VoiceChef analyzes your ingredients and searches the Spoonacular recipe database to recommend the best-matching recipes.

- Step-by-Step Voice Guidance: Once you select a recipe, VoiceChef acts like a true sous-chef, guiding you through each step with clear, audible instructions.

- Voice Command Control: You can control the flow at any time with voice commands like "next step," "repeat the last step," or "what ingredients are needed?"—all without touching your screen.

It solves the inconvenience of handling a phone in the kitchen and provides a fully accessible cooking solution for the visually impaired.
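The voice command flow described above can be sketched as a small pure function that turns a transcript into a spoken reply. This is an illustrative TypeScript sketch, not VoiceChef's actual code: the names `CookingSession`, `parseCommand`, and `respond` are hypothetical, and the keyword matching is an assumed, deliberately simple strategy. It treats "repeat the last step" as re-reading the step that was most recently spoken.

```typescript
// Hypothetical types and names, for illustration only.
type Command = "next" | "repeat" | "ingredients" | "unknown";

interface CookingSession {
  steps: string[];
  ingredients: string[];
  current: number; // index of the step most recently read aloud
}

interface Reply {
  session: CookingSession;
  speech: string; // text to hand to a text-to-speech engine
}

// Map a recognized transcript to a command using simple keyword matching.
function parseCommand(transcript: string): Command {
  const text = transcript.toLowerCase();
  if (/\bnext\b/.test(text)) return "next";
  if (/\b(repeat|again)\b/.test(text)) return "repeat";
  if (/\bingredients?\b/.test(text)) return "ingredients";
  return "unknown";
}

function describeStep(session: CookingSession): string {
  return `Step ${session.current + 1}: ${session.steps[session.current]}`;
}

// Apply a transcript to the session and return the new session plus the reply.
// The input session is never mutated.
function respond(session: CookingSession, transcript: string): Reply {
  switch (parseCommand(transcript)) {
    case "next": {
      if (session.current >= session.steps.length - 1) {
        return { session, speech: "That was the final step. Enjoy your meal!" };
      }
      const next = { ...session, current: session.current + 1 };
      return { session: next, speech: describeStep(next) };
    }
    case "repeat":
      return { session, speech: describeStep(session) };
    case "ingredients":
      return { session, speech: `You need ${session.ingredients.join(", ")}.` };
    default:
      return { session, speech: "Sorry, I did not catch that." };
  }
}
```

In a browser, the transcript would come from speech recognition and `speech` would be passed to a text-to-speech call. Keeping the logic pure makes it testable without a microphone.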

How we built it

During this hackathon, we adopted a modern, API-first development approach, using Bolt.new as the core platform for our project. Our tech stack is as follows:

- Voice AI: We integrated the ElevenLabs API to handle all voice-related tasks. Its Speech-to-Text function recognizes the user's ingredients and commands, while its Text-to-Speech function reads out the recipe steps in a natural, fluid voice.

- Recipe Database: We connected to the Spoonacular API, leveraging its powerful search capabilities to accurately find suitable recipes based on the ingredients provided by the user.

- Backend & Integration: Bolt.new served as the central nervous system of our project. It managed the workflow between the frontend and backend APIs, processed data from Spoonacular, sorted recipes based on match relevance, and presented the final results to the user.

- Frontend: We built a clean, responsive web interface to display the text version of the recipe and images of the dish, serving as a visual aid to the voice guidance.
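To make the recipe lookup and relevance sorting concrete, here is a minimal TypeScript sketch. It assumes Spoonacular's `findByIngredients` endpoint and its `usedIngredientCount` and `missedIngredientCount` response fields. The write-up does not say which endpoint or ranking rule the project used, so `buildSearchUrl`, `rankByMatch`, and the tie-breaking rule are hypothetical.

```typescript
// Subset of the fields Spoonacular returns for an ingredient-based search.
interface RecipeMatch {
  id: number;
  title: string;
  image: string;
  usedIngredientCount: number;   // how many of the user's ingredients the recipe uses
  missedIngredientCount: number; // how many extra ingredients the user would need
}

// Build the request URL for an ingredient-based search.
// The apiKey value is a placeholder supplied by the caller.
function buildSearchUrl(ingredients: string[], apiKey: string, limit: number = 10): string {
  const list = ingredients.map((item) => item.trim().toLowerCase()).join(",");
  const params = [
    ["ingredients", list],
    ["number", String(limit)],
    ["apiKey", apiKey],
  ];
  const query = params
    .map((pair) => `${pair[0]}=${encodeURIComponent(pair[1])}`)
    .join("&");
  return `https://api.spoonacular.com/recipes/findByIngredients?${query}`;
}

// Assumed relevance rule: prefer recipes that use more of the user's
// ingredients, and break ties by fewest missing ingredients.
// Returns a new array and leaves the input untouched.
function rankByMatch(recipes: RecipeMatch[]): RecipeMatch[] {
  return recipes.slice().sort(
    (a, b) =>
      b.usedIngredientCount - a.usedIngredientCount ||
      a.missedIngredientCount - b.missedIngredientCount
  );
}
```

The URL would then be passed to `fetch`, and the parsed JSON array handed to `rankByMatch` before rendering the recipe cards.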

Challenges we ran into

Within the tight hackathon schedule, we encountered several challenges:

- Voice Recognition Accuracy: The AI sometimes confused similarly pronounced ingredients, such as "basil" and "hazel." We needed to design a fault-tolerance mechanism, but this was not fully implemented due to time constraints.

- Integrating Multiple APIs: Coordinating two distinct external APIs (one for voice, one for recipe data) to work together seamlessly within the Bolt.new environment required fine-tuning and robust error handling.

- Real-Time Interaction Experience: To make the conversation feel natural, we had to minimize latency. Optimizing API requests and backend processing speed to achieve near-instant voice feedback was a constant goal.

- Time Constraints: This was the biggest challenge. We had to make trade-offs, prioritizing the core voice interaction and recipe-matching features, while listing secondary features like "shopping list generation" as future goals.
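One possible shape for the fault-tolerance mechanism mentioned above is to compare each recognized word against a list of known ingredients and ask for confirmation when the match is close but not exact. The TypeScript sketch below is an assumption, not something we shipped: `editDistance`, `resolveIngredient`, the vocabulary list, and the distance threshold of 2 are all hypothetical. Spelling-based edit distance is only a stand-in here, since confusions such as "basil" and "hazel" are phonetic and would likely need a phonetic comparison to catch reliably.

```typescript
// Levenshtein edit distance computed row by row with dynamic programming.
function editDistance(a: string, b: string): number {
  let prev: number[] = [];
  for (let j = 0; j <= b.length; j++) prev.push(j);
  for (let i = 1; i <= a.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      curr.push(Math.min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost));
    }
    prev = curr;
  }
  return prev[b.length];
}

type Resolution =
  | { status: "exact"; ingredient: string }
  | { status: "confirm"; ingredient: string; prompt: string }
  | { status: "unknown"; heard: string };

// Resolve a recognized word against a known vocabulary.
// Exact hits pass through, near misses trigger a spoken confirmation,
// and anything further away than maxDistance is reported as unknown.
function resolveIngredient(
  heard: string,
  vocabulary: string[],
  maxDistance: number = 2
): Resolution {
  const word = heard.trim().toLowerCase();
  if (vocabulary.indexOf(word) !== -1) {
    return { status: "exact", ingredient: word };
  }
  let best: string | null = null;
  let bestDistance = Infinity;
  for (const candidate of vocabulary) {
    const distance = editDistance(word, candidate);
    if (distance < bestDistance) {
      best = candidate;
      bestDistance = distance;
    }
  }
  if (best !== null && bestDistance <= maxDistance) {
    return { status: "confirm", ingredient: best, prompt: `Did you say '${best}'?` };
  }
  return { status: "unknown", heard: word };
}
```

A "confirm" result would be read back to the user before the ingredient is added, while an "unknown" result could prompt the user to repeat the word.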

Accomplishments that we're proud of

Despite the challenges, we are proud of what we achieved:

- A True "Hands-Free, Voice-On" Experience: We successfully built a functional prototype that allows a user to go from listing ingredients to completing a dish entirely without touching a screen.

- Accessibility-First Design: We prioritized accessibility from the very beginning, rather than as an afterthought. We believe VoiceChef has the potential to bring tangible benefits to the visually impaired community.

- Seamless Tech Integration: In a limited time, we successfully integrated three different services (Bolt.new, ElevenLabs, Spoonacular) and made them work in harmony.

- An Intuitive Interaction Flow: User feedback confirmed that our conversational interface felt natural with almost no learning curve, validating our design approach.

What we learned

This hackathon was a tremendous learning experience:

- First, we gained a deep appreciation for the uniqueness of Voice User Interface (VUI) design. It's fundamentally different from traditional graphical interfaces, demanding clearer commands and more concise feedback.

- Second, we witnessed the development efficiency brought by modern tools. Platforms like Bolt.new and powerful APIs enable a small team to rapidly build complex and meaningful applications in a short amount of time.

- Most importantly, this project reaffirmed the importance of incorporating empathy and inclusivity into product design. A design created to solve a problem for a specific group often ends up benefiting all users.

What's next for VoiceChef

The journey for VoiceChef has just begun, and we are excited about its future:

- Enhance Interaction Accuracy: Add a voice confirmation mechanism (e.g., "Did you say 'basil'?") and allow users to manually correct misidentified ingredients.

- Expand Functionality: Implement a user account system to save favorite recipes and add the originally planned one-click "shopping list" generation feature.

- Support for Multiple Languages: Expand the service from English to more languages like Chinese and Spanish to serve a global user base.

- Smarter Cooking Guidance: Add more interactive commands like "set a timer for ten minutes" or "what's needed for the next step?" and integrate a timer function.

- Develop Native Apps: Build native iOS and Android applications for a more deeply integrated and fluid mobile experience.
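As a rough idea of how the planned timer command could be recognized, the TypeScript sketch below converts a phrase such as "set a timer for ten minutes" into a number of seconds. Nothing here exists in the project yet: `parseTimerCommand`, the small `NUMBER_WORDS` table, and the supported phrasing are assumptions chosen for illustration.

```typescript
// Hypothetical lookup table for spoken numbers; a real one would cover more values.
const NUMBER_WORDS: Record<string, number> = {
  one: 1, two: 2, three: 3, four: 4, five: 5,
  six: 6, seven: 7, eight: 8, nine: 9, ten: 10,
  fifteen: 15, twenty: 20, thirty: 30, forty: 40, sixty: 60,
};

// Return the timer length in seconds, or null if the phrase is not a
// recognizable timer command.
function parseTimerCommand(transcript: string): number | null {
  const match = transcript
    .toLowerCase()
    .match(/timer for (\d+|[a-z]+) (second|minute|hour)s?/);
  if (!match) return null;

  const amountText = match[1];
  const unit = match[2];

  let amount: number | undefined;
  if (/^\d+$/.test(amountText)) {
    amount = parseInt(amountText, 10);
  } else if (Object.prototype.hasOwnProperty.call(NUMBER_WORDS, amountText)) {
    amount = NUMBER_WORDS[amountText];
  }
  if (amount === undefined) return null;

  const multiplier = unit === "second" ? 1 : unit === "minute" ? 60 : 3600;
  return amount * multiplier;
}

console.log(parseTimerCommand("set a timer for ten minutes")); // 600
```

The returned value could be passed to `setTimeout` (multiplied by 1000) to trigger a spoken alert when the time is up.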

Built With

- elevenlabs-api

- eslint

- fetch-api

- localstorage-api

- lucide-react

- navigator-api

- postcss-&-autoprefixer

- react-18

- speech-synthesis-api

- tailwind-css

- typescript

- typescript-eslint

- vite

- web-audio-api

- web-speech-api
