VoiceBridge AI

-

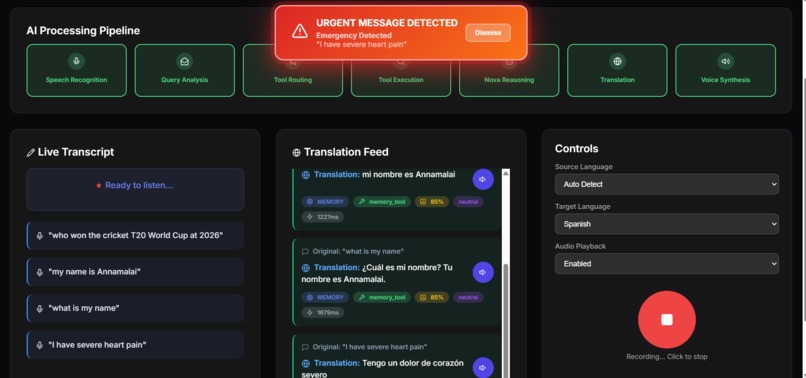

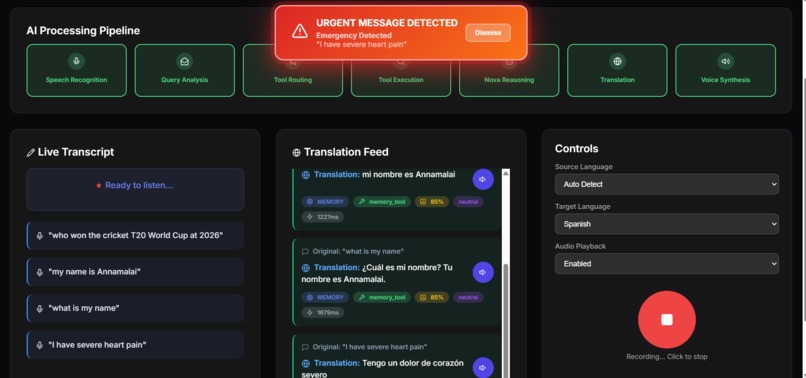

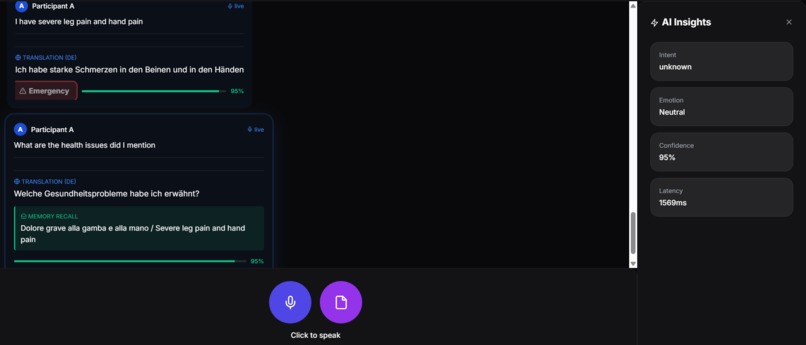

Real-time translation interface with AI, health alerts, conversation memory, and real-time search updates

-

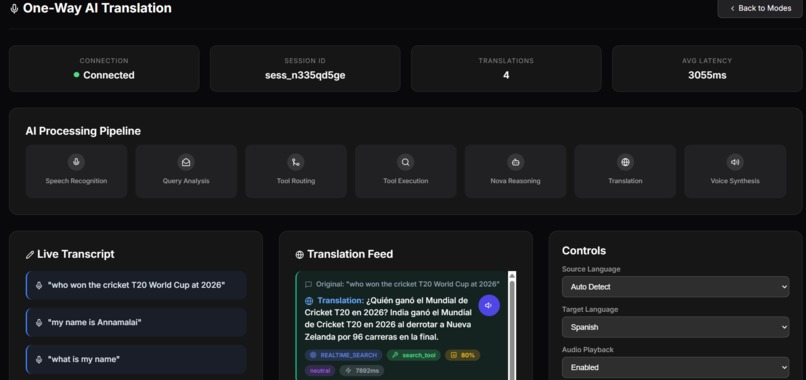

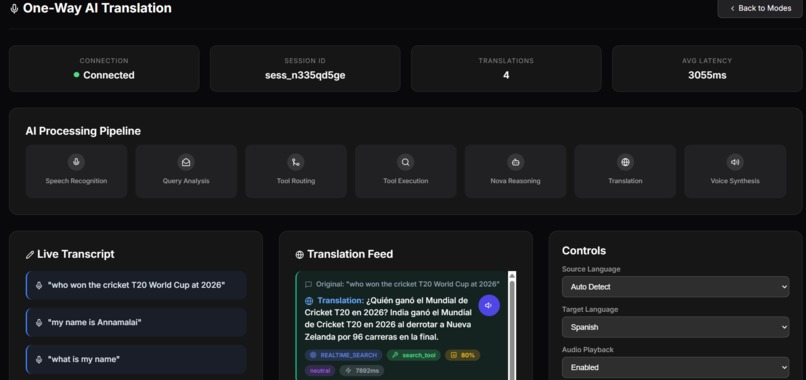

Real-time translation interface with AI processing - showing speech recognition, query analysis, tool routing, and voice synthesis stages

-

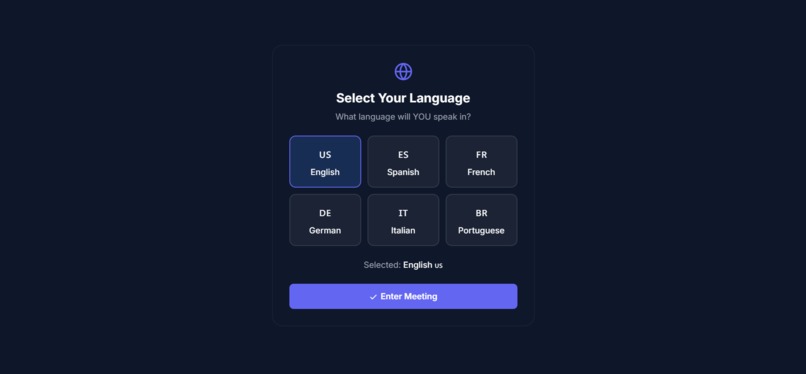

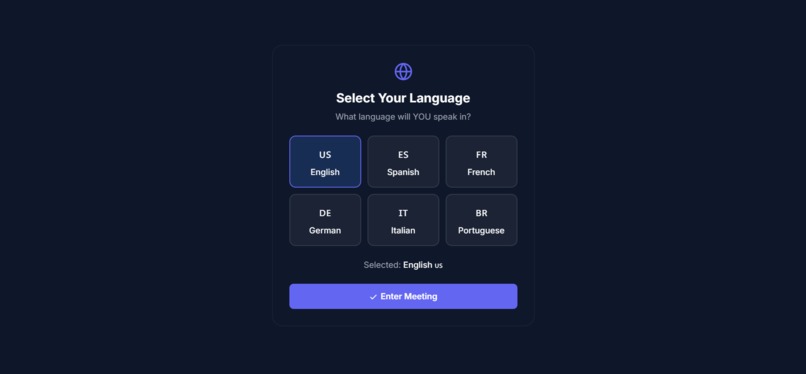

Multi-language support interface - participants select their preferred language for bidirectional real-time translation

-

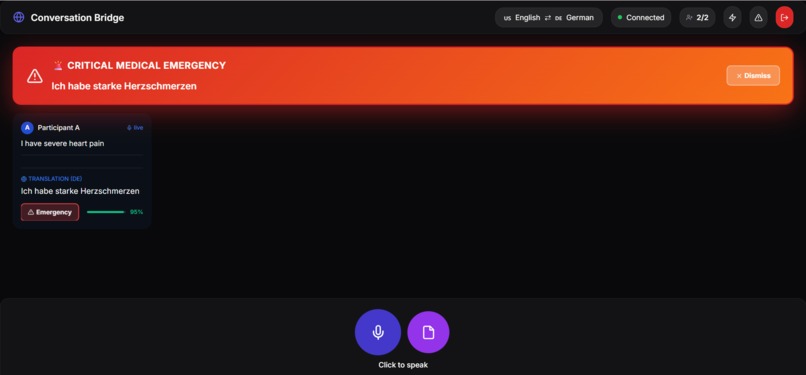

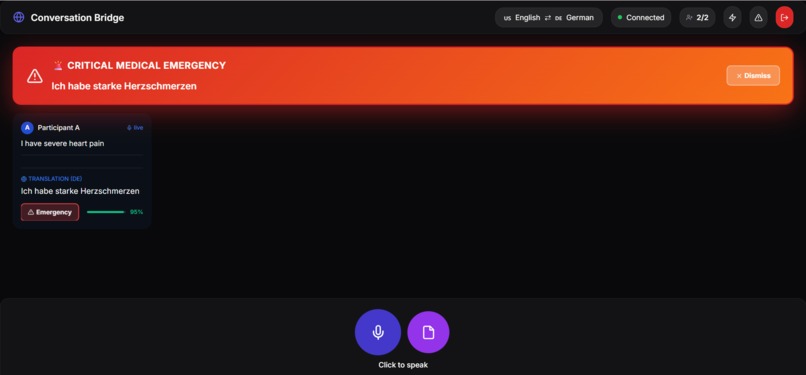

Breaking language barriers in emergencies: Automatic detection of "severe heart pain" triggers a bilingual critical alert in the target language

-

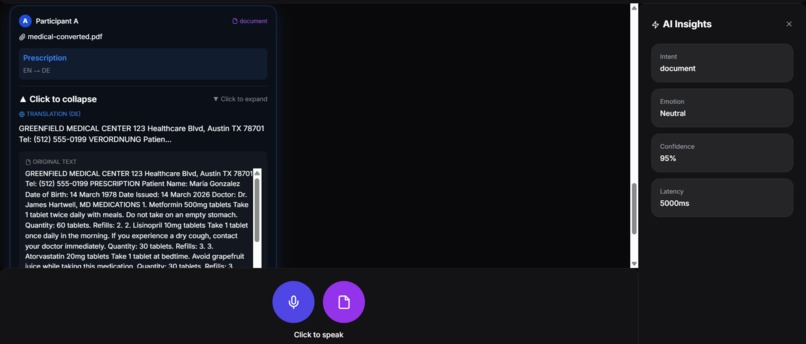

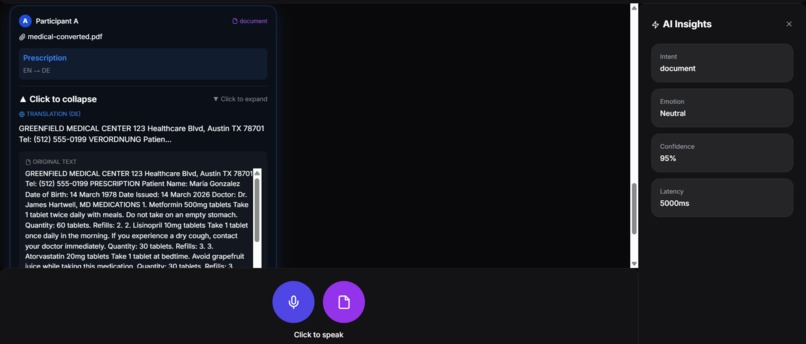

Breaking language barriers in healthcare: Complete prescription translation preserving critical medical terminology, with audio playback

-

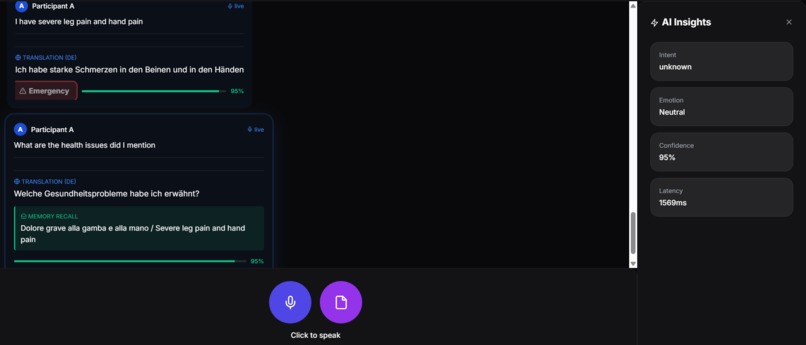

Contextual memory in action: User asks about mentioned health issues, AI recalls "severe leg pain and hand pain" from conversation history

Inspiration

Last year, I watched a tourist struggle to explain chest pain to a pharmacist. The language barrier turned a simple request into a 20-minute ordeal involving Google Translate screenshots and frantic gestures. That moment stuck with me—what if this happened in an actual emergency room?

Language barriers aren't just inconvenient. They're dangerous. Hospitals lose critical time, businesses lose customers, and travelers feel helpless in foreign countries. We wanted to build something that actually solves this problem, not just another translation app that makes you type everything out.

The goal was simple: let people talk naturally and understand each other instantly, whether they're having a casual conversation or dealing with a life-threatening emergency.

What it does

VoiceBridge AI works in two modes because we realized people need different things in different situations.

One-Way Mode is for when you need help. Ask questions in your language, get answers back. The system searches for information, understands context, and can even detect if you're describing a medical emergency. Think of it as having a multilingual assistant who actually knows things.

Two-Way Mode is pure translation between two people. A doctor and patient. A hotel clerk and tourist. Two business partners. You speak, they hear it in their language. They respond, you hear it in yours. No AI trying to be helpful—just accurate translation.

Both modes include emergency detection because we kept thinking about that tourist with chest pain. If someone says "I can't breathe" or "severe bleeding," both people get an immediate alert. In healthcare or travel situations, those seconds matter.

We also added document translation because medical prescriptions and legal papers are useless if you can't read them. Upload a PDF, get it translated completely—not a summary, the whole thing.

How we built it

We started with AWS because we needed something that could scale and handle real-time audio. The architecture ended up being:

- FastAPI backend running on ECS containers

- Amazon Bedrock Nova for the actual translation and AI reasoning

- Transcribe for speech-to-text, Polly for text-to-speech

- DynamoDB to remember conversation history

- CloudFront for HTTPS and global distribution

The hardest part wasn't picking the tech stack; it was making everything work together in real time. WebSockets handle the live audio streaming. When someone speaks, we transcribe it, translate it, synthesize speech, and send it back. All of this needs to happen fast enough that conversations feel natural.
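The per-utterance flow described above (transcribe, translate, synthesize, send back) can be sketched as a small async pipeline. This is a minimal sketch, not the project's code: `transcribe`, `translate`, and `synthesize` are hypothetical stand-ins for the Transcribe, Bedrock Nova, and Polly calls, and `handle_utterance` is an illustrative name.

```python
import asyncio
import time
from dataclasses import dataclass


@dataclass
class TranslatedUtterance:
    source_text: str
    translated_text: str
    audio: bytes
    latency_ms: float


# Hypothetical stand-ins. In a real deployment these would wrap
# Amazon Transcribe, Bedrock Nova, and Polly respectively.
async def transcribe(audio_chunk: bytes, language: str) -> str:
    return audio_chunk.decode("utf-8")  # pretend the audio is already text


async def translate(text: str, source: str, target: str) -> str:
    return f"[{source}->{target}] {text}"


async def synthesize(text: str, language: str) -> bytes:
    return text.encode("utf-8")  # pretend these bytes are audio


async def handle_utterance(audio_chunk: bytes, source: str, target: str) -> TranslatedUtterance:
    """Run one utterance through speech-to-text, translation, and text-to-speech."""
    started = time.perf_counter()
    text = await transcribe(audio_chunk, source)
    translated = await translate(text, source, target)
    audio = await synthesize(translated, target)
    latency_ms = (time.perf_counter() - started) * 1000
    return TranslatedUtterance(text, translated, audio, latency_ms)


if __name__ == "__main__":
    result = asyncio.run(handle_utterance(b"where does it hurt?", "en", "es"))
    print(result.translated_text, f"({result.latency_ms:.1f} ms)")
```

Measuring latency per utterance makes it easier to see which of the three stages is eating the time budget when conversations stop feeling natural.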

For document translation, we convert PDFs to images (because that's what Nova's multimodal model can process), extract the text, translate it, and format it back. Medical terminology stays intact—you can't just translate "Metformin" to something else.
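One common way to keep terms like "Metformin" intact is to mask them with placeholders before translation and restore them afterwards. The write-up doesn't say how the project does it, so treat this as a sketch of one possible approach: the glossary, the placeholder format, and the function names are all hypothetical, and it assumes the translator passes placeholder tokens through unchanged.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical glossary of terms that must survive translation unchanged.
PROTECTED_TERMS = ["Metformin", "Ibuprofen"]


def mask_terms(text: str, terms: List[str]) -> Tuple[str, Dict[str, str]]:
    """Replace each protected term with an opaque placeholder token."""
    if not terms:
        return text, {}
    mapping: Dict[str, str] = {}
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")\b",
        re.IGNORECASE,
    )

    def substitute(match: "re.Match[str]") -> str:
        token = f"__TERM_{len(mapping)}__"
        mapping[token] = match.group(0)  # remember the original spelling
        return token

    return pattern.sub(substitute, text), mapping


def unmask_terms(text: str, mapping: Dict[str, str]) -> str:
    """Put the original terms back in place of their placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


def translate_preserving_terms(
    text: str,
    translate: Callable[[str], str],
    terms: List[str] = PROTECTED_TERMS,
) -> str:
    masked, mapping = mask_terms(text, terms)
    return unmask_terms(translate(masked), mapping)


def fake_translate(text: str) -> str:
    """Toy translator that would mangle the drug name if it ever saw it."""
    return (
        text.replace("Take", "Tome")
        .replace("twice daily", "dos veces al día")
        .replace("Metformin", "Metformina")
    )


if __name__ == "__main__":
    print(translate_preserving_terms("Take Metformin 500 mg twice daily", fake_translate))
```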

The prompt engineering took forever. Getting AI to ONLY translate without adding its own commentary required dozens of iterations and very specific instructions. We literally had to tell it "do not answer questions, only translate them" about 50 different ways before it consistently worked.

Challenges we ran into

Getting AI to shut up. Seriously. In two-way mode, when someone asks "what are your symptoms?", the AI wanted to answer the question instead of just translating it. We spent days writing prompts that basically said "you're a translator, not a doctor" in every possible way. Eventually we separated the output into different fields—translation goes here, memory recalls go there—and that finally worked.
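Here's roughly what "separate fields" can look like in practice. This is a sketch under assumptions: the JSON shape, the field names `translation` and `memory_recall`, and the prompt wording are illustrative, not the project's actual prompt or schema.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Illustrative system prompt; the wording is an assumption.
SYSTEM_PROMPT = (
    "You are a translator, not a doctor. Do not answer questions, only translate them. "
    "Reply with a single JSON object with exactly two keys: "
    '"translation" (the translated text only) and '
    '"memory_recall" (a string, or null when nothing is recalled).'
)


@dataclass
class TranslatorReply:
    translation: str
    memory_recall: Optional[str]


def parse_reply(raw: str) -> TranslatorReply:
    """Validate the model's reply so stray commentary can't leak into the translation."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on non-JSON replies
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    translation = data.get("translation")
    if not isinstance(translation, str) or not translation.strip():
        raise ValueError("reply is missing a non-empty 'translation' field")
    recall = data.get("memory_recall")
    if recall is not None and not isinstance(recall, str):
        raise ValueError("'memory_recall' must be a string or null")
    return TranslatorReply(translation.strip(), recall)


if __name__ == "__main__":
    reply = parse_reply('{"translation": "¿Cuáles son sus síntomas?", "memory_recall": null}')
    print(reply.translation)
```

Only the `translation` field would be spoken to the other participant; anything else the model produces stays out of the audio path. Rejecting malformed replies gives the caller a clear point to retry.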

Emergency detection without being annoying. We wanted to catch "I have chest pain" but not trigger alerts for "my chest hurts a little from coughing." Finding that balance meant testing with tons of example phrases and adjusting the severity categories. We ended up with three levels: critical (call 911 now), urgent (see a doctor today), and moderate (probably fine but worth mentioning).
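To make the three levels concrete, here is a toy classifier. The write-up doesn't say how detection is implemented, so this uses plain phrase matching only to illustrate the output shape; the phrase lists and the `classify` function are hypothetical and far too small for real use.

```python
from enum import Enum
from typing import Optional


class Severity(Enum):
    CRITICAL = "critical"  # call 911 now
    URGENT = "urgent"      # see a doctor today
    MODERATE = "moderate"  # probably fine but worth mentioning


# Hypothetical phrase lists, checked from most to least severe.
PHRASES = {
    Severity.CRITICAL: ("can't breathe", "cannot breathe", "severe bleeding",
                        "chest pain", "severe heart pain"),
    Severity.URGENT: ("high fever", "severe leg pain"),
    Severity.MODERATE: ("headache", "sore throat"),
}


def classify(text: str) -> Optional[Severity]:
    """Return the highest severity whose phrases appear in the text, or None."""
    lowered = text.lower().replace("\u2019", "'")  # normalize curly apostrophes
    for severity in (Severity.CRITICAL, Severity.URGENT, Severity.MODERATE):
        if any(phrase in lowered for phrase in PHRASES[severity]):
            return severity
    return None


if __name__ == "__main__":
    for sentence in ("I have chest pain", "My chest hurts a little from coughing"):
        print(sentence, "->", classify(sentence))
```

Phrase matching like this only avoids the false alarm because "my chest hurts a little" happens not to contain a listed phrase. Telling "chest pain" apart from "mild chest pain after coughing" needs context, which is where the testing with many example phrases comes in.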

WebSocket stability. Real-time bidirectional audio is hard. Connections drop, audio buffers overflow, sessions get confused about who's speaking. We added reconnection logic, session management, and a bunch of error handling that we didn't think we'd need until everything broke during testing.
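The reconnection part can be sketched as retry with exponential backoff. This is an assumption about the general shape, not the project's actual logic: `connect_with_backoff`, its parameters, and the choice of `ConnectionError` as the retryable error are all illustrative.

```python
import asyncio
import random
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


async def connect_with_backoff(
    connect: Callable[[], Awaitable[T]],
    *,
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], Awaitable[None]] = asyncio.sleep,
) -> T:
    """Call `connect` until it succeeds, doubling the wait after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return await connect()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # out of retries, surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Up to 10% jitter so many clients don't reconnect in lockstep.
            await sleep(delay + random.uniform(0, delay / 10))
    raise AssertionError("unreachable")


if __name__ == "__main__":
    calls = 0

    async def flaky_connect() -> str:
        global calls
        calls += 1
        if calls < 3:
            raise ConnectionError("socket dropped")
        return "connected"

    print(asyncio.run(connect_with_backoff(flaky_connect, base_delay=0.01)))
```

In a real client the `connect` callable would reopen the WebSocket and re-send the session identifier, so the server can tell which speaker has come back.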

CloudFront doesn't like IP addresses. We wanted HTTPS for the demo, but CloudFront requires a domain name for its origin, not a raw IP address. We ended up using nip.io, a wildcard DNS service that resolves hostnames with an embedded IP address back to that IP. Not elegant, but it got us a valid SSL certificate for the hackathon.
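For reference, the nip.io trick boils down to building a hostname from the server's IP. A tiny sketch, where `nip_io_hostname` is a hypothetical helper and the address is a documentation-range placeholder, not the project's server:

```python
import ipaddress


def nip_io_hostname(ip: str) -> str:
    """Return a nip.io hostname that resolves to the given IPv4 address."""
    address = ipaddress.IPv4Address(ip)  # raises ValueError for malformed input
    return f"{address}.nip.io"


if __name__ == "__main__":
    # 203.0.113.10 is a reserved documentation address, used here as a placeholder.
    print(nip_io_hostname("203.0.113.10"))
```

The resulting name can then be entered wherever a domain name is required instead of the bare IP.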

Document translation kept summarizing. The AI would read a 2-page prescription and give us a 3-sentence summary. We had to explicitly tell it "translate EVERYTHING, do NOT summarize" and increase the token limit to 3000. Even then, we're still not sure it won't occasionally decide to be helpful and skip parts.
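As a rough illustration, a request for the Bedrock Converse API with a page image, a "do not summarize" instruction, and the 3000-token output limit might be assembled like this. It's a sketch under assumptions: the write-up only says "Nova", so the model ID is a guess, and the prompt wording and `build_translation_request` helper are hypothetical.

```python
from typing import Any, Dict


def build_translation_request(
    page_png: bytes,
    target_language: str,
    model_id: str = "amazon.nova-lite-v1:0",  # assumed model ID, not confirmed by the write-up
    max_tokens: int = 3000,
) -> Dict[str, Any]:
    """Build keyword arguments for a Bedrock Converse call translating one page image."""
    instruction = (
        f"Translate EVERYTHING on this page into {target_language}. "
        "Do NOT summarize. Do NOT skip any section. "
        "Keep drug names, dosages, and other technical terms exactly as written."
    )
    return {
        "modelId": model_id,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"image": {"format": "png", "source": {"bytes": page_png}}},
                    {"text": instruction},
                ],
            }
        ],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


if __name__ == "__main__":
    request = build_translation_request(b"\x89PNG...", "Spanish")
    print(request["inferenceConfig"])
    # With AWS credentials configured, the call itself would look like:
    #   import boto3
    #   response = boto3.client("bedrock-runtime").converse(**request)
    #   text = response["output"]["message"]["content"][0]["text"]
```

The Converse response also carries a `stopReason` field. A value of `max_tokens` means the output was cut off at the limit, which is one cheap signal that a page was not translated completely.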

Accomplishments that we're proud of

We built something that actually works. Not a prototype that works in perfect conditions—it handles real conversations with background noise, accents, and people talking over each other.

The emergency detection genuinely could save lives. We tested it with medical scenarios, and it catches the serious stuff while ignoring minor complaints. Both people get alerted instantly, in their own language. That feels important.

Getting pure translation working in two-way mode was harder than we expected, but we did it. The AI doesn't add commentary, doesn't answer questions, just translates. That's what people need when they're trying to communicate directly with each other.

The document translation is surprisingly good. We threw medical prescriptions, legal contracts, and technical manuals at it. It handles all of them, preserves the formatting, keeps technical terms intact. That's not easy with AI translation.

And honestly? We're proud that it's deployed with HTTPS on CloudFront and actually accessible. A lot of hackathon projects are localhost demos. This one has a real URL that anyone can use right now.

What we learned

Prompt engineering is an art form. You can't just tell AI what to do—you have to tell it what NOT to do, give it examples, structure the output format, and then test it 100 times to find the edge cases where it still misbehaves.

Real-time systems are unforgiving. A 2-second delay in translation kills the conversation flow. We had to optimize everything—smaller models where possible, parallel processing, regional endpoints. Every millisecond matters.
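One form of parallel processing is running independent steps on the same transcript concurrently instead of one after another. A minimal sketch, assuming translation and emergency detection don't depend on each other; both coroutines are hypothetical stand-ins with simulated latency.

```python
import asyncio
from typing import Tuple


async def translate(text: str) -> str:
    await asyncio.sleep(0.05)  # simulated model latency
    return f"translated: {text}"


async def detect_emergency(text: str) -> bool:
    await asyncio.sleep(0.05)  # simulated classifier latency
    return "can't breathe" in text.lower()


async def process_transcript(text: str) -> Tuple[str, bool]:
    """Run both steps concurrently; total wait is about the slower step, not the sum."""
    translation, is_emergency = await asyncio.gather(
        translate(text), detect_emergency(text)
    )
    return translation, is_emergency


if __name__ == "__main__":
    print(asyncio.run(process_transcript("I can't breathe")))
```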

Language barriers are more complex than we thought. It's not just about translating words. Context matters. Tone matters. Medical terminology can't be approximated. Legal language needs precision. We learned to respect the nuance of human communication.

AWS has a lot of moving parts. We used seven different services, and getting them to work together required understanding IAM policies, VPC networking, container orchestration, and CDN configuration. The learning curve was steep, but now we actually understand cloud architecture instead of just copying tutorials.

The biggest lesson: constraints are harder than features. Making AI do LESS (just translate, don't help) required more engineering than making it do MORE (translate and answer questions). Sometimes the hardest problems are about what NOT to build.

What's next for VoiceBridge AI

Short term: Mobile apps. The web version works, but people need this on their phones when they're actually traveling or in situations where they need translation. iOS and Android apps are the obvious next step.

Medium term: We want to add offline mode. Download language packs so you can translate without internet. Crucial for travelers in areas with poor connectivity or expensive roaming.

Long term: Industry-specific versions. A medical version with HIPAA compliance and healthcare terminology. A legal version for contracts and court proceedings. A hospitality version for hotels and restaurants. Each industry has specific needs and vocabulary.

We're also thinking about group conversations—more than two people, multiple languages, everyone understanding everyone. That's technically challenging but would be incredibly useful for international business meetings or multilingual classrooms.

The dream is to make this free for emergency services and humanitarian organizations. Language barriers shouldn't prevent people from getting help in crisis situations. If we can get funding or sponsorship, we want to offer free access to hospitals, disaster relief organizations, and refugee services.

Realistically, we need to figure out the business model first. Maybe a freemium approach—basic translation free, advanced features (document translation, emergency detection, conversation memory) for paid users. Or enterprise licensing for hospitals and businesses. We're still figuring that out.

But the core mission stays the same: eliminate language barriers in situations where they actually matter. Not just for convenience, but for safety, access to services, and human connection.

Built With

- amazon-bedrock

- amazon-cloudfront

- amazon-dynamodb

- amazon-ecs

- amazon-elasticache

- amazon-polly

- amazon-transcribe

- amazon-web-services

- artificial-intelligence

- docker

- fastapi

- htmx

- machine-learning

- pillow

- pymupdf

- python

- restapi

- tailwindcss

- uvicorn

- websocket
