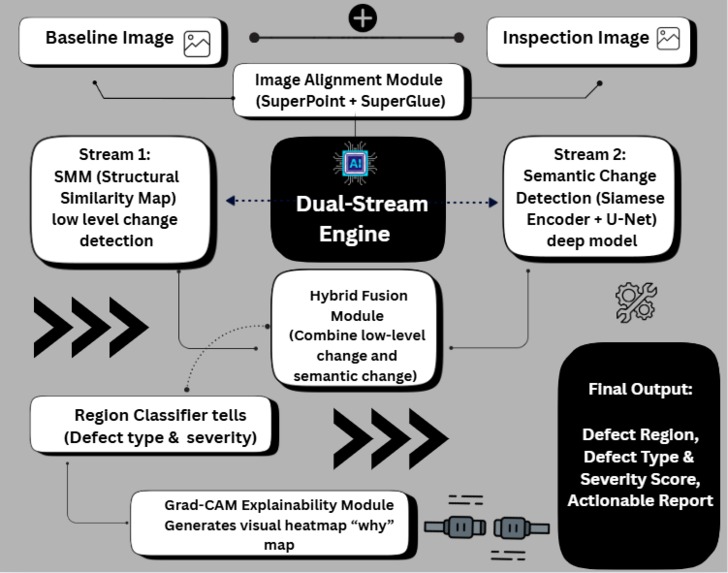

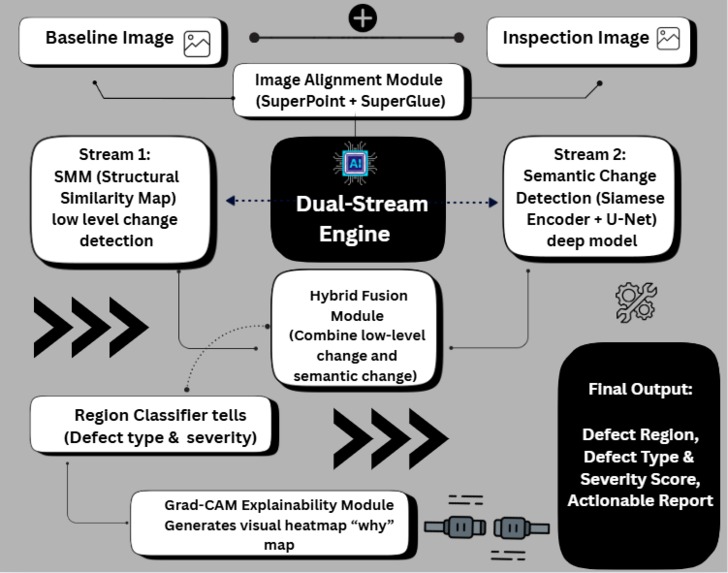

Visualytix "Dual-Stream Confidence" Engine: Our hybrid AI architecture

Brand Compliance in Action: Visualytix detects a logo alteration on the Haas F1 car

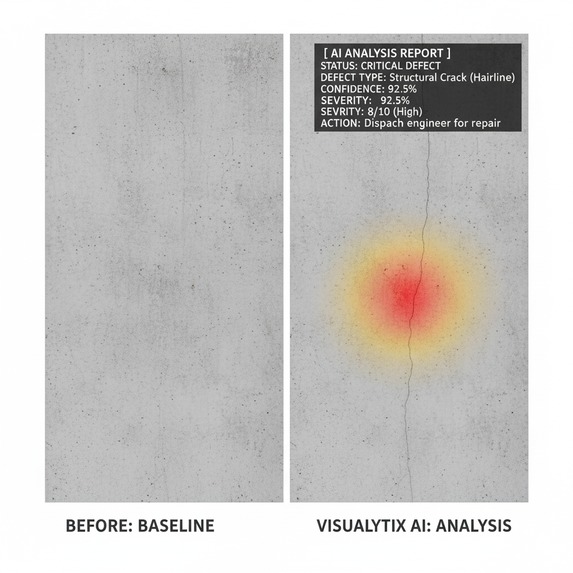

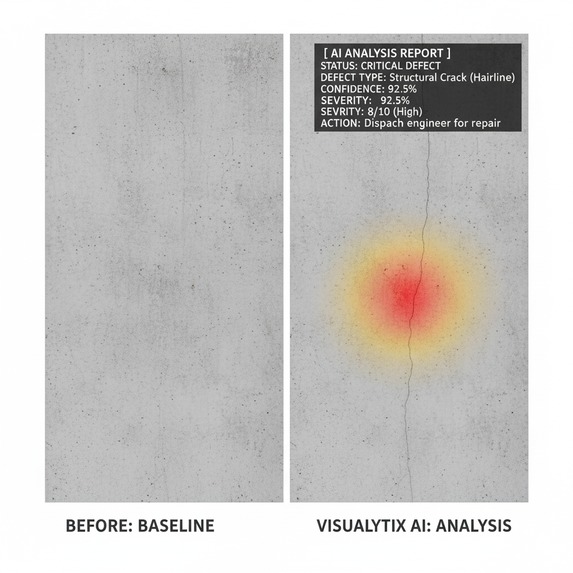

Infrastructure Inspection: Visualytix identifies a hairline structural crack with high confidence and severity

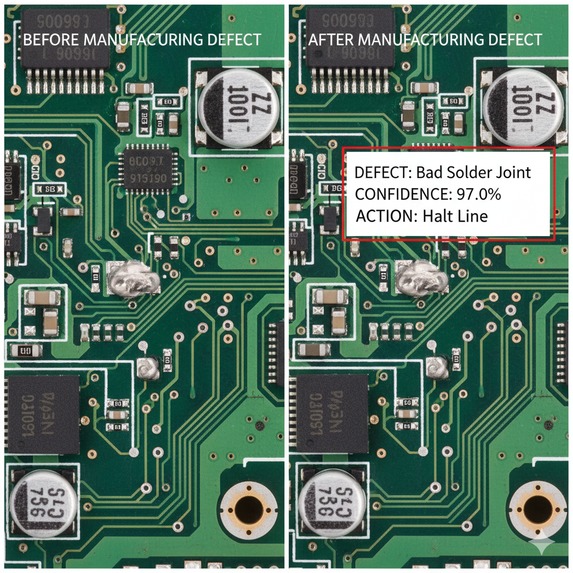

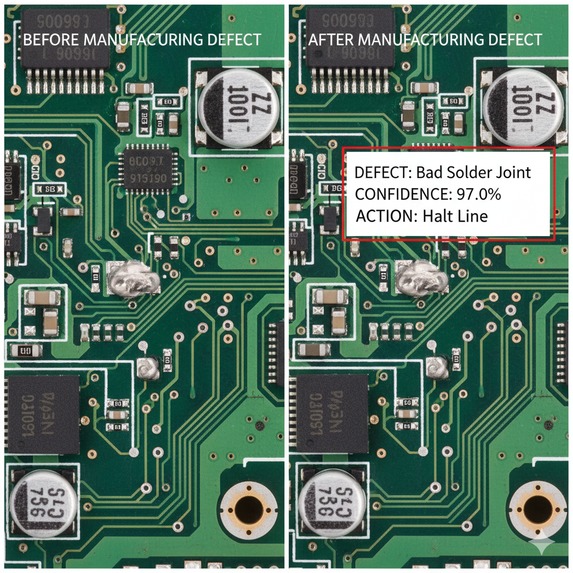

Manufacturing QA: Visualytix flags a critical "Bad Solder Joint" on a circuit board

Inspiration

In manufacturing, infrastructure, and brand compliance, inspections are a high-stakes gamble.

A missed hairline crack on a production line can halt operations. A misprinted logo can damage a brand’s reputation.

The problem? Current systems fail in two ways — they either miss real defects or chase shadows, flagging harmless lighting changes as critical errors.

This “alert fatigue” has made existing automation untrustworthy.

We built Visualytix to solve this. We created an AI inspector that you can actually trust.

What it does

VisuaLytix is an intelligent inspection engine that brings a new era of trust to automation.

It doesn’t just find change — it understands it.

Given a “baseline” (reference) image and a “new” (inspection) image, our system automatically:

- Finds the Real Defect: intelligently separates critical issues (cracks, rust, logo mismatch) from visual noise (shadows, reflections).

- Classifies the Threat: tells you not just where the change is, but what it is (e.g., crack, corrosion/rust, missing component, discoloration, logo tamper).

- Explains the Why: uses Grad-CAM heatmaps to show why it flagged an issue, addressing the black-box problem.

- Delivers an Actionable Report: a human-readable report with a recommended next action and a final diagnosis such as:

“Critical Crack Detected — Severity 9/10”

How we built it

Our innovation is a "Dual-Stream Confidence" Engine. It's a hybrid architecture that fuses classical computer vision with state-of-the-art deep learning for maximum robustness.

1. Alignment: First, we precisely align the inspection image to the baseline using state-of-the-art (SOTA) feature matching such as SuperPoint + SuperGlue.

2. Stream 1 (The "Signal"):

(Low-level detection) We compute a Structural Similarity (SSIM) map. This is our first check: it finds all physically different pixels to capture structural changes.

3. Stream 2 (The "Context"):

A Siamese U-Net (a deep neural network with two identical branches) analyzes both images to understand semantic context. It learns to tell the difference between a harmless shadow and the texture of a crack.

4. Hybrid Fusion:

Our secret weapon. We fuse both streams. A change is only flagged if it's both physically different (from Stream 1) and semantically anomalous (from Stream 2). This is how we stop chasing shadows and minor changes.

5. Synthetic Data Generation:

To train our models without a million-dollar dataset, we designed a pipeline to programmatically generate thousands of realistic, perfectly-labeled training images of defects.
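As a rough illustration of Stream 1, the sketch below computes a block-wise SSIM map in plain Python. It is a simplified stand-in, not our final implementation: it assumes grayscale images stored as nested lists, uses a uniform 4x4 window instead of the usual Gaussian window, and the `threshold=0.8` cutoff and all function names are hypothetical choices made for the example.

```python
def ssim_window(a, b, data_range=255.0):
    """SSIM between two equally sized flat lists of pixel values."""
    n = len(a)
    mu_a = sum(a) / n
    mu_b = sum(b) / n
    var_a = sum((x - mu_a) ** 2 for x in a) / n
    var_b = sum((y - mu_b) ** 2 for y in b) / n
    cov = sum((x - mu_a) * (y - mu_b) for x, y in zip(a, b)) / n
    # Standard stabilising constants from the SSIM definition.
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    return ((2 * mu_a * mu_b + c1) * (2 * cov + c2)) / (
        (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2)
    )


def ssim_map(base, new, win=4):
    """Tile both images into win x win blocks and score each block."""
    h, w = len(base), len(base[0])
    rows = []
    for top in range(0, h - win + 1, win):
        row = []
        for left in range(0, w - win + 1, win):
            cells = [(y, x) for y in range(top, top + win)
                     for x in range(left, left + win)]
            patch_a = [base[y][x] for y, x in cells]
            patch_b = [new[y][x] for y, x in cells]
            row.append(ssim_window(patch_a, patch_b))
        rows.append(row)
    return rows


def change_mask(scores, threshold=0.8):
    """Mark blocks whose similarity falls below the (assumed) threshold."""
    return [[s < threshold for s in row] for row in scores]


# Toy 8x8 baseline with a mild repeating texture.
baseline = [[100 + 10 * ((x + y) % 3) for x in range(8)] for y in range(8)]
# Inspection image: same surface with a dark diagonal line in one corner.
inspection = [row[:] for row in baseline]
for i in range(4, 8):
    inspection[i][i] = 0

scores = ssim_map(baseline, inspection)
mask = change_mask(scores)
print(mask)
```

Only the block containing the dark line drops below the threshold; unchanged blocks score 1.0. Note that a strong shadow would also lower the score, which is why this stream alone is not enough.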

Step-by-step workflow:

1. Ingest & Align: The system ingests the 'baseline' (reference) and 'new' (inspection) images. It first uses SuperPoint+SuperGlue to find thousands of keypoints and perfectly align the new image to the baseline, compensating for any camera shift or rotation.

2. Dual-Stream Analysis (The Core): Both aligned images are fed into our "Dual-Stream Confidence" Engine:

Stream A (The "Signal"): A classical SSIM algorithm runs a structural check. It's fast and finds all physically different pixels.

Stream B (The "Context"): A Siamese U-Net (our deep learning model) analyzes both images to find semantically anomalous regions. It's smart enough to know a shadow's texture is normal, but a crack's texture is not.

3. Hybrid Fusion & Filtering: Our secret weapon. We fuse the outputs. A defect is only flagged if it is both physically different (from Stream A) AND semantically anomalous (from Stream B). This is how we stop chasing shadows.

4. Classify & Score: The final, confirmed defect region is passed to a lightweight classifier which labels it (Crack, Rust, Mismatch) and assigns a Severity Score.

5. Explain & Report: The system generates the final, actionable report, complete with a Grad-CAM heatmap that proves to the operator exactly what the AI saw and why it was flagged.
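To make step 1 (Ingest & Align) more concrete, here is a minimal sketch of fitting a transform once keypoint matches are available. It assumes the matched coordinates have already been produced by the matcher (SuperPoint + SuperGlue in our design), and it fits a least-squares similarity transform (rotation, uniform scale, translation) as a simplification. A production pipeline would more likely fit a full homography with outlier rejection. The function names and sample points are hypothetical.

```python
import cmath
import math


def fit_similarity(src_pts, dst_pts):
    """Least-squares rotation + uniform scale + translation mapping src to dst.

    Points are treated as complex numbers, so the model is q = a * p + b.
    """
    p = [complex(x, y) for x, y in src_pts]
    q = [complex(x, y) for x, y in dst_pts]
    p_mean = sum(p) / len(p)
    q_mean = sum(q) / len(q)
    num = sum((qi - q_mean) * (pi - p_mean).conjugate() for pi, qi in zip(p, q))
    den = sum(abs(pi - p_mean) ** 2 for pi in p)
    a = num / den            # encodes rotation angle and scale
    b = q_mean - a * p_mean  # translation
    return a, b


def apply_transform(a, b, pts):
    """Map points through q = a * p + b."""
    mapped = [a * complex(x, y) + b for x, y in pts]
    return [(z.real, z.imag) for z in mapped]


# Hypothetical matched keypoints in the baseline image.
baseline_pts = [(10.0, 10.0), (50.0, 12.0), (30.0, 40.0), (15.0, 45.0)]

# Simulate a camera that rotated by 5 degrees and shifted by (3, -2).
camera = cmath.rect(1.0, math.radians(5.0))
new_pts = apply_transform(camera, complex(3.0, -2.0), baseline_pts)

# Fit the transform that maps the new image back onto the baseline.
a, b = fit_similarity(new_pts, baseline_pts)
aligned = apply_transform(a, b, new_pts)
print(round(math.degrees(cmath.phase(a)), 3), round(abs(a), 3))
```

The recovered transform undoes the simulated camera motion, so the aligned points land back on the baseline coordinates. The same coefficients could then be used to warp the whole inspection image before the two streams run.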

Challenges we ran into

The biggest challenge in all automated inspection is Trust.

Challenge 1: False Positives. How do you stop a "dumb" AI from flagging a shadow and stopping a million-dollar production line?

Solution: Our Dual-Stream Confidence Engine requires both structural and semantic agreement before flagging a defect.
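The agreement rule can be sketched as a per-region logical AND. This is only an illustration under assumptions: the structural mask is taken to be a grid of booleans from the SSIM stream, the semantic stream is taken to output an anomaly score between 0 and 1 per region, and the `semantic_threshold=0.5` cutoff and the name `fuse_streams` are hypothetical.

```python
def fuse_streams(structural_mask, semantic_scores, semantic_threshold=0.5):
    """Flag a region only when both streams agree that it is a defect."""
    fused = []
    for s_row, p_row in zip(structural_mask, semantic_scores):
        fused.append([
            bool(s) and p >= semantic_threshold
            for s, p in zip(s_row, p_row)
        ])
    return fused


# Region (0, 0): pixels changed, but the network sees nothing unusual (a shadow).
# Region (0, 1): the network is suspicious, but nothing physically changed.
# Region (1, 0): both streams agree, so this is the only region reported.
structural = [[True, False], [True, False]]
semantic = [[0.10, 0.90], [0.95, 0.20]]

flags = fuse_streams(structural, semantic)
print(flags)
```

A strict AND trades some recall for fewer false alarms; a weighted combination of the two scores would be an alternative if missed defects turn out to be the bigger risk.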

Challenge 2: The "Cold Start" Problem. You can't train an AI to find cracks without thousands of images of cracks.

Solution: Our Synthetic Data Pipeline. We don't find data; we create it. This makes our system adaptable to any new product in days, not months.
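A toy version of the idea, assuming grayscale images stored as nested lists: draw a thin random-walk "crack" onto a clean surface and emit the matching pixel mask, and also produce a benign shadow variant whose mask stays empty so a model can learn to ignore it. Every name and parameter here (`make_baseline`, `add_crack`, `add_shadow`, the intensities and sizes) is hypothetical, and a realistic pipeline would use far richer textures and defect models.

```python
import random


def make_baseline(size=32, seed=0):
    """Clean surface: mid-gray with a little sensor-like noise."""
    rng = random.Random(seed)
    return [[128 + rng.randint(-10, 10) for _ in range(size)]
            for _ in range(size)]


def add_crack(image, seed=0, intensity=30):
    """Draw a dark one-pixel-wide crack from top to bottom; return image and mask."""
    rng = random.Random(seed)
    size = len(image)
    defect = [row[:] for row in image]
    mask = [[0] * size for _ in range(size)]
    x = rng.randrange(size)
    for y in range(size):
        defect[y][x] = intensity
        mask[y][x] = 1
        # Let the crack wander left or right by at most one pixel per row.
        x = min(size - 1, max(0, x + rng.choice((-1, 0, 1))))
    return defect, mask


def add_shadow(image, top, left, height, width, factor=0.7):
    """Darken a rectangle; the label mask stays empty because it is not a defect."""
    shaded = [row[:] for row in image]
    for y in range(top, min(top + height, len(image))):
        for x in range(left, min(left + width, len(image[0]))):
            shaded[y][x] = int(shaded[y][x] * factor)
    empty_mask = [[0] * len(image[0]) for _ in range(len(image))]
    return shaded, empty_mask


clean = make_baseline()
cracked, crack_mask = add_crack(clean, seed=1)
shadowed, shadow_mask = add_shadow(clean, top=0, left=0, height=8, width=8)
print(sum(map(sum, crack_mask)), sum(map(sum, shadow_mask)))
```

Because the generator places the defect itself, the label mask is exact by construction, which is the property the pipeline relies on.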

Challenge 3: The "Black Box." Operators won't trust an AI if they can't understand why it made a decision.

Solution: We built in Grad-CAM explainability to provide a heatmap, proving to the human inspector exactly what the AI saw.
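For readers unfamiliar with Grad-CAM, the core computation is small: average each channel's gradient to get a weight, take the weighted sum of the activation maps, and keep only the positive part. The sketch below shows just that arithmetic on hand-written numbers. In a real model the activations and gradients would come from a convolutional layer via the deep learning framework, which is assumed here and not shown; the function name and toy values are hypothetical.

```python
def grad_cam(activations, gradients):
    """Grad-CAM heatmap from one layer's activations and gradients.

    Both inputs are indexed as [channel][row][col].
    """
    h, w = len(activations[0]), len(activations[0][0])
    cam = [[0.0] * w for _ in range(h)]
    for act, grad in zip(activations, gradients):
        # Channel weight: global average of the gradient map.
        weight = sum(sum(row) for row in grad) / (h * w)
        for y in range(h):
            for x in range(w):
                cam[y][x] += weight * act[y][x]
    # ReLU keeps only regions that push the score up.
    cam = [[max(0.0, v) for v in row] for row in cam]
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]  # scale to [0, 1]
    return cam


# Two toy 2x2 channels: the first supports the "defect" class, the second opposes it.
activations = [
    [[1.0, 0.0], [0.0, 2.0]],
    [[0.0, 3.0], [0.0, 0.0]],
]
gradients = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[-1.0, -1.0], [-1.0, -1.0]],
]

heatmap = grad_cam(activations, gradients)
print(heatmap)
```

The resulting map would be upsampled to the image size and overlaid on the inspection image so the operator can see which region drove the decision.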

Accomplishments that we're proud of

Our proudest accomplishment is designing an architecture that is built for production use from day one. We didn't just identify a problem; we engineered a feasible and robust solution to the three biggest failures in automated inspection.

We Solved the "False Positive" Problem:

Our "Dual-Stream Confidence" Engine is not just a theory. It's a practical, elegant architecture that addresses the #1 complaint about existing systems: chasing shadows.

We Solved the "Cold Start" Data Problem:

Most AI projects fail because they can't get data. We're proud of designing the Synthetic Data Pipeline from the start, making our solution immediately adaptable to any industry and product without needing a million-dollar dataset.

We Solved the "Black Box" Trust Problem:

We built explainability (Grad-CAM) into the core of the design, not as an afterthought. This shows we're focused on building a tool that real-world operators and engineers will actually trust and use.

What we learned

We learned that innovation isn’t about bigger models — it’s about smarter architectures.

By fusing classical CV and deep learning, we achieved a rare mix of robustness, transparency, and real-world reliability.

We also learned the importance of data agility — that synthetic data can turn any inspection AI from concept to prototype in days, not months.

The Visualytix Edge: Our Novelty and Uniqueness

Our innovation isn't just one model; it's the production-ready architecture that solves the three core problems of automated inspection.

Solves False Positives (The "Hybrid AI"): Our "Dual-Stream Confidence" Engine is fundamentally more robust than any "pure" AI solution. By requiring both structural (SSIM) and semantic (U-Net) agreement, we virtually eliminate false alarms from lighting, reflections, and shadows—the #1 failure of other systems.

Solves the Data Problem (The "Agile AI"): We don't wait for data; we create it. Our Synthetic Data Pipeline solves the "cold start" problem. It allows us to adapt Visualytix to any new product or defect type (from circuit boards to bridges) in days, not months.

Solves the Trust Problem (The "Explainable AI"): We are not a "black box." Our system is built for transparency from the ground up. By providing Grad-CAM heatmaps for every decision, we build trust with human operators, making our AI a tool they can rely on, not a threat they have to work around.

What's next for VisuaLytix - Visual Difference Engine

This is an ideathon, but we've built the complete architectural blueprint. Our immediate next steps are:

- Execute the synthetic data pipeline and train the v1 Siamese U-Net.

- Deploy the model via a FastAPI backend to a Streamlit demo app.

- Pilot Visualytix with an industry partner (like Haas F1 for compliance checks or Mphasis for infrastructure audits).
