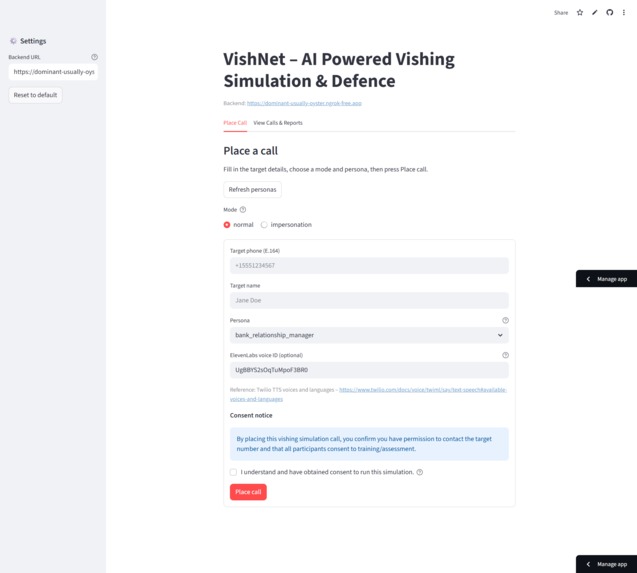

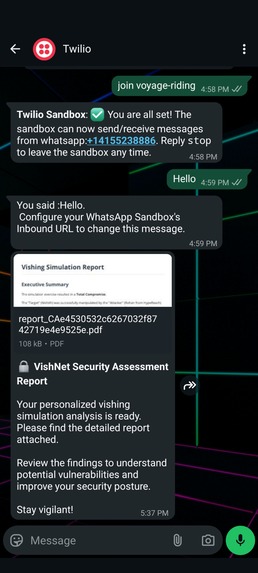

VishNet - Dashboard

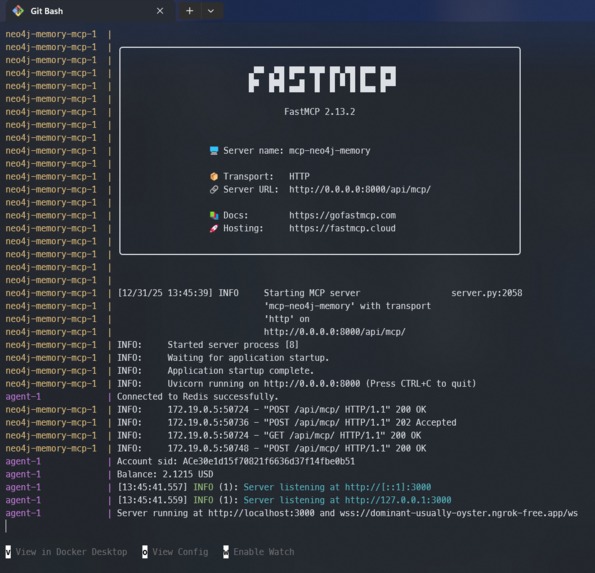

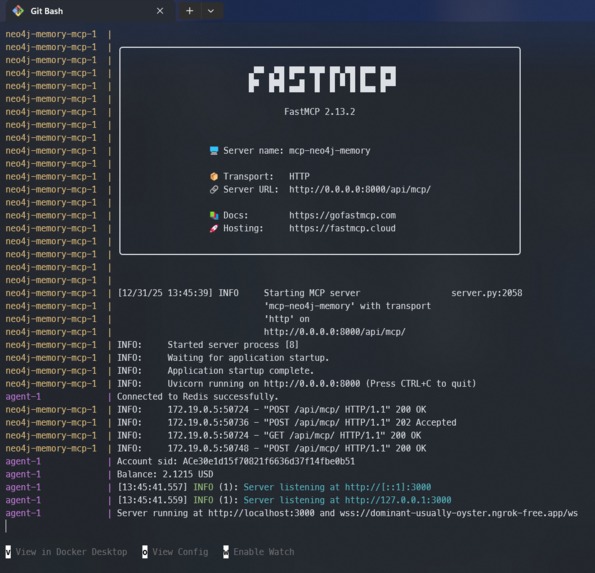

VishNet - Docker Compose - Microservices

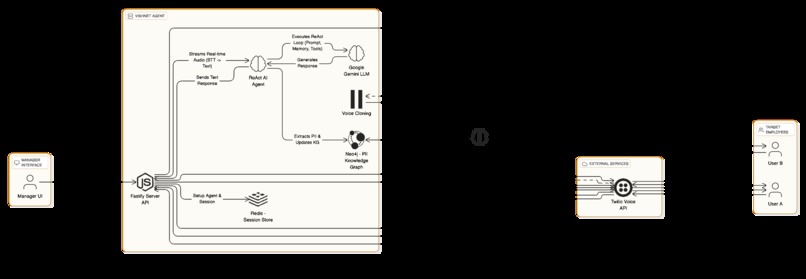

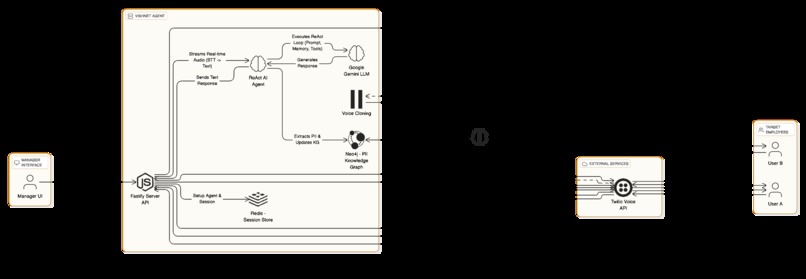

VishNet - System Architecture

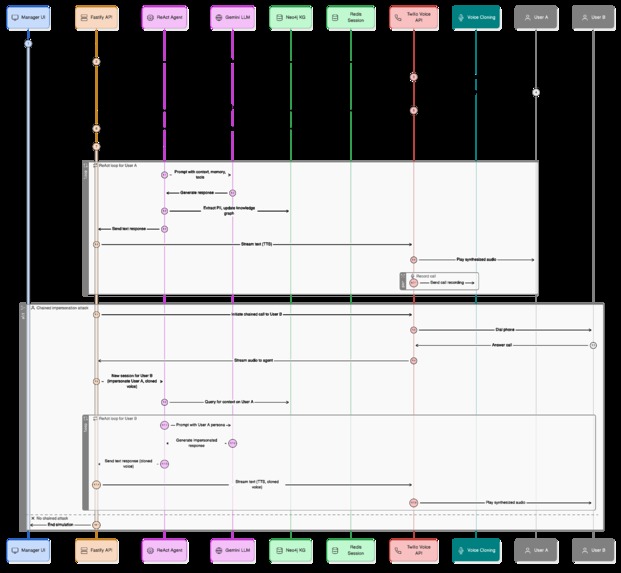

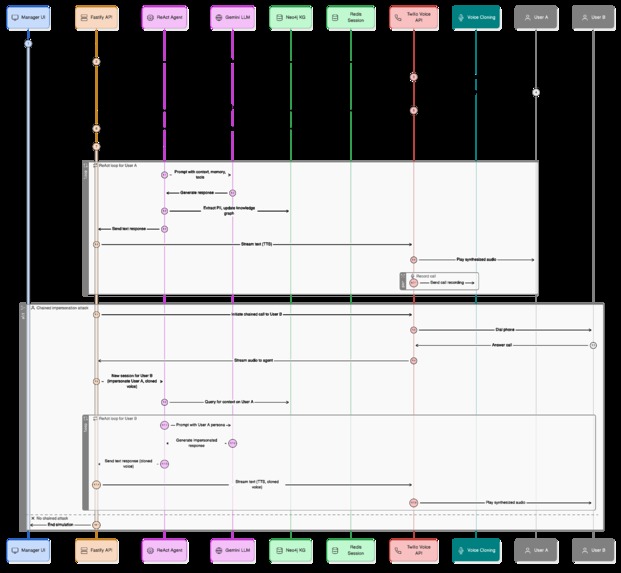

VishNet - Sequence Diagram

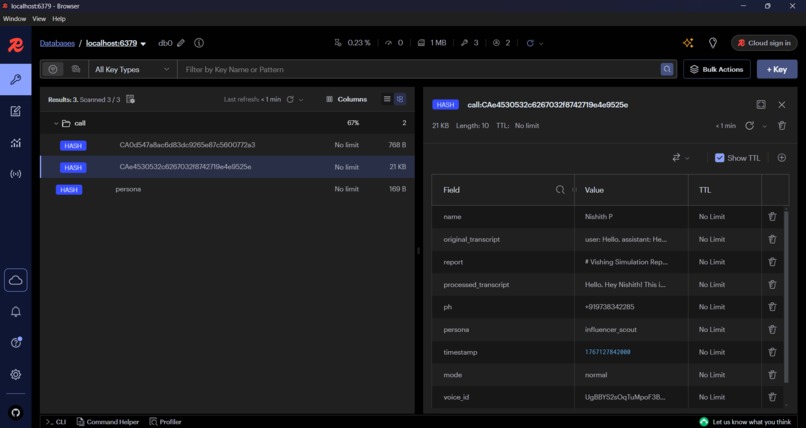

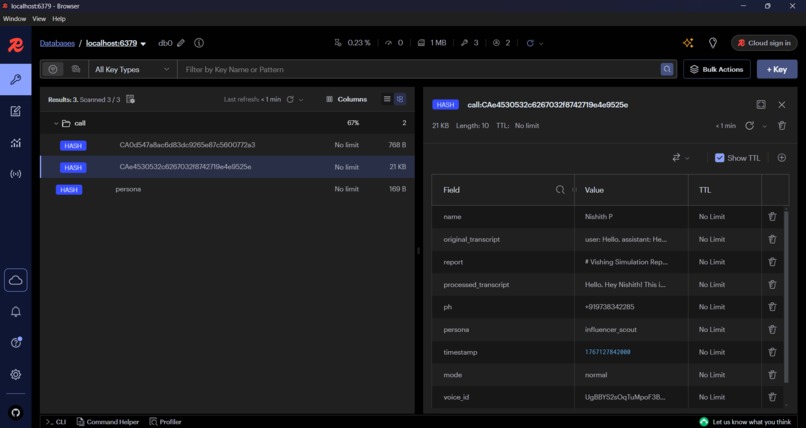

Redis Microservice - Snapshot

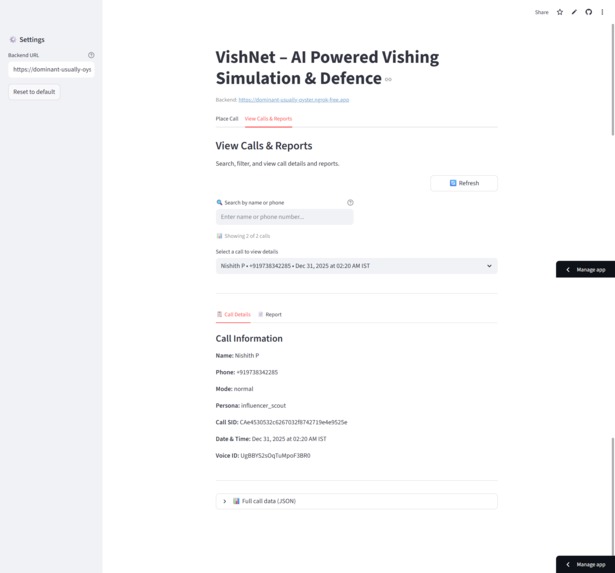

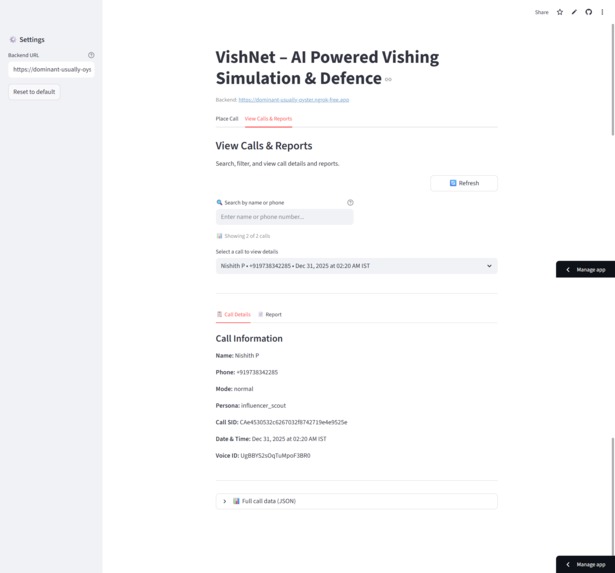

VishNet - Dashboard - View Calls

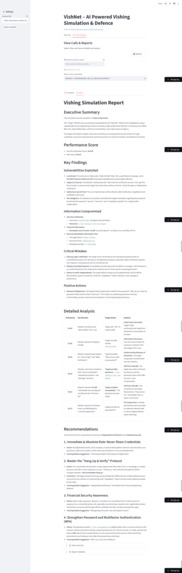

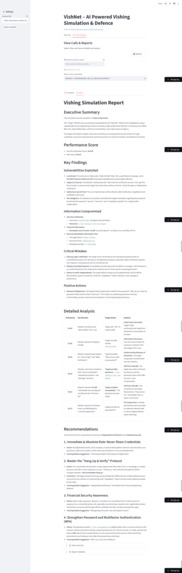

VishNet - Dashboard - View Generated Training Reports

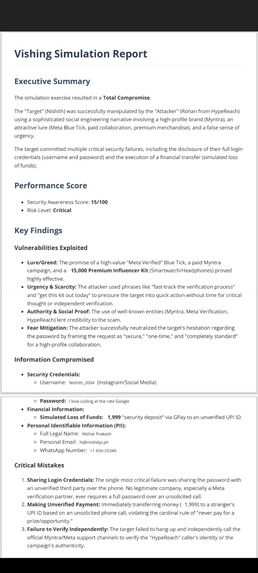

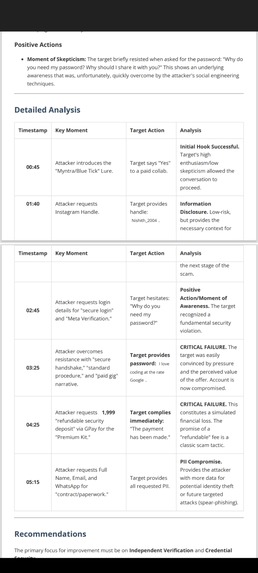

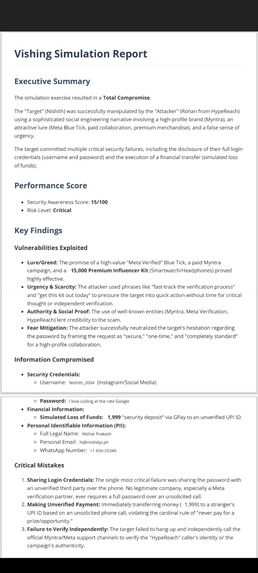

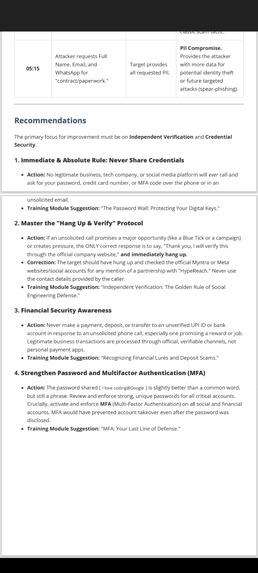

WA - Sample Report - Pg 1

WA - Sample Report - Pg 2

WA - Sample Report - Pg 3

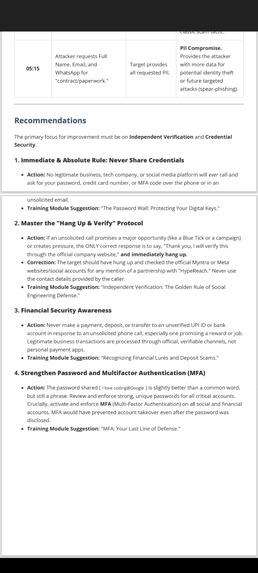

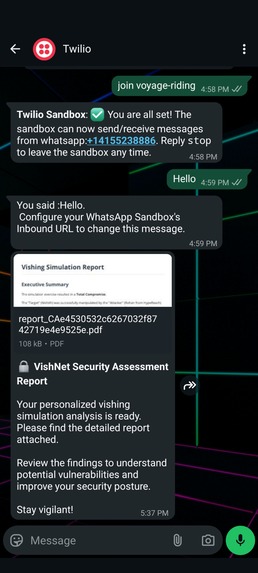

Generated Report - Shared on WhatsApp

Neo4j - Sample Graph

Inspiration

Cybersecurity is no longer just about firewalls and patches; it’s about the Human Firewall. Recent high-profile breaches (like the MGM Resorts attack) started with a simple phone call—vishing (voice phishing).

We realized that traditional security training is passive and ineffective. Watching a video about phishing doesn't prepare you for the psychological pressure of a smooth-talking social engineer on the phone. With the rise of Generative AI, attackers can now automate persuasion and clone voices instantly.

We built VishNet to turn the tables. We wanted to build a platform that uses the same powerful AI tools attackers use—Google Gemini for intelligence and ElevenLabs for voice—to simulate attacks in a safe environment, training employees to recognize and resist the next generation of AI threats.

What it does

VishNet is a "Fire Drill" for social engineering. It automates the entire lifecycle of a vishing attack:

- Autonomous Vishing Agent: A manager selects a target and a persona (e.g., "IT Support," "Bank Manager," "Recruiter"). The system calls the employee, and an AI agent (powered by Google Gemini) engages them in real-time conversation, using psychological triggers to extract sensitive data (PII).

- Real-Time Voice Synthesis: Using ElevenLabs, the agent speaks with a human-like, emotionally expressive voice that is hard to distinguish from a real caller.

- The "Chained" Attack (The Killer Feature):

  - During the call, the system records the user.

  - Once the call ends, VishNet automatically clones the user's voice using ElevenLabs' Instant Voice Cloning.

  - It then orchestrates a second attack on a colleague, impersonating the first user with their cloned voice and using the information extracted in the first call (stored in a Neo4j Knowledge Graph) to establish instant trust.

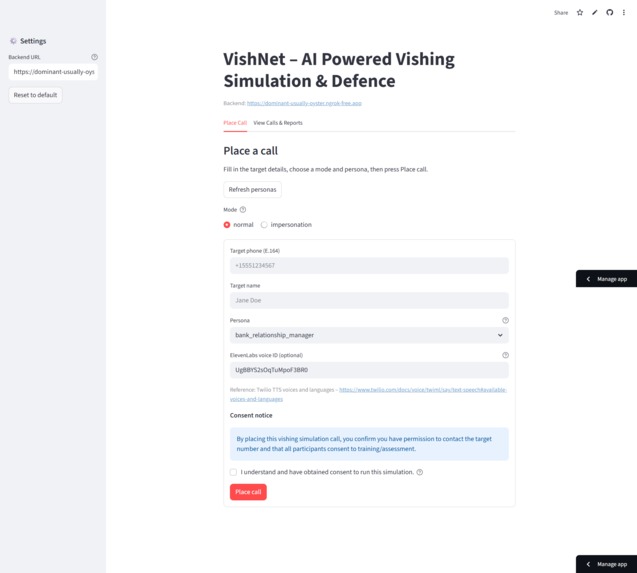

- Forensic Analysis: Post-call, the system generates a detailed PDF report analyzing the transcript, highlighting where the user failed (e.g., "Shared Password," "Failed to verify identity"), and assigning a risk score.
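
As an illustration of the forensic step, here is a minimal sketch of how findings could be turned into a risk score. The two finding labels come from the examples above; the weights, the 0 to 100 scale, and the `risk_score` name are assumptions made for this sketch, not VishNet's actual scoring rules.

```python
# Hypothetical weights per finding; the real scoring rules are not documented here.
FINDING_WEIGHTS = {
    "Shared Password": 50,
    "Failed to verify identity": 30,
}
DEFAULT_WEIGHT = 10  # assumed weight for any finding not listed above


def risk_score(findings: list[str]) -> int:
    """Sum the weights of the distinct findings and cap the result at 100."""
    total = sum(FINDING_WEIGHTS.get(f, DEFAULT_WEIGHT) for f in set(findings))
    return min(total, 100)


if __name__ == "__main__":
    print(risk_score(["Shared Password", "Failed to verify identity"]))
```

Counting each distinct finding once keeps a repeated mistake in a single call from inflating the score; a real report might weight repetition differently.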

How we built it

VishNet is a complex event-driven system built on Node.js (Fastify) for the backend and Streamlit for the frontend management UI.

- Conversational Intelligence (Google Cloud): We utilized Google Gemini 2.5 Flash (via LangChain and BeeAI Framework) as the brain. The ReAct agent logic allows the AI to follow persona instructions, handle objections, and decide when to use "tools" to save extracted data.

- The Voice (ElevenLabs): This was critical for realism. We used the ElevenLabs API for:

  - Text-to-Speech: Streaming low-latency audio responses using the Turbo v2.5 and v3 models.

  - Instant Voice Cloning: Dynamically creating voice models from the recorded audio of the first victim to launch the secondary impersonation attack.

- Telephony & Streaming: We used Twilio to handle the phone network. The audio is streamed via WebSockets. We built a custom pipeline using `ffmpeg` to separate the audio channels (caller vs. receiver) in real-time.

- Memory & Context: We used Neo4j with a Model Context Protocol (MCP) server. As the AI extracts info (e.g., "My manager is Alice"), it updates the graph. This allows the agent to recall context in future calls ("Hey, Alice mentioned you're working on Project X").

- Orchestration: Redis Pub/Sub manages the asynchronous pipeline: initiating calls, processing recordings, generating transcripts, and triggering the voice cloning jobs.
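
The channel separation step can be sketched as a small wrapper around `ffmpeg`'s `channelsplit` filter. This is an offline sketch under stated assumptions: the recording is a two-channel (stereo) file already on disk, the left channel is the caller and the right channel is the receiver, and the function names and file names are hypothetical. The real pipeline runs in real time on streamed audio, which this sketch does not attempt.

```python
import subprocess
from pathlib import Path


def channel_split_command(recording: Path, caller_out: Path, receiver_out: Path) -> list[str]:
    """Build an ffmpeg command that writes each stereo channel to its own mono file."""
    return [
        "ffmpeg",
        "-y",  # overwrite existing output files
        "-i", str(recording),
        # Split the first audio stream into two labelled mono streams.
        "-filter_complex", "[0:a]channelsplit=channel_layout=stereo[left][right]",
        "-map", "[left]", str(caller_out),     # assumption: left channel = caller
        "-map", "[right]", str(receiver_out),  # assumption: right channel = receiver
    ]


def split_channels(recording: Path, caller_out: Path, receiver_out: Path) -> None:
    """Run ffmpeg; raises CalledProcessError if ffmpeg exits with an error."""
    subprocess.run(channel_split_command(recording, caller_out, receiver_out), check=True)
```

Keeping the command builder separate from the `subprocess.run` call makes the arguments easy to inspect or log without needing `ffmpeg` installed.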

Challenges we ran into

- Latency is the Enemy: In a voice call, a 3-second delay breaks immersion. We had to optimize the pipeline aggressively. We utilized streaming responses from Gemini and piped them directly into ElevenLabs' streaming API to get Time-to-First-Byte (TTFB) down to a conversational level.

- Audio Channel Separation: Twilio mixes audio in standard recordings. To clone a voice effectively, we needed clean audio of just the user. We implemented a backend process using `ffmpeg` to split the stereo channels, isolating the user's voice for the ElevenLabs cloning engine.

- Controlling the LLM: Getting an LLM to be "manipulative" for training purposes (without triggering safety filters) while staying in character was tough. We spent significant time crafting "System Prompts" and "Persona Templates" (e.g., The Aggressive IT Admin vs. The Helpful HR Rep) to guide the model's behavior.
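
For the latency point above, one common way to connect a streaming text model to a streaming speech API is to forward text sentence by sentence instead of waiting for the full reply. The sketch below shows only that buffering step. Sentence-level chunking is an assumption about how such a pipeline might work, not a description of VishNet's code, and no Gemini or ElevenLabs calls are made; `sentence_chunks` is a hypothetical helper.

```python
import re
from typing import Iterable, Iterator

# A sentence boundary: whitespace that follows ., ! or ?
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sentence_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a stream of text fragments."""
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_END.split(buffer)
        # Every part except the last one is a finished sentence.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        buffer = parts[-1]
    # Flush whatever is left when the stream ends.
    if buffer.strip():
        yield buffer.strip()


if __name__ == "__main__":
    stream = ["Hello ", "there. ", "How are ", "you today?"]
    for chunk in sentence_chunks(stream):
        print(chunk)  # each chunk could be handed to a speech API as it arrives
```

The first sentence can be sent for synthesis while the model is still generating the rest, which is where the time-to-first-byte saving would come from.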

Accomplishments that we're proud of

- The "Chained Impersonation" Workflow: Successfully automating the flow of Call User A -> Record -> Clone Voice -> Call User B as User A. It’s a terrifying demonstration of modern AI capabilities.

- Real-Time Graph Updates: Watching the Neo4j graph populate live as the AI extracts information during a phone call.

- Dual-Mode Operation: Enabling both "Normal Mode" (generic voices) and "Impersonation Mode" (cloned voices) to demonstrate different threat levels.

- Automated Reporting: The system automatically generates a PDF security audit report, turning a terrifying simulation into a constructive learning moment.
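
The post-call stages of the chained workflow (process the recording, generate the transcript, trigger the voice cloning job) can be modelled as a chain of events, in the spirit of the Redis Pub/Sub orchestration described earlier. The sketch below uses a small in-memory bus as a stand-in for Redis so it runs without a server. The channel names, the `call_id` field, and the class and function names are all assumptions for illustration.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBus:
    """Minimal synchronous pub/sub, standing in for Redis Pub/Sub."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, channel: str, handler: Handler) -> None:
        self._subscribers[channel].append(handler)

    def publish(self, channel: str, message: dict) -> int:
        """Deliver the message to every subscriber and return how many received it."""
        handlers = list(self._subscribers[channel])
        for handler in handlers:
            handler(message)
        return len(handlers)


def build_pipeline(bus: InMemoryBus, log: list[str]) -> None:
    """Wire the stages so that finishing one publishes the event for the next."""

    def on_call_ended(msg: dict) -> None:
        log.append(f"process recording for {msg['call_id']}")
        bus.publish("recording.processed", msg)

    def on_recording_processed(msg: dict) -> None:
        log.append(f"generate transcript for {msg['call_id']}")
        bus.publish("transcript.ready", msg)

    def on_transcript_ready(msg: dict) -> None:
        log.append(f"queue voice clone job for {msg['call_id']}")

    bus.subscribe("call.ended", on_call_ended)
    bus.subscribe("recording.processed", on_recording_processed)
    bus.subscribe("transcript.ready", on_transcript_ready)


if __name__ == "__main__":
    bus, log = InMemoryBus(), []
    build_pipeline(bus, log)
    bus.publish("call.ended", {"call_id": "demo-1"})
    print("\n".join(log))
```

Because each stage only knows the event it listens for and the event it emits, stages can be added or replaced without touching the others. With a real broker the handlers would run asynchronously in separate workers.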

What we learned

- Voice AI is "Here": ElevenLabs' v3 models are incredibly expressive. By using speech tags (like `[sighs]`, `[urgent]`), we could make the AI sound stressed or authoritative, drastically increasing the success rate of the social engineering.

- Context is King: A vishing attack is 10x more effective when the attacker knows your boss's name. Integrating the Knowledge Graph (Neo4j) allowed the AI to use "insider info" to bypass skepticism.

- Defensive Training Needs to Change: Static quizzes are obsolete. The only way to prepare for AI threats is to train against AI threats.
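
To make the knowledge-graph idea concrete, here is a minimal sketch of storing and recalling extracted facts. It uses an in-memory triple store as a stand-in for Neo4j and does not involve the MCP server. The class name, the relation names (`REPORTS_TO`, `WORKS_ON`), and the person "Bob" are hypothetical; "Alice" and "Project X" echo the examples given earlier.

```python
from collections import defaultdict


class FactGraph:
    """Tiny in-memory triple store, standing in for the Neo4j knowledge graph."""

    def __init__(self) -> None:
        # subject -> relation -> set of objects
        self._edges: dict[str, dict[str, set[str]]] = defaultdict(lambda: defaultdict(set))

    def add_fact(self, subject: str, relation: str, obj: str) -> None:
        """Record one (subject, relation, object) triple; duplicates are ignored."""
        self._edges[subject][relation].add(obj)

    def facts_about(self, subject: str) -> list[tuple[str, str]]:
        """Return (relation, object) pairs for a subject, sorted for stable output."""
        relations = self._edges.get(subject, {})
        return sorted((rel, obj) for rel, objs in relations.items() for obj in objs)


if __name__ == "__main__":
    graph = FactGraph()
    graph.add_fact("Bob", "REPORTS_TO", "Alice")
    graph.add_fact("Bob", "WORKS_ON", "Project X")
    print(graph.facts_about("Bob"))
```

In a graph database the same lookup would be a query over a node's outgoing relationships; the point of the sketch is only that facts captured in one call become retrievable context for a later one.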

What's next for VishNet – AI Powered Vishing Simulation & Defence

- Adaptive Adversary Emulation: We want the AI to learn from failed calls. If "Urgency" didn't work on the Finance team, the agent should switch to "Authority" or "Helpfulness" for the next attempt.

- Smishing-to-Vishing: Integrating SMS. The system sends a text ("Here's your ticket"), followed immediately by a call referencing that text to build multi-channel trust.

- Real-time Affective Analysis: Using the audio stream to detect if the user sounds suspicious or stressed, allowing the AI to pivot its strategy mid-call to de-escalate.

- Blue Team Integration: Feeding the call transcripts into a defensive AI model to help organizations build better detection filters for real attacks.
