Main Page

Page Options

Problem Statement Brainstorming and Clarification

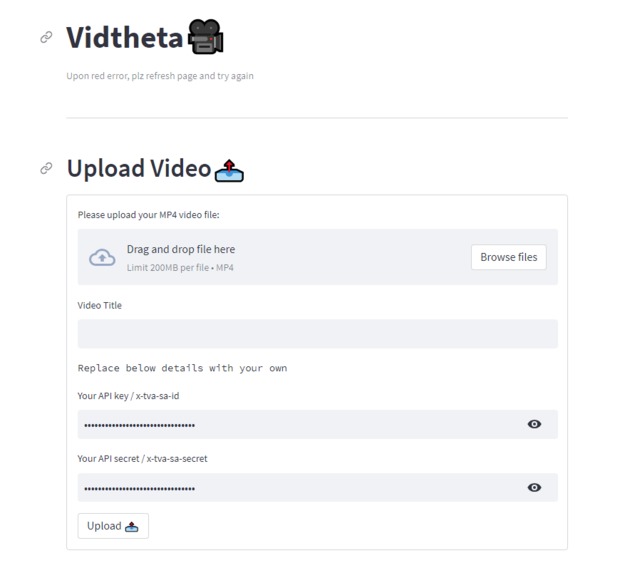

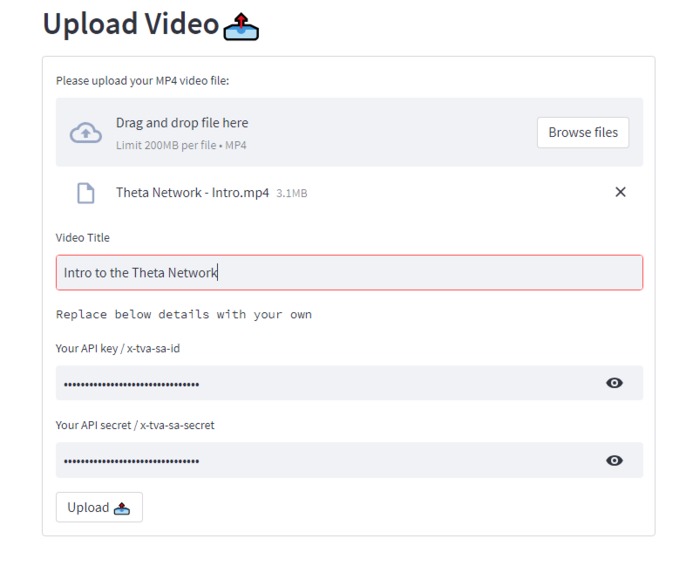

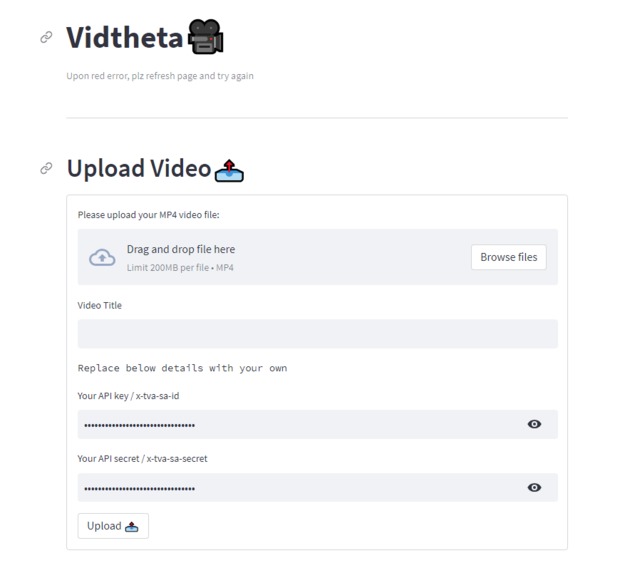

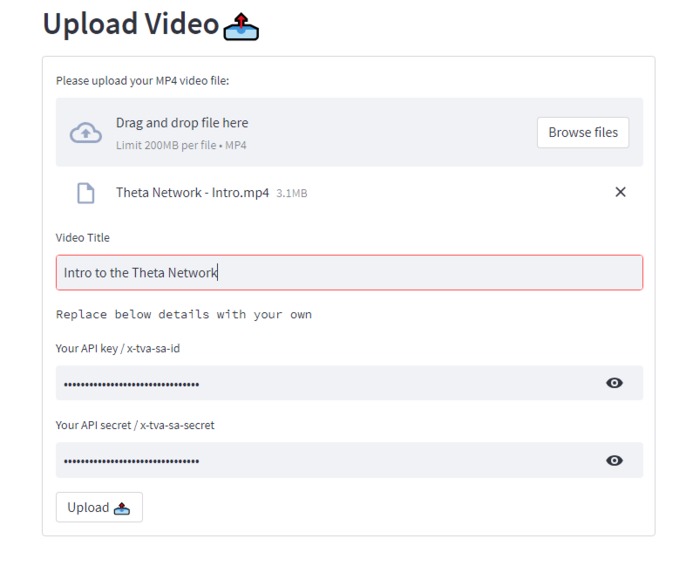

Upload Video Section

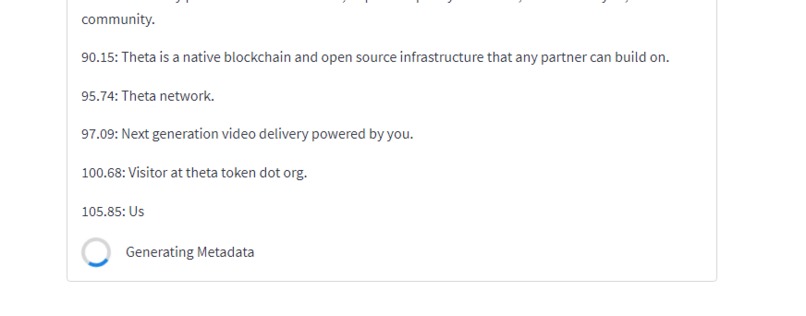

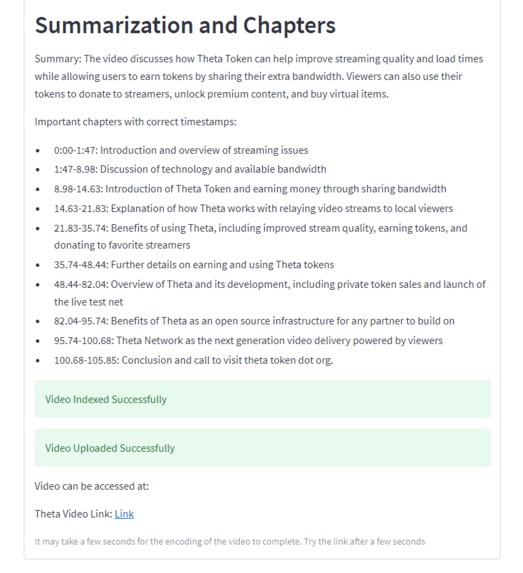

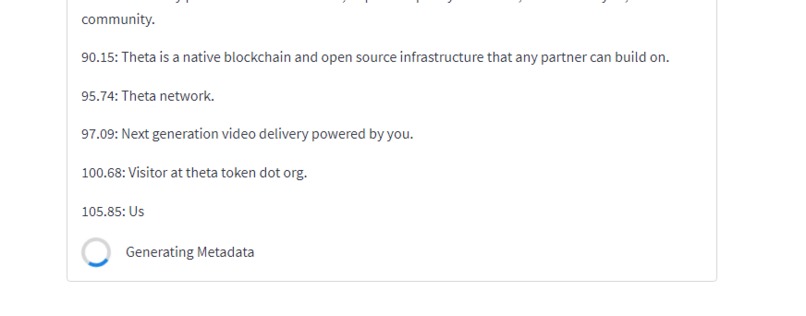

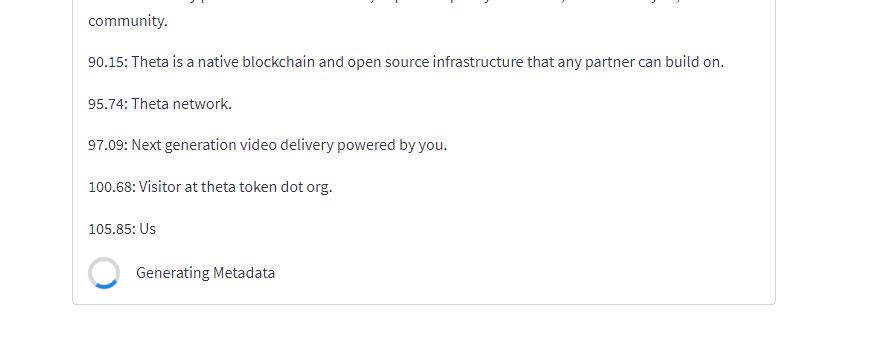

Metadata Generation

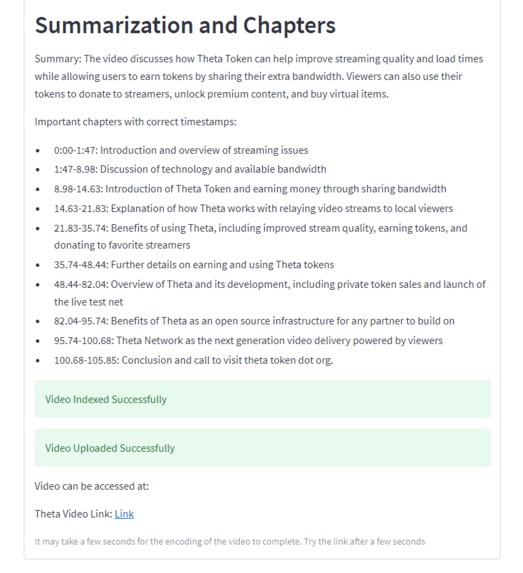

Utility Outputs

Video Upload and Indexing

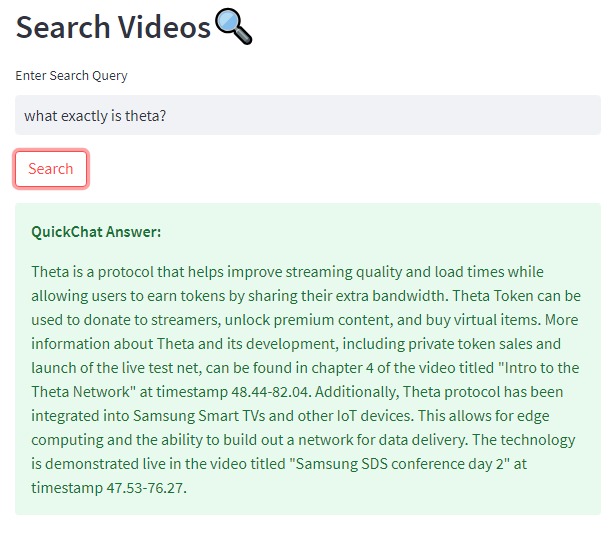

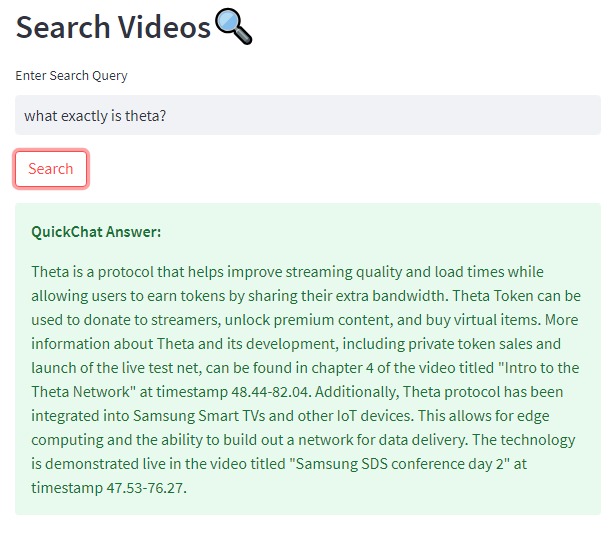

QuickChat Answer Feature

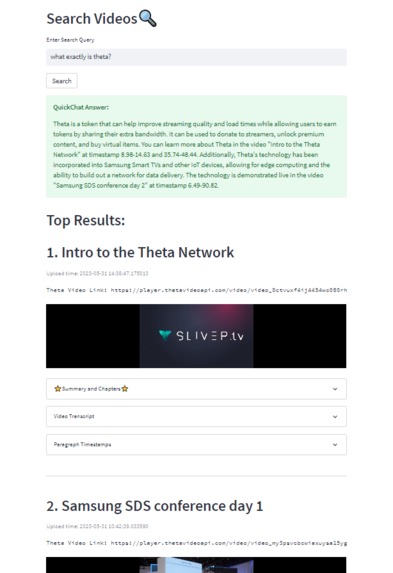

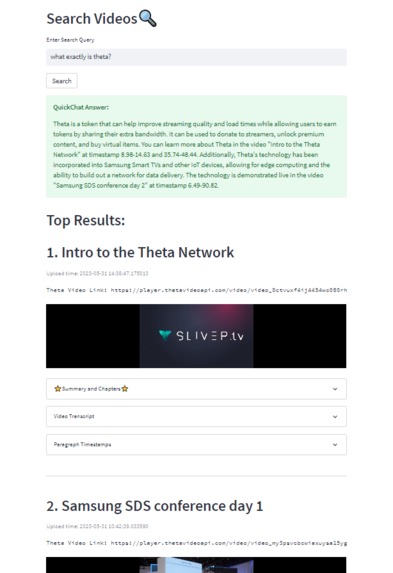

Search Section (complex query example - related to Theta)

Expansion of utility search tools

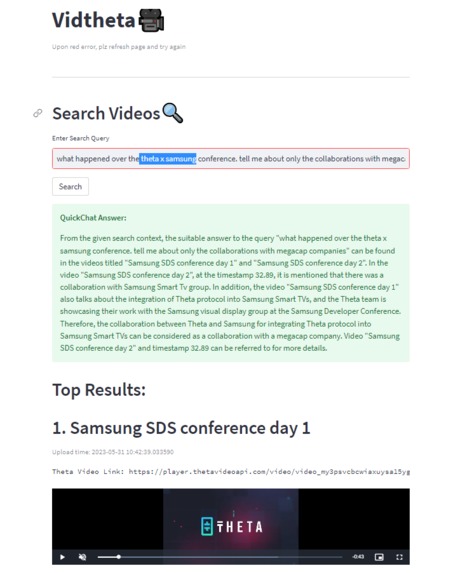

Relevant Search Results with Helpful navigation tools

Search Credit Units -- TFUEL Reward Mechanism

Another Cool Search Result based on videos that have been indexed on Vidtheta

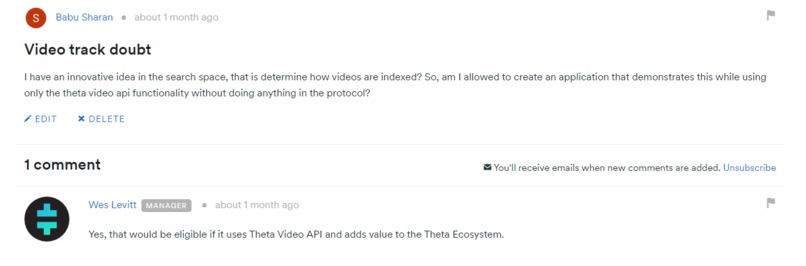

Inspiration

Tooling, utility, and incentives are critical to the success of a consumer video platform, and those are what Vidtheta focuses on.

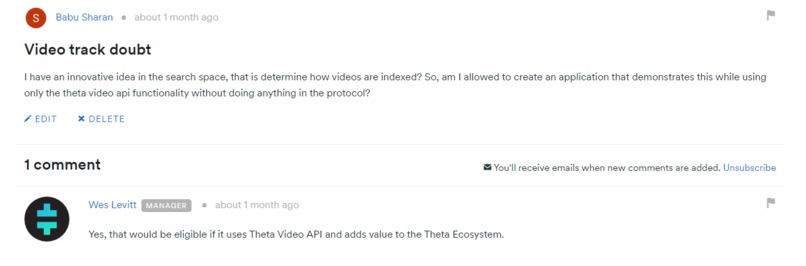

Vidtheta builds on the Theta Video API and adds several innovations, including a natural-language search engine that makes it easy to search uploaded videos on any platform that uses the Theta Video API (potentially Theta TV too).

Note: Currently, Theta TV provides only channel-based search (streamers or games), which can quickly feel limiting to users.

Vidtheta addresses these limitations by providing utility tools (discussed below) and state-of-the-art Natural Language Processing (NLP) search techniques.

What it does and How we built it

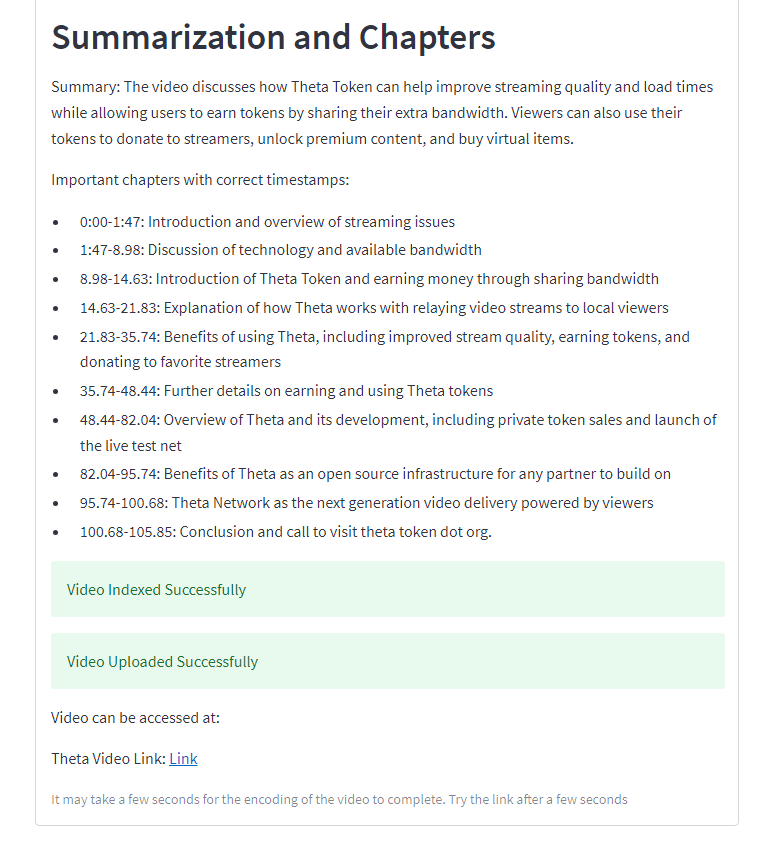

Using Vidtheta, anyone in the world can upload a video and receive a link along with several other useful outputs: ‘Full Video Transcripts’, ‘Paragraph Timestamps’, and ‘Automatic Chapters with Comprehensive Summary’. These outputs help uploaders iterate on and improve their videos and, more importantly, give consumers (regular users of the app) a delightful search and viewing experience.

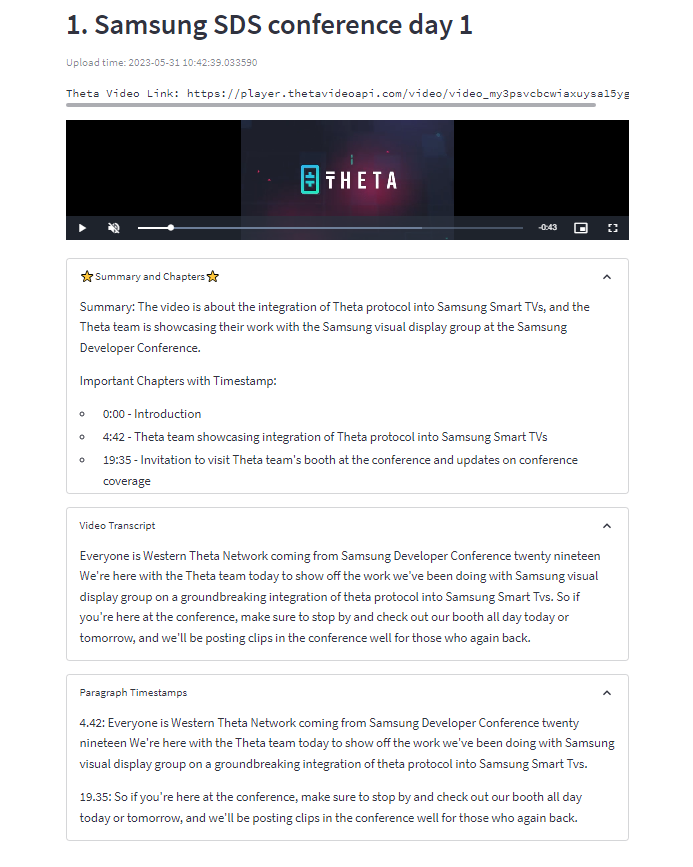

In more detail: in the Upload section of the Vidtheta web app, the user provides only the video title and the video file, and gets back:

1. Transcripts: an accurate representation of the spoken English content.
2. Paragraph Timestamps: each meaningful sentence or paragraph is split out and timestamped, which gives the uploader editing and content-progression insights.
3. Automatic Chapters with Summary: the important timestamps in the video where crucial content is discussed, along with a summary, so that the end user can seek directly to that position.

While this is happening, the video is also uploaded and transcoded via the Theta Video API, so the uploader gets a usable link back. This places powerful tools in the hands of creators.
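To make the shape of these upload outputs concrete, here is a minimal sketch of how they could be represented in Python. The class and field names (`UploadResult`, `Paragraph`, `Chapter`, `chapter_index`) are hypothetical and chosen for illustration only; they are not taken from the Vidtheta code or from the Theta Video API.

```python
from dataclasses import dataclass, field
from typing import List


def format_timestamp(seconds: float) -> str:
    """Render a position in seconds as M:SS, or H:MM:SS past one hour."""
    total = int(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


@dataclass
class Paragraph:
    start: float  # seconds from the start of the video
    text: str


@dataclass
class Chapter:
    start: float  # seconds from the start of the video
    headline: str
    summary: str


@dataclass
class UploadResult:
    """Hypothetical container for everything the uploader gets back."""
    title: str
    video_link: str  # usable link returned once upload/transcoding is done
    transcript: str
    paragraphs: List[Paragraph] = field(default_factory=list)
    chapters: List[Chapter] = field(default_factory=list)

    def chapter_index(self) -> List[str]:
        """One line per chapter: '<timestamp> <headline>'."""
        return [f"{format_timestamp(c.start)} {c.headline}" for c in self.chapters]
```

A viewer-facing page could then render `chapter_index()` as a list of seek targets next to the embedded player.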

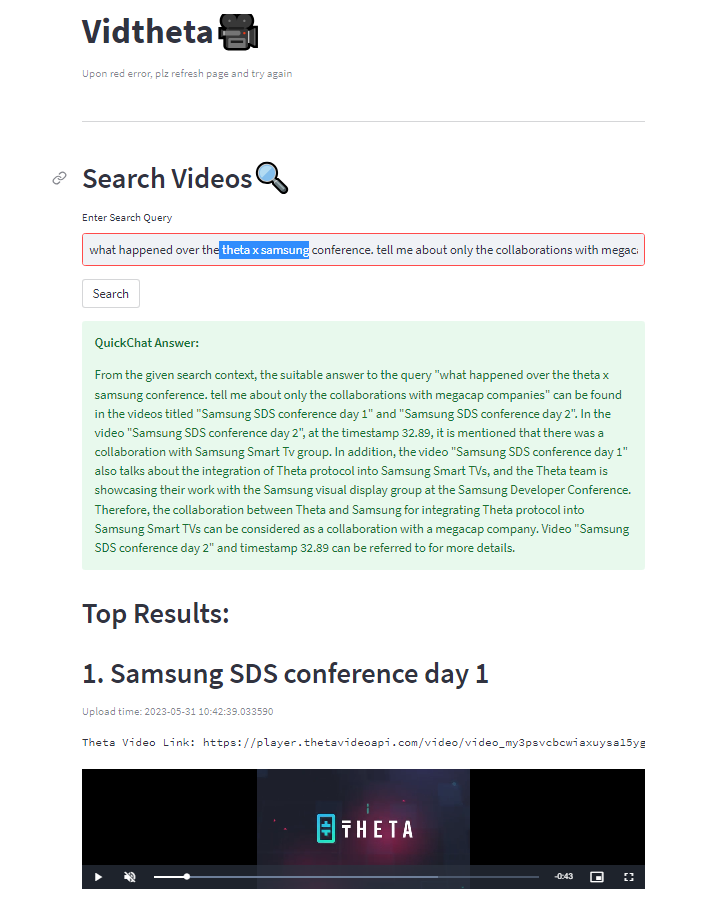

On the Search side of Vidtheta, anyone can enter an English search query and get a concise summarized answer along with relevant results. Based on the summaries and titles of the uploaded videos, we first retrieve the most contextually similar videos from the search index. This is done using an NLP technique called Semantic Similarity. In simple terms, it goes beyond word overlap and matches pieces of text based on the context or intent of the content, which gives more relevant results for the person searching.
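The retrieval step can be sketched as top-k ranking by cosine similarity between a query vector and one vector per video (built from its title and summary). The sketch below is an assumption-laden illustration, not Vidtheta's implementation: in particular, `embed` is a placeholder that uses plain word counts so the example runs without dependencies. Word counts capture only word overlap, so a real semantic search would swap in a sentence-embedding model at that point.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Dict[str, float]:
    """Placeholder embedder (assumption): lowercase word counts.

    This is NOT semantic; replace it with a sentence-embedding model
    to match text by meaning rather than by shared words.
    """
    return dict(Counter(text.lower().split()))


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: str, videos: Dict[str, str], k: int = 3) -> List[Tuple[str, float]]:
    """Rank videos (title -> summary) by similarity to the query."""
    q = embed(query)
    scored = [
        (title, cosine(q, embed(f"{title} {summary}")))
        for title, summary in videos.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

Because only `embed` knows how text becomes a vector, the ranking code stays the same when the placeholder is replaced by a real model.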

On top of these search results, we provide a feature called ‘QuickChat Answer’, which looks through the content of all the similar results and gives a concise, to-the-point answer to the query. This unlocks a lot of potential for users of the Theta blockchain for video in general: even on streaming services like YouTube, getting the same answer would mean clicking through several videos and then searching and seeking to the relevant part of each one.

This means users on Vidtheta can run more powerful queries, such as: “what happened over the theta x samsung conference. tell me about only the collaborations with megacap companies”. This query returns an answer along with a roadmap for navigating the provided search results (with suitable timestamps), so you can quickly jump to the part of the video that answers your question. I have already uploaded several videos to Vidtheta, including ones from the official Theta YouTube channel, so the answer to the above query looks something like this:
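One plausible way to produce such an answer is to pack the top search results into a single prompt and hand it to a Large Language Model. The sketch below shows only the prompt-assembly step; the names `SearchHit` and `build_quickchat_prompt`, the prompt wording, and the choice of fields are all assumptions for illustration, and no specific LLM API is implied.

```python
from typing import List, NamedTuple


class SearchHit(NamedTuple):
    """Hypothetical shape of one retrieved video."""
    title: str
    summary: str
    chapters: List[str]  # e.g. ["2:23 Samsung collaboration"]


def build_quickchat_prompt(query: str, hits: List[SearchHit], max_hits: int = 3) -> str:
    """Assemble an LLM prompt from the query and the top search hits."""
    sections = []
    for i, hit in enumerate(hits[:max_hits], start=1):
        chapter_lines = "\n".join(f"  - {c}" for c in hit.chapters)
        sections.append(
            f"[{i}] {hit.title}\nSummary: {hit.summary}\nChapters:\n{chapter_lines}"
        )
    context = "\n\n".join(sections)
    return (
        "Answer the query using only the video context below. "
        "Cite video titles and timestamps.\n\n"
        f"Context:\n{context}\n\nQuery: {query}\nAnswer:"
    )
```

Capping the context at `max_hits` keeps the prompt small and limits the answer to the most relevant videos.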

QuickChat Answer: Based on the given query and search context, the suitable answer is: Theta has collaborated with Samsung in integrating Theta protocol into Samsung Smart TVs and other IoT devices for edge computing and data delivery. The collaboration includes working with Samsung's Smart TV group, as mentioned in the Samsung SDS conference day 1 video at timestamp 4:42 and Samsung SDS conference day 2 video at timestamp 32.89. For more information on Samsung and Theta's collaboration, you can watch the video "Samsung + Theta Collab" at timestamp 2:23-3:03.

Another example:

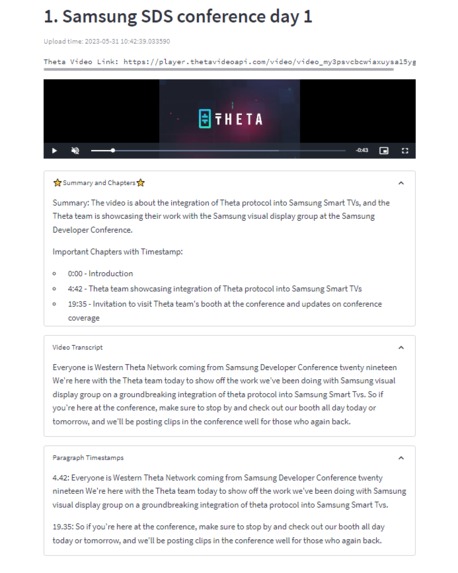

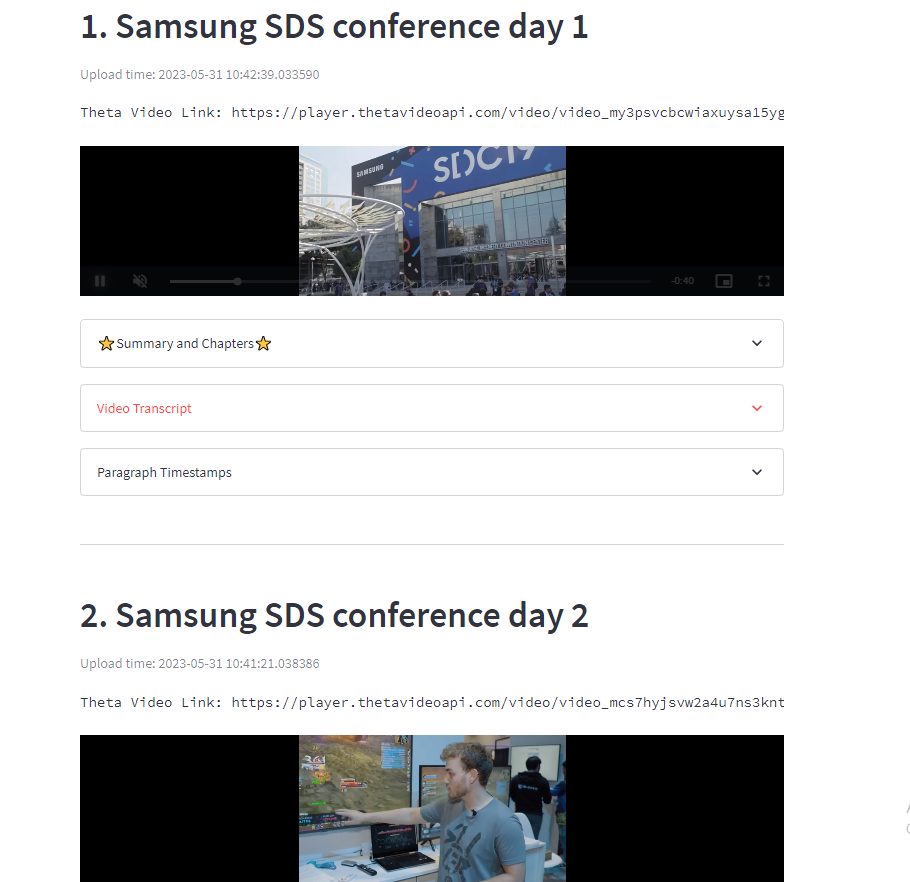

This is followed by the Top Results section, which presents the following details for each video: title, upload time, Theta Video link, an embedded Theta video player (so the video can be watched without leaving Vidtheta), summary and chapters, video transcript, and paragraph timestamps. The user has every search tool at hand, which makes searching seamless and effective.

At the very bottom, we display what we call ‘Search Credit Units’, i.e., a measurable compute metric based on which we can reward tokens (TFUEL) to both the worker node that computes the search results and the uploader whose video appears in them. Consider the implications: this incentivizes both the uploaders and those who contribute compute resources to perform the search. In turn, it encourages people to do more on the Theta Video network, creating a vibrant community where all involved entities benefit.
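As a rough illustration of how such a reward could be divided, the sketch below converts Search Credit Units into a TFUEL amount and splits it between the worker node and the uploaders of the returned videos. Everything here is an assumption: the write-up does not specify a formula, so the function name `split_reward`, the `tfuel_per_unit` rate, the default 50/50 `worker_share`, and the equal split among uploaders are all hypothetical.

```python
from typing import Dict, List


def split_reward(
    credit_units: float,
    tfuel_per_unit: float,
    worker: str,
    uploaders: List[str],
    worker_share: float = 0.5,  # assumed split, not from the source
) -> Dict[str, float]:
    """Split a search's TFUEL reward between the worker and uploaders."""
    if not 0.0 <= worker_share <= 1.0:
        raise ValueError("worker_share must be between 0 and 1")
    total = credit_units * tfuel_per_unit
    if not uploaders:
        # No video surfaced in the results: the worker keeps everything.
        return {worker: total}
    payouts = {worker: total * worker_share}
    per_uploader = total * (1.0 - worker_share) / len(uploaders)
    for uploader in uploaders:
        # An uploader with several videos in the results is paid per video.
        payouts[uploader] = payouts.get(uploader, 0.0) + per_uploader
    return payouts
```

For example, 10 credit units at a hypothetical rate of 0.5 TFUEL per unit yield 5 TFUEL in total, of which the worker receives half and two uploaders share the rest equally.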

Is that all, though? Answer engines are all the rage these days: they perform a search on an information source and then use Large Language Models to provide a concise answer. They currently pay search providers and use scrapers to accomplish this. The same is heavily done on video sources like YouTube to surface relevant content hidden within videos. Prominent answer engines today include Perplexity AI, You.com, and andisearch. There are many of these now (with several tens of millions of users), and they are constantly looking for video search API providers to better integrate with their solutions.

Perplexity AI search result example (It tries to generate an answer by summarizing transcripts):

This is a huge economic opportunity for Theta Video as well. Imagine providing an API endpoint that lets these answer engines access all the knowledge and content on the Theta platform. They could then aggregate results from the Theta Network too, a win-win for both sides: the engines pay for API requests, and that revenue can flow back to creators and search performers in the form of TFUEL. Theta is a blockchain with tremendous intrinsic value, and adding such an API system would only increase the value of the ecosystem.

Another important point is that all the components of Vidtheta can be implemented in an open-source fashion, making the system safe and tunable for deployment at the scale of millions of users. (The search index can also be migrated to Theta EdgeStore in the future for deeper ecosystem integration and performance benefits.) A natural future step is the creation of oracles to enable wider access to Theta Video data.

To conclude, Vidtheta introduces and implements several innovative ideas with significant potential use cases and business implications for the Theta Video ecosystem.

Accomplishments that we're proud of

Building a useful Theta Video API integration that can massively increase user adoption by adding value.

What we learned

This was my first blockchain-related build. Theta is very interesting, and I am bullish on it.

What's next for Vidtheta

- Potential mainstream/native integration

- Multilingual support

- Migrate the video search index from local storage to Theta EdgeStore.

Built With

- llms

- natural-language-processing

- python

- semantic-similarity

- streamlit

- theta

- theta-video-api
