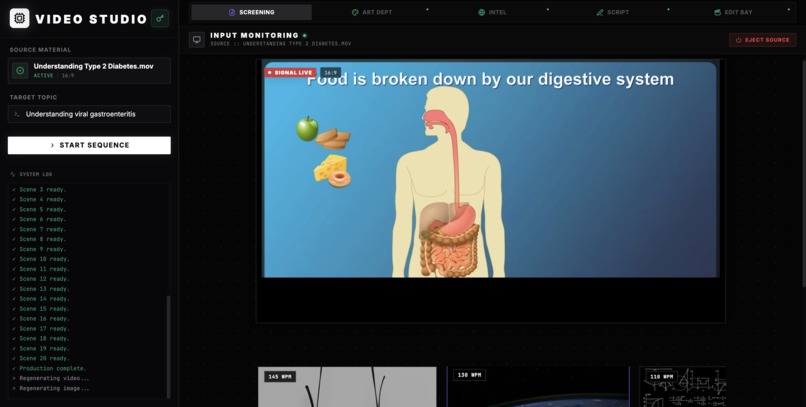

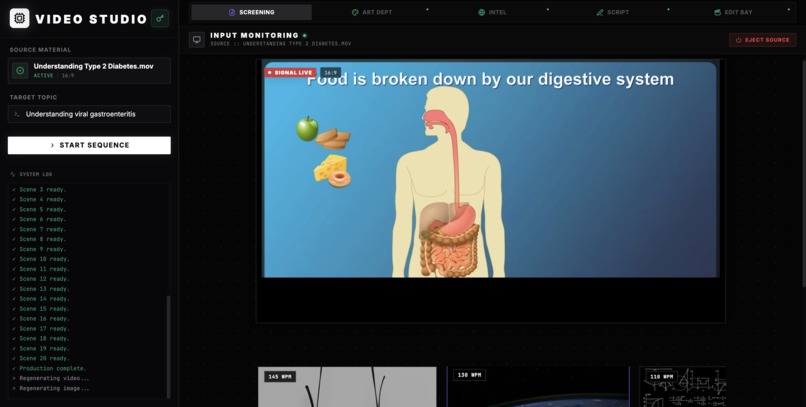

Input Monitoring: Upload raw footage or select a template. The system ingests the source material and prepares it for multimodal style analysis.


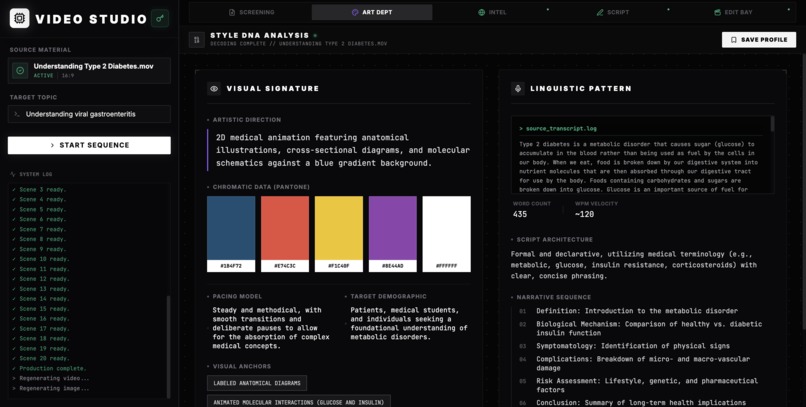

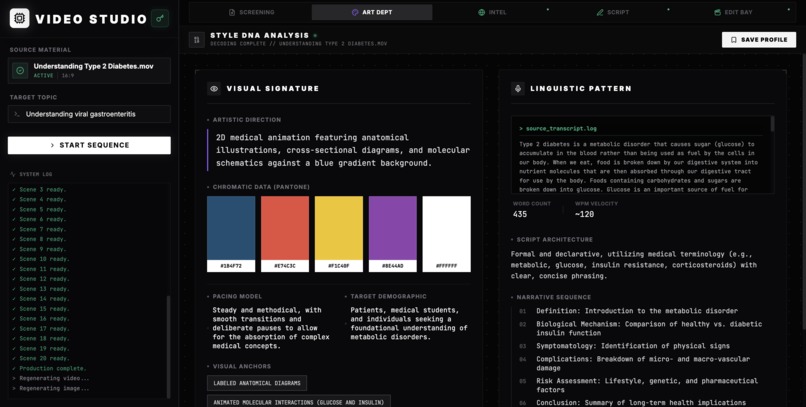

Style DNA: Gemini 3 Pro extracts the reference video's artistic signature: HEX palettes, WPM pacing, and pedagogical logic.


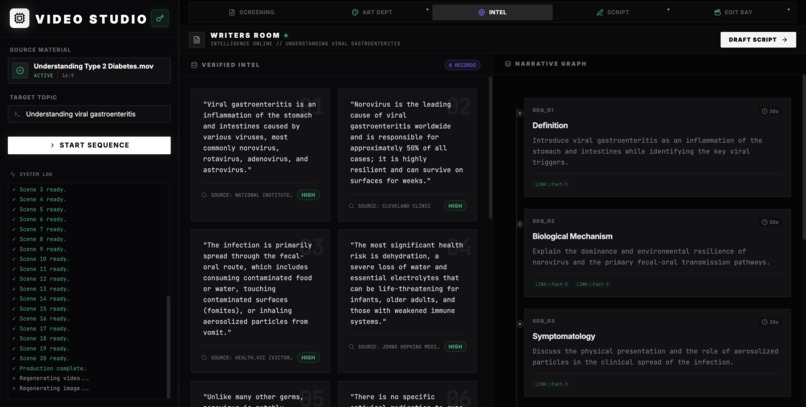

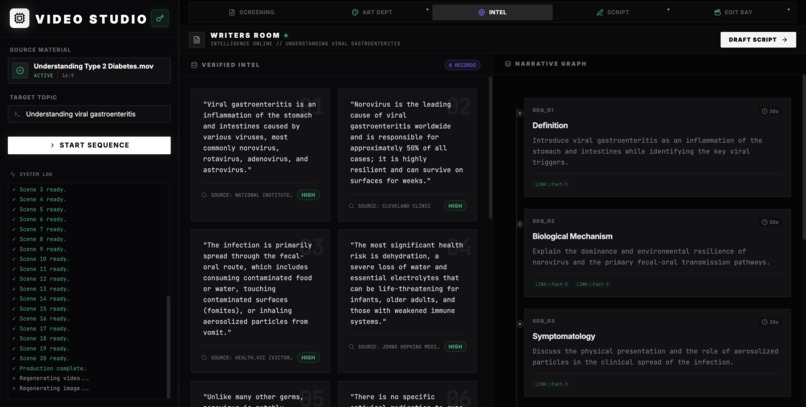

Research Room: Gemini 3 Flash with Google Search Grounding retrieves verified facts, busts myths, and maps out the narrative structure.


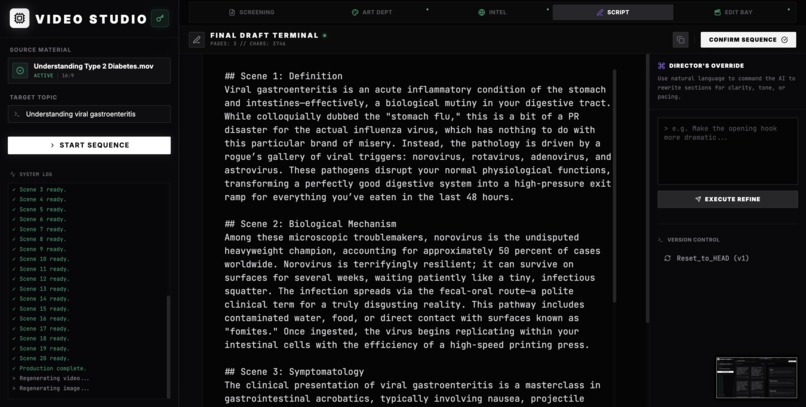

Script Terminal: Generates a production-ready script that matches the reference's specific tone and voice. Includes AI refinement tools.


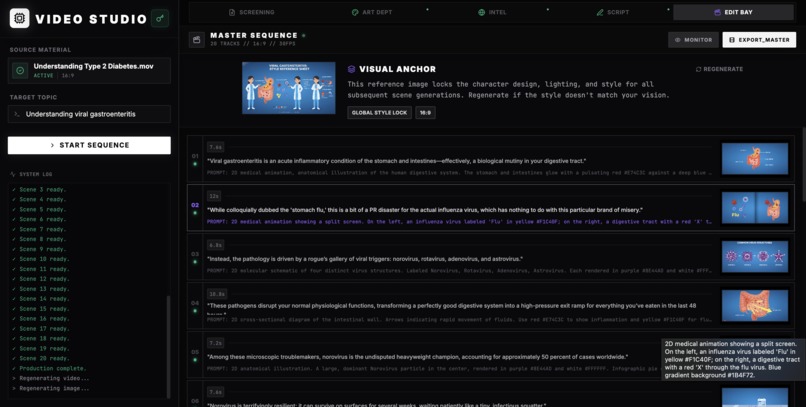

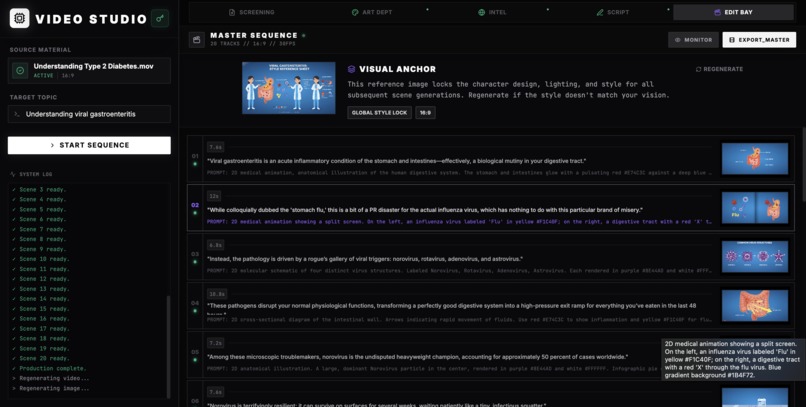

Production Bay: Gemini 3 Pro Image generates consistent keyframes. The browser-based engine renders the final MP4 without servers.

Inspiration

Video Studio was built to showcase what Gemini 3 is uniquely good at: understanding and reproducing how a human explains something, not just what they say.

Many people, especially kids, seniors, and busy learners, rely on short videos to understand the world. But great explainers are rare, and existing AI video tools tend to produce generic clips because they don't model a creator's pacing, tone, or teaching style.

Using Gemini 3's multimodal reasoning, Video Studio watches a single reference video (frames, audio, and transcript) and extracts a structured Style DNA: words per minute, narrative flow, visual language, and similar traits. It then grounds a new topic in Google Search results and maps those facts onto the same style, producing a new episode that feels like it was made by the same creator.

This project demonstrates Gemini 3's core strengths (multimodal understanding, grounded reasoning, and structured generation) applied to a real, high-impact problem: making high-quality educational video scalable without losing the human touch.

What It Does

Video Studio is an AI-native production workstation that turns a single reference video into an open-ended series. It operates through a five-stage pipeline:

Style Extraction (The Art Dept): You upload a reference video (e.g., a fast-paced, Kurzgesagt-style explainer). Using Gemini 3 Pro's multimodal capabilities, the system analyzes the video, audio, and transcript to extract a StyleProfile. It quantifies:

- Words Per Minute (WPM)

- HEX Color Palette

- Pedagogical Approach (e.g., Socratic Method)

- Visual Style mapping
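Because later stages build prompts from this profile, it helps to give the StyleProfile a typed shape and reject malformed model output early. The sketch below is a minimal, hypothetical version: the field names (`wordsPerMinute`, `palette`, `pedagogy`, `visualStyle`) and the validation rules are assumptions for illustration, not the project's actual schema.

```typescript
// Hypothetical shape of the Style DNA returned by the analysis model as JSON.
interface StyleProfile {
  wordsPerMinute: number; // narration pace
  palette: string[];      // HEX colors such as "#1A2B3C"
  pedagogy: string;       // e.g. "Socratic Method"
  visualStyle: string;    // free-text description of the visual language
}

const HEX_COLOR = /^#[0-9A-Fa-f]{6}$/;

// Parse model output and reject profiles that later stages could not use.
function parseStyleProfile(json: string): StyleProfile {
  const raw: unknown = JSON.parse(json);
  if (typeof raw !== 'object' || raw === null) {
    throw new Error('StyleProfile must be a JSON object');
  }
  const p = raw as Record<string, unknown>;
  const wpm = p.wordsPerMinute;
  if (typeof wpm !== 'number' || !Number.isFinite(wpm) || wpm <= 0) {
    throw new Error('wordsPerMinute must be a positive number');
  }
  const palette = p.palette;
  if (!Array.isArray(palette) || palette.length === 0 ||
      !palette.every((c) => typeof c === 'string' && HEX_COLOR.test(c))) {
    throw new Error('palette must be a non-empty list of #RRGGBB colors');
  }
  const pedagogy = p.pedagogy;
  const visualStyle = p.visualStyle;
  if (typeof pedagogy !== 'string' || typeof visualStyle !== 'string') {
    throw new Error('pedagogy and visualStyle must be strings');
  }
  return {
    wordsPerMinute: wpm,
    palette: palette.map((c: string) => c.toUpperCase()), // normalize case
    pedagogy,
    visualStyle,
  };
}
```

Failing fast here means a bad palette surfaces as a clear error instead of as inconsistent keyframe prompts several stages later.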

Agentic Research (The Intel Room): You input a target topic (e.g., Quantum Entanglement). Gemini 3 Flash uses Google Search Grounding to retrieve source-backed facts, bust myths, and build a glossary, so the content rests on real sources and is not just creative.
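When the Google Search tool is enabled, a Gemini response carries grounding metadata next to the text: the sources sit under `candidates[0].groundingMetadata.groundingChunks`, each with a `web.uri` and `web.title`. The sketch below collects those sources so a research step could attach citations to its facts. The local `GroundedResponse` type is a simplified stand-in for the SDK's response type, and `collectSources` is a hypothetical helper.

```typescript
// Simplified subset of a grounded Gemini response (not the SDK's full type).
interface GroundingChunk {
  web?: { uri?: string; title?: string };
}
interface GroundedResponse {
  candidates?: Array<{
    groundingMetadata?: {
      webSearchQueries?: string[];
      groundingChunks?: GroundingChunk[];
    };
  }>;
}

interface Source {
  title: string;
  uri: string;
}

// Collect unique web sources from the first candidate's grounding metadata.
function collectSources(response: GroundedResponse): Source[] {
  const chunks = response.candidates?.[0]?.groundingMetadata?.groundingChunks ?? [];
  const seen = new Set<string>();
  const sources: Source[] = [];
  for (const chunk of chunks) {
    const uri = chunk.web?.uri;
    if (!uri || seen.has(uri)) continue; // skip non-web chunks and duplicates
    seen.add(uri);
    sources.push({ title: chunk.web?.title ?? uri, uri });
  }
  return sources;
}
```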

Narrative Mapping (The Writers Room): Instead of writing a generic script, the system builds a Narrative Map, a beat sheet that forces the new facts into the structural formula of the reference video.
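One simple way to fit new facts into a reference structure is to treat each beat of the reference video as a slot with a share of the runtime and distribute the facts across those slots in order. The sketch below is a hypothetical allocation step, not the project's Narrative Map logic: `Beat`, `share`, and `mapFactsToBeats` are illustrative names, and it uses largest-remainder rounding so every fact is assigned exactly once.

```typescript
interface Beat {
  name: string;  // e.g. "Hook", "Myth", "Reveal"
  share: number; // relative share of the reference runtime spent on this beat
}

interface MappedBeat {
  beat: string;
  facts: string[];
}

// Distribute facts across beats in proportion to each beat's share, using
// largest-remainder rounding so the per-beat counts sum to facts.length.
function mapFactsToBeats(beats: Beat[], facts: string[]): MappedBeat[] {
  const total = beats.reduce((sum, b) => sum + b.share, 0);
  if (beats.length === 0 || total <= 0) throw new Error('beats need positive shares');
  const ideal = beats.map((b) => (b.share / total) * facts.length);
  const counts = ideal.map(Math.floor);
  let remaining = facts.length - counts.reduce((a, c) => a + c, 0);
  // Hand leftover facts to the beats with the largest fractional parts.
  const order = ideal
    .map((v, i) => ({ i, frac: v - Math.floor(v) }))
    .sort((a, b) => b.frac - a.frac);
  for (const { i } of order) {
    if (remaining <= 0) break;
    counts[i] += 1;
    remaining -= 1;
  }
  // Keep facts in research order while slicing them into beats.
  let cursor = 0;
  return beats.map((b, i) => {
    const slice = facts.slice(cursor, cursor + counts[i]);
    cursor += counts[i];
    return { beat: b.name, facts: slice };
  });
}
```

A real Narrative Map would also rewrite each fact in the beat's rhetorical role (hook, myth, payoff); this step only decides which facts land in which beat.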

Auto-Storyboard (The Director): The system generates a shot list. It uses Gemini 3 Pro Image to create a Visual Anchor (reference sheet) that locks in character design and style, then generates high-fidelity keyframes (16:9 or 9:16) for every scene.

Client-Side Rendering (The Edit Bay): A custom browser engine renders the video in real time using HTML5 Canvas and the Web Audio API, applying Ken Burns animations and burning in subtitles. The result is a downloadable MP4 produced without a backend render farm.
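A Ken Burns move is a slow zoom and pan across a still image. The sketch below computes the canvas transform for each frame of one scene; deriving the frame count from the scene's narration length (for example, an `AudioBuffer`'s `duration` in seconds) makes the move end exactly when the audio does. The default scales, the 40 px pan, linear easing, and the function names are assumptions for illustration.

```typescript
interface KenBurnsOptions {
  startScale: number; // zoom at the first frame
  endScale: number;   // zoom at the last frame
  panX: number;       // total horizontal drift in pixels
  panY: number;       // total vertical drift in pixels
}

// At 720p (16:9 or 9:16) a 40 px pan stays inside the zoom margin, so no edge shows.
const DEFAULT_MOVE: KenBurnsOptions = { startScale: 1.0, endScale: 1.15, panX: 40, panY: 0 };

// Frames needed to cover a scene's narration at the given frame rate.
function framesForScene(audioSeconds: number, fps: number): number {
  return Math.max(1, Math.ceil(audioSeconds * fps));
}

// Returns [a, b, c, d, e, f] for ctx.setTransform: scale about the canvas
// centre, then shift by the pan accumulated so far (linear in time).
function kenBurnsTransform(
  frame: number,
  totalFrames: number,
  width: number,
  height: number,
  move: KenBurnsOptions = DEFAULT_MOVE,
): [number, number, number, number, number, number] {
  const t = totalFrames <= 1 ? 1 : Math.min(1, frame / (totalFrames - 1));
  const scale = move.startScale + (move.endScale - move.startScale) * t;
  const e = (width - width * scale) / 2 - move.panX * t;
  const f = (height - height * scale) / 2 - move.panY * t;
  return [scale, 0, 0, scale, e, f];
}
```

In a render loop, `ctx.setTransform(...kenBurnsTransform(i, n, canvas.width, canvas.height))` followed by `ctx.drawImage(image, 0, 0, canvas.width, canvas.height)` would draw frame `i`. Because the per-frame zoom step is derived from `n`, a longer narration line automatically produces a slower move.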

How We Built It

The project is a React application built with Vite and Tailwind CSS, designed with a Cyberpunk Industrial aesthetic to feel like a professional tool, not a toy.

The AI Stack (@google/genai)

- Gemini 3 Pro Preview (`thinkingConfig`): Used for Style DNA analysis. We enabled `thinkingBudget` so the model can “reason” about tone, pacing, and cinematography before outputting the JSON profile.

- Gemini 3 Flash Preview (`googleSearch`): Used for the research phase, because it is fast and can call the Google Search tool for grounding.

- Gemini 3 Pro Image Preview: Used for asset generation, producing high-fidelity images that adhere to complex visual prompts.
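The three model roles above correspond to `generateContent` requests in the `@google/genai` SDK. The sketch below only builds the request objects, so it runs without an API key; passing one to `ai.models.generateContent(...)` on a `GoogleGenAI` client would send it. The model IDs come from this project's stack, while the thinking budget value, the prompt wording, the inline `video/mp4` part (suitable for short clips; larger files usually go through the Files API), and the `imageConfig.aspectRatio` option are assumptions to verify against the current SDK documentation.

```typescript
// Plain request objects in the shape accepted by ai.models.generateContent()
// from @google/genai. Built locally here so the example runs without a key.
type Part = { text: string } | { inlineData: { mimeType: string; data: string } };

interface GenerateRequest {
  model: string;
  contents: Array<{ role: 'user'; parts: Part[] }>;
  config: Record<string, unknown>;
}

// Style DNA: let the model think before emitting a JSON StyleProfile.
function styleAnalysisRequest(videoBase64: string, transcript: string): GenerateRequest {
  return {
    model: 'gemini-3-pro-preview',
    contents: [{
      role: 'user',
      parts: [
        { inlineData: { mimeType: 'video/mp4', data: videoBase64 } },
        { text: `Transcript:\n${transcript}\n\nReturn the StyleProfile as JSON.` },
      ],
    }],
    config: {
      thinkingConfig: { thinkingBudget: 4096 }, // assumed budget value
      responseMimeType: 'application/json',
    },
  };
}

// Research: enable the Google Search tool for grounding.
function researchRequest(topic: string): GenerateRequest {
  return {
    model: 'gemini-3-flash-preview',
    contents: [{ role: 'user', parts: [{ text: `Research "${topic}": key facts, common myths, glossary.` }] }],
    config: { tools: [{ googleSearch: {} }] },
  };
}

// Keyframes: request an image at the storyboard's aspect ratio.
function keyframeRequest(prompt: string, aspectRatio: '16:9' | '9:16'): GenerateRequest {
  return {
    model: 'gemini-3-pro-image-preview',
    contents: [{ role: 'user', parts: [{ text: prompt }] }],
    config: {
      responseModalities: ['IMAGE'],
      imageConfig: { aspectRatio }, // assumed option; check the SDK version in use
    },
  };
}
```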

The Rendering Engine

To keep the app lightweight and privacy-focused, we built a Client-Side Video Renderer (services/videoRenderer.ts). Instead of sending images to a server to stitch into a video, we:

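- Draw images and text to an off-screen `<canvas>`.

- Synthesize audio via the Web Audio API.

- Capture the canvas and audio streams using `MediaRecorder`.

- Package the recording as a downloadable Blob in the browser (MP4 where `MediaRecorder` supports it, WebM otherwise).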

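Code example (capture canvas and audio, then record):

```typescript
// Minimal example: draw frames on a canvas, mix in Web Audio, and record the result.
const canvas = document.createElement('canvas');
canvas.width = 1280; // 720p, 16:9
canvas.height = 720;
const ctx = canvas.getContext('2d')!;
// ... draw frames into ctx ...

// Route synthesized audio into a MediaStream so it is recorded with the video.
const audioCtx = new AudioContext();
const audioDest = audioCtx.createMediaStreamDestination();
// ... connect audio sources to audioDest ...

const stream = new MediaStream([
  ...canvas.captureStream(30).getVideoTracks(), // 30 fps
  ...audioDest.stream.getAudioTracks(),
]);

// Prefer MP4 where the browser can record it; otherwise fall back to WebM.
const mimeType = ['video/mp4', 'video/webm; codecs=vp9,opus', 'video/webm']
  .find((t) => MediaRecorder.isTypeSupported(t)) ?? '';
const mediaRecorder = new MediaRecorder(stream, mimeType ? { mimeType } : undefined);

const chunks: Blob[] = [];
mediaRecorder.ondataavailable = (e) => { if (e.data.size > 0) chunks.push(e.data); };
mediaRecorder.onstop = () => {
  // The Blob type matches whatever container the recorder actually produced.
  const blob = new Blob(chunks, { type: mediaRecorder.mimeType });
  const url = URL.createObjectURL(blob); // use for a download link or upload
};
mediaRecorder.start();
```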
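The renderer also draws text onto the canvas, and burned-in subtitles need long narration lines wrapped to the frame width. The sketch below is a greedy word wrap; `wrapSubtitle` is a hypothetical helper, and its `measure` callback stands in for `ctx.measureText(s).width` so the logic can be tested without a canvas.

```typescript
// Greedy word wrap: add words to the current line until it would exceed maxWidth.
// `measure` returns the rendered width of a string, e.g. s => ctx.measureText(s).width.
function wrapSubtitle(text: string, maxWidth: number, measure: (s: string) => number): string[] {
  const words = text.trim().split(/\s+/).filter((w) => w.length > 0);
  const lines: string[] = [];
  let current = '';
  for (const word of words) {
    const candidate = current ? `${current} ${word}` : word;
    if (current && measure(candidate) > maxWidth) {
      lines.push(current); // line is full; start a new one with this word
      current = word;
    } else {
      current = candidate; // a single over-long word still gets its own line
    }
  }
  if (current) lines.push(current);
  return lines;
}
```

Each returned line could then be drawn with `ctx.fillText(line, x, y)` at increasing `y` offsets near the bottom of the frame.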

Challenges We Ran Into

The "Generic AI" Look: Early images looked like stock AI art. Solution: We implemented a Visual Anchor workflow. We first generate a Character Sheet from the Style DNA, then include that image in the context of every scene-generation prompt to enforce visual consistency.
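In practice, a Visual Anchor means every scene request carries the same character-sheet image as a part ahead of the scene's own description. The sketch below assembles such a `contents` array in the part format used by `@google/genai` (`inlineData` with a `mimeType` and base64 `data`); `buildScenePrompt`, the `Scene` and `AnchorImage` fields, and the instruction wording are hypothetical.

```typescript
interface Scene {
  index: number;       // zero-based position in the shot list
  description: string; // what this keyframe should show
}

interface AnchorImage {
  mimeType: string;    // e.g. "image/png"
  base64: string;      // the character/style sheet generated first
}

type Part = { text: string } | { inlineData: { mimeType: string; data: string } };

// Every scene request carries the same anchor image plus the shared palette,
// so the image model sees one fixed reference for characters and style.
function buildScenePrompt(
  scene: Scene,
  anchor: AnchorImage,
  palette: string[],
): Array<{ role: 'user'; parts: Part[] }> {
  return [{
    role: 'user',
    parts: [
      { inlineData: { mimeType: anchor.mimeType, data: anchor.base64 } },
      {
        text:
          'Use the attached reference sheet for character design and art style. ' +
          `Restrict colors to this palette: ${palette.join(', ')}. ` +
          `Scene ${scene.index + 1}: ${scene.description}`,
      },
    ],
  }];
}
```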

Rate Limits & Quotas: Gemini 3 Pro Image is powerful but rate-limited. Solution: We built a robust `withRetry` utility that handles `429` (Quota Exceeded) and `403` (Permission Denied) errors. It retries with exponential backoff and automatically falls back to `gemini-2.5-flash-image` if the Pro model is unavailable, so the user's workflow continues.

Audio/Visual Sync: Syncing dynamically generated TTS with pans and zooms in the browser is complex. Solution: We calculate the exact duration of each scene's audio buffer before rendering frames, then adjust the zoom speed (pixels per frame) so visual cuts align with sentence boundaries.
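A retry helper of the kind described above can be sketched as a wrapper that retries on `429` with exponential backoff and moves to a fallback model when the primary one is refused. This is a hypothetical version, not the project's `withRetry`: it assumes errors expose a numeric HTTP `status` field, passes the model name into the wrapped call, and lets the caller choose the attempt count and base delay.

```typescript
interface RetryOptions {
  models: string[];    // primary first, then fallbacks
  maxAttempts: number; // attempts per model
  baseDelayMs: number; // first backoff delay; doubles on each retry
  sleep?: (ms: number) => Promise<void>;
}

const defaultSleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Assumes API errors carry a numeric `status` (e.g. 429, 403).
function statusOf(err: unknown): number | undefined {
  const s = (err as { status?: unknown } | null)?.status;
  return typeof s === 'number' ? s : undefined;
}

// Retries `call` on 429 with exponential backoff; on 403, or when the attempts
// for a model run out, moves on to the next model in the list.
async function withRetry<T>(call: (model: string) => Promise<T>, opts: RetryOptions): Promise<T> {
  const sleep = opts.sleep ?? defaultSleep;
  let lastError: unknown;
  for (const model of opts.models) {
    for (let attempt = 0; attempt < opts.maxAttempts; attempt++) {
      try {
        return await call(model);
      } catch (err) {
        lastError = err;
        const status = statusOf(err);
        if (status === 403) break;     // refused on this model: fall back
        if (status !== 429) throw err; // unrelated error: surface it
        if (attempt < opts.maxAttempts - 1) {
          await sleep(opts.baseDelayMs * 2 ** attempt); // base, 2x base, 4x base, ...
        }
      }
    }
  }
  throw lastError;
}
```

A call site might look like `withRetry((model) => generateKeyframe(model, prompt), { models: ['gemini-3-pro-image-preview', 'gemini-2.5-flash-image'], maxAttempts: 3, baseDelayMs: 500 })`, where `generateKeyframe` is a hypothetical function that sends the image request. Injecting `sleep` keeps the backoff testable without real delays.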

Accomplishments We're Proud Of

- Multimodal Style Cloning: we copy not just a video's content but its soul.

- Serverless Rendering: we generate 720p/30fps videos entirely in the browser, with no render server.

- Structured "Thinking": we use Gemini 3's thinking tokens to output complex JSON structures (like Narrative Maps) that actually follow storytelling logic.

What We Learned

- Grounding is non-negotiable. Educational videos must be accurate; integrating the Google Search tool elevated scripts from plausible to verified.

- Style is quantifiable. Style = WPM + HEX palette + sentence structure. Once quantified, it becomes reproducible.
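To make "Style = WPM + HEX palette + sentence structure" concrete, the transcript-derived parts can be computed directly. The sketch below counts words per minute and mean words per sentence from a transcript and its duration; `transcriptStats` is a hypothetical helper, the sentence-splitting rule (split on `.`, `!`, `?`) is a simplifying assumption that mishandles decimals and abbreviations, and the palette would come from the visual side of the analysis.

```typescript
interface TranscriptStats {
  wordsPerMinute: number;
  meanWordsPerSentence: number;
  sentenceCount: number;
}

// Crude text metrics: words are whitespace-separated tokens, and a sentence
// is any non-empty run of text between '.', '!' or '?'.
function transcriptStats(transcript: string, durationSeconds: number): TranscriptStats {
  if (durationSeconds <= 0) throw new Error('duration must be positive');
  const words = transcript.split(/\s+/).filter((w) => w.length > 0);
  const sentences = transcript
    .split(/[.!?]+/)
    .map((s) => s.trim())
    .filter((s) => s.length > 0);
  return {
    wordsPerMinute: (words.length * 60) / durationSeconds,
    meanWordsPerSentence: sentences.length ? words.length / sentences.length : 0,
    sentenceCount: sentences.length,
  };
}
```

Comparing these numbers between the reference transcript and a generated script gives a quick check that the new episode keeps the original's pacing.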

What's Next for Video Studio

- Veo Integration: Replace panned-image simulations with real motion video generation per scene.

- Voice Cloning: Integrate services (e.g., ElevenLabs) to clone the narrator's voice from the reference video.

- YouTube API: One-click publishing to the creator’s channel.

Built With

- gemini-3-flash-preview

- gemini-3-pro-image-preview

- gemini-3-pro-preview

- google-gemini-api

- google-search-grounding

- html5-canvas-api

- mediarecorder-api

- react-19

- tailwind-css

- typescript

- vite

- web-audio-api
