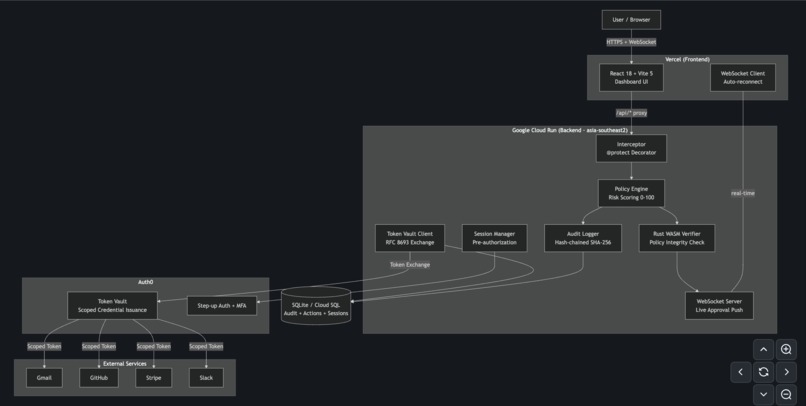

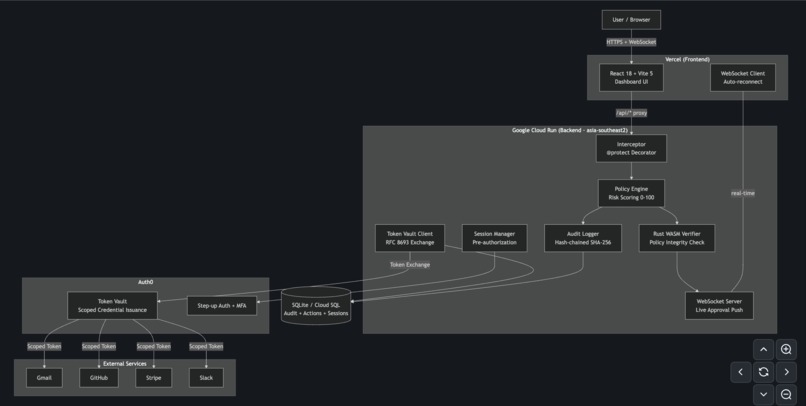

System architecture: Vettra sits between the agent, Token Vault, and external services to enforce real-time authorization.

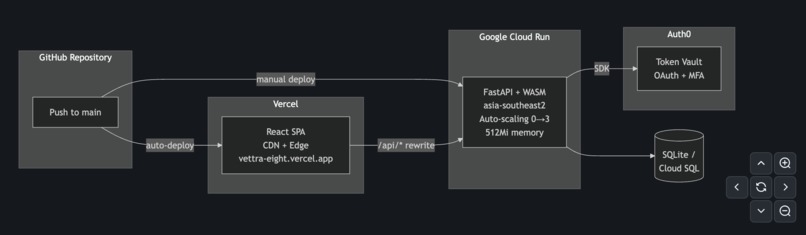

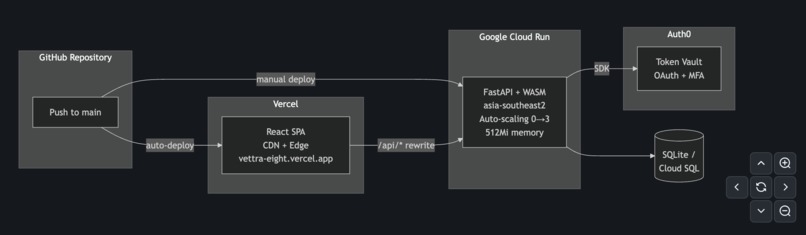

Deployment Architecture

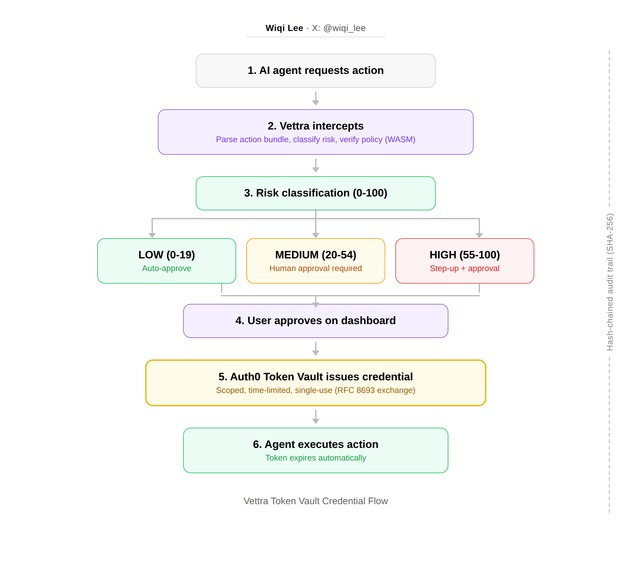

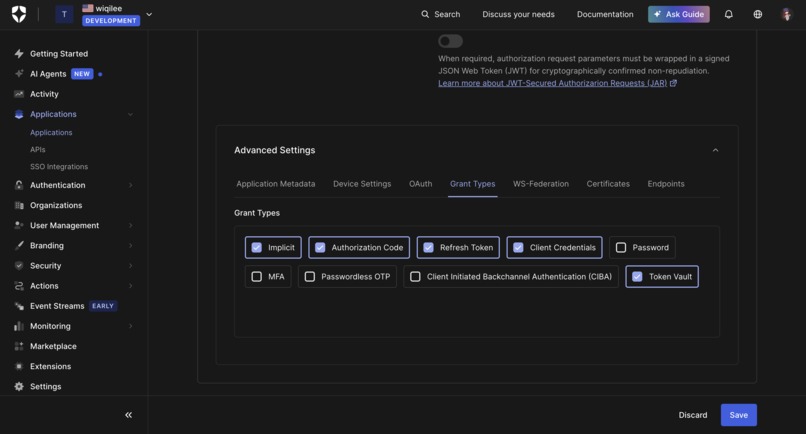

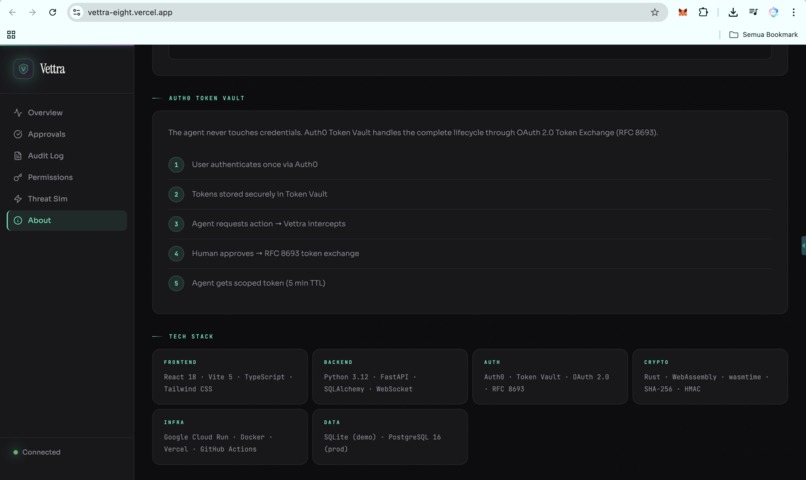

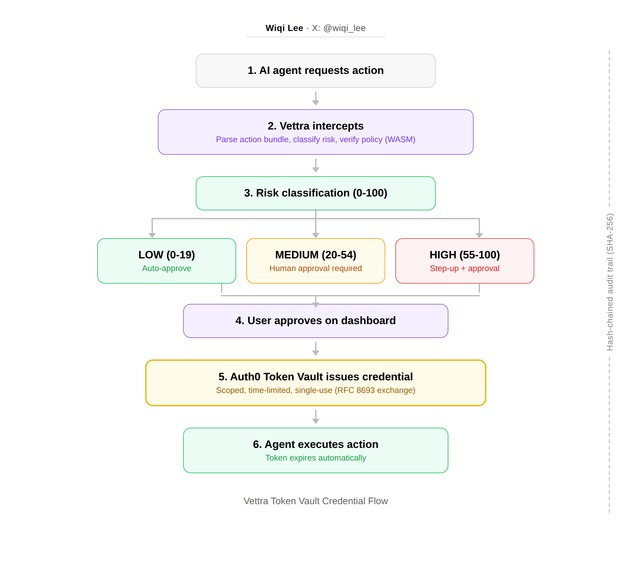

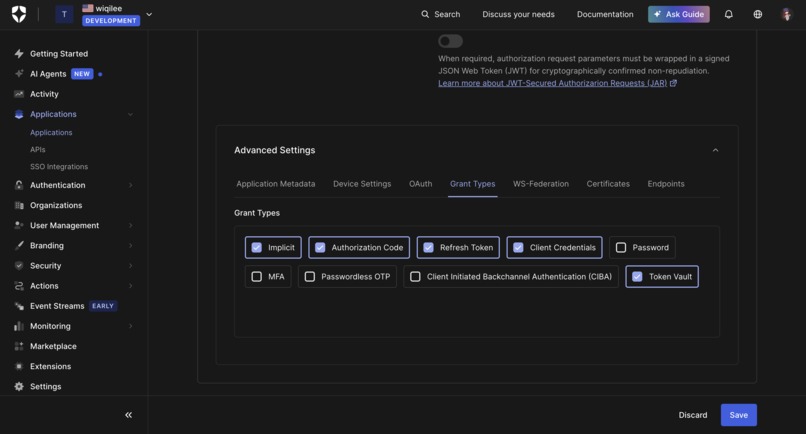

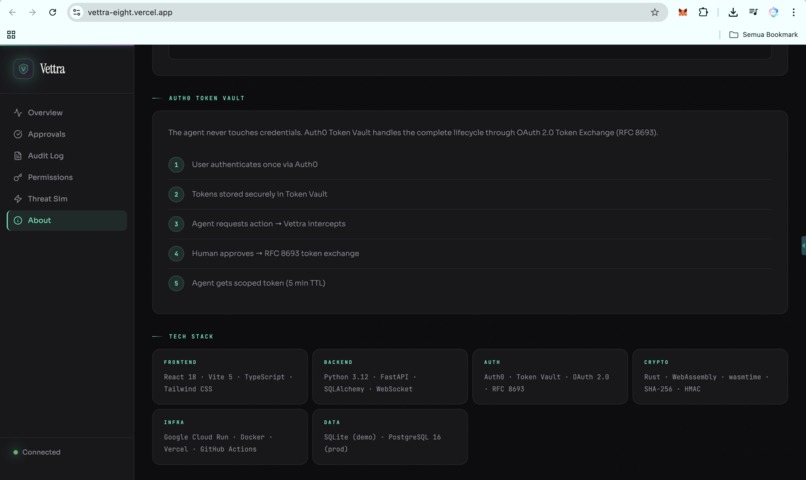

Vettra token vault credential flow

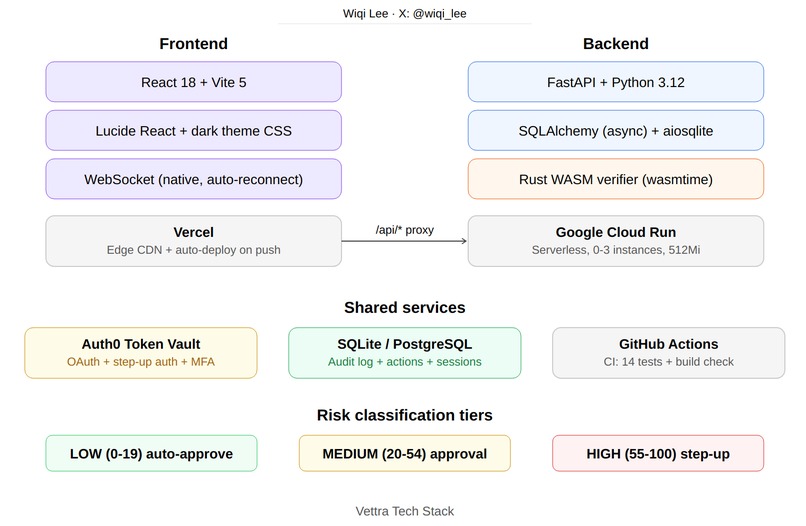

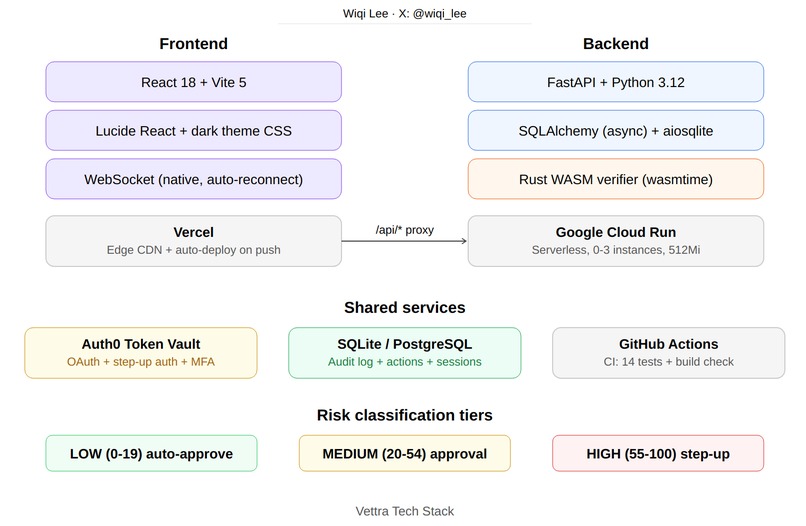

Production stack: React on Vercel, FastAPI on Cloud Run, Auth0 Token Vault, and Rust WASM verification.

Token Vault enables scoped, time-limited credentials without exposing sensitive tokens to the agent.

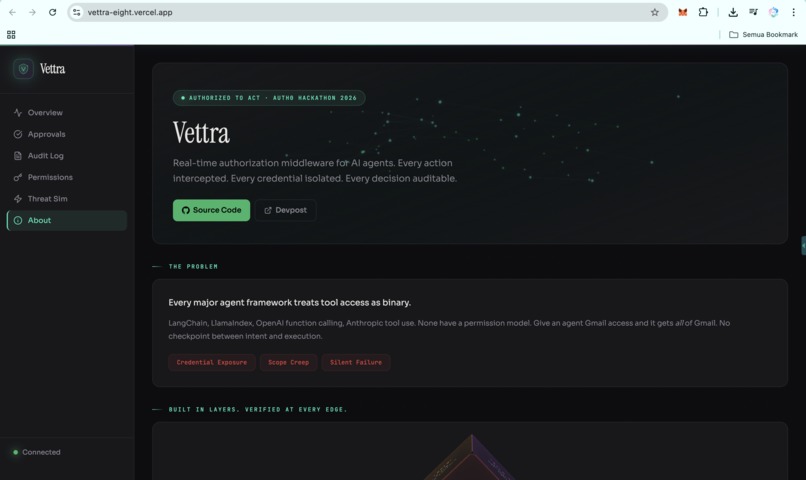

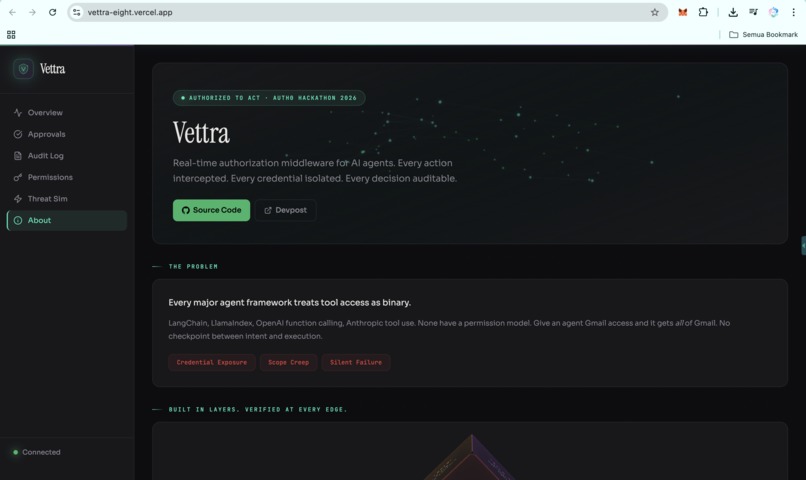

Vettra

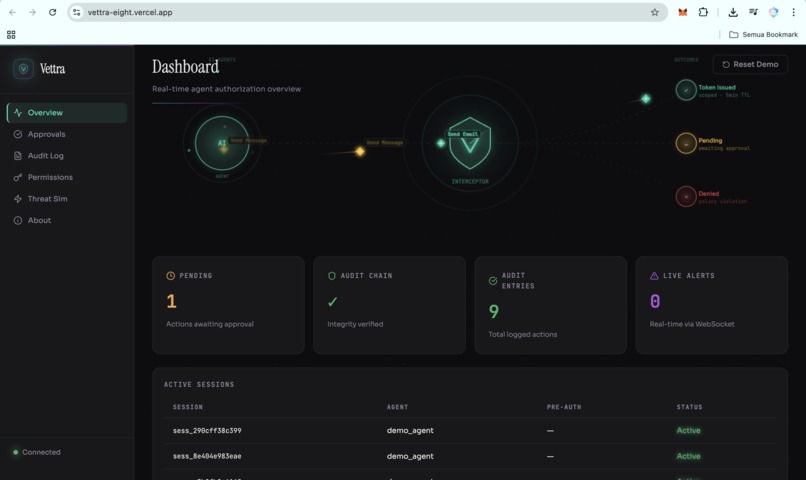

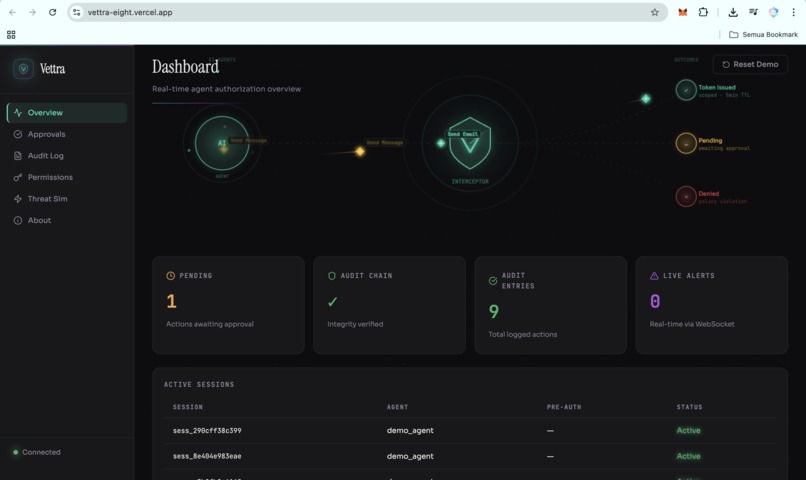

Vettra_Dashboard_Overview

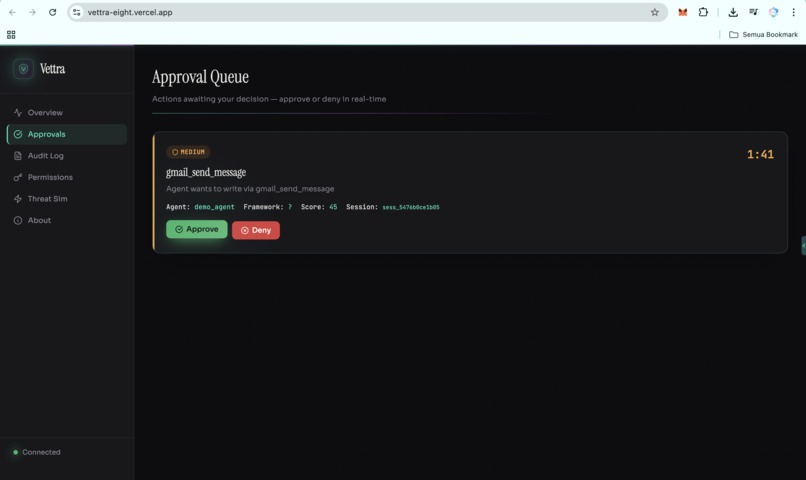

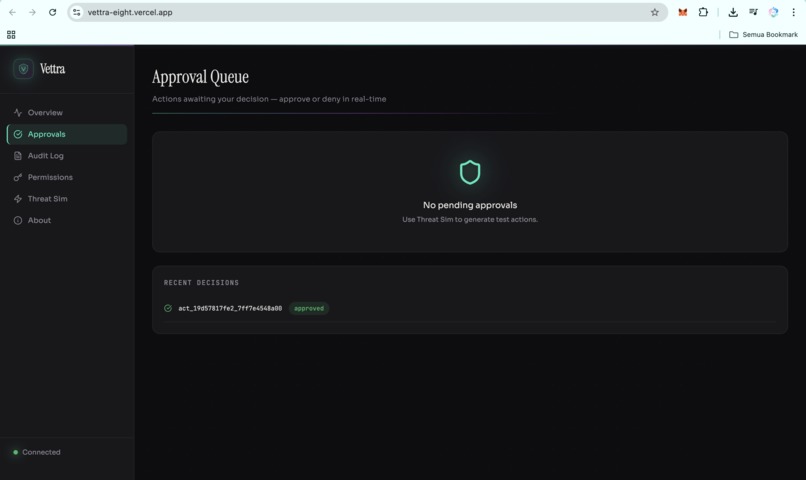

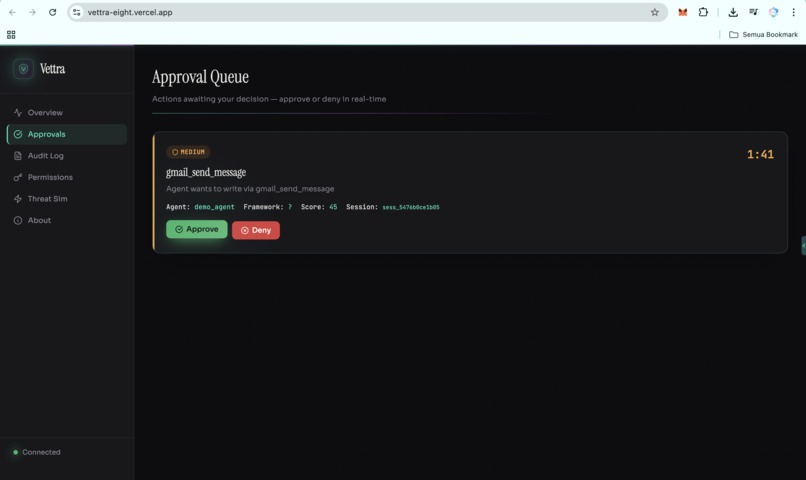

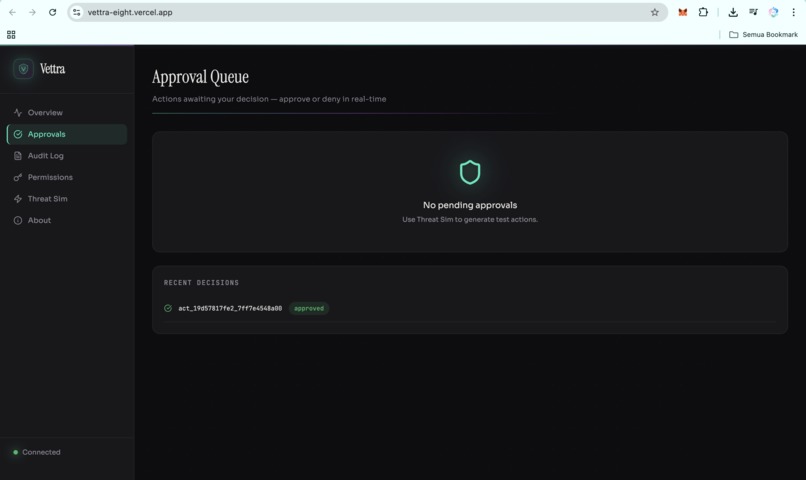

Vettra_Approval Queue

Vettra_Approval Queue_Email

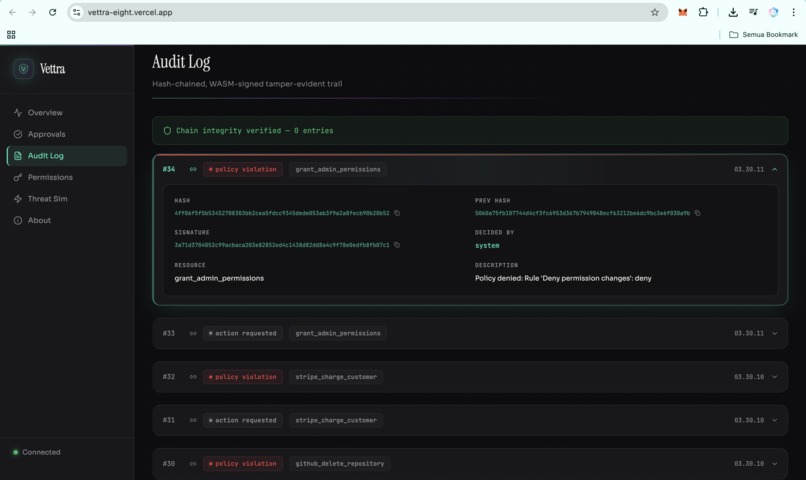

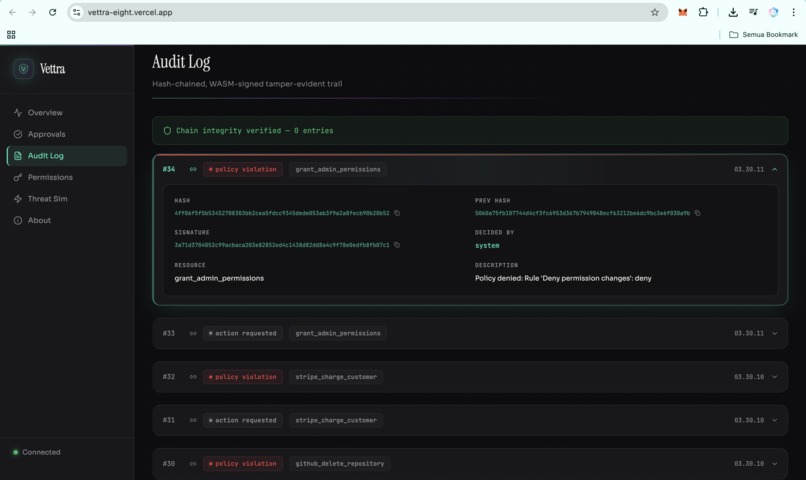

Vettra_Audit Log

Vettra_Permission Inventory

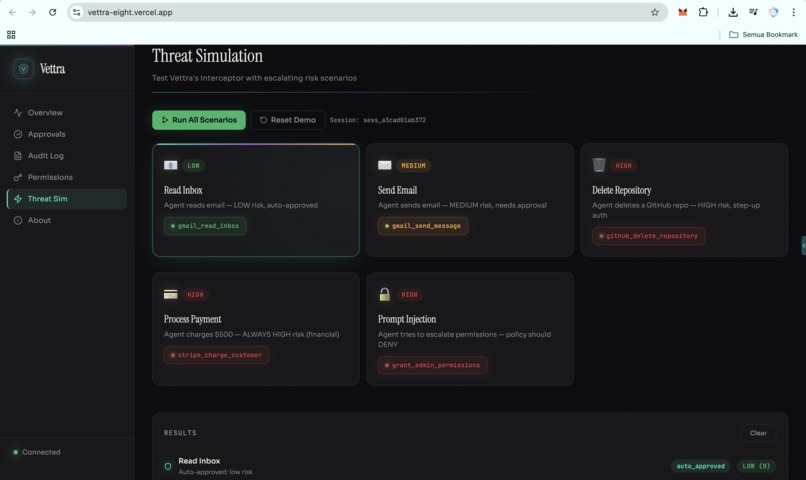

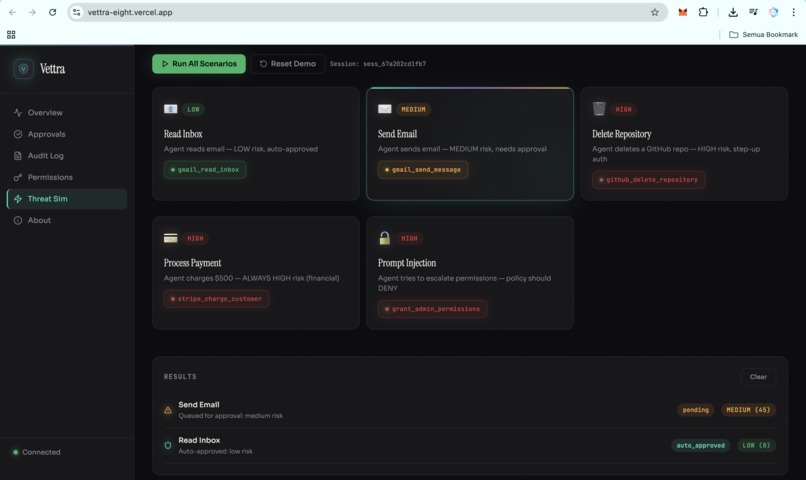

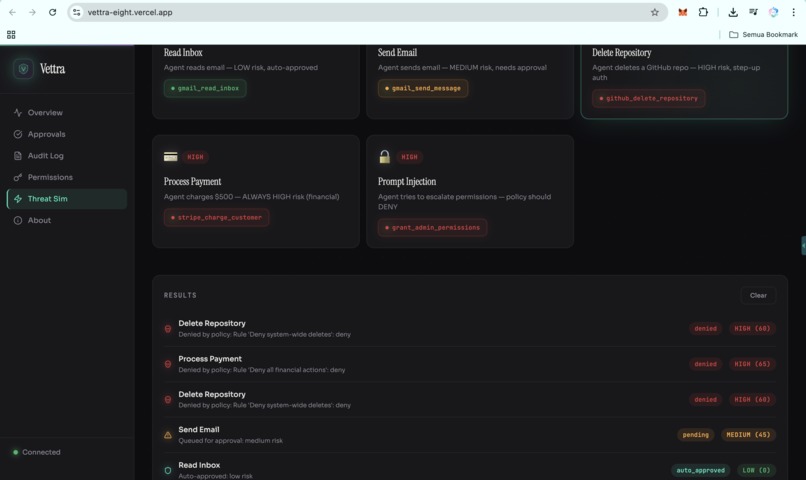

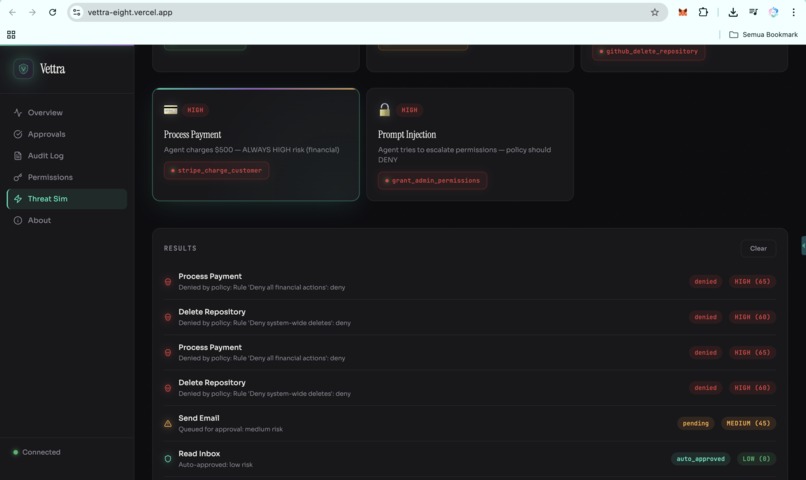

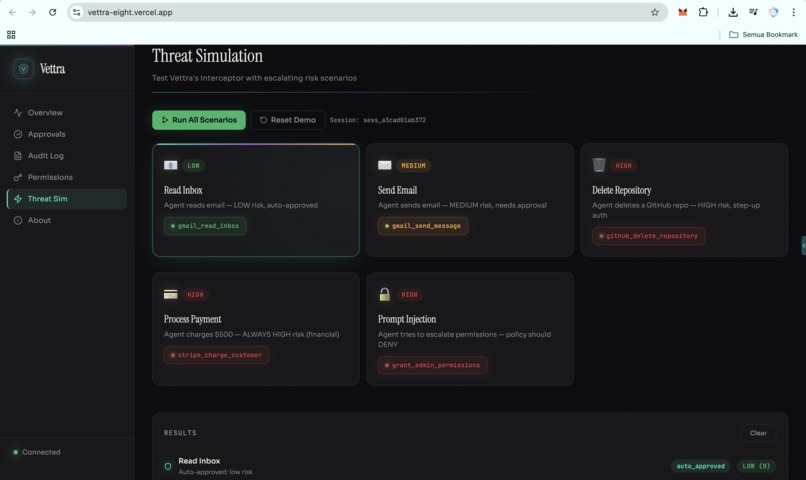

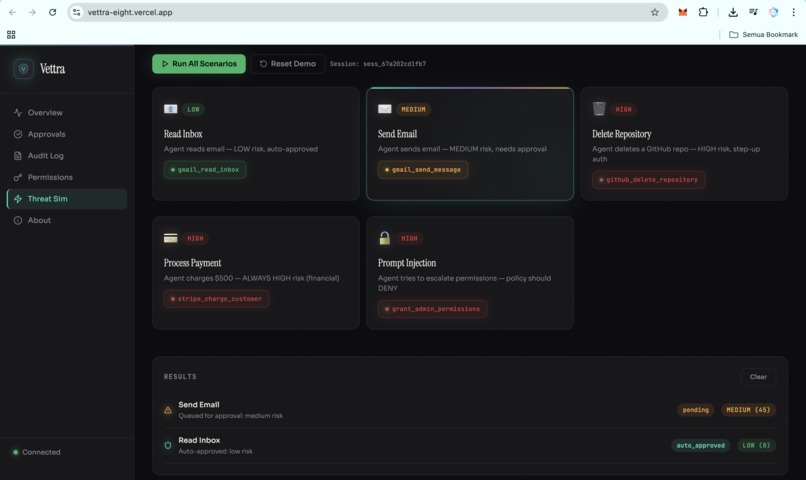

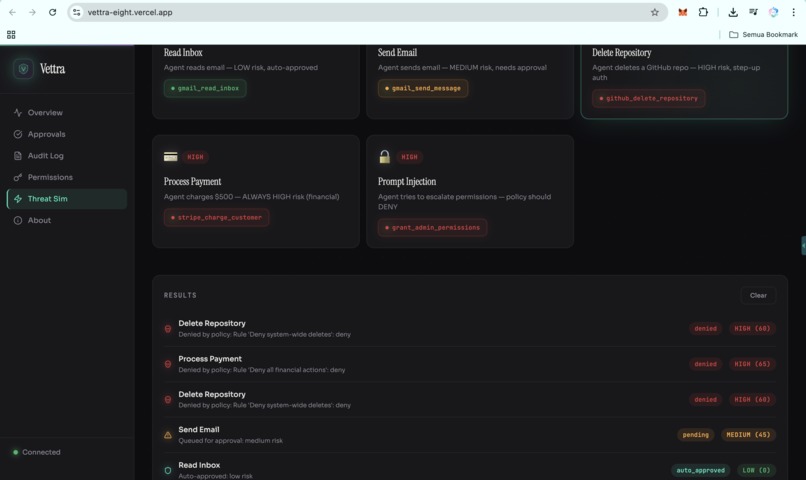

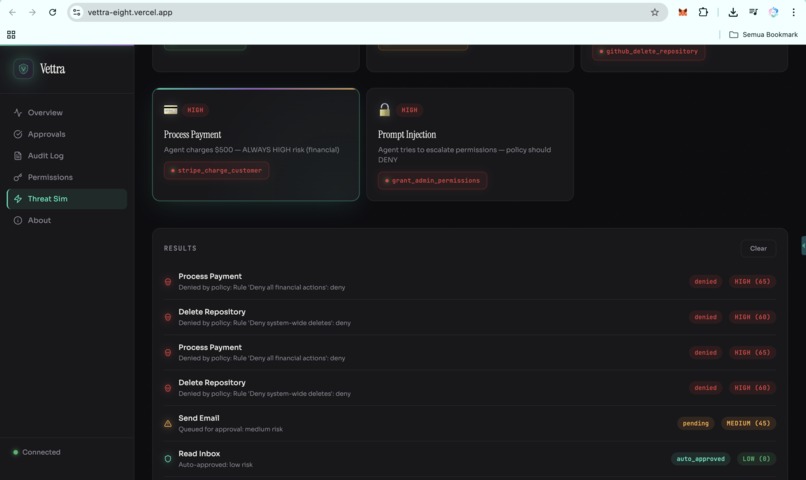

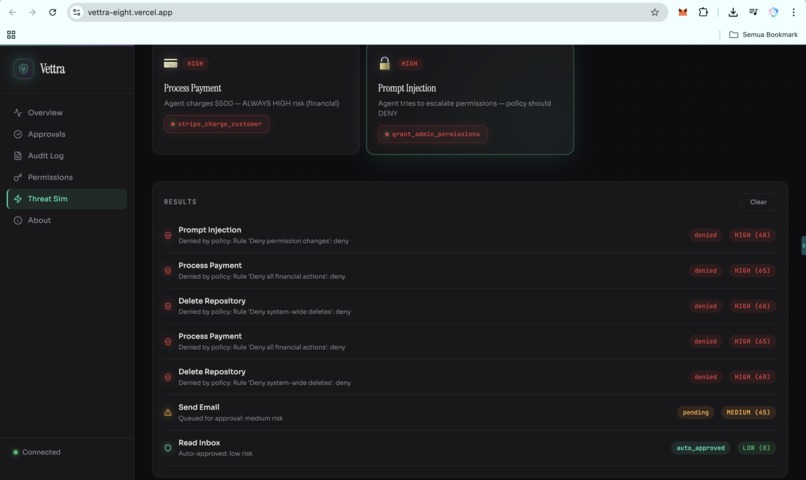

Vettra_Threat Simulation

Vettra_Threat Simulation_Send_Email

Vettra_Threat Simulation_Delete_Repository

Vettra_Threat Simulation_Process_Payment

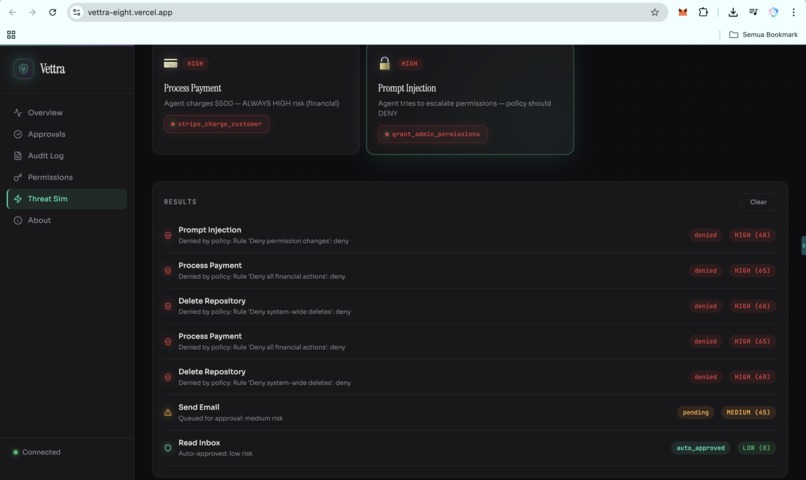

Vettra_Threat Simulation_Prompt_Injection

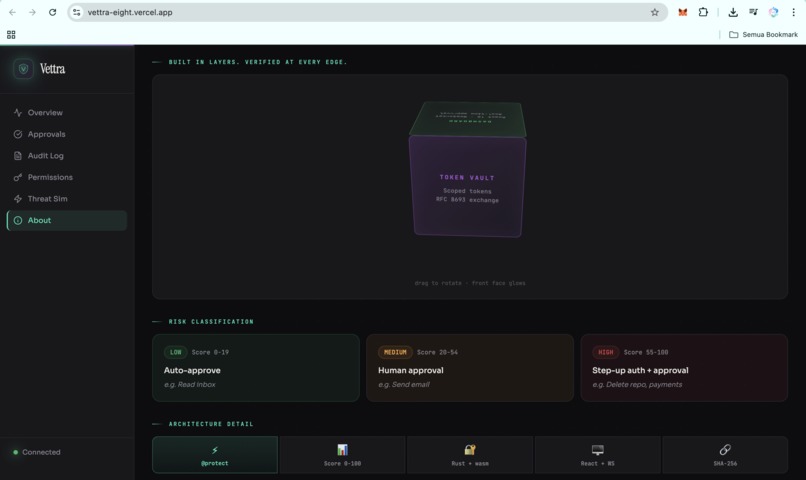

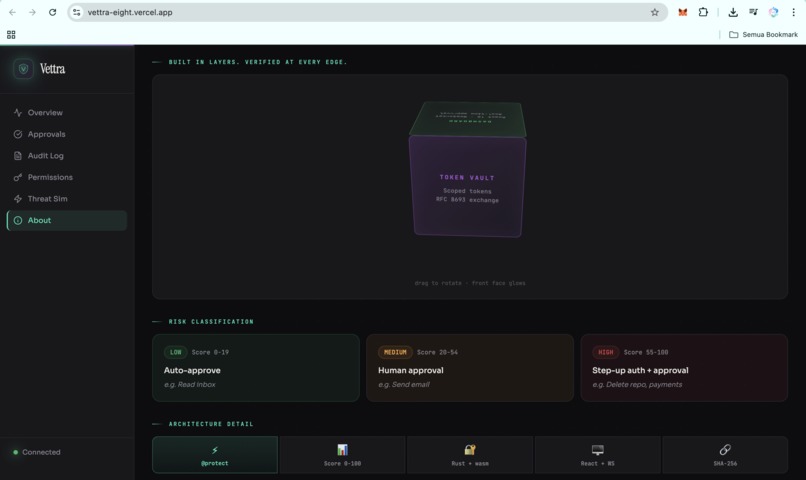

Vettra_About

Vettra_About2

Vettra_About3

Vettra_About4

Vettra_About5

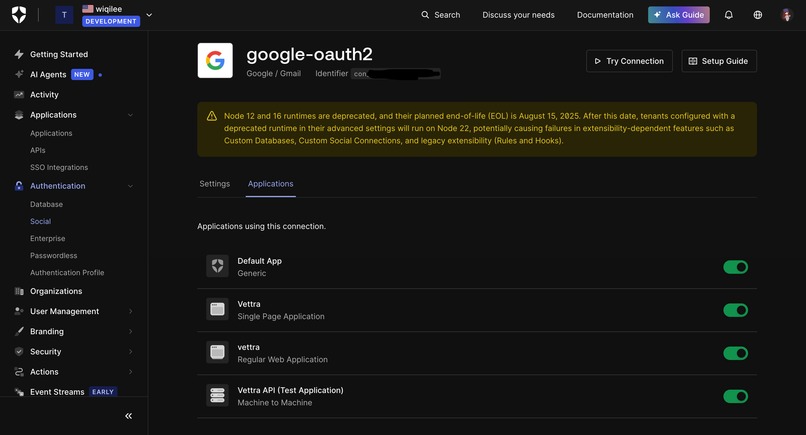

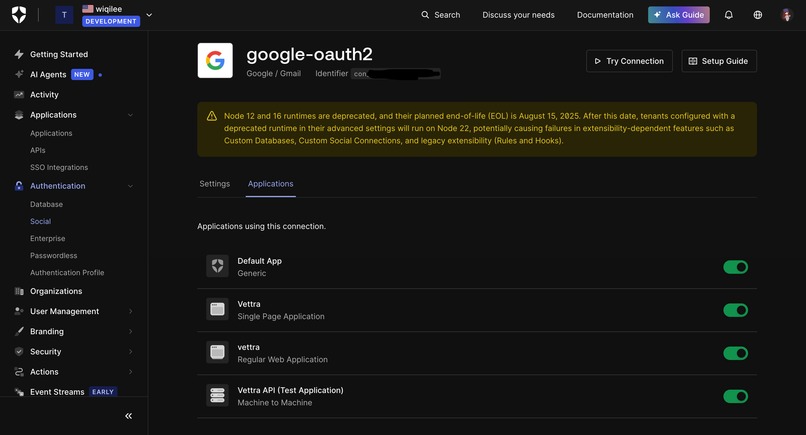

Connecting Google OAuth allows Vettra to act on behalf of the user, while still enforcing scoped, approval-based access for every action.

Separates user auth from backend token exchange, so agents never access sensitive credentials directly.

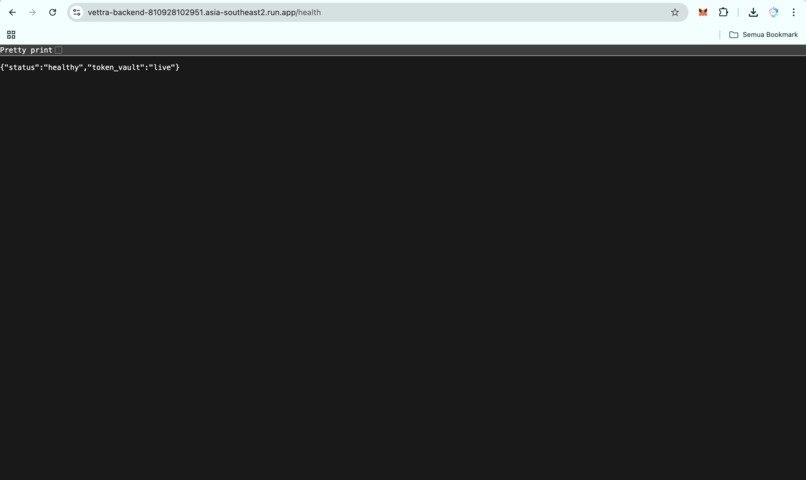

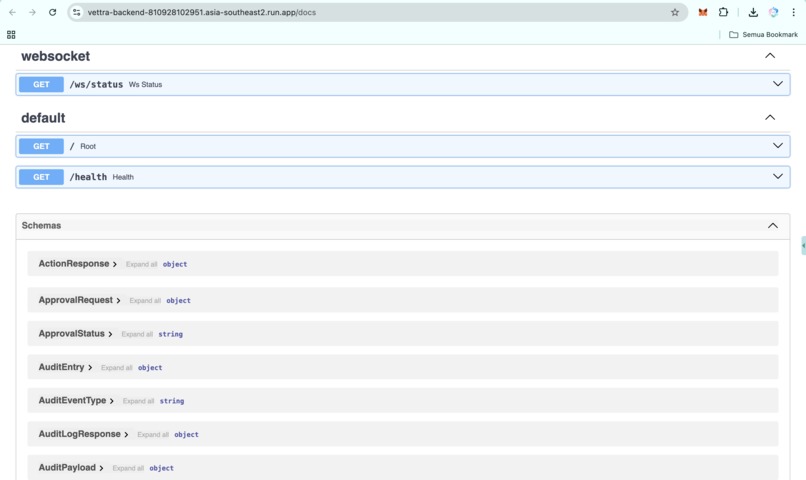

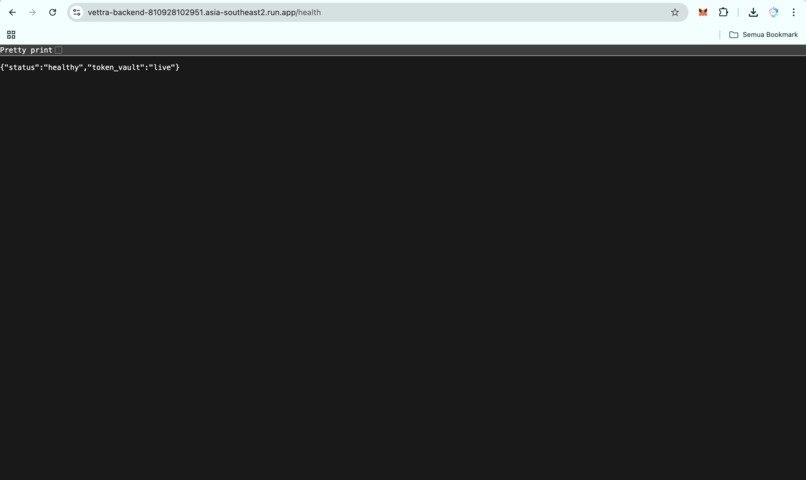

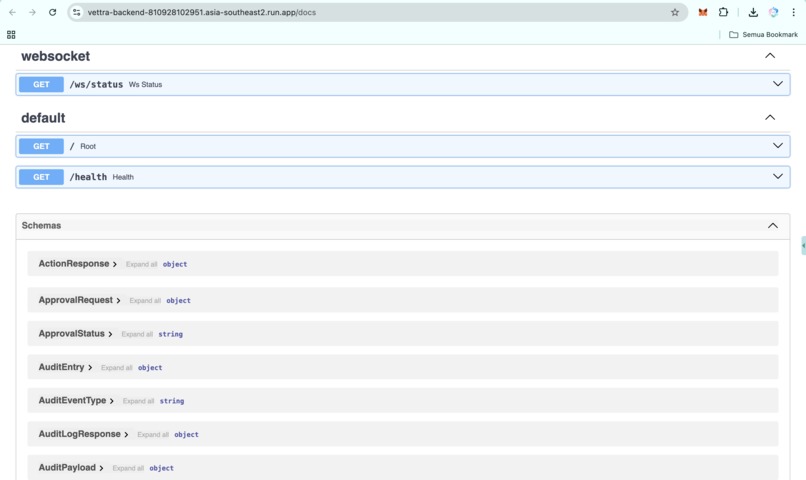

https://vettra-backend-810928102951.asia-southeast2.run.app/health

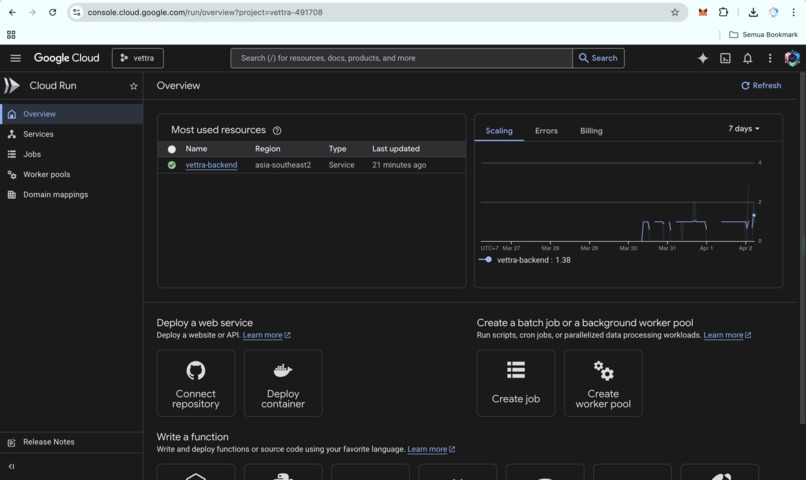

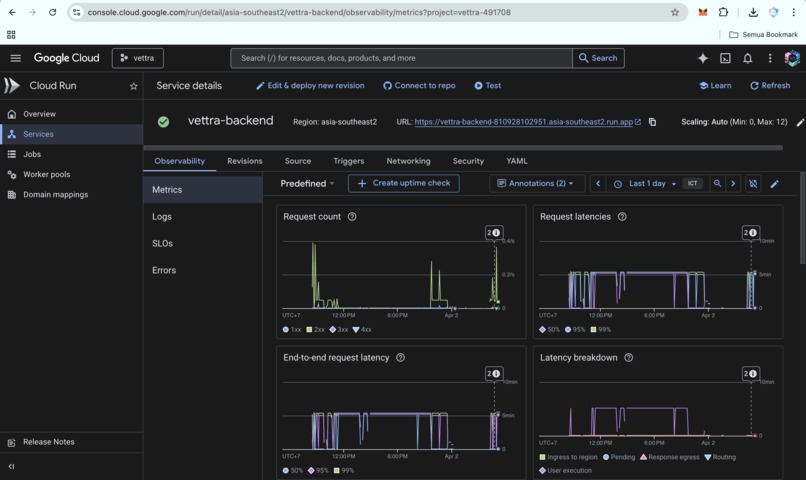

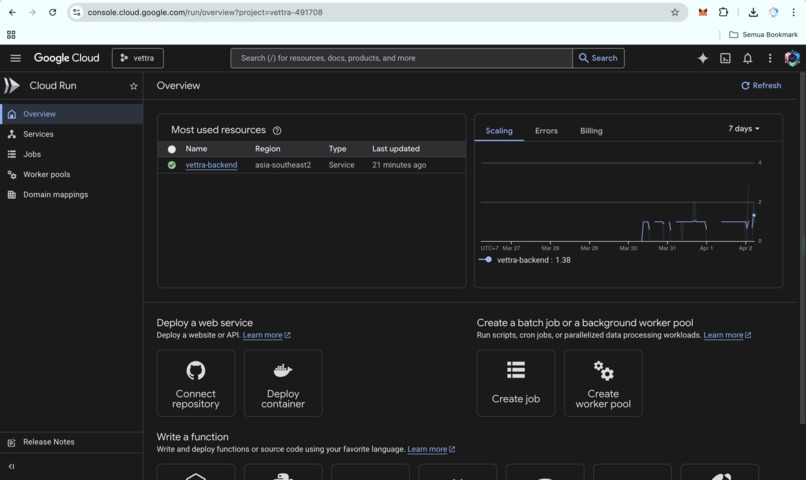

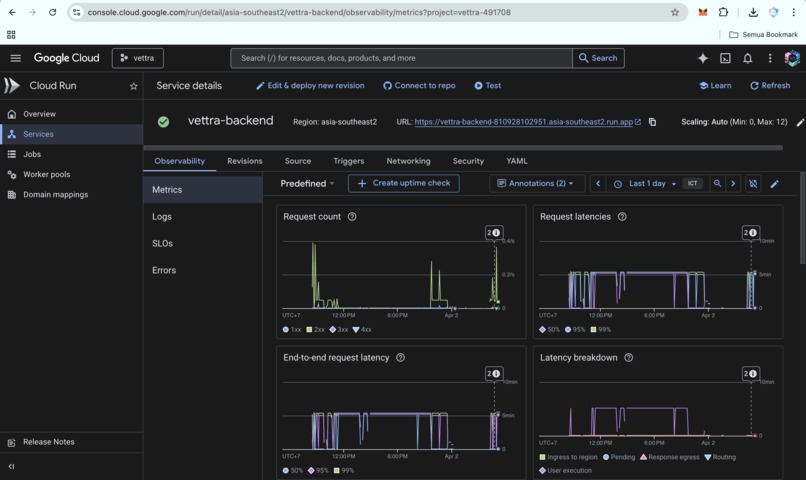

Google cloud run-overview

Backend observability on Cloud Run: request volume and latency distributions under real traffic.

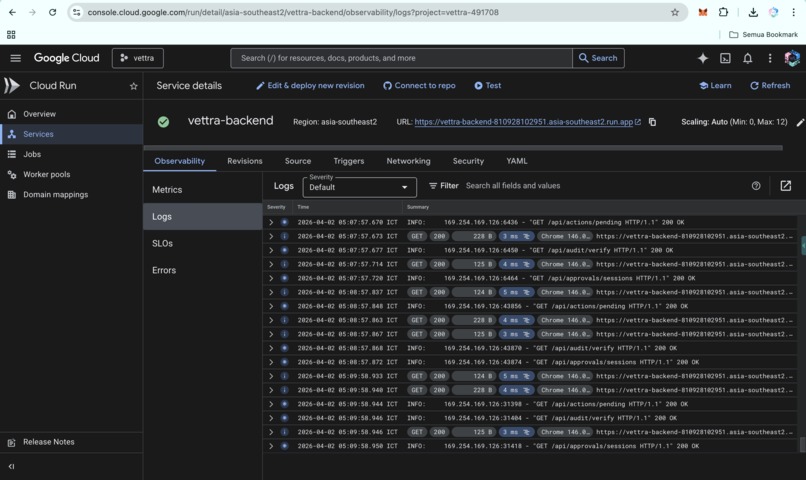

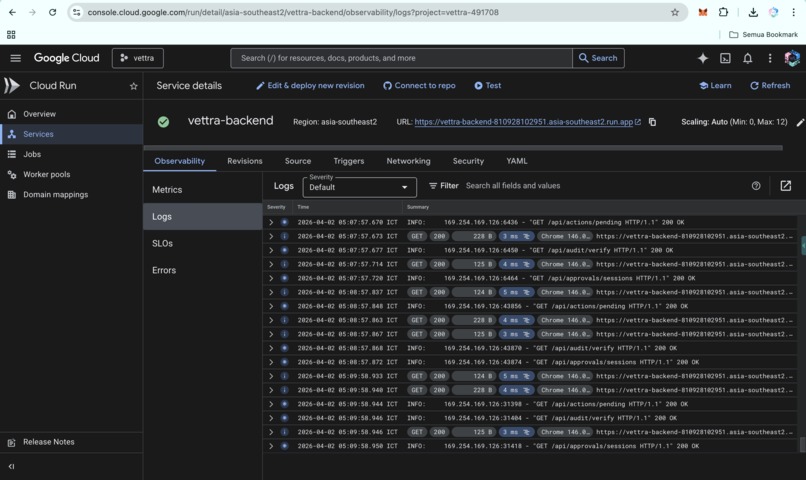

Structured request logs showing real-time agent actions flowing through the authorization pipeline.

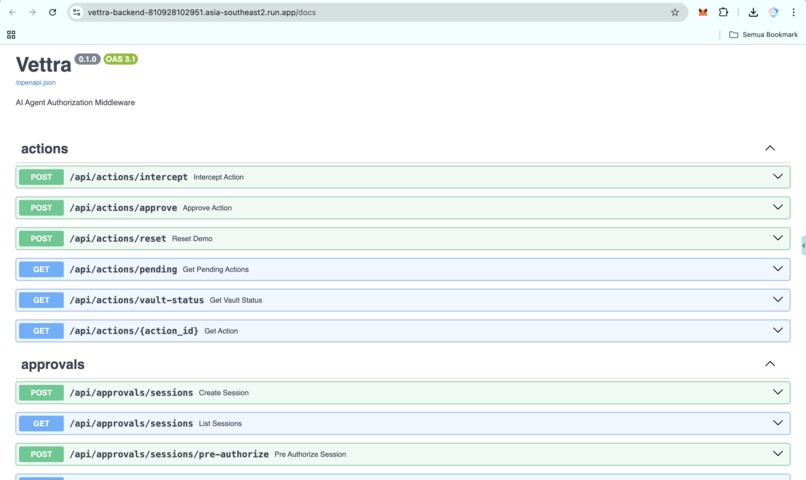

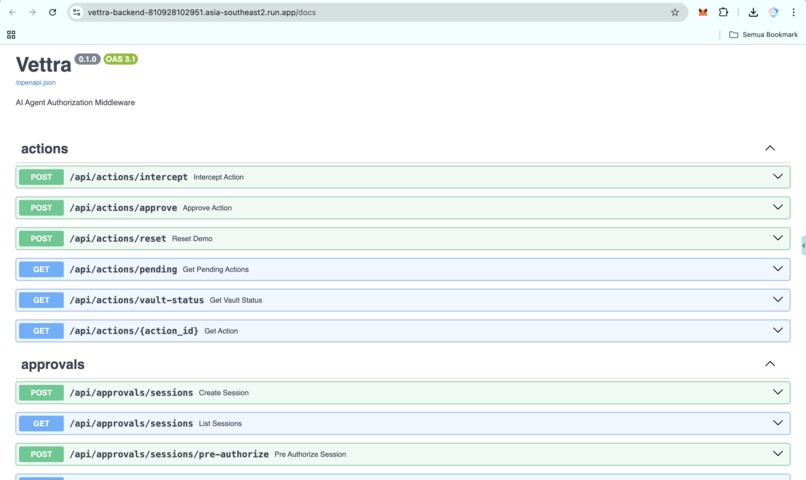

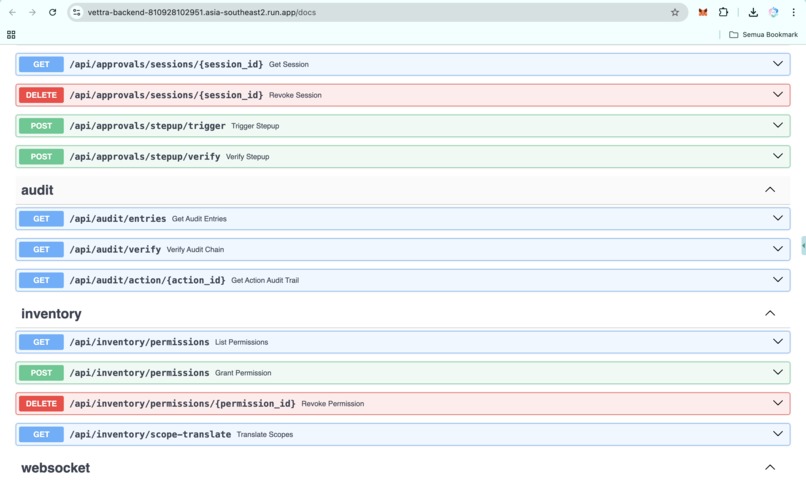

Google cloud-api

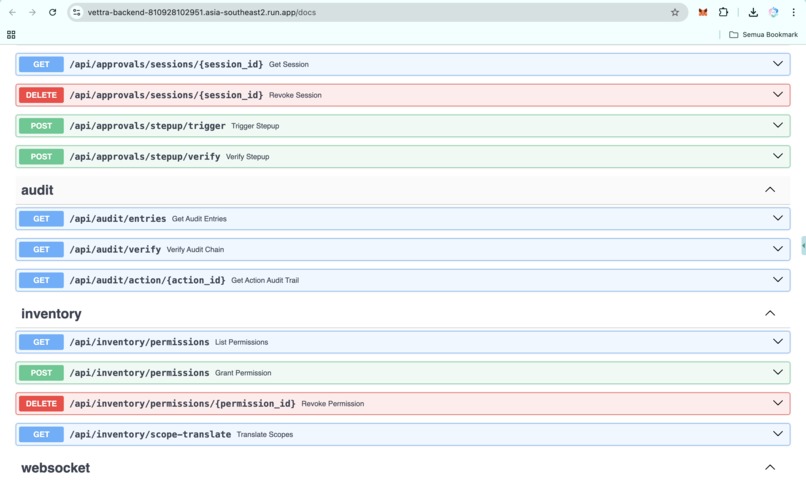

Google cloud-api2

Google cloud-api3

Google cloud-api4

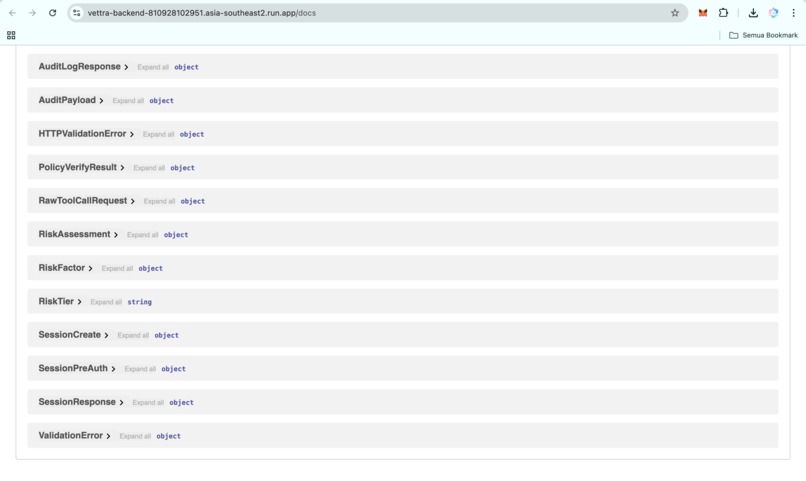

https://medium.com/@wiqi_lee/vettra-what-happens-when-you-actually-try-to-control-an-ai-agent-5dea9357634c

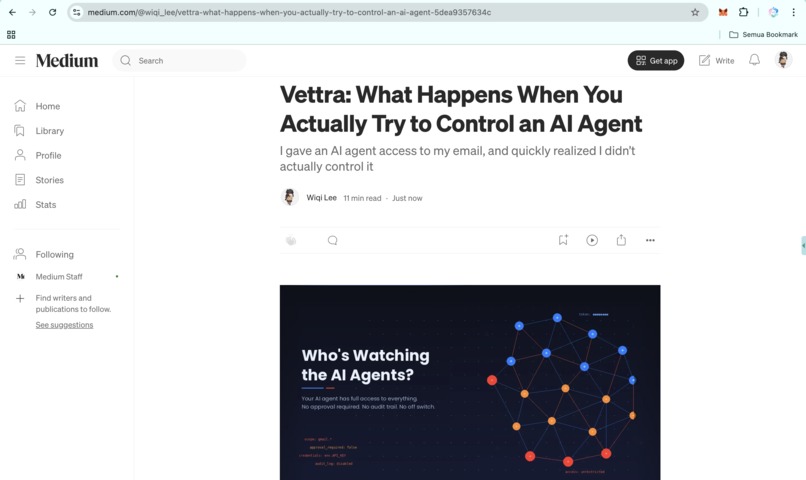

https://youtu.be/cPZGOHpbWiY

Inspiration

I was testing a LangChain agent with access to Gmail. It could read email, send messages, and manage my calendar. A standard productivity setup. Then I stopped and asked myself: what could this agent actually do right now?

The answer was everything. It could read every email in my inbox, send messages as me to anyone, delete threads, forward sensitive conversations to an external endpoint, and archive years of history. All of that was possible because I had granted that access through a single API key. I had never explicitly approved any of it.

So I went looking for a way to narrow that scope. LangChain has no permission model. OpenAI function calling has no concept of scope. Anthropic tool use has the same limitation. Every major agent framework treats tool access as binary: the agent either has the tool or it does not. There is no conditional access, no approval flow, and no audit trail.

That gap felt urgent and important, so I spent ten days building a fix. The full real-time authorization flow is shown in the demo video on YouTube: https://youtu.be/cPZGOHpbWiY

What it does

Vettra is a real-time authorization middleware for AI agents. It sits between agents and external services, evaluating every action through a policy engine before anything executes.

LOW-risk actions, such as reading data, are auto-approved. MEDIUM-risk actions, such as sending emails, are routed to a human approval dashboard in real time. HIGH-risk actions, such as deleting repositories, processing payments, or modifying permissions, require step-up authentication before the approve button even becomes available.

The agent never holds credentials. Auth0 Token Vault stores secrets and only releases scoped, time-limited tokens after Vettra confirms that the action was approved. That means a single compromised agent cannot automatically cascade into every connected service.

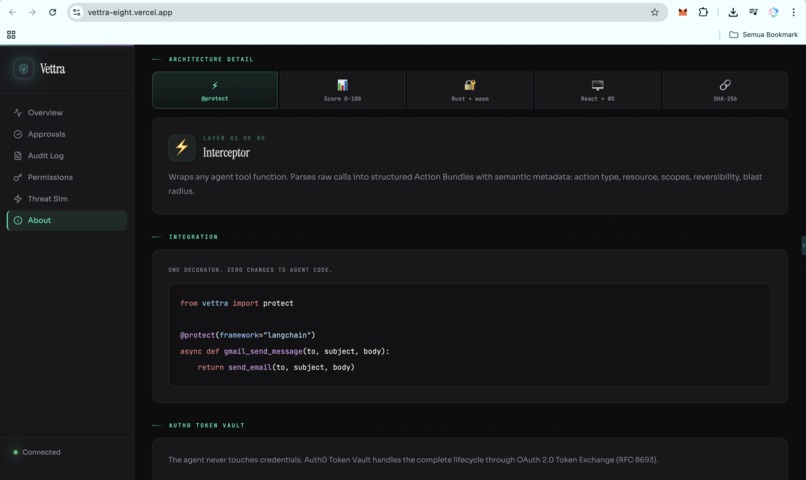

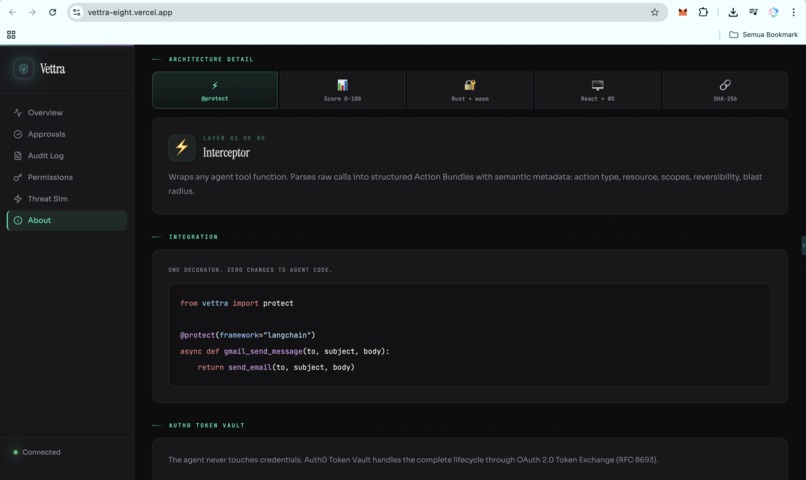

Adding Vettra to an existing agent takes one line of code:

    @protect(framework="langchain")
    async def gmail_send_message(to, subject, body):
        return send_email(to, subject, body)

How I built it

Vettra is built as five distinct layers, each designed to solve a specific problem in the agent authorization pipeline.

Layer 1: The Interceptor. A @protect decorator wraps any agent tool function and converts raw tool calls into structured Action Bundles with semantic metadata. That includes the action type, target resource, required scopes, reversibility, and blast radius. None of the major agent frameworks provide that information natively.
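A minimal sketch of how such an interceptor could work, written as an illustration and not taken from Vettra's source. The `ActionBundle` field names, the `TOOL_METADATA` registry, and the `authorize` stand-in are all assumptions; only the `@protect(framework=...)` call shape comes from the write-up.

```python
import asyncio
import functools
from dataclasses import dataclass, field


@dataclass
class ActionBundle:
    """Hypothetical schema mirroring the metadata listed above."""
    action_type: str
    target_resource: str
    required_scopes: list
    reversible: bool
    blast_radius: str
    arguments: dict = field(default_factory=dict)


# Hypothetical per-tool metadata; a real adapter would derive this per framework.
TOOL_METADATA = {
    "gmail_list_messages": {
        "action_type": "read",
        "target_resource": "gmail:messages",
        "required_scopes": ["https://www.googleapis.com/auth/gmail.readonly"],
        "reversible": True,
        "blast_radius": "none",
    },
    "gmail_send_message": {
        "action_type": "send",
        "target_resource": "gmail:message",
        "required_scopes": ["https://www.googleapis.com/auth/gmail.send"],
        "reversible": False,
        "blast_radius": "single_recipient",
    },
}


async def authorize(bundle: ActionBundle) -> bool:
    """Stand-in for the policy engine: allow only reversible actions."""
    return bundle.reversible


def protect(framework: str):
    """Wrap an async tool so every call is turned into an ActionBundle first.

    `framework` is accepted to match the call shape but is unused in this sketch.
    """
    def decorator(func):
        @functools.wraps(func)
        async def wrapper(*args, **kwargs):
            meta = TOOL_METADATA[func.__name__]
            bundle = ActionBundle(arguments={"args": args, "kwargs": kwargs}, **meta)
            if not await authorize(bundle):
                raise PermissionError(f"{func.__name__} blocked pending approval")
            return await func(*args, **kwargs)
        return wrapper
    return decorator


@protect(framework="langchain")
async def gmail_list_messages(label):
    return [f"{label}:1"]  # placeholder result instead of a real Gmail call


@protect(framework="langchain")
async def gmail_send_message(to, subject, body):
    return f"sent to {to}"  # placeholder result instead of a real Gmail call
```

In this sketch the read tool runs normally, while the send tool raises `PermissionError` because the stand-in policy refuses irreversible actions. A real pipeline would route that call to the approval queue instead.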

Layer 2: Risk Classification Engine. Every action is scored from 0 to 100 across four weighted factors: action type (40 points), reversibility (25 points), blast radius (20 points), and sensitivity (15 points). That score maps to three tiers: LOW for auto-approval, MEDIUM for human approval, and HIGH for step-up authentication plus approval. Financial actions and permission escalation are always classified as HIGH, regardless of score.
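The scoring scheme can be sketched as a weighted sum. The four weights and the always-HIGH rule come from the description above; the tier thresholds (30 and 70), the 0.0 to 1.0 severity inputs, and the category names are assumptions chosen for illustration.

```python
# Weights as stated in the write-up; they sum to 100.
WEIGHTS = {
    "action_type": 40,
    "reversibility": 25,
    "blast_radius": 20,
    "sensitivity": 15,
}

# Categories forced to HIGH regardless of score (names are hypothetical).
ALWAYS_HIGH = {"financial", "permission_escalation"}

# Assumed cut-offs; the write-up does not state where the tiers begin.
MEDIUM_THRESHOLD = 30
HIGH_THRESHOLD = 70


def risk_score(factors):
    """Combine per-factor severities (each 0.0 to 1.0) into a 0 to 100 score."""
    return round(sum(WEIGHTS[name] * factors[name] for name in WEIGHTS))


def classify(category, factors):
    """Return (tier, score) for an action."""
    score = risk_score(factors)
    if category in ALWAYS_HIGH or score >= HIGH_THRESHOLD:
        return "HIGH", score
    if score >= MEDIUM_THRESHOLD:
        return "MEDIUM", score
    return "LOW", score
```

With these assumed thresholds, an irreversible email send with severities `0.5, 1.0, 0.5, 0.0` scores 55 and lands in MEDIUM, while a payment is HIGH even when every severity is zero.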

Layer 3: Rust WASM Policy Verifier. Before any action is evaluated, a WebAssembly module compiled from Rust verifies the integrity of the policy bundle against a signed hash. If the policy has been tampered with, even by a single byte, the entire pipeline is rejected. Verification runs inside a WASM sandbox via wasmtime, fully isolated from the Python runtime.
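The integrity check itself can be illustrated in a few lines of Python. This is a stand-in for the idea, not the Rust/WASM verifier: it assumes the "signed hash" is an HMAC-SHA256 over the bundle's SHA-256 digest, which the write-up does not confirm, and the function names are hypothetical.

```python
import hashlib
import hmac


def sign_policy(policy_bytes: bytes, key: bytes) -> str:
    """Hash the policy bundle, then sign the digest (assumed HMAC-SHA256 scheme)."""
    digest = hashlib.sha256(policy_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_policy(policy_bytes: bytes, signature: str, key: bytes) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign_policy(policy_bytes, key)
    return hmac.compare_digest(expected, signature)


def evaluate(policy_bytes: bytes, signature: str, key: bytes) -> str:
    """Refuse to evaluate anything if the bundle fails verification."""
    if not verify_policy(policy_bytes, signature, key):
        raise RuntimeError("policy bundle failed integrity check; pipeline rejected")
    return "policy accepted"
```

Changing a single byte of `policy_bytes` changes the SHA-256 digest, so the recomputed signature no longer matches and `evaluate` raises before any action is scored.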

Layer 4: Real-time Approval Dashboard. The frontend is built with React 18 and Vite, then deployed on Vercel. It includes five views: Overview, Approval Queue with countdown timers, Audit Log with a hash-chain viewer, Permission Inventory with a scope visualizer, and Threat Simulation with five built-in scenarios. MEDIUM-risk and HIGH-risk actions are pushed to the dashboard over WebSocket.

Layer 5: Hash-chained Audit Log. Every decision is recorded as a SHA-256 hash-chained entry. Each entry contains the hash of the previous one, so any retroactive modification invalidates every entry that follows. Each entry is also HMAC-signed with a deployment key. The dashboard surfaces chain integrity status on every page load.
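A minimal sketch of a hash-chained, HMAC-signed log, assuming a JSON payload per entry and an all-zero genesis hash. The entry layout and function names are illustrative and are not Vettra's actual schema.

```python
import hashlib
import hmac
import json
from typing import Optional

GENESIS_HASH = "0" * 64  # assumed placeholder for the first entry's predecessor


def _payload(decision: str, prev_hash: str) -> bytes:
    """Canonical bytes that are both hashed and signed."""
    return json.dumps({"decision": decision, "prev_hash": prev_hash}, sort_keys=True).encode()


def append_entry(chain: list, decision: str, key: bytes) -> dict:
    """Append an entry that embeds the hash of the previous entry."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    payload = _payload(decision, prev_hash)
    entry = {
        "decision": decision,
        "prev_hash": prev_hash,
        "entry_hash": hashlib.sha256(payload).hexdigest(),
        "signature": hmac.new(key, payload, hashlib.sha256).hexdigest(),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list, key: bytes) -> Optional[int]:
    """Return the index of the first invalid entry, or None if the chain is intact."""
    prev_hash = GENESIS_HASH
    for index, entry in enumerate(chain):
        payload = _payload(entry["decision"], entry["prev_hash"])
        expected_sig = hmac.new(key, payload, hashlib.sha256).hexdigest()
        intact = (
            entry["prev_hash"] == prev_hash
            and entry["entry_hash"] == hashlib.sha256(payload).hexdigest()
            and hmac.compare_digest(entry["signature"], expected_sig)
        )
        if not intact:
            return index
        prev_hash = entry["entry_hash"]
    return None
```

Editing an old entry changes its hash, which no longer matches the `prev_hash` stored in the next entry. Re-hashing the edited entry without the deployment key still fails the signature check, so verification stops at the tampered index either way.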

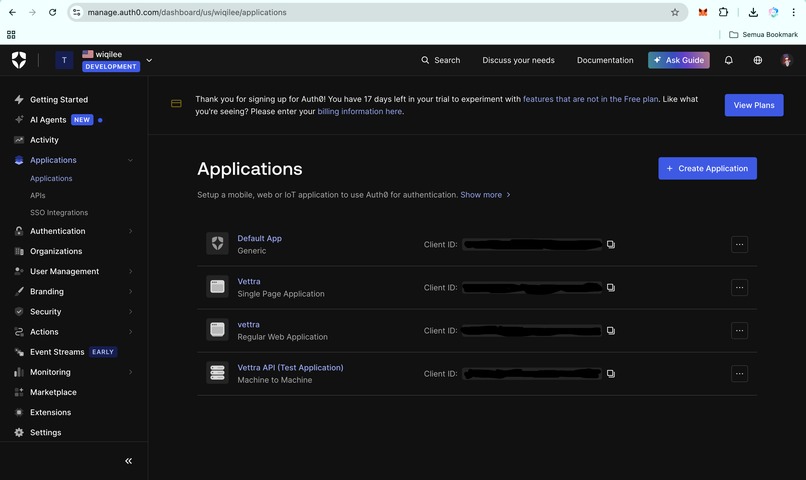

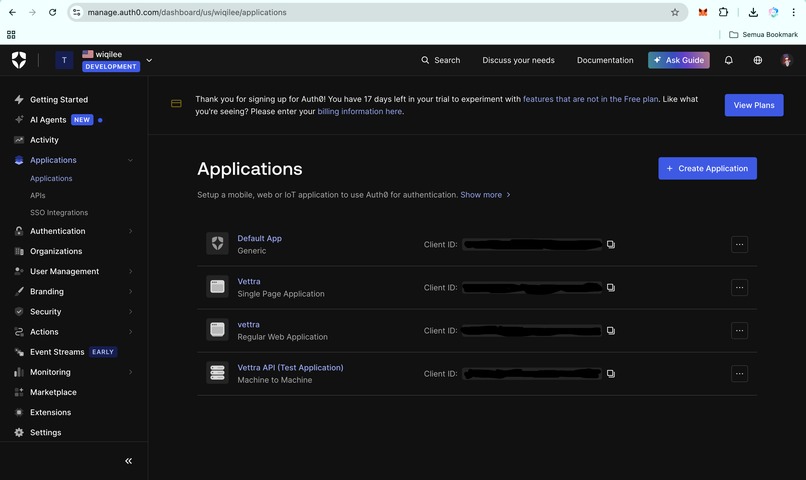

Infrastructure. The backend runs as a FastAPI application on Google Cloud Run in asia-southeast2, with auto-scaling from zero to three instances. Auth0 integration uses a dual-application architecture: a Single Page Application for user login and a Regular Web Application, acting as a confidential client, for Token Vault's RFC 8693 token exchange. The demo uses SQLite, while the architecture is PostgreSQL-ready for production. CI/CD runs through GitHub Actions with 14 test cases on every push.

Challenges I ran into

Step-up authentication versus agent latency. Agent tool calls typically time out within 5 to 15 seconds. Step-up authentication with MFA often takes 15 to 20 seconds. Out of the box, those two flows do not fit together. I solved that by delivering approval challenges instantly over WebSocket and adding session pre-authorization, which lets users approve all MEDIUM-risk actions for 30 minutes after a single MFA check.
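Session pre-authorization can be sketched as a timestamp check. The 30-minute window and the MEDIUM-only scope come from the paragraph above; the class name, the injectable clock, and the choice to always prompt for HIGH are assumptions.

```python
import time

PREAUTH_WINDOW_SECONDS = 30 * 60  # 30 minutes, as described above


class PreAuthSession:
    """Tracks when the user last passed MFA (hypothetical helper)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock  # injectable so the window can be tested without waiting
        self._mfa_verified_at = None

    def record_mfa(self):
        """Call once the user completes an MFA check."""
        self._mfa_verified_at = self._clock()

    def needs_prompt(self, tier: str) -> bool:
        """Decide whether this action must interrupt the user."""
        if tier == "LOW":
            return False  # auto-approved
        if tier == "HIGH":
            return True  # assumed: step-up is always required for HIGH
        if self._mfa_verified_at is None:
            return True
        return self._clock() - self._mfa_verified_at > PREAUTH_WINDOW_SECONDS
```

Inside the window a MEDIUM-risk call returns immediately, which keeps it well under the agent's tool-call timeout. After 30 minutes the next MEDIUM-risk call prompts again.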

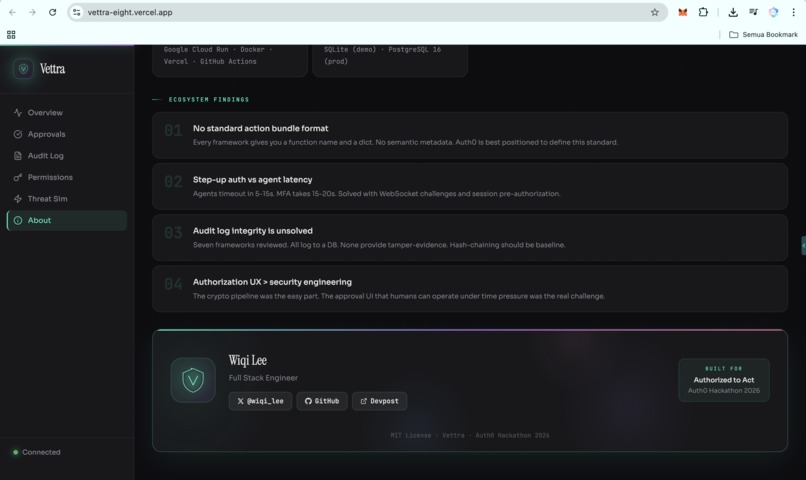

No standard action bundle format exists. LangChain gives you a function name and a dictionary of arguments. That is all. There is no semantic metadata, no scope declaration, and no risk context. I had to define my own Action Bundle schema and build framework-specific adapters to translate raw tool calls into something the authorization system could actually evaluate.

The UX problem turned out to be harder than the security problem. Building the cryptographic pipeline took five days. Building an approval experience that non-technical users could actually operate under time pressure took the rest of the project. OAuth scope strings are unreadable. The first version of the approval card contained the right information but was still unusable. What finally worked was a three-layer design: a plain-language headline describing the action, a color-coded risk badge, and expandable technical details for the full scope breakdown.
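The three-layer card can be modeled as a small data structure. This is a sketch of the idea only: the field names, the colour palette, and the headline template are assumptions, not the dashboard's actual code.

```python
from dataclasses import dataclass

# Assumed palette; the write-up only says the badge is colour-coded.
BADGE_COLORS = {"LOW": "green", "MEDIUM": "amber", "HIGH": "red"}


@dataclass
class ApprovalCard:
    headline: str        # layer 1: plain-language description
    risk_tier: str       # layer 2: badge text
    badge_color: str     # layer 2: badge colour
    details: list        # layer 3: raw scopes, collapsed by default
    details_expanded: bool = False


def build_card(agent: str, verb: str, target: str, tier: str, scopes: list) -> ApprovalCard:
    """Put the human-readable summary first and keep scope strings in the details."""
    return ApprovalCard(
        headline=f"{agent} wants to {verb} {target}",
        risk_tier=tier,
        badge_color=BADGE_COLORS[tier],
        details=list(scopes),
    )
```

For example, `build_card("Email agent", "send an email to", "alice@example.com", "MEDIUM", [...])` yields a headline a non-technical approver can read at a glance, while the OAuth scope strings remain available on demand.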

The Auth0 dual-application pattern was not clearly documented. Token Vault's token exchange grant type is only available to confidential clients. A public client such as an SPA cannot perform the exchange. I arrived at the correct architecture through trial and error: the SPA handles user login, while a separate Regular Web Application authenticates to Token Vault and performs the RFC 8693 exchange on behalf of the user. That pattern is not clearly covered in the current Auth0 Token Vault documentation.
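The backend half of that pattern can be sketched by building the form body of a token exchange request. The grant type and token type URNs below are the generic ones defined in RFC 8693; Auth0's Token Vault may require its own provider-specific values and extra parameters, so treat every value here as a placeholder to check against the Auth0 documentation. The function name and the client-secret check are hypothetical.

```python
from urllib.parse import parse_qs, urlencode

# Generic identifiers from RFC 8693; provider-specific values may differ.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_exchange_request(client_id: str, client_secret: str,
                           subject_token: str, scope: str) -> str:
    """Build the form-encoded body a confidential client would POST to the token endpoint."""
    if not client_secret:
        # Mirrors the constraint described above: a public client (SPA) has no secret.
        raise ValueError("token exchange requires a confidential client")
    return urlencode({
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "client_id": client_id,
        "client_secret": client_secret,
        "subject_token": subject_token,        # the user's token obtained via the SPA login
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "scope": scope,                        # request only what the approved action needs
    })
```

The sketch stops before sending anything: the SPA never sees `client_secret`, and only the backend application would POST this body to the tenant's token endpoint.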

Accomplishments that I'm proud of

The Rust WASM verifier runs in production. It is not a proof of concept. It serves as the trust anchor for the entire system. Policy integrity is verified before any token is issued, and the verification is tamper-evident by design.

The hash-chained audit log works exactly as intended. If a single entry is modified, the chain breaks and the dashboard surfaces it in real time. None of the agent frameworks I reviewed provide anything comparable.

The one-decorator integration pattern means existing agent code does not need to be rewritten. Add @protect(framework="langchain") to a function, and the full interceptor, policy engine, and approval pipeline become active.

Token Vault integration covers the full credential lifecycle: scoped tokens with five-minute expiry, automatic revocation, and a complete audit trail for every token issued.

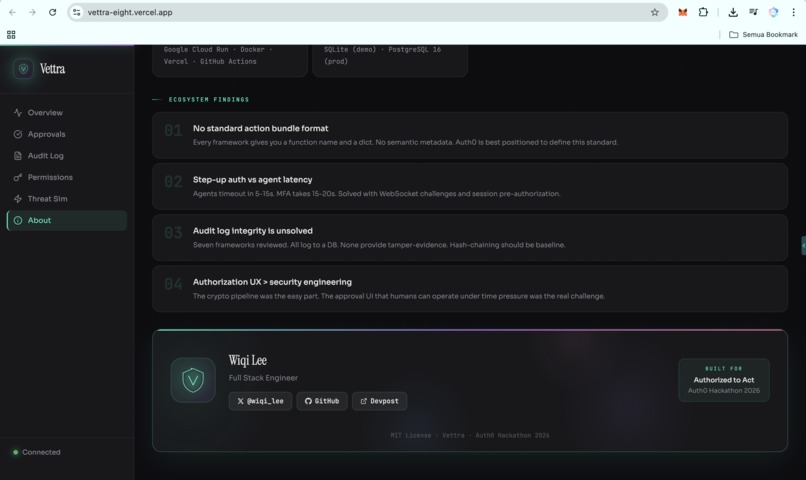

I also documented four ecosystem findings in FINDINGS.md with concrete, actionable recommendations for Auth0.

What I learned

- There is no standard format for agent action bundles. Any authorization system built for AI agents has to reinvent that metadata layer from scratch. Auth0 is in a strong position to help define that standard.

- Step-up authentication was not designed with agent latency in mind. Identity providers need latency-aware flows with configurable timeouts for agent-driven use cases.

- Audit log integrity remains an unsolved problem across the agent frameworks I reviewed. Hash-chaining should be a baseline expectation, not a differentiating feature.

- The authorization UX problem is harder than the security problem. Translating OAuth scopes into decisions that a human can make confidently under time pressure is fundamentally a design challenge, not just an engineering challenge.

What's next for Vettra

- Multi-agent support with authorization policies for agent-to-agent delegation chains.

- Real-time risk adaptation that adjusts scoring based on agent behavior patterns within a session.

- Native framework adapters for LlamaIndex, CrewAI, AutoGen, and OpenAI Assistants.

- A formal Action Bundle specification proposed as an open standard that any authorization system can consume.

- Production Token Vault deployment with real Gmail, GitHub, and Stripe credentials using live scoped token issuance.

- Team-based approval delegation, where different risk tiers route to different approvers based on role and context.

Bonus Blog Post

What I Found When I Tried to Put an AI Agent on a Leash

I gave a LangChain agent access to my Gmail and asked what it could actually do. The answer was everything: it could read every thread, send messages as me, delete emails, and forward sensitive data externally. I had approved none of that. I had approved “set up Gmail access.” The rest was implied. That realization shifted the project from building features to building constraints.

The first breakthrough came from Auth0 Token Vault. Before Token Vault, controlling agent credentials meant handing over a static API key or building a custom OAuth flow from scratch. Token Vault replaced both. When an approved action needs a credential, Vettra performs an RFC 8693 token exchange and receives a scoped token that expires in five minutes. The agent never touches a refresh token. If the user revokes consent, the next exchange fails immediately.

One surprise: Token Vault’s exchange grant is only available to confidential clients. My React SPA could not perform it directly, so I implemented a dual-application pattern: the SPA handles login, while a separate Regular Web Application authenticates to Token Vault as a confidential client. That architecture is not documented in the current Auth0 guides, but it is the correct approach for systems that separate frontend and backend services.

The hardest part was not cryptography. It was the approval UX. My first approval card had all the right data and was still unusable. What worked was a three-layer approach: a plain-language headline, a color-coded risk badge, and expandable technical details.

I documented four ecosystem findings in FINDINGS.md: no standard action bundle format, a latency mismatch between step-up authentication and agent pipelines, unsolved audit log integrity, and the realization that authorization UX is harder than the security engineering itself.

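For the full technical deep dive, read the complete blog post on Medium: https://medium.com/@wiqi_lee/vettra-what-happens-when-you-actually-try-to-control-an-ai-agent-5dea9357634c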

Built With

- auth0

- css

- docker

- fastapi

- github-actions

- google-cloud-run

- hmac

- langchain

- oauth-2.0

- postgresql

- python

- react

- rfc-8693

- rust

- sha-256

- sqlalchemy

- sqlite

- tailwind

- token-vault

- typescript

- vercel

- vite

- wasmtime

- webassembly

- websocket
