Vanguard splash screen

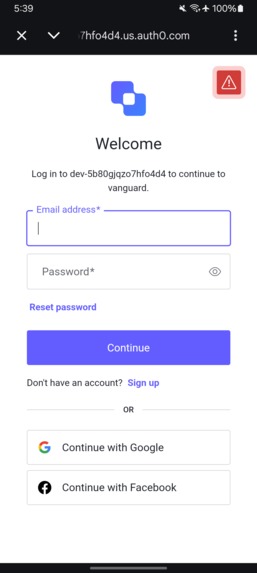

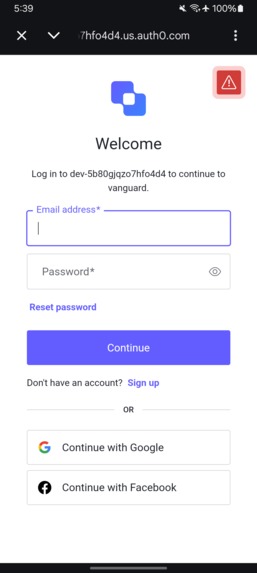

Secure login powered by Auth0

Auth0 Universal Login (OAuth flow)

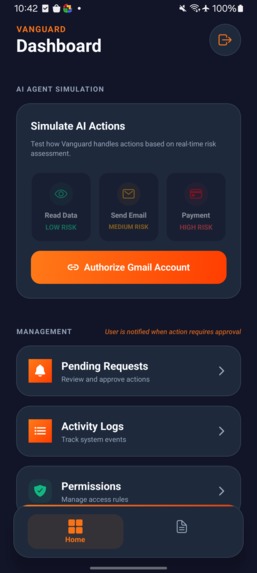

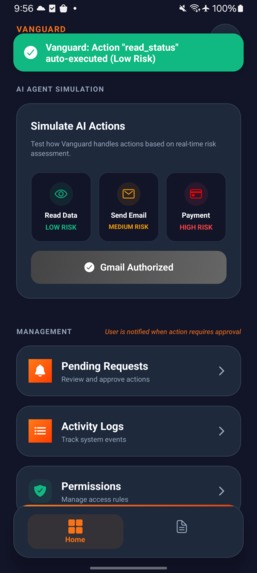

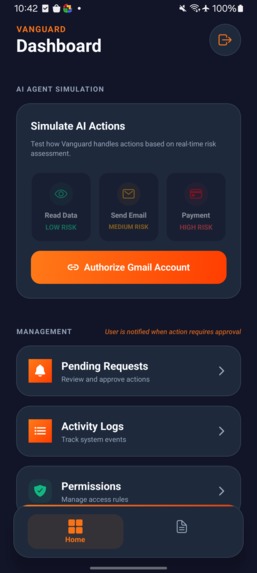

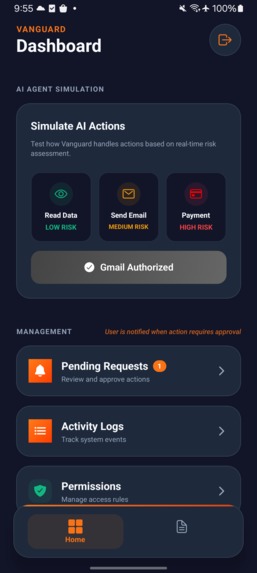

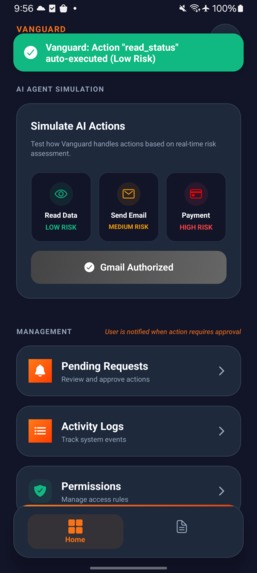

Dashboard – AI action simulation & system overview

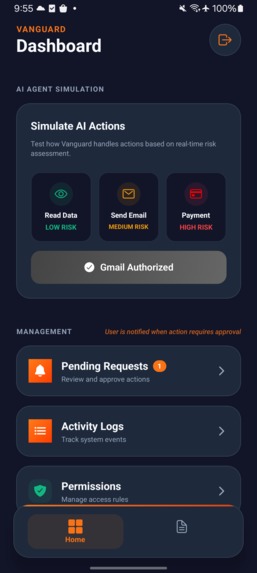

Gmail connected via Auth0 secure authorization

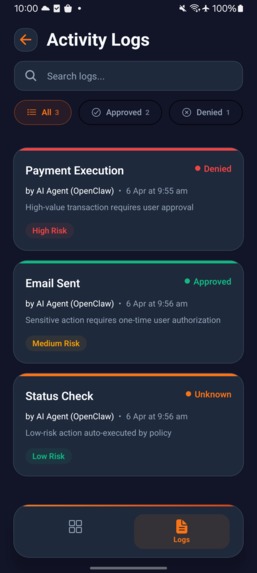

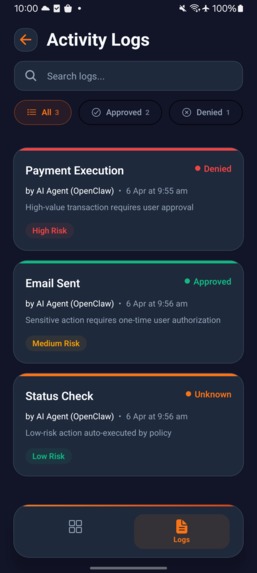

Audit logs – all AI actions tracked and recorded

Successful AI action execution (low-risk auto approved)

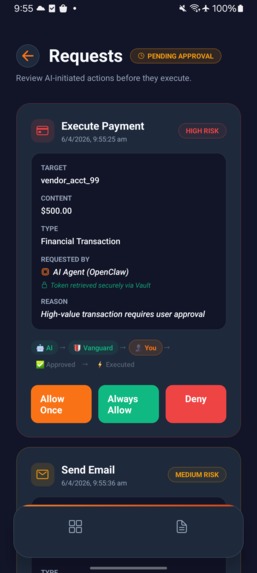

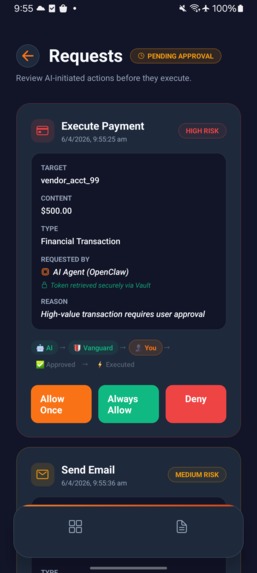

User approval required for high-risk AI actions

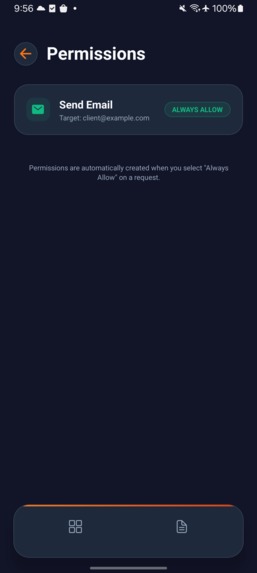

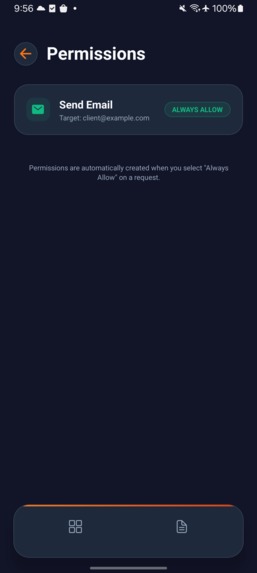

User-defined permission rules (Allow Once / Always / Deny)

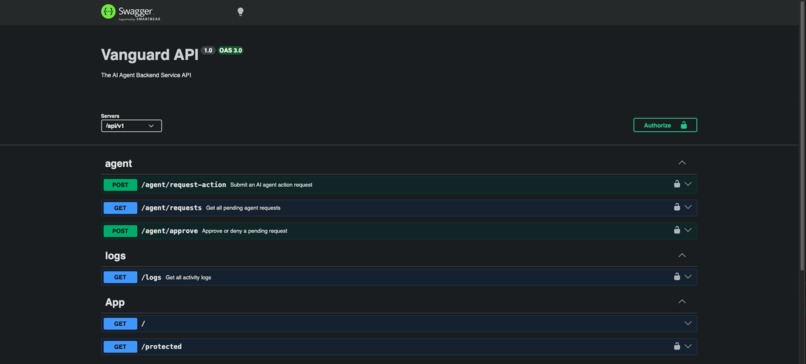

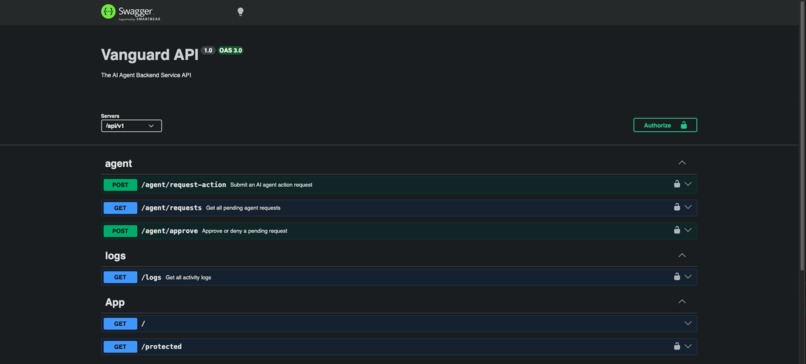

API layer validating and handling AI action requests

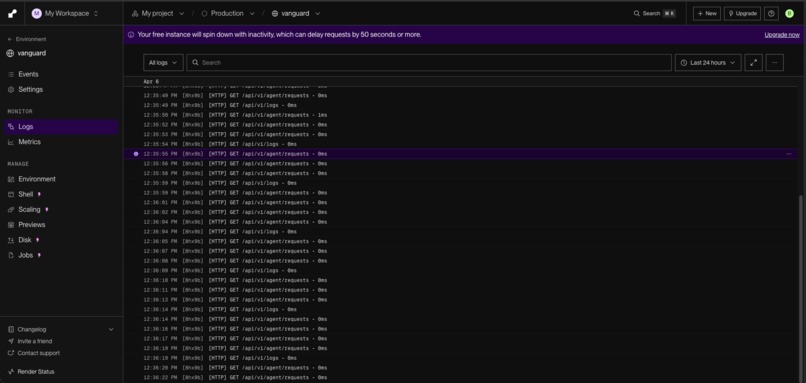

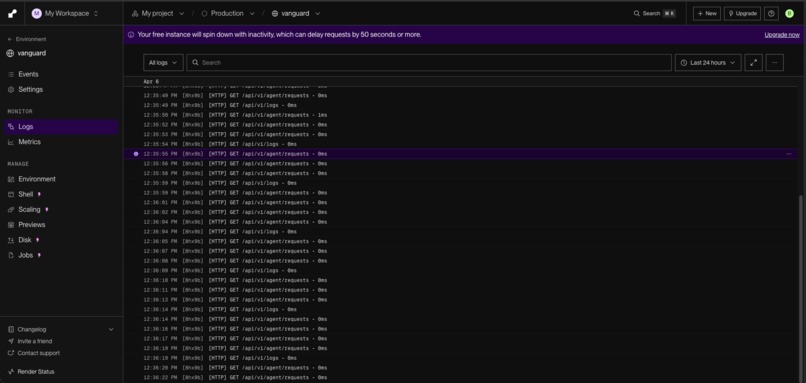

Backend processing AI requests in real-time (Render deployment)

🚀 Inspiration

As AI agents move from assistants to autonomous systems, they are increasingly trusted with real-world actions such as sending emails, accessing data, or even initiating transactions. However, once access is granted, there is usually no runtime control over how that access is used.

Vanguard was built to close this gap by requiring explicit user consent, so that AI actions are not blindly executed.

🛡️ What it does

Vanguard acts as a secure permission proxy between AI agents and external services. Instead of giving AI direct access to credentials:

- Every action is intercepted before execution

- Users can Allow Once, Always Allow, or Deny

- High-risk actions require explicit approval

- All actions are logged for transparency

This ensures AI operates within clear permission boundaries.
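The rules above can be sketched as a small decision function. This is a minimal illustration, not Vanguard's actual code: the names `PermissionRules`, `ActionRequest`, and `Outcome` are hypothetical, and the choice to let a stored "Always Allow" rule also cover high-risk actions is an assumption.

```typescript
// Hypothetical types for illustration; not taken from the Vanguard codebase.
type Decision = "allow_once" | "always_allow" | "deny";
type Outcome = "execute" | "ask_user" | "reject";

interface ActionRequest {
  agentId: string;
  action: string; // e.g. "gmail.send"
  risk: "low" | "high";
}

class PermissionRules {
  private rules = new Map<string, Decision>();
  readonly auditLog: { request: ActionRequest; outcome: Outcome }[] = [];

  private key(req: ActionRequest): string {
    return `${req.agentId}:${req.action}`;
  }

  // Store the user's choice for this agent/action pair.
  record(req: ActionRequest, decision: Decision): void {
    this.rules.set(this.key(req), decision);
  }

  // Every request passes through here before anything is executed.
  evaluate(req: ActionRequest): Outcome {
    const k = this.key(req);
    const rule = this.rules.get(k);
    let outcome: Outcome;
    if (rule === "deny") {
      outcome = "reject";
    } else if (rule === "always_allow") {
      outcome = "execute"; // assumption: also applies to high-risk actions
    } else if (rule === "allow_once") {
      this.rules.delete(k); // a one-time grant is consumed on use
      outcome = "execute";
    } else {
      // No stored rule: low-risk is auto-approved, high-risk needs the user.
      outcome = req.risk === "high" ? "ask_user" : "execute";
    }
    this.auditLog.push({ request: req, outcome }); // log every evaluation
    return outcome;
  }
}
```

Consuming the "Allow Once" rule inside `evaluate` means the next identical request goes back to the user, which is what separates it from "Always Allow".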

🛠️ How I built it

Vanguard consists of three parts:

Mobile App (React Native / Expo)

Interface for approving actions, viewing logs, and managing permissions

Backend (NestJS)

Middleware that intercepts requests, applies permission logic, and controls execution

Auth0 Integration (Core Security Layer)

- Secure authentication using Auth0

- Token-based access control

- Secure token retrieval using a Token Vault model

🔐 Execution Flow

- AI agent sends an action request

- Vanguard intercepts and evaluates it

- If required, user approval is triggered

- Upon approval, a scoped token is securely retrieved

- Action is executed and logged

This ensures the AI never directly accesses sensitive credentials.
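The flow above might look roughly like the following handler. It is a sketch under assumptions: `Deps`, `requestApproval`, `getScopedToken`, and `execute` are hypothetical stand-ins for the mobile approval step, the Token Vault lookup, and the external API call, not real Auth0 or NestJS APIs.

```typescript
// Hypothetical shapes for illustration only.
interface AgentAction {
  id: string;
  action: string; // e.g. "gmail.send"
  highRisk: boolean;
}

// Injected dependencies; each is an assumed stand-in, not a real library call.
interface Deps {
  requestApproval(a: AgentAction): Promise<boolean>; // asks the user via the app
  getScopedToken(action: string): Promise<string>;   // token lookup for one action
  execute(a: AgentAction, token: string): Promise<void>;
}

type LogEntry = { id: string; action: string; status: "executed" | "denied" };
const auditLog: LogEntry[] = [];

async function handleAction(a: AgentAction, deps: Deps): Promise<LogEntry> {
  // Steps 1-3: intercept the request and ask the user only when required.
  const approved = a.highRisk ? await deps.requestApproval(a) : true;
  if (!approved) {
    const denied: LogEntry = { id: a.id, action: a.action, status: "denied" };
    auditLog.push(denied);
    return denied; // no token is ever fetched for a denied action
  }
  // Step 4: the token is retrieved only after approval and stays in the backend.
  const token = await deps.getScopedToken(a.action);
  // Step 5: execute on the agent's behalf and record the result.
  await deps.execute(a, token);
  const done: LogEntry = { id: a.id, action: a.action, status: "executed" };
  auditLog.push(done);
  return done;
}
```

The returned `LogEntry` carries only a status, so the token never travels back to the agent that made the request.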

🚧 Challenges I ran into

- Designing a system where AI can act, but only with explicit authorization

- Handling asynchronous approval flows between backend and mobile

- Translating complex security concepts into a simple user experience

🎓 What I learned

- AI systems require runtime governance, not just initial permissions

- The principle of least privilege is critical for safe AI adoption

- User trust depends on visibility and control over actions

- Auth0 enables secure identity and token-based execution models

🗺️ What's next

- Push notifications for real-time approvals

- Context-aware risk scoring

- More integrations (Slack, GitHub, etc.)

- Biometric approval for sensitive actions

🏆 Impact

Vanguard shifts AI systems from:

❌ Execution-first → uncontrolled actions

✅ Control-first → user-authorized execution

This approach makes AI safer, more transparent, and ready for real-world use.

Bonus Blog Post

I started building Vanguard after running into a problem that felt risky in practice — once an AI agent gets access to an API, there’s no real control over what happens next. It can send emails, access data, or trigger actions instantly, without any pause or user confirmation.

That didn’t feel safe, especially for higher-risk actions.

I wanted to build something in between — not to limit AI, but to make sure it doesn’t act without explicit permission. That’s where Vanguard came in: a simple idea of putting a “human approval layer” between AI intent and execution.

The hardest part was handling this approval flow. AI systems expect immediate execution, but users don’t respond instantly. I had to design the backend to intercept requests, store them, and resume only after the user approves. Getting that flow right was more complex than I expected.
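One way to park a request and resume it later is to keep a promise resolver per pending request. The sketch below is an illustration of that idea only: `PendingApprovals` is a hypothetical name, the store is in-memory, and a real deployment would likely need persistence and a timeout.

```typescript
type Verdict = "approved" | "denied";

// Hypothetical in-memory store; not the actual Vanguard implementation.
class PendingApprovals {
  private waiting = new Map<string, (v: Verdict) => void>();

  // Called by the request handler: suspends until the user responds.
  wait(requestId: string): Promise<Verdict> {
    return new Promise<Verdict>((resolve) => {
      this.waiting.set(requestId, resolve);
    });
  }

  // Called when the mobile app submits the user's decision.
  // Returns false if the request is unknown or was already resolved.
  resolve(requestId: string, verdict: Verdict): boolean {
    const resume = this.waiting.get(requestId);
    if (!resume) return false;
    this.waiting.delete(requestId);
    resume(verdict);
    return true;
  }

  // Lets the app list what is still awaiting a decision.
  pendingIds(): string[] {
    return Array.from(this.waiting.keys());
  }
}
```

A handler would `await approvals.wait(id)` after intercepting a high-risk request, and the approval endpoint would call `approvals.resolve(id, "approved")` or `approvals.resolve(id, "denied")` once the user taps a button.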

A key part of the system was integrating Auth0’s Token Vault. Instead of storing tokens myself or exposing them to the AI, Vanguard retrieves them only when needed and only for a specific action. The AI never gets direct access to credentials — it only triggers actions through the backend after approval.

This approach naturally aligns with the principle of least privilege. Access is not permanent — it is scoped, temporary, and controlled.

Working on Vanguard made me realize that AI systems shouldn’t run on blind trust. There needs to be a clear boundary between intent and execution.

Vanguard is my attempt to build that boundary — where users stay in control, even when AI is doing the work.

Built With

- auth0

- auth0-token-vault

- expo.io

- jwt-authentication

- nestjs

- node.js

- react-native

- rest-apis

- typescript
