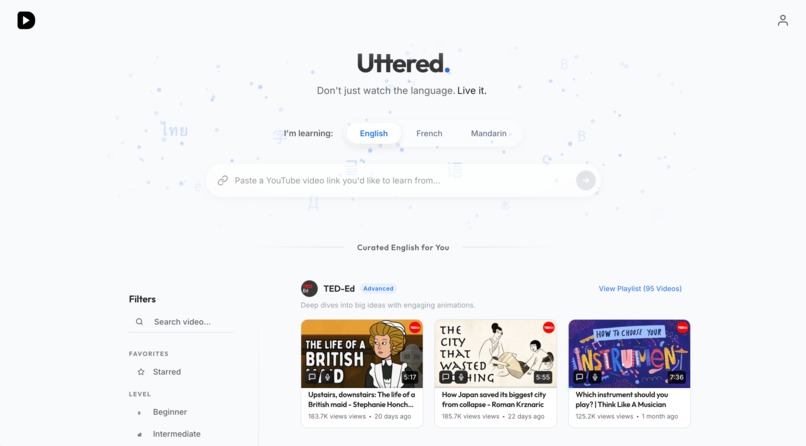

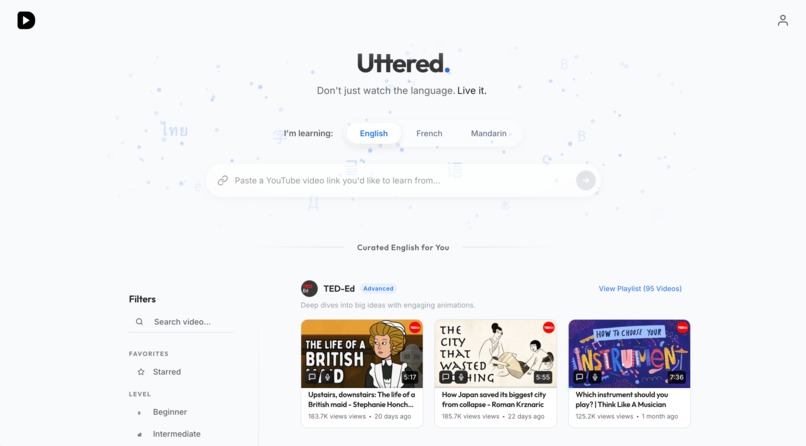

Homepage: YouTube video input and curated playlists, using Gemini 3 for transcription

-

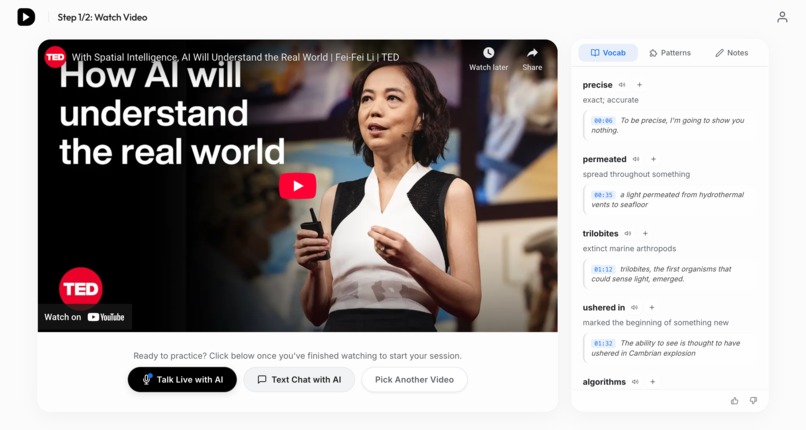

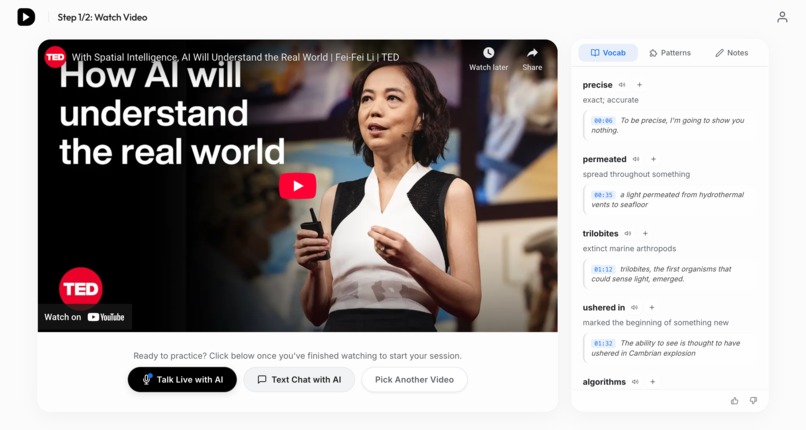

Learning page 1: timestamped vocab/patterns extracted by Gemini 3, one-click to add to notes

-

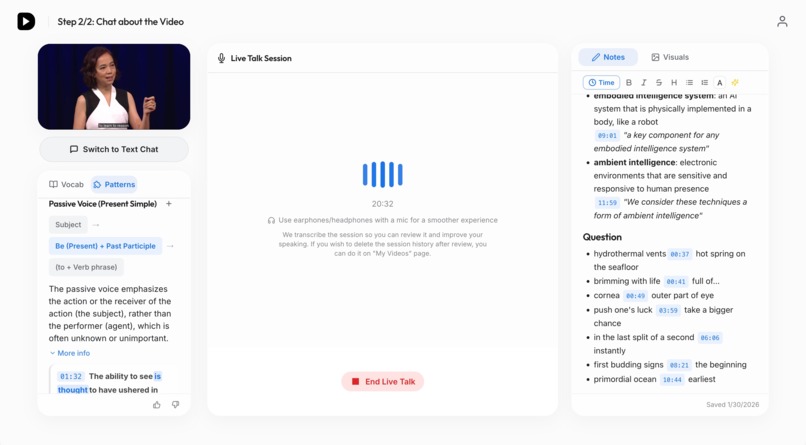

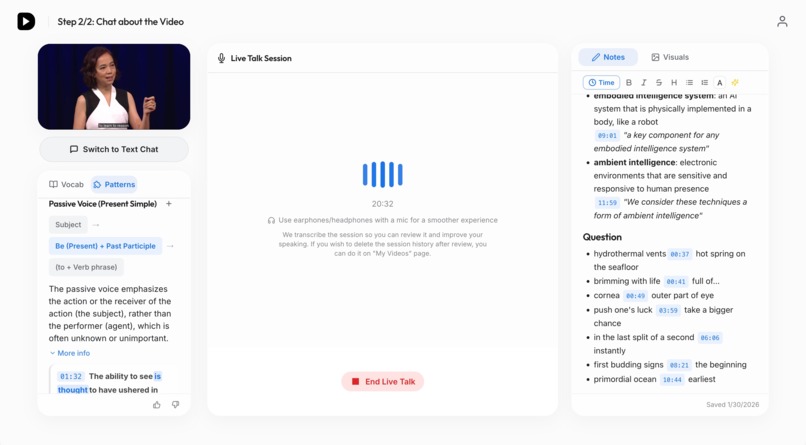

Learning page 2: live talk mode, using Gemini Live API, with grammar patterns and notes editor

-

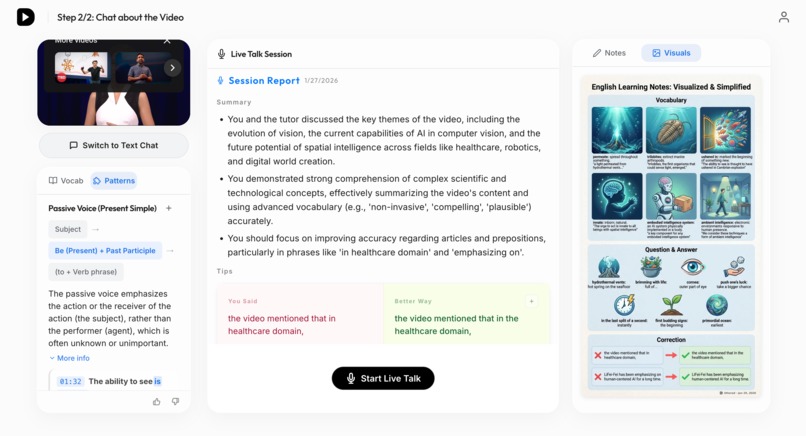

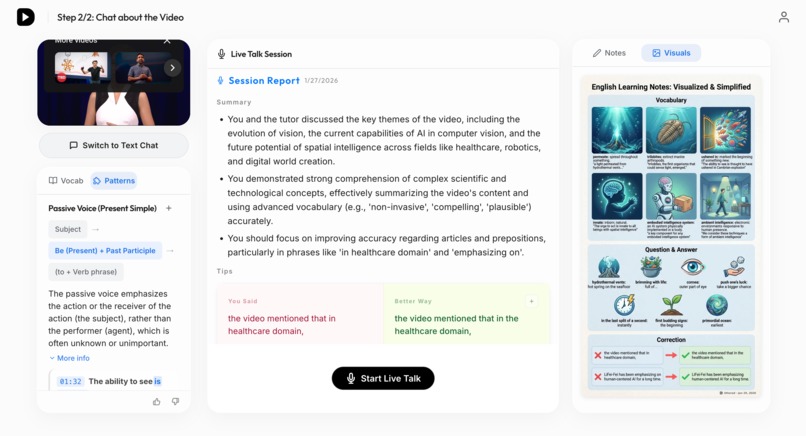

Learning page 2: live session report, turn notes into magic visual with Nano Banana Pro

-

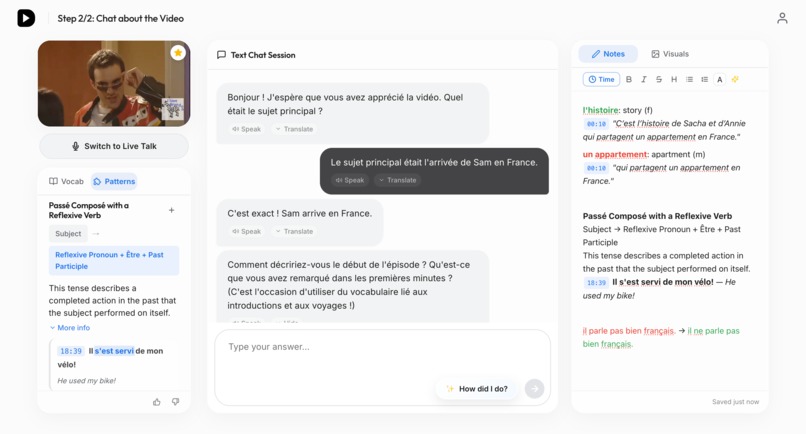

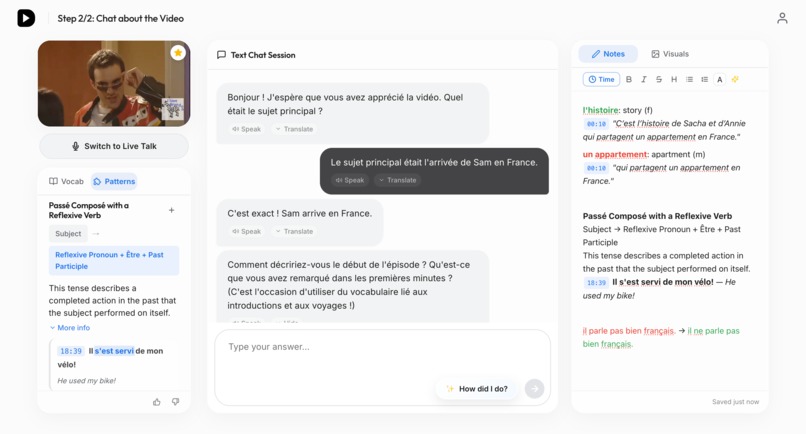

Learning page 2: text chat mode, using Gemini 3 Flash, with vocab/patterns, one-click to add session report corrections to notes

-

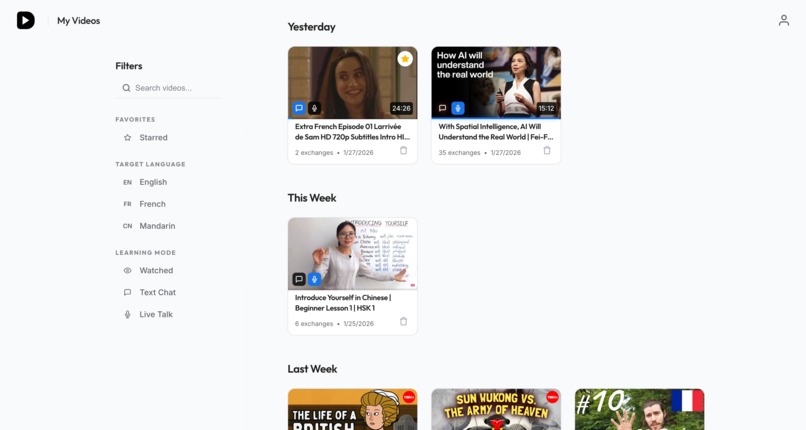

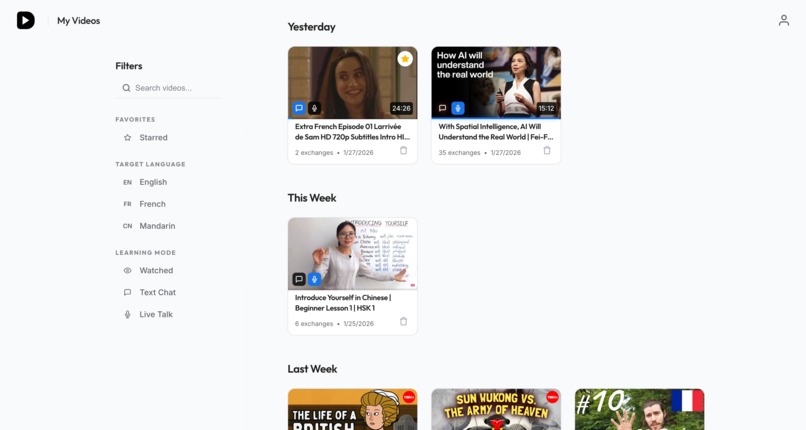

My videos page: UI designed and developed with Gemini 3 (like all other pages!)

-

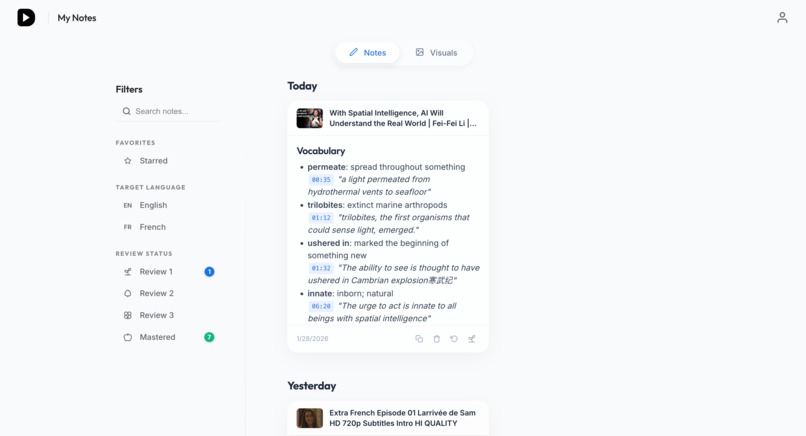

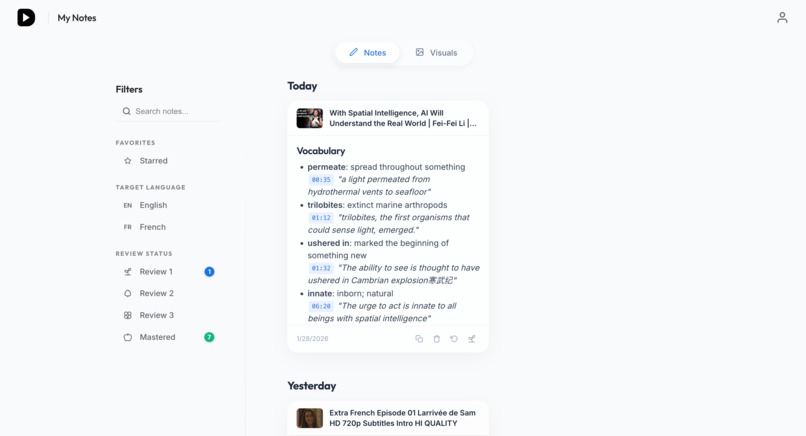

My notes page: notes toggle, with review reminders based on forgetting curve

-

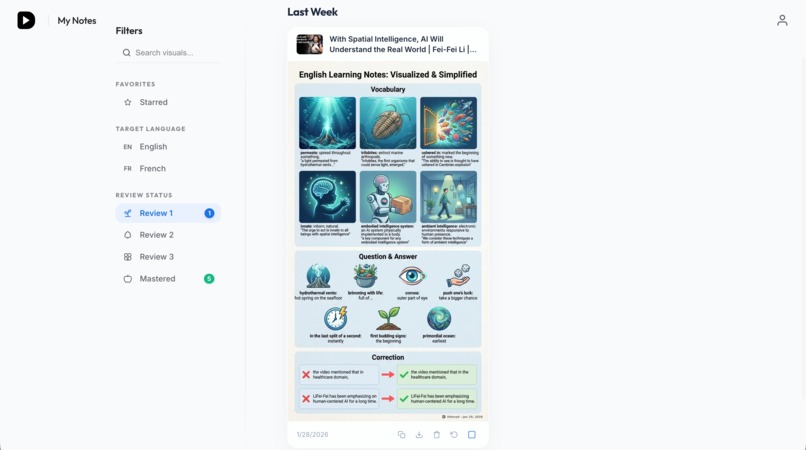

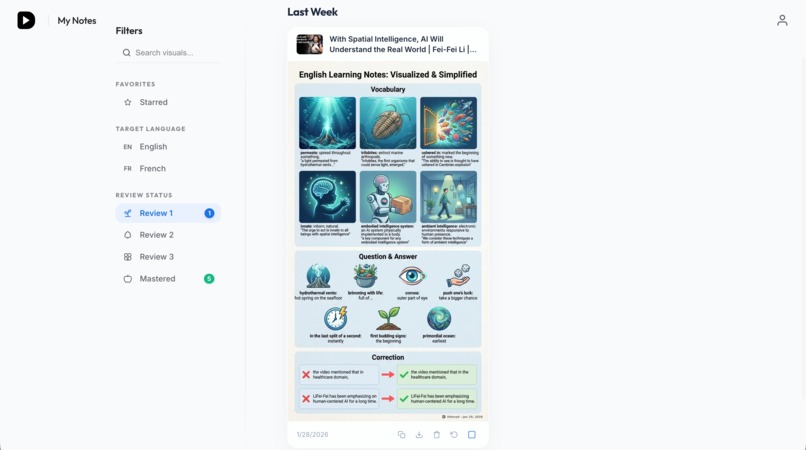

My notes page: visuals toggle, with review reminders based on forgetting curve

-

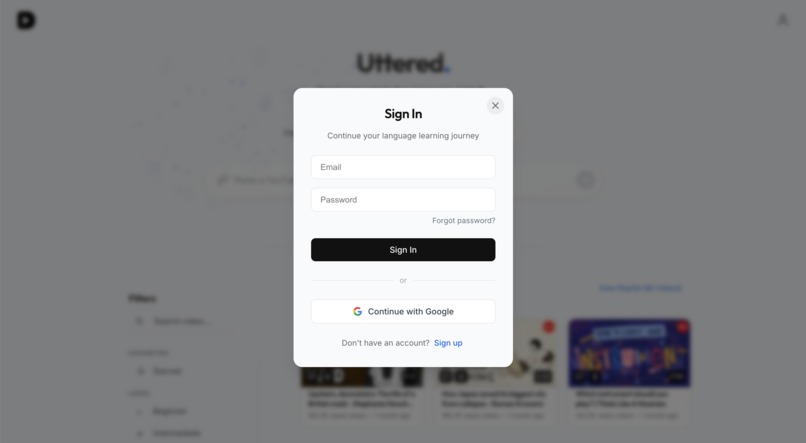

Auth modal: integrated with Supabase

-

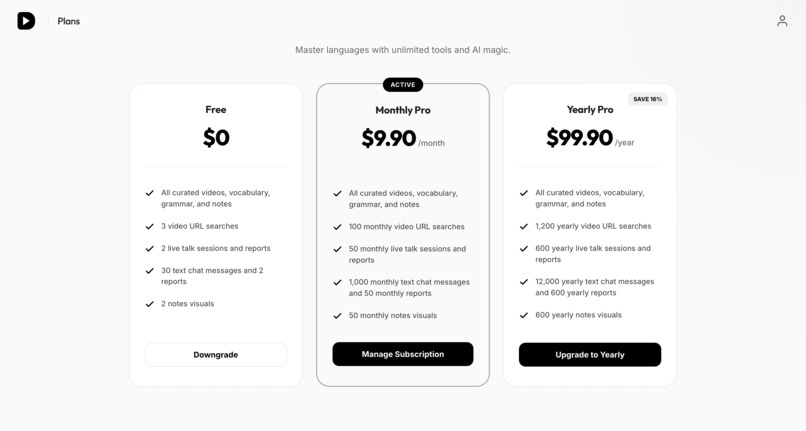

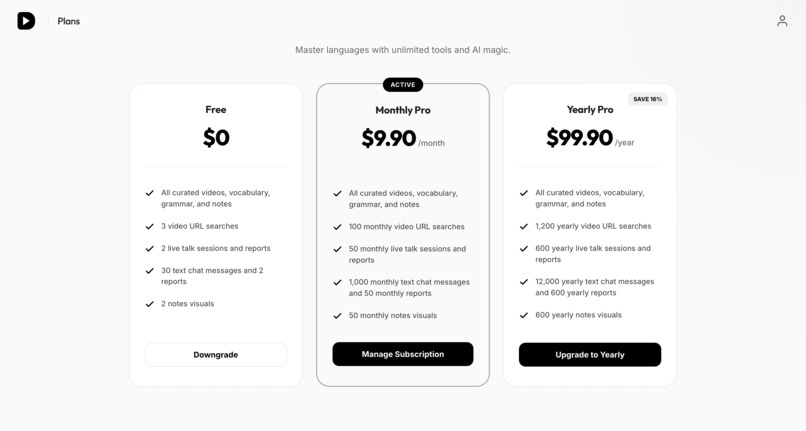

Plans page: integrated with Stripe

Inspiration

We all spend hours consuming content in other languages—lessons, talks, vlogs, and series. Yet, this experience is overwhelmingly passive. We watch, we might rely on subtitles, and if we don't understand something, we simply skip past it. A few days later, none of that knowledge has actually stuck in our memory.

Uttered was born from the desire to bridge the gap between entertainment and education. I wanted to build more than just another chat interface: I set out to create a multimodal learning companion that transforms video watching from a passive habit into an active learning experience. The goal was a "live tutor" that watches with you, helps you engage with authentic content, and ensures that what you learn stays with you permanently.

What it does

Uttered uses AI to turn any YouTube video into an interactive lesson. It follows a simple yet powerful 4-step loop:

- Select: Choose from curated authentic playlists (TED talks, sitcoms, vlogs, lessons) or paste any YouTube video URL to create a personalized lesson instantly.

- Immerse: As you watch, the AI automatically extracts key vocabulary, idioms, and grammar patterns relevant to that specific video. You can add any of these items to your notes with a single click, complete with timestamps that jump to the exact moment in the video. Take timestamped notes effortlessly using a specialized editor designed for language learners.

- Practice: Engage with the content through Text Chat or Live Talk (Voice). Rather than just answering questions, the AI tutor proactively guides the conversation based on the video, asking relevant questions and encouraging you to speak. Every session is transcribed, so you can review your conversation and learn from it. After the session, you also receive a report with tips and corrections that can be added to your notes in one click.

- Master: The "Magic Visual" feature generates mnemonics directly from your personal notes after learning, ensuring the imagery is fully relevant to your key takeaways. All notes and visuals are tied to specific videos, making review context-rich and easy. Finally, a smart reminder system based on the Forgetting Curve (1, 3, and 6 days) ensures optimal long-term retention.
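The reminder schedule in the Master step can be illustrated with a small sketch. Only the 1, 3, and 6 day intervals come from the description above; the function names, and the assumption that each interval is measured from the moment the note was created, are hypothetical.

```typescript
// Review offsets in days, as listed in the Master step (1, 3, and 6 days).
const REVIEW_OFFSETS_DAYS = [1, 3, 6] as const;

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Hypothetical helper: the dates on which a note should be reviewed.
// Assumption: every offset is counted from the note's creation time.
function reviewDates(createdAt: Date): Date[] {
  return REVIEW_OFFSETS_DAYS.map(
    (days) => new Date(createdAt.getTime() + days * MS_PER_DAY),
  );
}

// Hypothetical helper: the next scheduled review if it has come due,
// or null when nothing is due yet or every review is already completed.
function nextDueReview(
  createdAt: Date,
  completedReviews: number,
  now: Date,
): Date | null {
  const schedule = reviewDates(createdAt);
  if (completedReviews >= schedule.length) return null; // all reviews done
  const next = schedule[completedReviews];
  return next.getTime() <= now.getTime() ? next : null;
}
```

A reminder job could call `nextDueReview` for each note and surface only the notes that return a date, which keeps the reminders non-intrusive.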

How I built it

Built with Antigravity: This application itself was developed using Antigravity. The frontend product design, UI/UX, and significant portions of the codebase were created with Gemini 3's assistance.

The technical foundation of Uttered is designed for speed, context-awareness, and reliability:

- Framework: Built with Next.js 16 (App Router) for a seamless, performant frontend.

- Gemini 3 Integration: Uttered is built on the latest models in the Gemini ecosystem. It relies on the reasoning capabilities of Gemini 3 Flash to deconstruct complex grammar patterns and idiom usage from subtitles and to guide context-aware tutoring sessions. It uses the multimodal capabilities of Nano Banana Pro to transform abstract text notes into concrete visual mnemonics. Finally, the Gemini Live API drives the real-time voice tutor, providing the low-latency, emotionally resonant conversation partner essential for language practice.

- Data Strategy: To ensure consistency, I implemented a "URL as Single Source of Truth" system. All AI analysis (vocab, grammar, chat) follows the target language specified in the URL.

- Infrastructure: Hosted on Fly.io with Supabase for PostgreSQL database and Authentication. I use Cloudflare R2 for storing generated visuals and Supadata/Gemini transcription for reliable YouTube transcript fetching.

- Reliability: I built a custom "Hallucination Prevention System" that validates AI-generated vocabulary/grammar against the actual video content to ensure every word provided was actually spoken.
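The validation idea in the Reliability item could look roughly like the sketch below. It is not the actual "Hallucination Prevention System": the `VocabItem` shape, the function names, and the whole-word matching rule are assumptions for illustration. Whole-word matching also assumes a space-delimited language such as English or French; Mandarin would need plain substring matching instead.

```typescript
// Hypothetical shape of an AI-extracted item; the real schema is not shown here.
interface VocabItem {
  term: string;
  timestampSec: number;
}

// Lowercase, replace common punctuation with spaces, and collapse whitespace
// so that matching ignores case and formatting differences.
function normalize(text: string): string {
  return text
    .toLowerCase()
    .replace(/[.,!?;:"()]/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Split items into those whose term occurs in the transcript as whole words
// and those that do not (candidates for rejection as hallucinations).
function filterGrounded(
  items: VocabItem[],
  transcript: string,
): { kept: VocabItem[]; rejected: VocabItem[] } {
  // Padding with spaces lets a term match at the start or end of the text.
  const haystack = ` ${normalize(transcript)} `;
  const kept: VocabItem[] = [];
  const rejected: VocabItem[] = [];
  for (const item of items) {
    const needle = normalize(item.term);
    if (needle.length > 0 && haystack.includes(` ${needle} `)) {
      kept.push(item);
    } else {
      rejected.push(item);
    }
  }
  return { kept, rejected };
}
```

Rejected items could be dropped silently or logged, depending on how strict the grounding needs to be.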

Challenges I ran into

- Transcription Gaps: Many YouTube videos lack accurate captions. I solved this by building a 3-tier fallback system: first trying curated data, then YouTube captions, and finally falling back to verbatim AI transcription using Gemini's audio processing capabilities.

- AI Consistency: Early on, AI responses could be unpredictable. I moved to Native JSON Mode (application/json) for all high-stakes data like vocabulary and grammar to eliminate parsing errors and ensure reliable structure.

- Live Talk Technical Limits: Implementing the real-time voice feature came with unique infrastructure hurdles, specifically the 10-minute WebSocket connection limit and the 10-15 minute context window within the Gemini Live API. Managing long-running sessions while maintaining context required careful session handling and state management.

- Language Source of Truth (SOT): Handling multi-language scenarios presented a major architectural challenge. I had to manage "teaching videos" (e.g., learning Mandarin through English instruction) where the transcript might be in one language while the target learning material is in another. Discrepancies between YouTube captions and actual spoken audio added further complexity. I solved this by implementing a SOT pattern, ensuring that everything—from AI extraction logic through voice/text chat to TTS pronunciation and UI behavior—consistently follows the user's intended learning language (or the curated language for curated videos).
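The three-tier fallback described under Transcription Gaps above can be sketched as an ordered list of providers that are tried in turn. The types and the `resolveTranscript` name are hypothetical, and the providers are left abstract: how curated data, YouTube captions, or Gemini audio transcription are actually fetched is not shown here.

```typescript
type TranscriptSource = "curated" | "captions" | "ai";

interface Transcript {
  source: TranscriptSource;
  text: string;
}

// A provider resolves to transcript text, or null when it has nothing for the video.
type TranscriptProvider = (videoId: string) => Promise<string | null>;

// Try each tier in order and return the first non-empty transcript.
// Assumption: a tier that throws is treated the same as a tier that is empty.
async function resolveTranscript(
  videoId: string,
  tiers: Array<[TranscriptSource, TranscriptProvider]>,
): Promise<Transcript> {
  for (const [source, provider] of tiers) {
    try {
      const text = await provider(videoId);
      if (text !== null && text.trim().length > 0) {
        return { source, text };
      }
    } catch {
      // Fall through to the next tier.
    }
  }
  throw new Error(`No transcript available for video ${videoId}`);
}
```

Recording the `source` alongside the text makes it possible to tell later which tier produced a transcript, which helps when captions and spoken audio disagree.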

Accomplishments that I'm proud of

- Smart Content Caching Strategy: I implemented a multi-layer caching architecture (database + edge). By proactively caching expensive AI analysis like vocab and grammar, I ensure that once a video is processed, the learning content becomes instantly available and free for all subsequent learners. This dramatically reduces latency and proves that high-quality AI education can be delivered economically at scale.

- Live Talk & Session Reports: Creating a voice-based AI tutor that provides a detailed report after every session—pointing out grammar mistakes, pronunciation tips, and suggesting better expressions.

- Seamless Session Resumption: I developed a state management system to overcome the technical limits of cutting-edge real-time AI. By implementing automatic session resumption, I ensured that learners can continue their conversations uninterrupted, even when hitting 10-minute WebSocket timeouts or 15-minute API context limits.

- Magic Visuals: The ability to turn a user's post-learning notes into beautiful, fully relevant mnemonic images that aid memory.

- The Forgetting Curve Integration: A non-intrusive reminder system that helps users move from "just watching" to "actually mastering" a language.
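The caching idea from the first item above follows a get-or-compute pattern, sketched below. This is a single-process illustration only: an in-memory `Map` stands in for the database and edge layers, and the class name and cache key format are hypothetical.

```typescript
// Hypothetical cache for expensive AI analysis results.
// Assumption: a Map stands in for the database + edge layers.
class AnalysisCache<T> {
  private readonly store = new Map<string, T>();
  private readonly inFlight = new Map<string, Promise<T>>();

  // Return the cached value if present; otherwise run `compute` once,
  // share the pending result with concurrent callers, and cache it on success.
  async getOrCompute(key: string, compute: () => Promise<T>): Promise<T> {
    if (this.store.has(key)) {
      return this.store.get(key) as T;
    }
    const pending = this.inFlight.get(key);
    if (pending !== undefined) {
      return pending;
    }
    const promise = compute().then(
      (value) => {
        this.store.set(key, value);
        this.inFlight.delete(key);
        return value;
      },
      (error) => {
        // Failures are not cached, so a later call can retry.
        this.inFlight.delete(key);
        throw error;
      },
    );
    this.inFlight.set(key, promise);
    return promise;
  }
}
```

A key such as `videoId:targetLanguage:vocab` (an assumed format) would let the first learner pay for the analysis while every later learner of the same video and language reads the stored result.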

What I learned

Building Uttered taught me that for AI learning tools, context is everything. A generic AI isn't enough; the tutor needs to "see" what the user is seeing. I also learned that users value a "hallucination-free" experience, which led me to prioritize grounding all AI outputs in the video's actual content. Balancing the cost and latency of different Gemini models was also a key learning curve in providing a premium experience that remains fast and interactive.

What's next for Uttered

I am looking to expand the supported languages beyond English, French, and Mandarin. I also plan to deepen the community aspects of the app, allowing users to share their "Magic Visuals" and learning notes. Support for more video platforms and formats beyond YouTube is also on the roadmap. The mission remains the same: making any video you like an interactive, permanent lesson.

Built With

- antigravity

- cloudflare-r2

- css-modules

- fly.io

- gemini

- google-translate-tts

- javascript

- next.js-16

- postgresql

- react-19

- sentry

- sharp

- stripe

- supabase-auth

- supadata

- tiptap

- typescript
