-

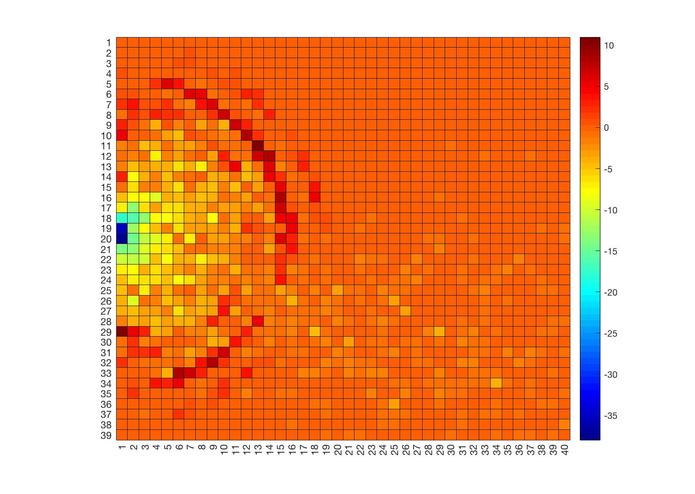

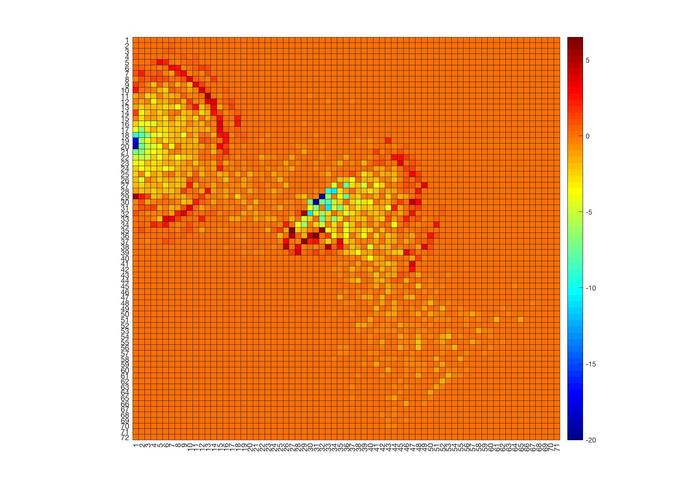

Example of another scan

-

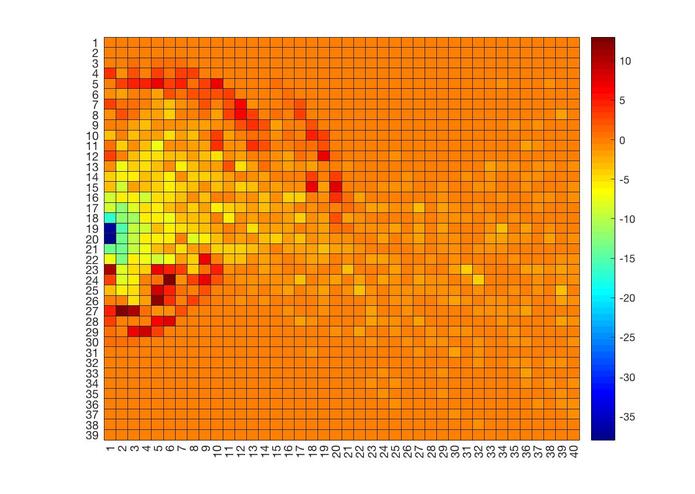

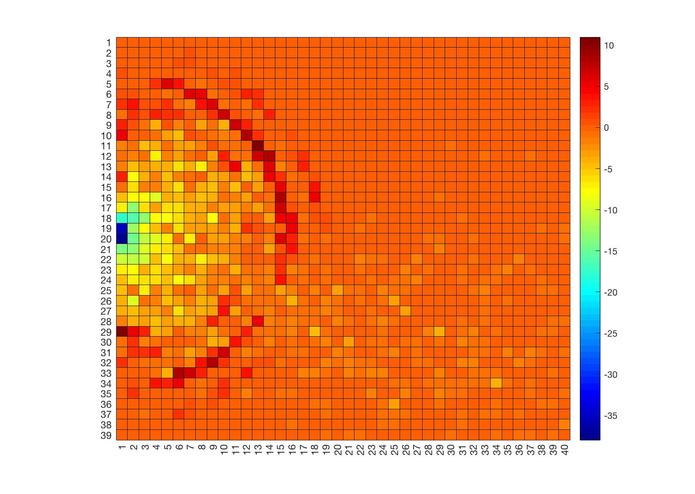

Example of one scan

-

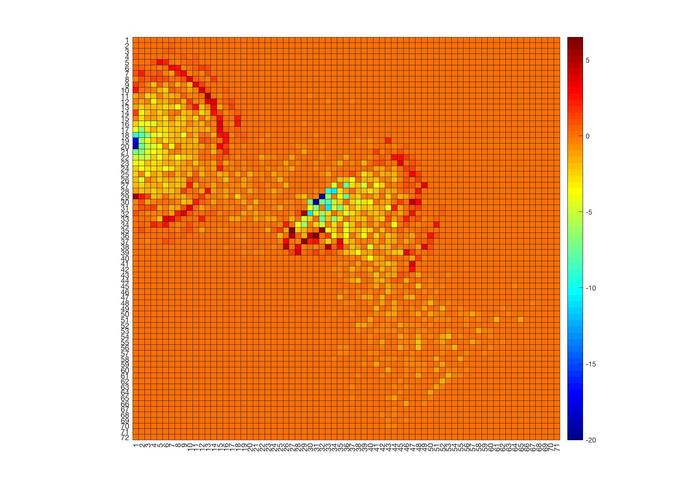

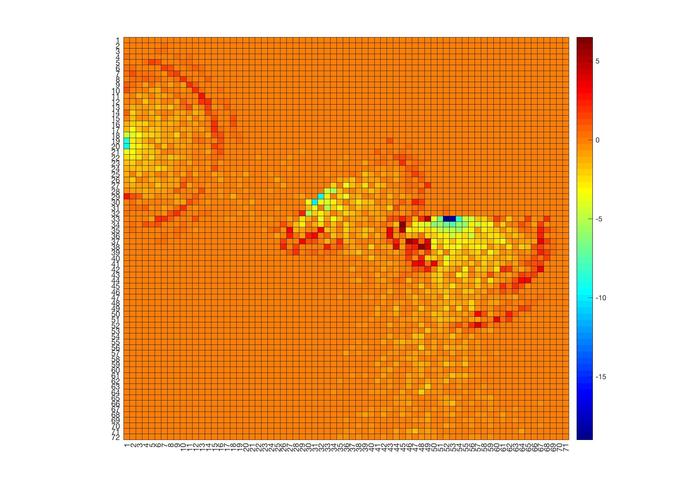

Example of fusing two scans

-

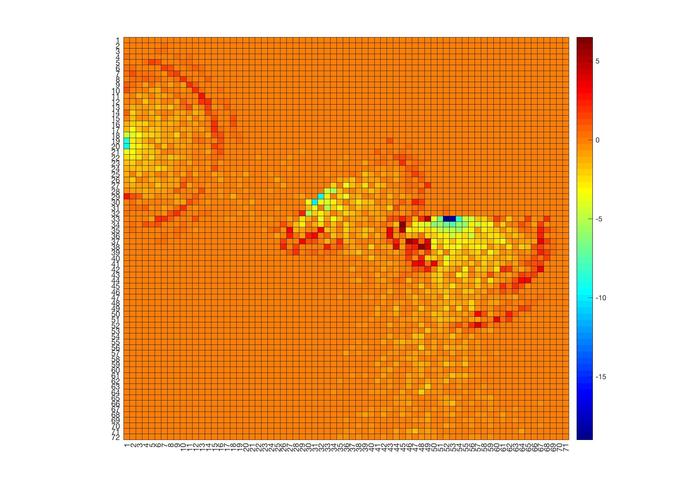

Example of fusing three scans

-

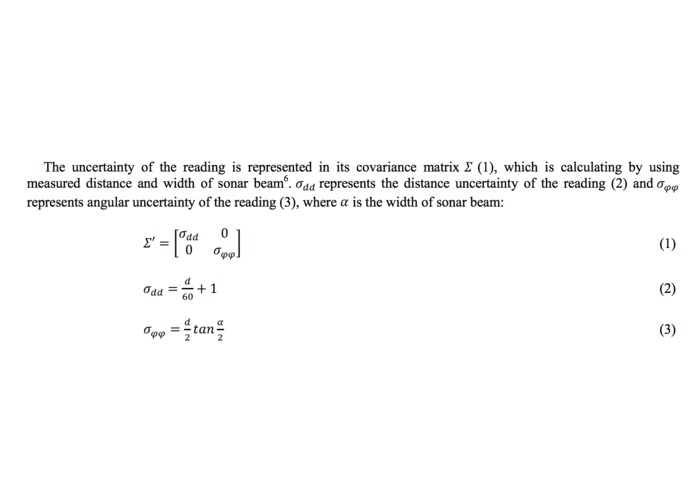

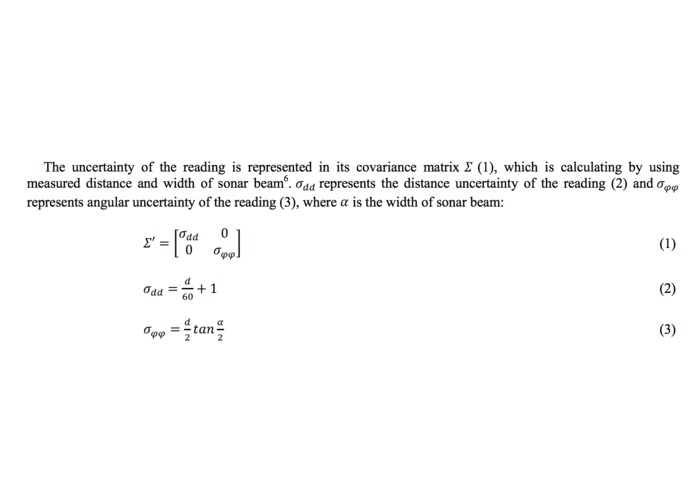

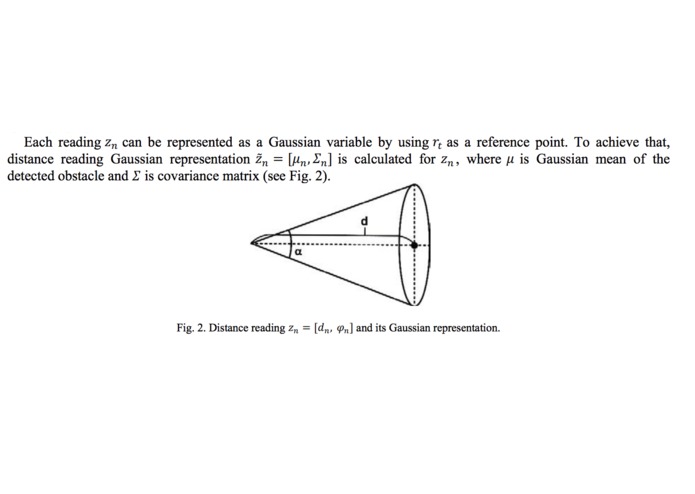

Modelling the uncertainty of an ultrasound beam (taken from "Probabilistic Mapping with Ultrasonic Distance Sensors" by Andersone I.)

-

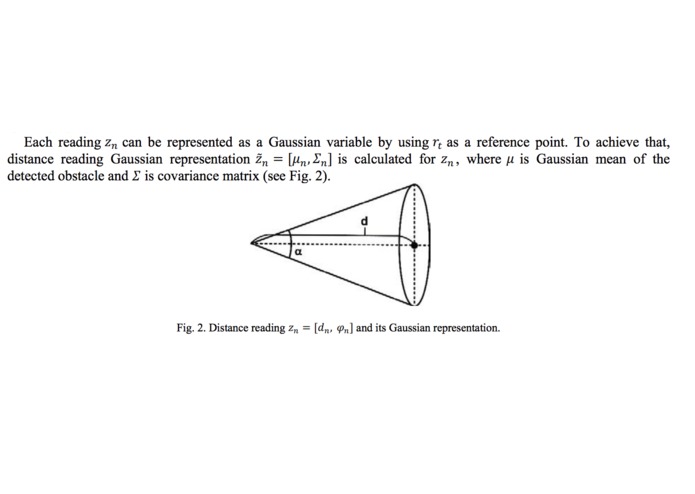

Uncertainty of an ultrasound beam (taken from "Probabilistic Mapping with Ultrasonic Distance Sensors" by Andersone I.)

-

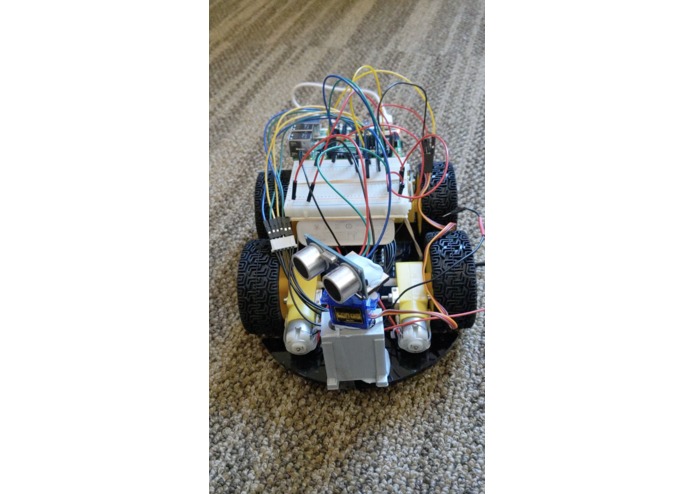

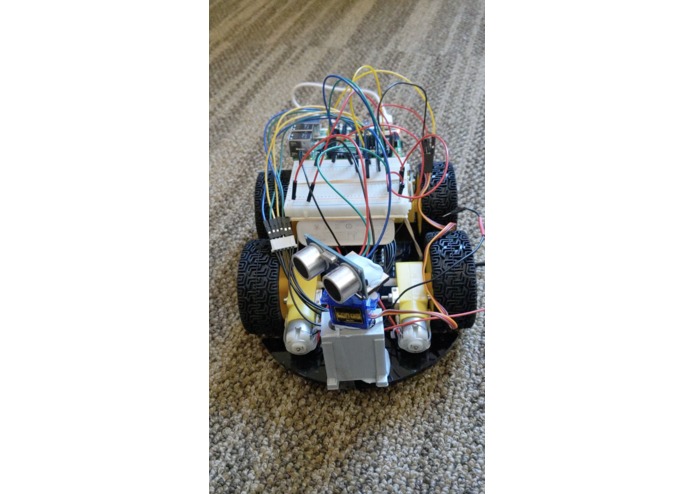

Final Bot!

Inspiration

The motivation for this project is to mimic a LIDAR using an ultrasound sensor and to use it for probabilistic mapping of the environment. LIDARs are usually expensive, and I wanted to make a more affordable alternative. The bot is just a proof of concept and could possibly be scaled up for unmanned exploration in the future.

What it does

The bot is controlled remotely with movement commands and a command that starts an ultrasound scan. In a single scan, the ultrasound sensor is rotated in the xy plane and the distance returned by the sensor at each angle is recorded.

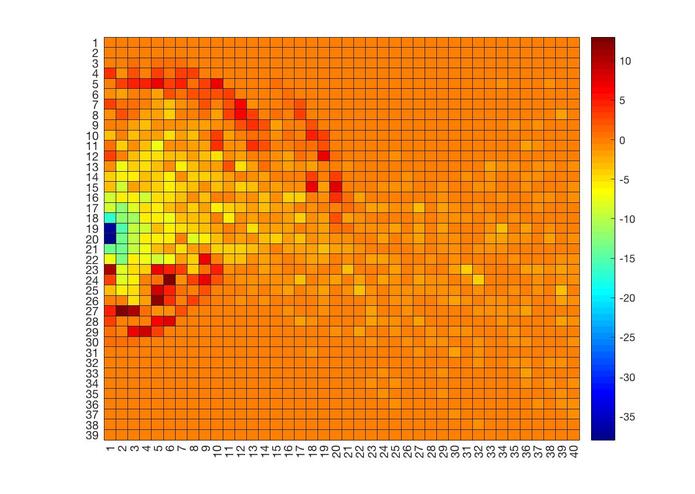
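As a rough illustration of what a single scan contains, the sketch below converts (angle, distance) pairs into xy points in the robot frame. It is written in Python rather than the MATLAB used for the project, and the function name `scan_to_points`, the centimetre units, and the use of `None` for a missing echo are all assumptions made for this example.

```python
import math

def scan_to_points(readings):
    """Convert (servo_angle_deg, distance_cm) pairs into (x, y) points
    in the robot frame, with the sensor at the origin.
    The input layout is hypothetical, not the project's actual format."""
    points = []
    for angle_deg, distance_cm in readings:
        if distance_cm is None:  # assumed marker for "no echo at this angle"
            continue
        theta = math.radians(angle_deg)
        points.append((distance_cm * math.cos(theta),
                       distance_cm * math.sin(theta)))
    return points

# Three readings from one sweep; the last angle returned no echo.
scan = [(0, 100.0), (90, 50.0), (180, None)]
points = scan_to_points(scan)
```

Each point here is only the centre of the beam; the width of the beam is what the uncertainty model has to account for.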

We know that the ultrasound sensor has a cone-shaped uncertainty due to the width of the beam. To model this uncertainty, I represent each distance point as a Gaussian random variable and use scan matching to reduce uncertainty in the mapping process: when two sensor scans overlap, we can say with higher certainty whether or not there is a surface in the overlapping region. Furthermore, I use space carving methods to record areas of free space: when we receive a distance reading from the ultrasound sensor, we know that the space in front of that surface should be empty. I then combine these two methods to obtain a probabilistic heatmap of where detected surfaces may be in the environment.
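To make the idea concrete, here is a minimal Python sketch of how one reading could contribute surface and free-space evidence to a single grid cell. It is not the project's MATLAB code: the function `cell_evidence`, the unnormalised Gaussian weighting, and the spread values `sigma_range_cm` and `sigma_angle_deg` are all assumptions chosen for illustration.

```python
import math

def gaussian(x, mean, sigma):
    """Unnormalised Gaussian weight with a peak value of 1."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2)

def cell_evidence(cell_xy, beam_angle_deg, measured_cm,
                  sigma_range_cm=3.0, sigma_angle_deg=7.5):
    """Return (surface, free) evidence in [0, 1] for one cell from one reading.
    The sensor sits at the origin; the sigma defaults are illustrative guesses."""
    x, y = cell_xy
    r = math.hypot(x, y)
    bearing = math.degrees(math.atan2(y, x))
    # Angular offset from the beam axis, wrapped to [-180, 180).
    offset = (bearing - beam_angle_deg + 180.0) % 360.0 - 180.0
    in_beam = gaussian(offset, 0.0, sigma_angle_deg)   # cone-shaped beam
    near_echo = gaussian(r, measured_cm, sigma_range_cm)
    surface = in_beam * near_echo
    # Space carving: cells inside the beam and closer than the echo are free.
    free = in_beam * (1.0 - near_echo) if r < measured_cm else 0.0
    return surface, free

on_surface = cell_evidence((100.0, 0.0), 0.0, 100.0)   # at the echo distance
in_front = cell_evidence((50.0, 0.0), 0.0, 100.0)      # between sensor and echo
off_axis = cell_evidence((0.0, 50.0), 0.0, 100.0)      # 90 degrees off the beam
```

Evaluating this for every cell of a grid gives a heatmap for one reading; cells far from the beam axis receive almost no evidence either way.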

Next, I also attempt to build the map cumulatively by stitching these scans together. Given the position and heading at which each scan was made, I can piece the generated heatmaps together into a bigger heatmap.
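The stitching step can be sketched as a rigid transform from the robot frame into a shared world frame, followed by accumulation into a global grid. This Python example is only an illustration: the pose layout `(x, y, heading_deg)`, the 5 cm cell size, and the choice to simply add evidence values are assumptions, not details confirmed by the project.

```python
import math
from collections import defaultdict

def to_world(point_xy, pose):
    """Rotate and translate a robot-frame point into the world frame.
    pose = (x, y, heading_deg) of the robot when the scan was taken."""
    px, py = point_xy
    x0, y0, heading_deg = pose
    h = math.radians(heading_deg)
    return (x0 + px * math.cos(h) - py * math.sin(h),
            y0 + px * math.sin(h) + py * math.cos(h))

def accumulate(global_map, local_cells, pose, cell_cm=5.0):
    """Add local heatmap values into a global grid keyed by integer cell index.
    Summing the values is an assumed, deliberately simple combination rule."""
    for point_xy, value in local_cells:
        wx, wy = to_world(point_xy, pose)
        key = (math.floor(wx / cell_cm), math.floor(wy / cell_cm))
        global_map[key] += value
    return global_map

global_map = defaultdict(float)
# Scan 1 from the origin sees a surface about 1 m ahead.
accumulate(global_map, [((101.0, 1.0), 0.8)], pose=(0.0, 0.0, 0.0))
# Scan 2 from a different pose sees the same surface from the side.
accumulate(global_map, [((50.0, 0.0), 0.6)], pose=(102.0, -48.0, 90.0))
```

Both readings land in cell `(20, 0)`, so the evidence for a surface there grows with each overlapping scan.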

Although the ultrasound sensor is not as accurate as a LIDAR, this project aims to tackle this drawback by fusing multiple ultrasound readings probabilistically and maximising the information we can obtain from each scan.

How I built it

I built a 4WD robot and mounted an ultrasound sensor on the arm of a servo. On the 'measure' command, the servo arm rotates so that distance readings can be captured at multiple angles.

Mapping and data processing are currently still done in MATLAB.

Challenges I ran into

Initially, I tried using a Kalman filter to fuse different scans together. However, I realised that it would be hard to incorporate the free-space information from the space carving result into these fused scans. Existing methods weight the fused variables and use an occupancy grid to store the free-space points. However, I also wanted to model the uncertainty of the free space, so I eventually used Gaussian random variables to represent both the free-space points and the surface points.
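For reference, fusing two Gaussian estimates of the same quantity reduces to a Kalman-style measurement update. The Python sketch below shows that rule for two range estimates of one surface; the function name `fuse` and the example numbers are made up for illustration, and it is not claimed to be the exact rule used in the project.

```python
def fuse(mean_a, var_a, mean_b, var_b):
    """Fuse two independent Gaussian estimates of the same quantity.
    Equivalent to a Kalman update where estimate B is the measurement."""
    gain = var_a / (var_a + var_b)        # trust B more when A is uncertain
    mean = mean_a + gain * (mean_b - mean_a)
    var = (1.0 - gain) * var_a            # never larger than either input variance
    return mean, var

# Two scans place the same surface at 100 cm and 104 cm, each with variance 9.
fused_mean, fused_var = fuse(100.0, 9.0, 104.0, 9.0)
```

With equal variances the fused mean is the midpoint, 102 cm, and the variance halves to 4.5, which is the sense in which overlapping scans reduce uncertainty.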

Hardware challenges include the slow connection when using ssh to send remote commands. The project was also limited by the accuracy of the servo: I currently only have an open-loop servo on hand, which makes the angle at which each ultrasound reading is taken very uncertain too.

What I learned

I learnt a lot about using the Raspberry Pi and about implementing my theoretical ideas.

What's next for bot

The initial idea was to also implement a Kalman filter for localisation of the bot. If we map the environment once when we start in an unknown location, we get a rough estimate of the ultrasound values we should expect based on this map. A possible future extension is to add an accelerometer and use an unscented Kalman filter to combine ultrasound feedback as we move (using our previous states to estimate the ultrasound value we should expect and calculating the error) with position estimates from the accelerometer and the timing of our commands.
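The predict-and-correct loop described here can be sketched with a much simpler filter than the unscented one proposed. The Python example below is a plain linear 1D Kalman filter, assuming the bot drives straight towards a wall whose position is already known from the map; every name and number in it (`wall_pos`, the noise values `q` and `r`, the commanded velocity) is hypothetical.

```python
def predict(x, p, velocity, dt, q):
    """Dead-reckon the position from the commanded velocity and its duration.
    q is the assumed process noise added by each move."""
    return x + velocity * dt, p + q

def update(x, p, measured_range, wall_pos, r):
    """Correct the position using the ultrasound range to a mapped wall.
    r is the assumed variance of the ultrasound reading."""
    expected_range = wall_pos - x                 # what the map says we should see
    innovation = measured_range - expected_range  # the error mentioned in the text
    h = -1.0                                      # d(range)/d(position)
    k = p * h / (h * p * h + r)                   # Kalman gain
    return x + k * innovation, (1.0 - k * h) * p

x, p = 0.0, 4.0                                   # initial position (cm) and variance
x, p = predict(x, p, velocity=10.0, dt=1.0, q=1.0)
x, p = update(x, p, measured_range=88.0, wall_pos=100.0, r=5.0)
```

Dead reckoning puts the bot at 10 cm, the range reading alone would put it at 12 cm, and the filter settles on 11 cm with the variance reduced from 5 to 2.5. An unscented filter would follow the same two steps while handling the nonlinear relationship between pose and expected range.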
