View today's trending topics

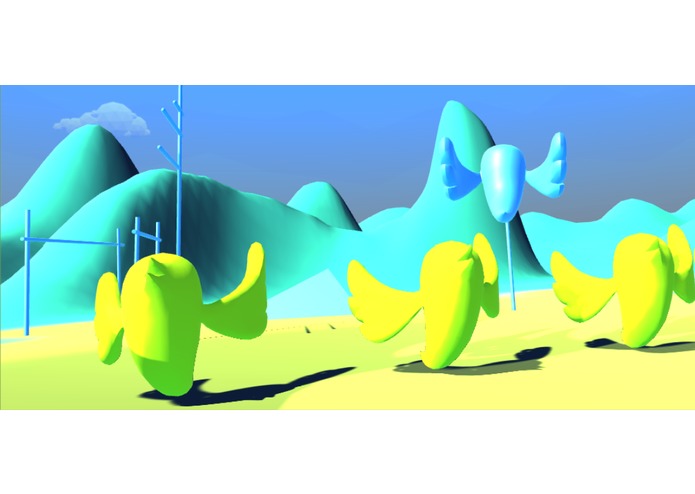

Welcome to the Twitter Universe!

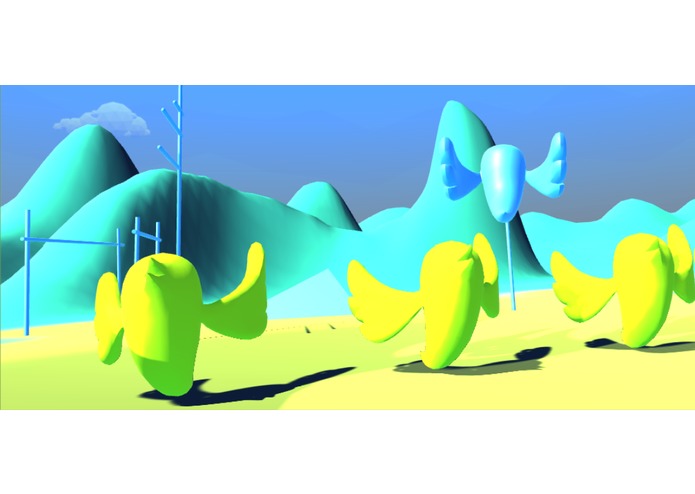

Four Twitter birds read you four trending tweets from the topic you just selected

Point your hand at this block and pull the trigger to start speaking about your opinion!

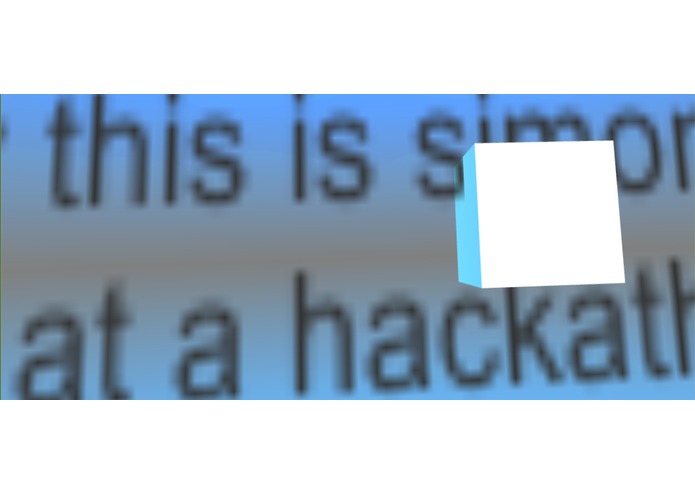

Your message has been recognized and is shown in front of you!

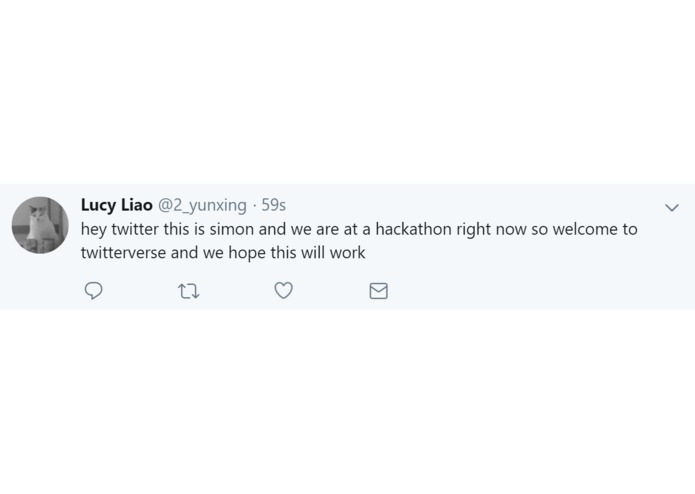

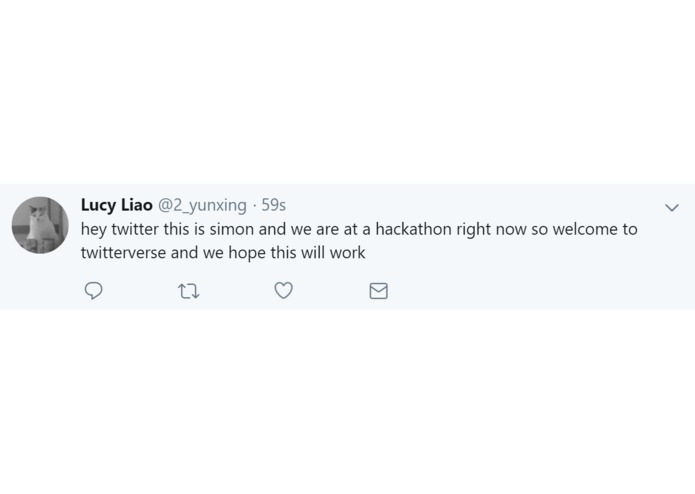

Your message/tweet is published on your Twitter account in real time!

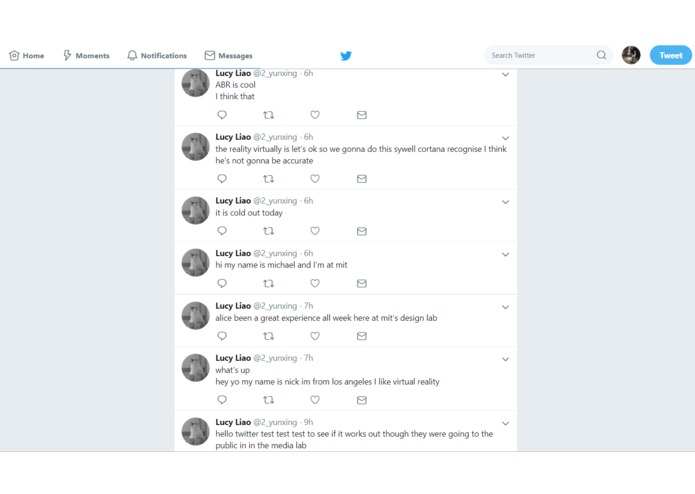

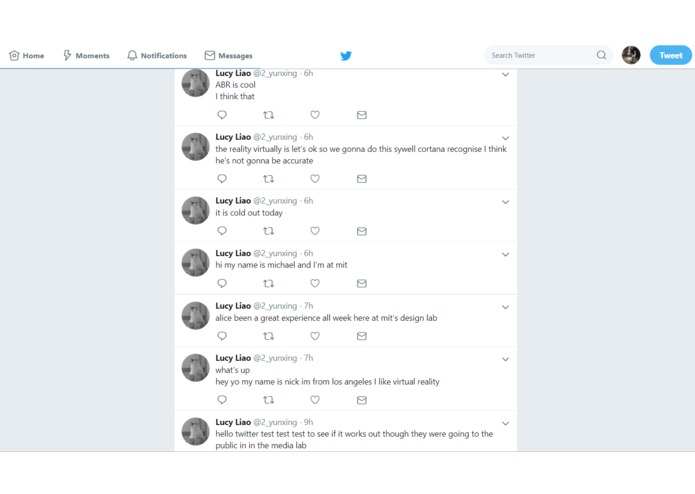

Tweets published by visitors at the hackathon demo day (screenshot taken at 9 p.m. on Jan 21, 2019)

The team (Austin Edelman, Lucy Yunxing Liao, Dana Elkis, Simon Zirui Guo, Rebecca Skurnik)

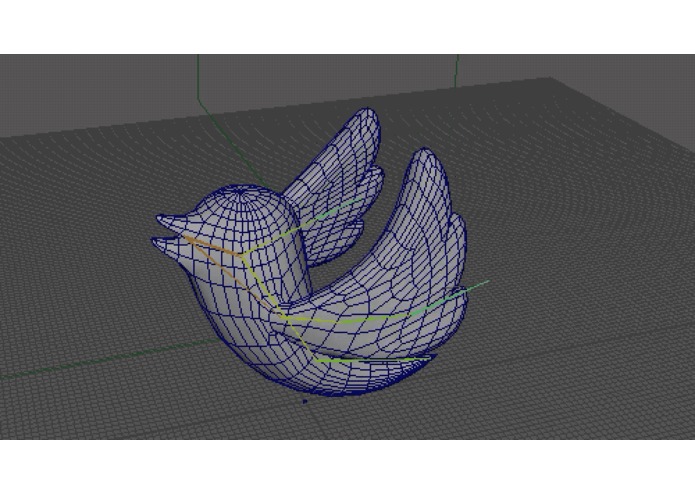

Animation of a Twitter bird flapping its wings

Project logo

Team info

This project was created from Jan 18-20, 2019 at the Reality Virtually Hackathon at the MIT Media Lab.

- Simon (Zirui) Guo: I'm a high school senior currently studying in Toronto, Canada, and I love attending hackathons to explore new technologies and build cool projects. In particular, I want to explore better interactions between humans and machines.

- Rebecca Skurnik: Rebecca is a master's student at the Interactive Telecommunications Program at NYU. She is passionate about creative technology and working at the intersection of the physical and digital worlds.

- Lucy Liao: I'm a sophomore majoring in computer science at MIT interested in learning more about virtual reality.

- Austin Edelman: A sophomore at MIT interested in creating amazing virtual worlds that people can live in. Consequently, I decided to train and become a biomedical engineer, majoring in computer science and electrical engineering and choosing a pre-medical track.

- Dana Elkis: Dana Elkis is a graphic designer and visual artist from Tel Aviv, Israel, currently living in New York City. She is a master's student at the Interactive Telecommunications Program at NYU.

Judging information

- Location: Table 22, 6th-floor Vive Lounge, Media Lab E14.

- Category: Art, Media and Entertainment.

Inspiration

We believe immersive technologies have the potential to upgrade our everyday experiences, and we wanted to try that with social media. Posting on social media has become mechanical and feels the same on every platform. We want to bring the experience to the next level by immersing users in a Twitter universe and making them feel that they are a part of the Twitter community.

What it does

TwitterVRse is a virtual reality experience that seeks to transform the way we interact with the social media platform Twitter. We have created a VR interface that allows users to access tweets through virtual birds, interact with tweets like physical media, and post their own tweets through voice input.

User experience

The video above demonstrates a complete session.

- The user puts on an Oculus Rift and uses controllers to open TwitterVRse.

- The top 5 trending topics are listed in descending order of popularity. A topic's rank determines the number of birds in its flock. The user points at "continue" to enter the next scene.

- The Twitter universe is presented with 5 flocks of birds scattered around the environment. The flocks have different numbers of birds, corresponding to the 5 trending topics listed before (e.g. the flock with 5 birds represents the top trending topic). The user points at the flock they want to investigate and presses the trigger.

- The birds in the flock come to the user and, one by one, read out tweets by converting text to speech. Once the user has finished listening to the tweets, they can press the trigger to enter the next scene.

- In this scene, the user can record their own opinion by selecting the record button and holding down the trigger while speaking. The user's voice is recognized as text and posted to their Twitter account immediately.
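The mapping from trending rank to flock size described above can be sketched as follows. This is a minimal illustration in Python, not the team's Unity code: the `Flock` class, the `build_flocks` function, and the placeholder topic names are all hypothetical, and the only rule taken from the write-up is that the top trending topic gets the flock with 5 birds.

```python
from dataclasses import dataclass


@dataclass
class Flock:
    """Hypothetical container for one flock in the scene."""
    topic: str
    bird_count: int


def build_flocks(trending_topics, max_flocks=5):
    """Map topics (most popular first) to flocks.

    The most popular topic gets the most birds, and each following
    topic gets one bird fewer.
    """
    top = trending_topics[:max_flocks]
    return [
        Flock(topic=topic, bird_count=len(top) - rank)
        for rank, topic in enumerate(top)
    ]


# Placeholder topics, already sorted by popularity (assumed input shape).
flocks = build_flocks(
    ["#topicA", "#topicB", "#topicC", "#topicD", "#topicE", "#topicF"]
)
for flock in flocks:
    print(flock.topic, flock.bird_count)
```

With six input topics, only the first five are kept, so the flock sizes run from 5 down to 1.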

How we built it

- Hardware: Oculus Rift system (headset, Touch controllers, trackers)

- Engine: Unity

- SDKs: Oculus Integration

- APIs: Twitter API (Twity), Microsoft Cortana (Windows Speech Recognition)

- Assets: Oculus Integration, Boxophobic

- Modeling and animations: Cinema 4D, Autodesk Maya, Adobe Illustrator

Challenges we ran into

- Deciding on the platform to use: The hackathon offered more platforms than we had time to experiment with, and we were not sure whether to make this a mobile, VR, AR, or MR experience. We settled on VR. We originally chose the Oculus Go, but the controller SDK had issues caused by Unity versioning and the prototyping cycle was too lengthy, so we turned to the more stable option of the Oculus Rift.

- Getting used to Unity and VR development: Most of the team had little prior experience with VR development. We had to learn many Unity-specific concepts, such as ray casting, setting the user camera position, switching between scenes, and spawning prefabs with properties.

- Pulling and posting data with Twitter and incorporating a voice engine (STT and TTS): We spent a lot of time getting the Twitter API to work in Unity, because the Twitter Unity integration is specific to mobile. Luckily, we found Twity, a C# Twitter API client. We were also stuck on finding a speech-to-text engine, since the Google Cloud API and IBM Watson did not work well with Unity. We found a workaround using Windows Cortana, since the headset is connected to a Windows machine. Because we were limited to free assets, the only text-to-speech engine we found required a different .NET version. We had to give up coding that feature and played pre-recorded audio files instead.

- Bird animations: We found a free asset of birds with flapping wings, yet the algorithm was too complex to be included in our application. We then tried to animate our own bird flapping its wings in Maya and import it as an animation. However, the animation import did not work.

Accomplishments that we are proud of

- Getting every component working together: In the end, we put models, environment, interactions, and backend into each scene and connected them seamlessly. We completed most of the features that we proposed.

- Designing and constructing a complicated multi-scene experience: We needed to carry data between scenes, and we did that by storing the data in instances of the prefab models.

What we learned

- Learning Unity and the full spectrum of the VR development process: For most of us, this is our first VR application. Thanks to the workshops and mentors, we overcame lots of issues with versioning, importing, and structuring components.

- Learning how to work as a team with diverse skill sets: We had clear roles: Austin on audio, Lucy on Twitter APIs, Simon on interactions and putting the Unity project together, Dana on design, and Rebecca on animations. We cooperated well and held hourly updates, and everything worked together in the end.

- Exploring the best practices of developing projects: Before starting to construct the project, we played with the available tools to test their technical viability. We drew a feature tree and a UX diagram to lay out the development road map. Then we built features one by one as components for easier debugging. We kept the core Unity environment for testing on one machine and used many feature branches to avoid Git issues.

What's next for TwitterVRse

- Better UI and UX: We would like to get the flapping wing animation working and let the birds move across the scene. Many of the interactions were developed from simple prototypes and their design can be improved. In-experience instructions would also help guide the user through the experience.

- Platform support: We would like to add support for the Oculus Go, as it is designed as a new platform for interacting with social media and may soon be the most popular VR device. A mobile AR version would also make the experience more accessible.

- Location-based visualizations: We also had the idea to see birds migrate based on the locations of the tweets, as a geo-based visualization of trending discussions.

Credit and Acknowledgement

We would like to particularly thank John La from Oculus, Wiley Corning from the MIT Media Lab, Louis DeScioli from Google, Madison Hight from Microsoft, and Jasmine Roberts from Unity for their mentorship during the hackathon.
