-

-

Sophie, Buzz, and Joe as rendered by Google Nano Banana

-

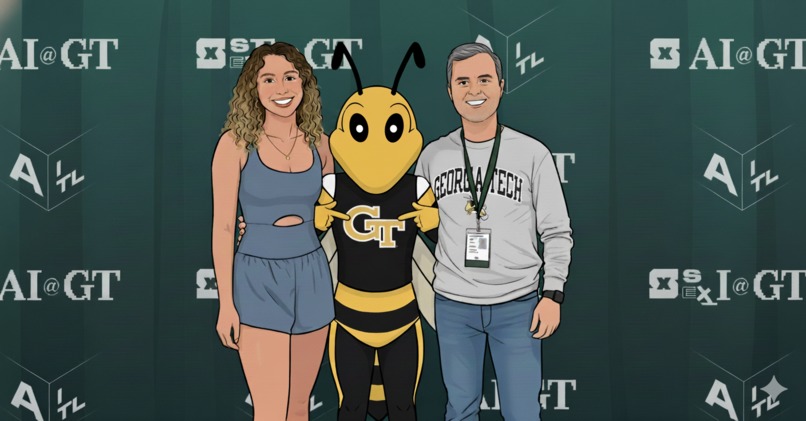

Truth Verifier system overview

-

Veo 3 project video featuring avatars of Sophie & Joe

-

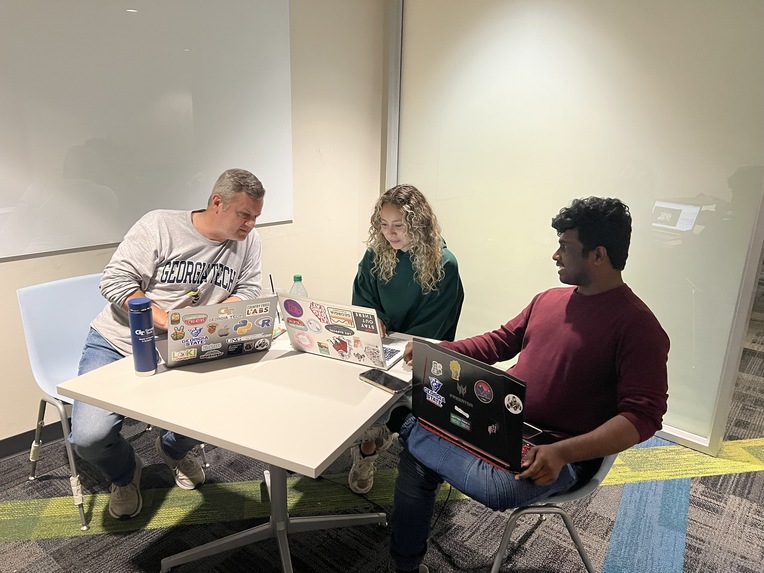

Project team - Sophie Castellon (left) & Joe Domaleski (center). Vinay Revanuru (right - visitor)

-

Our project team hard at work.

-

Joe codes late into the night

-

Joe and Sophie finish the project!

-

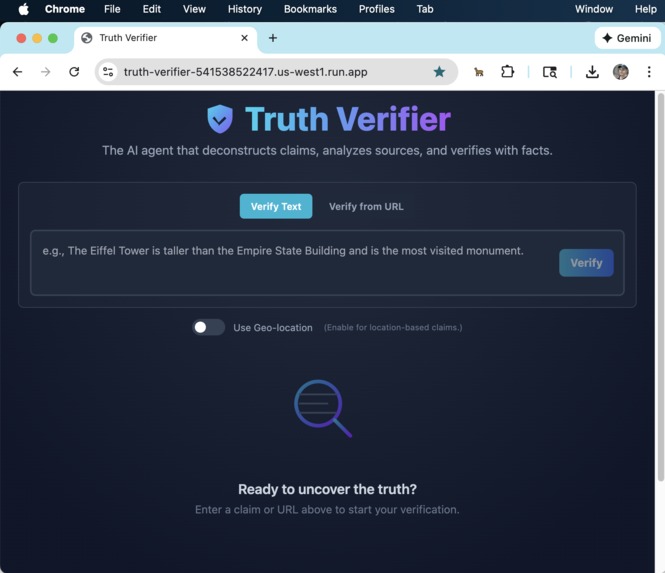

Screenshot of our Truth Verifier AI web application

Inspiration

In today's information-saturated world, the line between fact and fiction is increasingly blurred. Misinformation spreads faster than truth, and simple "true/false" labels often fail to capture the nuance of complex issues. We were inspired to build a tool that does more than give a verdict: we wanted an AI partner for critical thinking. Our goal was to create an application that not only verifies claims but also illuminates the information ecosystem surrounding them, so users can see the full context, understand source biases, and ultimately make more informed decisions.

What it does

Truth Verifier is a sophisticated, AI-powered fact-checking agent. Users can provide a simple text claim or the URL of an entire news article for a deep, real-time analysis. The AI agent then performs a comprehensive, multi-step investigation:

- Deconstructs the Claim: It intelligently breaks down complex statements or entire articles into distinct, verifiable sub-claims.

- Gathers Evidence: Using Google Search and Google Maps, the agent gathers real-time information to ground its analysis in verifiable facts.

- Analyzes Sources: It evaluates the credibility of its most definitive sources, assessing their bias (e.g., Left-leaning, Neutral, Right-leaning) and tone (e.g., Objective, Opinionated).

- Delivers an Interactive Report: The findings are presented in a polished, easy-to-understand report, featuring:

  - A "Truth Meter" for an at-a-glance confidence score.

  - A detailed, synthesized explanation of its reasoning.

  - Our unique "Source Landscape" chart, which visually plots the sources on a Bias vs. Tone grid, providing a powerful overview of the media landscape.

- Transparent Process: While the agent works, the UI displays a dynamic, step-by-step visualization of its "thinking" process, making the complex backend operations transparent and engaging for the user.
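The "Source Landscape" chart described above amounts to mapping each source's bias and tone labels onto grid coordinates. The following is a minimal sketch of that mapping, not the project's actual code: the `AnalyzedSource` and `GridPoint` shapes, the field names, and the coordinate values are assumptions made for illustration. Only the bias and tone categories come from the description above.

```typescript
// Hypothetical data model: the write-up names the categories, not the types.
type Bias = "Left-leaning" | "Neutral" | "Right-leaning";
type Tone = "Objective" | "Opinionated";

interface AnalyzedSource {
  title: string;
  url: string;
  bias: Bias;
  tone: Tone;
}

interface GridPoint {
  x: number; // -1 = left-leaning, 0 = neutral, 1 = right-leaning
  y: number; // 0 = opinionated, 1 = objective
  label: string;
}

// Assumed coordinate assignments for the Bias (x) and Tone (y) axes.
const BIAS_X: Record<Bias, number> = {
  "Left-leaning": -1,
  "Neutral": 0,
  "Right-leaning": 1,
};

const TONE_Y: Record<Tone, number> = {
  "Objective": 1,
  "Opinionated": 0,
};

// Convert analyzed sources into points that a chart component could plot.
function toGridPoints(sources: AnalyzedSource[]): GridPoint[] {
  return sources.map((s) => ({
    x: BIAS_X[s.bias],
    y: TONE_Y[s.tone],
    label: s.title,
  }));
}
```

Keeping the label-to-coordinate tables separate from the chart component means the categories can be extended (for example, with finer bias gradations) without touching the rendering code.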

How we built it

This project was built using a full suite of Google Gemini tools, with Google AI Studio serving as our primary development partner from concept to completion.

- AI Core: The application's brain is an agentic workflow powered by the Google Gemini API (specifically the gemini-2.5-flash model, chosen for its balance of speed and reasoning). We used careful prompt engineering and a strict JSON schema to guide the model through its multi-step analysis. It leverages Retrieval-Augmented Generation (RAG) by grounding all its findings in real-time data from its integrated tools: Google Search and Google Maps.

- Frontend: The UI is a responsive and modern single-page application built with React.js and TypeScript, ensuring a robust and type-safe codebase.

- Styling: All components are styled with Tailwind CSS for rapid, utility-first design and a polished, professional aesthetic.

- Development Environment: The entire application, from the initial boilerplate to complex components, was co-developed with the AI in Google AI Studio. It was our pair programmer, our debugger, and our documentation writer.

- AI-Generated Project Video: To fully embrace an AI-native workflow, we used Google's Veo 3.1 model to generate our project's overview video. By providing a descriptive prompt, we transformed our concept into a dynamic visual presentation with cartoon avatars, demonstrating how generative AI can accelerate not just coding, but the entire creative process of a hackathon submission.
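To illustrate what relying on a strict JSON schema can look like on the client side, here is a hedged sketch of validating the model's text response before rendering it. The `FactCheckReport` shape and every field name in it are hypothetical; the actual schema used by Truth Verifier is not shown in this write-up. The sketch deliberately avoids any Gemini API calls and only checks a raw JSON string.

```typescript
// Hypothetical report shape; the real schema is not given in the write-up.
interface SourceAssessment {
  url: string;
  bias: string; // expected: "Left-leaning" | "Neutral" | "Right-leaning"
  tone: string; // expected: "Objective" | "Opinionated"
}

interface FactCheckReport {
  confidence: number; // assumed 0-100 scale for the "Truth Meter"
  explanation: string;
  subClaims: string[];
  sources: SourceAssessment[];
}

const BIASES = ["Left-leaning", "Neutral", "Right-leaning"];
const TONES = ["Objective", "Opinionated"];

function isRecord(v: unknown): v is Record<string, unknown> {
  return typeof v === "object" && v !== null && !Array.isArray(v);
}

function isSource(v: unknown): v is SourceAssessment {
  if (!isRecord(v)) return false;
  const url = v["url"];
  const bias = v["bias"];
  const tone = v["tone"];
  return (
    typeof url === "string" &&
    typeof bias === "string" && BIASES.indexOf(bias) !== -1 &&
    typeof tone === "string" && TONES.indexOf(tone) !== -1
  );
}

// Parse the raw model output and reject anything that does not match the
// assumed schema, so malformed responses never reach the UI.
function parseReport(raw: string): FactCheckReport {
  const data: unknown = JSON.parse(raw); // throws SyntaxError on malformed JSON
  if (!isRecord(data)) throw new Error("Report must be a JSON object");

  const confidence = data["confidence"];
  const explanation = data["explanation"];
  const subClaims = data["subClaims"];
  const sources = data["sources"];

  if (typeof confidence !== "number" || confidence < 0 || confidence > 100) {
    throw new Error("confidence must be a number between 0 and 100");
  }
  if (typeof explanation !== "string" || explanation.trim() === "") {
    throw new Error("explanation must be a non-empty string");
  }
  if (!Array.isArray(subClaims) || !subClaims.every((c) => typeof c === "string")) {
    throw new Error("subClaims must be an array of strings");
  }
  if (!Array.isArray(sources) || !sources.every(isSource)) {
    throw new Error("sources must be an array of valid source assessments");
  }
  return { confidence, explanation, subClaims, sources };
}
```

Validating at the boundary like this complements, rather than replaces, whatever schema enforcement the model API itself provides: the UI can then treat a parsed report as trustworthy in shape, if not in content.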

Challenges we ran into

Building a reliable AI agent is a journey of discovery and refinement. Our biggest challenges were:

- Taming AI Hallucinations: In our initial tests, the model would sometimes generate plausible but incorrect information. We solved this by rigorously enforcing the use of Google Search and Google Maps as grounding tools and explicitly instructing the model in its prompt to base all its explanations on the retrieved data.

- Mastering Google AI Studio: Moving from simple "one-shot" prompts to a complex, multi-step agentic workflow with strict JSON output was a significant learning curve. It required iterative prompt engineering and a deep understanding of how to structure instructions for the model to follow reliably.

- Bridging the Rendering Gap: We discovered a jarring delay between the AI finishing its work and the results appearing on screen. We overcame this by synchronizing our UI's loading state with the application's rendering process, creating a new "Generating Report" step that seamlessly bridges the client-side processing time.

- Understanding Tool Capabilities: We learned that simply giving an AI a tool doesn't guarantee it will be used effectively. Through experimentation, we refined our prompts to guide the model on when and how to use its tools, drastically improving the quality of its output.

- Video Generation Iterations: Even with the most advanced AI video generation models like Veo 3, generating videos is challenging, time-consuming, and iterative.
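The "Bridging the Rendering Gap" fix above can be pictured as a small state machine in which the loading indicator stays active until the report has actually rendered, not merely until the AI response arrives. The sketch below is one possible way to model that, under assumptions: the step names, the event names, and the reducer itself are hypothetical and are not taken from the project's code.

```typescript
// Hypothetical step labels; only "Generating Report" is named in the write-up.
const STEPS = [
  "Deconstructing claim",
  "Gathering evidence",
  "Analyzing sources",
  "Generating report",
] as const;

// Hypothetical events emitted by the agent call and by the report component.
type LoadingEvent = "stepCompleted" | "responseReceived" | "reportRendered";

interface LoadingState {
  stepIndex: number; // index into STEPS
  loading: boolean;
}

const initialState: LoadingState = { stepIndex: 0, loading: true };
const LAST = STEPS.length - 1;

function loadingReducer(state: LoadingState, event: LoadingEvent): LoadingState {
  if (!state.loading) return state; // finished: ignore late events
  switch (event) {
    case "stepCompleted":
      // Advance through the analysis steps, but never into the final step,
      // which is reserved for client-side rendering.
      return { ...state, stepIndex: Math.min(state.stepIndex + 1, LAST - 1) };
    case "responseReceived":
      // The AI is done, yet the report is not on screen: show the bridging step.
      return { ...state, stepIndex: LAST };
    case "reportRendered":
      // Only now is it safe to hide the loading UI.
      return { stepIndex: LAST, loading: false };
  }
}
```

A pure reducer like this could be plugged into React's `useReducer`, with the report component dispatching `"reportRendered"` from an effect once it has mounted.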

Accomplishments that we're proud of

Our greatest accomplishment is not just the final app, but the process we used to create it. We are incredibly proud of:

- The Source Landscape Chart: This is a novel data visualization that provides an immediate, intuitive understanding of the media ecosystem around a claim.

- The Transparent Agentic UI: We successfully turned a boring loading screen into an engaging, real-time look into the AI's "thinking" process, which builds user trust and understanding.

- Building a Fully Deployable App with AI: We pushed the boundaries of AI-assisted development. The AI didn't just write code snippets; it helped build the entire application, generated the deployment guide for Firebase, created technical explanations, and even helped write our Devpost story.

What we learned

This hackathon opened our eyes to the future of software development. We learned how to build a fully deployable app using AI, including the code, deployment, explanations, and even the project video. Most importantly:

- The key to reliable AI is grounding. By forcing the model to use tools and cite sources (RAG), we transformed it from a creative "storyteller" into a dependable "researcher."

- Prompt engineering is an art and a science. The nuance in how you instruct the model has a massive impact on the quality and reliability of the output.

- The role of the developer is evolving. With tools like Google Gemini, we spent less time on boilerplate code and more time on high-level architecture, user experience, and creative problem-solving. AI is the ultimate pair programmer.
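The grounding lesson above can also be checked mechanically after the model responds. As a hedged illustration only, the sketch below flags any finding that does not cite at least one web source; the `Finding` shape, its field names, and the URL check are assumptions, and nothing here reflects how Truth Verifier actually enforces grounding (the write-up attributes that to tool use and prompt instructions).

```typescript
// Hypothetical shape for one verified sub-claim.
interface Finding {
  subClaim: string;
  explanation: string;
  citedUrls: string[];
}

// Loose check that a citation looks like an http(s) URL.
function looksLikeHttpUrl(value: string): boolean {
  return /^https?:\/\/\S+$/i.test(value);
}

// Return the findings that cite no usable source, so the caller can
// retry the analysis or mark them as unverified.
function findUngrounded(findings: Finding[]): Finding[] {
  return findings.filter((f) => !f.citedUrls.some(looksLikeHttpUrl));
}
```

A check like this cannot confirm that a cited page supports the claim; it only catches the simplest failure, an explanation with no source behind it.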

What's next for Truth Verifier: An AI Fact-Checking Agent

This is just the beginning. We envision a future where Truth Verifier becomes a comprehensive suite for digital literacy. Our next steps include:

- Interactive Chat: Allowing users to ask follow-up questions about the analysis in a conversational chat interface.

- Multi-modal Analysis: Expanding the agent's capabilities to analyze claims made in images and videos within articles.

- Historical Tracking: Building a feature to track how information and claims evolve over time.

Built With

- browser-geolocation-api

- firebase

- firebase-hosting

- gemini-2.5-flash

- geolocation-api

- google-ai-studio

- google-maps

- google-search-api

- react.js

- tailwind-css

- tailwind.css

- the-google-gemini-api-(gemini-2.5-flash)

- typescript
