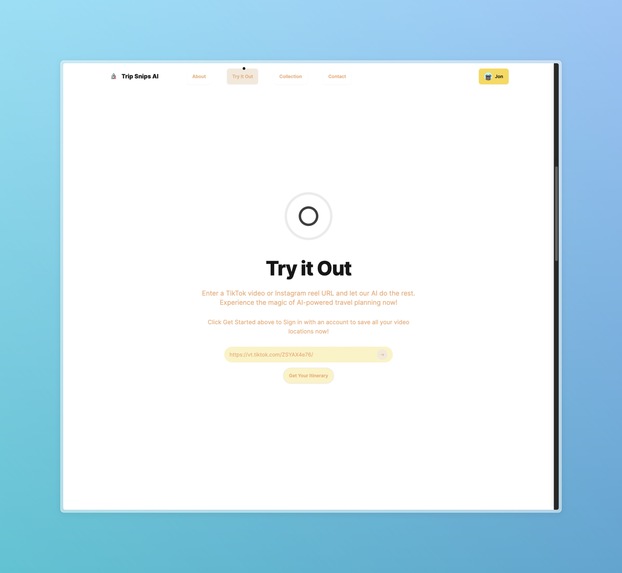

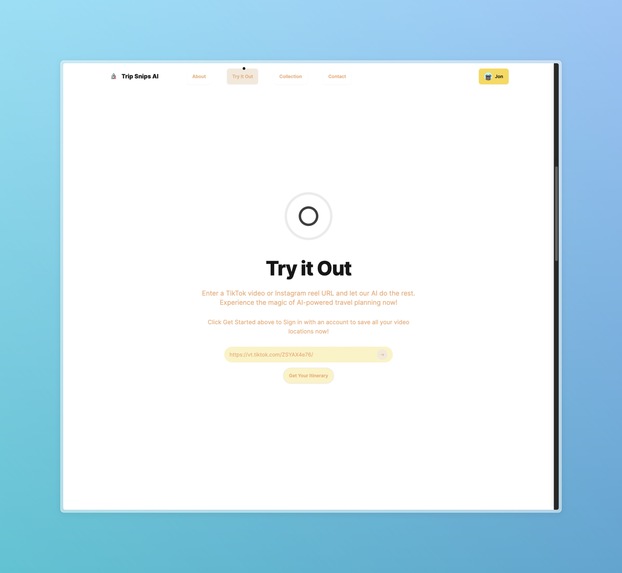

Try It Out screen, where users enter the URL of the video they want the AI to extract locations from

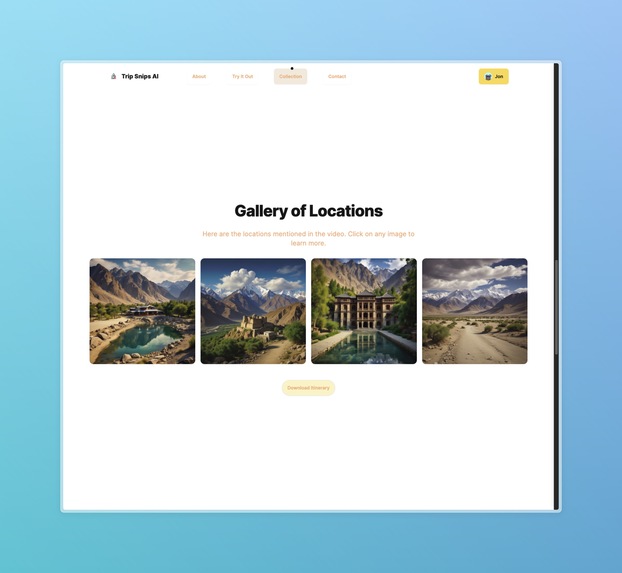

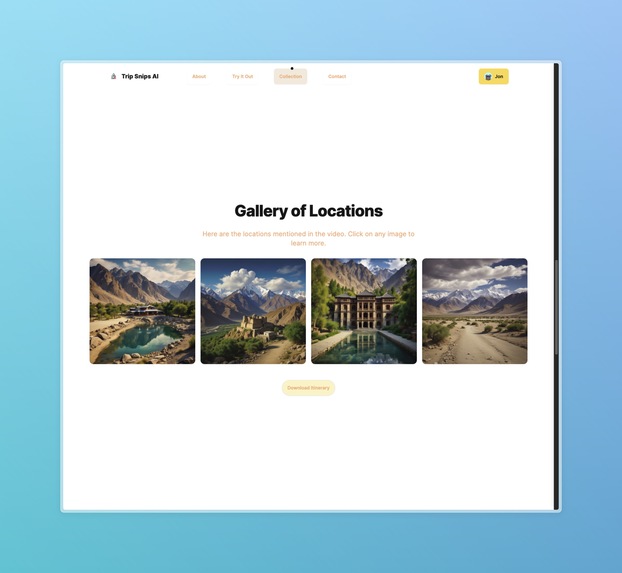

Gallery list of locations extracted from the video

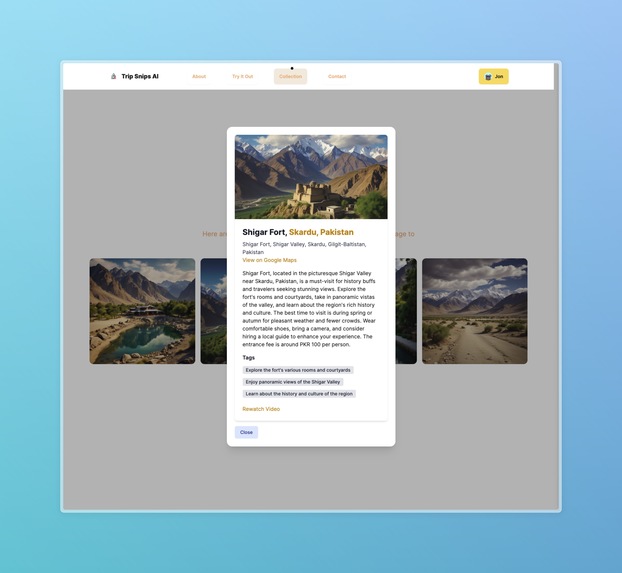

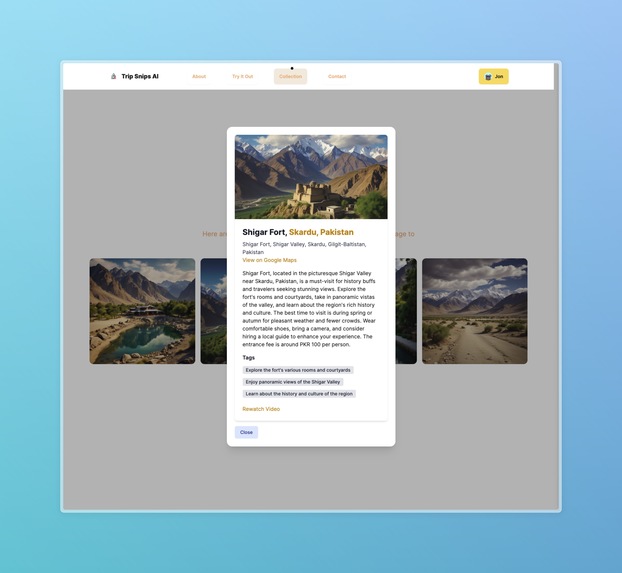

Each image is clickable and leads to an information-rich card of extracted details

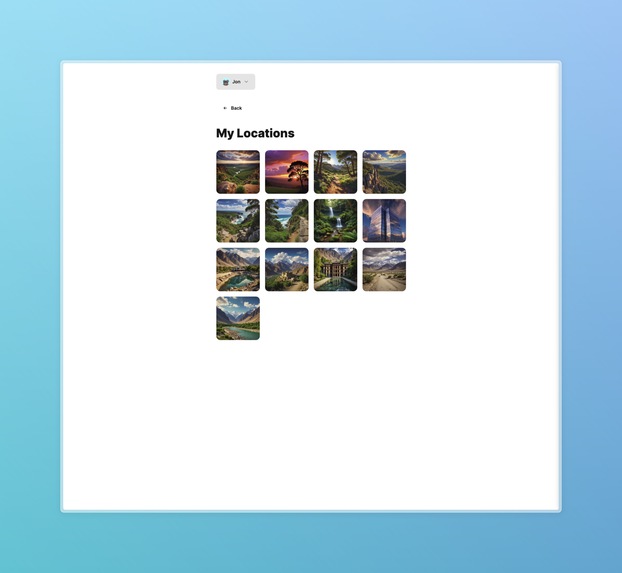

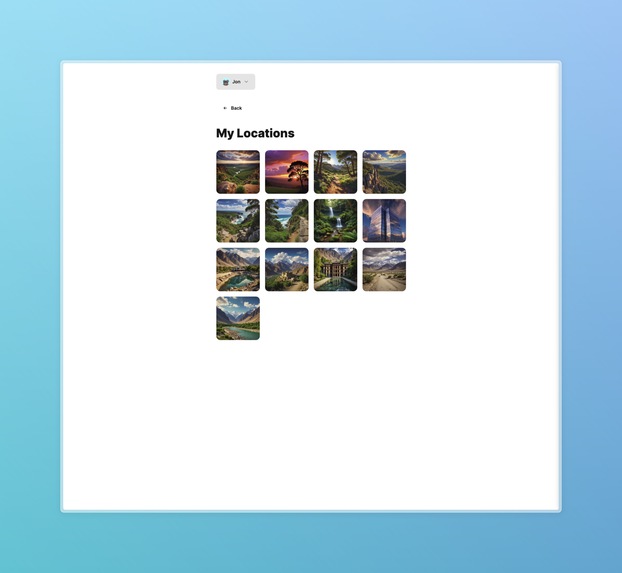

A user's gallery of all locations extracted from every video the user has entered

Inspiration

Traveling has always been a joy for us, but the planning phase often feels like a bottleneck. While there’s excitement in mapping out our adventures, the repetitive work of searching for travel videos and manually entering details into spreadsheets or planning software can be draining. And when we can’t find the specific information we need, it becomes a frustrating cycle of endless searches. Recently, we’ve noticed an influx of travel tips on platforms like TikTok and Instagram Reels, which we love incorporating into our plans. However, capturing this information by hand, video after video, is time-consuming. As tech enthusiasts, we set out to use AI and other software tools to streamline this process.

What It Does

Trip Snips AI takes the URL of a TikTok video or Instagram Reel and extracts all the travel locations mentioned in the video. It consolidates the information into a rich, simple card UI that includes the country, city, and place, along with addresses, coordinates, activities, prices, tips, peak hours, and notes.
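
To make the card contents concrete, here is a minimal sketch of what one extracted location record might look like. The class name, field names, and types are assumptions chosen to mirror the fields listed above, not the project's actual schema, and the sample values are made up for illustration.

```python
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class LocationCard:
    """One extracted place (hypothetical schema, field names are illustrative)."""
    country: str
    city: str
    place: str
    address: Optional[str] = None
    coordinates: Optional[tuple[float, float]] = None  # (latitude, longitude)
    activities: list[str] = field(default_factory=list)
    prices: Optional[str] = None
    tips: list[str] = field(default_factory=list)
    peak_hours: Optional[str] = None
    notes: Optional[str] = None


# Sample values are invented for this example.
card = LocationCard(
    country="Japan",
    city="Kyoto",
    place="Fushimi Inari Taisha",
    activities=["hiking", "photography"],
    tips=["Arrive early to avoid crowds"],
)
print(asdict(card))
```

Keeping every field except the country, city, and place optional reflects that a short video rarely mentions all of these details.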

How We Built It

Our project consists of a full-stack Next.js app with a FastAPI backend worker. The worker is triggered only when a new video needs processing. Processing a video yields the locations it mentions, with detailed information for each place. Each location is also assigned an AI-generated image through a Replicate API call to a Bytedance/SDXL model, which generates an image in approximately three seconds.

All videos, users, and locations are stored in a MongoDB database, so the LLM calls in the AI workflow are not rerun for a video URL that has already been processed, which saves cost. Users can view a gallery of all the locations extracted from the video URLs they have entered.
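
The "process each URL only once" behaviour can be sketched as a lookup before extraction. This is a minimal sketch, assuming an in-memory dict in place of the MongoDB collection; `get_locations`, `_video_cache`, and `fake_extract` are hypothetical names, not taken from the project.

```python
from typing import Callable

# In-memory stand-in for the MongoDB videos collection (assumption for this sketch).
_video_cache: dict[str, list[dict]] = {}


def get_locations(url: str, extract: Callable[[str], list[dict]]) -> list[dict]:
    """Return stored locations for a URL, running the extraction only on a cache miss."""
    key = url.strip()
    if key not in _video_cache:
        _video_cache[key] = extract(key)  # the expensive LLM workflow would run here
    return _video_cache[key]


calls = []


def fake_extract(url: str) -> list[dict]:
    """Hypothetical extractor that records how often it is invoked."""
    calls.append(url)
    return [{"place": "Example Place", "source": url}]


first = get_locations("https://example.com/video/1", fake_extract)
second = get_locations("https://example.com/video/1", fake_extract)
print(len(calls))  # the extractor ran once for two requests
```

In a real deployment the dict would be replaced by a database query keyed on the video URL, so the saving carries over across users and restarts.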

Challenges We Ran Into

One significant challenge was the learning curve of using LangChain and LangGraph to build a robust extraction workflow. Structured output extraction can be unreliable with LLMs, as they naturally output free-form strings rather than strict JSON. To address this, we had to write custom parsers to interpret the LLM’s output. Keeping the output format consistent from one call to the next, especially when chaining nodes together, proved difficult. We are considering more structured LLM frameworks like BoundaryML in the future.
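
As an illustration of the kind of custom parser described above, the following is a minimal sketch that pulls the first JSON value out of free-form model text. It is an assumed approach using only the standard library, not the project's actual parser; `parse_llm_json` is a hypothetical name.

```python
import json
import re
from typing import Any

FENCE = "`" * 3  # Markdown code fence that models often wrap JSON in
_FENCED = re.compile(FENCE + r"(?:json)?\s*(.*?)" + FENCE, re.DOTALL)


def parse_llm_json(text: str) -> Any:
    """Extract the first JSON object or array found in raw LLM output."""
    # Prefer the contents of a fenced block if the model produced one.
    fenced = _FENCED.search(text)
    if fenced:
        text = fenced.group(1)
    decoder = json.JSONDecoder()
    # Try to decode starting at each opening brace or bracket.
    for index, char in enumerate(text):
        if char in "{[":
            try:
                value, _end = decoder.raw_decode(text, index)
                return value
            except json.JSONDecodeError:
                continue
    raise ValueError("no JSON found in LLM output")


raw = "Sure! Here are the places:\n" + FENCE + 'json\n[{"place": "Shibuya Crossing"}]\n' + FENCE
print(parse_llm_json(raw))
```

A parser like this tolerates chatty preambles and code fences, but it cannot repair invalid JSON, which is one reason a schema-enforcing framework is attractive.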

Additionally, scraping the contents of a social media post from its share URL was challenging. However, we devised our own methods to scrape these posts reliably from the provided URLs.

Accomplishments That We’re Proud Of

Initial extraction took about 3 minutes per video on average, and we are proud to have brought that down to under a minute. We parallelized the async calls to the LLM so that all locations in a video are processed concurrently instead of sequentially. Using Gemini-1.5-Flash also greatly reduced the processing time. We’re proud of our UI and UX design and are happily using the app to gather travel insights for our own graduation trips.
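
The parallelization described above can be sketched with `asyncio.gather`. This is a minimal sketch under stated assumptions: `enrich_location` is a hypothetical stand-in for one async LLM call, and the sleep merely simulates network latency.

```python
import asyncio


async def enrich_location(name: str) -> dict:
    """Hypothetical stand-in for one async LLM call that fills in a location's details."""
    await asyncio.sleep(0.1)  # simulated latency of a single request
    return {"place": name, "details": f"details for {name}"}


async def enrich_all(names: list[str]) -> list[dict]:
    """Start one call per location concurrently; results keep the input order."""
    return await asyncio.gather(*(enrich_location(name) for name in names))


results = asyncio.run(enrich_all(["Place A", "Place B", "Place C"]))
print([r["place"] for r in results])
```

Because the calls overlap while waiting on the network, total time is closer to the slowest single call than to the sum of all calls.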

What We Learned

We have barely scratched the surface of what AI and LLMs can do. With single-agent and multi-agent workflows, there are many tasks that AI can automate and speed up. As more capable models are released, their outputs generally become more reliable. However, we still need guardrails and frameworks to keep the outputs in check. We experimented with several agentic frameworks, including LangGraph, CrewAI, and AutoGen, and settled on LangGraph for its versatility and compatibility with LangChain. We also experimented with several LLMs, including GPT-4o, Claude 3.5 Sonnet, LLaMA3-70B-Groq, and Google Gemini-1.5-Flash. We found the free tier of Google Gemini and the speed of its Flash model to be the best fit for the project.

What’s Next for Trip Snips AI

We plan to use more reliable structured output frameworks like BoundaryML. We aim to use faster LLM inference platforms like Groq, once their on-demand API becomes publicly available, to bring the extraction workflows down to less than a second. We will implement a map feature so users can view all their saved locations on a map. Additionally, we plan to let users add and edit their own travel notes on each location card. Lastly, we aim to create multi-agent workflows with CrewAI for itinerary planning: given constraints like the travel dates and the chosen locations, the AI will plan the best route and schedule. At the end of the day, we hope that Trip Snips becomes an end-to-end travel application that gives users the freedom to explore travelling in their own way.

Built With

- daisyui

- docker

- fastapi

- github-jobs

- google-cloud

- google-cloud-run

- google-gemini

- google-oauth

- langchain

- langgraph

- mongodb

- mongoose

- nextjs

- python

- replicate

- serper

- t

- tailwind

- telegram

- tiktok

- typescript
