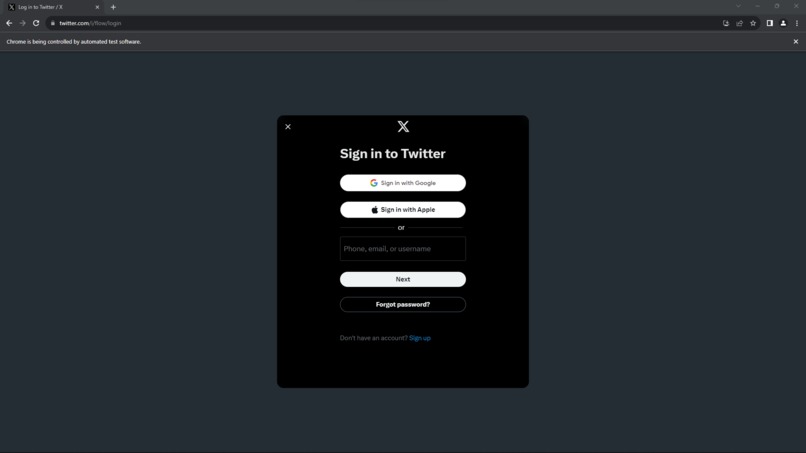

Using Selenium, a Chrome WebDriver session is opened.

The WebDriver navigates to twitter.com and signs in.

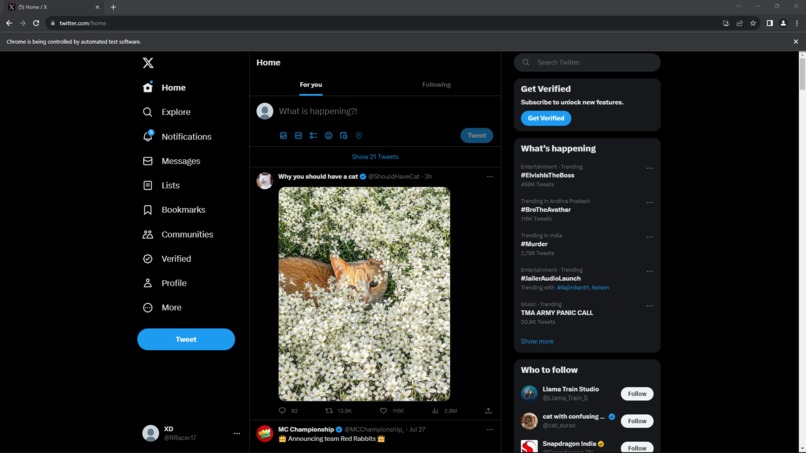

Sign-in succeeds and the X (formerly Twitter) home page opens.

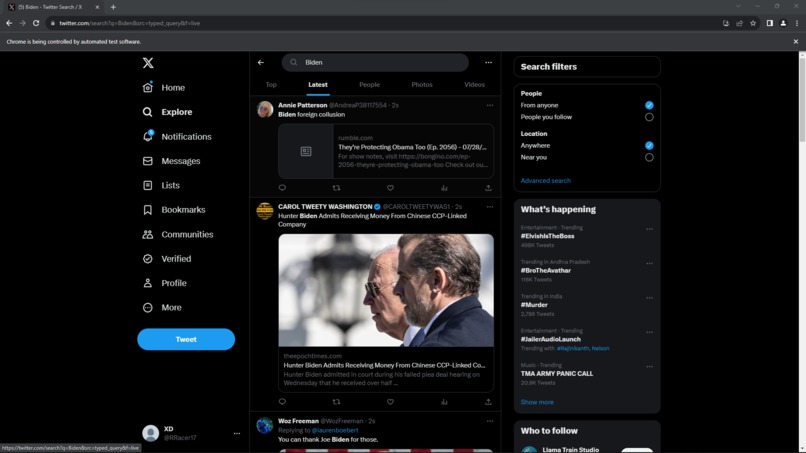

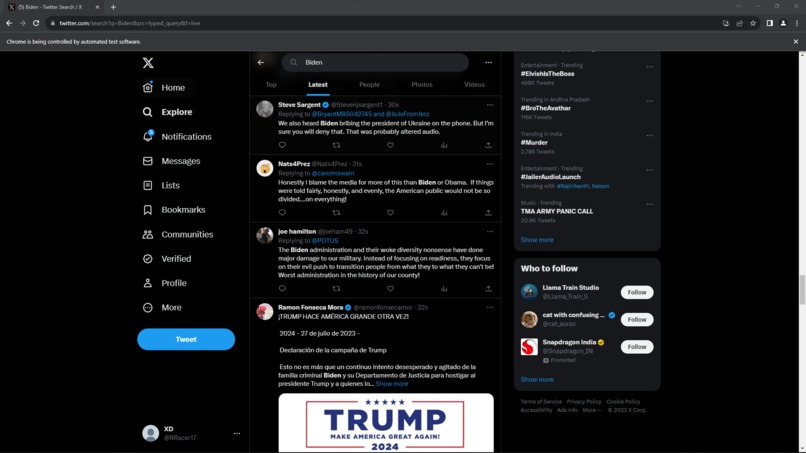

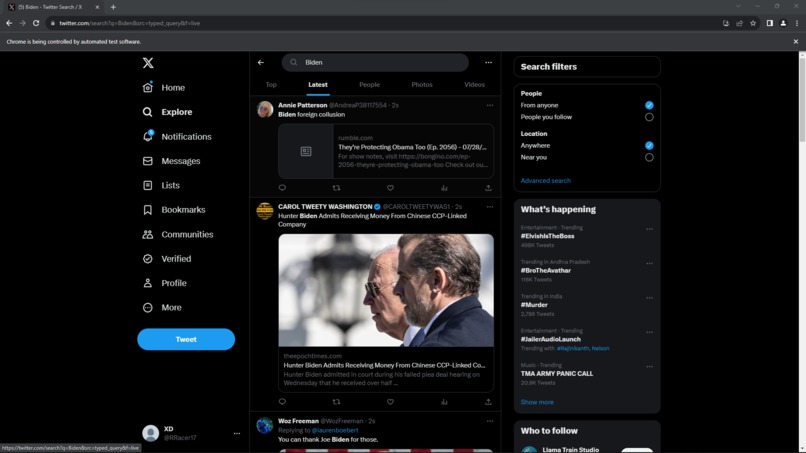

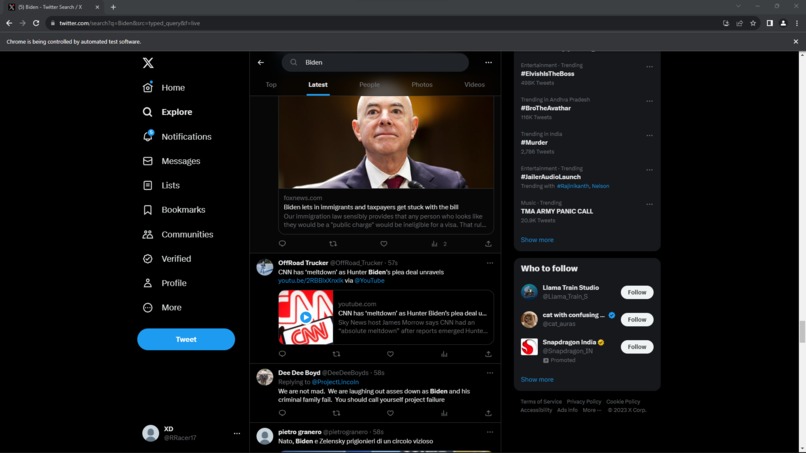

The given topic is entered in the search box and the tab for the latest tweets is opened.

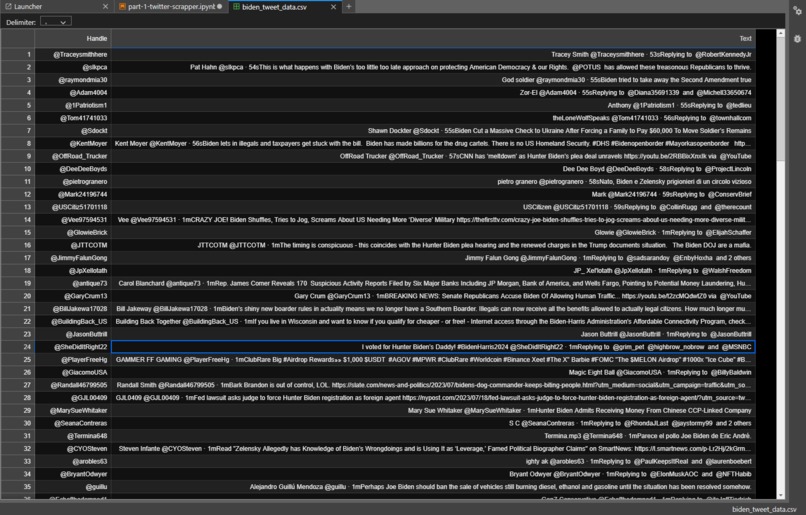

The page is scrolled and the data (user handle and tweet text) is captured.
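A minimal sketch of what a scroll-and-capture loop like this could look like. The function names, the scroll count, the pause, and the CSS selectors are all assumptions made for illustration (they are not taken from the project code, and X changes its markup often); only the general idea of scrolling, reading the visible tweets, and skipping ones already seen comes from the step above.

```python
import time


def collect_new(seen, visible):
    """Return the (handle, text) pairs in `visible` that are not yet in `seen`.

    Scrolling re-renders tweets that were already captured, so each pair is
    recorded in `seen` and only returned the first time it appears.
    """
    fresh = []
    for handle, text in visible:
        key = (handle, text)
        if key not in seen:
            seen.add(key)
            fresh.append(key)
    return fresh


def scroll_and_capture(driver, max_scrolls=5, pause_seconds=2.0):
    """Scroll the results page and gather (handle, text) pairs.

    `driver` is an already signed-in Selenium WebDriver. The selectors below
    are assumed, not confirmed against the live site.
    """
    from selenium.webdriver.common.by import By  # needs the selenium package

    seen, rows = set(), []
    for _ in range(max_scrolls):
        visible = []
        for article in driver.find_elements(By.CSS_SELECTOR, 'article[data-testid="tweet"]'):
            names = article.find_elements(By.CSS_SELECTOR, 'div[data-testid="User-Name"]')
            texts = article.find_elements(By.CSS_SELECTOR, 'div[data-testid="tweetText"]')
            if names and texts:
                visible.append((names[0].text, texts[0].text))
        rows.extend(collect_new(seen, visible))
        # Jump to the bottom so the page loads the next batch of tweets.
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause_seconds)
    return rows
```

Keeping the de-duplication in its own small function means it can be checked without a browser, while the Selenium part stays a thin wrapper around it.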

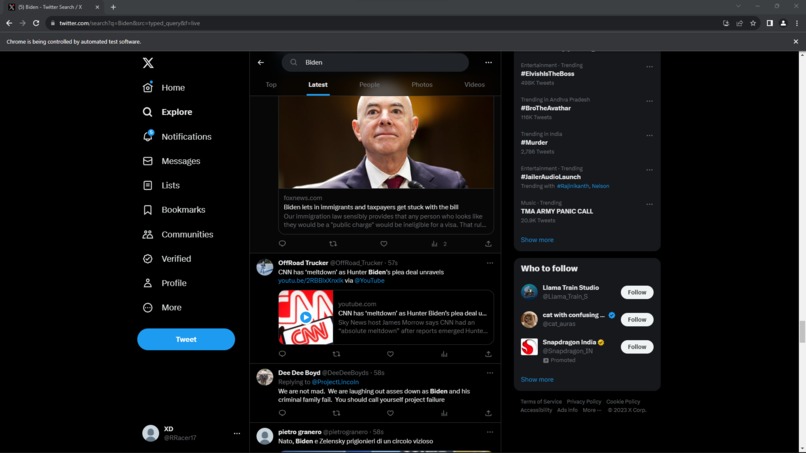

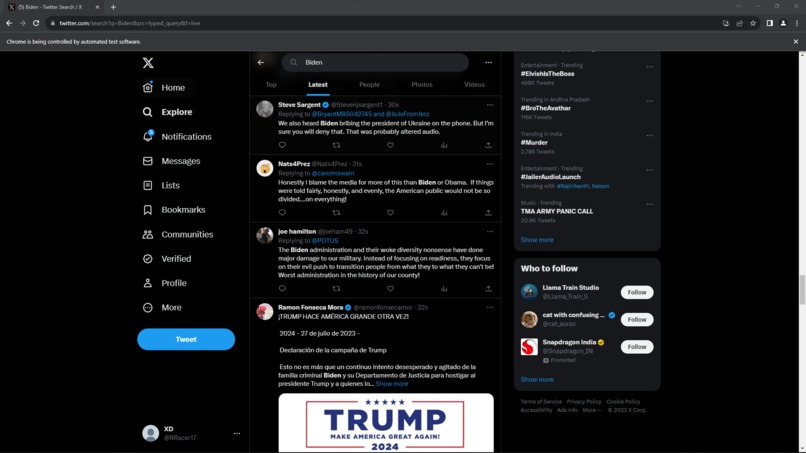

Another example of page scrolling.

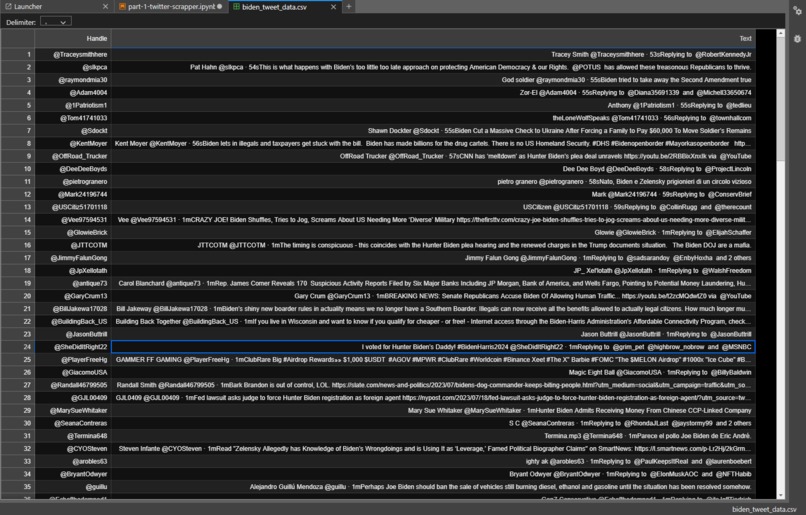

The captured data is stored in a .csv file under the headers Handle and Text (the tweet).
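As a rough illustration of this storage step, the sketch below writes captured rows under the Handle and Text headers with Python's standard `csv` module. The helper name `write_tweets` and the sample rows are made up for the example; the project's actual code may differ.

```python
import csv
import io


def write_tweets(rows, fileobj):
    """Write (handle, text) pairs as CSV with a Handle,Text header row."""
    writer = csv.writer(fileobj)
    writer.writerow(["Handle", "Text"])
    writer.writerows(rows)


# Made-up sample rows; the second one shows that commas and quotes in a
# tweet are escaped by the csv module rather than breaking the columns.
rows = [
    ("@example_user", "First sample tweet"),
    ("@another_user", 'Text with, a comma and "quotes"'),
]

buffer = io.StringIO()
write_tweets(rows, buffer)
csv_text = buffer.getvalue()
```

To write to disk instead of an in-memory buffer, the same function can be given a file opened with `open("tweets.csv", "w", newline="", encoding="utf-8")`, where the file name is only a placeholder.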

Inspiration

Our inspiration for the Toxic Comment Analysis project came from the growing concern over online toxicity and its impact on digital communities. One example is the case of Hannah Smith, a 14-year-old girl from the UK who died by suicide in 2013 after being relentlessly targeted by cyberbullies on the social media platform Ask.fm. The perpetrators subjected Hannah to abusive messages and threats. We aimed to develop a solution that could effectively identify and analyze toxic comments, fostering a safer and more respectful online environment.

What it does

The Toxic Comment Analysis project uses machine learning to classify and analyze toxic comments on online platforms such as social media and discussion forums. Using natural language processing, the system can detect various forms of toxicity, including hate speech, harassment, and cyberbullying.

How we built it

We built the Toxic Comment Analysis project using Python and popular machine learning libraries such as PyTorch and Hugging Face. The development process involved collecting and preprocessing a diverse dataset of user comments, training machine learning models, and implementing a user-friendly interface for comment analysis.
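The preprocessing step is not described in detail here, so the following is only a sketch of one common way to clean scraped comments before tokenization. The specific rules (dropping URLs and @mentions, collapsing whitespace, lowercasing) and the name `clean_comment` are assumptions, not the project's confirmed pipeline; lowercasing in particular only makes sense with an uncased model.

```python
import re

URL_RE = re.compile(r"https?://\S+")   # links carry little signal for toxicity
MENTION_RE = re.compile(r"@\w+")       # user handles are removed from the text
WHITESPACE_RE = re.compile(r"\s+")


def clean_comment(text):
    """Strip URLs and mentions, collapse whitespace, and lowercase the text."""
    text = URL_RE.sub(" ", text)
    text = MENTION_RE.sub(" ", text)
    return WHITESPACE_RE.sub(" ", text).strip().lower()


cleaned = clean_comment("Hey @someone  look at https://example.com NOW!")
```

The cleaned strings would then be passed to whichever tokenizer matches the chosen model.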

Challenges we ran into

Developing a system for toxic comment analysis raised ethical considerations regarding privacy, freedom of speech, and algorithmic bias. We had to carefully consider the potential impact of our project on individuals and communities and implement safeguards to mitigate potential harm.

Accomplishments that we're proud of

We are proud to have developed an accurate and efficient system for toxic comment analysis, reaching 94% accuracy and an AUC score of 0.988. Our project has the potential to make a meaningful impact by promoting healthier online interactions and mitigating the spread of toxicity in digital communities.
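For readers unfamiliar with the two metrics, here is a small, dependency-free sketch of how accuracy and ROC AUC can be computed for a binary toxic/non-toxic classifier. The labels and scores are invented for the example and have nothing to do with the reported 94% and 0.988; the 0.5 threshold is also an assumption.

```python
def accuracy(y_true, y_score, threshold=0.5):
    """Fraction of comments whose thresholded score matches the label."""
    preds = [1 if s >= threshold else 0 for s in y_score]
    return sum(p == t for p, t in zip(preds, y_true)) / len(y_true)


def roc_auc(y_true, y_score):
    """Probability that a random toxic comment outscores a random clean one.

    Ties count as half a win. This pairwise form is O(n^2), which is fine
    for a small illustration.
    """
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Invented labels (1 = toxic) and model scores.
y_true = [1, 0, 1, 0, 1, 0]
y_score = [0.9, 0.2, 0.6, 0.7, 0.4, 0.1]

acc = accuracy(y_true, y_score)
auc = roc_auc(y_true, y_score)
```

Accuracy depends on the chosen threshold, while AUC measures how well the scores rank toxic comments above clean ones across all thresholds, which is why the two numbers can differ noticeably.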

What we learned

Throughout the development process, we gained valuable insights into natural language processing, machine learning model training, and data preprocessing techniques. We also learned about the importance of ethical considerations and responsible AI deployment in addressing online toxicity.

What's next for Toxic Comment Analysis

In the future, we plan to enhance the system by integrating more advanced natural language processing models and expanding the dataset to improve model performance. We also aim to explore real-time monitoring and intervention strategies to proactively address toxic comments on online platforms.
