Using MediaPipe and the Roboflow model we trained, we locate the center of the mouth and the tip of the tongue, creating a vector that controls the mouse.

The vector is translated into mouse movements using a Python library called pyautogui.

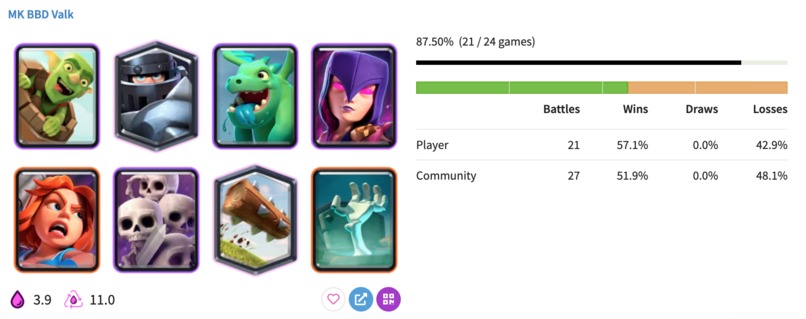

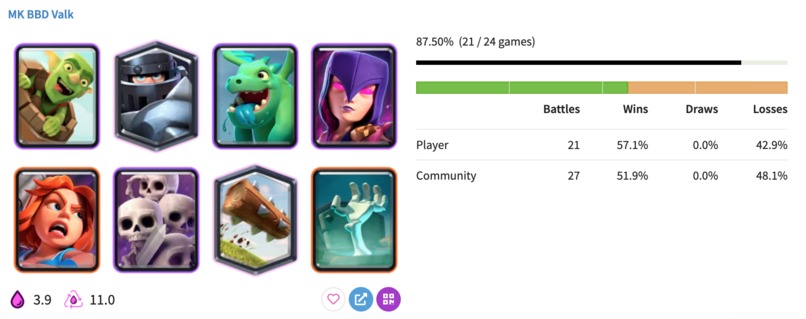

We accurately determine the deck our opponent has by web scraping a Clash Royale database that contains the past games and cards they have played.

You navigate between cards with A and D. To deploy a card, you simply open your mouth and point your tongue at the spot where you want the card.

Inspiration

Clash Royale is a very popular game among the youth, but few people play it with style; everyone just plays it regularly (they're very chalant). We wanted to build a way to play Clash Royale that was even more fun than it already was, while upgrading the amount of nonchalance a player can have.

What it does

With TongueRoyale, you can play Clash Royale with your tongue to increase nonchalance, and mog your opponent by telling them what they have in their deck before they can even place a card.

How we built it

To control the mouse with the tongue, we used MediaPipe and OpenCV to detect the center of the mouth. No CV model we tried could accurately detect the tip of the tongue, so we trained our own model on Roboflow to find it. Once you have the tip of the tongue and the center of the mouth, you can create a vector that starts at the center of the mouth and ends at the tongue tip, then translate that vector into mouse movement. This was the main component of the tongue control system.

We also had to detect whether the mouth was open or closed, so that if the model falsely detected tongue movement from something weird in the background, nothing would happen. This was easily achieved with CV libraries.

Finally, we built a system that lets users navigate between cards with just two buttons, the left and right arrow keys, to simplify the input required from them. Our team implemented this with a Python library called pyautogui, which lets a program move the mouse.
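The vector-to-mouse step and the open-mouth check described above could be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names, the dead zone, the gain, and the open-mouth ratio are all assumptions chosen for the example, and the landmark points are passed in as plain `(x, y)` pixel tuples so the sketch does not depend on how MediaPipe or the Roboflow model return them. The only pyautogui call used is `pyautogui.move`, which moves the cursor relative to its current position.

```python
import math

# Hypothetical tuning constants (assumptions for this sketch, not from the project).
DEAD_ZONE_PX = 5.0   # ignore very short vectors so detection jitter does not move the cursor
GAIN = 0.5           # cursor pixels moved per pixel of tongue offset
OPEN_RATIO = 0.3     # lip gap divided by mouth width above which the mouth counts as open


def mouth_is_open(upper_lip, lower_lip, left_corner, right_corner, threshold=OPEN_RATIO):
    """Return True when the vertical lip gap is large relative to the mouth width."""
    gap = math.dist(upper_lip, lower_lip)
    width = math.dist(left_corner, right_corner)
    return width > 0 and gap / width > threshold


def tongue_vector(mouth_center, tongue_tip):
    """Vector that starts at the mouth center and ends at the tongue tip."""
    return (tongue_tip[0] - mouth_center[0], tongue_tip[1] - mouth_center[1])


def cursor_delta(vector, gain=GAIN, dead_zone=DEAD_ZONE_PX):
    """Scale the tongue vector into a relative cursor move, or (0, 0) inside the dead zone."""
    dx, dy = vector
    if math.hypot(dx, dy) < dead_zone:
        return (0, 0)
    return (round(dx * gain), round(dy * gain))


def move_cursor(delta):
    """Apply the relative move with pyautogui (imported here so the math runs without it)."""
    import pyautogui
    if delta != (0, 0):
        pyautogui.move(delta[0], delta[1])


# Example with made-up pixel coordinates:
vector = tongue_vector(mouth_center=(100, 100), tongue_tip=(130, 60))
print(cursor_delta(vector))
```

In a per-frame loop, one would call `move_cursor` only when `mouth_is_open(...)` is true, which gives the gating behavior described above. Image and screen coordinates both have y growing downward, so no vertical flip is needed, though a mirrored webcam feed may need the x component negated.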

To find the deck our opponents were most likely playing, we web scraped (using BeautifulSoup) a third-party Clash Royale database that stores a player's previous games and the cards they played. To look a player up, we needed the opponent's name and clan name as text, which we extracted with Google's Vision AI so that this step would be highly accurate.

Challenges we ran into

We had difficulty tracking tongue movement to play the game. Because of the limitations of existing computer vision libraries, we decided to train our own model using Roboflow. We spent some time annotating images to train the model, but it was all worth it in the end: the program can now pick up tongue movement and track its direction with much higher accuracy than the older CV libraries, which were only estimating where the tongue tip was.

Our project pivoted a few times before it got to what it is now. We tried using a pre-built model to track the movement of troops in the game, but had to pivot due to limited computing power (no GPU). We also tried using an algorithm to predict the opponent's hand, which didn't work out due to the lack of training data and time constraints. Eventually, we found we could use web-scraping to give the player an edge in Clash Royale matches by showing them the 8 cards the opponent has in their deck.

What we learned

We learned how to use MediaPipe to track and pinpoint tongue movement, how to effectively use Roboflow to train a computer vision model, how to use Google Vision AI and Google Colab, and how to translate vectors into mouse movements with Python libraries.

Accomplishments that we're proud of

Making a system to play Clash Royale with your tongue, and being able to figure out opponents' decks from their past battles, pulled from a third-party database.

What's next for TongueRoyale

Add an AI system that helps improve your Clash Royale gameplay so you can progress to Ultimate Champion, much as the starter tutorial teaches you the basics.

Built With

- beautiful-soup

- mediapipe

- numpy

- opencv

- pyautogui

- python

- roboflow
