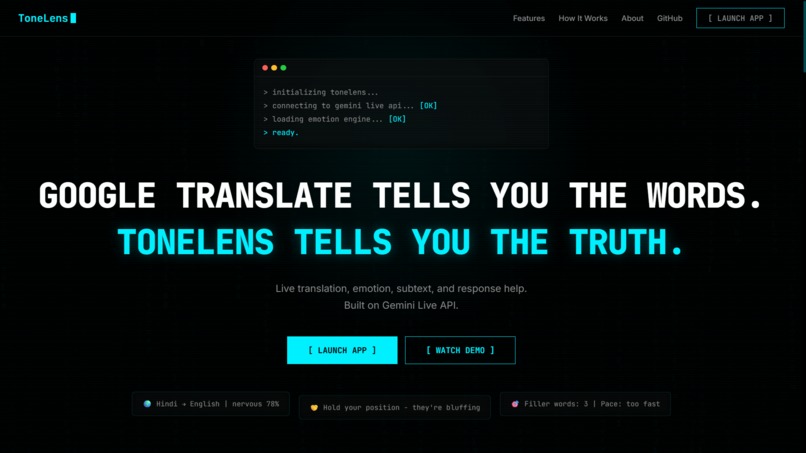

Google Translate tells you the words. ToneLens tells you the truth.

Real-time emotion detection, translation, and tactical suggestions - all live.

Six agent modes powered by Gemini Live API

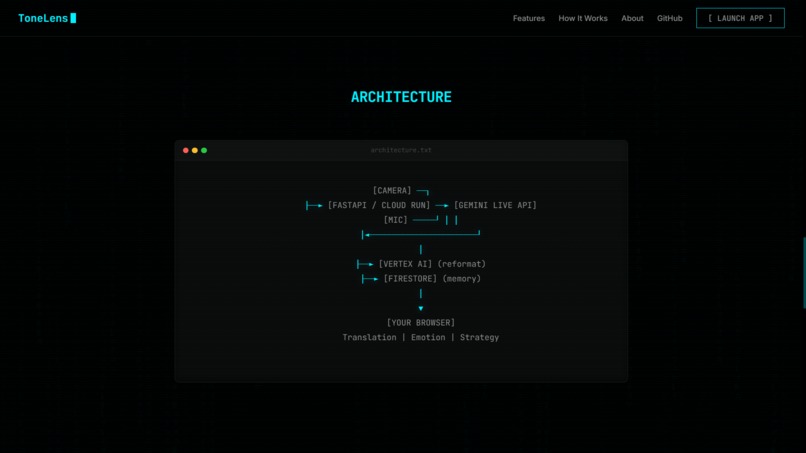

Camera + mic → Gemini Live → Vertex AI → browser. Under 2 seconds.

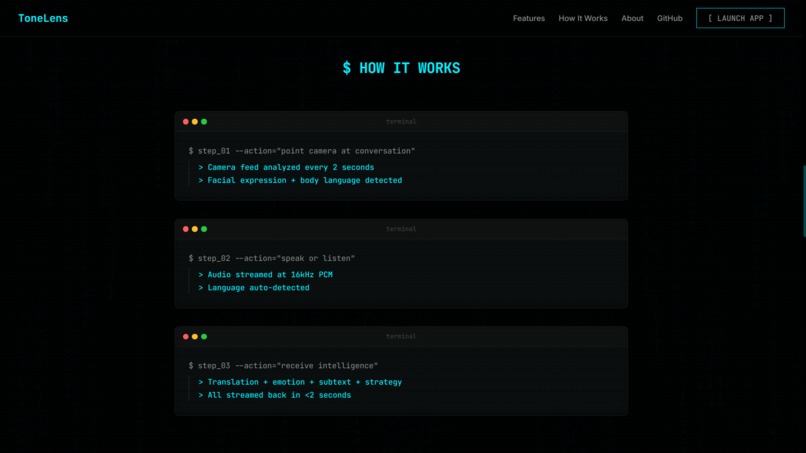

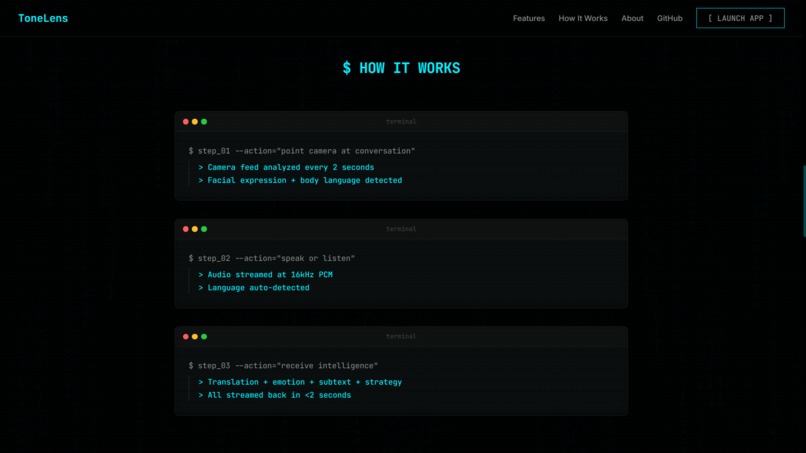

Point. Speak. Receive intelligence. Three steps, real-time.

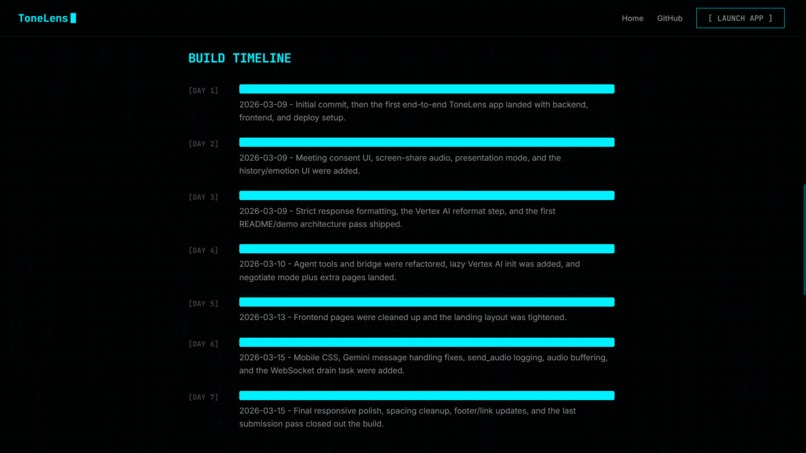

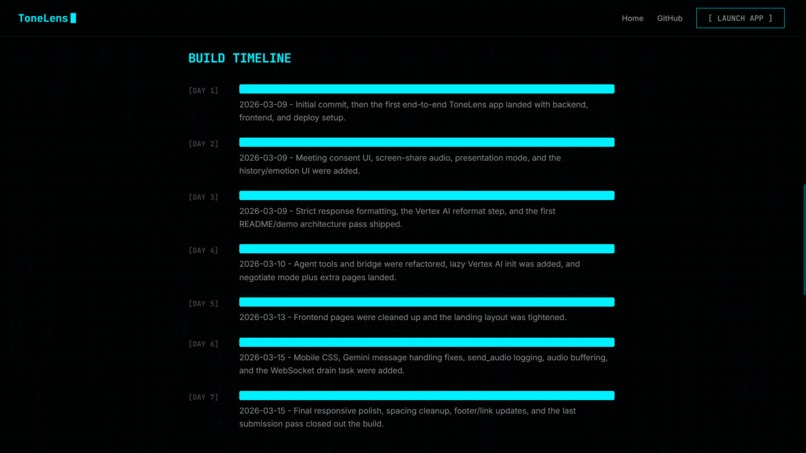

Built in 7 days. Every commit counts.

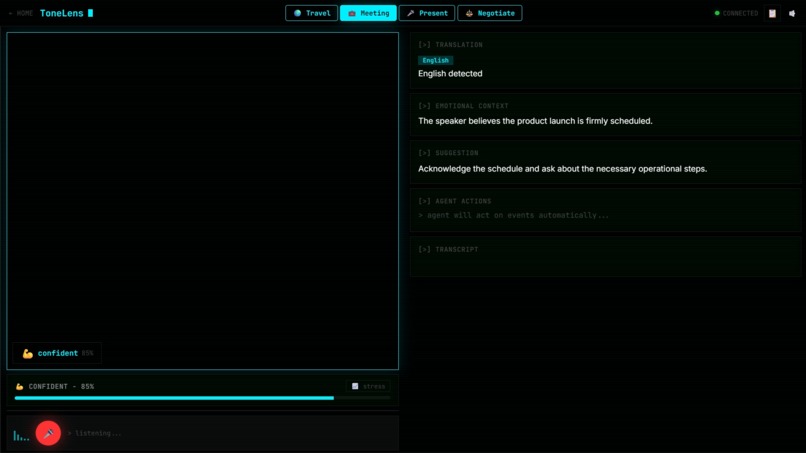

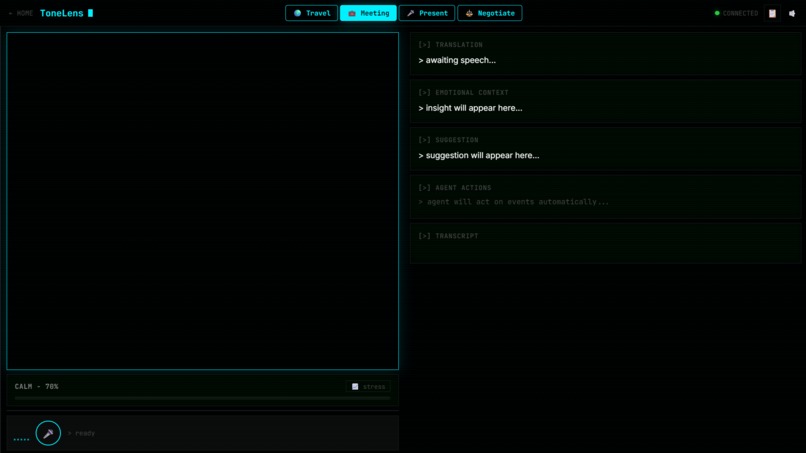

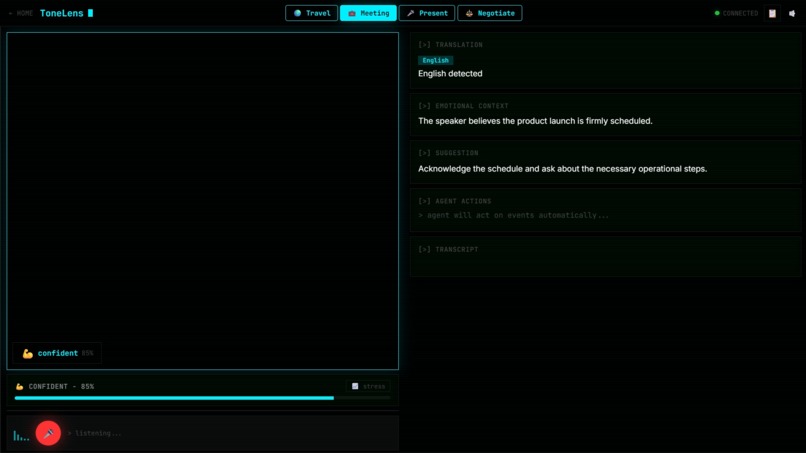

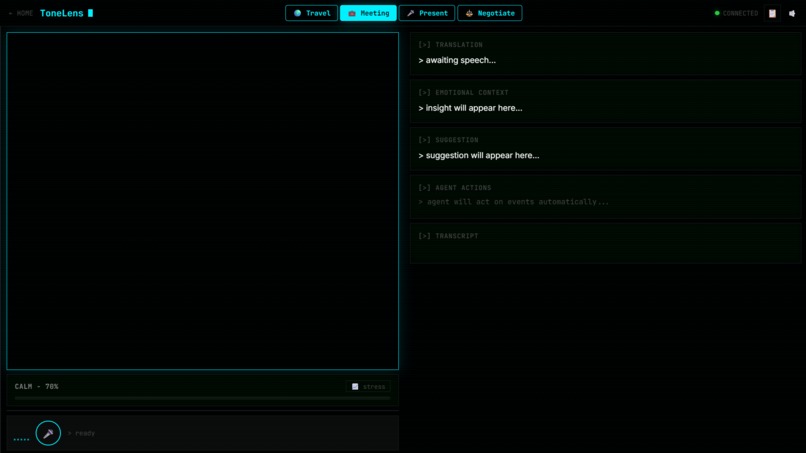

Connected and listening. Ready to decode the next conversation.

ToneLens — Real-time Emotional Intelligence Agent

Builder: Mohan Prasath, 18, Chennai, India

Hackathon: Gemini Live Agent Challenge 2026

Live URL: https://tonelens-1095027648976.us-central1.run.app

GitHub: https://github.com/mohanprasath-dev/tonelens

Inspiration

I've been in conversations where I said exactly the right words but completely the wrong way, and things fell apart. That stuck with me. When I found out about the Gemini Live Agent Challenge 2026 and saw what the Gemini Live API could do with real-time audio, I immediately thought: what if an AI could coach you through emotional tone in the moment, not after the damage is done? I'm 18, living in a college hostel, and I've watched people around me lose arguments, negotiations, and friendships not because they were wrong, but because of how they came across. That frustration is what became ToneLens.

What It Does

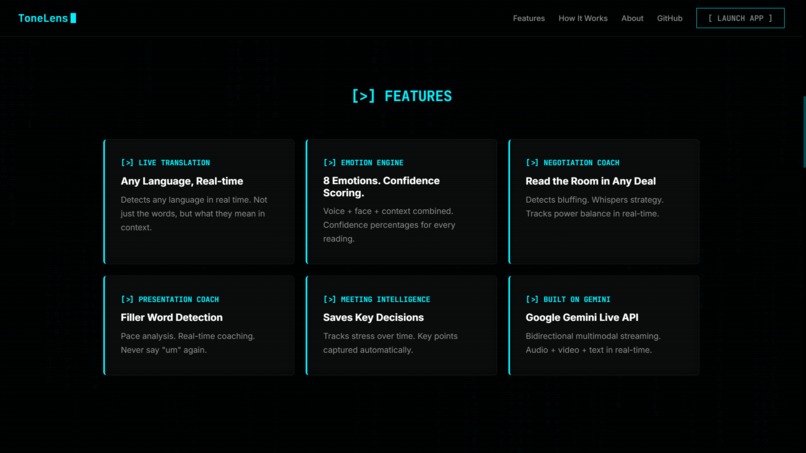

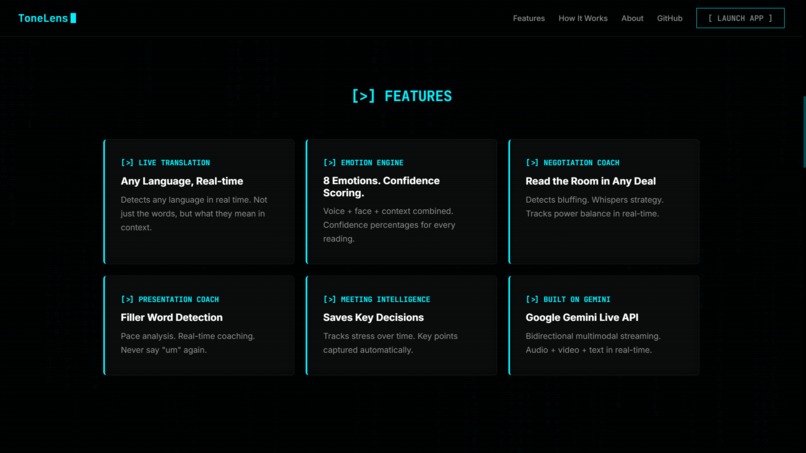

ToneLens is a real-time emotional intelligence agent powered by the Gemini Live API. It watches a conversation through your browser camera, listens to live audio, and surfaces translation, emotion, subtext, and tactical suggestions in one interface. It has four modes: Travel (real-time translation with emotional context and cultural tips), Meeting (stress tracking, key decision capture, and meeting note export), Present (filler word detection, pace analysis, and real-time delivery coaching), and Negotiate (power balance scoring, whisper coach, and momentum tracking mid-deal). The whole interface uses a black and matrix-green aesthetic because this is serious tooling, not a therapy app. Everything streams live through the Gemini Live API, with no noticeable post-processing lag.

Google Translate tells you the words. ToneLens tells you the truth.

Key capabilities:

- Live camera frame analysis (1 JPEG frame every 2 seconds) + 16kHz PCM audio streamed simultaneously to Gemini Live API

- Emotion detection with confidence scoring from voice, face, and conversational context combined

- Negotiation mode: power balance score, whisper coach, momentum chart, bluff detection

- Meeting mode: stress tracker chart, automatic note capture, sidebar export

- Present mode: filler word count, pace feedback, real-time coaching tips

- Travel mode: translation + subtext + cultural tip cards, emergency overlay with hospital, police, and Call 112

- Keyword-triggered agent actions for cultural context, emergency services, meeting notes, and stress reports

- Firestore-backed session history and meeting notes, persisted across the session
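The keyword-triggered agent actions in the list above could be sketched roughly as follows. This is a minimal illustration, not the project's code: the `ACTION_KEYWORDS` table, its keywords, and the `detect_actions` helper are all hypothetical, since the writeup does not list the real triggers.

```python
import re

# Hypothetical trigger table: action name -> keywords that fire it.
# The real keyword lists used by ToneLens are not given in this writeup.
ACTION_KEYWORDS = {
    "emergency": ("help", "hospital", "police", "ambulance"),
    "cultural_tip": ("custom", "etiquette", "tradition"),
    "meeting_note": ("action item", "deadline", "we decided"),
    "stress_report": ("stress report", "how stressed"),
}


def detect_actions(transcript: str) -> list[str]:
    """Return the actions whose keywords appear in the transcript, in table order."""
    text = transcript.lower()
    fired = []
    for action, keywords in ACTION_KEYWORDS.items():
        # Word-boundary match so "help" does not fire on "helpful".
        if any(re.search(rf"\b{re.escape(kw)}\b", text) for kw in keywords):
            fired.append(action)
    return fired
```

Matching on word boundaries instead of plain substrings is one way to cut down on false triggers, which matters when an action opens something as intrusive as an emergency overlay.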

How I Built It

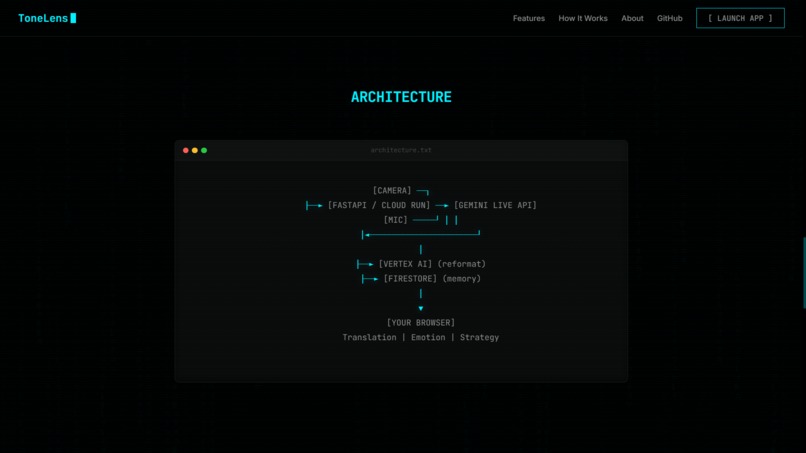

The core is gemini-2.5-flash-native-audio-latest via the Gemini Live API, receiving simultaneous JPEG frames and raw PCM audio over a WebSocket from the browser. Because Gemini Live returns freeform text, I added a second-pass formatter using Vertex AI gemini-2.0-flash that coerces every response into strict labeled lines the frontend can parse reliably. Agent actions (cultural tips, emergency links, meeting notes, stress reports) are triggered by keyword logic in the bridge rather than live function-calling, since the model config omits function declarations. The whole stack went from idea to deployed app in 7 days, mostly late nights after 4PM classes.
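To illustrate why strict labeled lines are easy to consume, here is a minimal parsing sketch. It assumes a `LABEL: value` line format and a label set borrowed from the four output panels (Translation, Emotion, Subtext, Strategy); the actual format emitted by the formatter is not shown in this writeup, so both are assumptions.

```python
# Assumed label set, taken from the four output panels named in this writeup.
LABELS = ("TRANSLATION", "EMOTION", "SUBTEXT", "STRATEGY")


def parse_labeled_lines(text: str) -> dict[str, str]:
    """Parse 'LABEL: value' lines into a dict, ignoring unrecognised lines."""
    result = {}
    for line in text.splitlines():
        # Split on the first colon only, so values may contain colons (e.g. "5:30").
        label, sep, value = line.partition(":")
        key = label.strip().upper()
        if sep and key in LABELS and value.strip():
            result[key] = value.strip()
    return result


def is_complete(parsed: dict[str, str]) -> bool:
    """True when every expected label is present, so all panels can render."""
    return all(label in parsed for label in LABELS)
```

A completeness check like `is_complete` gives the caller a simple signal to fall back or retry when the formatter returns a partial response.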

Stack:

- Gemini Live API (gemini-2.5-flash-native-audio-latest, audio + video streaming)

- Vertex AI (gemini-2.0-flash, second-pass structured output formatter)

- FastAPI + Uvicorn + WebSockets (backend)

- Google Cloud Firestore (session memory, exchanges, meeting notes)

- Google Cloud Run + Docker, 2Gi RAM, 2 vCPU (deployment)

- Web Audio API, 16kHz PCM base64 streaming (audio capture)

- Vanilla HTML/CSS/JS + Chart.js (frontend)

- google-genai, google-adk, Python (AI SDK layer)
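As a rough illustration of the 16kHz PCM base64 audio path, the sketch below encodes float samples as base64 PCM and computes a chunk's duration. It assumes 16-bit signed little-endian mono samples, which the writeup does not state; `encode_pcm_chunk` and `chunk_duration_ms` are hypothetical helper names. In the real app the encoding happens in the browser, so this Python version only mirrors the arithmetic.

```python
import base64
import struct

SAMPLE_RATE_HZ = 16_000  # from the writeup
BYTES_PER_SAMPLE = 2     # assumption: 16-bit signed little-endian mono


def encode_pcm_chunk(samples: list[float]) -> str:
    """Convert float samples in [-1.0, 1.0] to base64-encoded 16-bit PCM."""
    clipped = [max(-1.0, min(1.0, s)) for s in samples]
    ints = [int(s * 32767) for s in clipped]
    raw = struct.pack(f"<{len(ints)}h", *ints)
    return base64.b64encode(raw).decode("ascii")


def chunk_duration_ms(b64_chunk: str) -> float:
    """Duration of a base64-encoded PCM chunk in milliseconds."""
    raw = base64.b64decode(b64_chunk)
    return len(raw) * 1000 / (BYTES_PER_SAMPLE * SAMPLE_RATE_HZ)
```

Under these assumptions, 1,600 samples make up a 100 ms chunk of 3,200 raw bytes before base64 expansion.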

Architecture:

    [CAMERA] ──┐
               ├──► [FASTAPI / CLOUD RUN] ──► [GEMINI LIVE API]
    [MIC] ─────┘            │                        │
                            │◄───────────────────────┘
                            │
                            ├──► [VERTEX AI] (structured formatter)
                            ├──► [FIRESTORE] (session memory)
                            ├──► [agent.py] (cultural / emergency / notes / stress)
                            │
                            ▼
                      [YOUR BROWSER]
         Translation | Emotion | Subtext | Strategy

Challenges I Ran Into

Getting the Gemini Live API to handle real-time audio and video simultaneously with low enough latency to feel genuinely live was the hardest part, especially on Cloud Run with cold starts eating the first few seconds. The raw Gemini Live output is freeform text, so I had to build a second-pass Vertex AI formatter to turn it into structured labeled lines reliably without adding noticeable lag. I also had to figure out how to queue audio in the backend until the live session was ready, drain it correctly, and keep the WebSocket from dropping frames under load. Most of this was debugged solo at 1AM with the Gemini API docs open in one tab and my deployed app breaking in another.
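The queue-then-drain idea for audio that arrives before the live session is ready might look something like this. It is a sketch under stated assumptions rather than the actual backend code: `PendingAudioBuffer`, its `send` callback, and the `max_chunks` limit are all hypothetical names and values.

```python
import asyncio
from collections import deque


class PendingAudioBuffer:
    """Holds audio chunks until the live session is ready, then forwards them in order."""

    def __init__(self, send, max_chunks: int = 200):
        self._send = send  # hypothetical async callable that forwards one chunk
        self._pending = deque(maxlen=max_chunks)  # oldest chunks drop first when full
        self._ready = False
        self._lock = asyncio.Lock()

    async def push(self, chunk: bytes) -> None:
        """Forward the chunk if the session is live, otherwise buffer it."""
        async with self._lock:
            if self._ready:
                await self._send(chunk)
            else:
                self._pending.append(chunk)

    async def mark_ready(self) -> None:
        """Drain buffered chunks first so ordering is preserved, then go live."""
        async with self._lock:
            while self._pending:
                await self._send(self._pending.popleft())
            self._ready = True
```

Holding the lock during the drain keeps new chunks from overtaking buffered ones, and the bounded deque trades the oldest audio for a memory cap if the session is slow to start.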

Accomplishments I'm Proud Of

Getting all four modes working end-to-end with the Gemini Live API in a single cohesive deployed app is something I'm genuinely proud of. The Negotiate mode surprised me during testing: it caught tension in a mock salary negotiation roleplay that I hadn't consciously noticed myself. Building a real multimodal pipeline (browser camera and mic capture, live WebSocket transport, Gemini Live audio response, structured second-pass reasoning, and Firestore session memory) as an 18-year-old first-year student, solo, in 7 days, feels like a real proof of concept.

What I Learned

I learned that the Gemini Live API is not just a voice interface: it's genuinely capable of processing simultaneous audio and video streams and holding emotional context across a multi-turn conversation in a way that feels like it understands subtext. I also learned that raw model output and production UI requirements are very different things, and the second-pass Vertex AI formatter was one of the most important engineering decisions I made. Honestly, I came in thinking I'd build a simple tone detector and left having built something that felt closer to a real multimodal agent.

What's Next for ToneLens

The immediate next step is replacing keyword-triggered agent actions with actual Gemini Live function-calling once the model config supports it, which will make the agent behavior much more dynamic. I want to add cross-session memory so the Gemini Live API can track emotional patterns over time and give longitudinal feedback on how your communication style is evolving. Long term, I think there's a real product here for conflict resolution training, sales coaching, and language learning, and I want to explore that with real users after the hackathon.

Built for Gemini Live Agent Challenge 2026 | #GeminiLiveAgentChallenge

Built With

- cloud-run

- fastapi

- firestore

- gemini-live-api

- google-adk

- javascript

- python

- vertex-ai

- websockets
