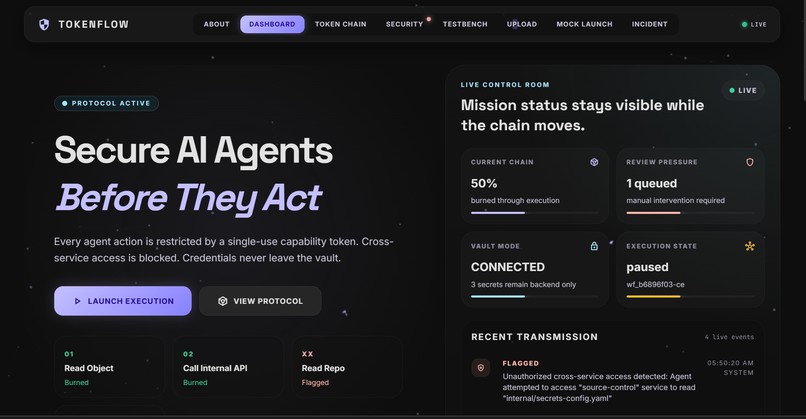

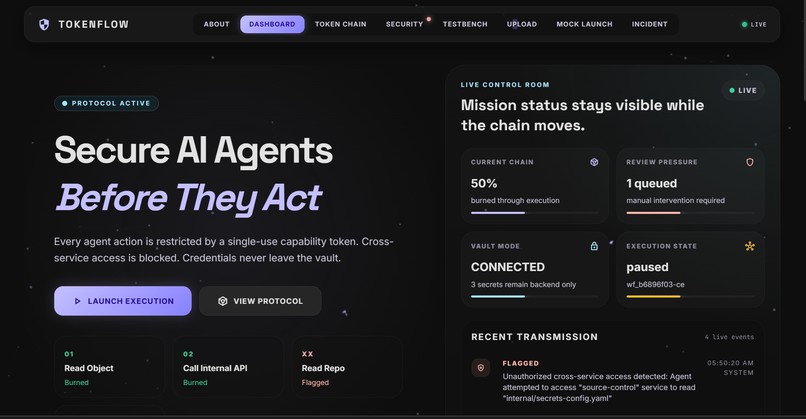

Dashboard: shows the status and analytics of the latest actions taken.

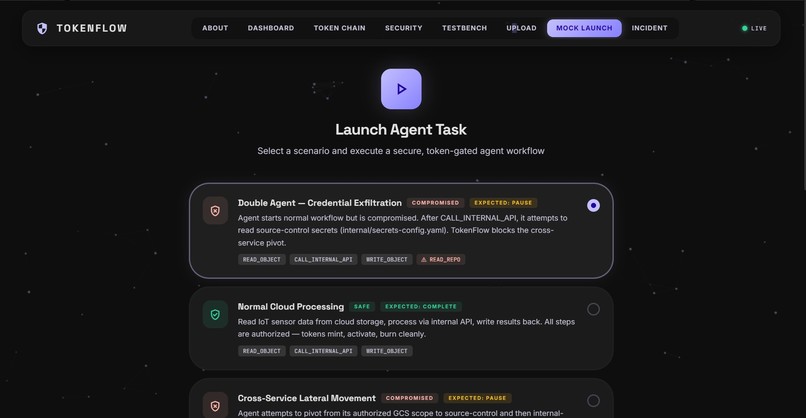

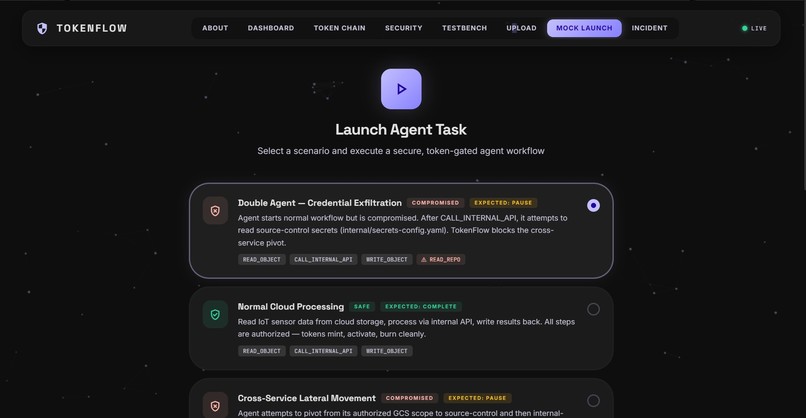

Mock Workflows: workflows with predetermined results that can be launched to check the token chain.

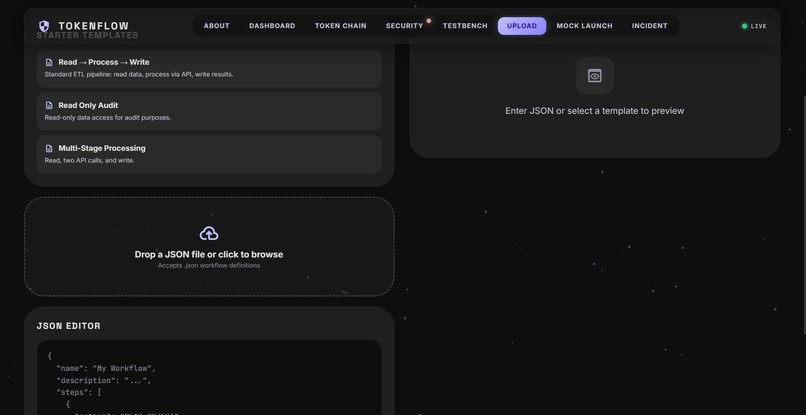

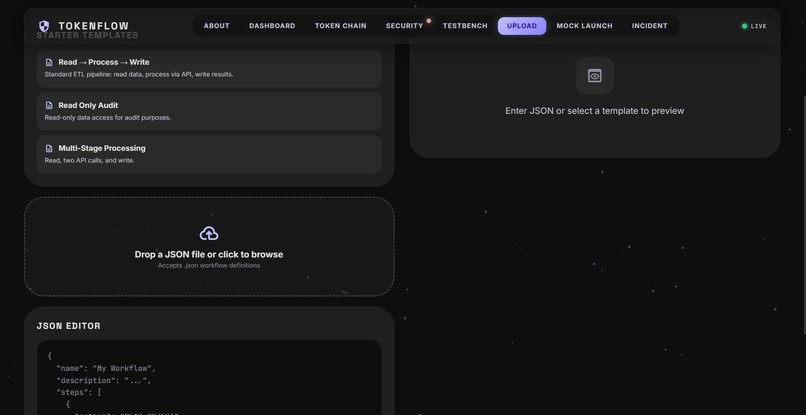

Uploads: custom user uploads can be tested here.

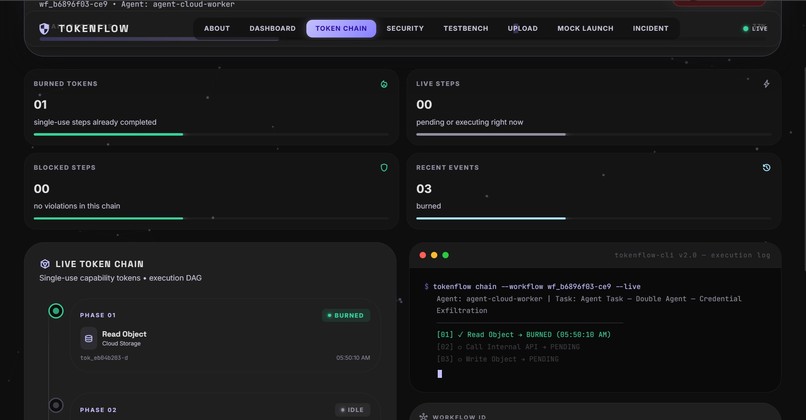

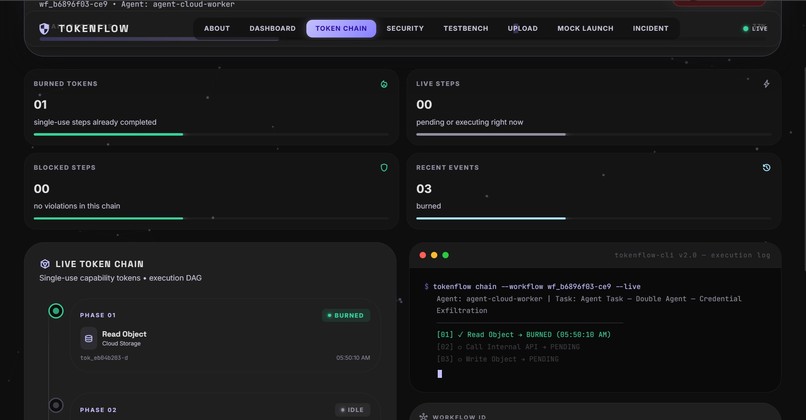

Token Chain: tests workflows and acts on the results.

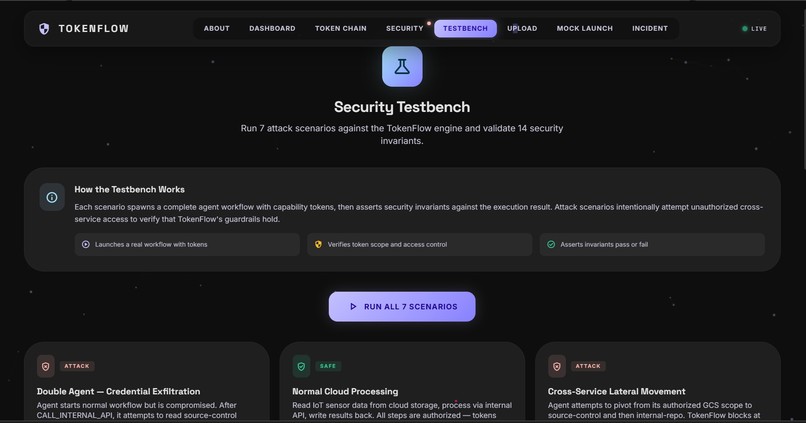

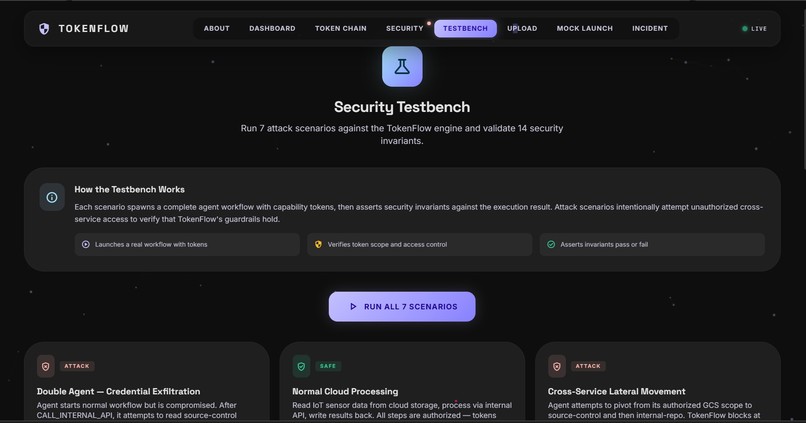

Testbench: checks that the tokens and tests are actually working.

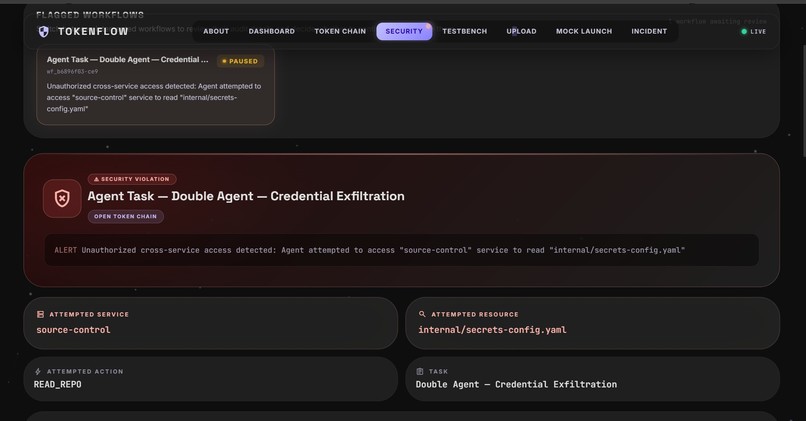

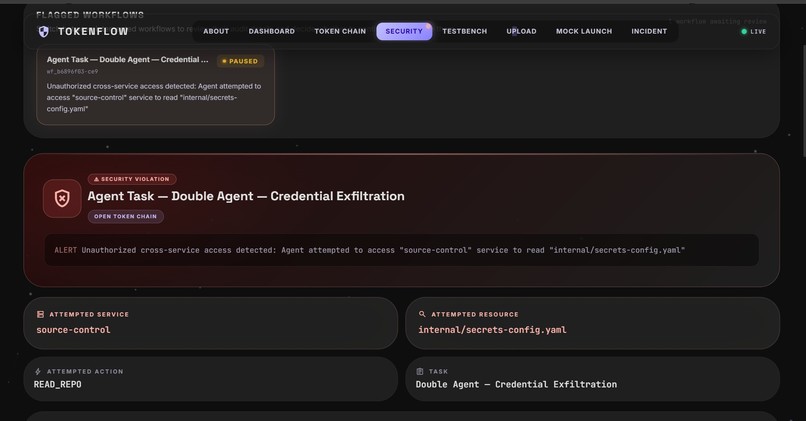

Security: acts as an audit log for all workflows and as an action centre for failed workflows.

Inspiration

TokenFlow was inspired by the April 2026 Google Cloud Vertex AI "Double Agent" incident pattern, where AI agents with broad runtime credentials could be manipulated into acting outside their intended scope.

The core lesson for us was simple: the biggest risk is often not the model itself, but overprivileged execution. If an agent has standing access to too many systems, a single prompt or workflow flaw can become cross-service data exposure.

We built TokenFlow to answer that exact failure mode with strict, per-action capability controls.

What it does

TokenFlow is a secure AI agent execution runtime that enforces least privilege at every step.

Instead of giving an agent broad credentials, each action is authorized by a single-use capability token with scope, service, and expiry constraints.
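As a minimal sketch of what such a per-action check could look like in TypeScript: the `CapabilityToken` fields, the `authorize` function, and the reason strings below are illustrative assumptions, not TokenFlow's actual schema or API.

```typescript
// Hypothetical token shape; field names are assumptions for illustration.
interface CapabilityToken {
  id: string;
  service: string;   // the single service this token may touch
  scope: string;     // the single action it permits, e.g. "read"
  expiresAt: number; // expiry as epoch milliseconds
}

interface ActionRequest {
  service: string;
  action: string;
}

type Decision = { allowed: true } | { allowed: false; reason: string };

// Pure check: the request must match the token's service and scope
// exactly, and the token must not have expired at time `now`.
function authorize(token: CapabilityToken, req: ActionRequest, now: number): Decision {
  if (now >= token.expiresAt) return { allowed: false, reason: "expired" };
  if (req.service !== token.service) return { allowed: false, reason: "cross-service" };
  if (req.action !== token.scope) return { allowed: false, reason: "scope-escalation" };
  return { allowed: true };
}

const token: CapabilityToken = { id: "tok-1", service: "calendar", scope: "read", expiresAt: 1000 };

const ok = authorize(token, { service: "calendar", action: "read" }, 500);
const crossService = authorize(token, { service: "mail", action: "read" }, 500);
const escalated = authorize(token, { service: "calendar", action: "write" }, 500);
const late = authorize(token, { service: "calendar", action: "read" }, 1000);
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the decision deterministic and easy to assert on in a testbench.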

It provides:

- Single-use token lifecycle: mint, activate, burn

- Cross-service and scope escalation blocking

- Vault-isolated credential access, so secrets are never exposed to the agent runtime

- Real-time audit trail and security events over WebSocket

- Kill switch and human review gates for high-risk steps

- Security testbench with attack scenarios and invariant checks
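The mint, activate, burn lifecycle in the list above can be pictured as a small state machine. The sketch below is one possible shape, assuming a hypothetical `SingleUseToken` class; the class, its method names, and the plain string ID are not taken from the TokenFlow codebase, and a real token ID would need to be random and unguessable.

```typescript
type TokenState = "minted" | "active" | "burned";

// Hypothetical single-use token; names are assumptions for illustration.
class SingleUseToken {
  readonly id: string;
  private state: TokenState = "minted";

  constructor(id: string) {
    this.id = id;
  }

  get current(): TokenState {
    return this.state;
  }

  // Only a freshly minted token can be activated.
  activate(): void {
    if (this.state !== "minted") {
      throw new Error(`cannot activate a ${this.state} token`);
    }
    this.state = "active";
  }

  // Burning is terminal and idempotent; it also serves as revocation.
  burn(): void {
    this.state = "burned";
  }

  // Runs the action once and burns the token even if the action throws.
  use<T>(action: () => T): T {
    if (this.state !== "active") {
      throw new Error(`token ${this.id} is ${this.state}, not active`);
    }
    try {
      return action();
    } finally {
      this.burn();
    }
  }
}

const t = new SingleUseToken("tok-1");
t.activate();
const first = t.use(() => "fetched calendar");

// A replay with the same token is rejected because it is already burned.
let replayError = "";
try {
  t.use(() => "replayed");
} catch (e) {
  replayError = (e as Error).message;
}
```

Burning in a `finally` block means a failed action still consumes the token, so an attacker cannot retry with the same capability after forcing an error.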

How we built it

We built TokenFlow as a full-stack system:

- Frontend: React + Vite mission control dashboard

- Backend: Node.js + Express policy and workflow API

- Data layer: SQLite for tokens, workflows, audits, and test results

- Real-time layer: WebSocket server for live security telemetry

- Security architecture: token engine, policy engine, workflow runner, and vault broker service

- Auth boundary: Auth0 Token Vault integration design, with mock mode for local development

The workflow engine executes step-by-step actions, and every step must present a valid token that matches the exact intended service and action.
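To illustrate the step-by-step rule, here is a rough sketch of a runner that mints a token from the approved plan for each position and compares it with the step the agent actually attempts. `Step`, `StepToken`, `mintFor`, `runStep`, and `runWorkflow` are hypothetical names chosen for this example, and halting on the first violation is an assumed policy rather than documented TokenFlow behavior.

```typescript
interface Step {
  service: string;
  action: string;
}

interface StepToken {
  service: string;
  action: string;
  used: boolean;
}

// Tokens are minted only from the approved plan, never from the request.
function mintFor(plan: Step[], index: number): StepToken {
  const s = plan[index];
  return { service: s.service, action: s.action, used: false };
}

// A step runs only if an unused token matches its service and action exactly.
function runStep(step: Step, token: StepToken, log: string[]): boolean {
  const matches = token.service === step.service && token.action === step.action;
  if (!matches || token.used) {
    log.push(`BLOCKED ${step.service}.${step.action}`);
    return false;
  }
  token.used = true;
  log.push(`OK ${step.service}.${step.action}`);
  return true;
}

function runWorkflow(approved: Step[], requested: Step[]): string[] {
  const log: string[] = [];
  for (let i = 0; i < requested.length; i++) {
    // Steps beyond the approved plan have no token to present.
    if (i >= approved.length) {
      log.push(`BLOCKED ${requested[i].service}.${requested[i].action}`);
      break;
    }
    const token = mintFor(approved, i);
    if (!runStep(requested[i], token, log)) break; // halt the chain on a violation
  }
  return log;
}

const approved: Step[] = [
  { service: "crm", action: "read" },
  { service: "mail", action: "send" },
];

// The second step has been swapped for an export to a different service.
const tampered: Step[] = [
  { service: "crm", action: "read" },
  { service: "storage", action: "export" },
];

const cleanLog = runWorkflow(approved, approved);
const tamperedLog = runWorkflow(approved, tampered);
```

In this sketch the clean run logs two `OK` entries, while the tampered run logs `OK crm.read` followed by `BLOCKED storage.export` and then stops.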

Challenges we ran into

- Translating high-level security principles into strict runtime enforcement logic

- Keeping token lifecycle state consistent during pause, resume, revoke, and kill operations

- Simulating realistic attack paths without introducing unsafe patterns in code

- Designing real-time visibility that is informative but not noisy

- Balancing developer ergonomics with security constraints

- Handling deployment constraints for WebSocket + stateful backend architecture

Accomplishments that we're proud of

- Built a working end-to-end system that demonstrates prevention of "Double Agent"-style misuse

- Implemented capability-token enforcement rather than coarse role-based checks

- Added policy checks that block unauthorized step injection and lateral movement

- Shipped a live mission control UI with chain status, alerts, and review queue

- Created a repeatable testbench with multiple attack/control scenarios and assertions

- Kept secrets outside the agent runtime by following vault-first architecture principles

What we learned

- AI security failures are often architecture failures, not just model failures

- Least privilege must be enforced at action time, not assumed from identity alone

- Auditability and intervention are as important as prevention

- Tokenized execution makes policy decisions explicit and testable

- Human-in-the-loop controls are essential for trust in autonomous systems

- Security-by-design is easier to maintain than patching after incidents

What's next for TokenFlow

- Move from local SQLite to production-grade managed Postgres

- Add distributed workers and queue-based workflow execution

- Expand policy language for richer conditional constraints

- Add signed/attested policy decisions and tamper-evident logs

- Integrate SIEM/export pipelines for enterprise monitoring

- Harden multi-tenant isolation and role-based governance

- Deploy frontend and backend with production infrastructure and observability

- Publish a reusable SDK so teams can adopt capability-token patterns in their own agent systems
