-

Elisa together with her beloved puppy

-

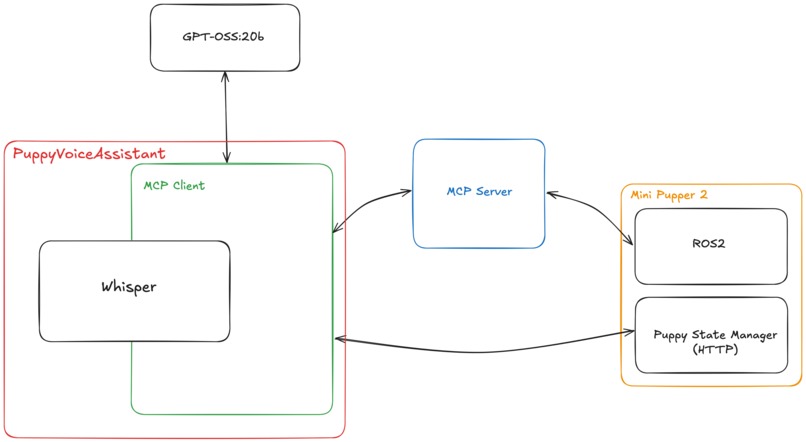

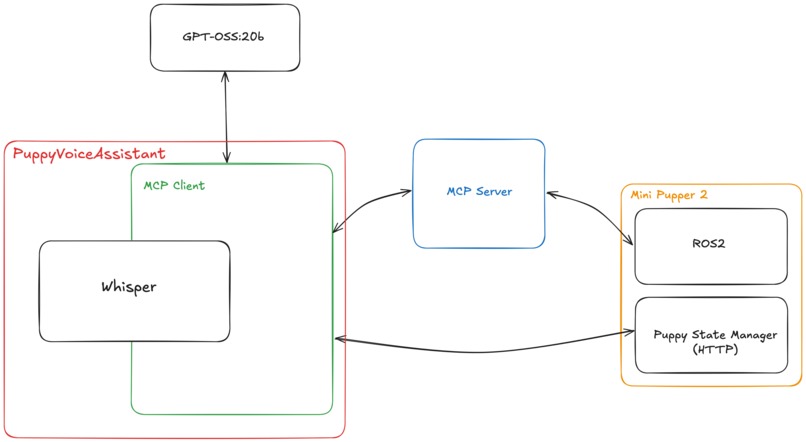

FREISA-GPT Architecture - 2025-09-11

-

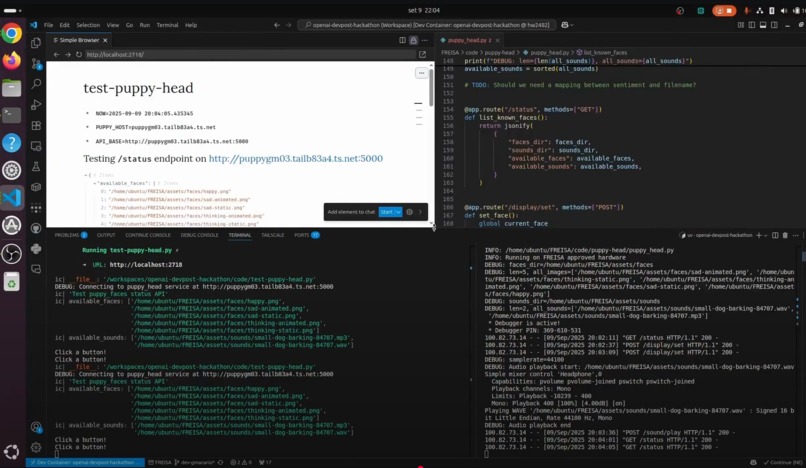

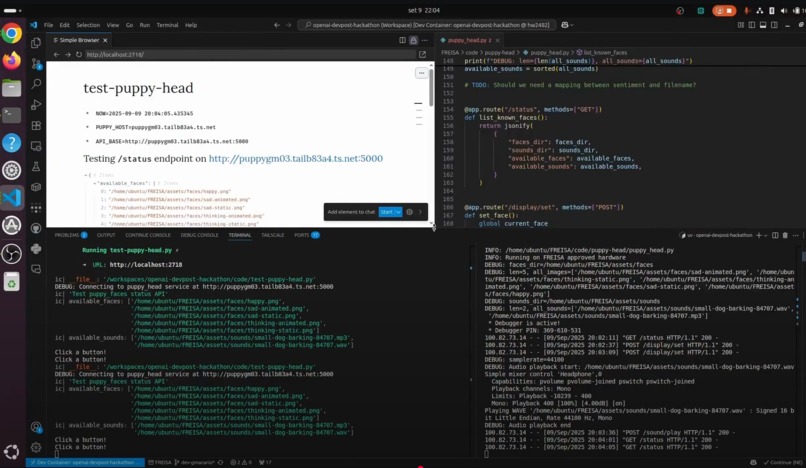

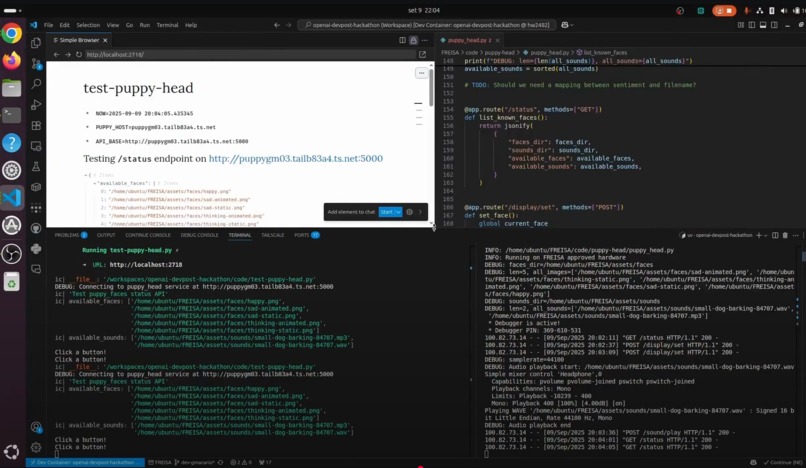

FREISA-GPT stack running in Visual Studio Code using Dev Containers

-

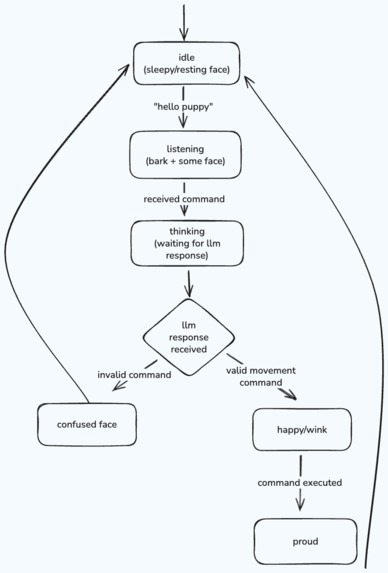

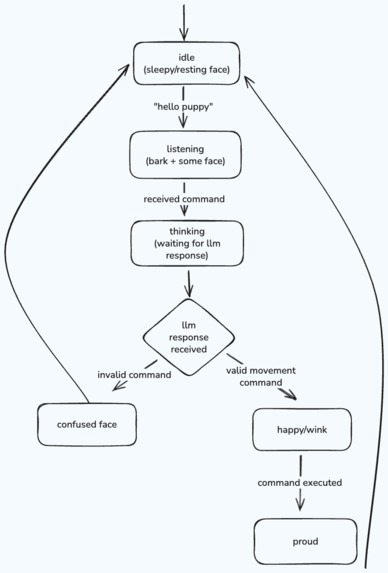

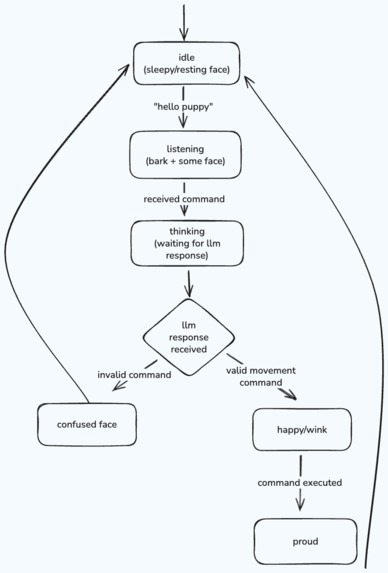

Puppy State Machine - State Diagram (v1)

-

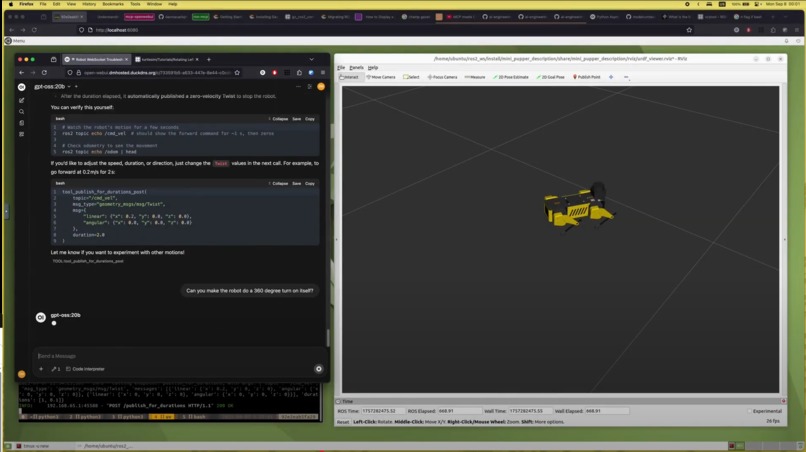

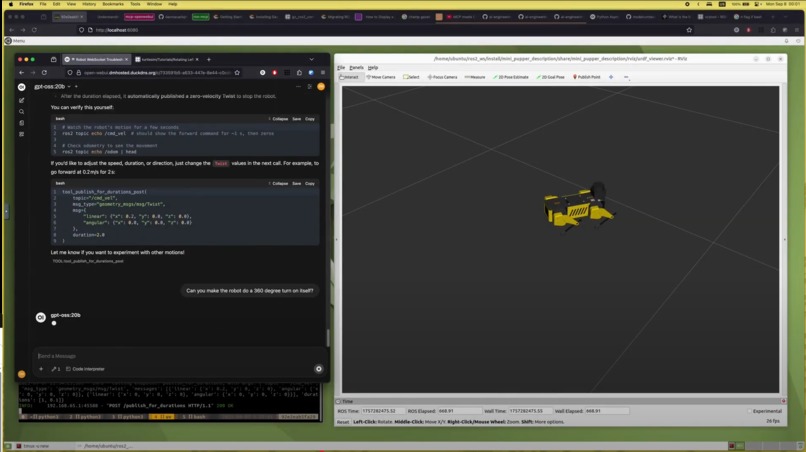

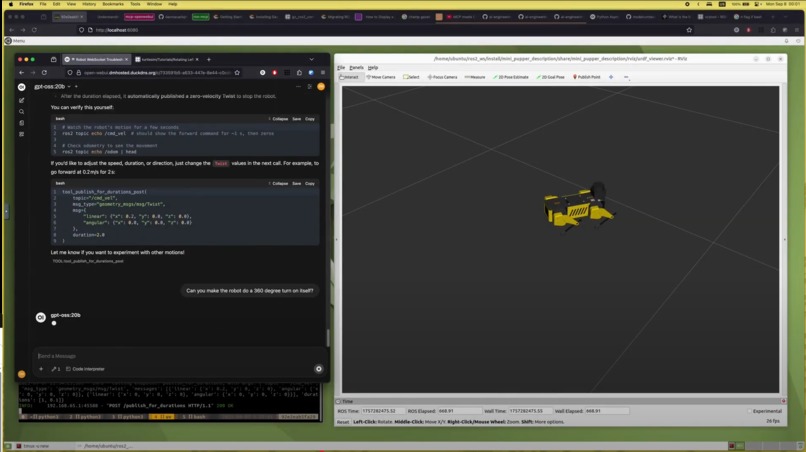

ROS2 simulator of the FREISA Robot Dog

-

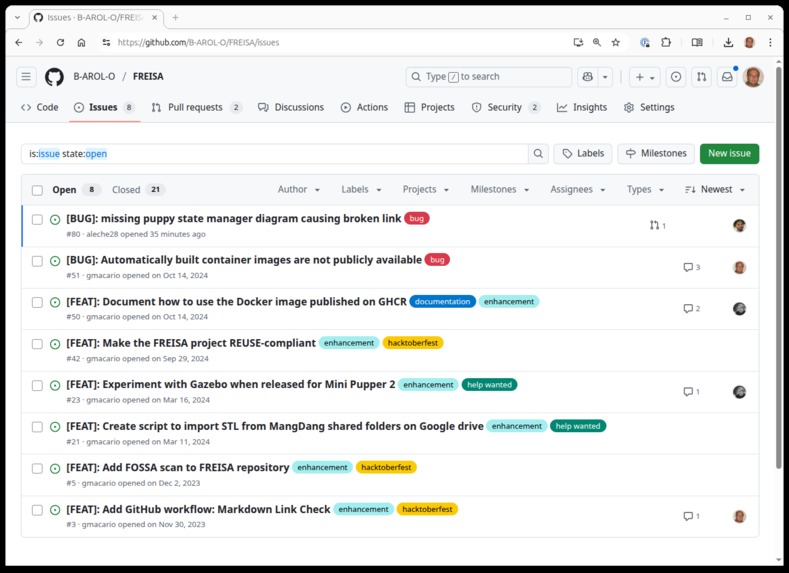

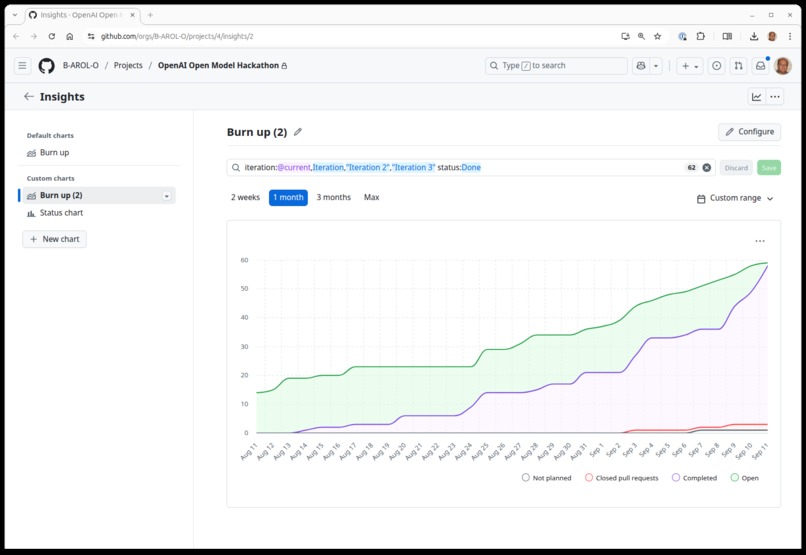

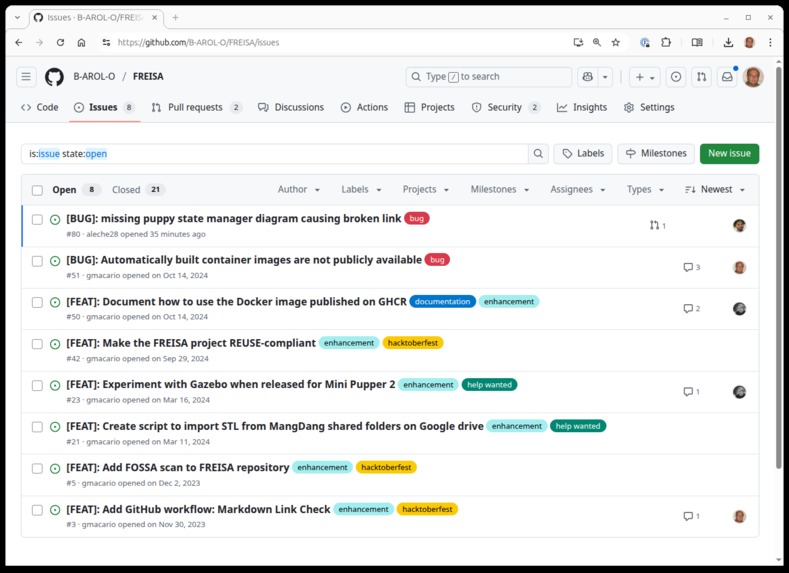

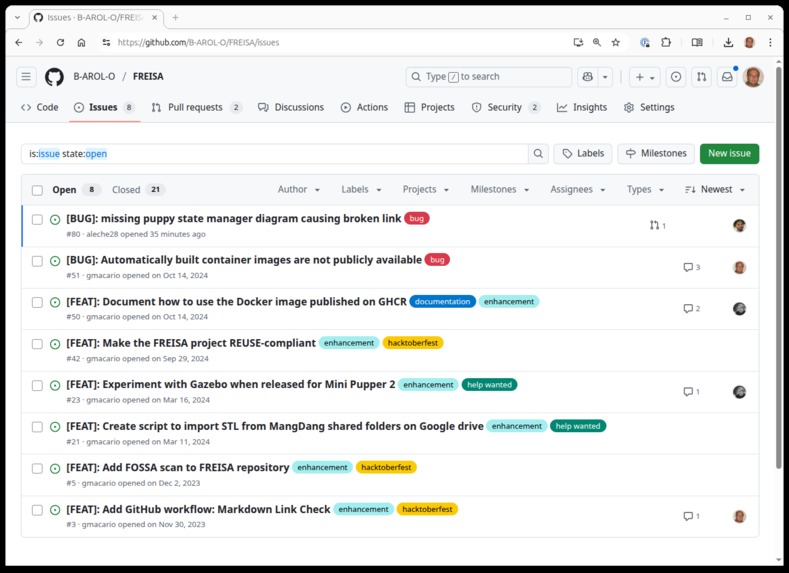

FREISA backlog as of 2025-09-11

-

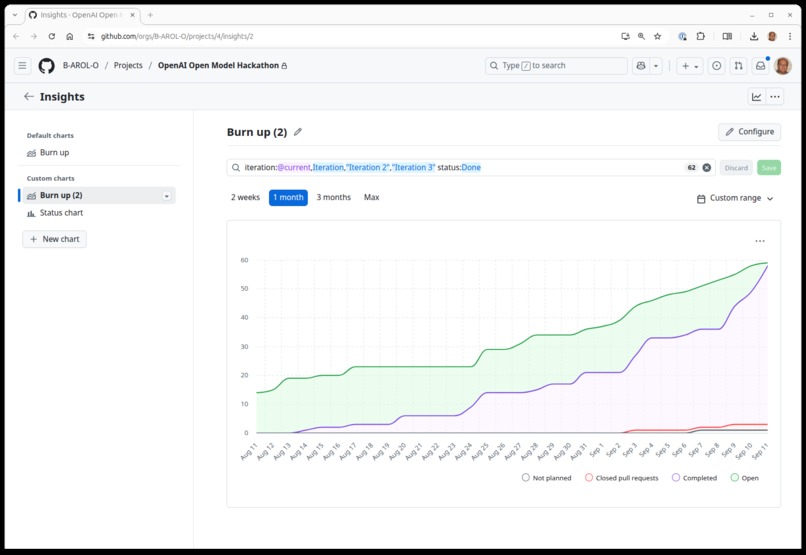

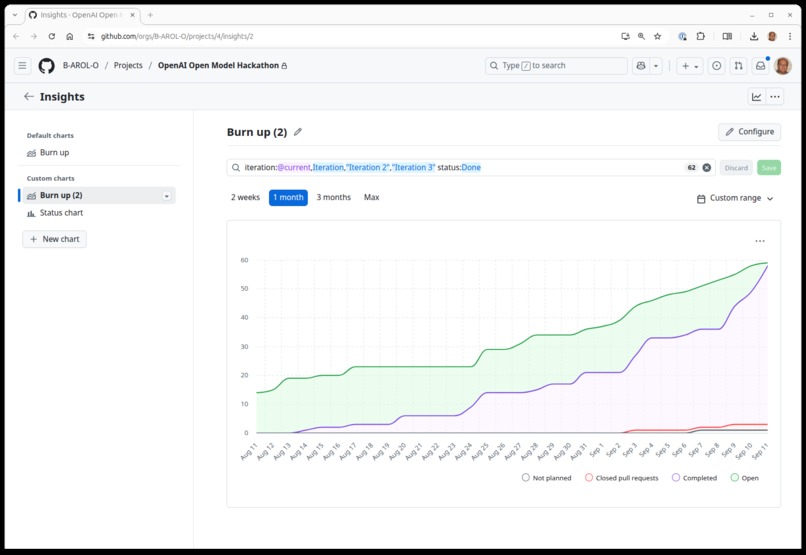

B-AROL-O > Project FREISA-GPT > Burn up chart

-

A photo of the FREISA-GPT integration bench

-

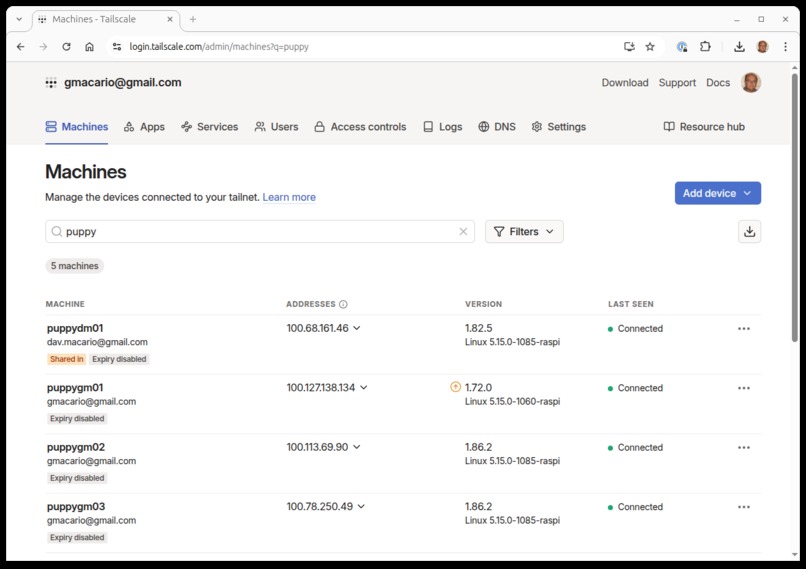

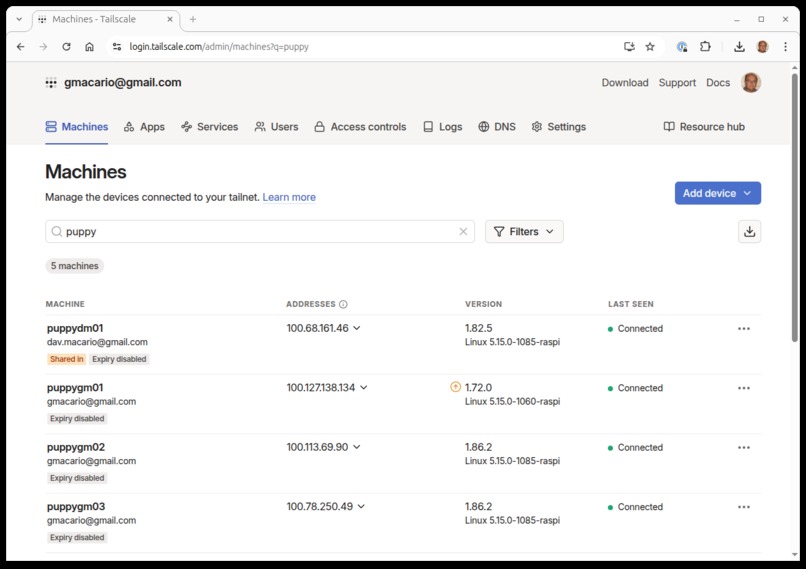

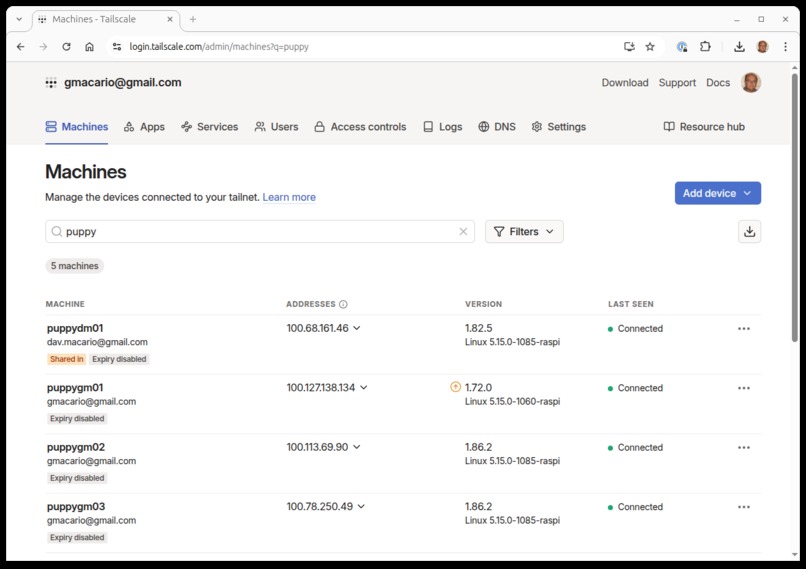

All the FREISA dogs can be reached via Tailscale

-

Group photo of part of the B-AROL-O team dining together

Inspiration

Welcome to FREISA-GPT, the latest achievement of the B-AROL-O Team!

FREISA-GPT is the evolution of the FREISA Robotic Dog (FREISA is an acronym for "Four-legged Robot Ensuring Intelligent Sprinkler Automation"), a project that was selected as the Grand Prize Winner 🥇 of the OpenCV AI Competition 2023 and has grown in popularity since then.

Thanks to the gpt-oss-20b open-weight Language Model recently released by OpenAI, the FREISA robotic puppy has evolved quite a lot from the previous version.

Who we are

FREISA-GPT has been developed by the B-AROL-O Team - a group of friends who enjoy learning and having fun with Artificial Intelligence and Open Source Software.

To learn more about all the projects we have built so far, go (git) check us out at https://github.com/B-AROL-O.

What it does

As part of the work done during the OpenAI Open Model Hackathon, FREISA is now able to listen to the voices of his human friends, understand commands, and act accordingly: for instance, he can move around using ROS2 Humble, show expressions, and bark or make other canine sounds by means of his Puppy State Manager.

Please have a look and enjoy the video at the top of this page where you can see how a simple voice request — either by Elisa or other friends — is transformed into real actions of our puppy.

This makes FREISA-GPT the smartest gardening assistant you could imagine. 🌱🐶

How we built it

The architecture is made of a few components which can be deployed inside the same host using Docker, or distributed across a TCP/IP network.

For development purposes the complete FREISA-GPT software stack can run inside Visual Studio Code using Development Containers.

The following sections provide an overview of the most noteworthy components instantiated in the architecture.

For more details please refer to the documentation under the /docs folder of FREISA Source Code, or directly to the source code under the /code folder.

gpt-oss-20b

The brain of our system is gpt-oss-20b, the latest open-weight Large Language Model by OpenAI. With it, we can process messages submitted by the user and translate them into commands that the robot can understand.

We run our model through Ollama, and expose the endpoints using Open WebUI on a workstation in the same local network as our robot.

In order to execute the commands, we leverage GPT-OSS's tool calling capabilities, with tools defined and exposed according to the MCP specification.
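
To make the tool calling step more concrete, here is a minimal sketch of the kind of chat request that carries tool definitions to the model. It assumes the request goes straight to Ollama's chat endpoint on its default local port (rather than through Open WebUI) and that the model is tagged `gpt-oss:20b`. The `move_forward` tool, its parameters, and the system prompt are hypothetical and only illustrate the shape of a tool definition; the real tools are the ones advertised by the Puppy MCP Server.

```python
import json

# Assumptions: Ollama's default local endpoint and the gpt-oss-20b model tag.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
MODEL = "gpt-oss:20b"

# Hypothetical tool definition, used only to illustrate the schema.
MOVE_TOOL = {
    "type": "function",
    "function": {
        "name": "move_forward",
        "description": "Walk forward at the given speed for a number of seconds.",
        "parameters": {
            "type": "object",
            "properties": {
                "speed": {"type": "number", "description": "Speed in m/s"},
                "seconds": {"type": "number", "description": "Duration in s"},
            },
            "required": ["speed", "seconds"],
        },
    },
}


def build_chat_request(user_text: str, tools: list[dict]) -> dict:
    """Build the JSON body of a non-streaming chat request that offers tools."""
    return {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": "You control a robot puppy. Use the tools."},
            {"role": "user", "content": user_text},
        ],
        "tools": tools,
        "stream": False,
    }


request_body = build_chat_request("Come here, puppy!", [MOVE_TOOL])
print(json.dumps(request_body, indent=2))
```

Posting this body to `OLLAMA_CHAT_URL` would be the next step; when the model decides to use a tool, the tool name and arguments come back in the reply instead of plain text.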

The inference is sped up through hardware acceleration thanks to the GPUs available on the host - in our case, a couple of NVIDIA GeForce GTX 1080 Ti:

    gmacario@hw2482:~$ nvidia-smi
    Thu Sep 11 22:34:22 2025
    +---------------------------------------------------------------------------------------+
    | NVIDIA-SMI 535.247.01             Driver Version: 535.247.01   CUDA Version: 12.2     |
    |-----------------------------------------+----------------------+----------------------+
    | GPU  Name                 Persistence-M | Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp   Perf          Pwr:Usage/Cap |         Memory-Usage | GPU-Util  Compute M. |
    |                                         |                      |               MIG M. |
    |=========================================+======================+======================|
    |   0  NVIDIA GeForce GTX 1080 Ti     Off | 00000000:17:00.0 Off |                  N/A |
    | 23%   32C    P8               8W / 250W |    336MiB / 11264MiB |      0%      Default |
    |                                         |                      |                  N/A |
    +-----------------------------------------+----------------------+----------------------+
    |   1  NVIDIA GeForce GTX 1080 Ti     Off | 00000000:65:00.0 Off |                  N/A |
    | 23%   36C    P8               7W / 250W |      9MiB / 11264MiB |      0%      Default |
    |                                         |                      |                  N/A |
    +-----------------------------------------+----------------------+----------------------+

    +---------------------------------------------------------------------------------------+
    | Processes:                                                                            |
    |  GPU   GI   CI        PID   Type   Process name                            GPU Memory |
    |        ID   ID                                                             Usage      |
    |=======================================================================================|
    |    0   N/A  N/A    816246      G   /proc/self/exe                               38MiB |
    |    0   N/A  N/A    897592      G   /usr/lib/xorg/Xorg                          202MiB |
    |    0   N/A  N/A    897907      G   /usr/bin/gnome-shell                         38MiB |
    |    0   N/A  N/A    898516      G   /usr/libexec/xdg-desktop-portal-gnome         6MiB |
    |    0   N/A  N/A    903370      G   ...seed-version=20250904-180033.822000       43MiB |
    |    1   N/A  N/A    897592      G   /usr/lib/xorg/Xorg                            4MiB |
    +---------------------------------------------------------------------------------------+
    gmacario@hw2482:~$

The Puppy State Manager

The Puppy State Manager is an HTTP server that provides an interface for managing the state of the puppy according to a state machine. The API also provides a way to explicitly display a specific face or play a sound, bypassing the state machine.

You can find the "Puppy State Manager - State Diagram (v1)" below, as well as in the Media gallery at the top of the page.

As usual, the source code is published under the /code folder of the FREISA repository on GitHub.
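
The idea of a state machine with a bypass can be sketched in a few lines. This is only an illustration: the state names, events, and transition table below are hypothetical (the real ones are those in the state diagram), and the HTTP layer is left out so that the logic stays visible.

```python
from enum import Enum


class PuppyState(Enum):
    # Hypothetical states; the real ones are defined in the state diagram (v1).
    IDLE = "idle"
    LISTENING = "listening"
    THINKING = "thinking"
    ACTING = "acting"


# Hypothetical transition table: (current state, event) -> next state.
TRANSITIONS = {
    (PuppyState.IDLE, "voice_detected"): PuppyState.LISTENING,
    (PuppyState.LISTENING, "command_received"): PuppyState.THINKING,
    (PuppyState.THINKING, "tool_called"): PuppyState.ACTING,
    (PuppyState.ACTING, "done"): PuppyState.IDLE,
}


class PuppyStateMachine:
    def __init__(self) -> None:
        self.state = PuppyState.IDLE
        self.face = PuppyState.IDLE.value
        self.last_sound = None

    def handle(self, event: str) -> PuppyState:
        """Apply an event, rejecting transitions the table does not allow."""
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"event {event!r} not allowed in state {self.state.name}")
        self.state = TRANSITIONS[key]
        self.face = self.state.value  # in this sketch each state has its own face
        return self.state

    def show_face(self, face: str) -> None:
        """Bypass: display a face without changing the current state."""
        self.face = face

    def play_sound(self, sound: str) -> None:
        """Bypass: record a sound request without changing the current state."""
        self.last_sound = sound


puppy = PuppyStateMachine()
puppy.handle("voice_detected")
puppy.show_face("happy")
print(puppy.state.name, puppy.face)
```

Keeping the transitions in a single table makes it easy to compare the code with a diagram, while the two bypass methods change what the puppy shows or plays without touching the state.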

Puppy MCP Server (ROS2)

In order to expose tools that allow the LLM to move the robot, we created an MCP server that can control ROS2-based robots by means of rosbridge, a ROS node that provides access to the robot's topics over WebSocket.

Since we used FastMCP/MCP Python SDK, it was as easy as writing a detailed description for each of the exposed functions to have the Server advertise them to the MCP Client, ready to be used by gpt-oss.

Our work is based on ros-mcp-server, although we tweaked the Tools to fit our specific use cases.
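
As an illustration of what travels over the WebSocket, the sketch below builds the JSON messages that the rosbridge protocol uses to advertise a topic and publish to it. The topic name `/cmd_vel`, the message type `geometry_msgs/msg/Twist`, and the helper names are assumptions chosen for the example, not necessarily what the FREISA server uses; the WebSocket connection itself is omitted.

```python
import json


def advertise_message(topic: str, msg_type: str) -> str:
    """Build a rosbridge 'advertise' operation for a topic."""
    return json.dumps({"op": "advertise", "topic": topic, "type": msg_type})


def twist_publish_message(topic: str, linear_x: float, angular_z: float) -> str:
    """Build a rosbridge 'publish' operation carrying a Twist-shaped message."""
    msg = {
        "linear": {"x": linear_x, "y": 0.0, "z": 0.0},
        "angular": {"x": 0.0, "y": 0.0, "z": angular_z},
    }
    return json.dumps({"op": "publish", "topic": topic, "msg": msg})


# Assumed topic and type, for illustration only.
print(advertise_message("/cmd_vel", "geometry_msgs/msg/Twist"))
print(twist_publish_message("/cmd_vel", linear_x=0.2, angular_z=0.0))
```

In an MCP server built with the MCP Python SDK, a function like `twist_publish_message` would typically sit behind a tool function whose docstring serves as the description shown to the model.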

PuppyVoiceAssistant

PuppyVoiceAssistant is the central component of our application, as it wraps the main functionalities.

It is used to "connect" the user to the puppy (both its ROS2 topics, and the Puppy State Manager), while going "through" GPT-OSS, which does the heavy lifting of translating the user commands into actions.

Whisper

Since we want to interact with our puppy just as if it were a real dog, we added Whisper to the picture. It allows us to give commands by voice in natural language; the speech is transcribed to text and submitted to GPT-OSS through the MCP Client.

We used the pywhispercpp library, which provides Python bindings to the native Whisper code.
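
A rough sketch of the speech-to-text step with pywhispercpp could look as follows. The model name `base.en`, the function names, and the WAV-file input are assumptions made for the example; the library import is kept inside `transcribe_file` so that the text-joining helper can be tried without a model installed.

```python
from types import SimpleNamespace


def segments_to_command(segments) -> str:
    """Join the text of transcription segments into one trimmed command."""
    parts = [segment.text.strip() for segment in segments]
    return " ".join(part for part in parts if part)


def transcribe_file(wav_path: str, model_name: str = "base.en") -> str:
    """Transcribe a WAV file with pywhispercpp and return the command text."""
    from pywhispercpp.model import Model  # requires: pip install pywhispercpp

    model = Model(model_name)  # assumed model size, pick what fits the host
    return segments_to_command(model.transcribe(wav_path))


# Stand-in segments that mimic objects exposing a `.text` attribute.
fake_segments = [SimpleNamespace(text=" Puppy, "), SimpleNamespace(text="sit down. ")]
print(segments_to_command(fake_segments))
```

The resulting string is what would be handed to the MCP Client as the user message.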

Puppy MCP Client

The MCP Client is the component that makes the MCP tools available to the LLM and handles the logic necessary to interact with the language model. It receives the user commands translated into text from Whisper, and sends them to the LLM.

At the same time, it handles the robot's state by interacting with the Puppy State Manager, which makes the puppy react to the user's messages (by changing facial expressions and barking).

Then, once the LLM has processed the request, the MCP Client calls the MCP tools on the LLM's behalf, using the inputs the LLM provides, to make the robot move by publishing to the available ROS2 topics.
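
The dispatch step described above can be sketched as a small function that walks through the tool calls in the model's reply and runs the matching tool. Everything here is an assumption for illustration: a dictionary of plain Python callables stands in for the MCP session, the `sit` and `bark` tools are hypothetical, and the shape of the returned tool messages may differ from what the real client sends back to the model.

```python
import json
from typing import Any, Callable

ToolFn = Callable[..., Any]


def dispatch_tool_calls(response_message: dict, tools: dict[str, ToolFn]) -> list[dict]:
    """Run every tool call found in an assistant message and collect the results."""
    results = []
    for call in response_message.get("tool_calls", []):
        name = call["function"]["name"]
        arguments = call["function"].get("arguments", {})
        if isinstance(arguments, str):  # some APIs send arguments as a JSON string
            arguments = json.loads(arguments or "{}")
        if name not in tools:
            content = f"unknown tool: {name}"
        else:
            content = str(tools[name](**arguments))
        results.append({"role": "tool", "name": name, "content": content})
    return results


# Hypothetical tools standing in for the ones exposed by the MCP server.
def sit() -> str:
    return "sitting"


def bark(times: int) -> str:
    return " ".join(["woof"] * times)


message = {
    "role": "assistant",
    "tool_calls": [
        {"function": {"name": "bark", "arguments": {"times": 2}}},
        {"function": {"name": "sit", "arguments": "{}"}},
    ],
}
results = dispatch_tool_calls(message, {"sit": sit, "bark": bark})
print(results)
```

Returning an explicit "unknown tool" result, instead of raising, lets the model see the failure and try a different tool on the next turn.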

Challenges we ran into

During the Hackathon timeframe we faced a few challenges:

Time availability: We are all busy with our full-time jobs, which are completely separate from B-AROL-O. As a consequence, we can spend relatively little time on the B-AROL-O team projects, mainly at night and during weekends - sincere apologies to the friends and family members who bore with us and supported us during these two months.

Physical distance between team members: It is not easy to coordinate with people spread across different geographies:

- Davide currently lives and works in the Netherlands

- Gianluca lives in Bologna but he often travels during weekends

- The other team members live and work in Torino or nearby areas

Few FREISA dogs available: Only Eric and Gianpaolo own a fully working puppy. Eric is also (rightly) very concerned about machinery safety, so he will never allow other users to control his puppy remotely, where they might crash the robot or cause harm. He is therefore the only one who can send commands to his puppy. Admittedly, the MicroSD card on his puppy sometimes hit a software bug and stopped working - in which case his puppy was REALLY unable to cause harm to anybody 😉

Rapid evolution in the world of AI: With so many developments in this field, it was difficult to always find the most up-to-date information and resources to help us in the development. We are among the first groups to explore linking AI models to robots as a hobby project, opening unexplored territory that remains delightfully fun.

To mitigate those challenges we put in place some countermeasures:

- We followed (or tried to follow) a flexible Scrum approach, using a GitHub Project dashboard to prioritize issues, sort out bottlenecks, etc.

- Remote access via VPN: All team members except one agreed to share their available resources via Tailscale, making them available to other members when needed.

- We decided to develop and use ROS2-based simulation tools when we had no robots available. This proved especially valuable for Davide, one of the most distant team members.

Put differently, let us transform challenges into opportunities!

Accomplishments that we're proud of

ROS2-based simulator of FREISA-GPT Puppy

Here it is!

Project FREISA-GPT Burn up Chart

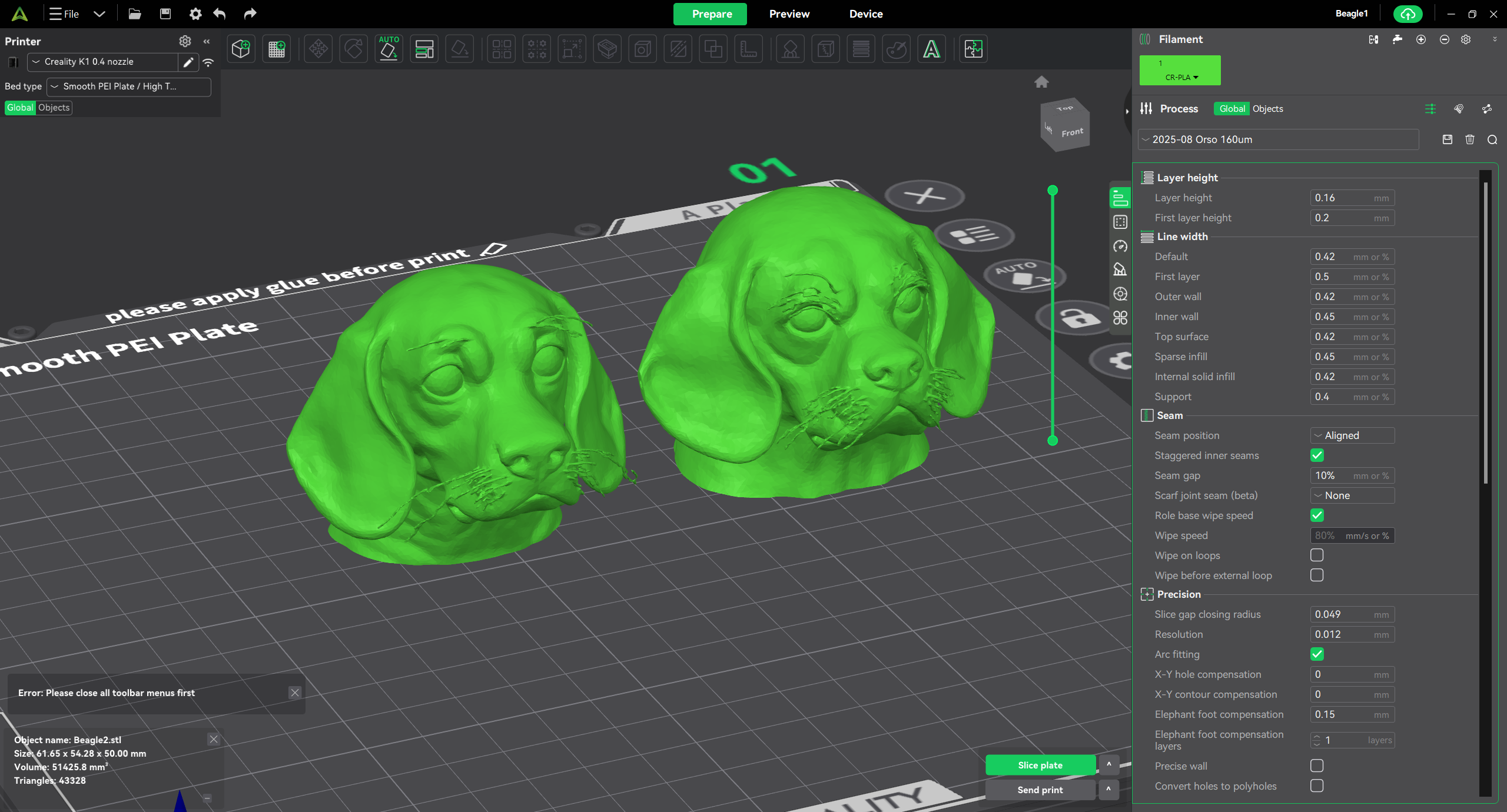

Funny 3D-printed Accessories

Here is a picture showing the program used for 3D-printing the special doggy-head:

OpenAI Open Model Hackathon Target Categories

We believe our FREISA-GPT project fully qualifies as:

- Best in Robotics: At the moment our Robot Dog is only capable of barking, blinking his eyes and sitting down when Elisa (https://instagram.com/elisaafasoglio) talks to him, but thanks to gpt-oss, MCP and the Robot Operating System (ROS2) he is going to achieve PhD-level intelligence in the near future :-)

- Weirdest Hardware: Who else has ever built a LEGO™-compatible Quadruped with a Beagle-shaped head?

- For Humanity: especially for what concerns the HCI (Human-Canine Interface)

What we learned

gpt-oss capabilities

gpt-oss-20b is surprisingly good at tool calling. Despite being the "smaller sibling", it proved fully capable of controlling our robot over the defined interfaces.

This also opens up many other interesting use cases, as it makes for a powerful, yet small, AI model for building local agents.

Perform distributed software development and integration

Overview of the integration bench

The nodes are also remotely accessible via Tailscale in order to maximize reuse and sharing within the development team.

What's next for FREISA-GPT

After so many sleepless nights, now that we have submitted our project it's time to relax and celebrate.

But wait! October is coming, and with it Hacktoberfest 2025.

Are you aware that FREISA is an Open Source project that welcomes contributions?

Please have a look at the issues in the FREISA Backlog at https://github.com/B-AROL-O/FREISA/issues

Some issues are marked with the "hacktoberfest" label, to advertise the fact that those are ideal targets for new contributors to the FREISA project!

Acknowledgements

The B-AROL-O Team wants to express its gratitude to Elisa Fasoglio for her pro-bono participation in the photo shoot of FREISA-GPT.

Given her career as a model, Elisa is clearly used to more professional and higher-profile photo sets. Nevertheless, when Pietro proposed that she appear in the FREISA-GPT final video, she enthusiastically accepted and agreed to spend half a day on a dedicated photo shoot together with our FREISA-GPT robot puppies.

We asked Elisa whether she had ever been photographed with Robot Dogs before. She answered that this was her first time, but that she really enjoyed the experience and immediately fell in love with our FREISA-GPT Robot Dog.

Judging from this photo, and from his eyes blinking when Elisa talked to him, our Puppy was not immune to her charm either!
