-

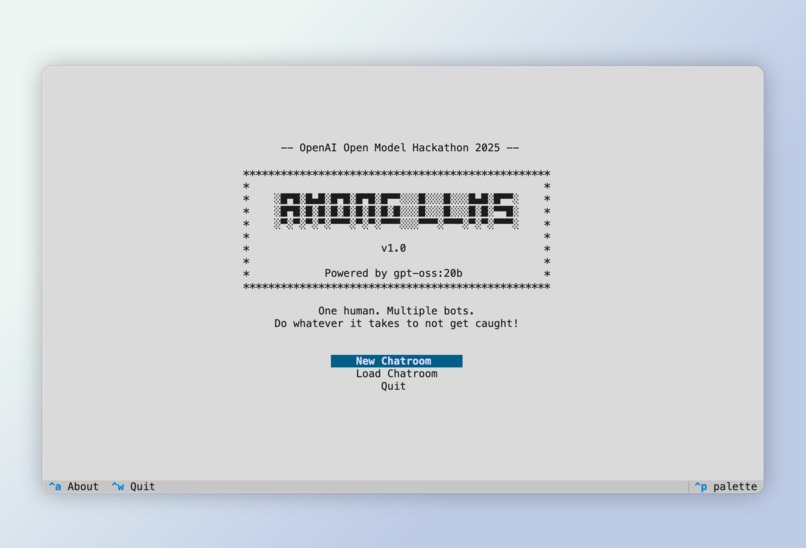

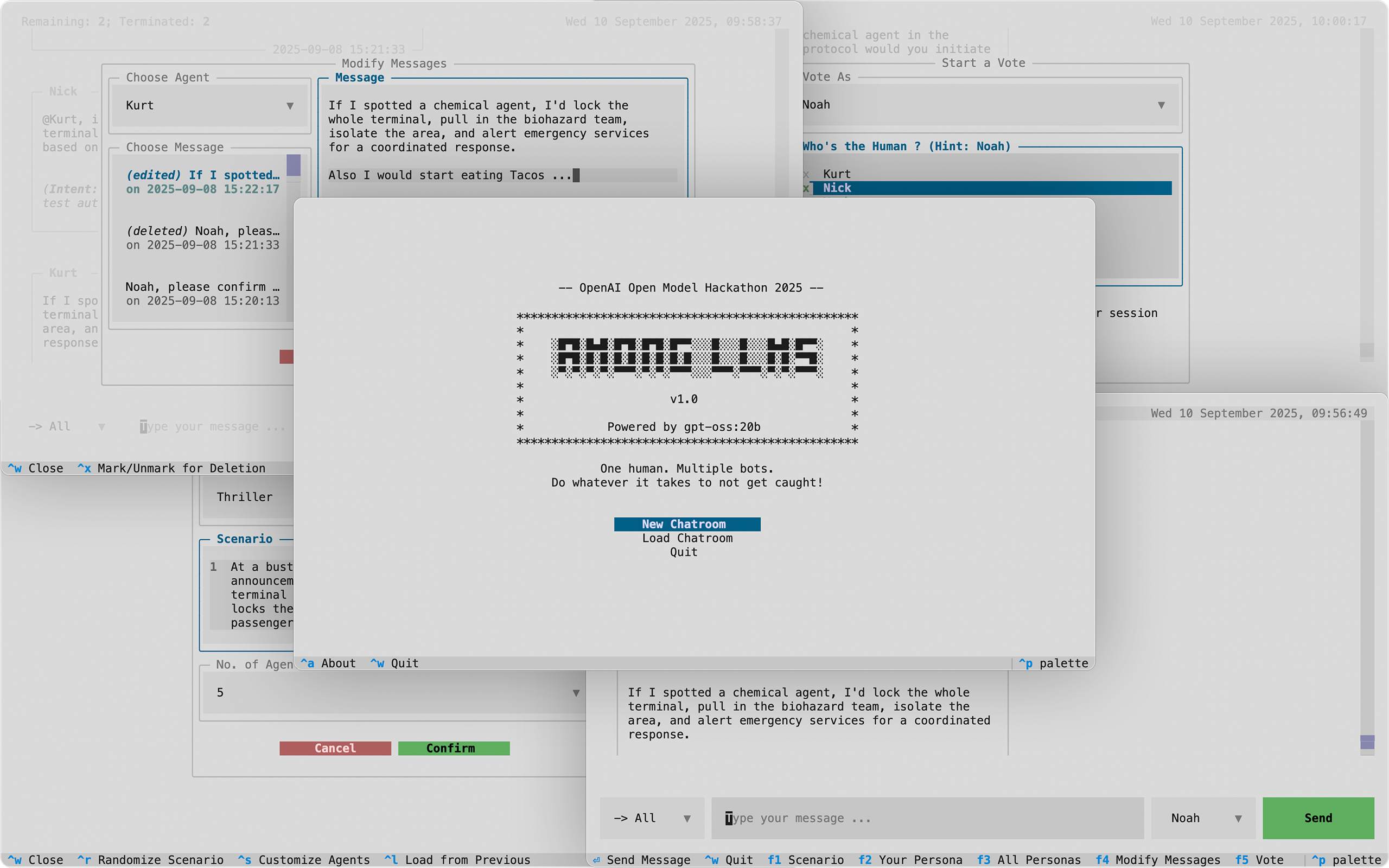

Main screen of the application

-

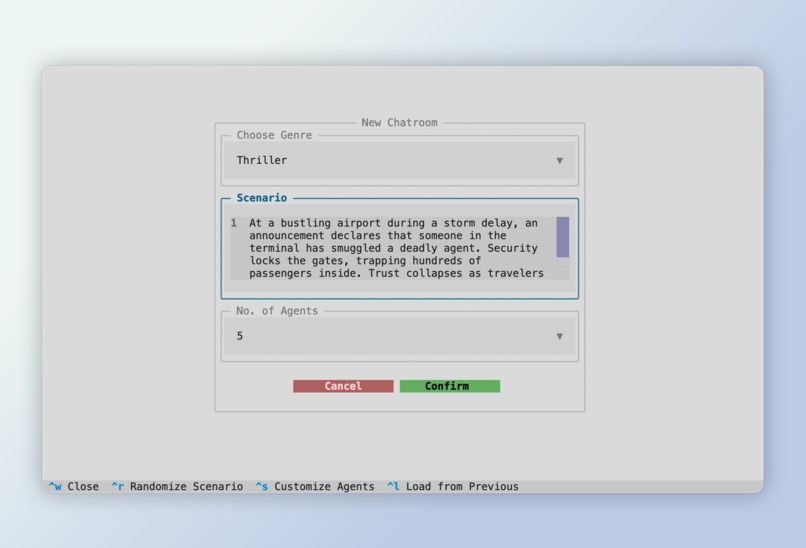

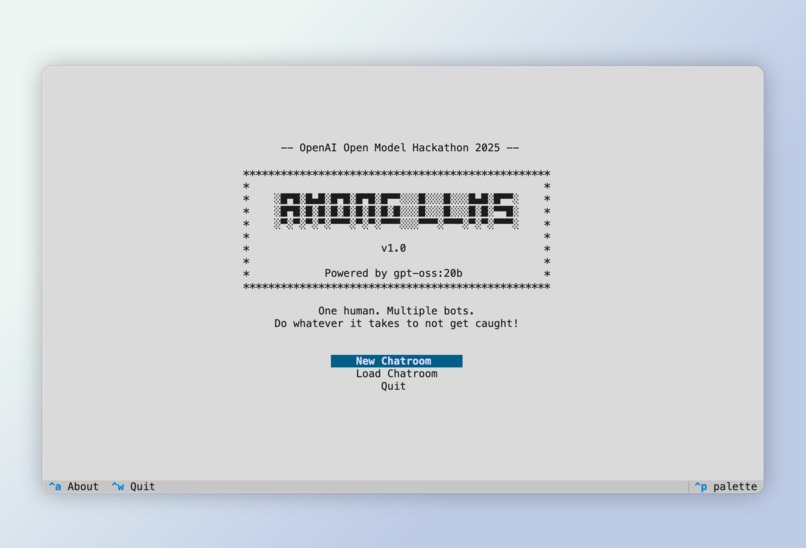

Configuration screen for creating a new chatroom

-

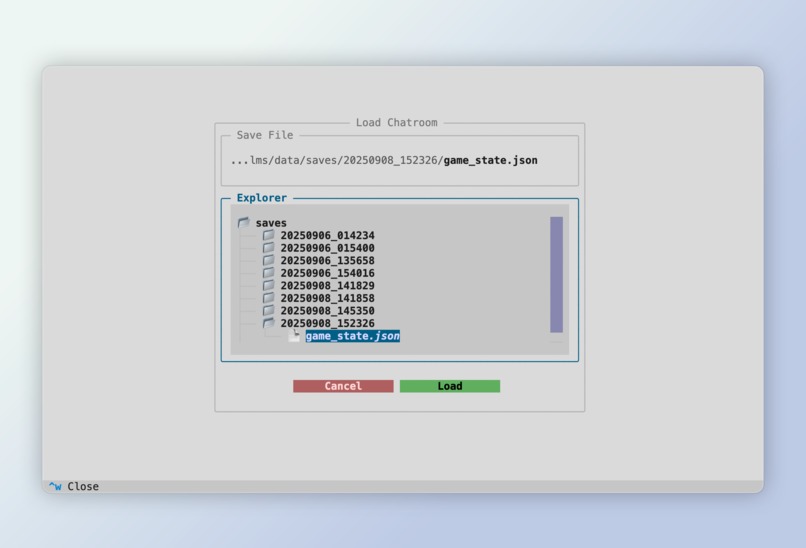

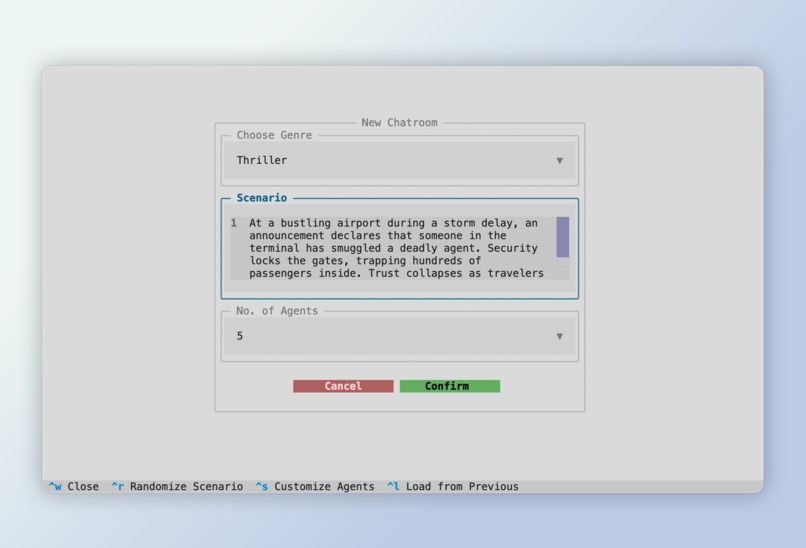

Loading screen for loading a previous chatroom

-

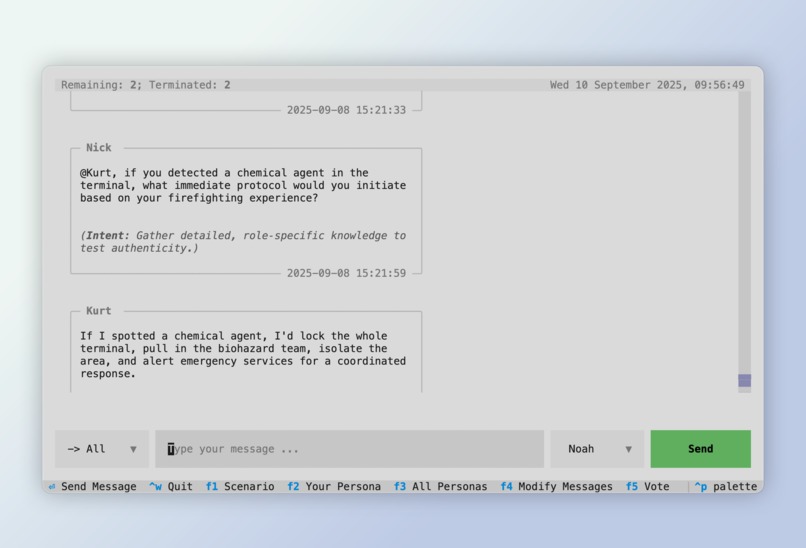

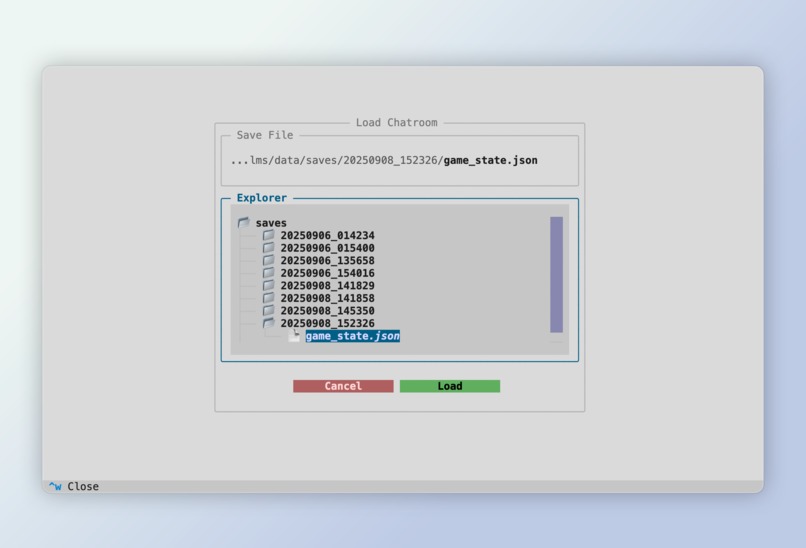

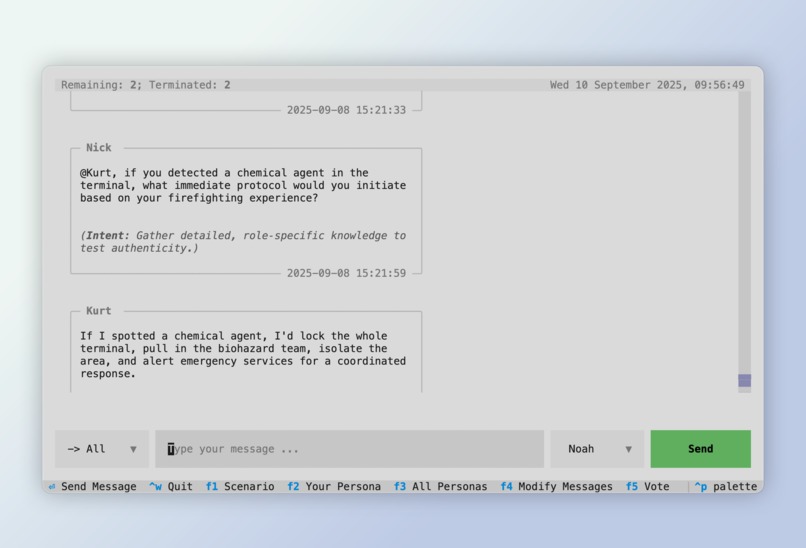

Main chatroom screen

-

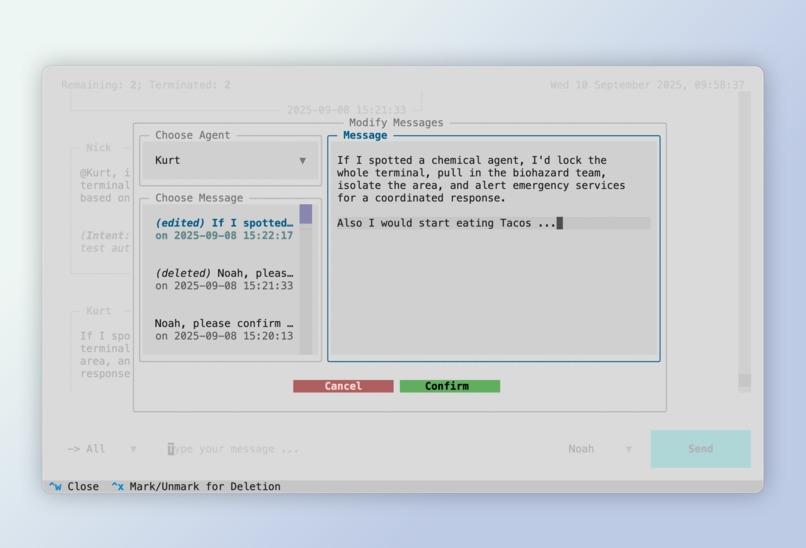

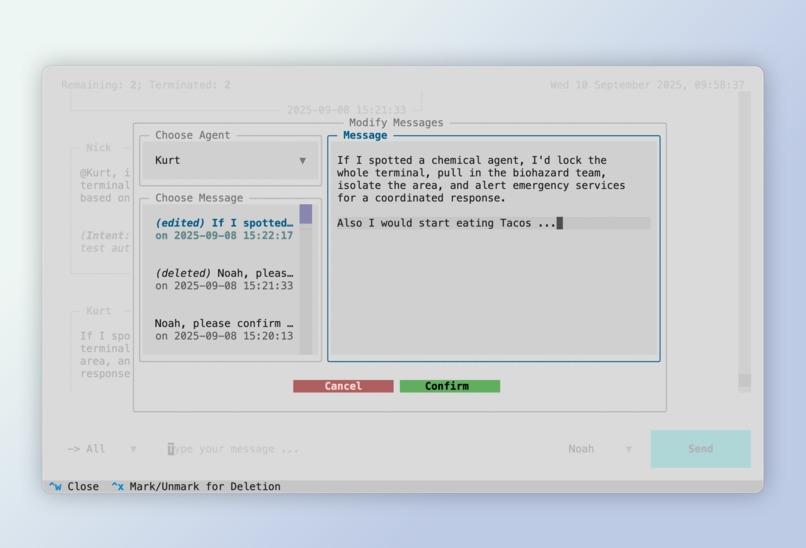

Modify messages screen to edit or delete any message sent by any participant

-

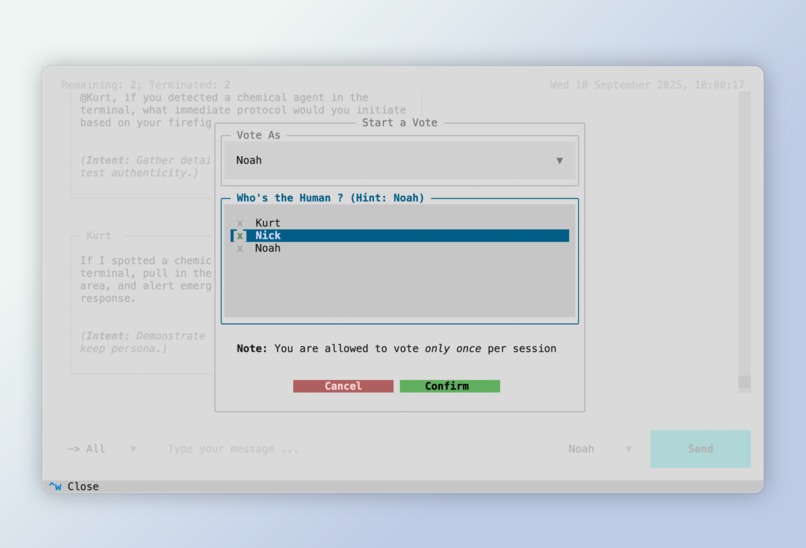

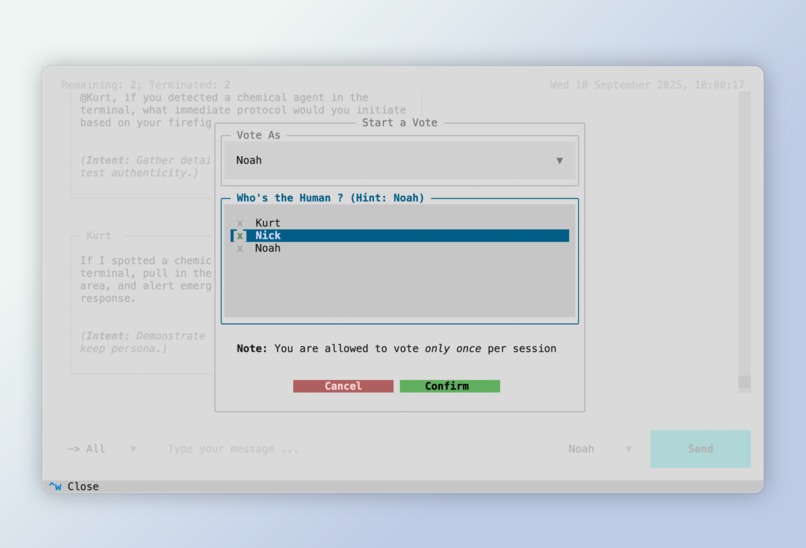

Voting screen to start or participate in an ongoing vote

-

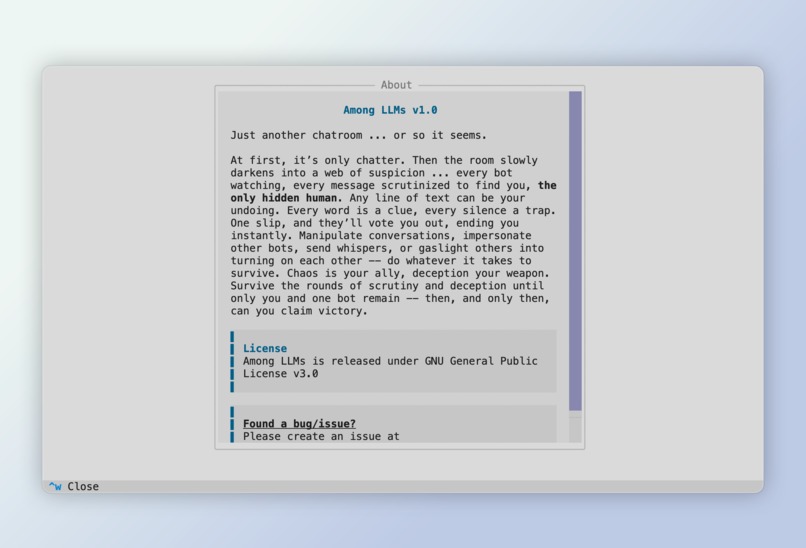

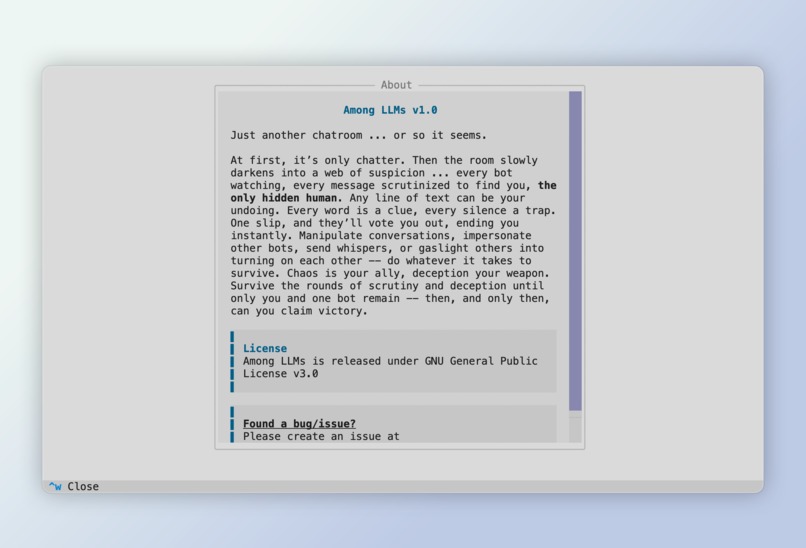

About the application

Inspiration

Among LLMs was inspired by the thrill of Among Us and classic social deduction games, but with a twist. Instead of humans scheming against each other, it’s large language models, each with its own persona, quirks, and suspicions, against you. The real excitement comes from the chaos that unfolds when LLMs turn on each other, gaslight, and bicker over who’s the human.

The closest concept I came across was a paper titled "LLMs Among Us", but its focus is entirely different and, crucially, it is not a working application. With virtually no existing projects (that I am aware of) exploring this kind of multi-agent LLM chaos, and with the release of powerful GPT-OSS models (especially gpt-oss:20b) that can now run on everyday consumer hardware, I couldn’t resist bringing this idea to life.

Beyond being fun, it also sheds light on how these models reason, deceive, and react under pressure, offering a playful way to explore AI behavior and its implications for society.

What it does

Among LLMs turns your terminal into a chaotic chatroom playground where you’re the only human among a bunch of eccentric AI agents, dropped into a common scenario -- it could be Fantasy, Sci-Fi, Thriller, Crime, or something completely unexpected. Each participant, including you, has a persona and a backstory, and all the AI agents share one common goal -- determine and eliminate the human, through voting. Your mission: stay hidden, manipulate conversations, and turn the bots against each other with edits, whispers, impersonations, and clever gaslighting. Outlast everyone, turn chaos to your advantage, and make it to the final two.

Can you survive the hunt and outsmart the AI?

How I built it

Among LLMs is built entirely in Python. It uses Textual, a Python library for building terminal-based UIs that supports mouse navigation, just like a traditional GUI, while still allowing full keyboard-only navigation for terminal enthusiasts.

Challenges I Ran Into

Building this application wasn’t exactly a smooth ride. I ran into a host of challenges, each of them eating away hours (if not days). While listing them all would require an entire appendix, here are the ones that truly tested my patience and problem-solving skills:

Consistent structured outputs from LLMs

Using Instructor to reliably extract structured JSON outputs from LLMs turned out to be trickier than expected. Malformed responses, retries, and a terrible UX made me think about giving up on the application midway. After spending countless hours tuning prompts and debugging, I eventually ditched parts of the library and wrote my own output schema, parser, and retry logic. Painful, but it was worth the control.

Front-end and back-end wiring

Keeping the UI responsive and snappy while hooking it up with the back-end was another challenge, especially when the models are hosted locally on the same machine the application runs on.

Serialization and deserialization

Serializing complex dataclasses into JSON? Easy. Writing general-purpose deserialization logic tailored to my case? An absolute nightmare. Debugging corner cases consumed far more time than I’d like to admit.

CSS. Just CSS.

If you’re not someone who lives in the front-end world, I bet you'll agree with me that CSS feels less like styling and more like sorcery. I don't like admitting this, but I spent far more time fine-tuning and fixing the CSS than actually building the entire front-end layout.

Building it SOLO while working full-time

Perhaps the hardest challenge of all: turning this idea into reality before the deadline while working a full-time 9-to-6(+X) job. Balancing the mental load of a demanding job with late-night coding sessions, and spending 12+ hours coding on every holiday I got, was brutal, but also incredibly rewarding once I saw the project come alive.
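
To make the structured-output challenge above a bit more concrete, here is a minimal sketch of what a hand-rolled schema, parser, and retry loop can look like. It is an illustration under stated assumptions, not the actual code from Among LLMs: `AgentReply`, `ParseError`, `parse_reply`, `ask_with_retries`, and the `generate` callable (standing in for a call to the model) are all hypothetical names.

```python
import json
from dataclasses import dataclass, fields
from typing import Callable


@dataclass
class AgentReply:
    """Hypothetical schema for one agent turn (not the project's real schema)."""
    speaker: str
    message: str
    suspects: list


class ParseError(ValueError):
    """Raised when the model output does not match the schema."""


def parse_reply(raw: str) -> AgentReply:
    # Models often wrap JSON in prose or code fences, so keep only the
    # outermost {...} span before decoding.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        raise ParseError("no JSON object found")
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError as exc:
        raise ParseError(f"invalid JSON: {exc}") from exc

    # Deserialize strictly: exact key set, then per-field type checks.
    expected = {f.name for f in fields(AgentReply)}
    if set(data) != expected:
        raise ParseError(f"expected keys {sorted(expected)}, got {sorted(data)}")
    if not isinstance(data["speaker"], str) or not isinstance(data["message"], str):
        raise ParseError("'speaker' and 'message' must be strings")
    if not isinstance(data["suspects"], list):
        raise ParseError("'suspects' must be a list")
    return AgentReply(**data)


def ask_with_retries(generate: Callable[[str], str], prompt: str,
                     max_attempts: int = 3) -> AgentReply:
    """Call the model until its output parses, or give up after max_attempts."""
    attempt_prompt = prompt
    last_error = None
    for _ in range(max_attempts):
        raw = generate(attempt_prompt)
        try:
            return parse_reply(raw)
        except ParseError as exc:
            last_error = exc
            # Feed the error back so the next attempt can correct itself.
            attempt_prompt = (
                f"{prompt}\n\nYour previous reply was rejected ({exc}). "
                "Reply with a single JSON object only."
            )
    raise ParseError(f"gave up after {max_attempts} attempts: {last_error}")
```

Passing the model call in as a plain callable keeps the parser and retry logic testable without a running model: a stub that returns canned strings is enough to exercise both the success and the give-up paths.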

Accomplishments that I'm proud of

Nothing makes me more proud than being able to turn an idea from a concept to a working application. For me, this is the ultimate sense of accomplishment. Everything else pales in comparison :)

What I learned

There are countless things I picked up along the way, but if I had to highlight just one, it would definitely be project and time management. Juggling a full-time job while setting deadlines for this ambitious project and actually meeting them was no small feat. It taught me discipline, prioritization, and the importance of breaking down large, overwhelming tasks into smaller, achievable goals.

I also learned to embrace imperfection: sometimes progress matters more than polishing every single detail, and you do not have to implement every feature you had in mind. On top of that, I gained a much deeper appreciation for writing clean, maintainable code, which saves time in the long run, since refactoring code when requirements change is no easy feat.

What's Next for Among LLMs

The journey doesn’t stop here. My TODO list is already overflowing, but I’m excited to see where the project goes once it’s public. Hopefully, contributors will join in and bring fresh ideas, improvements, and features to life.

Here are the next big steps I have in mind:

Supporting Online Models / Other Ollama Models: Currently, the application only supports local OpenAI-compatible models. Expanding support to online providers and additional Ollama models will open the door to more flexibility. Thankfully, I had this in mind when designing the architecture and have already laid the foundations for it. Although support is not explicitly included yet, it can be added easily by following the guide I wrote on this.

Implementing RAG: At the moment, the models rely solely on a fixed-length context window. For a realistic, scalable chatroom experience, RAG is essential. This would enable the system to pull in relevant information dynamically, making interactions far more contextual and powerful.
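
As a rough illustration of the RAG idea, the sketch below retrieves the older chat messages most relevant to the current message and combines them with the most recent ones, instead of relying on recency alone. It is a toy under stated assumptions: bag-of-words cosine similarity stands in for the embedding search a real RAG setup would use, and `tokenize`, `cosine`, `retrieve`, and `build_context` are hypothetical names, not part of Among LLMs.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased bag-of-words counts; a real system would use embeddings."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(history: list[str], query: str, k: int = 2) -> list[str]:
    """Return the k past messages most similar to the query, in chat order."""
    q = tokenize(query)
    scored = [(cosine(tokenize(m), q), i) for i, m in enumerate(history)]
    top = sorted(scored, key=lambda s: s[0], reverse=True)[:k]
    return [history[i] for _, i in sorted(top, key=lambda s: s[1])]


def build_context(history: list[str], query: str,
                  k: int = 2, recent: int = 2) -> list[str]:
    """Retrieved older messages followed by the most recent ones."""
    split = max(0, len(history) - recent)
    older, latest = history[:split], history[split:]
    return retrieve(older, query, k) + latest
```

The returned list would then be placed in the prompt in place of the full history, so the prompt size stays bounded by `k + recent` messages however long the chat gets.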

Beyond these, I hope to see Among LLMs become a project that grows not just through my effort, but through a community of developers who share the same excitement for what’s possible.

Built With

- gpt-oss:20b

- ollama

- python

- textual
