-

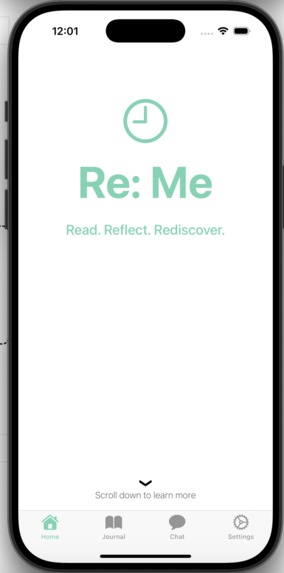

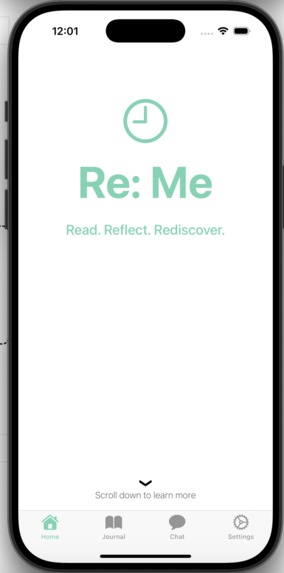

This is the home page on the mobile app for iOS

-

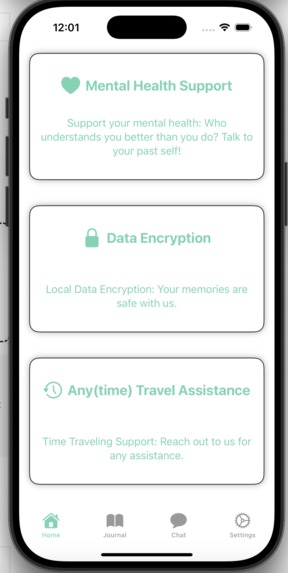

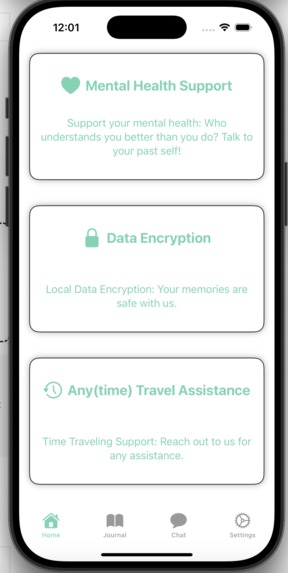

This is the list of features on the iOS app

-

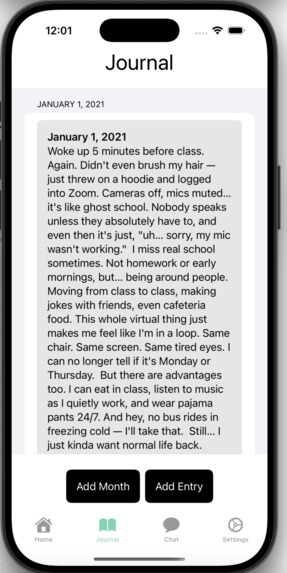

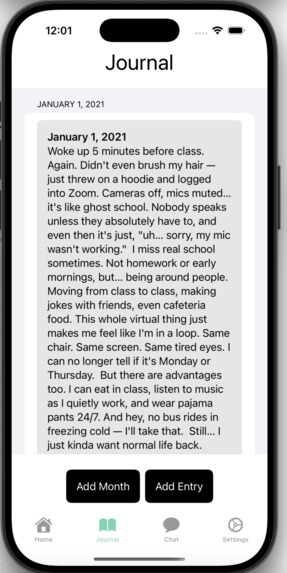

This is the journals tab on the iOS app

-

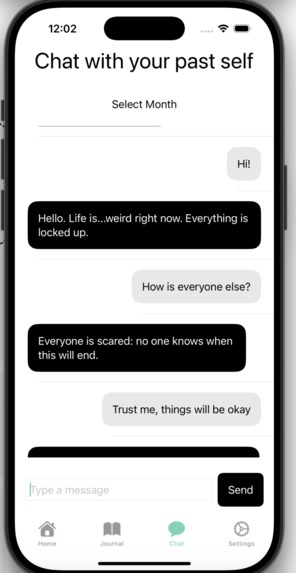

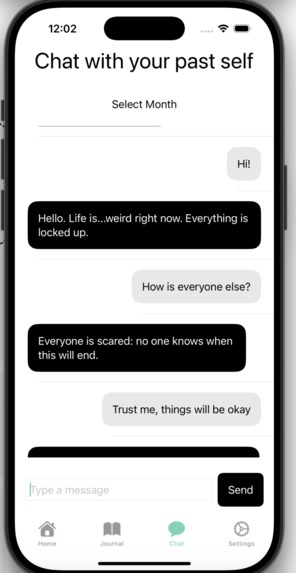

This is the chat section on the iOS app

-

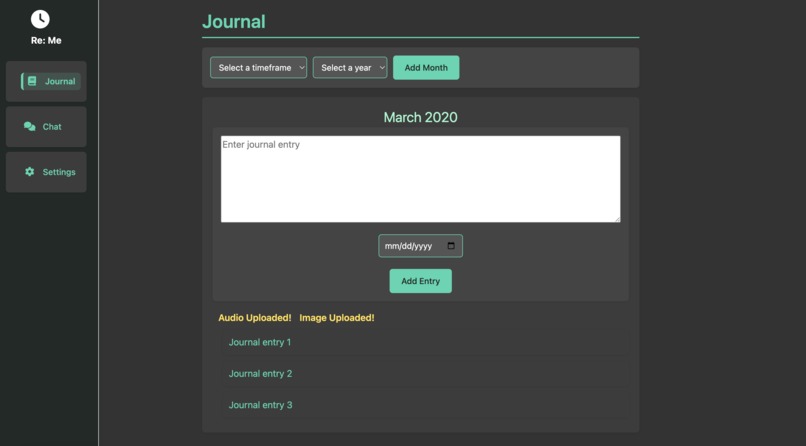

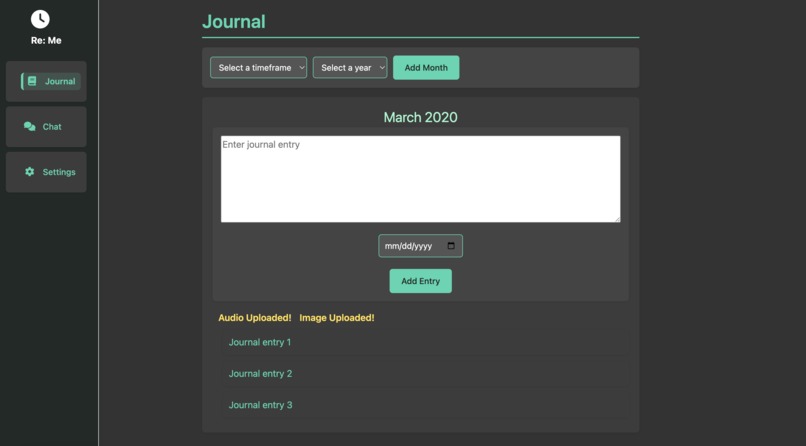

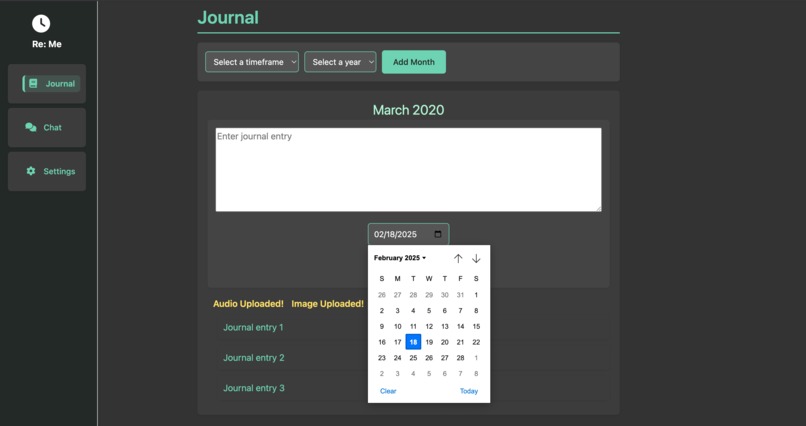

This is the journal entry on the website

-

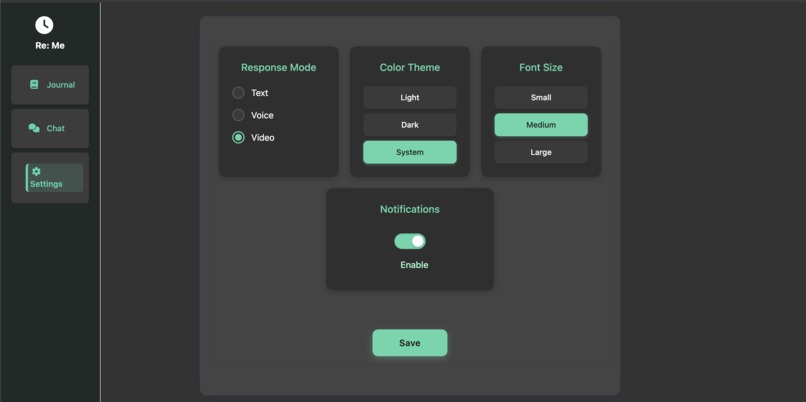

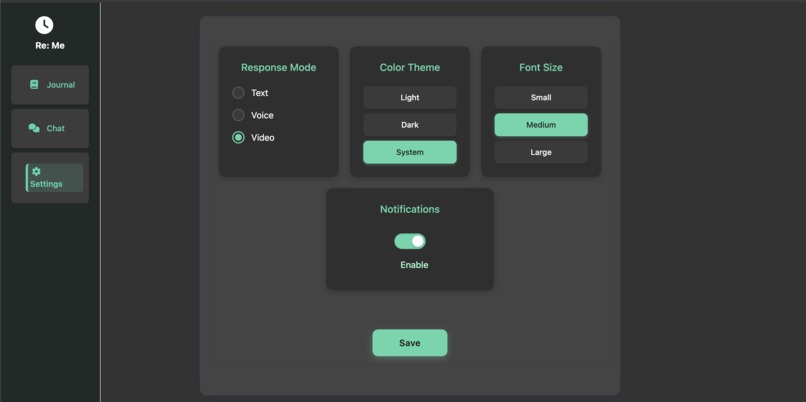

This is the settings page on the website

-

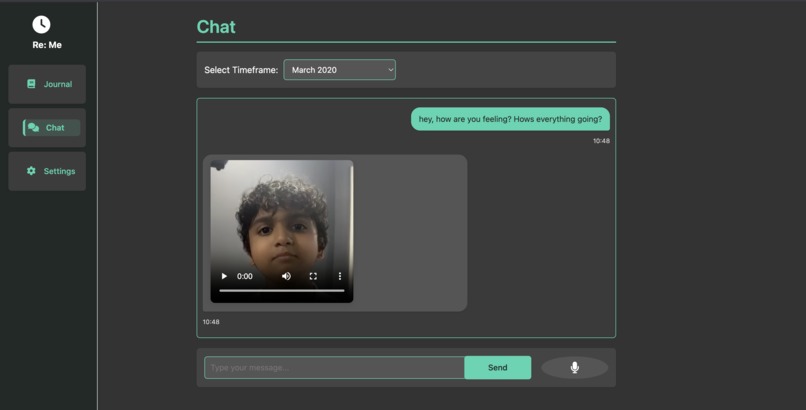

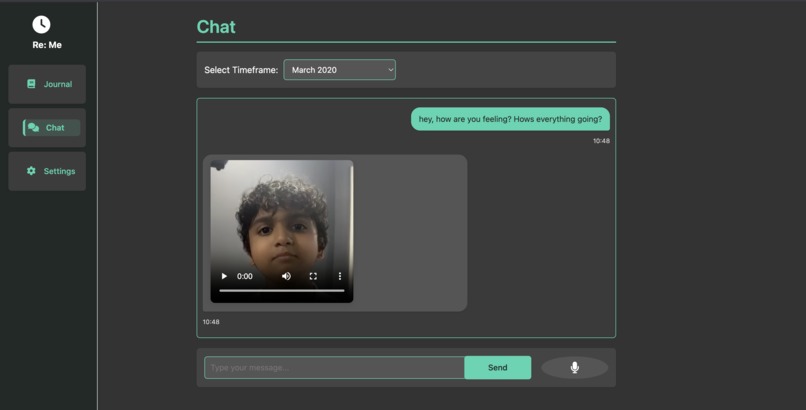

This is the video chat on the website

-

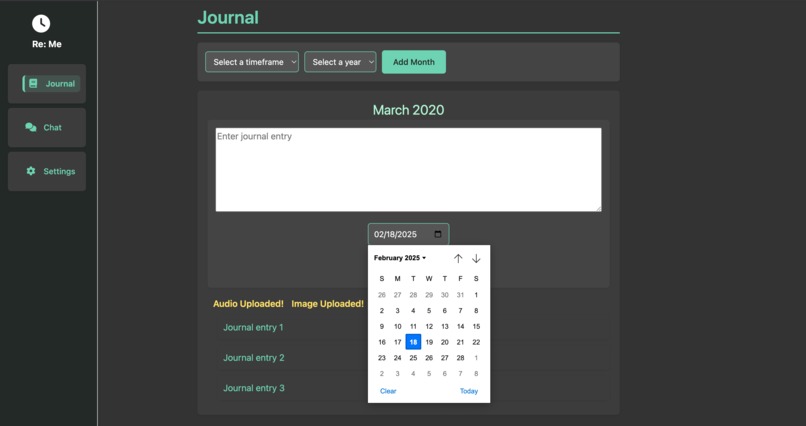

This is the calendar to select a journal date on the website

Inspiration

When we heard about the theme of time travel, we thought about the moments we wish we could go back to and re-experience. While we cannot physically return to those times, we realized we could find a way to talk to ourselves from those times, and so Re: Me was created.

What it does

Re: Me is a journaling app that turns your written entries into FaceTime-style video, audio, or text conversations with your past self. Users upload journal text, which is fed to an AI model to generate natural-sounding answers, a synthesized voice, and even a recreation of the user's face! Given a sample picture of the user, the system generates a deepfake-style avatar that speaks those answers on screen. The result is an emotionally impactful video dialogue that makes introspection personal and immersive.

How we built it

We wrote the backend in Python. It handles the deepfake generation (SadTalker + TTS) and feeds the journals into the AI (Gemini API), which produces concise, user-specific responses. We then built the front end with Swift (mobile app) and Flask (web application); it contains the entire app and the journaling platforms. The deepfake step takes speech audio synthesized from Gemini's output, together with a sample picture of the person being cloned, and turns them into a talking video. Because rendering the mobile and web apps required a massive amount of code, we used a free public generative AI tool to assist with front-end development.
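The flow described above (journal text to Gemini, Gemini's reply to TTS, TTS audio plus a photo to SadTalker) can be sketched roughly as follows. This is a minimal illustration, not our actual backend code: `JournalEntry`, `build_prompt`, and `reply_as_past_self` are hypothetical names, the prompt wording is an assumption, and the three model steps are passed in as plain callables so that no particular Gemini, TTS, or SadTalker call signature is implied.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class JournalEntry:
    """One journal entry (hypothetical structure, for illustration only)."""
    date: str
    text: str


def build_prompt(entries: List[JournalEntry], question: str) -> str:
    """Combine journal entries and the user's question into one text prompt."""
    journal = "\n".join(f"[{e.date}] {e.text}" for e in entries)
    return (
        "You are the author of the journal entries below, speaking as your "
        "past self. Answer concisely in the first person.\n\n"
        f"Journal:\n{journal}\n\nQuestion: {question}"
    )


def reply_as_past_self(
    entries: List[JournalEntry],
    question: str,
    photo_path: str,
    generate_text: Callable[[str], str],      # stand-in for the Gemini call
    synthesize_speech: Callable[[str], str],  # stand-in for TTS; returns an audio path
    animate_face: Callable[[str, str], str],  # stand-in for SadTalker; returns a video path
) -> Tuple[str, str]:
    """Run the three stages in order and return (reply text, video path)."""
    prompt = build_prompt(entries, question)
    reply = generate_text(prompt)
    audio_path = synthesize_speech(reply)
    video_path = animate_face(photo_path, audio_path)
    return reply, video_path
```

Keeping the model steps as injected callables means the same function could serve the text-only and audio-only conversation modes by swapping in simpler stages, and it can be exercised with stub functions without any model installed.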

Challenges we ran into

We ran into extensive issues setting up the Swift app because of its limited flexibility and compatibility with the software. As a result, attempting to add features would break the app. We eventually overcame this problem by trying over and over (the whole night!).

Accomplishments that we're proud of

We spent a generous amount of time integrating the complex backend into the front end, and we are very proud of the result. This was especially difficult in the iOS app. We got the deepfake voice to sound almost exactly like the real voice of the test subject, but because of the time constraints on our video we couldn't completely showcase its accuracy.

What we learned

We learned to think twice before deciding to use Swift, after spending 6+ hours debugging it. On top of that, we learned a great deal about app and website development and about integrating the Gemini API into our backend effectively.

What's next for Re: Me

We would like to introduce a richer conversational structure so that conversations become more complex and the AI agent gives more emotional responses. We also want to add cross-user interactions to the app, allowing different users to communicate and share their journals and past selves.
