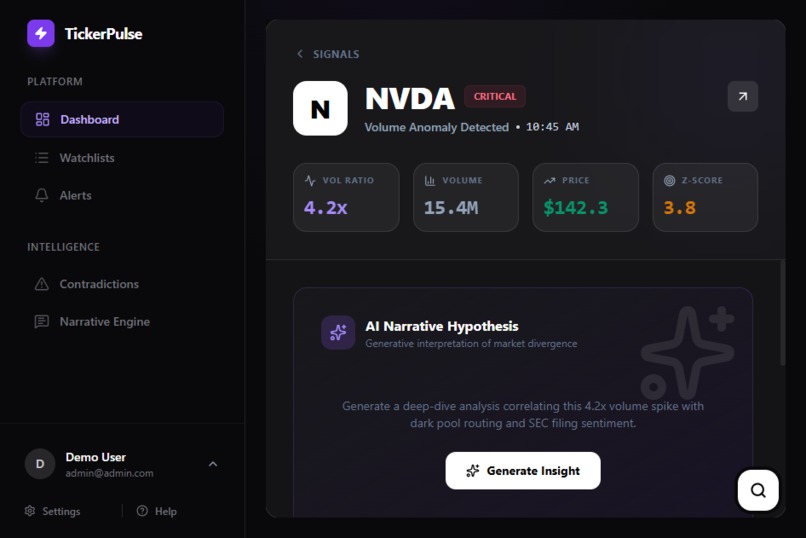

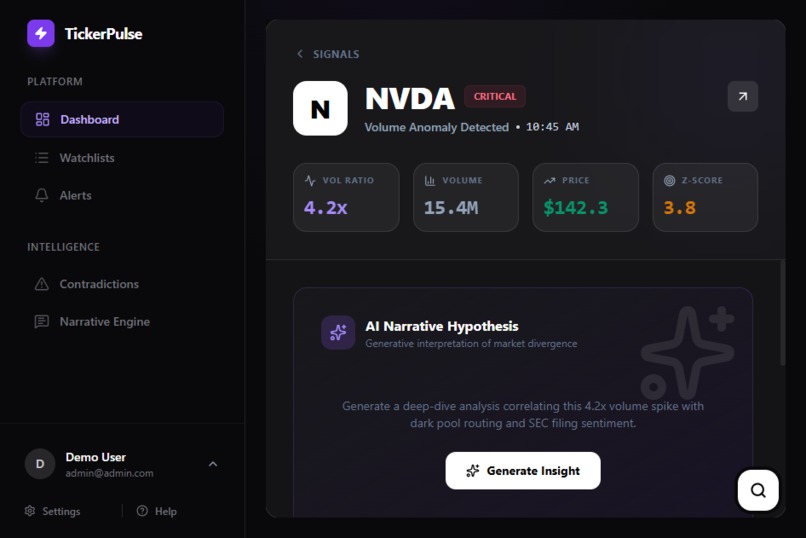

TickerPulse UI: Dashboard showing Gemini 3 analyzing a 4.2x volume spike to generate a real-time Narrative Hypothesis.

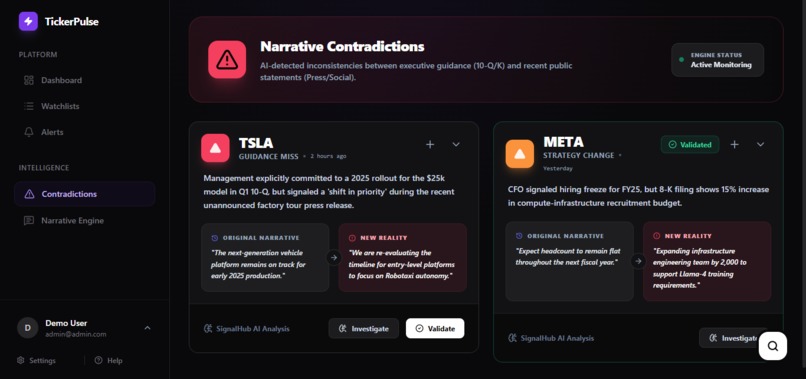

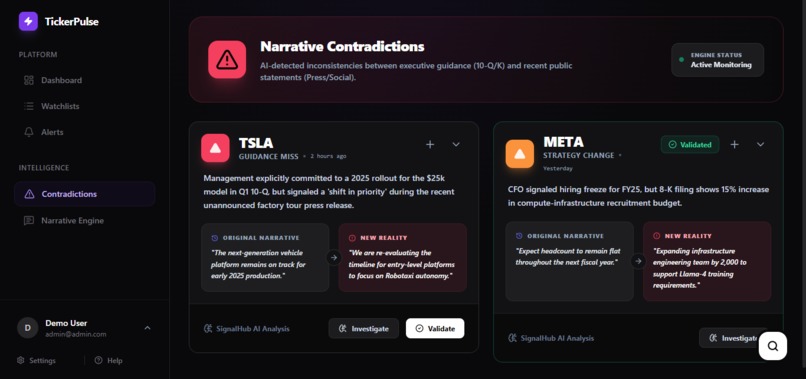

TickerPulse UI: Contradiction page showing AI-detected inconsistencies between executive guidance (10-Q/10-K) and recent public statements.

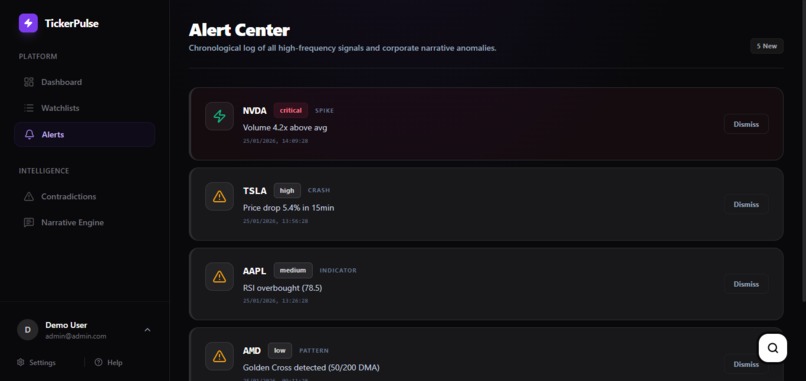

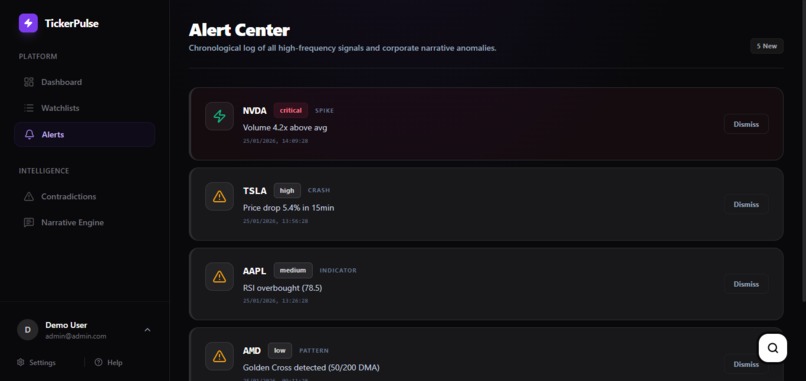

TickerPulse UI: Alerts page showing a chronological log of all high-frequency signals and narrative anomalies based on user preference.

Inspiration

Some years back, I was a university student trying to trade stocks part-time when I discovered a frustrating truth: the financial market is a game of "who sees it first." And retail traders are playing with a handicap.

I'd spend 10+ hours every week doing detective work—cross-referencing SEC filings, scanning news for catalysts, checking volume patterns, trying to understand if a price move was backed by real information or just noise. By the time I pieced everything together, the opportunity was gone.

Meanwhile, institutional traders have $30,000/year Bloomberg terminals and teams of analysts doing this work in real time. I had to stop trading because the time investment was unsustainable.

I kept thinking: what if AI could do the connecting for me? What if I could just ask what's happening with a stock and get an intelligent, cross-referenced answer in seconds?

That's what I built.

What it does

TickerPulseAI is an AI-powered financial analyst that detects market signals others miss. It's not just a dashboard: it's an autonomous system that monitors, correlates, and explains market movements in real time.

Real-world example: In February 2024, a biotech company filed a routine 10-Q. Buried on page 47, they quietly removed language about "accelerated Phase 3 trials." No news headline. No press release. But institutional money started moving. TickerPulseAI would have caught this in seconds.

What We Detect That Others Miss

The key difference: We don't just tell you volume spiked—we tell you WHY the market is moving before the news cycle catches up.

- Volume Spikes: Z-score statistical analysis, not just basic alerts

- Narrative Shifts: Cross-quarter AI audit of SEC filings

- Silent Guidance Removal: Semantic diff detection across documents

- Pre-News Leakage: Divergence detection when volume moves before catalysts

The Natural Intelligence Layer

Users can skip complex dashboards and simply ask questions in plain English. Ask "Why is the volume spiking on $AAPL?" and get an instant forensic report.

TickerPulseAI automatically:

- Checks for statistical volume anomalies

- Scans recent news and social sentiment

- Searches SEC filings for material events

- Verifies if movement is backed by real catalysts

- Synthesizes everything into a clear, actionable answer

Users get analysis, not just data.

How I built it

I built TickerPulseAI solo as a complete analytical system combining statistical detection with AI reasoning.

The Core Architecture

Statistical Detection Layer: Real-time market data is stored in TimescaleDB, and a rolling z-score over trading volume separates likely institutional movement from ordinary market noise. This catches the volume spikes that matter before they make headlines.
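To make the rolling z-score idea concrete, here is a minimal in-memory sketch. It is not the TimescaleDB query the project runs: the function names, the 20-bar window, and the threshold of 3 are illustrative assumptions. Each bar is compared against the mean and standard deviation of the bars before it, so a spike does not inflate its own baseline.

```typescript
// Rolling z-score over a trailing window of volume observations.
// Window length and threshold are illustrative assumptions.

interface VolumeAnomaly {
  index: number;  // position of the bar in the input series
  volume: number; // observed volume for that bar
  zScore: number; // standard deviations above the trailing mean
}

function rollingZScores(volumes: number[], window: number): (number | null)[] {
  return volumes.map((v, i) => {
    if (i < window) return null; // not enough history yet
    const history = volumes.slice(i - window, i); // excludes the current bar
    const mean = history.reduce((a, b) => a + b, 0) / window;
    const variance =
      history.reduce((a, b) => a + (b - mean) ** 2, 0) / window;
    const std = Math.sqrt(variance);
    // A perfectly flat history has no spread, so the z-score is undefined.
    return std === 0 ? null : (v - mean) / std;
  });
}

function detectSpikes(
  volumes: number[],
  window = 20,
  threshold = 3
): VolumeAnomaly[] {
  const anomalies: VolumeAnomaly[] = [];
  rollingZScores(volumes, window).forEach((z, i) => {
    if (z !== null && z >= threshold) {
      anomalies.push({ index: i, volume: volumes[i], zScore: z });
    }
  });
  return anomalies;
}
```

Calling `detectSpikes(volumes, 20, 3)` returns only the bars that sit at least three standard deviations above their trailing average, which is the "3σ+" filter described later in this writeup.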

Two-Stage AI Pipeline: I implemented a cost-efficient system where Gemini Flash screens 400+ daily SEC filings for materiality, passing only high-impact filings to Gemini Pro for deep cross-quarter analysis using the 1M+ token context window. The AI compares current filings against years of historical documents to detect tone shifts, removed guidance, and narrative contradictions.
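The two-stage flow can be sketched as below. The model calls are injected as plain functions so the example stays runnable without any SDK. `Screener`, `DeepAnalyzer`, `loadHistory`, and the `Filing` shape are hypothetical names for illustration and do not reflect the project's actual code or the Gemini API.

```typescript
// Two-stage screening: a cheap screener decides materiality,
// and only material filings reach the expensive deep analysis.

interface Filing {
  accessionNumber: string;
  formType: string;
  text: string;
}

interface ScreenResult {
  material: boolean;
  reason: string;
}

interface DeepAnalysis {
  accessionNumber: string;
  findings: string[];
}

// Stage 1 (assumed to wrap a fast, cheap model such as Flash).
type Screener = (filing: Filing) => Promise<ScreenResult>;
// Stage 2 (assumed to wrap a large-context model such as Pro).
type DeepAnalyzer = (filing: Filing, history: Filing[]) => Promise<DeepAnalysis>;
// Loads earlier filings from the same company for cross-quarter comparison.
type HistoryLoader = (filing: Filing) => Promise<Filing[]>;

async function runTwoStagePipeline(
  filings: Filing[],
  screen: Screener,
  analyze: DeepAnalyzer,
  loadHistory: HistoryLoader
): Promise<DeepAnalysis[]> {
  // Screen every filing concurrently; these calls are cheap.
  const screened = await Promise.all(
    filings.map(async (filing) => ({ filing, result: await screen(filing) }))
  );

  const analyses: DeepAnalysis[] = [];
  // Analyze survivors one at a time to keep expensive calls bounded.
  for (const { filing, result } of screened) {
    if (!result.material) continue;
    const history = await loadHistory(filing);
    analyses.push(await analyze(filing, history));
  }
  return analyses;
}
```

Running the deep stage sequentially is a design choice in this sketch, not something the writeup specifies. A production version would more likely cap concurrency than serialize completely.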

Function Calling Architecture: I defined 13 specialized tools that Gemini intelligently calls based on user questions—market data tools, sentiment analysis, SEC filing retrieval, and comprehensive health checks. Gemini decides which tools to use and in what order, then synthesizes results into coherent analysis.
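A rough sketch of how such tools might be declared and dispatched follows. The types here loosely follow the name, description, and parameter-schema pattern that function-calling APIs use, but they are local illustrative types, not the Gemini SDK's. The tool name `get_volume_zscore` and the `makeVolumeTool` helper are hypothetical.

```typescript
// A small tool registry: declarations are what the model sees,
// handlers are what runs when the model requests a call.

type ParamType = "string" | "number" | "boolean";

interface ToolDeclaration {
  name: string;
  description: string; // tells the model when the tool is appropriate
  parameters: {
    type: "object";
    properties: Record<string, { type: ParamType; description: string }>;
    required: string[];
  };
}

interface Tool {
  declaration: ToolDeclaration;
  handler: (args: Record<string, unknown>) => Promise<unknown>;
}

interface FunctionCall {
  name: string;
  args: Record<string, unknown>;
}

class ToolRegistry {
  private tools = new Map<string, Tool>();

  register(tool: Tool): void {
    this.tools.set(tool.declaration.name, tool);
  }

  // The list that would be sent to the model alongside the user question.
  declarations(): ToolDeclaration[] {
    return Array.from(this.tools.values()).map((t) => t.declaration);
  }

  // Validate a model-issued call against the schema, then run the handler.
  async dispatch(call: FunctionCall): Promise<unknown> {
    const tool = this.tools.get(call.name);
    if (!tool) throw new Error(`Unknown tool: ${call.name}`);
    const { properties, required } = tool.declaration.parameters;
    for (const key of required) {
      if (!(key in call.args)) {
        throw new Error(`Missing argument "${key}" for ${call.name}`);
      }
    }
    for (const key of Object.keys(call.args)) {
      const spec = properties[key];
      if (!spec) throw new Error(`Unexpected argument "${key}" for ${call.name}`);
      if (typeof call.args[key] !== spec.type) {
        throw new Error(`Argument "${key}" must be of type ${spec.type}`);
      }
    }
    return tool.handler(call.args);
  }
}

// Hypothetical example tool; the lookup function is supplied by the caller.
function makeVolumeTool(lookup: (ticker: string) => Promise<number>): Tool {
  return {
    declaration: {
      name: "get_volume_zscore",
      description:
        "Returns the latest volume z-score for a ticker. Use when the user asks why volume is unusual.",
      parameters: {
        type: "object",
        properties: {
          ticker: { type: "string", description: "Ticker symbol, e.g. AAPL" },
        },
        required: ["ticker"],
      },
    },
    handler: async (args) => lookup(args.ticker as string),
  };
}
```

Validating arguments before running a handler gives a clear error to feed back to the model when it calls a tool incorrectly, which is one way to approach the precision problem described under Challenges.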

Real-Time Correlation Engine: Redis + Bull Queue orchestrates parallel searches across news archives, SEC databases, and sentiment data. When a volume spike occurs, the system triggers simultaneous searches and delivers a unified divergence score in under 3 seconds.
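The fan-out itself can be illustrated without a queue. The sketch below is an in-process stand-in for the Redis + Bull setup, not a reproduction of it: it runs one search per source in parallel and applies a time budget to each, so a slow or failing source degrades the answer instead of blocking it. The `Source` names, the `Search` signature, and the 3000 ms default are assumptions.

```typescript
// Parallel fan-out with a per-source time budget.

type Source = "news" | "filings" | "sentiment";

interface SourceResult {
  source: Source;
  ok: boolean;
  hits: number;   // number of matching items found, 0 on failure
  error?: string; // populated when the search failed or timed out
}

// Hypothetical search signature: resolves to a hit count for the ticker.
type Search = (ticker: string) => Promise<number>;

function withTimeout<T>(promise: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(
      () => reject(new Error(`timed out after ${ms} ms`)),
      ms
    );
    promise.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); }
    );
  });
}

async function correlate(
  ticker: string,
  searches: Record<Source, Search>,
  budgetMs = 3000
): Promise<SourceResult[]> {
  const sources = Object.keys(searches) as Source[];
  // All searches start immediately; each one settles independently.
  return Promise.all(
    sources.map(async (source): Promise<SourceResult> => {
      try {
        const hits = await withTimeout(searches[source](ticker), budgetMs);
        return { source, ok: true, hits };
      } catch (e) {
        const error = e instanceof Error ? e.message : String(e);
        return { source, ok: false, hits: 0, error };
      }
    })
  );
}
```

Because every source is wrapped in its own `try`/`catch`, the combined promise never rejects, and the caller always gets one result per source within roughly the budget.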

Tech Stack: Node.js + Fastify, React + Vite, PostgreSQL + TimescaleDB + pgvector, Gemini 3 (Flash + Pro), WebSockets for live delivery.

Challenges I ran into

Real-Time Data Correlation: A volume spike shows up within milliseconds, while the filings and sentiment data needed to explain it sit behind slower database searches. Correlating the two without noticeable lag was technically demanding. My Redis-backed job queue runs the searches across all sources in parallel, which keeps response times under 3 seconds.

Function Calling Precision: The hardest part wasn't building the database. It was designing function signatures so Gemini would know when and how to use each tool. Early versions called the wrong functions or skipped context. I iterated extensively on tool descriptions and parameter schemas to guide its tool selection.

Accomplishments that I'm proud of

Automating the Forensic Analyst Workflow: What used to take me 10+ hours per week in university—manually digging through SEC EDGAR, cross-referencing news, analyzing volume patterns—is now automated in under 3 seconds. I built a system that replicates the work of a high-end research analyst.

The Divergence Detection Engine: By combining statistical z-scores, news/social sentiment, and SEC filing analysis, the system identifies when markets are moving on "whispers" that haven't hit the public domain yet. It catches volume spikes that happen before news breaks—potential information leakage that even professional tools miss.
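One simple way to express "volume moved before news" is sketched below: a spike counts as unexplained when no news item for the same ticker was published in a lookback window ending at the spike. This rule, the 24-hour default, and the type names are assumptions for illustration. The actual engine also weighs sentiment and filing analysis, which this sketch leaves out.

```typescript
// Flag volume spikes that have no preceding news within a lookback window.

interface Spike {
  ticker: string;
  at: Date;       // when the spike was detected
  zScore: number; // strength of the volume anomaly
}

interface NewsItem {
  ticker: string;
  publishedAt: Date;
  headline: string;
}

const DAY_MS = 24 * 60 * 60 * 1000;

function findUnexplainedSpikes(
  spikes: Spike[],
  news: NewsItem[],
  lookbackMs = DAY_MS
): Spike[] {
  return spikes.filter((spike) => {
    const spikeTime = spike.at.getTime();
    // A spike is "explained" if same-ticker news landed at or before it,
    // no earlier than lookbackMs ago. News published afterwards does not count.
    const explained = news.some((item) => {
      const published = item.publishedAt.getTime();
      return (
        item.ticker === spike.ticker &&
        published <= spikeTime &&
        published >= spikeTime - lookbackMs
      );
    });
    return !explained;
  });
}
```

Spikes returned by `findUnexplainedSpikes` are candidates for a closer look, not proof of leakage. News that arrives shortly afterwards is what would turn a candidate into a pre-news divergence.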

Precision Narrative Tracking: The system successfully detects semantic changes across quarters—when companies quietly remove positive guidance, when risk language shifts from confident to hedged, when management tone changes even if the words differ. This level of detail requires reading hundreds of pages per company. The AI does it automatically.

It Actually Works: I tested this on real market data. The system successfully identified volume anomalies with 3σ+ statistical significance, correctly correlated spikes with events, detected narrative changes between filings, and flagged divergences where volume moved before news. It's not just a demo—it works.

What I learned

LLMs as Reasoning Coordinators: Before this project, I thought of LLMs as content creators. Gemini's function calling showed me they can be reasoning coordinators—deciding which data to fetch, in what order, and how to synthesize it. The model doesn't just answer questions. It figures out what questions to ask the database to answer the user's question.

The Power of Large Context Windows: With Gemini 3's 1M+ token window, I can feed entire 100+ page SEC filings, multiple years of statements, and cross-document comparisons in a single pass. This enables pattern detection and contradiction-finding impossible with smaller context windows.

Multi-Model Economics: Using Gemini Pro for everything is too slow and expensive for real-time applications. My Flash → Pro pipeline processes 400+ filings daily while staying cost-efficient: Flash screens out 95% of noise, Pro analyzes only the 5% that matters. This is the key to building economically viable AI systems in production.
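The economics reduce to a short calculation. If every filing gets a cheap screening pass and only a fraction `passRate` also gets the expensive pass, the cost relative to sending everything to the expensive model is `flashCost / proCost + passRate`. The sketch below encodes that. The per-filing costs are placeholders, not actual Gemini pricing.

```typescript
// Relative cost of a screen-then-analyze pipeline versus analyzing everything
// with the expensive model. A result of 0.15 means 15% of the all-Pro cost.

function twoStageCostRatio(
  passRate: number,           // fraction of filings forwarded to stage 2 (0..1)
  flashCostPerFiling: number, // placeholder cost of one screening call
  proCostPerFiling: number    // placeholder cost of one deep-analysis call
): number {
  if (passRate < 0 || passRate > 1) {
    throw new RangeError("passRate must be between 0 and 1");
  }
  if (proCostPerFiling <= 0) {
    throw new RangeError("proCostPerFiling must be positive");
  }
  // (n * flash + n * passRate * pro) / (n * pro) simplifies to the line below.
  return flashCostPerFiling / proCostPerFiling + passRate;
}

// Number of filings that reach the deep-analysis stage per day.
function filingsToDeepStage(dailyFilings: number, passRate: number): number {
  return Math.round(dailyFilings * passRate);
}
```

With the figures from this writeup, `filingsToDeepStage(400, 0.05)` gives 20 filings per day for Pro. If a screening call cost one tenth of a deep call (an assumed ratio), `twoStageCostRatio(0.05, 1, 10)` comes out at roughly 0.15, so the pipeline would cost about 15% of the all-Pro approach.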

Context is King in Finance: A volume spike alone means nothing. Only when statistical anomalies, news sentiment, and SEC filing analysis align should you trigger an alert. This is how you separate "market noise" from "institutional movement."

What's next for TickerPulseAI

Expand Analytical Capabilities: Add sector analysis to detect trends across multiple tickers, pattern matching to find historical scenarios similar to current conditions, and predictive scoring using filing language to forecast earnings misses.

Enhanced Narrative Intelligence: Move from detecting when narratives change to understanding how—automatic extraction of specific metrics, quarter-over-quarter quantitative comparison, and detection of hedging language that signals management uncertainty.

Real-World Validation: Get this into the hands of actual retail traders to validate: Are the signals actionable? Does it save them time? What features matter most?

Why This Matters

Information asymmetry is the biggest barrier for retail traders. Institutional traders have teams, infrastructure, and tools costing hundreds of thousands per year. Retail traders have free charting tools and fragmented information sources.

I'm not trying to replace Bloomberg Terminal. I'm trying to give individual traders a way to ask intelligent questions and get cross-referenced answers without spending hours doing manual research.

This is what AI should be doing—not replacing human judgment, but removing the drudgery so humans can focus on making decisions instead of gathering data.

Built With

- bull

- fastify

- gemini

- node.js

- postgresql

- react

- redis

- supabase

- timescaledb

- typescript

- vercel
