🦸‍♂️ ThisComicIsAlive - The Immersive AI Comic Reader

Inspiration

I wanted to solve the "passive observer" trap of digital comic reading. We've been reading comics on screens the same way for 20 years: static JPEGs that feel lifeless compared to the action they depict. Inspired by the moving newspapers in Harry Potter, I built this app to turn static pages into a living, breathing world. I wanted to give readers a jolt of excitement as characters move and speak, and to let them talk to an AI companion about the plot twists as they happen.

What it does

ThisComicIsAlive is an interactive web application where:

- Users Upload Comics: You upload a comic page or PDF, and the app detects the panel layout using computer vision.

- Living Panels: Static panels become looping 16-frame animations. Characters breathe, blink, and move slightly while the original art style is preserved.

- Immersive Audio Drama: The app analyzes each scene and generates a multi-speaker audio drama. A narrator describes the action, and characters speak their speech-bubble dialogue in distinct voices chosen by gender and age.

- Live Companion: You are never reading alone. A "Live Companion" AI watches the comic with you. You can ask it questions through your microphone ("Who is that guy?", "Why is he angry?"), and it answers in real time with context-aware responses.

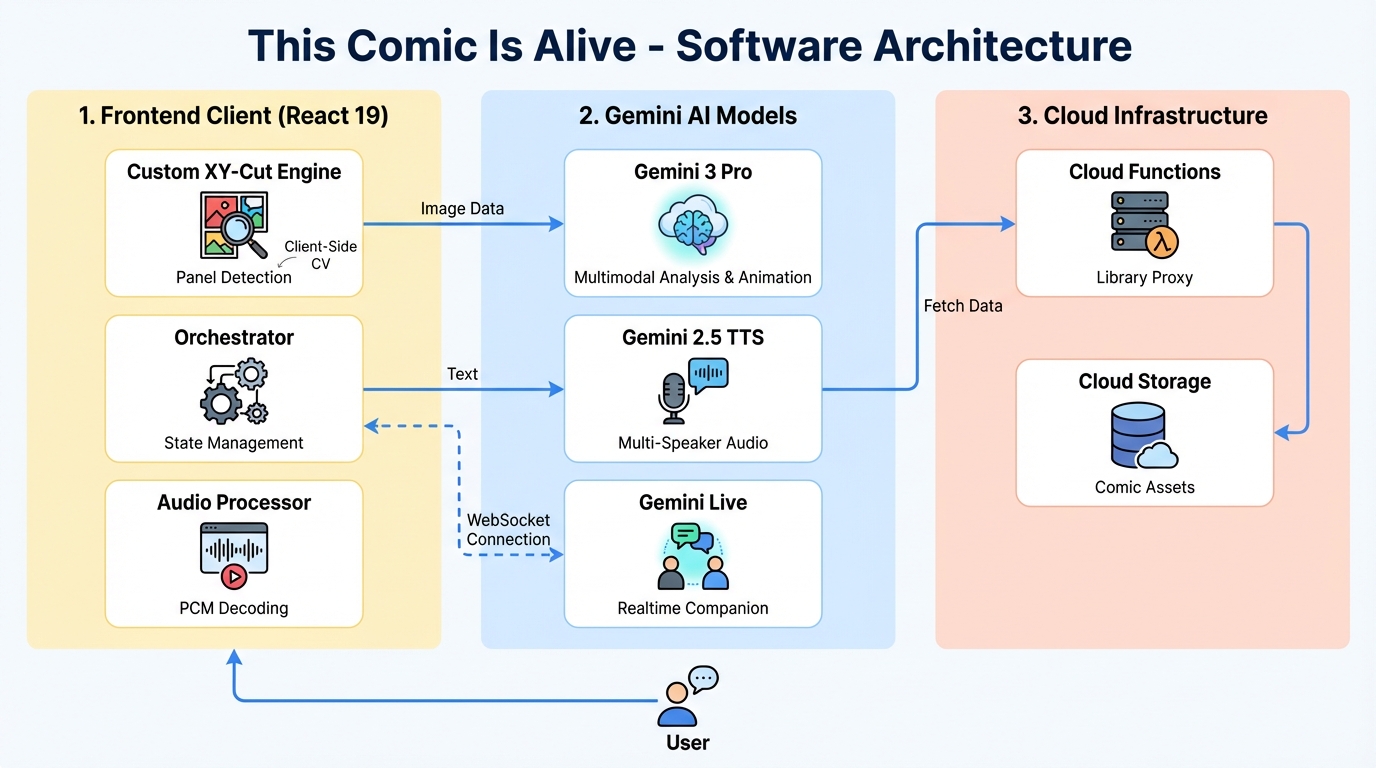

How I built it

I built this application using Google AI Studio and the latest Google AI capabilities, with a focus on interactivity.

🛠️ Google AI Studio: The Prototyping Lab

Google AI Studio was the secret weapon that made the complex "Living Panel" feature possible. It served as my command center for:

- Prompt Engineering the Grid: Generating consistent 4x4 animation grids is notoriously difficult. I used AI Studio's visual interface to iteratively refine the `<spatial_anchors>` and `<critical_rule>` system instructions. By dragging and dropping comic panels directly into the UI, I tweaked the prompt until `gemini-3-pro` stopped "hallucinating" new characters and started acting like a "Technical Draftsman."

- Model Selection: I used the "Compare" feature to test `gemini-3-flash` vs. `gemini-3-pro` side by side. This helped me decide to assign "The Director" role (analysis) to Flash for speed, while keeping Pro for the high-fidelity visuals.

- Live API Testing: Before writing a single line of WebSocket code, I used the AI Studio Live interface to test voice interactions, making sure the model could handle the latency of real-time conversation.

The AI Pipeline (Gemini 3 & 2.5)

I leveraged a specific combination of Gemini models to create a synchronized multimedia experience:

- `gemini-3-pro-image-preview` (The Animator):

  - Frame Generation: I used the high-fidelity Gemini 3 Pro model to generate 4x4 animation grids from single static images. By enforcing strict spatial anchors in the prompt, I kept character movement consistent without the model inventing new characters.

  - Magic Edit: This model also powers the "Edit" feature, allowing users to modify specific panels (e.g., "Make it rain") using inpainting techniques.

- `gemini-3-pro-preview` (The Director):

  - Scene Analysis: I used Flash for its speed to analyze panels, extract dialogue, identify speaker gender, and determine the mood. It returns structured JSON that drives the audio engine.

- `gemini-2.5-flash-preview-tts` (The Voice Cast):

  - Character Voices: Based on the gender data from the Flash model, I dynamically assign specific voice IDs (Fenrir for men, Kore for women, Puck/Zephyr for kids) to generate a full audio performance.

- `gemini-2.5-flash-native-audio-preview` (The Companion):

  - Real-Time Interaction: For the "Live Chat" feature, I used the native audio capability of Gemini Live. It streams audio bidirectionally via WebSocket, allowing the user to have a natural conversation with the app about the visual content.

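As an illustration of how a 4x4 grid from the Animator could become a looping 16-frame animation, here is a minimal TypeScript sketch. It assumes the generated sheet is divided into 16 equal cells in row-major order; `gridFrameRects`, `frameAtTime`, and the 12 fps default are hypothetical choices, not taken from the app.

```typescript
// Hypothetical helpers: split a 4x4 animation sheet into 16 frame rectangles
// (row-major order) and pick which frame to show during looping playback.

interface Rect {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Assumes the sheet is divided into `cols` x `rows` equal cells.
// Math.floor keeps rectangles on whole pixels; leftover pixels at the
// right or bottom edge are ignored.
function gridFrameRects(sheetWidth: number, sheetHeight: number, cols = 4, rows = 4): Rect[] {
  const cellW = Math.floor(sheetWidth / cols);
  const cellH = Math.floor(sheetHeight / rows);
  const rects: Rect[] = [];
  for (let row = 0; row < rows; row++) {
    for (let col = 0; col < cols; col++) {
      rects.push({ x: col * cellW, y: row * cellH, width: cellW, height: cellH });
    }
  }
  return rects;
}

// Maps elapsed playback time to a frame index so the frames loop forever.
function frameAtTime(elapsedMs: number, frameCount = 16, fps = 12): number {
  const frame = Math.floor((elapsedMs / 1000) * fps);
  return ((frame % frameCount) + frameCount) % frameCount;
}
```

In a canvas render loop, each rectangle would supply the source arguments of the nine-argument `CanvasRenderingContext2D.drawImage(image, sx, sy, sWidth, sHeight, dx, dy, dWidth, dHeight)` form, so only the current cell is drawn into the panel's slot.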
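The hand-off from the Director's JSON to the Voice Cast can be sketched as a small lookup. The `SpeakerInfo` shape and its `gender`/`ageGroup` fields are a hypothetical schema, and alternating Puck and Zephyr among child characters is an assumed reading of "Puck/Zephyr for kids"; only the four voice names come from the write-up.

```typescript
// Hypothetical shape of one speaker entry in the Director's JSON output.
type Gender = "male" | "female" | "unknown";
type AgeGroup = "child" | "adult";

interface SpeakerInfo {
  name: string;
  gender: Gender;
  ageGroup: AgeGroup;
}

const CHILD_VOICES = ["Puck", "Zephyr"] as const;

// Assigns one TTS voice per speaker name: children alternate between Puck
// and Zephyr, adult men get Fenrir, adult women get Kore, and anyone else
// gets `fallback`. A repeated name keeps its first voice for consistency.
function assignVoices(speakers: SpeakerInfo[], fallback: string): Record<string, string> {
  const voices: Record<string, string> = {};
  let childCount = 0;
  for (const s of speakers) {
    if (Object.prototype.hasOwnProperty.call(voices, s.name)) continue;
    let voice: string;
    if (s.ageGroup === "child") {
      voice = CHILD_VOICES[childCount % CHILD_VOICES.length];
      childCount++;
    } else if (s.gender === "male") {
      voice = "Fenrir";
    } else if (s.gender === "female") {
      voice = "Kore";
    } else {
      voice = fallback;
    }
    voices[s.name] = voice;
  }
  return voices;
}
```

Keeping the first assignment for a repeated name means a character sounds the same across panels, which matters more in an audio drama than choosing a voice line by line.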
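Streaming microphone audio to the Live API involves a format conversion: Web Audio delivers `Float32Array` samples nominally in [-1, 1], while the Live API documents its audio input as raw 16-bit little-endian PCM at 16 kHz. A minimal sketch of that conversion, assuming the samples have already been resampled to 16 kHz; `floatTo16BitPcm` is a hypothetical helper name.

```typescript
// Converts Web Audio Float32 samples (nominally in [-1, 1]) into 16-bit
// little-endian PCM bytes. Out-of-range values are clamped first.
function floatTo16BitPcm(samples: Float32Array): Uint8Array {
  const buffer = new ArrayBuffer(samples.length * 2);
  const view = new DataView(buffer);
  for (let i = 0; i < samples.length; i++) {
    const s = Math.max(-1, Math.min(1, samples[i]));
    // Scale asymmetrically so -1 maps to -32768 and +1 maps to 32767.
    const value = Math.round(s < 0 ? s * 0x8000 : s * 0x7fff);
    view.setInt16(i * 2, value, true); // true = little-endian
  }
  return new Uint8Array(buffer);
}
```

For the JSON-based WebSocket messages, these bytes are typically base64-encoded before sending.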

The Frontend & Vision

- Frontend: React 19 with TypeScript and Tailwind CSS.

- Computer Vision: Instead of relying solely on AI for layout, I wrote a custom Recursive XY-Cut algorithm using the HTML5 Canvas API. It scans pixel-density profiles to detect panel boundaries in milliseconds and is highly reliable on grid layouts.

- Cloud Storage: Integrated a Google Cloud Function to handle library synchronization, allowing users to persist their comic collections.
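The Recursive XY-Cut idea can be illustrated on a binary "ink" mask: count ink per row and per column, treat runs of empty rows or columns as gutters, split there, and recurse until a region has no gutter left. This is a minimal sketch, not the app's code; it assumes the page has already been thresholded into a row-major `Uint8Array` (1 = ink), and `xyCut`, `Box`, and the `minGap` default are hypothetical.

```typescript
// Half-open box: columns [x0, x1), rows [y0, y1).
interface Box { x0: number; y0: number; x1: number; y1: number; }

// Ink counts per row and per column inside `b`. `mask` is row-major, 1 = ink.
function profiles(mask: Uint8Array, width: number, b: Box): { rows: number[]; cols: number[] } {
  const rows: number[] = [];
  const cols: number[] = [];
  for (let y = b.y0; y < b.y1; y++) rows.push(0);
  for (let x = b.x0; x < b.x1; x++) cols.push(0);
  for (let y = b.y0; y < b.y1; y++) {
    for (let x = b.x0; x < b.x1; x++) {
      if (mask[y * width + x] === 1) {
        rows[y - b.y0]++;
        cols[x - b.x0]++;
      }
    }
  }
  return { rows, cols };
}

// First and last non-empty index, or null if the profile is all zeros.
function inkExtent(profile: number[]): [number, number] | null {
  let first = -1;
  let last = -1;
  for (let i = 0; i < profile.length; i++) {
    if (profile[i] > 0) {
      if (first < 0) first = i;
      last = i;
    }
  }
  return first < 0 ? null : [first, last];
}

// Splits a profile into [start, end) content runs separated by >= minGap empty entries.
function segments(profile: number[], minGap: number): Array<[number, number]> {
  const result: Array<[number, number]> = [];
  let start = 0;
  let zeroRun = 0;
  for (let i = 0; i < profile.length; i++) {
    if (profile[i] === 0) {
      zeroRun++;
      continue;
    }
    if (zeroRun >= minGap) {
      if (i - zeroRun > start) result.push([start, i - zeroRun]);
      start = i;
    }
    zeroRun = 0;
  }
  result.push([start, profile.length - zeroRun]);
  return result;
}

// Trim to the ink, cut along empty rows if possible, else along empty
// columns, and recurse; a region with no gutter is reported as one panel.
function xyCut(mask: Uint8Array, width: number, box: Box, minGap = 5): Box[] {
  const p = profiles(mask, width, box);
  const r = inkExtent(p.rows);
  const c = inkExtent(p.cols);
  if (r === null || c === null) return []; // blank region
  const t: Box = { x0: box.x0 + c[0], y0: box.y0 + r[0], x1: box.x0 + c[1] + 1, y1: box.y0 + r[1] + 1 };
  // Trimmed rows/columns hold no ink, so slicing equals recomputing for `t`.
  const rowSegs = segments(p.rows.slice(r[0], r[1] + 1), minGap);
  const colSegs = segments(p.cols.slice(c[0], c[1] + 1), minGap);
  const out: Box[] = [];
  if (rowSegs.length > 1) {
    for (const [a, b] of rowSegs) {
      out.push(...xyCut(mask, width, { x0: t.x0, y0: t.y0 + a, x1: t.x1, y1: t.y0 + b }, minGap));
    }
    return out;
  }
  if (colSegs.length > 1) {
    for (const [a, b] of colSegs) {
      out.push(...xyCut(mask, width, { x0: t.x0 + a, y0: t.y0, x1: t.x0 + b, y1: t.y1 }, minGap));
    }
    return out;
  }
  return [t];
}
```

On a real page, the mask could be built from the RGBA bytes of `ctx.getImageData(0, 0, w, h).data` by marking pixels darker than some threshold as ink. Because this sketch only treats completely empty rows or columns as gutters, noisy scans would need a small ink tolerance in place of the `=== 0` test.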

Challenges I ran into

Rate Limits & Concurrency: Running high-resolution image generation (animation), text analysis, and TTS generation simultaneously for every panel constantly triggered HTTP 429 Too Many Requests errors.

- Solution: I implemented a "Simultaneous Execution" strategy with robust error handling. The app fires all requests at once for maximum speed, and each request is wrapped in an exponential-backoff retry that logs exactly which model is being throttled.
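A sketch of that retry layer, under stated assumptions: a throttled call rejects with an error object whose `status` is 429 (the actual error shape depends on the SDK), and the backoff uses "full jitter" capped at 30 seconds. `withRetry`, `backoffDelayMs`, and `runPanelJobs` are hypothetical names, and the three job functions stand in for the app's real animation, analysis, and TTS calls.

```typescript
// Assumption: a rate-limited call rejects with an object carrying status 429.
function isRateLimited(err: unknown): boolean {
  return typeof err === "object" && err !== null && (err as { status?: unknown }).status === 429;
}

// Exponential backoff with "full jitter": a random wait in
// [0, min(maxMs, baseMs * 2^attempt)) milliseconds.
function backoffDelayMs(attempt: number, baseMs = 1000, maxMs = 30000, random: () => number = Math.random): number {
  const ceiling = Math.min(maxMs, baseMs * Math.pow(2, attempt));
  return Math.floor(random() * ceiling);
}

function sleep(ms: number): Promise<void> {
  return new Promise((resolve) => setTimeout(() => resolve(), ms));
}

// Runs `task`, retrying only on 429s, and logs which model (label) was throttled.
async function withRetry<T>(label: string, task: () => Promise<T>, maxAttempts = 5): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await task();
    } catch (err) {
      if (!isRateLimited(err) || attempt + 1 >= maxAttempts) throw err;
      const delay = backoffDelayMs(attempt);
      console.warn(`[${label}] 429 Too Many Requests, retry ${attempt + 1} in ${delay} ms`);
      await sleep(delay);
    }
  }
}

// Fires a panel's three jobs at once; each one retries independently.
function runPanelJobs<A, B, C>(animate: () => Promise<A>, analyze: () => Promise<B>, speak: () => Promise<C>) {
  return Promise.all([
    withRetry("animator", animate),
    withRetry("director", analyze),
    withRetry("voice-cast", speak),
  ]);
}
```

The jitter spreads out retries from the many parallel requests so they do not all hit the rate limit again at the same moment.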

Style Consistency: Early animation tests looked like 3D Pixar renders, losing the comic's hand-drawn charm.

- Solution: I refined the system prompts to enforce a "2D Comic Style" constraint and added a custom SVG turbulence filter (`#living-comic-filter`) over the canvas to mimic the "boil" of hand-drawn animation lines.
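One way to build such a "boil" filter is an `feTurbulence` noise source feeding an `feDisplacementMap`, with the noise `seed` changed a few times per second. This is a sketch under assumptions: only the `living-comic-filter` id comes from the write-up, while `baseFrequency`, `scale`, the seed cycle, and the helper names `boilFilterMarkup` and `boilSeedAt` are hypothetical.

```typescript
// Builds SVG markup for a hypothetical line-boil filter: fractal noise
// (feTurbulence) displaces the artwork (feDisplacementMap) by a few pixels.
// A different `seed` gives a different noise pattern, so cycling it makes lines wobble.
function boilFilterMarkup(seed: number, scale = 2, baseFrequency = 0.02): string {
  return [
    '<svg xmlns="http://www.w3.org/2000/svg" width="0" height="0" style="position:absolute">',
    '  <filter id="living-comic-filter">',
    `    <feTurbulence type="fractalNoise" baseFrequency="${baseFrequency}" numOctaves="2" seed="${seed}" result="noise"/>`,
    `    <feDisplacementMap in="SourceGraphic" in2="noise" scale="${scale}" xChannelSelector="R" yChannelSelector="G"/>`,
    "  </filter>",
    "</svg>",
  ].join("\n");
}

// Picks the seed for the current moment: a small fixed cycle of seeds,
// advanced `changesPerSecond` times per second, so the wobble repeats.
function boilSeedAt(elapsedMs: number, changesPerSecond = 8, cycle = 3): number {
  return Math.floor((elapsedMs / 1000) * changesPerSecond) % cycle;
}
```

In the browser, the canvas would reference the filter with the CSS `filter: url(#living-comic-filter)`, and a `requestAnimationFrame` loop could update the `feTurbulence` element's `seed` attribute via `setAttribute`.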

Accomplishments that I'm proud of

- The "Zero-Latency" Feel: By parallelizing the Audio and Visual pipelines, the app starts playing the narration almost instantly while the heavy animation frames generate in the background. It feels magical.

- Mathematical Precision: My custom XY-Cut algorithm for panel detection works surprisingly well. It doesn't hallucinate boxes the way some object-detection (OD) models do; it actually "sees" the gutters.

- Seamless Multimodality: The way the Live Companion "sees" the image context I send it and responds to voice queries creates a truly shared reading experience.

What I learned

I learned that Gemini 3 Pro is exceptionally good at following strict geometric instructions (like "draw a 4x4 grid"), something I could not get reliably from previous models. I also learned the importance of AudioContext management in the browser: handling multiple TTS streams alongside live microphone input required careful state management to avoid feedback loops.
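A common Web Audio pattern for keeping several TTS clips from overlapping is to schedule each clip's `AudioBufferSourceNode.start(when)` at a running "next start time" on the `AudioContext` clock. The sketch below isolates that bookkeeping as a pure class so it can be tested outside the browser; `ClipScheduler` is a hypothetical name, and gating the microphone while clips play is one assumed way to avoid feedback, not necessarily the app's approach.

```typescript
// Tracks when the next clip may start on the AudioContext clock (seconds)
// and whether scheduled speech is still playing (used to gate the mic).
class ClipScheduler {
  private nextStartTime = 0;

  // Returns the `when` argument for AudioBufferSourceNode.start():
  // now if the queue has drained, otherwise right after the last clip.
  schedule(currentTime: number, durationSec: number): number {
    const start = Math.max(currentTime, this.nextStartTime);
    this.nextStartTime = start + durationSec;
    return start;
  }

  // True while scheduled audio is still playing; a caller could skip sending
  // microphone frames during this window so the app does not hear itself.
  isPlaying(currentTime: number): boolean {
    return currentTime < this.nextStartTime;
  }

  // Forgets the queue position, e.g. after the caller stops all sources
  // because the user interrupted.
  reset(): void {
    this.nextStartTime = 0;
  }
}
```

In the browser this would be used as `source.start(scheduler.schedule(ctx.currentTime, buffer.duration))`, with `isPlaying(ctx.currentTime)` checked before forwarding each microphone chunk.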

What's next for ThisComicIsAlive

- Video Generation (Veo): Integrating Google Veo to turn key action panels into full 5-second video clips.

- Multi-User Sessions: Allowing two friends to read the same comic in different locations with a shared AI companion mediating the discussion.

Built With

- gemini3apis

- google-ai-studios
