Background & Inspiration

In 2016, 3,450 people in the US alone were killed by distracted drivers. In 2015, 3,477 people were killed and 391,000 were injured[1]. If you had a say in the matter, what would you hope the number of casualties to be this year? 1,000? 500? 2,000? If I said that in 2018 there would be only 3 deaths due to distracted driving, what would you say? That’s great? That it sounds impossible? What if those 3 people were your family members? When it’s personal, the only acceptable number is 0. For me, this is personal. Even if the number was 1, that 1 was my father. When I was just 7 years old, my father passed away in a car accident caused by a distracted driver. Since then, I have aspired to use my experience in technology and computer science to create something so that no one has to go through what my family went through.

What It Does

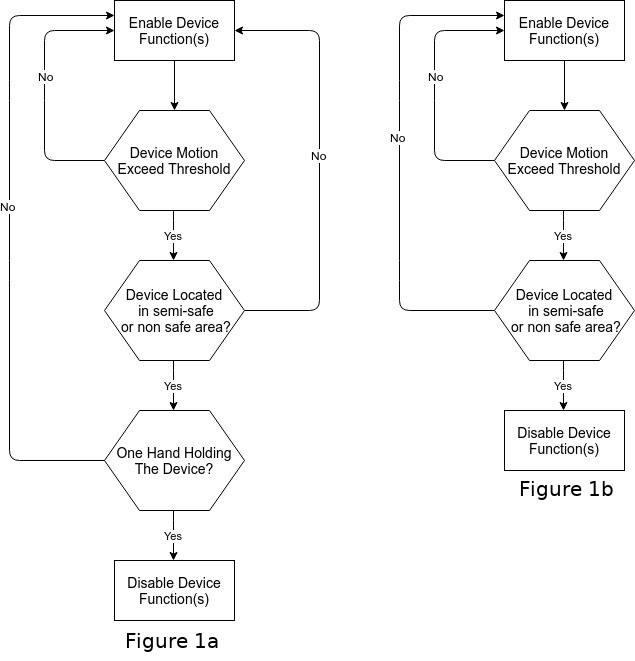

The technology I have invented prevents people from using specific phone applications, such as Messages, Facebook, and Instagram, while driving. I have modified an Android launcher to use the GPS and the rear camera of the phone, plus an additional capacitive sensor installed on the back of the phone. The GPS is used to determine whether the user is in a moving vehicle. Once the GPS establishes that the user is in a moving vehicle, the rear camera is used to determine where the user is sitting inside the car (driver, passenger, or backseat) by using machine-learning algorithms, and the capacitive sensor is used to determine how many hands the user has on the back of the phone (Figure 1a). If the capacitive sensor is not available, the Android launcher relies exclusively on the GPS and rear camera (Figure 1b).
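The GPS check can be sketched as a speed estimate between two consecutive fixes. This is a minimal illustration, not the launcher's actual code: the 15 km/h threshold, the `(lat, lon, unix_seconds)` fix format, and both function names are assumptions, since the write-up does not say what speed counts as "moving".

```python
import math

# Hypothetical threshold: the write-up does not state what speed counts as "moving".
MOVING_SPEED_KMH = 15.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    r = 6_371_000.0  # mean Earth radius in metres
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def is_in_moving_vehicle(fix_a, fix_b, threshold_kmh=MOVING_SPEED_KMH):
    """Each fix is an assumed (lat, lon, unix_seconds) tuple; fix_b is the later one."""
    dt = fix_b[2] - fix_a[2]
    if dt <= 0:
        return False  # cannot estimate speed without elapsed time
    speed_kmh = haversine_m(fix_a[0], fix_a[1], fix_b[0], fix_b[1]) / dt * 3.6
    return speed_kmh >= threshold_kmh
```

Only when this check passes would the camera and capacitive sensor need to be consulted, which keeps the more expensive sensors idle while the phone is stationary.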

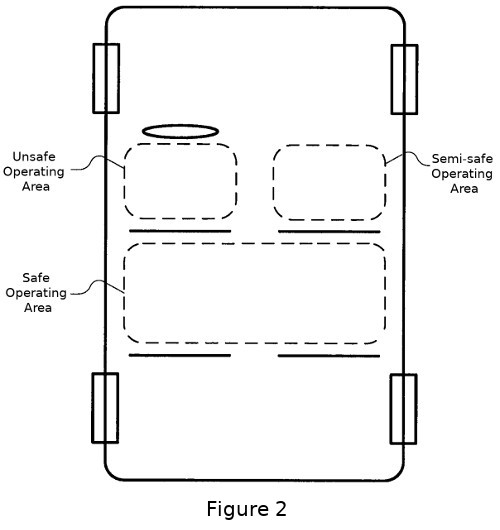

When all three sensors are in play, the system is able to determine in which of the three areas of the car the user is located, as depicted in Figure 2. If the user is located in the Safe Operating Area, no additional restrictions are placed on phone use. If the user is located in the front of the car, the system requires the user to have both hands on the phone in order for all applications to work. The system requires two hands because it is assumed that a driver would have one hand on the phone and one hand on the steering wheel. To distinguish the Semi-Safe Operating Area from the Unsafe Operating Area, the capacitive sensor detects the number of fingers on the back of the phone. If it concludes that the user has both hands on the phone, the user is assumed not to be the driver and is placed in the Semi-Safe Operating Area. If the user does not meet the hand requirement, they are assumed to be in the Unsafe Operating Area: the Android launcher will not open any of the aforementioned distracting applications, and it displays a warning message and keeps those applications unusable until the sensor criteria are met.
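The three-area decision described above can be sketched as a small function. This is an illustrative sketch only: the names `Zone`, `classify_zone`, and `may_open` are hypothetical, and it assumes the Safe Operating Area corresponds to any seat that is not in the front of the car, which the text implies but does not state outright.

```python
from enum import Enum


class Zone(Enum):
    SAFE = "Safe Operating Area"
    SEMI_SAFE = "Semi-Safe Operating Area"
    UNSAFE = "Unsafe Operating Area"


def classify_zone(in_front_of_car, hands_on_phone):
    """Map the camera result and the capacitive hand count to one of the three areas."""
    if not in_front_of_car:
        # Assumption: a user outside the front row is in the Safe Operating Area.
        return Zone.SAFE
    if hands_on_phone >= 2:
        # Two hands on the phone: assumed not to be the driver.
        return Zone.SEMI_SAFE
    return Zone.UNSAFE


def may_open(app, blocked_apps, zone):
    """Only blocked (distracting) apps are refused, and only in the Unsafe area."""
    return zone is not Zone.UNSAFE or app not in blocked_apps
```

For example, `may_open("Messages", {"Messages"}, classify_zone(True, 1))` is refused, while the same call with two hands on the phone is allowed.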

How It's Built

To solve the problem described above, I modified an Android launcher to use three sensors (capacitive matrix, rear phone camera, and GPS) to determine in which of the three areas of the car the user is located. The launcher I modified, KISS, is an open-source Android launcher.

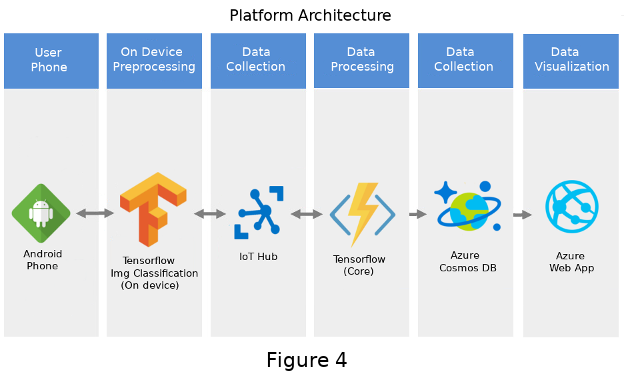

Figure 4 depicts the workflow of the application. Once the Android launcher is instantiated, it automatically takes a picture every 10 seconds using the rear camera, without user interaction. The images are forwarded to the local TensorFlow model installed on the phone, which classifies them into a set of pre-trained categories. If the classification confidence for an image is below 80%, or the system has taken more than 10 pictures that all scored above 80%, the pictures are forwarded to the Azure IoT Hub. A set of three serverless functions then classifies the images and sends the answer back to the end user.
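One way to read the forwarding rule is sketched below, treating the per-image "accuracy" as the classifier's confidence score. The class name `CloudEscalation` is hypothetical, and resetting the streak after each forward is an assumption; the text gives only the 80% threshold and the 10-picture count.

```python
CONFIDENCE_THRESHOLD = 0.80  # from the text: 80%
MAX_CONFIDENT_STREAK = 10    # from the text: "more than 10 pictures"


class CloudEscalation:
    """Decides, picture by picture, whether to forward to the cloud classifier."""

    def __init__(self):
        self.confident_streak = 0

    def should_forward(self, confidence):
        if confidence < CONFIDENCE_THRESHOLD:
            # Low confidence: ask the cloud model right away.
            self.confident_streak = 0
            return True
        self.confident_streak += 1
        if self.confident_streak > MAX_CONFIDENT_STREAK:
            # More than 10 confident pictures in a row: forward for a periodic check.
            # Assumption: the streak restarts after forwarding.
            self.confident_streak = 0
            return True
        return False
```

With a picture every 10 seconds, this reading sends a confident phone to the cloud roughly once every 110 seconds, and an unsure one on every low-confidence picture.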

The first serverless function parses the incoming data from the user’s phone and converts the data URI to a JPEG image. It then puts the image path into a queue, which triggers the second function. The second serverless function runs the TensorFlow model (Inception V3) on the image stored by the first function. The results provide a more accurate location of the user in the car and are stored in another queue, which triggers the third and last function. The third serverless function stores the location results in MongoDB, which is then queried by the Azure Web App to display live statistics. In addition, the results are sent back to the user. Once the phone receives the results from the cloud, it compares both TensorFlow results: the ones from the local model and the ones from the cloud. If there are any discrepancies, the cloud result wins, since the cloud TensorFlow model has a higher accuracy than the one on the phone (table below).
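The data-URI-to-JPEG step of the first function can be sketched with the Python standard library alone. This is not the deployed function's code: the function name is hypothetical, and it assumes the phone sends a base64-encoded `data:image/jpeg` URI.

```python
import base64


def data_uri_to_jpeg_bytes(data_uri):
    """Decode a 'data:image/jpeg;base64,...' URI into raw JPEG bytes."""
    header, sep, payload = data_uri.partition(",")
    if not sep or not header.startswith("data:image/jpeg") or not header.endswith(";base64"):
        raise ValueError("expected a base64-encoded image/jpeg data URI")
    # validate=True rejects characters outside the base64 alphabet instead of skipping them.
    return base64.b64decode(payload, validate=True)
```

The returned bytes would then be written to storage, and the resulting path placed on the queue that triggers the second function.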

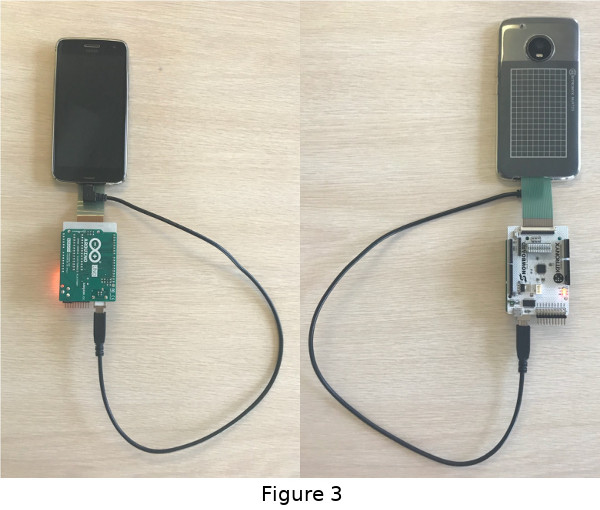

Once the system has determined the physical location of the user inside the car, the capacitive matrix reports the number of fingers on the back of the phone, and the launcher enables or disables certain apps accordingly. Figure 3 depicts how the capacitive sensor is integrated with the phone. If the user tries to open one of the distracting applications before the results from the cloud are received, the launcher relies solely on the results from the phone. Once the cloud results arrive, if they differ from those of the phone and the phone had allowed the application to open, the application is killed, because the initial decision was a false positive.
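The reconciliation between the phone's result and the cloud's result can be sketched as follows. The function name `reconcile` and the label strings are hypothetical; the rule itself (cloud wins on disagreement, and an already opened app is killed) follows the description above.

```python
def reconcile(local_label, cloud_label, app_was_allowed):
    """Return (final_label, kill_app) once a cloud result may or may not have arrived."""
    if cloud_label is None:
        # No cloud answer yet: rely solely on the phone's result.
        return local_label, False
    disagree = cloud_label != local_label
    # On disagreement the cloud result wins; an app opened on the phone's
    # say-so is treated as a false positive and killed.
    return cloud_label, disagree and app_was_allowed
```

For instance, `reconcile("backseat", "driver", True)` yields the cloud label `"driver"` together with a request to kill the app, while a matching pair leaves the app running.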

There are two reasons why I decided to have two TensorFlow models (one in the cloud and one on the phone). The first reason is that the TensorFlow model on the phone uses a very lightweight algorithm that provides results very quickly, which is needed in order to decide whether to enable or disable applications. The downside is that its accuracy is lower, which introduces misclassifications. This means that in some cases the applications would be incorrectly enabled for the driver or disabled for the passengers. To reduce the number of these errors, I used the Azure serverless functions to run a much slower yet much more accurate algorithm (table below). The second reason is that running a model on the phone reduces the amount of data sent over the network, so the system does not overuse the user's data plan.

| Model | Top-1 Accuracy | Top-5 Accuracy | Execution Time |
|---|---|---|---|
| Mobilenet_0.25_128 (Phone) | 41.5% | 66.3% | 6.2 ms |
| Inception V3 (Azure) | 78.0% | 93.9% | ~2 seconds |

Accomplishments

I built a complex, multi-faceted solution intended to reduce the number of accidents and deaths due to distracted driving. The technology I presented can be used as is with current phone hardware, or in conjunction with the external capacitive sensor. The capacitive sensor is not required for the Android launcher to function properly. The advantage of this solution is that it can be brought to market faster, since it works with the technology in existing phones. Compare this to recent developments in this area such as Apple’s Do Not Disturb While Driving[2] mode, which only uses the GPS sensor to determine if the user is in a moving vehicle. The downside of Apple's technology is that it doesn’t distinguish whether the user is a driver or a passenger, which frustrates end users and reduces the number of people who will actually use it.

How To Use It

1.1- Download and install the Android APK.

OR

1.2- Deploy Azure Functions (optional - Azure IoT Hub and Functions are currently running).

1.2.1- Change the Azure IoT credentials on the Android App (fr.neamar.kiss.utils.DataHolder).

1.2.2- Compile the Android App.

1.2.3- Install the compiled Android App.

2- Grant draw over other apps, location, camera and storage permissions.

3- Make the KISS launcher the default home app.

4- Open the KISS launcher.

5- Block any app you want (hold down the app you want to block for 2 seconds and a menu will appear, select add to blocked list).

6- Connect the capacitive matrix to the Arduino and load the code onto the Arduino (optional).

7- Connect the capacitive matrix to the phone (optional).

8- Find a driver and jump into the car.

9- Test the app.

Try It Out

https://github.com/egibert/TextAndDrive -- Git Repo

https://github.com/egibert/TextAndDrive/raw/master/Android%20APK/TextAndDrive.apk -- Android APK

https://textanddrive.azurewebsites.net -- Azure Web App

References

1 - NHTSA

Built With

- android

- arduino

- azure-iot-suite

- tensorflow
