Logo

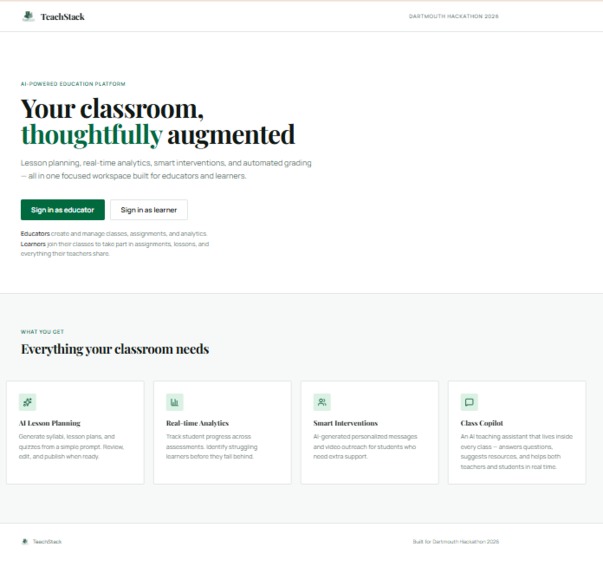

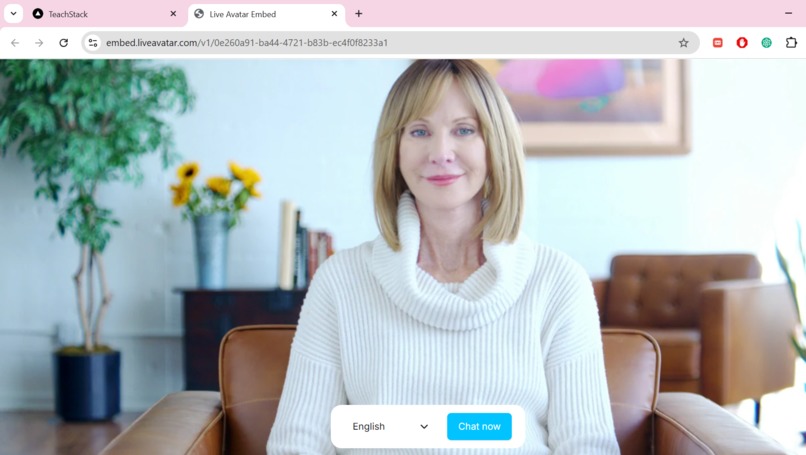

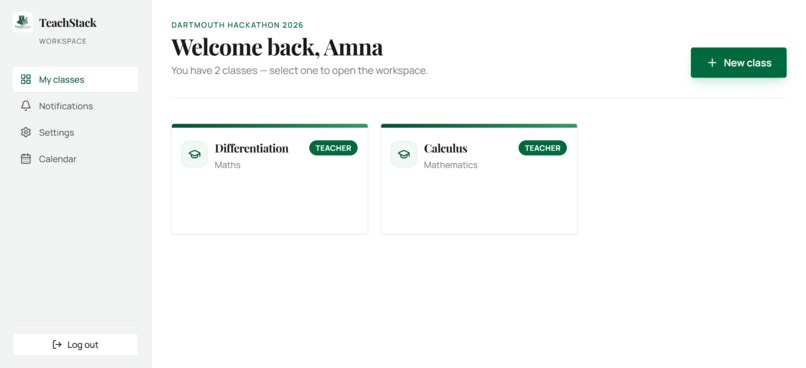

Landing page

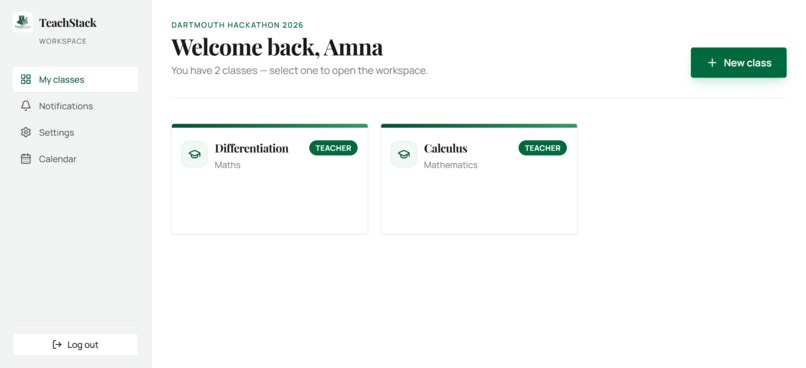

teacher dashboard

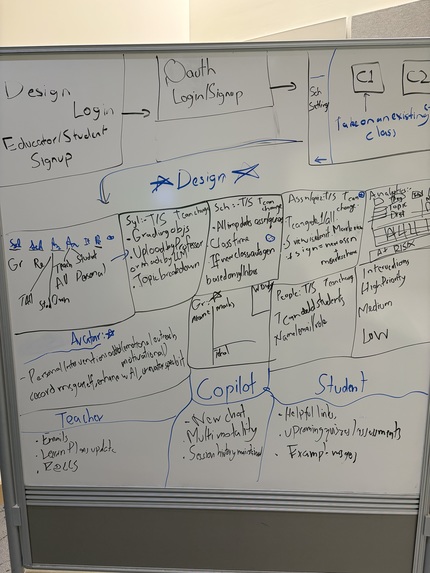

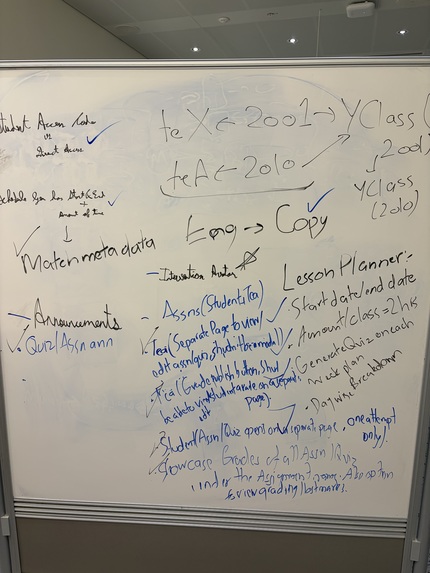

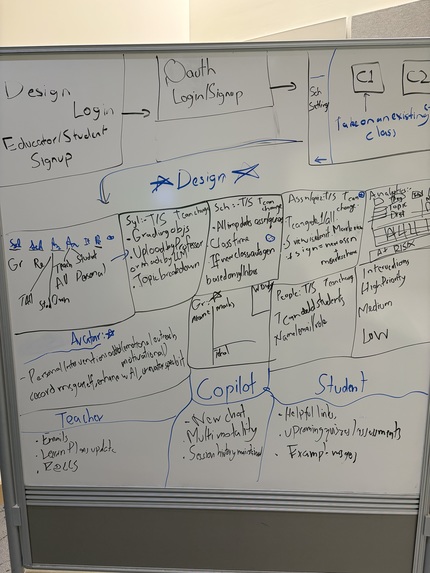

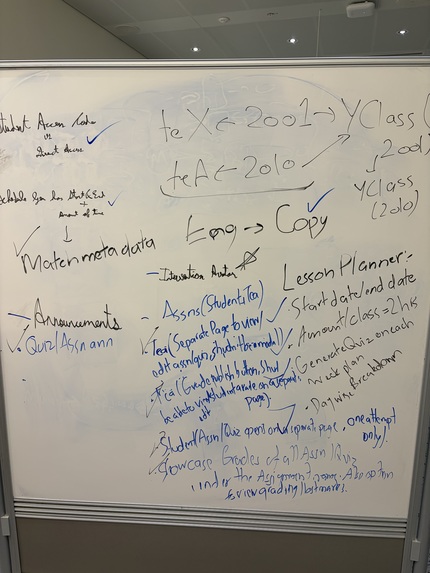

Individual Wireframes

Supporting Features

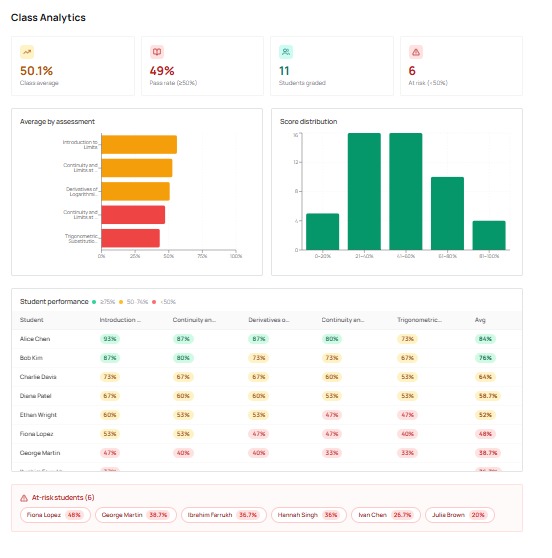

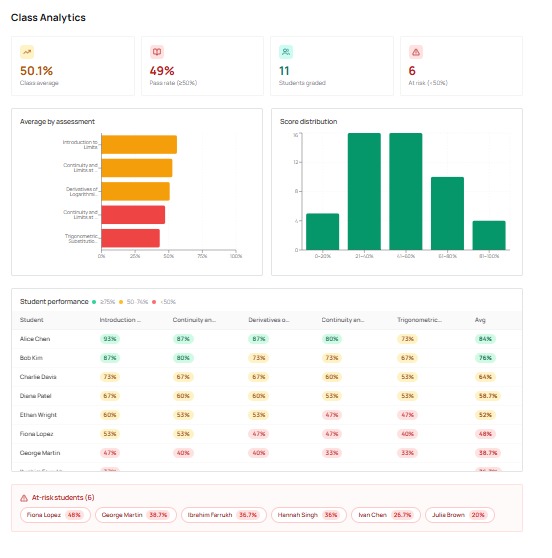

teacher class analytics

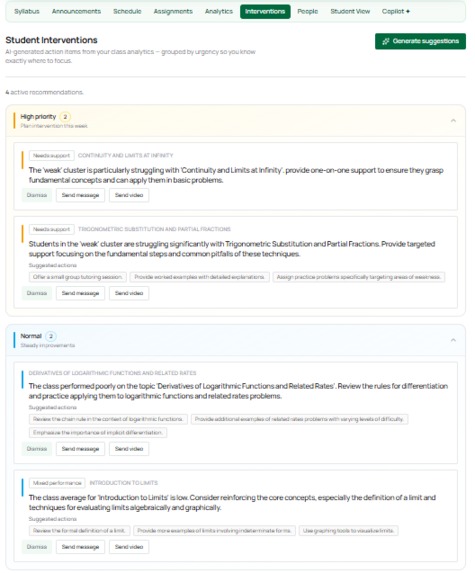

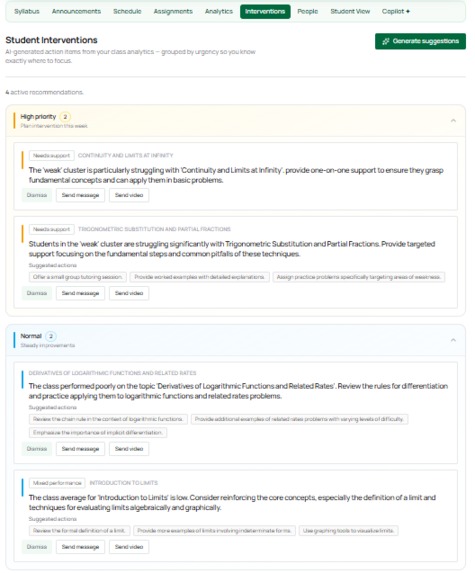

Interventions

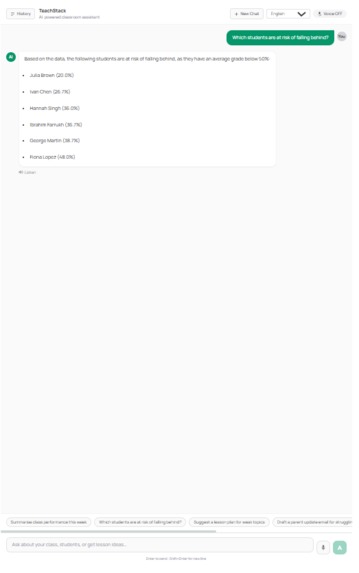

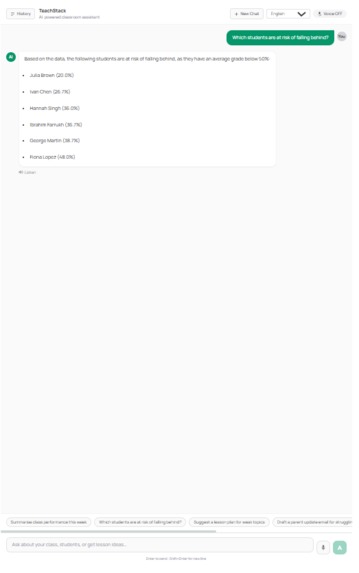

teacher copilot view with example

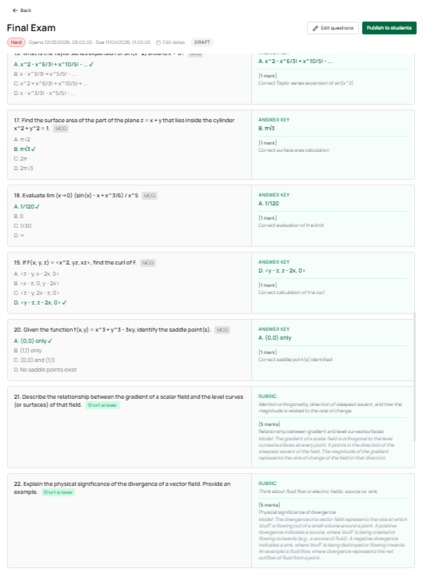

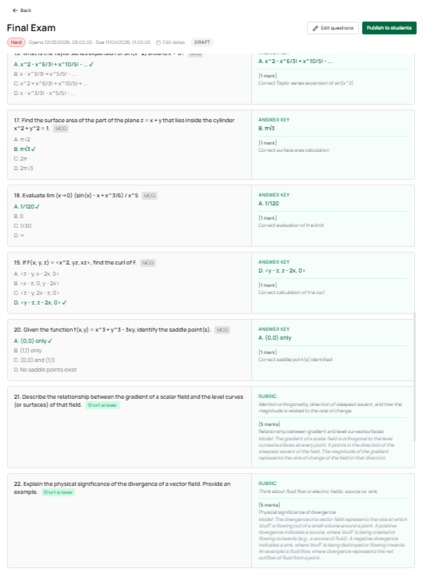

generated assessment with rubric

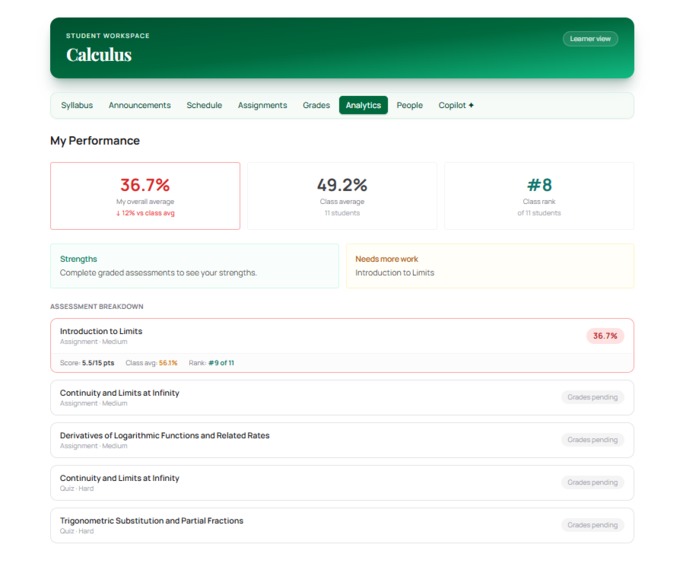

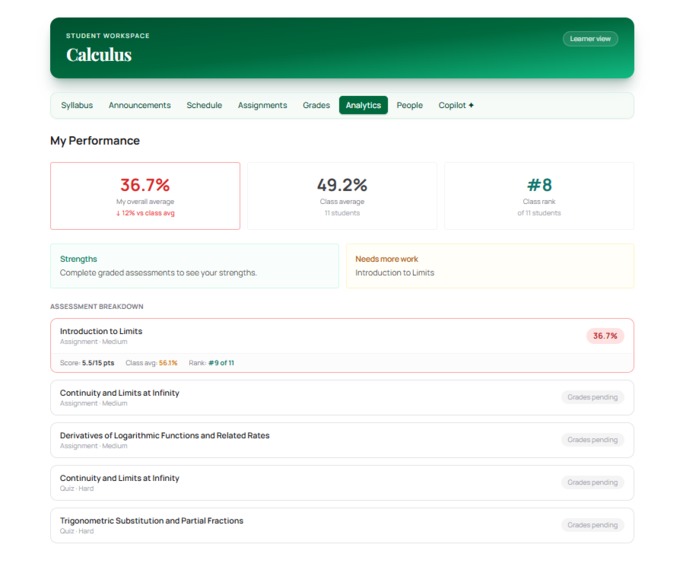

student analytics page

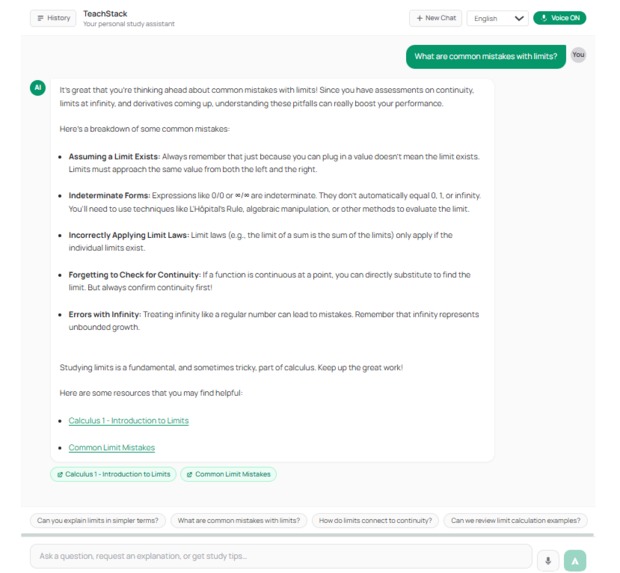

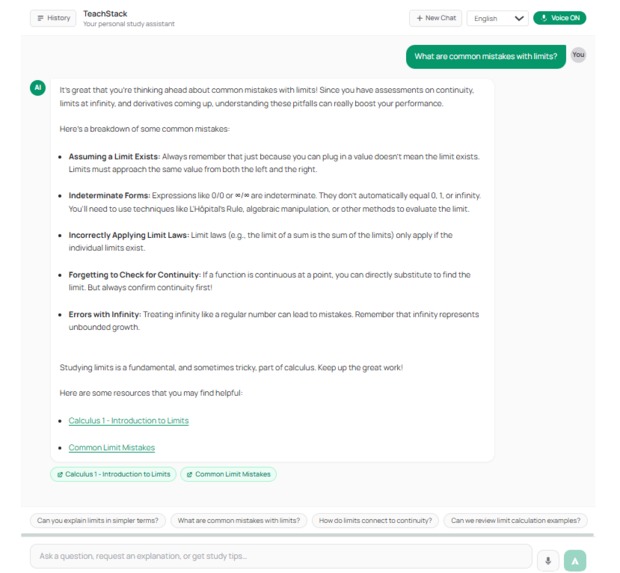

student copilot

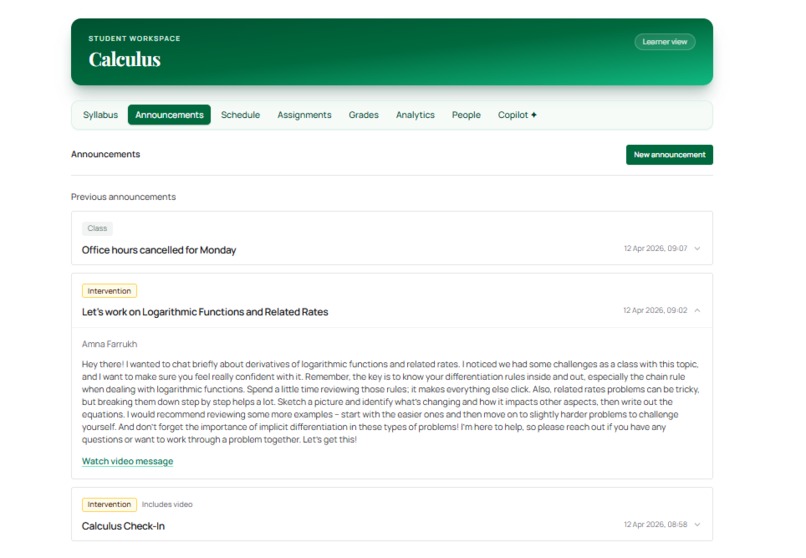

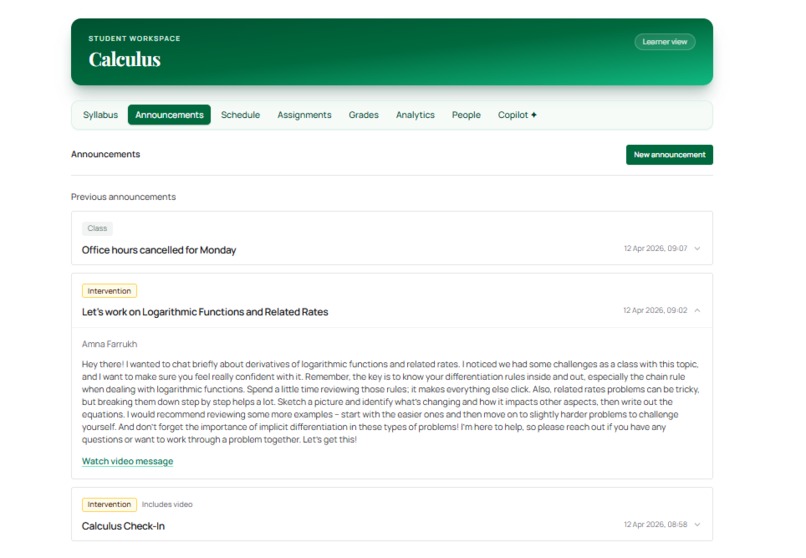

Announcements for normal and intervention messages

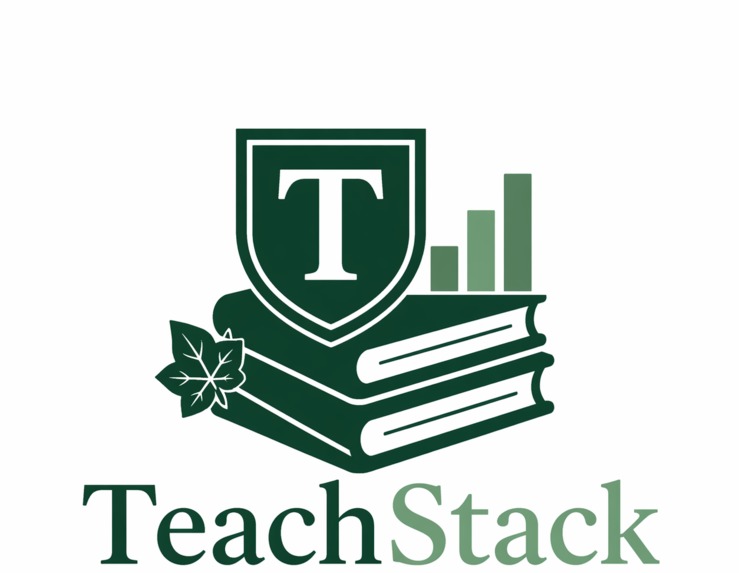

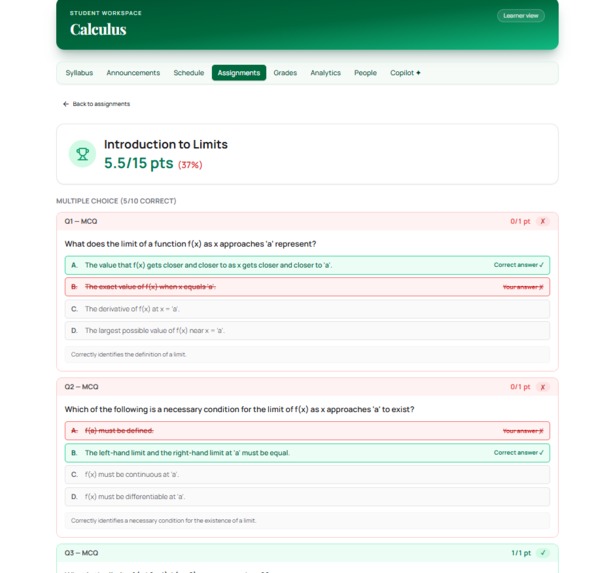

Generated rubric and result view on student side

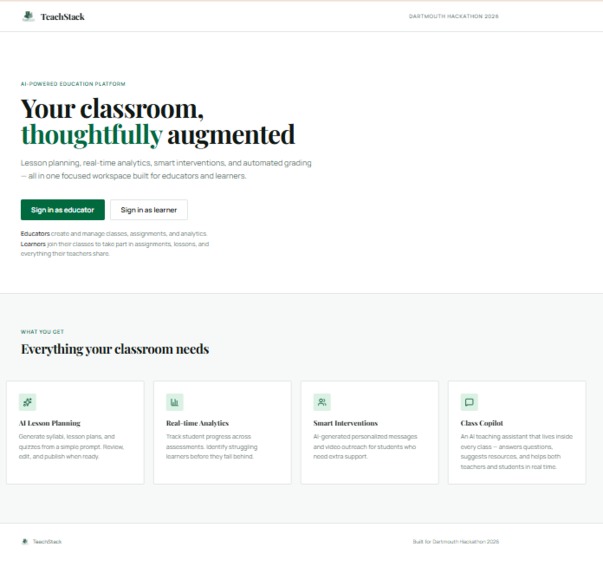

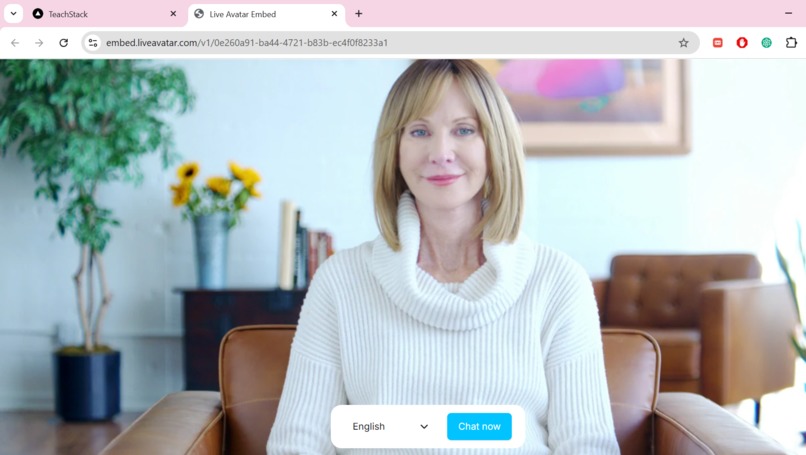

Live avatar personalised video message from teacher

Inspiration

We started from a simple observation: most teachers don't need "more AI"; they need less fragmentation. Planning, grading, communication, and early warning signals live in different tabs and tools, forcing teachers to context-switch constantly instead of acting with intention. TeachStack is our attempt to build a single class workspace that handles the routine so teachers can spend attention where it matters: relationships, explanations, and timely support.

What it does

TeachStack lets a teacher run a class end-to-end in one place:

- Syllabus & schedule: paste a syllabus or generate one from a prompt, and the AI distributes topics across a real calendar with configurable meeting days

- Assignments & auto-grading: publish assessments from the weekly unit or create them from scratch, with AI-assisted mark schemes; the system grades submissions and flags borderline cases

- Analytics: per-student, per-assessment score breakdowns with cluster detection to surface who's falling behind and why

- Interventions: AI-generated action items grouped by urgency (Critical → High → Normal → Low), with one-click direct message or personalized video outreach to specific students

- Copilot: a voice-capable AI assistant with full class context; a teacher can ask "which students are struggling with calculus?" and get a specific, named answer grounded in real gradebook data

- Student view: learners see their own grades, schedule, announcements, and a personal copilot, all scoped to their class enrollment

How we built it

- Frontend: Next.js 15 (App Router) + React, Tailwind CSS, Supabase auth with JWT session handling, and a class-scoped UI shell that switches between teacher and student surfaces automatically

- Backend: FastAPI with 21 modular routers covering classes, assessments, grading, analytics, interventions, copilot, notifications, schedule generation, and more

- Data & security: Supabase PostgreSQL with SQL migrations in-repo, server-side class membership checks on every route, and Row-Level Security policies so data never crosses class boundaries

- AI & integrations: LLM completions via OpenRouter (the model is configurable; we used the Gemini Flash model family for this project), ElevenLabs TTS for voice responses, Web Speech API for browser-native speech-to-text, and LiveAvatar for personalized video interventions

Challenges we ran into

Authorization complexity — almost every feature intersects with "who is in this class?" and "is this user a teacher here?" Small mistakes become data leaks or broken UX. We ended up with explicit membership checks at both the API and RLS layers.
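To make the API-side half of that check concrete, here is a minimal Python sketch of a membership guard. It is an illustration rather than our actual router code: `Membership`, `Role`, `require_membership`, and both error classes are hypothetical names, and the in-memory list stands in for a database lookup.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    TEACHER = "teacher"
    STUDENT = "student"


class NotAMemberError(PermissionError):
    """The user has no membership row for the requested class."""


class TeacherRequiredError(PermissionError):
    """The user is in the class, but the operation is teacher-only."""


@dataclass(frozen=True)
class Membership:
    user_id: str
    class_id: str
    role: Role


def require_membership(memberships, user_id, class_id, teacher_only=False):
    """Return the caller's membership in class_id, or raise.

    `memberships` is a hypothetical in-memory stand-in for a table lookup.
    """
    for m in memberships:
        if m.user_id == user_id and m.class_id == class_id:
            if teacher_only and m.role is not Role.TEACHER:
                raise TeacherRequiredError(f"{user_id} is not a teacher in {class_id}")
            return m
    raise NotAMemberError(f"{user_id} is not enrolled in {class_id}")


# Illustrative data: one teacher and one student in the same class.
ROSTER = [
    Membership("t1", "calc-101", Role.TEACHER),
    Membership("s1", "calc-101", Role.STUDENT),
]
```

Calling `require_membership(ROSTER, "s1", "calc-101", teacher_only=True)` raises `TeacherRequiredError`, while any user outside the roster gets `NotAMemberError`. Keeping the two failures distinct lets the UI tell "you are not in this class" apart from "this action is for teachers".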

Making analytics trustworthy — translating raw scores into "signals" is as much a communication problem as a math problem. Teachers need to understand what an aggregate means before they act on it. We keep the class mean front and center:

$$\bar{x}=\frac{1}{n}\sum_{i=1}^{n} x_i$$

We also support weighted scoring, where a score $x_i$ carries weight $w_i$, for cases when assignments shouldn't count equally, which is closer to how real grading actually works:

$$s=\frac{\sum_{i=1}^{n} w_i x_i}{\sum_{i=1}^{n} w_i}$$

The challenge was surfacing these numbers with enough context that a teacher acts confidently rather than just seeing a dashboard.
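As a small worked example of the two formulas above, the following Python sketch computes a plain mean and a weighted score. The function names `class_mean` and `weighted_score` and the sample numbers are illustrative assumptions, not taken from our codebase.

```python
def class_mean(scores):
    """Arithmetic mean of a non-empty list of scores."""
    if not scores:
        raise ValueError("scores must not be empty")
    return sum(scores) / len(scores)


def weighted_score(scores, weights):
    """Weighted average: sum(w_i * x_i) / sum(w_i)."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(w * x for w, x in zip(weights, scores)) / total_weight


# Three scores; the third counts double (for example, a final exam).
scores = [80, 90, 70]
weights = [1, 1, 2]

print(class_mean(scores))               # 80.0
print(weighted_score(scores, weights))  # 77.5
```

The gap between 80.0 and 77.5 is the kind of difference a teacher needs explained: the same three scores give a lower result once the weakest one carries more weight.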

AI reliability — provider errors, quota exhaustion, and latency are real in production. We treat these as first-class states with explicit error types (LlmQuotaExceededError, LlmProviderError) and graceful degradation so the rest of the teaching workflow never feels brittle.
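A rough sketch of what treating failures as first-class states can look like in Python is shown below. The two error names come from our description above; the `LlmError` base class, `CopilotReply`, `answer_with_fallback`, and the fallback messages are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass
from typing import Callable


class LlmError(Exception):
    """Hypothetical common base class for LLM failures."""


class LlmQuotaExceededError(LlmError):
    """The provider rejected the call because the quota is exhausted."""


class LlmProviderError(LlmError):
    """The provider failed or timed out for another reason."""


@dataclass
class CopilotReply:
    text: str
    degraded: bool  # True when the text is a fallback, not a model answer


def answer_with_fallback(call_model: Callable[[str], str], prompt: str) -> CopilotReply:
    """Call the model, mapping known failures to explicit degraded replies."""
    try:
        return CopilotReply(text=call_model(prompt), degraded=False)
    except LlmQuotaExceededError:
        return CopilotReply(
            text="The AI quota is used up for now. Grades and schedules are unaffected.",
            degraded=True,
        )
    except LlmProviderError:
        return CopilotReply(
            text="The AI provider is unavailable. Please try again shortly.",
            degraded=True,
        )
```

Because the caller always receives a `CopilotReply`, the UI can render a degraded state instead of failing, and unrelated exceptions still propagate so real bugs are not hidden.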

Schedule consistency — when topics shift or meeting days change, rebuilding a schedule creates subtle ordering bugs that are genuinely painful to debug. We built a deterministic rebuild function that treats the week/item structure as the source of truth and derives calendar sessions from it, not the other way around.
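The idea of deriving sessions from the week/item structure can be sketched in a few lines of Python. This is a simplified illustration, not our real rebuild function: `rebuild_sessions` is a hypothetical name, topics are assumed to be plain strings, and the sketch assigns topics to consecutive meeting days without handling holidays or week boundaries.

```python
from datetime import date, timedelta


def rebuild_sessions(weeks, start, meeting_weekdays):
    """Derive (date, topic) sessions from the week/item structure.

    weeks: list of weeks, each a list of topic titles (the source of truth).
    start: first possible class date.
    meeting_weekdays: weekday numbers, Monday=0 ... Sunday=6.
    """
    days = set(meeting_weekdays)
    if not days or any(d not in range(7) for d in days):
        raise ValueError("meeting_weekdays must be a non-empty subset of 0..6")

    sessions = []
    current = start
    # Flatten weeks in order so the output depends only on the inputs.
    for week in weeks:
        for topic in week:
            while current.weekday() not in days:
                current += timedelta(days=1)
            sessions.append((current, topic))
            current += timedelta(days=1)
    return sessions


# Classes meet on Mondays and Wednesdays, starting Monday 2025-01-06.
weeks = [["Limits", "Derivatives"], ["Integrals"]]
for day, topic in rebuild_sessions(weeks, date(2025, 1, 6), [0, 2]):
    print(day.isoformat(), topic)
# 2025-01-06 Limits
# 2025-01-08 Derivatives
# 2025-01-13 Integrals
```

Since the calendar is always regenerated from `weeks`, reordering a topic or changing the meeting days means editing one input and rebuilding, rather than patching individual sessions in place.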

Accomplishments that we're proud of

- The Copilot actually knows your class — it gets the real roster, per-student per-assessment scores, and weak topic list injected into context. When you ask who's struggling, it tells you by name.

- Voice mode works end-to-end — speak a question, get a spoken answer, with auto-play and auto-send wired together so the interaction feels natural rather than bolted on.

- Personalized interventions that feel human — when analytics flags a struggling student, teachers can send a direct message or a personalized AI-generated video addressed to that student by name. Feedback that used to arrive as a generic email now feels like it came from someone who actually noticed.

- Analytics that surface what matters — not just averages, but per-student per-assessment breakdowns, performance cluster detection, and at-risk identification so teachers know exactly where to focus rather than guessing from a grade distribution.

- AI grading that respects teacher judgment — submissions are graded automatically against teacher-defined mark schemes, with borderline cases flagged for review. Teachers stop spending evenings marking and start spending that time on students who need them.

- Syllabus-to-schedule in one click — paste or generate a syllabus, set your meeting days and course dates, and the AI distributes topics across a real calendar. Lesson planning that used to take hours takes minutes.

- A realistic permission model — teachers and students see genuinely different surfaces in the same class, enforced at every layer, not just the UI.

- Deployed and live on Vercel + Render with a real Supabase backend — not a local demo.

What we learned

- The product value is mostly in workflow integration and trustworthy data, not in any single model call. The copilot is useful because the gradebook is real, not because the model is smart.

- Great teaching software has to be opinionated about clarity — especially for analytics and intervention suggestions, where vague output erodes teacher trust fast.

- Feature velocity stays sustainable when migrations, API contracts, and UI assumptions stay aligned as surface area grows. We learned this the hard way with a few merge conflicts.

What's next for TeachStack

- Intervention follow-up tracking: right now suggestions are generated and acted on, but there's no loop back to check if they worked. Closing that loop is the most valuable thing we could add.

- Topic content: currently the schedule only shows topic titles. Each topic should open a page where the teacher can add notes and other resources for that class. It's a strong feature with many use cases, and it removes the dependency on a separate tool for class materials.

- Parent/family communication layer: the intervention outreach exists for students; extending it to families with appropriate context is a natural next step for real schools.

- Analytics explainability: showing teachers not just what the numbers are but why a student is flagged, including which specific assessments dragged the score down.

- Multi-teacher classrooms: co-teaching and TA workflows come up immediately in any real school deployment.

- Student safety: since this application will be used by children, adding safety guardrails to protect them from harmful content is a necessity for real-world deployment.
