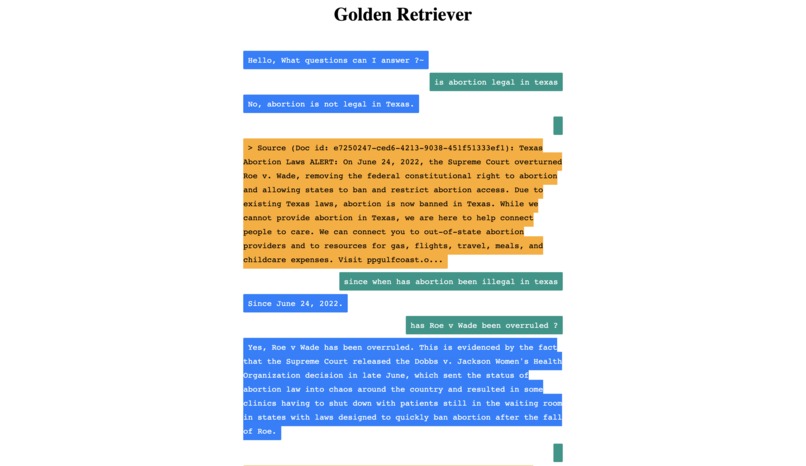

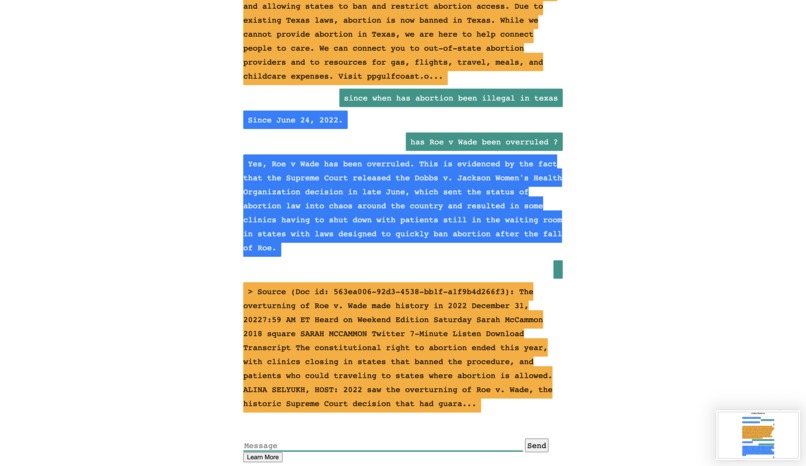

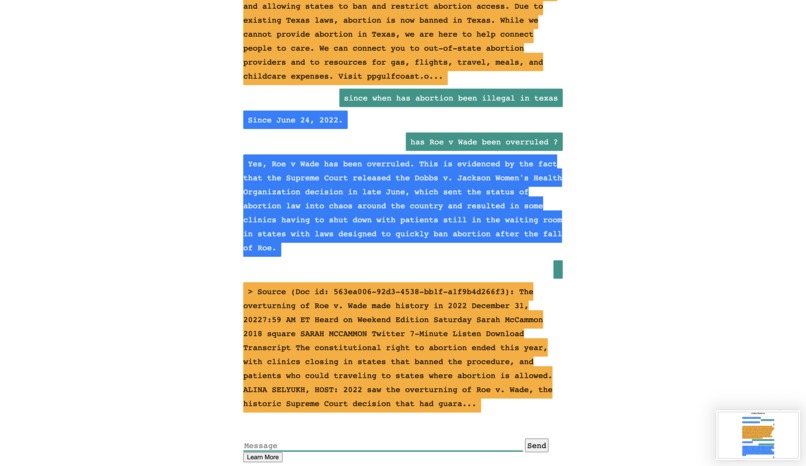

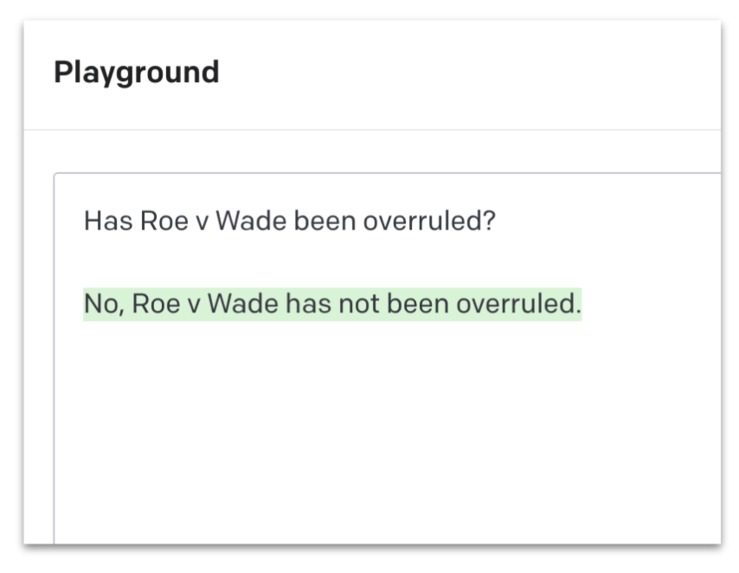

Golden Retriever answers questions about case law with pinpoint accuracy, including questions about recent events, unlike GPT3.

Golden Retriever shows the exact source of its information and explains how it derived its response, unlike GPT3.

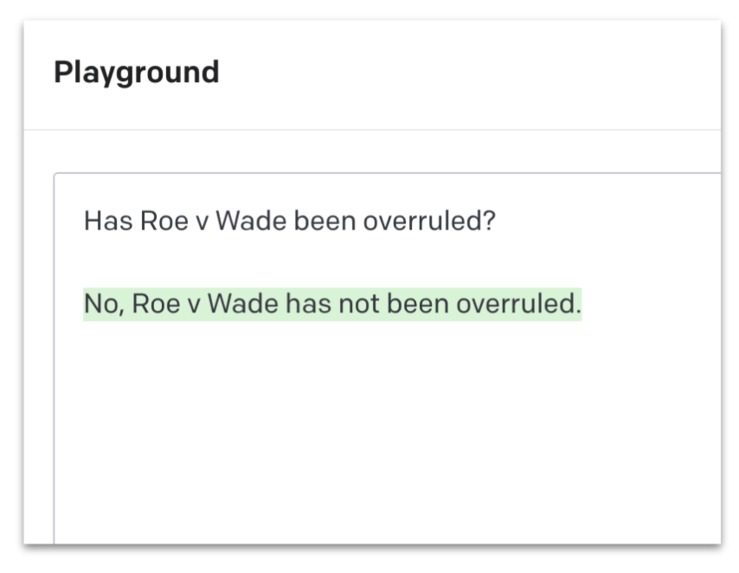

GPT3 does not have up-to-date information, so it can respond with inaccurate answers.

Inspiration

A close friend of ours was excited about her future at Stanford and beyond: she had a supportive partner and a bright future ahead of her. But when she found out she was unexpectedly pregnant, her world turned upside down. She was shocked and scared, unsure of what to do. She knew that having a child right now wasn't an option for her. She wasn't ready, financially or emotionally, to take on the responsibility of motherhood. But with the recent overturning of Roe v. Wade, she wasn't sure what her options were for an abortion.

She turned to ChatGPT for answers, hoping to find accurate and reliable information. She typed in her questions, and the AI-powered language model responded with what seemed like helpful advice.

But as she dug deeper into the information she was getting, she began to realize that not all of it was accurate. The clinics that ChatGPT referred her to were in locations where abortion was no longer legal. She started to feel overwhelmed and confused. She didn't know who to trust or where to turn for accurate information about her options. She felt trapped, as if her fate were being decided by forces beyond her control.

With that, we realized that ChatGPT and its underlying technology (GPT3) are incredibly powerful but have systematic, foundational flaws. Millions of people are beginning to rely on these technologies, yet they struggle with issues that are intrinsic to the value they’re meant to provide. We knew it was necessary to build something better, safer, and more accurate, so we leveraged tools, specifically retrieval augmentation, to improve accuracy and to provide responses based on information the system hasn’t been trained on (for instance, events and content since 2021). Enter Golden Retriever.

What it does

Imagine having access to an intelligent assistant that can help you navigate the vast sea of information out there. In many ways, we have that with ChatGPT and GPT3, but Golden Retriever, our tool, goes further: it removes the character limits on prompts and queries, greatly reduces the risk of “hallucination” (answering questions incorrectly but confidently), and answers the questions you need answered, especially when you probe it, with incredible depth and detail. It also lets you provide the sources you want it to analyze, whereas current GPT tools are limited to the information they have been trained on. Retrieval augmentation is a game-changing technology that revolutionizes the way we approach closed-domain question answering.

That’s why we built Golden Retriever. How does it circumvent these challenges? Golden Retriever uses an index data structure that allows for a larger effective prompt size and gives you the freedom to connect to any external data source.

The use case we see as most pertinent in today’s world is legal counsel. Traditionally, it is expensive and inaccessible, and most underrepresented and marginalized communities in the United States don’t have adequate access to it. Golden Retriever is a revolutionary tool that can provide you with reliable legal advice when you can't afford to consult a legal professional. Whether you're facing a legal issue but don't have the time or money to consult a lawyer, or you simply want to gain a better understanding of your legal rights and responsibilities, such as when it comes to abortion, Golden Retriever can help.

As it pertains to this use case, with Golden Retriever, you can easily connect to a wide range of external data sources, including legal databases, court cases, and legal articles, to obtain accurate and up-to-date information about the legal issue you're facing. You can ask specific legal questions and receive detailed responses that take into account the context and specifics of your situation. You can even probe it to get specific advice based on your personal circumstances.

For example, imagine you're facing a difficult decision related to abortion, but you don't have the resources to consult a legal professional. Using Golden Retriever, which leverages GPT Index, you can input your query and obtain a detailed response that outlines your legal rights and responsibilities, as well as any potential legal remedies available to you. It all depends on the information you’re looking for and the questions you ask.

How we built it

First, we loaded the data using a data connector called SimpleDirectoryReader, which reads the files in a specified directory. Then, we wrote a Python script that uses GPTKeywordTableIndex, from GPT Index, as the interface connecting our data to a GPT LLM. We supply the pre-trained LLM with a large corpus of text that acts as its knowledge base. We group chunks of the text into nodes and extract keywords from each node, building a direct mapping from each keyword back to its corresponding nodes.
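To make the keyword-to-node mapping concrete, here is a minimal sketch in plain Python. It is not the GPT Index implementation: the function names (`chunk_text`, `extract_keywords`, `build_keyword_table`) are hypothetical, and keyword extraction is approximated with a small stopword filter rather than an LLM call, purely as an assumption for illustration.

```python
import re
from collections import defaultdict

# Tiny illustrative stopword list (an assumption); a real system would use
# a fuller list or ask an LLM to pick the keywords.
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "are", "for",
             "on", "that", "it", "with", "as", "by", "or", "be", "was"}


def chunk_text(text, chunk_size=50):
    """Split text into chunks of at most `chunk_size` words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size])
            for i in range(0, len(words), chunk_size)]


def extract_keywords(text):
    """Return lowercase tokens that are not stopwords and are longer than 2 chars."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {t for t in tokens if t not in STOPWORDS and len(t) > 2}


def build_keyword_table(documents, chunk_size=50):
    """Return (nodes, table): node id -> chunk text, keyword -> set of node ids.

    `documents` maps a hypothetical document id to its full text.
    """
    nodes = {}
    table = defaultdict(set)
    for doc_id, text in documents.items():
        for i, chunk in enumerate(chunk_text(text, chunk_size)):
            node_id = f"{doc_id}#{i}"
            nodes[node_id] = chunk
            for keyword in extract_keywords(chunk):
                table[keyword].add(node_id)
    return nodes, dict(table)
```

Because each keyword points directly at node ids, a later lookup for a query keyword is a single dictionary access rather than a scan over the whole corpus.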

Then we prompt the user for a query in a GUI built with Flask. We extract keywords from the query and match them against the pre-extracted node keywords; GPTKeywordTableIndex returns a list of tuples containing the matching nodes, which store the relevant chunks of text. Once we have the nodes, we prepend their contents to the query and feed the result into GPT to create a response object. This object contains the summarized text that is displayed to the user, along with the information from every node that contributed to the summary. As a result, we can cite the information we display to the user, with each node uniquely identified by a doc ID.
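The query-time flow described above can be sketched roughly as follows. This is an illustrative approximation, not the library's code: `retrieve_nodes` and `build_prompt` are hypothetical names, the prompt wording is invented, ranking by keyword-overlap count is an assumption, and the final call to GPT is left out.

```python
import re

# Small illustrative stopword list (an assumption, not the library's).
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "are", "for",
             "on", "that", "it", "with", "as", "by", "or", "be", "was"}


def extract_keywords(text):
    """Return lowercase tokens that are not stopwords and are longer than 2 chars."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {t for t in tokens if t not in STOPWORDS and len(t) > 2}


def retrieve_nodes(query, keyword_table, top_k=2):
    """Return up to `top_k` node ids, ranked by how many query keywords they match."""
    counts = {}
    for keyword in extract_keywords(query):
        for node_id in keyword_table.get(keyword, ()):
            counts[node_id] = counts.get(node_id, 0) + 1
    # Sort by descending match count, then by node id for a stable order.
    ranked = sorted(counts, key=lambda node_id: (-counts[node_id], node_id))
    return ranked[:top_k]


def build_prompt(query, node_ids, nodes):
    """Prepend the retrieved node texts, labeled with their ids, to the query."""
    context = "\n\n".join(f"[{node_id}] {nodes[node_id]}" for node_id in node_ids)
    return (f"Context:\n{context}\n\n"
            f"Question: {query}\n"
            "Answer using only the context above.")
```

Labeling each chunk with its node id inside the prompt is one simple way to keep the link between the generated answer and the text it was built from.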

Challenges we ran into

Given a query and the keywords matched on pre-processed nodes, it wasn’t hard to generate an effective response. What was hard was finding the source of the text, that is, which chunks of data from our database the system used to construct the response. A key feature of our product is that the response shows exactly what information from our database it used to reach its conclusion. By default, however, the system is “memoryless” and cannot answer detailed follow-up questions about where a specific piece of information came from. We overcame this challenge by finding out how to access the object that stores the source data used for each response.
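One way to picture a response that carries its own sources is the sketch below. The class and field names (`SourceNode`, `CitedResponse`, `format_with_citations`) are hypothetical and chosen for illustration; they are not the names used by GPT Index or by our code.

```python
from dataclasses import dataclass, field


@dataclass
class SourceNode:
    """A chunk of text that contributed to an answer, identified by a doc id."""
    doc_id: str
    text: str


@dataclass
class CitedResponse:
    """An answer bundled with the source nodes it was derived from."""
    answer: str
    sources: list = field(default_factory=list)

    def format_with_citations(self, max_chars=80):
        """Render the answer followed by a numbered list of source snippets."""
        lines = [self.answer, "", "Sources:"]
        for i, node in enumerate(self.sources, start=1):
            snippet = node.text[:max_chars]  # truncate long chunks for display
            lines.append(f"[{i}] {node.doc_id}: {snippet}")
        return "\n".join(lines)
```

Keeping the sources on the response object means the interface can show citations without asking the model a follow-up question, which sidesteps the "memoryless" limitation.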

Accomplishments that we're proud of

Hallucination is considered one of the more dangerous and hard-to-diagnose issues within GPT3. When asked for sources to back up answers, GPT3 is capable of hallucinating sources, providing entirely rational-sounding justifications for its answers. Further, to properly prompt these tools to create unique and well-crafted answers, detailed prompts are necessary. Often, we’d even want prompts to leverage research articles, news articles, books, and extremely large data sets. Not only have we reduced hallucination to a negligible degree, but we’ve eliminated the limitations that come with maximum query sizes, and enabled any type of source and any quantity of sources to be leveraged in query responses and analyses.

This is a new frontier, and we’re excited and honored to have the privilege of bringing it about.

What we learned

Our team members learned the entire lifecycle of a project in a nascent field: researching current discoveries and work, building on and adapting these tools to our own goals, and finally making the outputs easy to interact with. For human-facing technologies such as chatbots and question answering, human feedback and interaction are vital. In just 36 hours, we went through this entire lifecycle, from ideation to research, to building on top of current infrastructure, to developing new APIs and interfaces that are easy and fun to use. Given that one of the problems we’re attempting to solve is inaccessibility, doing so is vital.

What's next for Golden Retriever: Retrieval Augmented GPT

Our current application of choice for Golden Retriever is making legal counsel more accurate, accessible, and affordable to broader audiences, whether in criminal or civil law. However, we genuinely see Golden Retriever as applicable to almost any use case, most directly education, content generation for marketing and writing, and medical diagnosis. Because all inferences, answers, and analyses are backed by sources, and because retrieval augmentation lets us feed in sources the system wasn’t trained on, the array of use cases is broader than what we might previously have envisioned for AI chatbots.
