Volume Control Gesture (Thumbs up to increase volume | Thumbs down to decrease volume)

Backwards and Forwards Gesture (Go back a page with left hand | Go forward a page with right hand)

Middle Mouse Button Gesture

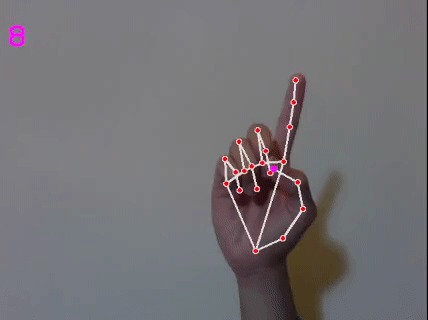

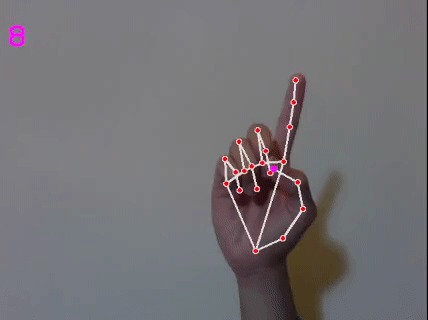

Click Gesture (Right click with right hand | Left click with left hand)

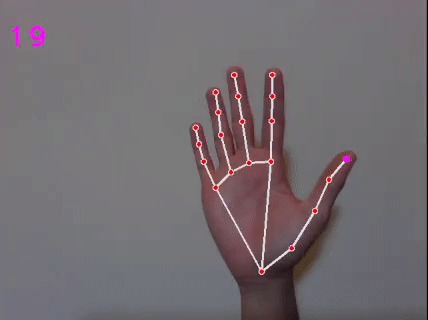

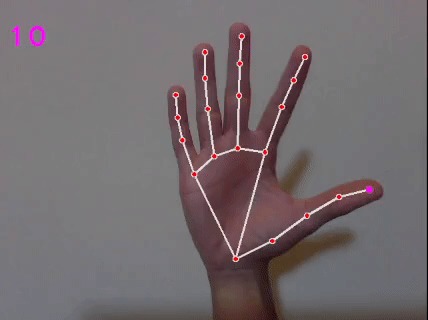

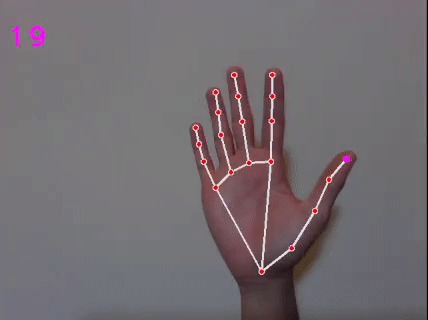

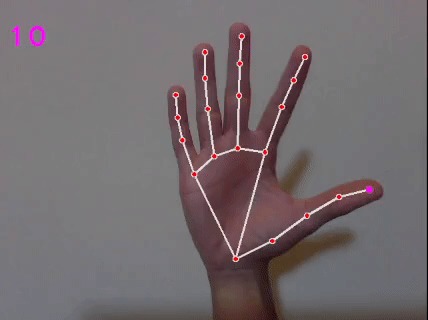

Mouse Movement Gesture

Formal Demo: https://youtu.be/HlXvsAoi7uU

This is a Python application that uses computer vision to detect a user's hand motions through a camera, interprets them, and translates them into mouse and keyboard actions on the computer.
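As a rough illustration of the "interpret" step, the sketch below classifies a thumbs-up or thumbs-down pose from hand landmarks and maps it to a volume action. It is not the project's actual code: the landmark indices follow MediaPipe's 21-point hand model, but the `margin` threshold, the curl heuristic, and the action names are assumptions made for this example.

```python
from typing import Dict, List, Optional, Tuple

# Normalized (x, y) image coordinates; y grows downward, as in MediaPipe output.
Point = Tuple[float, float]

# Indices from MediaPipe's 21-point hand model.
WRIST, THUMB_TIP = 0, 4
FINGER_TIPS = (8, 12, 16, 20)   # index, middle, ring, pinky fingertips
FINGER_PIPS = (6, 10, 14, 18)   # the matching middle joints

# Hypothetical action names; a real program would call a mouse/keyboard library here.
ACTIONS: Dict[str, str] = {"thumbs_up": "volume_up", "thumbs_down": "volume_down"}


def fingers_curled(points: List[Point]) -> bool:
    """Return True when every fingertip is closer to the wrist than its middle joint."""
    wx, wy = points[WRIST]

    def dist2(p: Point) -> float:
        return (p[0] - wx) ** 2 + (p[1] - wy) ** 2

    return all(dist2(points[t]) < dist2(points[p])
               for t, p in zip(FINGER_TIPS, FINGER_PIPS))


def classify_thumb(points: List[Point], margin: float = 0.1) -> Optional[str]:
    """Classify a fist with the thumb clearly above or below the wrist.

    `margin` is an assumed dead zone that ignores a sideways thumb.
    """
    if not fingers_curled(points):
        return None
    dy = points[THUMB_TIP][1] - points[WRIST][1]
    if dy < -margin:      # thumb tip is higher in the image than the wrist
        return "thumbs_up"
    if dy > margin:       # thumb tip is lower in the image than the wrist
        return "thumbs_down"
    return None


def interpret(points: List[Point]) -> Optional[str]:
    """Translate one hand's landmarks into an action name, or None if no gesture matches."""
    gesture = classify_thumb(points)
    return ACTIONS.get(gesture) if gesture else None
```

Keeping classification separate from the action table means each gesture can be tested with synthetic landmark lists, without a camera attached.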

What inspired us

While brainstorming ideas for this hackathon, our team came across several cutting-edge computer vision APIs. Wanting to expand our skill set, we chose one of them as the basis for a project geared towards making computer use more enticing. With classes taking much of our energy, we also wanted to make surfing the web a more relaxed experience.

What we learned

Throughout this project we learned the following:

- The intricacies of setting up our development environment with both GitHub and Python

- How to integrate the MediaPipe API into a Python project

- How to use the OpenCV package in our project

- Strategies for debugging major logic errors in Python

How we built our project

We began by spending a few hours setting up everyone's development environment. Next, we watched videos and read documentation to understand the API and packages we planned to use. We then initialized the camera and the hand-tracking module so we could inspect the data coming from the captured frames. After that, we came together to build the first gesture for our program. Once we were all comfortable programming together, we split up and worked on gestures individually. When the gestures were done, we added a simple GUI.
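The camera and hand-tracking setup described above might look roughly like the following. This is a minimal sketch, not our exact code: it assumes OpenCV's `cv2.VideoCapture` and MediaPipe's legacy `mp.solutions.hands` interface, and the `on_hand` callback, the confidence value, and the window title are hypothetical choices made for this example.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float]  # normalized (x, y) coordinates


def to_points(hand_landmarks) -> List[Point]:
    """Flatten one MediaPipe hand result into a list of (x, y) tuples."""
    return [(lm.x, lm.y) for lm in hand_landmarks.landmark]


def run_camera_loop(on_hand: Callable[[str, List[Point]], None],
                    camera_index: int = 0) -> None:
    """Read frames, detect hands, and pass each hand to `on_hand` (hypothetical callback)."""
    # Imported lazily so `to_points` can be used and tested without a camera stack.
    import cv2
    import mediapipe as mp

    cap = cv2.VideoCapture(camera_index)
    hands = mp.solutions.hands.Hands(max_num_hands=2,
                                     min_detection_confidence=0.7)  # assumed threshold
    try:
        while cap.isOpened():
            ok, frame = cap.read()
            if not ok:
                break
            # Mirror the frame so it behaves like a selfie view; MediaPipe's
            # handedness labels assume a mirrored input image.
            frame = cv2.flip(frame, 1)
            # OpenCV delivers BGR frames, while MediaPipe expects RGB.
            results = hands.process(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))
            if results.multi_hand_landmarks:
                for landmarks, handedness in zip(results.multi_hand_landmarks,
                                                 results.multi_handedness):
                    label = handedness.classification[0].label  # "Left" or "Right"
                    on_hand(label, to_points(landmarks))
            cv2.imshow("Hand tracking", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):  # press q to quit
                break
    finally:
        hands.close()
        cap.release()
        cv2.destroyAllWindows()
```

Calling `run_camera_loop(lambda label, pts: print(label, pts[8]))` would print the index fingertip position for each detected hand, which is a convenient way to inspect the tracking data before writing any gesture logic.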

Challenges we faced

One of our biggest issues was that certain packages were not compatible with recent versions of Python. Because we all had different Python versions installed, a package needed for a specific gesture was not guaranteed to work on everyone's computer. Another setback was deciding what our project should be for this hackathon: we spent almost all of day 1 brainstorming before agreeing on the concept seen today. Other than those issues, our development went smoothly.
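One way to catch this kind of mismatch early is to have every teammate run a small environment report and compare the output. The sketch below is an illustration rather than something we used during the hackathon; it relies only on the standard library, and the package list is an assumption covering the two packages named above.

```python
import importlib.util
import sys
from importlib import metadata
from typing import Dict, Optional

# Import name -> distribution name on PyPI (assumed list for this example).
PACKAGES: Dict[str, str] = {"cv2": "opencv-python", "mediapipe": "mediapipe"}


def installed_version(import_name: str, dist_name: str) -> Optional[str]:
    """Return the installed version, "unknown" if importable but unversioned, else None."""
    if importlib.util.find_spec(import_name) is None:
        return None  # the module cannot be imported in this environment
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        # Importable, but installed under a different distribution name.
        return "unknown"


def environment_report(packages: Dict[str, str] = PACKAGES) -> Dict[str, Optional[str]]:
    """Collect the Python version and the version of each listed package."""
    report: Dict[str, Optional[str]] = {
        "python": "{}.{}.{}".format(*sys.version_info[:3])
    }
    for import_name, dist_name in packages.items():
        report[import_name] = installed_version(import_name, dist_name)
    return report
```

Printing `environment_report()` on each machine shows at a glance which Python version is in use and which packages are missing, so incompatibilities surface before gesture work is split up.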
