TaVS Logo

Side view of the glove, near the maximum desired size for the fully implemented end product. In view: the ultrasonic sensor and accelerometer.

Back perspective of the glove.

View of the glove's palm. Small haptic feedback motors are embedded within the glove's interior.

The glove maintains an extremely flexible and breathable form factor, even with all of the sensors attached!

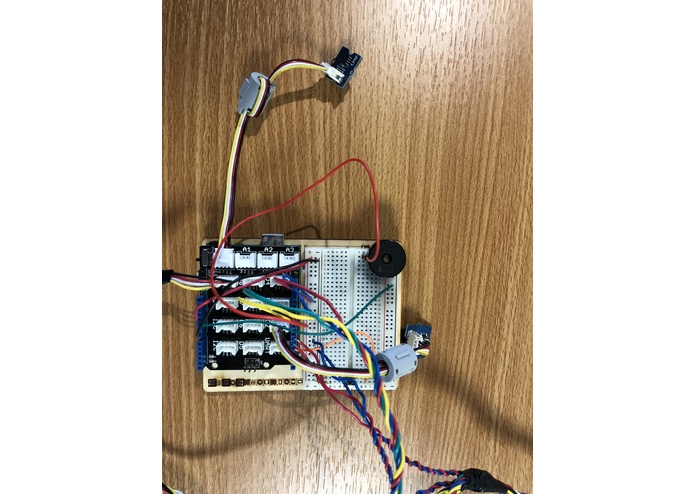

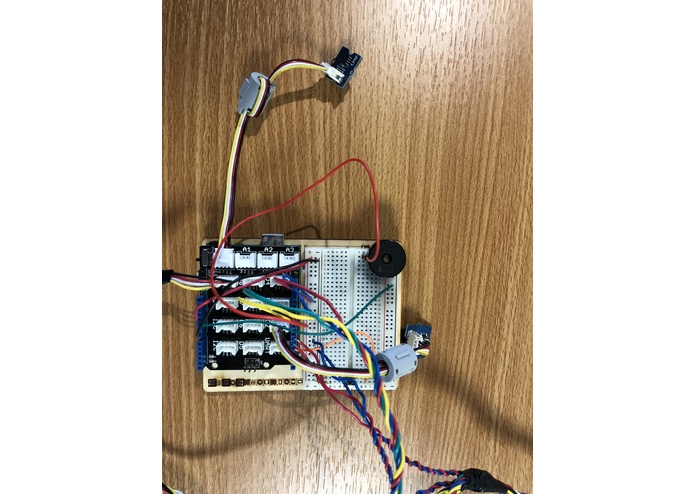

View of the microcontroller and base components: two buttons, a speaker, a temperature sensor, and a base shield.

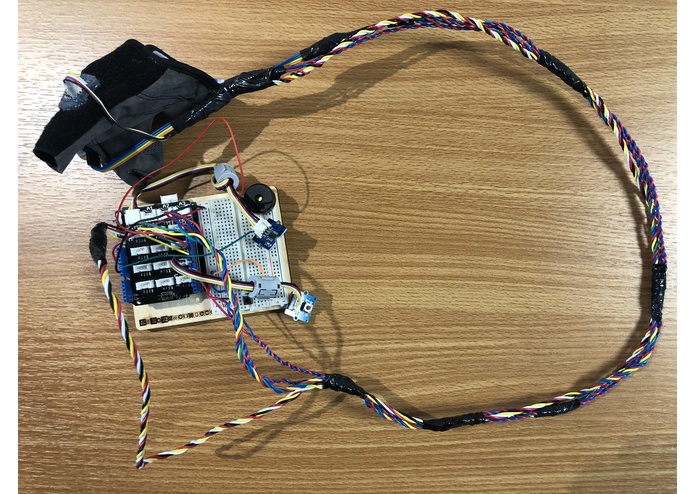

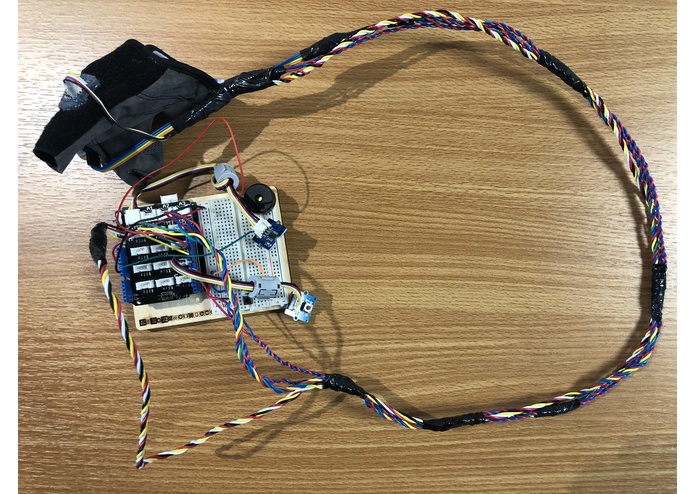

The entire prototype model. Ideally, the whole of the final product will be more compact than the prototype glove alone!

Inspiration

The standard navigation tool used by the visually impaired is a long cane that the user taps or sweeps across the ground. It was invented in 1912 and has remained virtually unchanged since. The white cane makes the user stand out in a crowd and comes with a number of limitations. It is time for an upgrade.

What it does

The TaVS (Tactile Vision System) uses an ultrasonic sensor, paired with algorithmic processing, to turn distance readings into instant haptic feedback for the user. TaVS gives the user a dynamic sense of space and eliminates many of the problems of a traditional white cane. TaVS is lightweight and portable, and it is worn comfortably on the hand, allowing for inconspicuous travel. TaVS changes its tactile output based on its surroundings, giving the visually impaired a much greater level of spatial awareness. The system is controlled through a mix of gestures and physical buttons: the user can calibrate it by rotating their wrist and can set off an emergency alarm with the push of a button.
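The exact mapping TaVS uses is not described here, so the following is only a minimal sketch of how distance could drive vibration strength and how wrist rotation could act as a calibration control. Everything in it is an assumption for illustration: the hypothetical functions `rollFromAccel`, `rangeFromRollDeg`, and `vibrationDuty`, the 20 to 300 cm range limits, and the linear mappings. It is written as portable C++ so it can be compiled and tested on a desktop.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical tuning constants; the actual TaVS ranges are not published.
constexpr double kPi = 3.14159265358979323846;
constexpr double kMinRangeCm = 20.0;   // shortest selectable detection range
constexpr double kMaxRangeCm = 300.0;  // longest selectable detection range

// Wrist roll angle in degrees, estimated from the gravity components an
// accelerometer reports on its Y and Z axes (any consistent unit works).
double rollFromAccel(double ay, double az) {
    return std::atan2(ay, az) * 180.0 / kPi;
}

// Hypothetical calibration: map wrist roll (-90..90 degrees) linearly onto the
// detection range, so rotating the wrist widens or narrows what the glove reports.
double rangeFromRollDeg(double rollDeg) {
    double clamped = std::min(std::max(rollDeg, -90.0), 90.0);
    double t = (clamped + 90.0) / 180.0;  // normalized to 0..1
    return kMinRangeCm + t * (kMaxRangeCm - kMinRangeCm);
}

// Convert a measured distance into an 8-bit PWM duty cycle (0..255) for a
// vibration motor: closer obstacles vibrate harder, and readings of zero
// (no echo) or at/beyond the calibrated range produce no vibration.
int vibrationDuty(double distanceCm, double rangeCm) {
    if (distanceCm <= 0.0 || distanceCm >= rangeCm) return 0;
    double closeness = 1.0 - distanceCm / rangeCm;  // 1 = touching, 0 = range edge
    return static_cast<int>(std::lround(closeness * 255.0));
}
```

For example, a level wrist (`rangeFromRollDeg(0.0)`) selects a 160 cm range, and an obstacle at 80 cm within that range yields `vibrationDuty(80.0, 160.0) == 128`. On the Uno itself, the duty value could be passed to `analogWrite` on a PWM-capable pin, and Arduino's `constrain` could replace the `std::min`/`std::max` clamp, since the standard C++ library is not available on AVR boards by default.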

How I built it

TaVS is currently prototyped on an Arduino Uno microcontroller. The microcontroller interfaces with a temperature sensor, an ultrasonic sensor, and vibration motors. Because the speed of sound in air varies with temperature, an algorithm adjusts the speed of sound used in the ultrasonic distance calculation based on the measured temperature, ensuring accurate readings. I wrote all of the code in VS Code using C++.
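The temperature compensation can be sketched as follows. This is not the author's code: it assumes the common linear approximation for dry air, v ≈ 331.3 + 0.606·T m/s with T in °C, and an echo time measured in microseconds (for example with Arduino's `pulseIn`). The function names `speedOfSound` and `echoToDistanceCm` are hypothetical.

```cpp
// Approximate speed of sound in dry air, in m/s, at temperature tempC (°C).
// Linear approximation v ≈ 331.3 + 0.606·T, reasonable near everyday temperatures.
double speedOfSound(double tempC) {
    return 331.3 + 0.606 * tempC;
}

// Convert a round-trip echo time (microseconds) into a one-way distance (cm).
// The pulse travels to the obstacle and back, so the path length is halved.
double echoToDistanceCm(double echoMicros, double tempC) {
    double cmPerMicro = speedOfSound(tempC) * 100.0 / 1e6;  // m/s -> cm/µs
    return echoMicros * cmPerMicro / 2.0;
}
```

To see why the correction matters: the same 5800 µs echo corresponds to about 96.1 cm at 0 °C but about 102.2 cm at 35 °C, a difference of roughly 6 cm that a fixed speed of sound would silently ignore.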

Challenges I ran into

The first ultrasonic sensor I used was faulty, and it took time to determine that the sensor itself, rather than my code, was the issue. Debugging the program and manually tracing through the algorithms allowed me to confirm that my code was correct. I then swapped out the sensor, which eliminated the issue.

Accomplishments that I'm proud of

This project was my first time using C++ on a relatively large scale. I was able to learn the language fluidly as I developed TaVS, and my pace of progress accelerated as I went.

What's next for TaVS - Tactile Vision System

TaVS could take on a much smaller form factor with a different microcontroller (such as the ADA1501, or a custom alternative). Unfortunately, I did not have a power pack to run the system during the hackathon; however, a typical cell phone battery pack would easily power it. TaVS also has great potential for functional expansion. For instance, gesture controls could be added with an accelerometer, and a headphone jack would allow for auditory feedback. Adding an infrared sensor alongside the ultrasonic sensor would improve accuracy across a wider range of conditions.
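Ultrasonic and infrared rangefinders tend to struggle under different conditions, which is why combining them can help. One standard way to combine two independent readings is inverse-variance weighting, sketched below. This is an illustrative technique, not the author's stated plan: the `RangeReading` struct and `fuseReadings` function are hypothetical, and each sensor's noise variance would have to be measured in practice.

```cpp
// One range reading plus an estimated noise variance (cm^2).
// varianceCm2 must be positive whenever valid is true.
struct RangeReading {
    double distanceCm;
    double varianceCm2;
    bool valid;
};

// Inverse-variance weighted fusion of two independent range readings:
// the less noisy sensor gets proportionally more weight, and the fused
// variance is smaller than either input's. If one sensor has no valid
// reading, the other is used as-is.
RangeReading fuseReadings(const RangeReading& a, const RangeReading& b) {
    if (!a.valid && !b.valid) return {0.0, 0.0, false};
    if (!a.valid) return b;
    if (!b.valid) return a;
    double wa = 1.0 / a.varianceCm2;
    double wb = 1.0 / b.varianceCm2;
    double fused = (wa * a.distanceCm + wb * b.distanceCm) / (wa + wb);
    return {fused, 1.0 / (wa + wb), true};
}
```

With equal variances the result is simply the average (100 cm and 110 cm fuse to 105 cm); if the second sensor is nine times noisier, the same readings fuse to 101 cm, leaning toward the more trustworthy sensor.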
