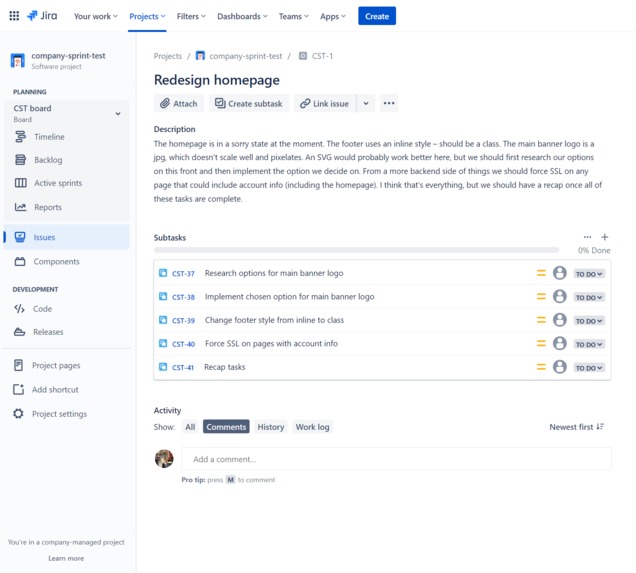

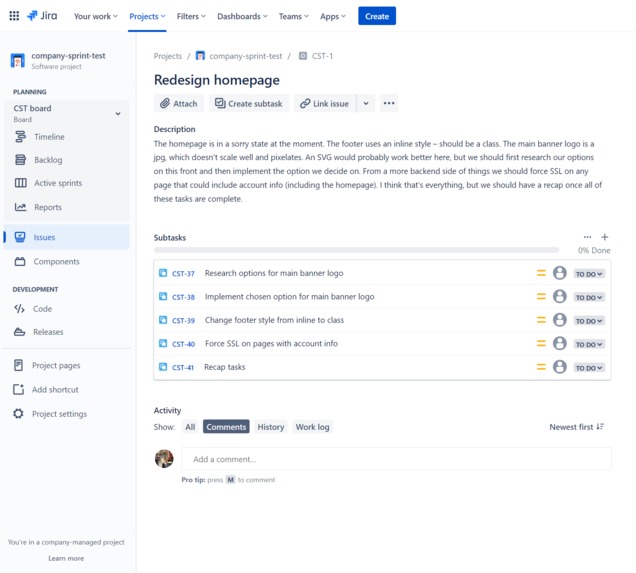

Extract subtasks from blocks of unstructured, out-of-sequence text

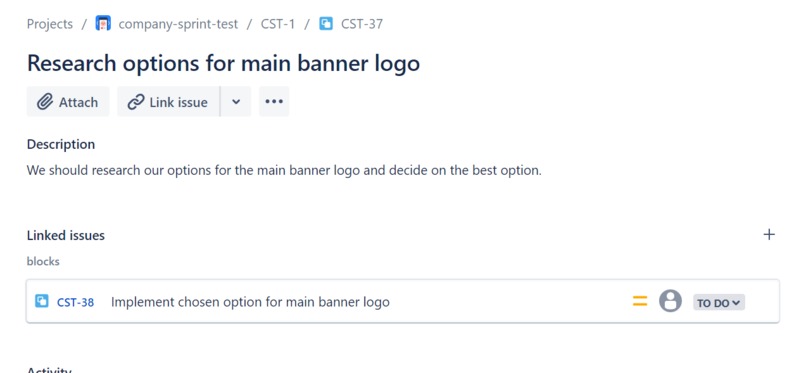

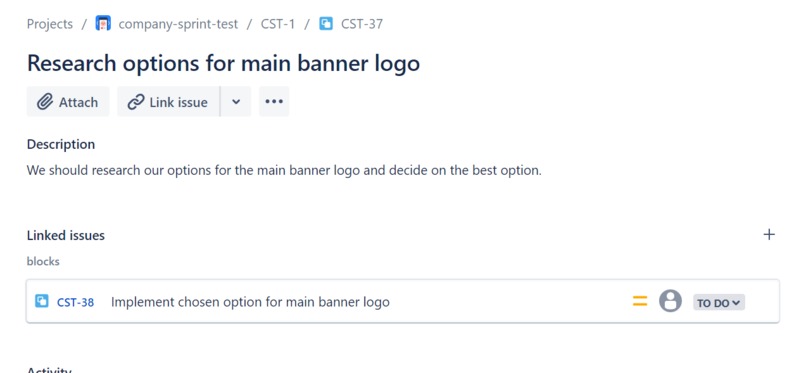

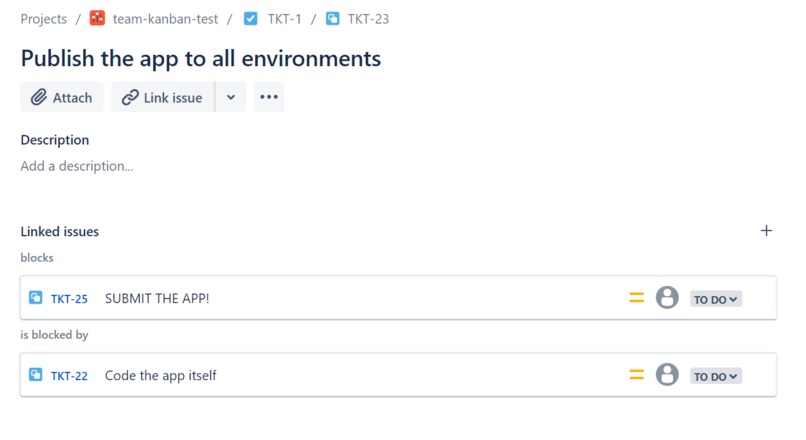

Descriptions and blocking relations are identified and added to the new subtask

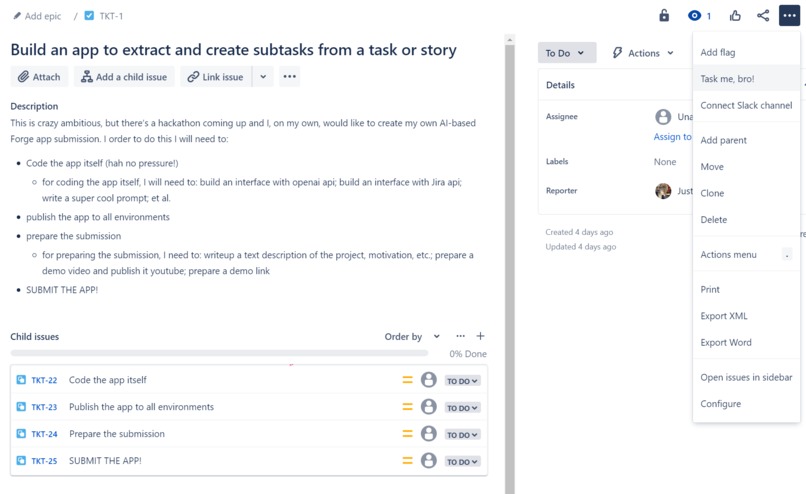

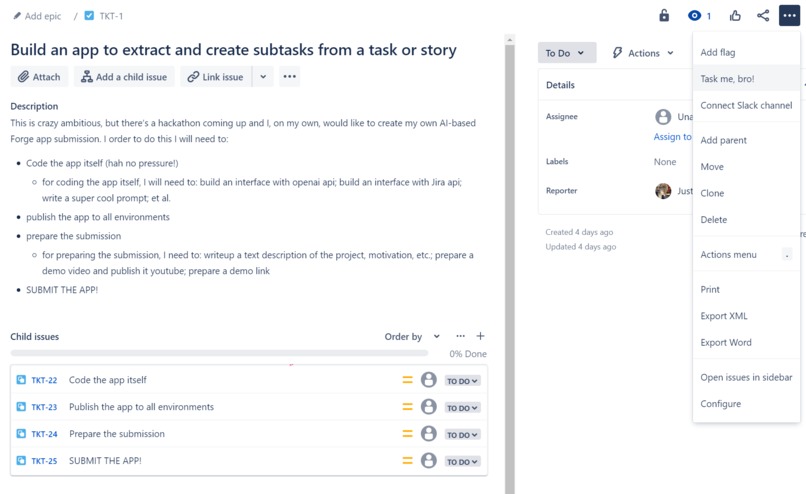

Already added some structure? No problem: bullets and subpoints are also supported

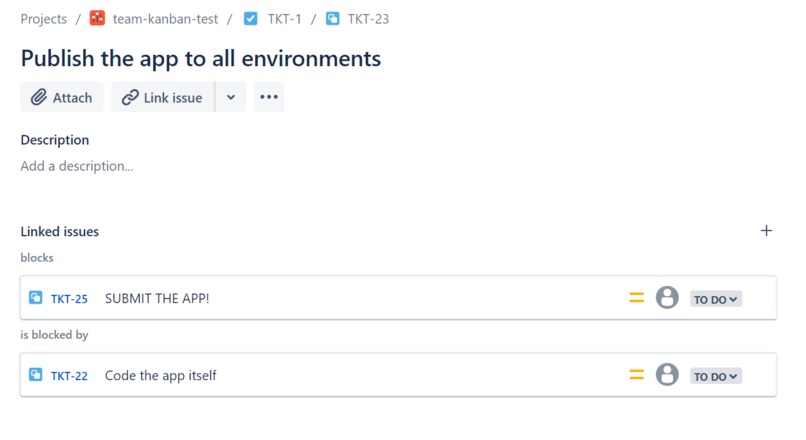

Issue relations can go both ways, and are not limited in number (multi-out, multi-in, or a mix)

Inspiration

The main inspiration for Task me, bro! is the DORA DevOps best practice of Working In Small Batches. Overly large tasks are far less likely to be completed and can become huge time sinks.

In contrast, breaking tasks up into smaller subtasks means:

- a clear starting point ("what do we need to do first?")

- dependencies between tasks are identified, enabling parallel work ("What can Bob work on while Alice is finishing up Task 1?")

- progress is easier to monitor ("90% of subtasks complete!")

- partial completion is possible

- early feedback from affected individuals or end users

- Coding: reduced risk of merge conflicts

However, breaking up tasks can be a tedious and frustrating process. It would be a great learning tool and development accelerator to have an app for this... ;)

(I am the guy who goes through the whole team's tickets, tidies them up, creates the issue relation links, etc. Yes, yes, they'll never learn if you do it for them, but they're also not going to learn if you don't.)

What it does

A Jira task or story is ingested and -- based on the description -- smaller component "subtasks" are extracted. The subtasks are organized logically in the order that they should be tackled. Relations between subtasks are identified, and the subtasks (and their issue relations) are created in Jira Cloud.
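The ordering step described above can be pictured as a topological sort over the blocking relations. The following is only a minimal sketch under assumptions: the `Subtask` shape and the `orderSubtasks` function are hypothetical names for illustration, not the app's actual data model or code.

```typescript
// Hypothetical shape for an extracted subtask (an assumption, not the app's real model).
interface Subtask {
  key: string;
  summary: string;
  blocks: string[]; // keys of subtasks that cannot start until this one is done
}

// Kahn's algorithm: repeatedly emit subtasks whose blockers have all been emitted.
function orderSubtasks(subtasks: Subtask[]): Subtask[] {
  const byKey = new Map<string, Subtask>();
  const openBlockers = new Map<string, number>();
  for (const task of subtasks) {
    byKey.set(task.key, task);
    openBlockers.set(task.key, 0);
  }
  // Count how many subtasks block each subtask.
  for (const task of subtasks) {
    for (const blocked of task.blocks) {
      if (!byKey.has(blocked)) throw new Error(`Unknown subtask: ${blocked}`);
      openBlockers.set(blocked, (openBlockers.get(blocked) ?? 0) + 1);
    }
  }
  // Subtasks with no blockers are possible starting points.
  const ready = subtasks.filter((task) => openBlockers.get(task.key) === 0);
  const ordered: Subtask[] = [];
  while (ready.length > 0) {
    const next = ready.shift()!;
    ordered.push(next);
    for (const blocked of next.blocks) {
      const remaining = (openBlockers.get(blocked) ?? 0) - 1;
      openBlockers.set(blocked, remaining);
      if (remaining === 0) ready.push(byKey.get(blocked)!);
    }
  }
  // Anything left over is part of a cycle and can never become ready.
  if (ordered.length !== subtasks.length) {
    throw new Error("Circular blocking relation");
  }
  return ordered;
}
```

Because each subtask can block several others and be blocked by several others, counting open blockers per subtask handles the multi-out, multi-in, and mixed cases alike. Subtasks that become ready at the same time are candidates for parallel work.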

How I built it

- TypeScript

- Atlassian Forge

- Jira APIs

- OpenAI API (gpt-3.5-turbo)

- prompt engineering wizardry

- LOTS of `forge deploy` spamming while iterating and debugging

Challenges I ran into

The primary challenge was (and remains) dealing with latency.

The latency of API-based LLMs is somewhat affected by the size and complexity of your prompt, but mainly by the length of the generated output. The more tokens the model outputs, the longer you have to wait for a response (unless you stream the output). Special characters are usually individual tokens (e.g. ")"), whereas an entire word of several characters is often a single token (e.g. "complex"), so the more "unnatural" your output, the longer it takes to generate. A plain-text paragraph is generated much faster than JSON of a similar character count.
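One way to act on the plain-text-versus-JSON observation is to ask the model for a terse, line-based format and rebuild the structure in code. This is a sketch of that idea only, not necessarily what Task me, bro! does: the `id | summary | blocked ids` line format, the `ParsedSubtask` type, and `parseCompactSubtasks` are all assumptions made for illustration.

```typescript
// Hypothetical result type (an assumption for this example).
interface ParsedSubtask {
  id: number;
  summary: string;
  blocks: number[]; // ids of the subtasks this one blocks
}

// Parses lines such as "1 | Set up the database | 2,3".
// The third field is optional; blank or malformed lines are skipped.
// Limitation: a summary containing "|" would be split incorrectly.
function parseCompactSubtasks(text: string): ParsedSubtask[] {
  const result: ParsedSubtask[] = [];
  for (const line of text.split("\n")) {
    const parts = line.split("|").map((part) => part.trim());
    if (parts.length < 2) continue;
    const id = Number.parseInt(parts[0], 10);
    if (Number.isNaN(id) || parts[1] === "") continue;
    const blocks = (parts[2] ?? "")
      .split(",")
      .map((value) => Number.parseInt(value.trim(), 10))
      .filter((value) => !Number.isNaN(value));
    result.push({ id, summary: parts[1], blocks });
  }
  return result;
}
```

Compared with the equivalent JSON, each line drops the braces, quotes, and repeated key names, which are exactly the punctuation-heavy tokens that slow generation down. The trade-off is that the parser has to tolerate whatever the model actually returns.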

Also, anecdotally, calls to OpenAI from Forge are slower than from other environments or products. I assume this is due to the security/privacy overhead of leaving the Atlassian Cloud. With a 25-second timeout on Forge requests, you have to be quick AND keep your app's run times consistent, or else leverage Forge's asynchronous options.
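To stay inside a hard limit like the 25-second one, a slow call can be raced against a self-imposed deadline so the app fails in a controlled way instead of being cut off. The helper below is a generic sketch in plain TypeScript: `withDeadline` is a hypothetical name, it uses no Forge or OpenAI APIs, and it does not cancel the underlying request.

```typescript
// Rejects if `work` has not settled within `ms` milliseconds.
// Note: this only stops waiting; it does not abort the underlying work.
async function withDeadline<T>(work: Promise<T>, ms: number): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const deadline = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`Timed out after ${ms} ms`)), ms);
  });
  try {
    // Whichever promise settles first decides the outcome.
    return await Promise.race([work, deadline]);
  } finally {
    // Clear the timer so a finished call leaves no pending rejection behind.
    if (timer !== undefined) clearTimeout(timer);
  }
}
```

In practice the budget would be set somewhat below the platform limit (for example 20000 ms) to leave time to show the user a helpful error or fall back to an asynchronous path.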

Accomplishments that I'm proud of

Task me, bro! has been tackled as a weekend warrior solo project to address one of the pain points I personally experience on an almost daily basis.

I HATE being a part of a feature group where tasks have not been meaningfully broken up, and it's painful being the reviewer or reviewee of a feature branch that has 2 weeks' worth of updates in it. Building a tool that can help us developers learn good "task hygiene" and DevOps practices with respect to working in smaller batches is outstanding -- I can't wait to get it to the marketplace so all can benefit.

The fact that this was accomplished in such a short time by a single engineer is pretty crazy. This would not have been possible without the incredibly fast AI development cycle resulting from the synergy of the Forge platform and API-based LLMs (OpenAI).

What I learned

I had to do a deep dive crash course on the latest developments in Forge (I haven't touched it for almost two years) and familiarize myself with Jira Cloud APIs. I now feel confident with both frameworks for maintaining and improving Task me, bro! as well as taking a stab at some of the other app projects on my pain points list.

On the ML side I've worked with LLMs in the past -- both locally fine-tuned and hosted as well as API-based -- but usually for much larger projects. I haven't used LLMs for such significant (in number of tokens output per request) generative tasks before, so I had to invent creative ways to work around and reduce the significant lag time.

What's next for Task me, bro!

- Marketplace!

- asynchronous option, for "fire and forget" usage (and no 25-second Forge limit)

- miscellaneous edge case handling

Keep an eye on App Sauce for the upcoming release of Task me, bro! and other solutions!

Built With

- forge

- generativeai

- jira

- llm

- openai

- typescript
