Homepage | Type any idea and Synapse spins up a canvas around it. Quick-start prompts get you moving in seconds.

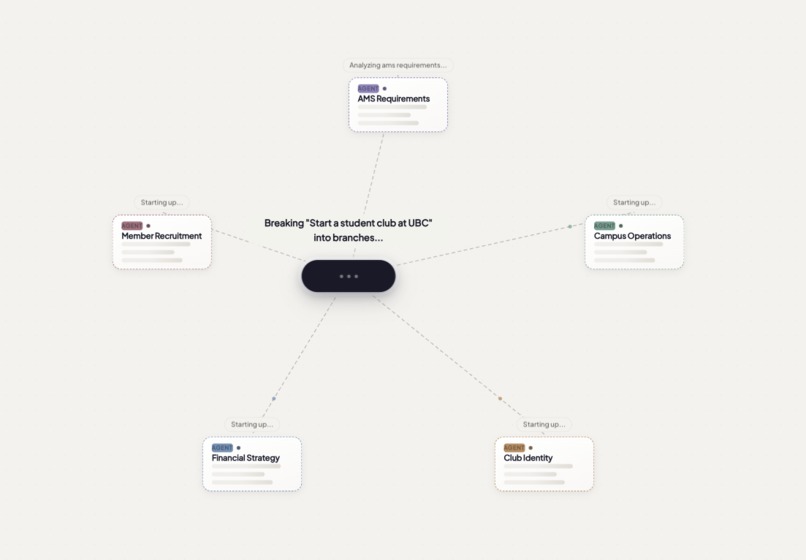

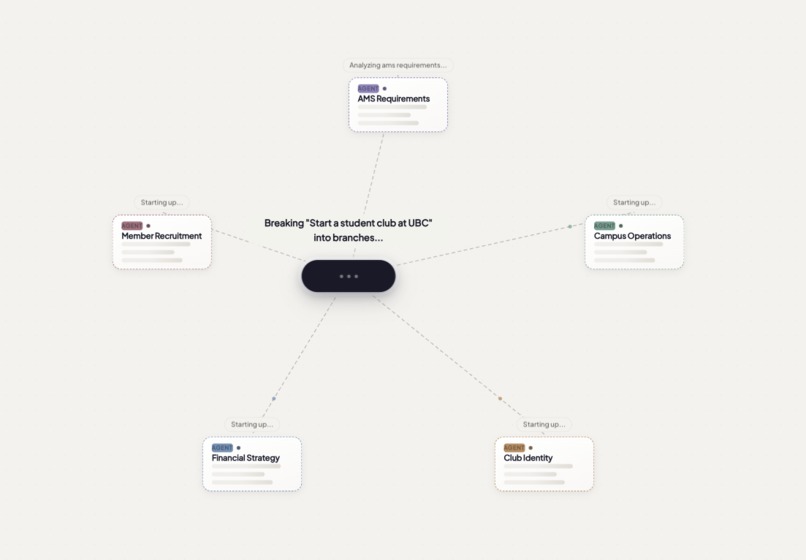

Branching | The moment an idea becomes a canvas. Synapse breaks your input into specialized AI agents.

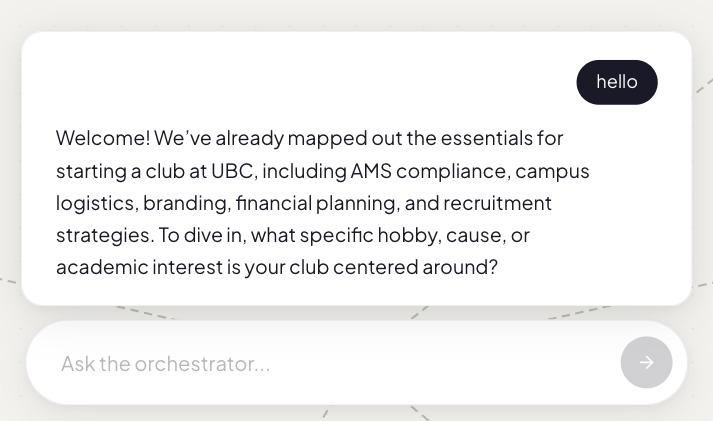

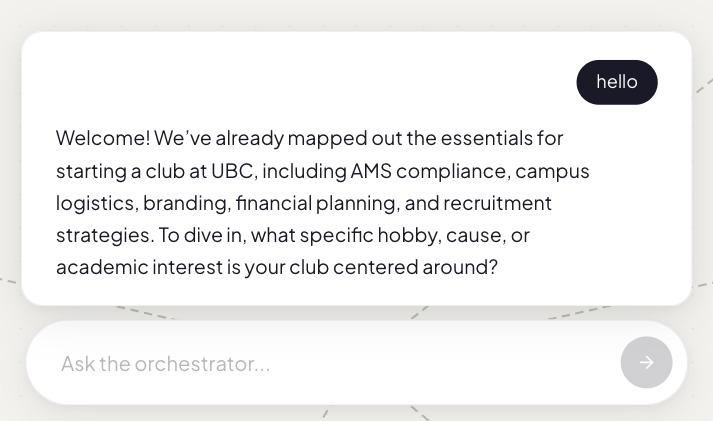

Orchestrator Chat | The orchestrator holds the full context of every agent and synthesizes it into one response.

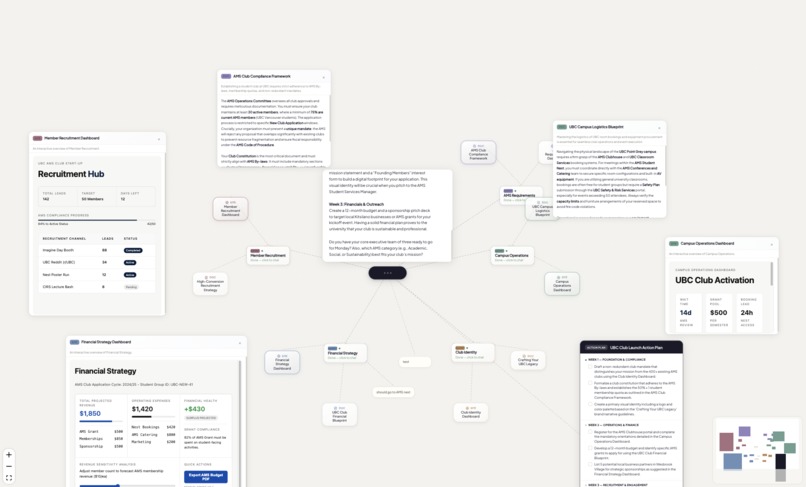

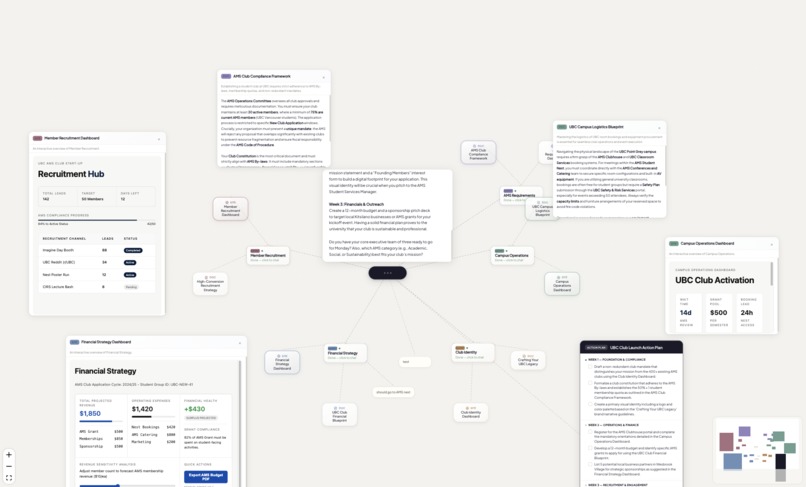

Deep Dive | Inside the canvas, each agent produces its own report, dashboard, and visuals, all interconnected.

Inspiration

Almost every AI tool today is built around a chat window. One input. One output. Repeat.

We kept asking: what if AI could think the way you actually do, branching out and exploring multiple directions at once instead of answering one question at a time?

That's what Synapse is.

What it does

You type an idea. Synapse breaks it into specialized AI agents, each one researching a different angle in parallel.

Each agent produces its own report, dashboard, and visuals. You can chat with any of them directly, or ask the orchestrator for a summary across everything.
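
One way to picture the orchestrator summary is as a single prompt that carries every agent's report. The sketch below is illustrative only: `AgentReport` and `buildOrchestratorPrompt` are hypothetical names, and the prompt wording is an assumption rather than what Synapse actually sends to the model.

```typescript
// Hypothetical shape of what one agent contributes to the canvas.
interface AgentReport {
  agent: string;   // display name of the agent, e.g. "Market Research"
  summary: string; // the text of that agent's report
}

// Combine every agent's report into one prompt so the orchestrator
// can answer a question with the full canvas as context.
function buildOrchestratorPrompt(question: string, reports: AgentReport[]): string {
  const context = reports
    .map((r, i) => `[${i + 1}] ${r.agent}\n${r.summary.trim()}`)
    .join("\n\n");
  return [
    "You are the orchestrator. Use all agent reports below.",
    context,
    `Question: ${question}`,
  ].join("\n\n");
}
```

Numbering the reports gives the model a stable way to refer back to a specific agent in its answer.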

When you're ready to move, generate a full action plan in one click.

How we built it

- Frontend: Next.js 15, React 19, TypeScript, Tailwind CSS, React Flow for the spatial canvas

- AI: Google Gemini for agent reasoning and for document and mockup generation

- Agents: Fetch.ai uAgents for multi-agent orchestration

- Backend: Next.js API routes with parallel Gemini calls via Promise.all
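
The parallel-call pattern in the backend bullet can be sketched roughly as follows. This is a minimal illustration, not the project's actual route code: `Generate` is a hypothetical stand-in for whatever function wraps the Gemini request, and `runAgent`, `runAgents`, and the prompt strings are assumed names and wording.

```typescript
// Hypothetical stand-in for the function that calls the model:
// it takes a prompt and resolves to generated text.
type Generate = (prompt: string) => Promise<string>;

interface AgentOutput {
  doc: string;    // the agent's written report
  mockup: string; // the agent's mockup description
}

// Start the doc and mockup requests together instead of one after the
// other, so an agent waits for the slower call rather than the sum of both.
async function runAgent(topic: string, generate: Generate): Promise<AgentOutput> {
  const [doc, mockup] = await Promise.all([
    generate(`Write a research report on: ${topic}`),
    generate(`Describe a UI mockup for: ${topic}`),
  ]);
  return { doc, mockup };
}

// Run every agent at once; results come back in the same order as `topics`.
async function runAgents(topics: string[], generate: Generate): Promise<AgentOutput[]> {
  return Promise.all(topics.map((topic) => runAgent(topic, generate)));
}
```

Note that `Promise.all` rejects as soon as any call fails. If one agent failing should not discard the others, `Promise.allSettled` is the usual alternative.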

Challenges

Cutting agent load time in half by splitting doc and mockup generation into parallel calls. Getting React Flow to play nicely with draggable panels and chat scroll. Making AI outputs consistent enough for a live demo.

What we learned

Spatial interfaces change how you think. Seeing everything at once makes the thinking feel different. That was the whole point.

What's next

Multiplayer canvas, deeper integrations, and truly decentralized agent discovery via Agentverse.

Built With

- fetch.ai-uagents

- google-gemini-api

- next.js

- python

- react

- react-flow

- tailwind-css

- typescript
