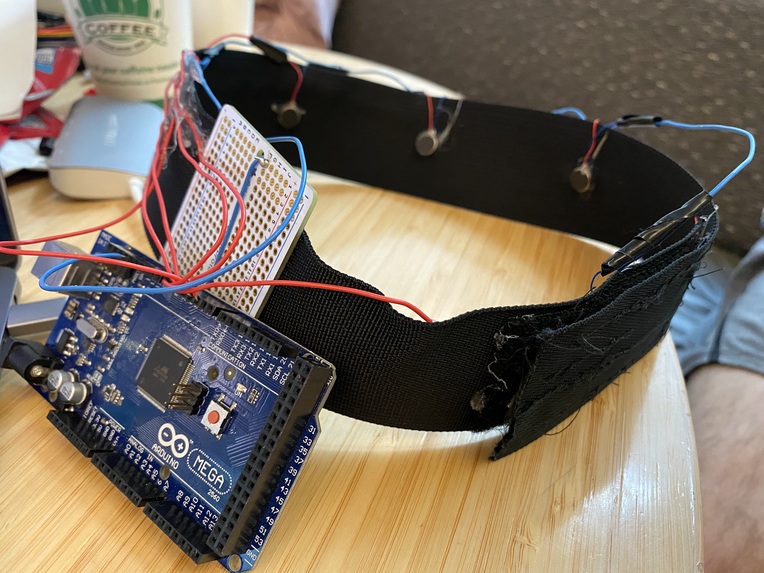

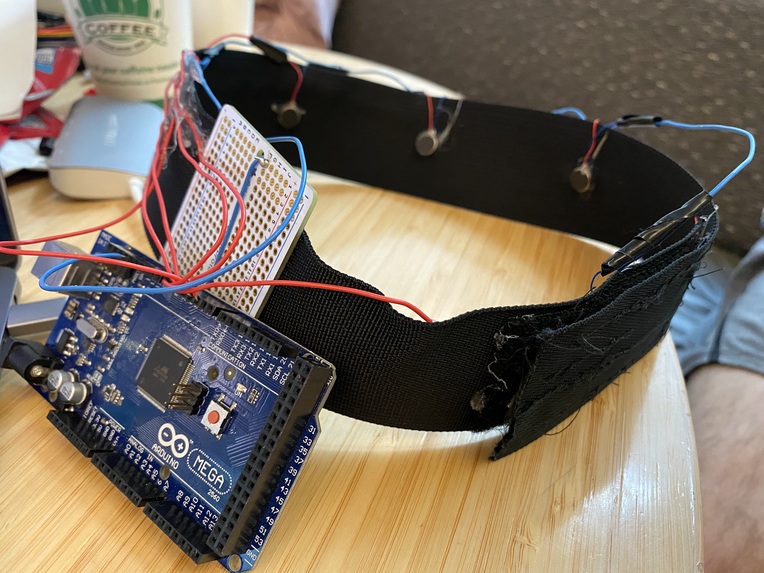

A view of the handcrafted headband with the haptic devices and circuitry visible.

A user wearing the full assembly of the haptic headband and Magic Leap headset.

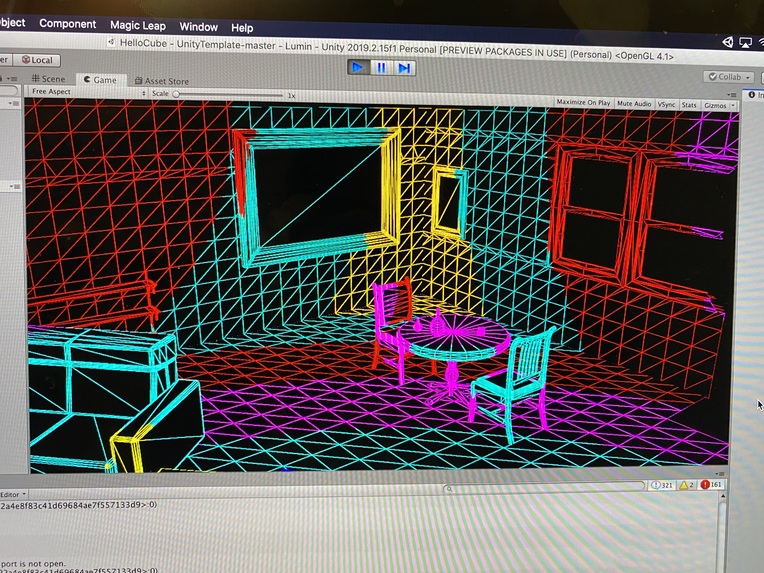

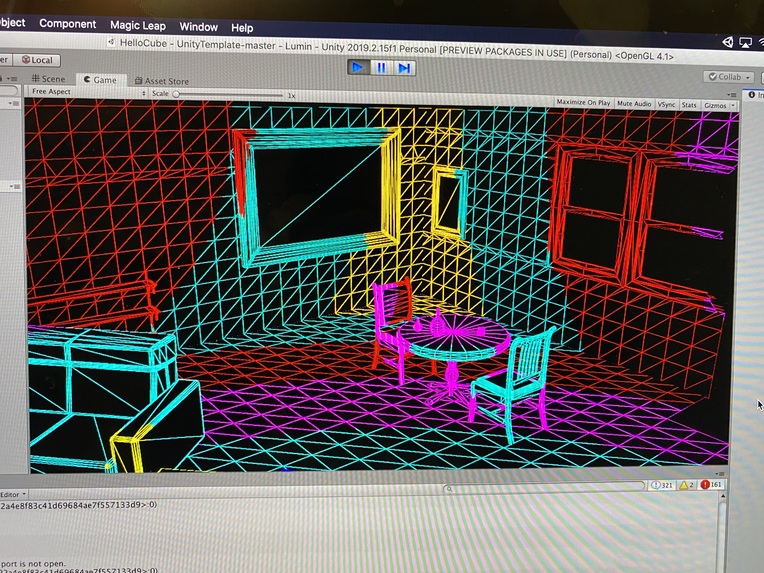

An example view of the 3D mesh created by the Magic Leap in Unity.

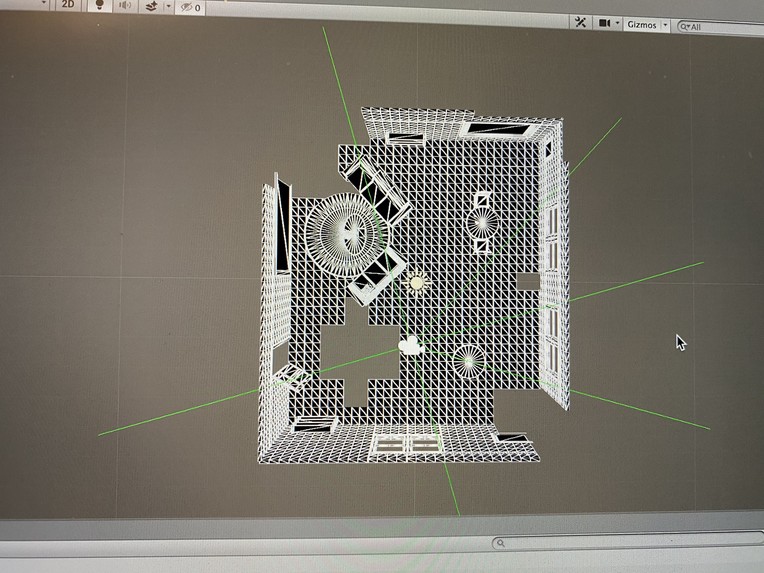

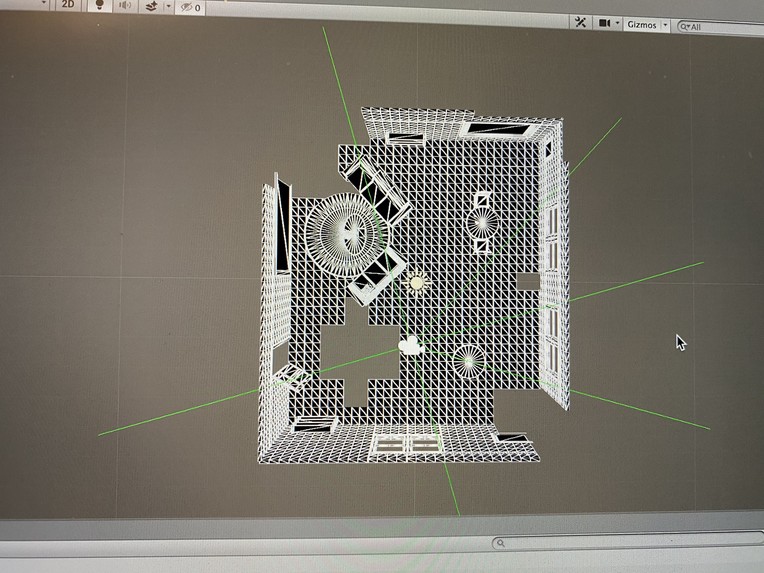

The raycast vectors we use to detect obstacles in a room.

Inspiration

We came to TreeHacks wanting to contribute our efforts to health care. After trying out some of the fascinating technologies available to us, we realized that the Magic Leap AR headset would be an ideal platform for a hack to help the visually impaired.

What it does

Sibylline uses the Magic Leap AR headset to build a 3D model of the nearby environment. It calculates the user's distance from nearby objects and provides haptic feedback through a custom-built headband: as the user gets closer to an object, the buzzing intensifies on the side of the headband facing that object. Sibylline can also help someone walk in a straight line by signaling when they deviate from their path.
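The straight-line feature is only described at a high level, so the following is a minimal sketch of one way to detect path deviation, not the team's implementation. It assumes the user's floor-plane position is available from the headset's tracking, and that the intended path is the line starting where the user began walking and pointing along their initial heading. The function names and the 0.3 m tolerance are hypothetical.

```python
import math

def lateral_offset(start, direction, position):
    """Signed perpendicular distance (metres) of `position` from the straight line that
    begins at `start` and runs along `direction`. Points are (x, z) floor-plane coordinates
    with x to the right and z forward; positive means left of the line, negative means right."""
    norm = math.hypot(direction[0], direction[1])
    ux, uz = direction[0] / norm, direction[1] / norm
    px, pz = position[0] - start[0], position[1] - start[1]
    return ux * pz - uz * px  # 2D cross product of the unit direction with the offset

def drift_side(start, direction, position, tolerance_m=0.3):
    """Return 'left' or 'right' once the user has drifted past the tolerance, else None."""
    offset = lateral_offset(start, direction, position)
    if offset > tolerance_m:
        return "left"
    if offset < -tolerance_m:
        return "right"
    return None
```

For example, a user who started at the origin heading along +z and is now at (1.0, 2.0) has drifted one metre to the right, so `drift_side` returns `"right"`. Which actuator (or pattern) should signal that drift is left open here.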

How we built it

The Magic Leap builds a 3D triangle mesh of the surrounding environment, and we used the Unity game engine to interface with that mesh. Raycasts are sent in six directions relative to the direction the user is facing, and each one measures the distance to the nearest object along its path. Each raycast corresponds to one of six actuators that are attached to the headband and driven by an Arduino. The actuators buzz with higher intensity as the user gets closer to an object in their direction.
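Inside Unity this step would normally go through the engine's own raycasting (for example `Physics.Raycast` against the mesh's collider). To show what the six distance measurements involve outside the engine, here is a plain-Python sketch that substitutes the Möller–Trumbore ray/triangle intersection test and keeps the nearest hit per direction. Several details are assumptions, since the original does not state them: the six rays are taken to be horizontal and evenly spaced 60° apart starting from the facing direction, yaw is measured clockwise from +z (Unity's forward axis, with y up), and the 3 m maximum range is hypothetical.

```python
import math

# Vectors are (x, y, z) tuples with y up and z forward, following Unity's axis convention.

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_triangle(origin, direction, tri, eps=1e-9):
    """Moller-Trumbore test: distance along `direction` to the hit, or None on a miss."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    h = cross(direction, e2)
    a = dot(e1, h)
    if abs(a) < eps:
        return None  # ray is parallel to the triangle's plane
    f = 1.0 / a
    s = sub(origin, v0)
    u = f * dot(s, h)
    if u < 0.0 or u > 1.0:
        return None  # outside the triangle (first barycentric bound)
    q = cross(s, e1)
    v = f * dot(direction, q)
    if v < 0.0 or u + v > 1.0:
        return None  # outside the triangle (second barycentric bound)
    t = f * dot(e2, q)
    return t if t > eps else None  # ignore hits behind the ray origin

def ray_directions(facing_yaw_rad, count=6):
    """`count` horizontal unit vectors evenly spaced around the facing direction (assumed layout)."""
    step = 2.0 * math.pi / count
    return [(math.sin(facing_yaw_rad + k * step), 0.0, math.cos(facing_yaw_rad + k * step))
            for k in range(count)]

def nearest_distances(origin, facing_yaw_rad, triangles, max_range_m=3.0):
    """Nearest hit distance per direction; math.inf when nothing lies within max_range_m."""
    distances = []
    for d in ray_directions(facing_yaw_rad):
        hits = [t for t in (ray_triangle(origin, d, tri) for tri in triangles) if t is not None]
        nearest = min(hits, default=math.inf)
        distances.append(nearest if nearest <= max_range_m else math.inf)
    return distances
```

With a flat wall two metres directly ahead of a user facing +z, only the first (forward) entry is finite; the two diagonal rays reach the wall at 4 m, beyond the assumed range. A brute-force loop over every triangle is fine for a sketch, but a real room mesh would call for the engine's spatial acceleration structures.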

Challenges we ran into

In our initial prototype, each haptic buzzer was either completely off or completely on. This let the user sense that an obstacle was somewhere nearby in a given direction, but gave them no way of knowing how far away it was. To solve this, we changed the actuators to modulate their intensity based on the distance to the obstacle.
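The difference between the two behaviors can be sketched as two mappings from a measured distance to an actuator level. This is an illustration under assumptions, not the team's code: the 0 to 255 output range is chosen because it matches the duty-cycle range of the Arduino's `analogWrite` on PWM pins, but how Sibylline actually drove its actuators is not stated, and the linear ramp, the 1.5 m threshold, and the 0.3 m and 3.0 m range limits are all hypothetical.

```python
import math

def binary_intensity(distance_m, threshold_m=1.5):
    """Initial-prototype behavior: fully on inside the threshold, fully off otherwise."""
    return 255 if distance_m <= threshold_m else 0

def modulated_intensity(distance_m, min_range_m=0.3, max_range_m=3.0):
    """Linear ramp: 255 at or inside min_range_m, 0 at or beyond max_range_m (or no hit)."""
    if math.isinf(distance_m) or distance_m >= max_range_m:
        return 0
    if distance_m <= min_range_m:
        return 255
    fraction = (max_range_m - distance_m) / (max_range_m - min_range_m)
    return round(255 * fraction)
```

With the binary mapping an obstacle at 0.5 m and one at 1.4 m feel identical, while the modulated mapping gives the closer obstacle a stronger buzz. A nonlinear curve (for example, one that rises sharply near `min_range_m`) would be an equally reasonable design choice.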

Additionally, the raycasts were initially bound to the orientation of the head, so a user who was slouching or looking down would not detect obstacles directly in front of them. We had to account for this when we reworked our raycast vectors.
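The write-up does not say exactly how the raycast vectors were changed. One common approach, sketched here as an assumption, is to keep only the head's yaw: project the head's forward vector onto the horizontal plane (in Unity, `Vector3.ProjectOnPlane` with `Vector3.up` does this) and cast rays from that level heading, so pitch and roll no longer tilt the rays toward the floor. The function names and the fallback for looking straight down are hypothetical.

```python
import math

def level_yaw(head_forward, fallback_yaw_rad=0.0, eps=1e-6):
    """Yaw (radians, clockwise from +z) of the head's forward vector with pitch and roll
    discarded. `head_forward` is (x, y, z) with y up, as in Unity."""
    x, _, z = head_forward
    if math.hypot(x, z) < eps:
        return fallback_yaw_rad  # looking straight up or down: heading is undefined
    return math.atan2(x, z)

def level_forward(head_forward, fallback_yaw_rad=0.0):
    """Horizontal unit vector pointing where the user is facing, regardless of head tilt."""
    yaw = level_yaw(head_forward, fallback_yaw_rad)
    return (math.sin(yaw), 0.0, math.cos(yaw))
```

A user looking steeply down while facing forward, with a head vector such as `(0.0, -0.9, 0.1)`, still gets a level forward vector of `(0.0, 0.0, 1.0)`. In practice the fallback would be the last valid yaw, so the rays do not jump when the user looks straight down.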

Accomplishments that we're proud of

We're proud of the system we've built. It relies on a complex stream of data that must be carefully routed through several applications, from the Magic Leap's meshing through Unity to the Arduino and the headband, and the end result is a genuinely compelling product to use. We've been able to build on this system to craft a few useful quality-of-life features, and there's still plenty of room for more.

We're also proud of how much potential our idea still has. Rather than a hack with a single use case, we've built an entirely new system that can be iterated on and refined, both to improve its core functionality and to add new capabilities.

What we learned

We dove into many new technologies and skill sets while making this hack. Some of our team members used Arduino microcontrollers for the first time, and one of us learned how to solder. We all had to work hard to figure out how to interface with the Magic Leap, and we learned more about how meshing works in the Unity editor.

Lastly, though we cannot hope to fully understand the experience of vision impairment or blindness, we've cultivated a bit more empathy for some of the challenges such individuals face.

What's next for Sibylline

With industry support, we could significantly expand Sibylline's functionality to cover a number of other vision-related tasks. For example, with AI computer vision, Sibylline could tell the user what objects are in front of them. We could build a chunk-based loading system for multiple "zones" throughout the world, so the device isn't limited to a single area. We would also want to prioritize meshing for fast-moving objects, like people in a hallway or cars at an intersection. With more advanced hardware, we could explore other sensory modalities as our primary method of feedback, such as directional pressure instead of buzzing. In a fully focused, purpose-built final product, we would like to have more camera angles to gather additional meshing data, plus a suite of sensors to address other immediate concerns for the user.
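The chunk-based zone idea is future work, so nothing about its design is given. As a minimal sketch under stated assumptions, the world's floor plane could be divided into a square grid of fixed-size chunks, with only the mesh data for the user's current chunk and its neighbors kept loaded. The 10 m chunk size, the one-chunk radius, and the function names are all hypothetical.

```python
import math

def chunk_key(x, z, chunk_size_m=10.0):
    """Integer grid coordinates of the chunk containing floor-plane point (x, z)."""
    return (math.floor(x / chunk_size_m), math.floor(z / chunk_size_m))

def chunks_to_load(x, z, chunk_size_m=10.0, radius=1):
    """Keys of the user's chunk plus every chunk within `radius` steps of it."""
    cx, cz = chunk_key(x, z, chunk_size_m)
    return {(cx + dx, cz + dz)
            for dx in range(-radius, radius + 1)
            for dz in range(-radius, radius + 1)}
```

Using `math.floor` rather than `int` keeps negative coordinates in the correct chunk (for example, x = -0.1 falls in chunk -1, not 0). As the user moves, any chunk that leaves the returned set could be unloaded and any new one loaded.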
